Backup Architecture on NetApp FAS Systems

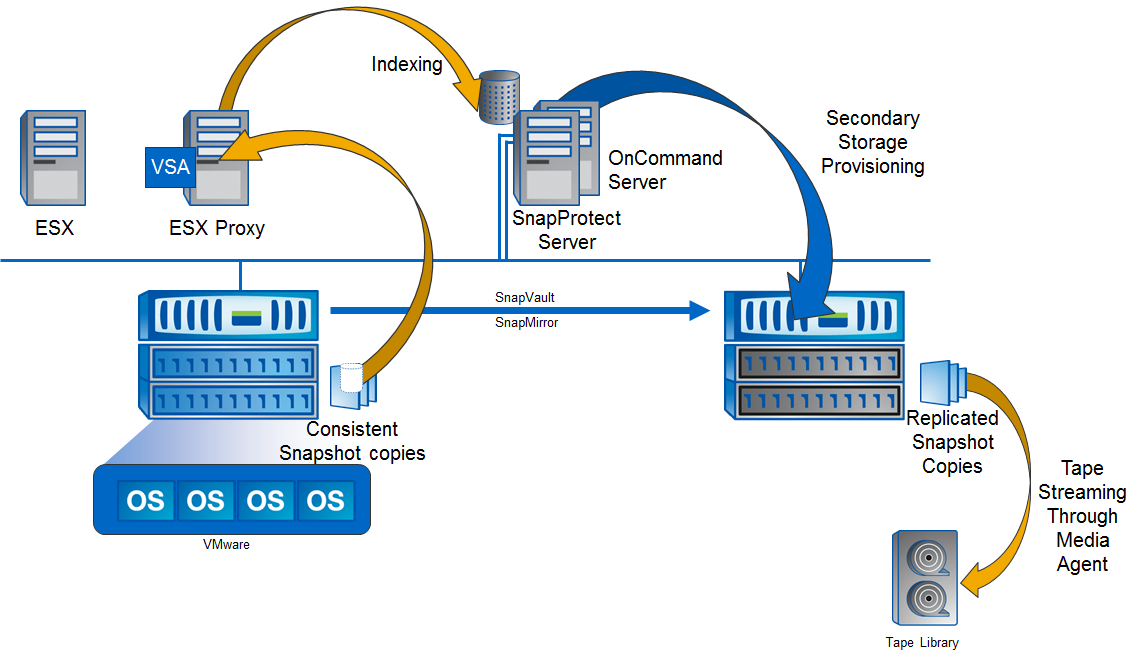

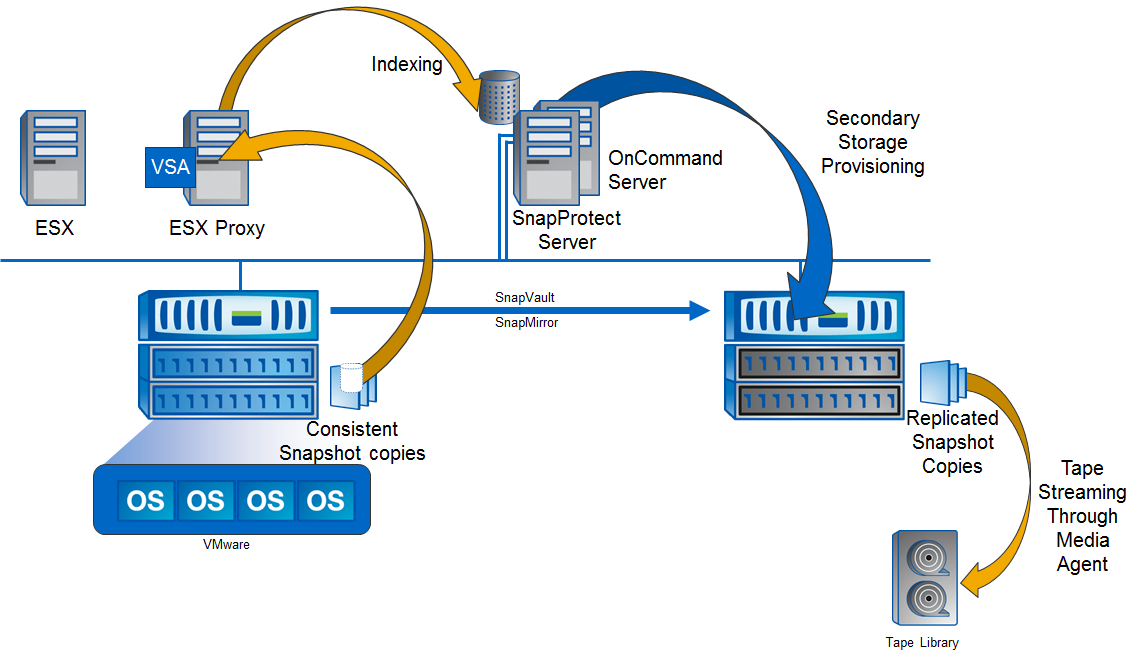

In this article I look at how the SnapProtect architecture implements the “NetApp backup paradigm” using the storage technologies and advantages of the FAS series of storage systems. SnapProtect (SP) software is designed to manage the backup life cycle, backup, and recovery for the entire infrastructure hosted on NetApp FAS storage. SP provides application integration, backup consistency, replication and backup management between storage systems, cataloging, data recovery when needed, backup verification, and other functions. FAS systems are very versatile and can serve as “primary” storage, “standby” (DR) storage, and archive storage.

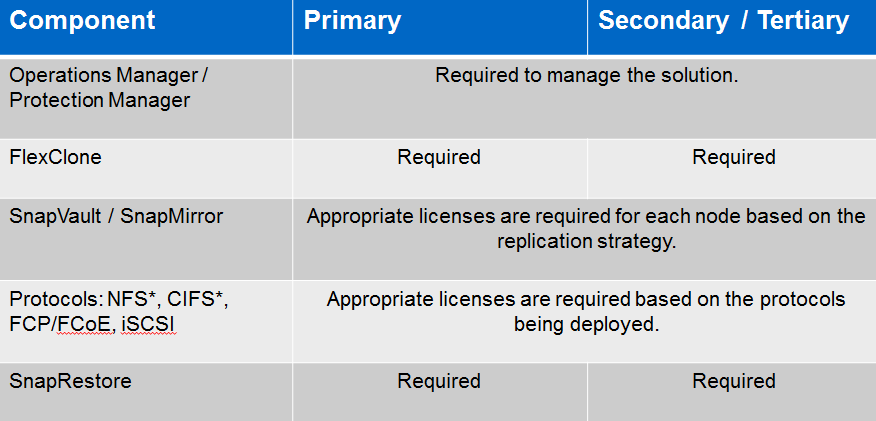

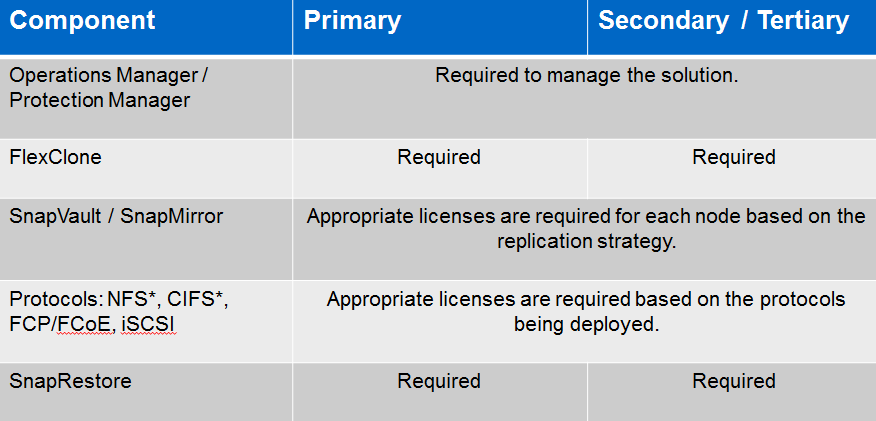

The SnapProtect solution consists of the following main components:

- A server running SnapProtect Management Server (CommServe license). Clustering is used for fault tolerance (at the application level, plus database clustering).

- Servers with MediaAgents installed.

- iDataAgents (iDA), installed on hosts to integrate with the OS, file systems, applications, and other components of the host.

- OnCommand Unified Manager, through which SP communicates with NetApp storage systems.

The following iDA agents are available:

- VSA (Virtual Server Agent) for VMware and Hyper-V

- for Oracle on Windows / Unix / Linux (including RAC)

- for Exchange (including DAG)

- for MS SQL

- for SharePoint

- QSnap driver for Windows / Unix file systems

- NAS NDMP iDA

- DB2 Unix / Linux

- Lotus Domino on Windows

- Active Directory iDA

Consistency, as mentioned earlier, is ensured by the iDA agent on the host: before a hardware-assisted snapshot is taken on the storage side, the agent “prepares” the application. A backup of this kind can be run many times, even in the middle of the working day, because clients connected to these applications will not notice the process.
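The order of operations described above (prepare the application, take the storage snapshot, release the application) can be sketched roughly as follows. This is only an illustration: `RecordingAgent`, `application_prepared`, `consistent_snapshot`, and `fake_storage_snapshot` are hypothetical names, not part of SnapProtect or any NetApp API, and the "snapshot" is a stub that just records that it ran.

```python
from contextlib import contextmanager


class RecordingAgent:
    """Hypothetical stand-in for an iDA agent; it only records the call order."""

    def __init__(self):
        self.events = []

    def quiesce(self):
        # A real agent would put the application into a consistent state here.
        self.events.append("quiesce")

    def resume(self):
        self.events.append("resume")


@contextmanager
def application_prepared(agent):
    """Hold the application in its prepared state for the duration of the block."""
    agent.quiesce()
    try:
        yield agent
    finally:
        # Always release the application, even if the snapshot step fails.
        agent.resume()


def consistent_snapshot(agent, take_snapshot):
    """Prepare the application, take the storage snapshot, then release it."""
    with application_prepared(agent):
        return take_snapshot()


agent = RecordingAgent()


def fake_storage_snapshot():
    # Stub for the storage-side snapshot; returns a made-up snapshot name.
    agent.events.append("snapshot")
    return "snap_0001"


name = consistent_snapshot(agent, fake_storage_snapshot)
print(agent.events)  # ['quiesce', 'snapshot', 'resume']
```

The `finally` clause reflects the design goal that the application is never left in its prepared state, which is what keeps the procedure invisible to connected clients.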

Replication of (consistent) snapshots between storage systems can be done using SnapMirror or SnapVault.

Data is copied from the primary or backup system to the tape library through the media agent, for both SAN and NAS data. For NAS data, you can also write directly from NetApp storage to a tape library using NDMP or SMTape; unfortunately, data cataloging is not supported in this case.
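As a compact summary of the tape options just described, here is a small lookup sketch. The function name `tape_paths` and the path labels are hypothetical. That cataloging is available on the media agent path is my reading of the text, which only states that it is not supported on the direct path.

```python
def tape_paths(data_kind):
    """Return (path, cataloging_supported) pairs for "SAN" or "NAS" data."""
    kind = data_kind.upper()
    if kind not in {"SAN", "NAS"}:
        raise ValueError(f"unknown data kind: {data_kind!r}")
    # The media agent path is available for both SAN and NAS data.
    paths = [("media agent", True)]
    if kind == "NAS":
        # Direct storage-to-tape copy is NAS only and is not cataloged.
        paths.append(("direct NDMP/SMTape", False))
    return paths


print(tape_paths("SAN"))  # [('media agent', True)]
print(tape_paths("NAS"))  # [('media agent', True), ('direct NDMP/SMTape', False)]
```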

Cataloging is performed as a post-process by mounting cloned snapshots (FlexClone technology) from the NetApp storage.

Data can be recovered from local storage, from remote storage, or from the tape library.

There are several ways to recover, depending on the type of data, the type of recovery, and where the data is located.

To restore an entire Volume or qtree from a snapshot on local storage, you can use SnapRestore, which reverts to the snapshot almost instantly on the storage system itself. For a similar recovery from a remote system, you need to perform “reverse replication” using SnapMirror or SnapVault. You can replicate back not only the latest data but also any one of the snapshots held on the remote storage.

When “granular recovery” is needed, the internal logic is as follows: a snapshot on the remote or primary system is cloned and attached to a media agent, and the required objects are then transferred from the media agent to the target host. Recovery from the tape library works in a similar way: through the media agent to the host.
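The recovery paths described above can be summarized as a small decision function. This is a sketch of the article's logic only, not of SnapProtect's actual implementation; `Source`, `Scope`, and `recovery_steps` are hypothetical names, and the step descriptions are plain strings rather than real commands.

```python
from enum import Enum


class Source(Enum):
    LOCAL = "local"
    REMOTE = "remote"
    TAPE = "tape"


class Scope(Enum):
    WHOLE = "whole volume or qtree"
    GRANULAR = "individual objects"


def recovery_steps(source, scope):
    """Return the ordered steps for a given data location and recovery scope."""
    if source is Source.TAPE:
        # Tape recovery always goes through the media agent to the host.
        return ["read data from tape on the media agent",
                "transfer data from the media agent to the host"]
    if scope is Scope.GRANULAR:
        return [f"clone the snapshot on {source.value} storage (FlexClone)",
                "attach the clone to the media agent",
                "transfer the required objects from the media agent to the host"]
    if source is Source.LOCAL:
        return ["revert the volume or qtree to the snapshot (SnapRestore)"]
    return ["reverse-replicate the chosen snapshot (SnapMirror / SnapVault)"]


for step in recovery_steps(Source.REMOTE, Scope.GRANULAR):
    print(step)
```

The branches make one point visible: only whole-volume recovery depends on SnapRestore or replication, while granular recovery relies on FlexClone and the media agent regardless of which storage system holds the snapshot.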

Technologies required on the storage systems:

To sum up: FlexClone, SnapRestore, one of the replication licenses (SnapVault / SnapMirror), and SnapProtect itself must be present at the primary site.

On the backup storage, FlexClone is required if cataloging will be performed there. If the backup / restore scheme requires the ability to quickly restore a Volume from a snapshot on the remote storage, that storage also needs SnapRestore. Since data is replicated from the primary system to the remote system, the remote system must support the replication technology as well (SnapVault / SnapMirror). On top of all this, it needs support for SnapProtect itself.
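The licensing rules from the two paragraphs above can be written down as a short function. This is a sketch under the article's description only, not an official licensing tool; `required_licenses` and its parameters are hypothetical names.

```python
REPLICATION = "SnapVault or SnapMirror"


def required_licenses(site, catalog_on_backup=False, fast_restore_on_backup=False):
    """Return the set of licenses a site needs in the two-site model."""
    if site == "primary":
        return {"FlexClone", "SnapRestore", REPLICATION, "SnapProtect"}
    if site == "backup":
        # Replication and SnapProtect are always needed on the backup side.
        licenses = {REPLICATION, "SnapProtect"}
        if catalog_on_backup:
            licenses.add("FlexClone")    # cataloging works on cloned snapshots
        if fast_restore_on_backup:
            licenses.add("SnapRestore")  # quick volume revert on the remote system
        return licenses
    raise ValueError(f"unknown site: {site!r}")


print(sorted(required_licenses("backup", catalog_on_backup=True)))
# ['FlexClone', 'SnapProtect', 'SnapVault or SnapMirror']
```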

As a result, for the “two-site model” NetApp strictly defines which additional licenses the primary and backup storage need in order to deploy the SnapProtect architecture:

Granular recovery.

Recovery granularity means the ability to restore not an entire LUN / Volume, but individual objects or files located on the LUN. For a virtual infrastructure, for example, such an object can be a virtual machine, a virtual machine file, a vDisk, or individual files inside a virtual machine. For databases, it can be a single database instance. For Exchange, it can be a single mailbox or a single message, and so on.

SAN + Granular Backup and Restore

When the SnapProtect architecture is used with a SAN, application data that requires granular recovery (Exchange, Oracle, MS SQL, and others) must be placed on a separate dedicated LUN. The same rule applies when such applications run on virtualized servers: their data must be placed on a separate RDM disk. On the storage side, each LUN must reside in its own Volume. For example, for a virtualized Oracle database on a SAN, a separate LUN, connected as an RDM, must be allocated for each data type of that database, following exactly the same rules as if SnapManager were used instead of SnapProtect. That is, the logic of partitioning and placing disks for SnapProtect is the same as for SnapManager. Indeed, the technologies for taking consistent hardware-assisted snapshots are similar in both cases, if not identical.
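The two placement rules above (one LUN per Volume, one data type per LUN) are easy to check mechanically. Below is a minimal sketch of such a check; the `Lun` record, the `layout_problems` function, and the Oracle data type names in the example are all hypothetical and chosen purely for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Lun:
    name: str
    volume: str
    data_types: tuple  # kinds of application data stored on this LUN


def layout_problems(luns):
    """Return human-readable violations of the placement rules (empty if none)."""
    problems = []
    # Rule 1: each LUN must reside in its own Volume.
    per_volume = Counter(lun.volume for lun in luns)
    for volume, count in sorted(per_volume.items()):
        if count > 1:
            problems.append(f"volume {volume} holds {count} LUNs, expected 1")
    # Rule 2: each data type gets a dedicated LUN, so a LUN carries one type.
    for lun in luns:
        if len(lun.data_types) > 1:
            kinds = ", ".join(lun.data_types)
            problems.append(f"LUN {lun.name} mixes data types: {kinds}")
    return problems


layout = [
    Lun("lun_data", "vol_data", ("datafiles",)),
    Lun("lun_logs", "vol_shared", ("redo logs", "archive logs")),
    Lun("lun_ctl", "vol_shared", ("control files",)),
]
for problem in layout_problems(layout):
    print(problem)
# volume vol_shared holds 2 LUNs, expected 1
# LUN lun_logs mixes data types: redo logs, archive logs
```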

This example is covered in more detail in my article "SnapManager for Oracle & SAN network", published in a geek magazine.

Please report errors in the text by private message.

Remarks and additions, on the other hand, are welcome in the comments.