Automating the System Build

Pyotr Lukhin

To build and check only what has changed, the project must be divided into separate independent modules: for example, a server application, a client application, shared libraries, and externally built components. The more such modules there are, the more flexible the development and subsequent deployment of the system become. But do not get carried away and split it excessively: the smaller the parts, the more organizational problems you will face when a change touches several of them at once.
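
A minimal sketch of how a build could decide which modules to rebuild, assuming each module lives in its own directory and the dependencies between modules are known in advance. The module names, paths and the `affected_modules` function are hypothetical and serve only to illustrate the idea:

```python
from typing import Dict, Iterable, List, Set


def affected_modules(
    changed_files: Iterable[str],
    module_dirs: Dict[str, str],
    depends_on: Dict[str, List[str]],
) -> Set[str]:
    """Return the modules to rebuild: those with changed files plus everything that depends on them."""
    files = list(changed_files)
    # Modules whose own directory contains at least one changed file.
    dirty = {
        name
        for name, directory in module_dirs.items()
        if any(path.startswith(directory.rstrip("/") + "/") for path in files)
    }
    # Propagate to dependants until nothing new is added.
    grew = True
    while grew:
        grew = False
        for name, deps in depends_on.items():
            if name not in dirty and any(dep in dirty for dep in deps):
                dirty.add(name)
                grew = True
    return dirty


# Hypothetical layout: client and server both use the shared libraries.
MODULE_DIRS = {"shared": "src/shared", "server": "src/server", "client": "src/client"}
DEPENDS_ON = {"shared": [], "server": ["shared"], "client": ["shared"]}
```

With this layout, a change in the client's interface code touches only the client, while a change in the shared libraries also marks the server and the client for rebuilding.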

What kind of problems are these? Splitting the project into independent modules implies that developing one of them does not require changing the others. For example, if you need to change how the client application's graphical interface receives information from the user, there is no need to touch the server code. A reasonable decision, then, is to give those who work on the client only the source code related to it, and to replace the modules it depends on with ready-made, tested versions in the form of built libraries. What advantages does this give us:

As a result, the build will be much faster than if it built and checked all the components one after another, which means you can find out as quickly as possible whether the latest fixes introduced errors. These advantages, however, come at the cost of extra overhead for organizing work with the modular structure of the system:

There are different ways to split a system into modules. For some it is enough to divide the code into several independent projects or solutions; others prefer Git submodules or something similar. Whichever organization you choose, one sequential build or one optimized for working with modules, compiling the code gives you a set of libraries that you can already work with and, more interestingly, that you can test automatically.

When you need to write a program, the question immediately arises of how to verify that the built version meets all its requirements. If there are few of them, you can check each one and base your decision on the results. Usually, however, this is not the case: there are so many possible ways to use the program that it is hard even to describe them all, let alone check them.

Therefore, a different verification method is often taken as the standard: the system is considered verified if it successfully passes fixed scenarios, that is, predefined sequences of actions chosen as the most common among users. The method is quite reasonable: if errors are still found in the released version, those that get in the way of most people are the more critical ones, while those that appear only occasionally in rarely used functionality have lower priority.

How is testing conducted in this case? The system, ready for deployment, is handed to testers with one purpose: to go through all the predefined scenarios and tell the developers about any errors they encounter, as well as anything else they notice along the way that is not directly related to the scenarios. Such testing may drag on for several days, even with several testers.

Errors and remarks found during testing directly affect how long it takes: if no errors are found, less time is needed; otherwise it can grow significantly, because testers have to work out the conditions for reproducing each error or find ways around it so that the remaining scenarios can be tested. The best case, therefore, is one in which testing produces as few remarks as possible and can finish sooner without losing quality.

Some errors introduced into the source code are revealed by compilation. But compilation does not guarantee that the program will run stably and fulfill all its requirements. The errors that may remain can be divided, by how hard they are to identify and fix, into the following categories:

Typically, checks at this level are implemented with unit tests and integration tests. Ideally, every method of the program should have its own set of checks, but this is often problematic, either because the code under test depends on the environment in which it runs or because of the additional labor needed to create and maintain the tests.
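
To make this more concrete, here is a small sketch of a unit test written with Python's standard `unittest` module. The function under test, `parse_version`, is invented purely for illustration; the point is only that each check is small, independent of the environment, and returns a result that a build stage can act on:

```python
import unittest


def parse_version(text: str) -> tuple:
    """Hypothetical function under test: turn '1.2.3' into (1, 2, 3)."""
    return tuple(int(part) for part in text.split("."))


class ParseVersionTests(unittest.TestCase):
    def test_parses_three_components(self):
        self.assertEqual(parse_version("1.2.3"), (1, 2, 3))

    def test_rejects_non_numeric_component(self):
        # int("x") raises ValueError, which the caller is expected to handle.
        with self.assertRaises(ValueError):
            parse_version("1.x.3")


def run_tests() -> bool:
    """Run the test case and report success as a boolean for the build stage."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ParseVersionTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

A build step could call `run_tests()` and mark the stage as failed when it returns `False`.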

However, if sufficient resources are allocated to creating and maintaining the infrastructure for automatic testing of the built libraries, low-level errors can be detected even before the version reaches the testers. This, in turn, means that testers will run into obvious errors and crashes less often and will therefore check the system faster without losing quality.

After the build passes the library testing stage, the following can be said about the resulting system:

Thus, a fairly large number of errors have already been weeded out by this stage, and the following actions can take this into account. What can be done next:

It is worth mentioning priorities here. What is more important for your system: that it works, or that it complies with the accepted development rules? The following options are possible:

In any case, the build should be considered successful only after it has passed all its stages; prioritization only indicates what to focus on first. For example, building the documentation can be moved to after building the installers, so that the installers are available as soon as possible and so that, if they cannot be generated, no attempt is made to build the documentation at all.

For building documentation in .NET projects, SandCastle is very convenient (an article on its use: http://habrahabr.ru/post/102177/ ). Similar tools can probably be found for other programming languages.

After the library testing stage, the build can continue in two main directions. One consists of checking compliance with technical requirements or building the documentation; the other consists of preparing a version for distribution.

The internal organization of development is usually of little interest to end users. What matters to them is whether they can install the system on their computer, whether it updates correctly, whether it provides the declared functionality, and whether any additional problems arise when using it.

Therefore, to achieve this goal, the set of separate libraries has to be turned into something that can be handed to the user, that is, into installers. However, producing them successfully does not mean that everything is in order: they can and should be tested again, this time by performing actions not as a program would, but as the user would.

There are quite a few ways to build installers: for example, you can use the standard installer project in Visual Studio or create one in InstallShield. You can read about many other options on IXBT ( http://www.ixbt.com/soft/installers-1.shtml , http://www.ixbt.com/soft/installers-2.shtml , http://www.ixbt.com/soft/installers-3.shtml ).

The system's operability can be checked in different ways: first, individual units are checked during library testing, and the final decision is made by testers. However, their work can be simplified further by automatically walking through the main chains of work with the system and performing the actions a user could perform in real use.

Such automatic testing is often called interface testing, because while it runs, everything that would happen with an ordinary user happens on the computer screen: windows are displayed, mouse hover and click events are handled, text is typed into input fields, and so on. On the one hand, this gives a high degree of confidence in the system's operability: the main scenarios and requirements are described in the user's language and can therefore be written down as automated algorithms. On the other hand, additional difficulties appear here:

Summarizing these remarks, we can conclude the following: interface testing helps to make sure that all the tested requirements are fulfilled exactly as formulated, and all the checks, despite the time they take, will finish faster than if testers carried them out. However, everything has its price: in this case you pay with the labor of maintaining the interface test infrastructure, and you must be prepared for these tests to fail often.

If such difficulties do not stop you, it makes sense to look at the following tools for organizing interface testing: for example, TestComplete, Selenium, HP QuickTest, IBM Rational Robot or UI Automation.

What is the result? With a centralized source code repository, automatic builds can be run on a schedule or whenever changes are made. A certain set of actions is then performed, the result of which will be:

Thus, automatic builds take a huge layer of work off the system's developers and testers, and all of it is performed in the background without human intervention. You can submit your changes to the central repository and know that after a while everything will be ready for handing the version to the user or for final testing, without worrying about the intermediate steps. Or, at the very least, you will find out as soon as possible that the changes were incorrect.

Finally, I would like to touch briefly on what is called continuous integration. The basic principle of this approach is that after making changes, you find out as early as possible whether they lead to errors. With automatic builds this is quite simple to do, because a build by itself almost implements this principle; its bottleneck is the time it takes to run.

If we look at all the stages described above, it becomes clear that some of them take quite a lot of time (for example, static code analysis or building the documentation), while their results matter only in the long term. It is therefore reasonable to rearrange the parts of the automatic build so that whatever is guaranteed to block the release of a version (code that does not compile, failing tests) is checked as early as possible. For example, you can first build the libraries, run the tests on them and produce the installers, and postpone static code analysis, documentation and interface testing until later.

The failures that occur during a build can be divided into two groups: critical and non-critical. The first group covers stages whose failure makes it impossible to run the subsequent ones. For example, if the code does not compile, there is no point in running the tests, and if the tests fail, there is no need to build the documentation. Interrupting the build early in this way finishes it as soon as possible and avoids overloading the computers it runs on. If a stage's negative result is still a usable result (for example, code that is not formatted according to the standard does not prevent giving the installers to the user), it makes sense to take it into account in later analysis, but the build should not be stopped.
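
The distinction between critical and non-critical stages can be sketched as a small pipeline runner. This is only an illustration under simple assumptions: each stage is a function returning `True` on success, and the `Stage` and `run_pipeline` names are invented here rather than taken from any build server:

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Stage:
    name: str
    action: Callable[[], bool]   # returns True on success
    critical: bool = True        # a failed critical stage stops the build


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and return (build_succeeded, log).

    The build counts as successful only if every stage passed. A failed
    non-critical stage is recorded but does not stop the stages after it.
    """
    log: List[str] = []
    succeeded = True
    for stage in stages:
        ok = stage.action()
        log.append(f"{stage.name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            succeeded = False
            if stage.critical:
                log.append("stopped: critical stage failed")
                break
    return succeeded, log
```

Placing the fast, blocking stages (compilation, library tests, installers) first and the slow, informational ones (static analysis, documentation) last gives the earliest possible answer about whether a version can be released.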

As described above, a fairly large number of actions in building a system can be handed over to automation: the fewer routine tasks people perform, the more efficient their work becomes. However, the theoretical part alone is not enough to complete the article: without knowing whether all of this is practicable, it is unclear how much effort it would take to set up automatic builds yourself.

This article was not written from scratch. For several years, our company has repeatedly had to deal with optimizing the development and testing of software products. The most frequent of these tasks were brought together, summarized, supplemented with ideas and considerations, and arranged in the form of this article. At the moment, we use automatic builds most actively in the development and testing of the electronic document management system “E1 EVFRAT”. How exactly the principles described here are implemented in its development could be quite useful to read about, but it would be better to write a separate article on that topic than to try to extend the current one.

Most people who first learn about automatic builds are likely to be wary of them: the labor costs of setting them up and keeping them running are real, while all that is offered in return is a vague promise of improved development efficiency in the future. Those who know automatic builds firsthand are more optimistic, because they know that it is much easier to prevent a number of problems in a system before they arise than to deal with them when it is too late to change anything.

So why are automatic builds of such interest, what opportunities do they open up, and what results can be achieved? Let's try to figure it out.

Part 0: Instead of an Introduction

Before going into detail, one caveat: the topic of build automation is quite extensive, and there are many articles on how to implement one task or another. At the same time, there is little to read on how to set the whole thing up for yourself, with all the organizational intricacies and a description of the potential opportunities. This article therefore looks at automatic builds from that point of view. For those interested in how a particular stage is actually implemented, links to additional materials or the names of programs that can be used are given where possible.

When developing software, you often find that the time between changing the source code and delivering the change to the user depends on many factors. You must first build the code into libraries, then make sure they work, then build the installers and test the product once it is installed. None of these actions is instantaneous, so the delivery time is determined by how each of them goes.

On the one hand, all of these steps sound quite reasonable: why give the user a product that contains errors? In practice, however, the situation is aggravated by a constant lack of time and resources: bug fixes must be published as soon as possible, yet those responsible for the product's quality or its build are heavily engaged in other work where their presence is needed more.

As a result, some of the release steps are periodically skipped (for example, the code is not checked against the coding standard) or carried out only partially (for example, only the fix itself is checked, but not its possible impact on other functionality). The user then receives either a poorly tested or a partially inoperative solution, and the developers will have to deal with the consequences later, without knowing how much time that will take.

Such problems can, if not be avoided entirely, at least be reduced in impact. Most of the steps are fairly uniform and, more importantly, lend themselves well to being expressed as algorithms. So if you hand their execution over to automation, they will take less time, and the chance of making a mistake or skipping something necessary is minimized.

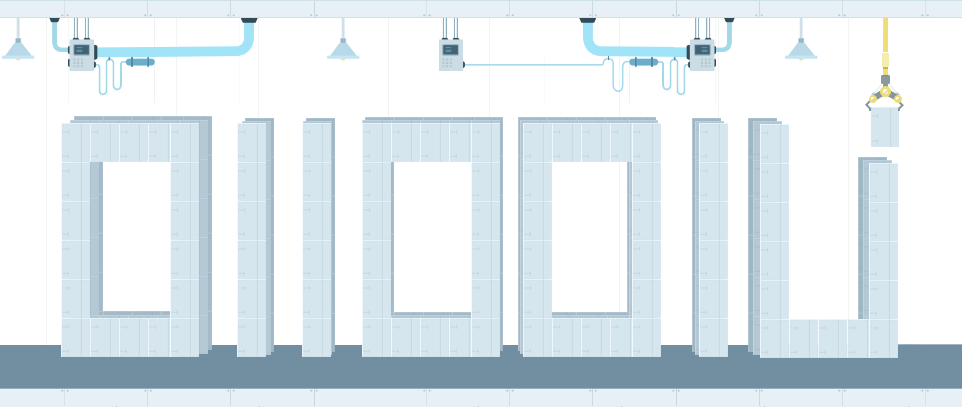

Part 1: Code

For a program to meet its requirements, they must first be written in a form the computer can understand. This transformation is what developers do, and the result of their work is the source code. Depending on the approach used, it can be executed either immediately or after compilation. One useful property of this representation is that different people can modify the system independently of each other, without direct communication, throughout the entire development period. Unlike spoken language, code is easier to work with, is better preserved, and can have its change history kept. In addition, a single style of code formatting and structuring allows developers to think in a similar way.

Version control systems make it easier to store source code and to work on it with many people at the same time. Examples include TFS, Git and SVN. Using them in a project reduces losses from problems such as:

- Getting several diverging variants of the same code when several people edit one file

- Losing the source code of an important component during a move or through an employee's forgetfulness

- Searching for the author of functionality in which an error was found or which needs improvement

With the source code stored centrally and updated in a timely manner, you not only need not worry that part of it will be lost (provided the storage itself is reliable) but, more interestingly, you can also perform actions on it automatically.

Depending on the version control system used, the way these actions are launched may vary: for example, periodically on a given schedule, or whenever the stored data changes. Such automated sequences of actions are typically used to check code quality and to build installers for users without human intervention, so they are often simply called automatic builds.

A simple script launched by the operating system's scheduler could serve as the basis for launching them, tracking code changes and publishing the results, but it is better to have a more specialized program do this. For example, if you use TFS, TFS Build Server might be a sensible choice. Or you can use general-purpose solutions such as TeamCity, Hudson or CruiseControl.
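
As a rough illustration of the "simple script" variant, the sketch below checks a repository for a new revision and starts a build only when one appears. It assumes a Git working copy with the `git` command available; the `run_build` callback and the function names are hypothetical placeholders, not part of any of the build servers mentioned above:

```python
import subprocess
from typing import Callable, Optional


def current_revision(repo_dir: str) -> str:
    """Return the commit hash of HEAD in a Git working copy (assumes git is installed)."""
    result = subprocess.run(
        ["git", "rev-parse", "HEAD"],
        cwd=repo_dir, capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def build_if_changed(
    last_built: Optional[str],
    revision: str,
    run_build: Callable[[str], bool],
) -> Optional[str]:
    """Start a build only when the revision differs from the last one built.

    Returns the revision now considered built. A failed build is not
    recorded, so the same revision is retried on the next poll.
    """
    if revision == last_built:
        return last_built          # nothing new in the repository
    succeeded = run_build(revision)
    return revision if succeeded else last_built
```

The operating system's scheduler would run a wrapper that calls `build_if_changed(saved, current_revision(path), run_build)` and stores the returned value for the next run. A specialized build server does the same job, plus queueing, history and publishing of results.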

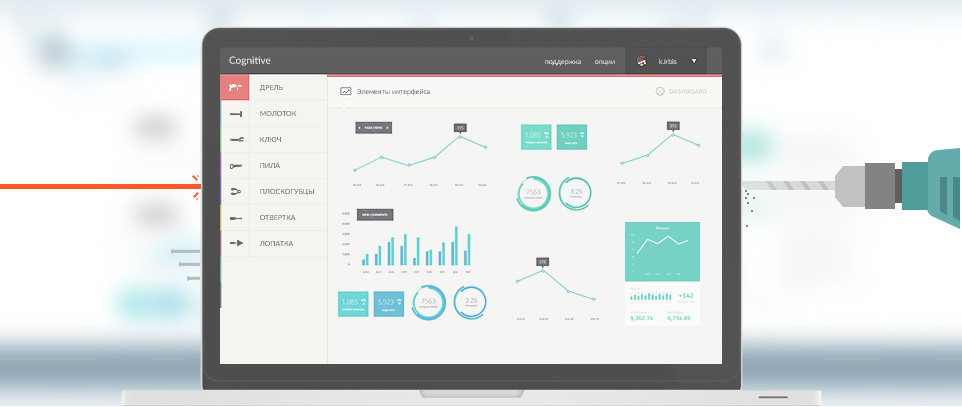

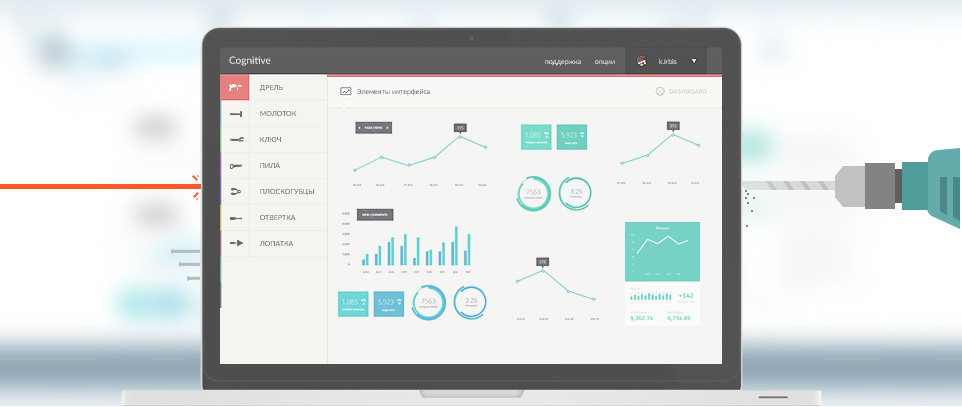

Part 2: Code Testing

What actions can be automated? Quite a lot of them, in fact:

- Building installers - users are usually interested in the system itself, not in how it was written or built, which means they should be able to install it on their own

- Producing updates - if your system supports updating, it is a good idea to produce not only full installers but also a set of incremental patches for a quick transition from one version to another

- Testing the system - users should receive only versions that are stable and implement the required functionality, so versions need to be checked, and some of the checks can be automated

- Building documentation - this is useful both when you expose the system's external API to third-party developers and during internal development, because the larger the system and the more code it contains, the fewer people know it thoroughly

- Checking version compatibility - by comparing different versions of the compiled libraries, you can notice in time when a module's external API changes: if something in it stops being visible from the outside, modules or programs that use it may be incompatible with the new version; conversely, a large number of functions missing from the API documentation indicates that they must either be added to it or hidden from outside view

- Checking accepted rules and standards - when the code is written in a uniform way, any developer of the system can navigate it freely. If something is not found in the expected place or is written in a non-standard form, then even if it is eventually found and understood, extra time is spent that could have been avoided

- Checking the build itself - it can be quite unpleasant to discover, after using an automatic build for a long time, that it was not doing what it was intended to do: for example, not all tests were run because they were not found, or the documentation for a rarely used module, which is suddenly needed urgently, was never built

This is only part of what could be useful in your project; in fact, there are many more such items.
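
To illustrate one item from the list, the version compatibility check, here is a minimal sketch. It assumes that the public names of each library version have already been extracted into sets by some other step (for example, by reflection over the compiled libraries); that extraction, like the `ApiDiff` and `compare_public_api` names, is an assumption made for the example:

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class ApiDiff:
    removed: Set[str]        # visible before, gone now: dependants may break
    added: Set[str]          # new public names
    undocumented: Set[str]   # public in the new version but absent from the docs


def compare_public_api(old: Set[str], new: Set[str], documented: Set[str]) -> ApiDiff:
    """Compare the public names of two library versions and of the API documentation."""
    return ApiDiff(
        removed=old - new,
        added=new - old,
        undocumented=new - documented,
    )
```

A non-empty `removed` set is a reason to warn about possible incompatibility, while a growing `undocumented` set suggests that the names should either be documented or hidden.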

But what exactly can be automated when all you have is the program's source code, albeit stored centrally? Not much, since in this case only its files can be analyzed, which imposes limitations: you either use only the simplest checks, or find and use third-party static code analyzers, or write your own. Even so, there are benefits to be had:

- Checking that files and directories exist and follow the naming and nesting rules: unlikely to be useful to most developers, but such situations do occur

- Analyzing the contents of source files for compliance with accepted standards: for example, for some time it was common to place a multi-line comment at the beginning of each file describing its functionality and naming the author

- Checking that files meet the requirements of external applications: for example, if you intend to run tests later in the build, you need to make sure that the file describing them is configured correctly and that the tests can find the libraries under test when they are launched

- Static code analysis - when developers introduce errors through carelessness, some of them are not caught even by tests, and only a close examination of the source code helps to find them. External analyzers can identify such cases automatically, which greatly simplifies development

- Collecting various statistics: the number of lines in the code files, whether any pieces of functions are completely identical, which files are edited most often. This is not the most important information, but sometimes it lets you look at the project a little differently
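
Two of the simpler checks from this list can be sketched in a few lines. The example assumes C# sources (`*.cs`) and a header comment that starts with `/*`; both are assumptions chosen for illustration, as are the function names:

```python
from pathlib import Path
from typing import Dict, List


def files_missing_header(root: Path, pattern: str = "*.cs", marker: str = "/*") -> List[Path]:
    """Return source files whose first non-blank line does not start with the marker."""
    missing = []
    for path in sorted(root.rglob(pattern)):
        lines = path.read_text(encoding="utf-8", errors="replace").splitlines()
        first = next((line.strip() for line in lines if line.strip()), "")
        if not first.startswith(marker):
            missing.append(path)
    return missing


def line_counts(root: Path, pattern: str = "*.cs") -> Dict[str, int]:
    """Map each matching file (path relative to root) to its number of lines."""
    counts = {}
    for path in sorted(root.rglob(pattern)):
        text = path.read_text(encoding="utf-8", errors="replace")
        counts[path.relative_to(root).as_posix()] = len(text.splitlines())
    return counts
```

The first function is a standards check whose result might fail or merely annotate the build; the second is purely informational statistics.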

The results of this assembly stage can be interpreted in different ways: some of the checks are purely informational in nature (for example, statistics), another indicates possible problems in the long term (for example, code execution or comments from a static analyzer), and the third - to complete the assembly in General (for example, the lack of files in the right places). Also, do not forget that the checks still take some time, sometimes significant, so in each case you should prioritize: what is more important for you - the mandatory passage of all checks or the fastest response from the later stages of the assembly.

As the list above shows, most tasks at this stage are quite superficial (for example, collecting statistics or checking for the presence of files), so it is easier to write your own utilities or scripts for them than to look for ready-made ones. Others, on the contrary, analyze the internal logic of the code itself, which is much harder, so third-party solutions are preferable here. For example, for static analysis you can try PVS-Studio, Cppcheck, RATS, Graudit, or look for something in other sources ( http://habrahabr.ru/post/75123/ , https://ru.wikipedia.org/wiki/Static_analysis_code ).
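As an illustration of how small such home-made checks can be, here is a minimal Python sketch of three of them: required files, a header comment in each source file, and a line-count statistic. The file names in `REQUIRED_FILES`, the `*.py` pattern and the "first line must be a comment" rule are assumptions invented for the example, not rules from any real project.

```python
from pathlib import Path

# Hypothetical project rules, chosen only for illustration.
REQUIRED_FILES = ["README.txt", "tests/tests.cfg"]
HEADER_PREFIX = "#"  # assumed rule: every source file starts with a comment line


def missing_files(root: Path, required: list[str]) -> list[str]:
    """Return the required paths that do not exist under root."""
    return [name for name in required if not (root / name).exists()]


def files_without_header(root: Path, pattern: str = "*.py") -> list[str]:
    """Return source files whose first line is not a comment."""
    offenders = []
    for path in sorted(root.rglob(pattern)):
        lines = path.read_text(encoding="utf-8").splitlines()
        first_line = lines[0] if lines else ""
        if not first_line.startswith(HEADER_PREFIX):
            offenders.append(path.relative_to(root).as_posix())
    return offenders


def line_counts(root: Path, pattern: str = "*.py") -> dict[str, int]:
    """Collect a purely informational statistic: lines per source file."""
    counts = {}
    for path in sorted(root.rglob(pattern)):
        text = path.read_text(encoding="utf-8")
        counts[path.relative_to(root).as_posix()] = len(text.splitlines())
    return counts
```

A missing required file would normally stop the assembly, a missing header would be reported as a remark, and the line counts would simply be published with the results.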

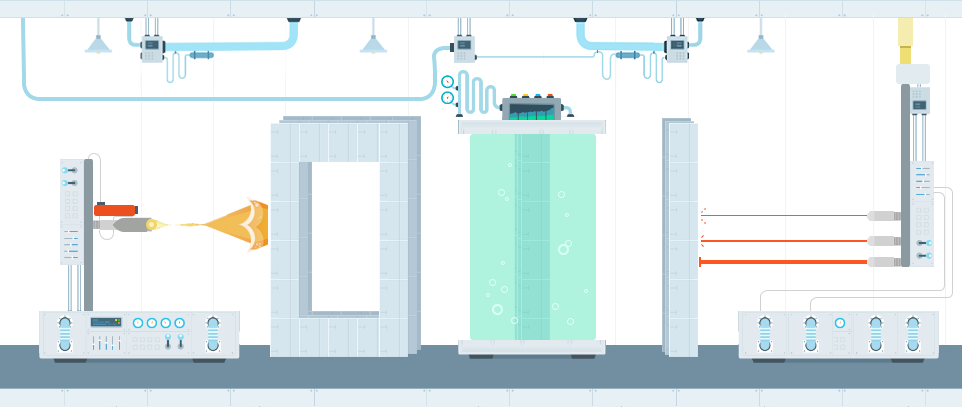

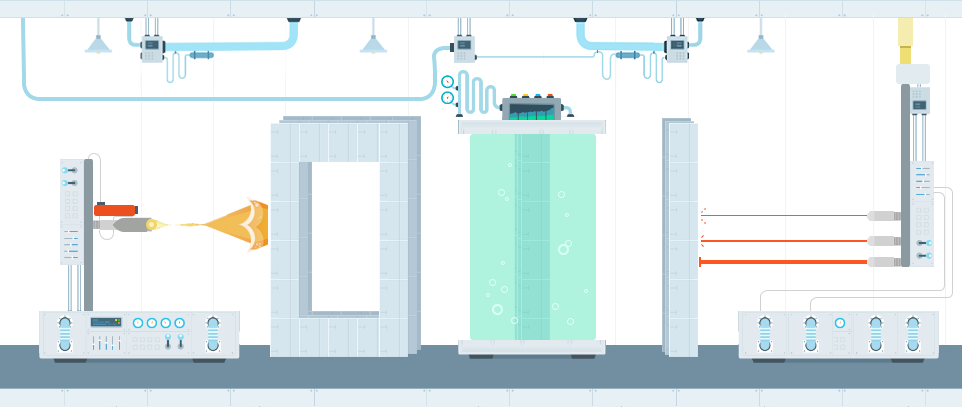

Part 3: Assembly

However, processing information about the source files is usually not the immediate goal of the assembly. Therefore, the next step is to compile the program code into a set of libraries that implement the logic described in it. If the code cannot be compiled, then with automatic assembly this becomes clear soon after launch, and not a few days later when the assembled version is needed. The effect is especially good when the assembly starts immediately after the publication of broken code that resulted from combining several edits.

Running a little ahead, I would like to note one more fact: the more additional logic you implement in the assembly process, the longer it takes to execute, which is not surprising. Therefore, a logical step is to arrange for that logic to run only for the code that has really changed. How can this be done? Most likely, the code of your product is not a single inseparable entity; if it is, then either your project is still small enough, or it is a tightly interwoven tangle of relationships that makes it harder to understand not only for outsiders but also for the developers themselves.

To do this, the project must be divided into separate independent modules: for example, a server application, a client application, shared libraries, externally built components. The more such modules there are, the more flexible the development and further deployment of the system will be, but do not get carried away and split it excessively: the smaller the parts, the more organizational problems you will have to deal with when changing several of them at once.

What kind of problems are these? Splitting the project into independent modules implies that developing one of them normally does not require changes to the others. For example, if you need to change how information is received from the user in the graphical interface of the client application, there is no need to change the server code. So a reasonable decision is to give those who work on the client only the source code related to it, and to replace the modules it uses with ready-made, tested versions in the form of assembled libraries. What advantages do we get in this case:

- Assembling a specific module will not rebuild the components it depends on if no changes were made to them

- There is no need to check the internal logic of the modules it depends on, since they are considered sufficiently tested

As a result, the assembly will be much faster than if it assembled and checked all the components in sequence, which means you can find out as quickly as possible whether the current fixes introduced errors. However, these advantages come at the price of extra overhead for organizing work with the modular structure of the system:

- If a change in functionality affects several modules rather than one, you must determine in what order they should be assembled, and only then proceed with compilation. It would be nice if this could be determined automatically

- The assembly of each individual module should guarantee not only its own operability but also the operability of the modules that depend on it. To solve this problem, you can define fixed interfaces that the module must implement and that are present in every version. This may turn out to be quite laborious; however, in large or highly distributed projects such work is still preferable to fixing the compatibility errors that might otherwise occur.
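Determining the assembly order automatically comes down to a topological sort of the module dependency graph. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later); the module names and the `DEPENDENCIES` table are hypothetical and only serve the example.

```python
from graphlib import TopologicalSorter

# Hypothetical module graph: each module maps to the modules it depends on.
DEPENDENCIES = {
    "shared": set(),
    "server": {"shared"},
    "client": {"shared"},
    "installer": {"server", "client"},
}


def build_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return modules ordered so that dependencies come before dependents.

    Raises graphlib.CycleError if the modules depend on each other in a cycle.
    """
    return list(TopologicalSorter(dependencies).static_order())
```

With this table, `build_order(DEPENDENCIES)` places `shared` before `server` and `client`, and `installer` last. A cyclic dependency is reported as an error instead of producing an order, which is itself a useful check on the module structure.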

There are different ways to split a system into modules. For some it is enough to split the code into several independent projects or solutions; others prefer to use submodules in Git or something similar. However, whichever method you choose, one sequential assembly or one optimized for working with modules, compiling the code gives you a set of libraries that you can already work with and, more interestingly, that you can test automatically.
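The idea of running the assembly only for the code that really changed can be sketched as follows. The example assumes that each module lives in its own directory and that a changed module also requires re-checking every module that depends on it; the directory layout, the module names and that policy are assumptions made for illustration.

```python
from pathlib import PurePosixPath

# Hypothetical layout: each module lives in its own directory.
MODULE_DIRS = {
    "shared": "src/shared",
    "server": "src/server",
    "client": "src/client",
}
# Each module maps to the modules it depends on (also hypothetical).
DEPENDS_ON = {"shared": set(), "server": {"shared"}, "client": {"shared"}}


def changed_modules(changed_files):
    """Map changed file paths to the modules that own them."""
    result = set()
    for name in changed_files:
        parents = PurePosixPath(name).parents
        for module, directory in MODULE_DIRS.items():
            if PurePosixPath(directory) in parents:
                result.add(module)
    return result


def modules_to_rebuild(changed_files):
    """Changed modules plus every module that depends on them, transitively."""
    affected = changed_modules(changed_files)
    grew = True
    while grew:
        grew = False
        for module, deps in DEPENDS_ON.items():
            if module not in affected and deps & affected:
                affected.add(module)
                grew = True
    return affected
```

An edit to a file under `src/client` selects only `client`, while an edit under `src/shared` selects all three modules, because `server` and `client` both depend on `shared`.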

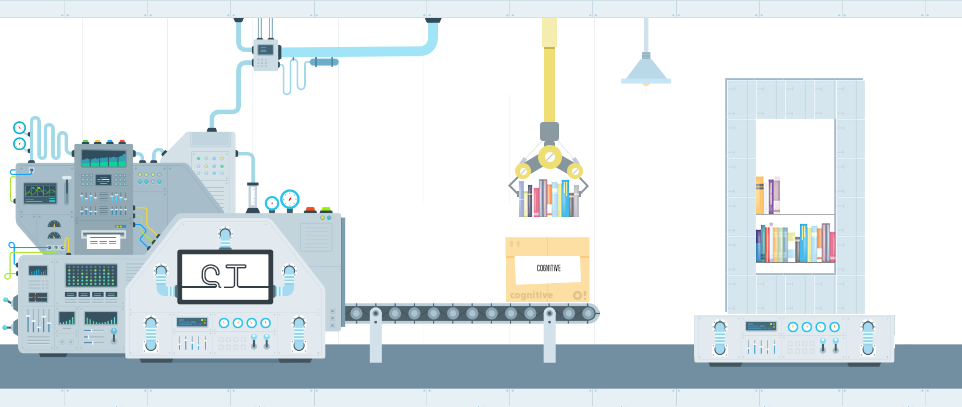

Part 4: Testing Libraries

When a program has to be written, the question immediately arises: how do you verify that the assembled version meets all the requirements? It is good if there are only a few of them: then you can go through them all and base your decision on the results. However, this is usually not the case: there are so many possible ways to use the program that it is difficult even to describe them all, let alone check them.

Therefore, a different verification method is often taken as the standard: the system is considered to have passed if it successfully completes fixed scenarios, that is, predefined sequences of actions selected as the most common among users of the system. The method is quite reasonable: if errors are still found in the released version, those that interfere with most people are more critical, and those that appear only occasionally in rarely used functionality have lower priority.

How is testing conducted in this case? The system that is ready for deployment is handed to testers for one purpose: to go through all the predefined scenarios and tell the developers whether they encountered any errors, including anything they noticed along the way that is not directly related to the scenarios. Such testing may drag on for several days, even with several testers.

Errors and remarks found during testing directly affect how long it takes: if no errors are found, less time is needed; otherwise the time can grow significantly, because testers must work out the conditions for reproducing each error or find a way around it so that the other scenarios can still be tested. Therefore, the best case is one in which testing produces as few remarks as possible and can finish sooner without losing quality.

Some errors that can be introduced into the source code are revealed by compilation. But it does not guarantee that the program will work stably and fulfill all its requirements. The errors that may remain can be divided, by how hard they are to identify and correct, into the following categories:

- Trivial errors: these include, for example, typos in messages or other minor corrections. Often more time is spent on conveying the information to the developer than on the fix itself. Checks for such errors can be automated, but it is usually not worth the effort.

- Errors in individual parts of the code: these may occur if the developer has not foreseen every way the methods can be used, so that they fail or return an unexpected result. Unlike the other categories, such errors can well be detected automatically: when writing the code, the developer usually understands how it will be used, which means that this can be described in the form of checks

- Errors in the interaction of parts of the code: each specific part is usually developed by one person, but when there are many parts, understanding how they work together requires shared knowledge. Here it is a little harder to determine all the ways the code can be used; still, with proper study, such situations can also be formalized as automatic checks, though only for some scenarios

- Errors of logic: cases where the program does not match the requirements placed on it. They are rather difficult to describe as automated checks, since the requirements are formulated in different terminology than the code. Therefore, errors of this kind are better detected by manual testing.

Typically, checks at this level are implemented as unit tests and integration tests. Ideally, every method of the program should have its own set of checks, but this is often problematic: either because the code under test depends on the environment in which it must run, or because of the additional labor needed to create and maintain the tests.
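To show what "describing the expected use of a method in the form of checks" can look like, here is a small sketch using Python's standard `unittest` module. The function `split_full_name` is a hypothetical piece of code under test, invented for the example; the tests cover both the normal case and inputs the developer might otherwise overlook.

```python
import unittest


def split_full_name(full_name: str) -> tuple[str, str]:
    """Hypothetical function under test: split "First Last" into two parts."""
    parts = full_name.split()
    if len(parts) != 2:
        raise ValueError(f"expected exactly two words, got {len(parts)}")
    return parts[0], parts[1]


class SplitFullNameTests(unittest.TestCase):
    def test_regular_name(self):
        self.assertEqual(split_full_name("Ada Lovelace"), ("Ada", "Lovelace"))

    def test_extra_whitespace_is_ignored(self):
        self.assertEqual(split_full_name("  Ada   Lovelace "), ("Ada", "Lovelace"))

    def test_single_word_is_rejected(self):
        with self.assertRaises(ValueError):
            split_full_name("Ada")

    def test_empty_string_is_rejected(self):
        with self.assertRaises(ValueError):
            split_full_name("")


def run_tests() -> bool:
    """Run the checks above and report whether all of them passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(SplitFullNameTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

An assembly step could call `run_tests()` (or an equivalent test runner) and treat a `False` result as a failed stage.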

However, if sufficient resources are allocated to building and maintaining the infrastructure for automatic testing of the assembled libraries, low-level errors can be detected even before the version reaches the testers. This, in turn, means that testers will come across obvious errors and crashes less often and, therefore, will check the system faster without losing quality.

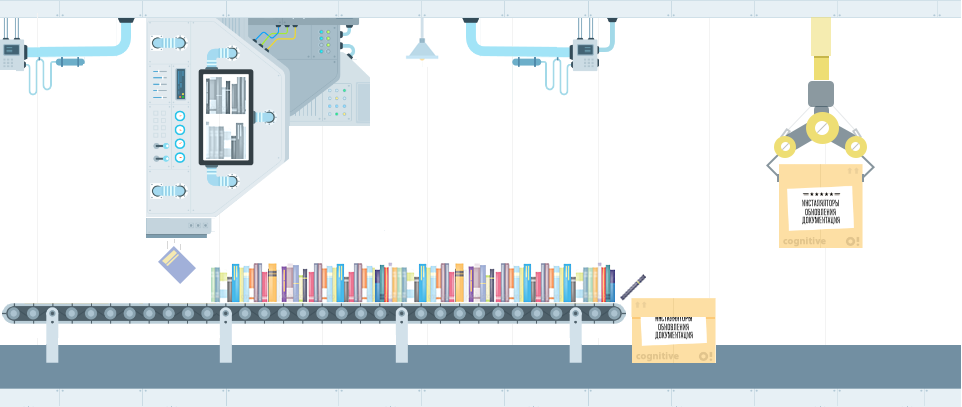

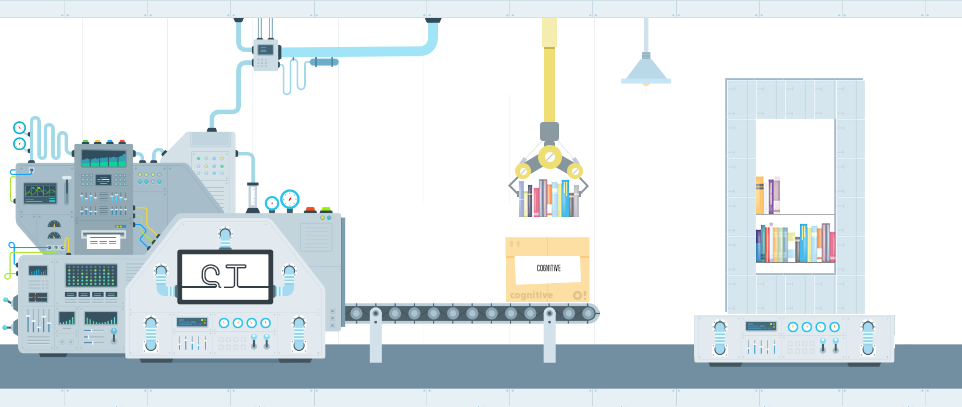

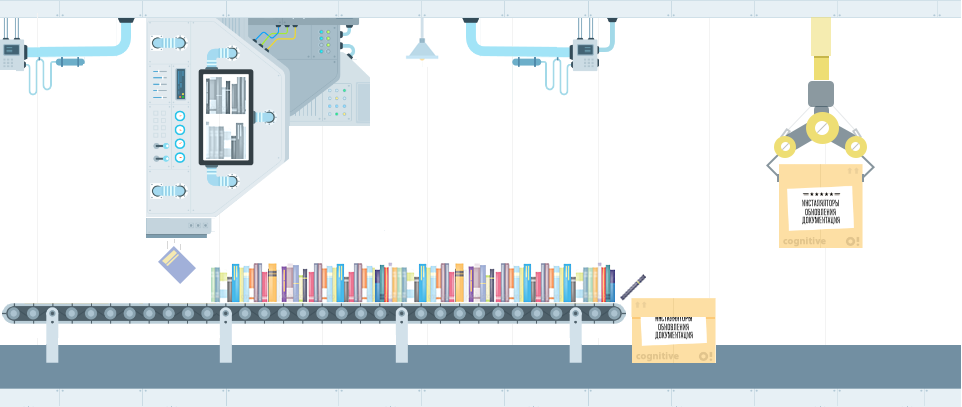

Part 5: Library Analysis

After the assembly passes the testing stage of the libraries, the following can be said about the resulting system:

- In addition to the program source code, compiled libraries are also available for the next steps

- There is some formal way to test part of the functionality, and it was successfully passed.

Thus, a rather large number of errors have already been ruled out at this stage, and the following actions can take this into account. What can be done next:

- Assemble the documentation: this operation is not fast and is needed only for released versions, so generating it would be rather pointless if the software contained obvious errors

- Since all the assembled libraries are available for analysis, you can check version compatibility: for example, make sure that all declared methods of the external API are available for use and, conversely, that nothing beyond what the requirements specify is exposed
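The API check from the last item can be sketched as a comparison of two sets of names: what was declared and what the library actually exposes. In the example below, the declared API, the module name `transport` and its functions are all hypothetical; an in-memory Python module stands in for a compiled library.

```python
import types

# Hypothetical declared public API, e.g. taken from the requirements.
DECLARED_API = {"connect", "send", "close"}


def public_names(module) -> set[str]:
    """Names the module exposes: __all__ if present, else public callables."""
    if hasattr(module, "__all__"):
        return set(module.__all__)
    return {
        name
        for name, value in vars(module).items()
        if not name.startswith("_") and callable(value)
    }


def compare_api(module, declared: set[str]) -> tuple[set[str], set[str]]:
    """Return (missing, undocumented) names relative to the declared API."""
    actual = public_names(module)
    return declared - actual, actual - declared


def demo_module() -> types.ModuleType:
    """Stand-in for an assembled library, built in memory for the example."""
    module = types.ModuleType("transport")
    module.connect = lambda: None
    module.send = lambda data: None
    module.debug_dump = lambda: None  # exposed, but not declared
    return module
```

For the demo module, `compare_api(demo_module(), DECLARED_API)` reports `close` as missing and `debug_dump` as undocumented. Depending on your priorities, either finding can be treated as a remark or as a reason to fail the stage.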

It is worth mentioning the priorities here. What is more important for your system: its operability or compliance with accepted development rules? The following options are possible:

- If getting the program to the user is more important than simplifying its support, it makes sense to concentrate on building and checking installers

- If it is important that the program always meets the stated requirements and has no undocumented API capabilities, then library analysis has higher priority

However, keep in mind that in any case the assembly should be considered successful only after it has passed all its stages; prioritization only indicates what to emphasize first. For example, the documentation stage can be moved after the assembly of the installers, so that the installers are available as soon as possible and, if they cannot be generated, no attempt is made to build the documentation.

To build documentation in .NET projects, it is very convenient to use the SandCastle program (article about its use: http://habrahabr.ru/post/102177/ ). Similar tools can probably be found for other programming languages as well.

Part 6: Building installers and updates

After the library testing phase, the assembly can proceed in two main directions. One consists in checking compliance with technical requirements or in assembling documentation; the other consists in preparing a version for distribution.

The internal organization of system development is usually of little interest to the end user: what matters to him is whether he can install the system on his computer, whether it will be updated correctly, whether it provides the declared functionality, and whether any additional problems arise when using it.

Therefore, to achieve this goal, the set of disparate libraries must be turned into something that can be handed to the user. However, successfully producing installers does not mean that everything is in order: they can and should be tested again, this time by performing actions not as a computer would, but as the user himself would.

There are quite a few ways to build installers: for example, you can use the standard installer project in Visual Studio or create one in InstallShield. You can read about many other options on IXBT ( http://www.ixbt.com/soft/installers-1.shtml , http://www.ixbt.com/soft/installers-2.shtml , http://www.ixbt.com/soft/installers-3.shtml ).

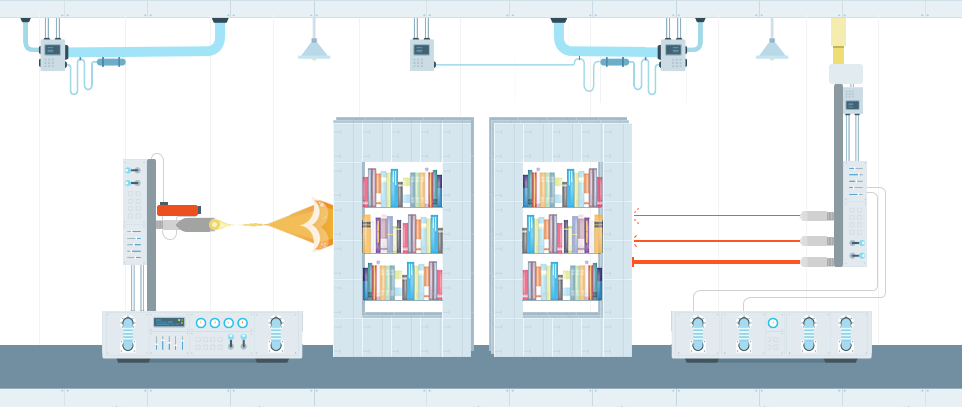

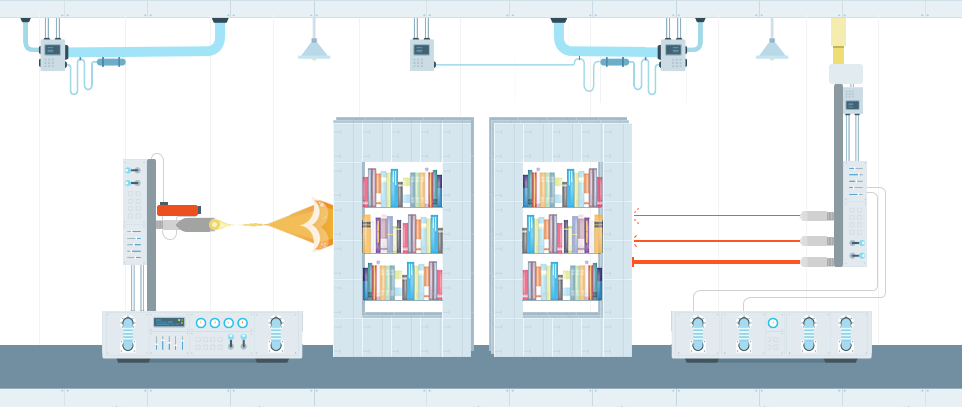

Part 7: Interface Testing

The system’s operability can be checked in different ways: first, individual units are checked during library testing, and the final decision is made by testers. However, their work can be simplified further: try to go automatically through the main chains of work with the system and perform all the actions that a user could perform in real use.

Such automatic testing is often called interface testing, because during its execution everything happens on the computer screen just as it would for an ordinary user: windows are displayed, hover and mouse click events are handled, text is typed into input fields, and so on. On the one hand, this gives a high degree of confidence in the system’s operability: the main scenarios and requirements are described in the user’s language and can therefore be written as automated algorithms. On the other hand, additional difficulties appear:

- Interface testing is very unstable: while the logic of a small part of the code or of several interacting areas rarely changes, interface tests are affected by an order of magnitude more changes. Change the layout, and it becomes unclear where to click; change a window title, and it becomes unclear whether the window appeared or not

- Tests run much longer than regular ones: you need to verify not only that the program works, but also that it is installed, updated and removed correctly, and that all scenarios pass, possibly more than once. Displaying information on the screen and interacting with the program through the interface also slow the check down considerably

Summarizing these remarks, we can conclude the following: interface testing helps to make sure that all the tested requirements are fulfilled exactly as they were formulated, and all checks, despite the time they take, will be completed faster than if testers carried them out. However, you have to pay for everything: in this case, with the labor of maintaining the interface test infrastructure, and you must be prepared for the tests to fail often.

If such difficulties do not stop you, then it makes sense to pay attention to the following tools for organizing interface testing: for example, TestComplete, Selenium, HP QuickTest, IBM Rational Robot or UI Automation.

Part 8: Publish Results

What is the result? With a centralized source code repository, automatic assemblies can be run on a schedule or whenever changes are made. A certain set of actions is then performed, which results in:

- Installers and system updates are usually the main goal of the assembly, because in the end they will be transferred to the user

- Test results - automated assembly allows you to find out about a sufficiently large layer of errors even before the version is given to testers

- Documentation - may be useful both for the system developers themselves and those who will integrate with it

- Compatibility check results - allow you to make sure that unknown modifications to the system will work with its new version

Thus, we can say the following: automatic assembly takes a huge layer of work off the developers and testers of the system, and all of it is performed in the background without user intervention. You can submit your corrections to the central repository and know that after a while everything will be ready to hand the version to the user or to final testing, without worrying about the intermediate steps, or at least find out as soon as possible that the edits were incorrect.

Part 9: Optimization

In the end, I would like to touch briefly on what is called continuous integration. The basic principle of this approach is that after making corrections, you find out as early as possible whether they lead to errors. With automatic assembly this is quite simple to do, because it almost implements this principle by itself; its bottleneck is the time it takes to run.

If we consider all the stages as described above, it becomes clear that some of their components take quite a lot of time (for example, static code analysis or assembly of documentation) while their results matter only in the long term. Therefore, it is reasonable to rearrange the parts of the automatic assembly so that whatever is guaranteed to block the release of the version (code that cannot be compiled, failing tests) is checked as early as possible. For example, you can first build the libraries, run tests on them and produce installers, and postpone static code analysis, assembly of documentation and interface testing to a later time.

As for the errors that arise during the assembly, they can be divided into two groups: critical and non-critical for it. The first group comes from stages whose negative result makes it impossible to launch the subsequent ones. For example, if the code does not compile, there is no point in running the tests, and if the tests fail, there is no need to build documentation. Interrupting the assembly early in this way completes it as soon as possible and does not overload the computers on which it runs. If a negative result of a stage is still an acceptable result (for example, code that is not formatted according to the standard does not prevent handing the installers to the user), then it makes sense to take it into account in further work, but the assembly should not be stopped.
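The distinction between critical and non-critical stages can be expressed in a few lines. The sketch below is a minimal stage runner in Python; the `Stage` structure, the status strings and the stage names used in the usage note are assumptions made for the example and do not describe any particular build server.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True on success
    critical: bool           # a failed critical stage stops the assembly


def run_pipeline(stages: list[Stage]) -> dict[str, str]:
    """Run stages in order; after a critical failure, skip everything else."""
    report: dict[str, str] = {}
    aborted = False
    for stage in stages:
        if aborted:
            report[stage.name] = "skipped"
        elif stage.run():
            report[stage.name] = "ok"
        elif stage.critical:
            report[stage.name] = "failed"
            aborted = True
        else:
            # Non-critical failure: record it, but keep going.
            report[stage.name] = "warning"
    return report
```

For instance, with the stages `compile` (critical), `code style` (non-critical), `tests` (critical) and `documentation` listed in that order, a style violation only produces a warning, while a failing test marks `tests` as failed and leaves `documentation` skipped.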

Part 10: Conclusion

As described above, a fairly large number of actions during the assembly of the system can be handed over to automation: the fewer monotonous tasks people perform, the more efficient their work will be. However, the theoretical part alone is not enough to complete the article: if you do not know whether all this is practicable, it is not clear how much effort it would take to set up automatic assembly yourself.

This article was not written from scratch. For several years, our company has repeatedly had to deal with the task of making the development and testing of software products more efficient. As a result, the most frequent of these tasks were brought together, summarized, supplemented with ideas and considerations, and arranged in the form of an article. At the moment, we use automatic assemblies most actively in the development and testing of the electronic document management system “E1 EVFRAT”. From a practical point of view, it could be quite useful to see exactly how the principles described in the article are implemented there, but it would be better to write a separate article on this topic than to try to extend the current one.