Expression trees in enterprise development

For most developers, the use of expression trees is limited to lambda expressions in LINQ. We rarely pay attention to how the technology works under the hood.

In this article, I will show you advanced techniques for working with expression trees: eliminating code duplication in LINQ, code generation, metaprogramming, transpiling, and test automation.

You will learn how to use expression trees directly, which pitfalls the technology has in store, and how to get around them.

Below the cut are the video and the text transcript of my talk at DotNext 2018 Piter.

My name is Maxim Arshinov, and I am a co-founder of the outsourcing company "Hightech Group". We develop business software, and today I will talk about how we use expression trees in everyday work and how they started to help us.

I never specifically wanted to study the internals of expression trees. They seemed like an internal technology that the .NET team built so that LINQ would work, with an API that application programmers did not need to know. Then some applied problems came up that had to be solved, and to get a solution I liked, I had to dig into the internals.

This whole story is spread out over time: there were different projects and different cases. Ideas surfaced one by one and I wrote the code as they came, but I will sacrifice historical accuracy for the sake of a clearer presentation, so all the examples use the same domain model, an online store.

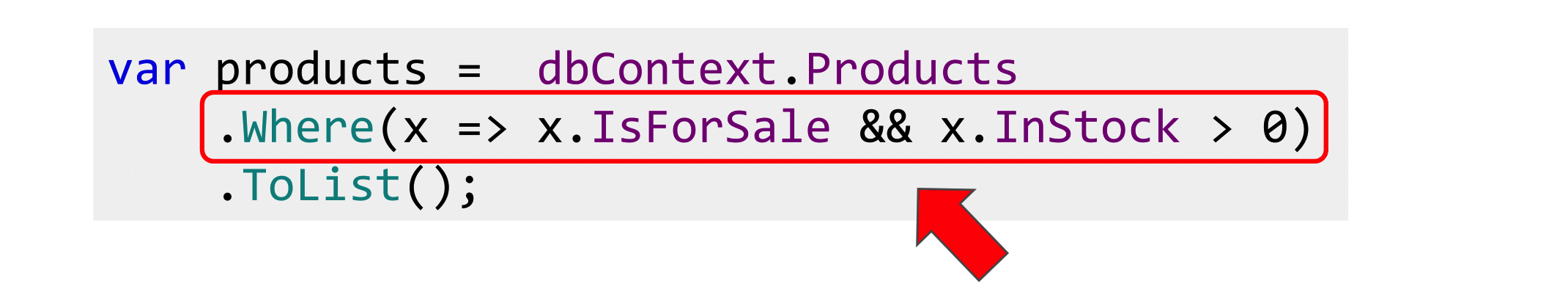

Imagine that we are all writing an online store. It has products and a "For sale" checkbox in the admin panel. The public part of the site shows only the products that have this checkbox ticked.

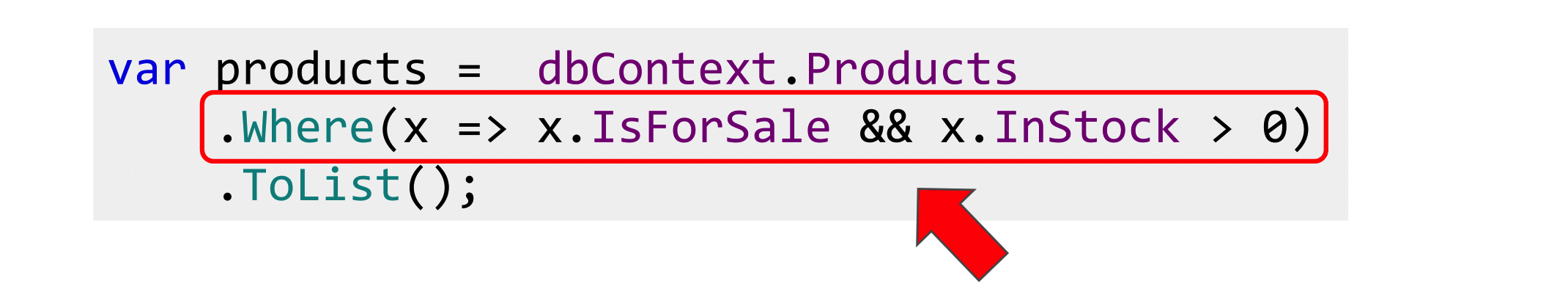

We take some DbContext or NHibernate session, write Where(), and output only the products with IsForSale set.

Everything is fine, but business rules are not something we write once and for all. They evolve over time. The manager comes in and says that we must also track stock and show only the goods that are in stock in the public part, without forgetting the checkbox.

It is easy to add such a property. Now our business rule is encapsulated and we can reuse it.

Let's try to change the LINQ query. Is everything good here?

No, this will not work, because IsAvailable is not mapped to the database. It is our own code, and the query provider does not know how to parse it.

We can spell out for the provider what the property contains. But now this lambda is duplicated in both the LINQ expression and the property.

So the next time this lambda changes, we will have to press Ctrl + Shift + F and search the whole project. Naturally, we will not find every occurrence, which costs us bugs and time. I want to avoid this.

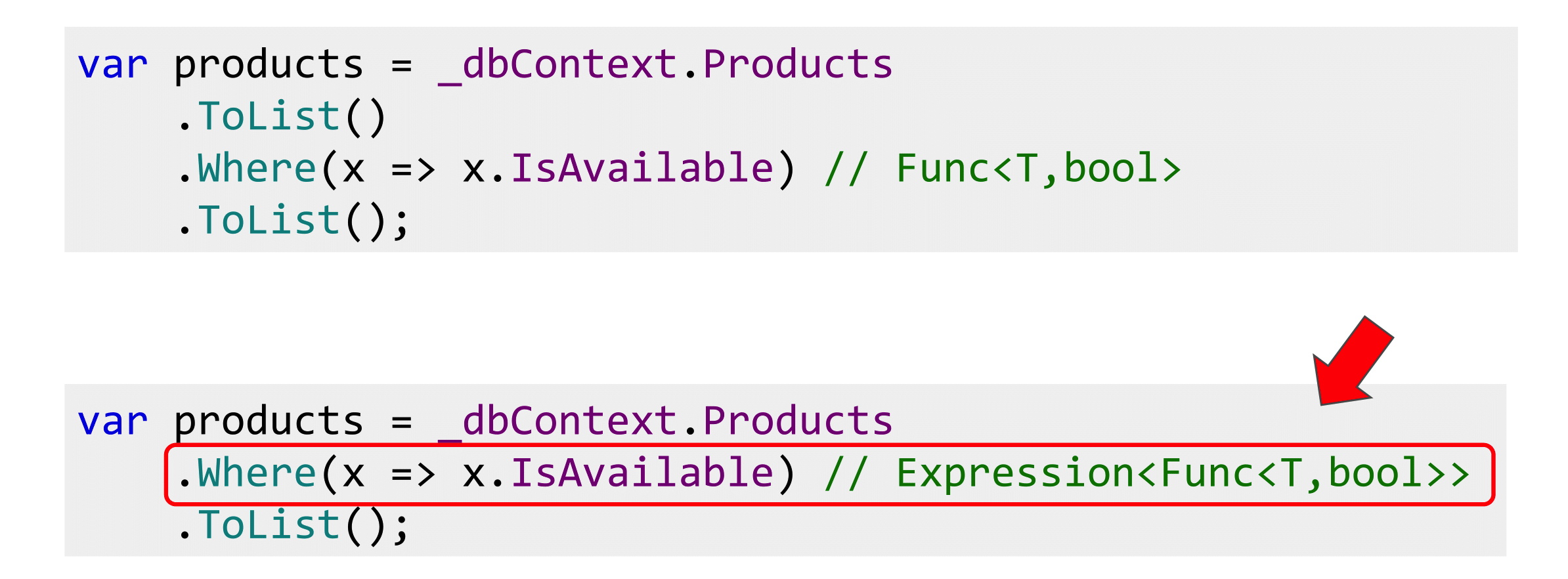

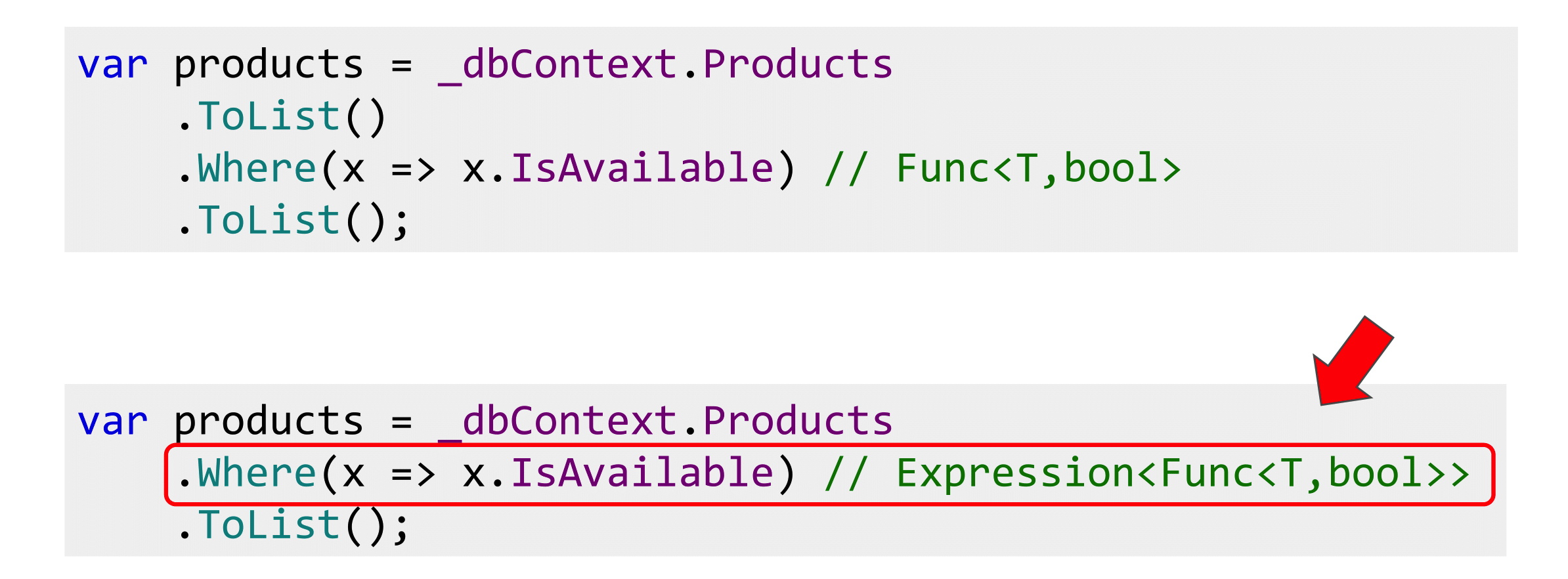

We could come at it from the other side and put a ToList() in front of Where(). This is a bad decision, because if there are a million goods in the database, they are all loaded into RAM and filtered there.

If you have three products in the store, the solution is fine, but in e-commerce there are usually more. It worked only because, although the two lambdas look alike, their types are completely different. In the first case it is a Func delegate, and in the second an expression tree. They look the same, but the types are different and the bytecode is completely different.

To go from an expression to a delegate, simply call the Compile() method. .NET provides this API: you have an expression, you compile it, you get a delegate.
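A minimal sketch of the difference, assuming a hypothetical Product class with IsForSale and InStock properties (the names LambdaKinds, AsDelegate and AsExpression are illustrative and not from the talk). The same lambda text is assigned once to a delegate and once to an expression tree, and Compile() turns the tree into a delegate:

```csharp
using System;
using System.Linq.Expressions;

// Hypothetical domain class used only for this illustration.
public class Product
{
    public bool IsForSale { get; set; }
    public int InStock { get; set; }
}

public static class LambdaKinds
{
    // Compiled to IL: an opaque delegate that a query provider cannot inspect.
    public static readonly Func<Product, bool> AsDelegate =
        p => p.IsForSale && p.InStock > 0;

    // Compiled to a data structure describing the code: a query provider can translate it.
    public static readonly Expression<Func<Product, bool>> AsExpression =
        p => p.IsForSale && p.InStock > 0;

    // Expression -> delegate is a built-in, but relatively slow, operation.
    public static Func<Product, bool> CompileExpression() => AsExpression.Compile();
}
```

The expression can be inspected at runtime (for example, AsExpression.Body is a node of type AndAlso), while the delegate can only be invoked.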

But how do we go back? Is there something in .NET to go from a delegate to an expression tree? If you are familiar with LISP, for example, it has a quoting mechanism that lets code be treated as a data structure, but .NET has nothing like that.

Given that we have two kinds of lambdas, we can philosophize about which is primary: the expression tree or the delegate.

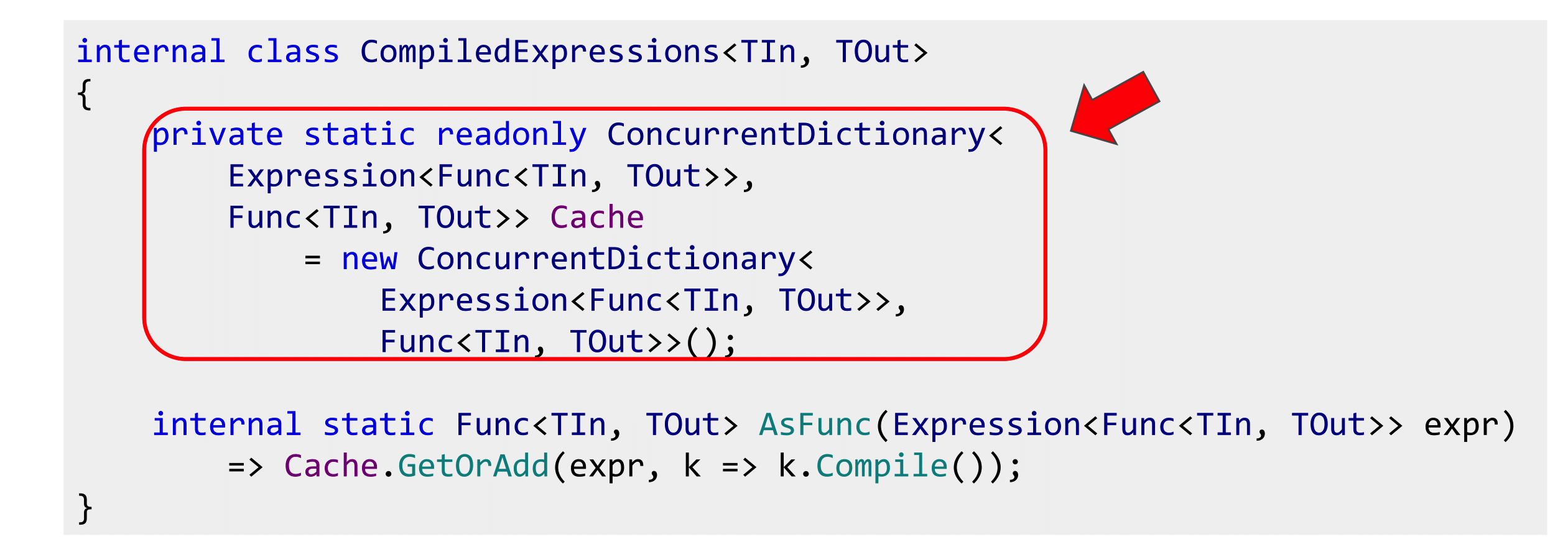

At first glance, the answer is obvious: since there is a wonderful Compile() method, the expression tree is primary, and we get the delegate by compiling the expression. But compilation is slow, and if we start doing it everywhere, performance will degrade. Worse, it will degrade in unpredictable places: wherever an expression had to be compiled into a delegate, there will be a slowdown. You can hunt for these places, but until you do they will affect the server's response time, and at random.

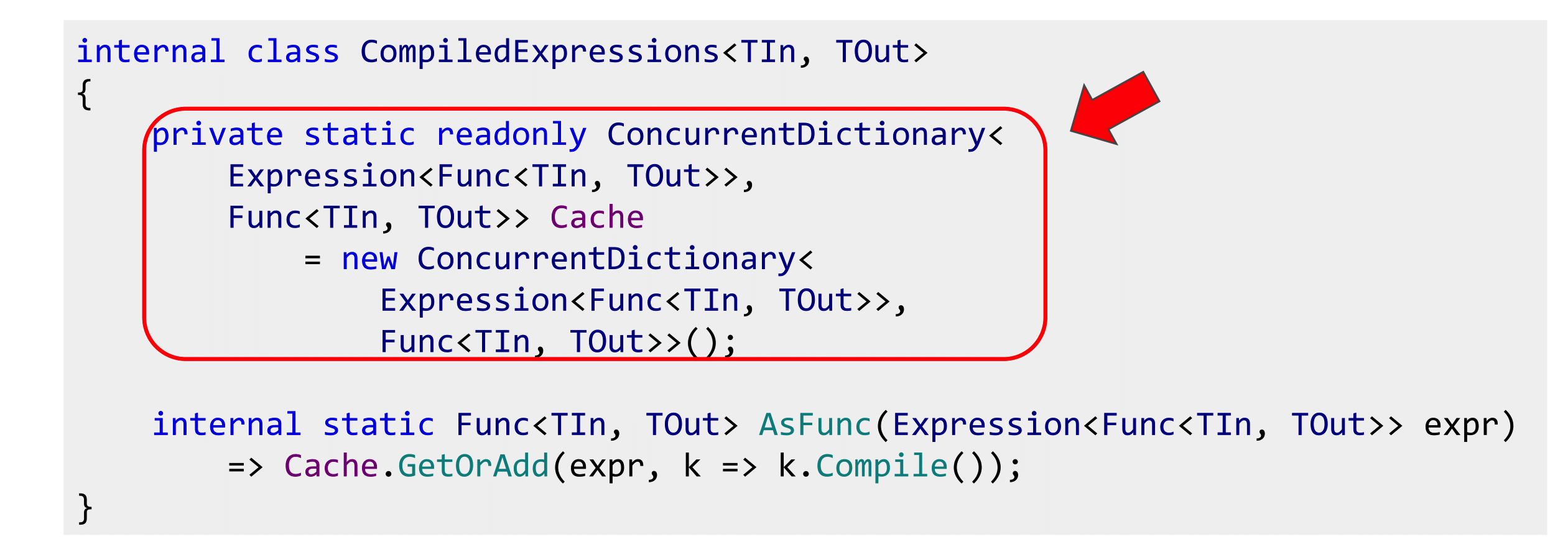

Therefore, the compiled delegates must be cached somehow. If you attended the talk on concurrent data structures, you know about ConcurrentDictionary (or you simply know about it already). I will skip the details of caching strategies (with locks or lock-free). ConcurrentDictionary has a simple GetOrAdd() method, and the simplest implementation is to put the delegate into a ConcurrentDictionary and cache it. The first call pays for compilation, but after that everything is fast, because the delegate has already been compiled.

With this extension method we can refactor our code with IsAvailable: declare the expression once, then in the IsAvailable property compile it and call the result on the current this object.
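The caching idea can be sketched roughly as follows. This is an assumption about the shape of the code, not the slide itself: the names CompiledExpressions, AsFunc and IsAvailableExpression are hypothetical. The cache key is the expression instance, so it only helps when the expression lives in a static field.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq.Expressions;

public static class CompiledExpressions
{
    // Keys are compared by reference, so each distinct expression object is compiled once.
    private static readonly ConcurrentDictionary<LambdaExpression, Delegate> Cache =
        new ConcurrentDictionary<LambdaExpression, Delegate>();

    // Hypothetical extension method: returns the cached delegate, compiling on first use.
    public static Func<TIn, TOut> AsFunc<TIn, TOut>(this Expression<Func<TIn, TOut>> expression)
        => (Func<TIn, TOut>)Cache.GetOrAdd(expression, e => e.Compile());
}

public class Product
{
    // The business rule is declared once, as an expression a query provider can translate.
    public static readonly Expression<Func<Product, bool>> IsAvailableExpression =
        p => p.IsForSale && p.InStock > 0;

    public bool IsForSale { get; set; }
    public int InStock { get; set; }

    // The in-memory property reuses the same rule through the cached delegate.
    public bool IsAvailable => IsAvailableExpression.AsFunc()(this);
}
```

In a query you would pass Product.IsAvailableExpression to Where(), while in-memory code reads product.IsAvailable; both paths share a single definition of the rule.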

There are at least two packages that implement this: Microsoft.Linq.Translations and Signum Framework (an open source framework written by a commercial company). Both do roughly the same thing with compiling delegates. The API differs a bit, but the idea is what I showed on the previous slide.

However, this is not the only approach: you can also go from delegates to expressions. There is a long-standing article on Habr about Delegate Decompiler, in which the author argues that compilation is bad because it takes a long time.

After all, delegates existed before expressions, and it is possible to go from delegates to expressions. To do this, the author uses the MethodBody.GetILAsByteArray() method from Reflection, which really does return the entire IL code of the method as a byte array. If you feed it further into Reflection, you can get an object representation of this code, walk through it, and build an expression tree. So the reverse transition is also possible, but it has to be done by hand.

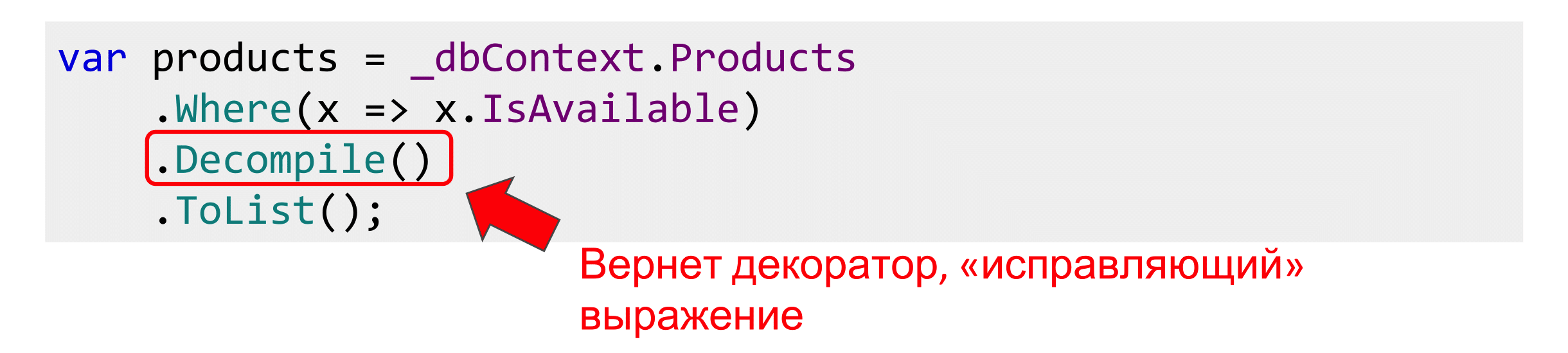

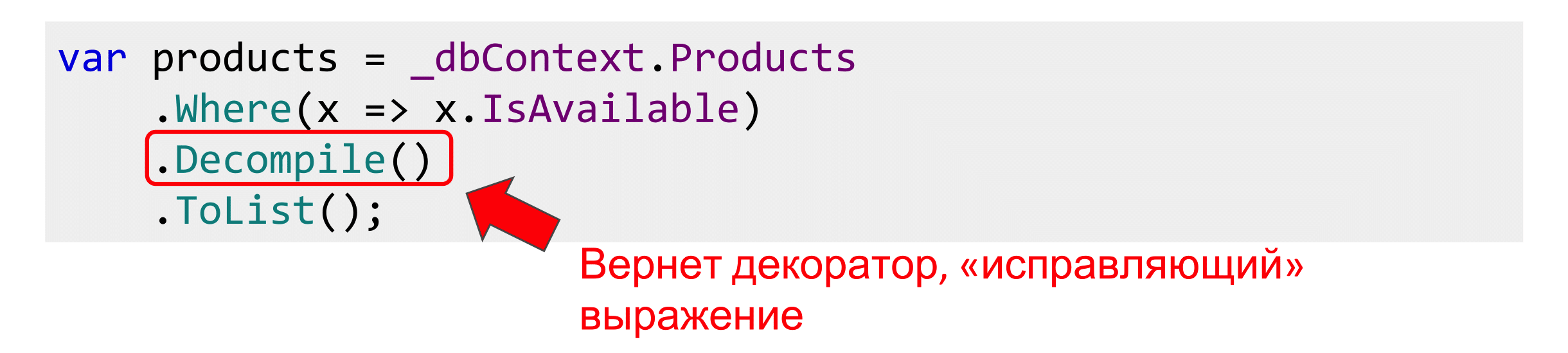

So as not to scan every property, the author suggests marking a property with the Computed attribute to indicate that it should be inlined. Before the query runs, we go into IsAvailable, pull out its IL code, convert it to an expression tree, and replace the IsAvailable call with what is written in the getter. The result is a kind of manual inlining.

To make this work, call the special Decompile() method before passing everything to ToList(). It wraps the original queryable in a decorator and performs the inlining. Only after that do we pass everything to the query provider, and all is well.

The only problem with this approach is that Delegate Decompiler 0.23.0 is not moving forward, there is no Core support, and the author himself says that it is a deep alpha with a lot left unfinished, so you cannot use it in production. We will come back to this topic, though.

So we have solved the problem of duplicating individual conditions.

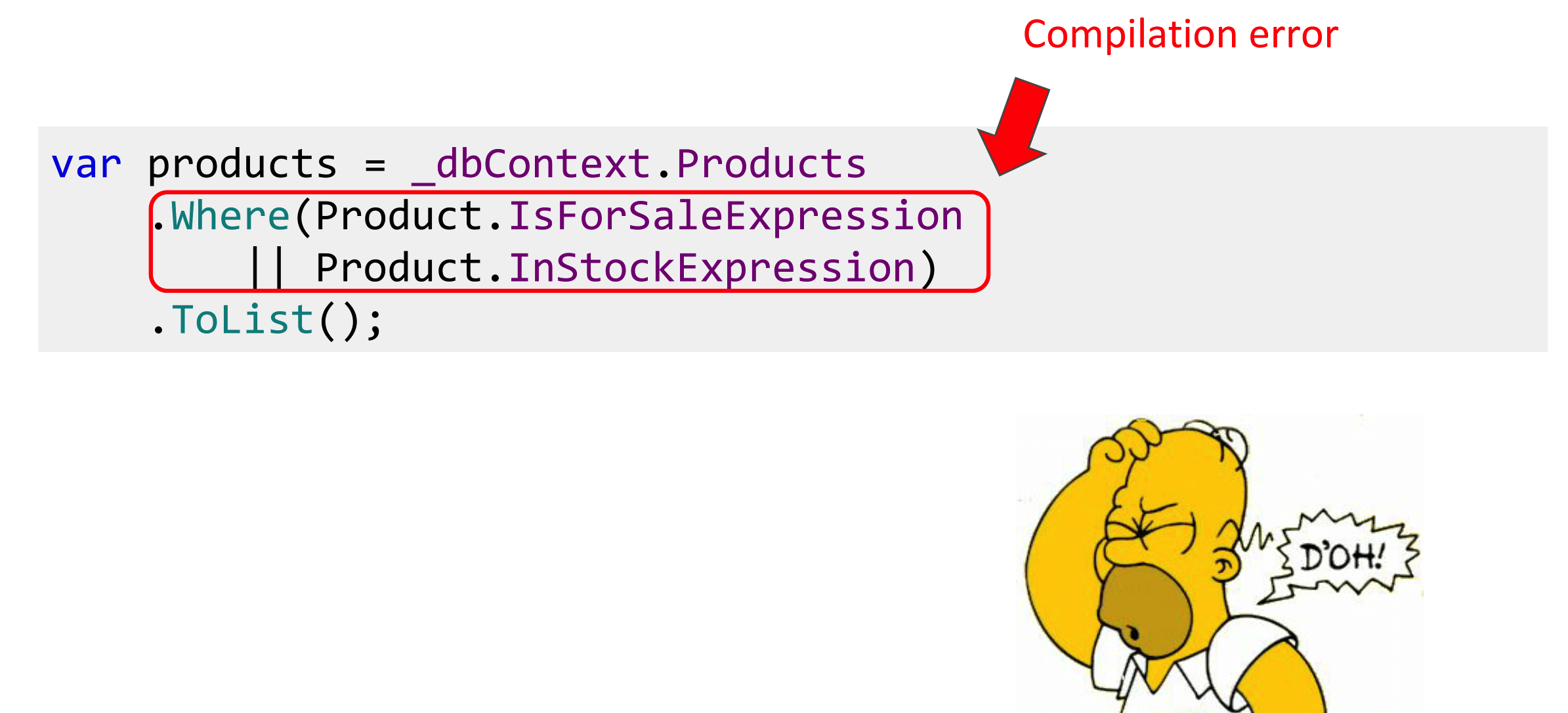

But conditions often need to be combined using Boolean logic. We had IsForSale and InStock > 0, with an "AND" between them. There may be some other condition, or an "OR" may be required.

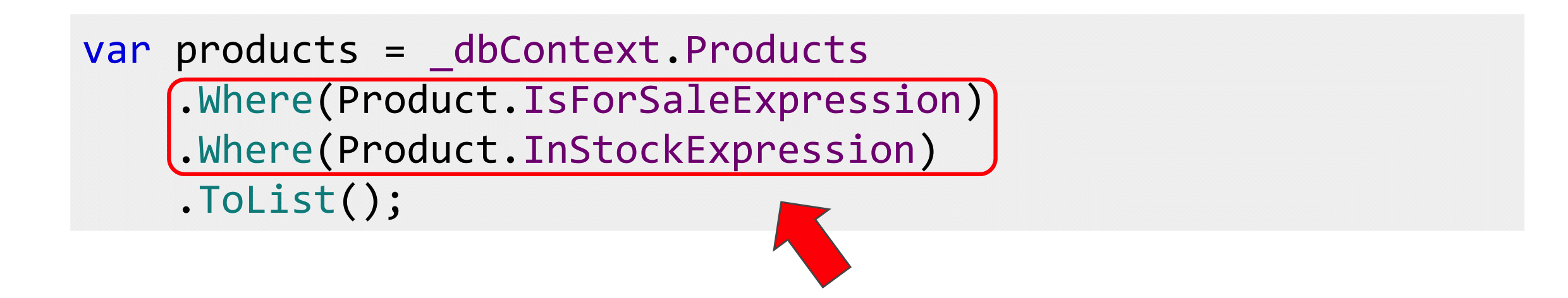

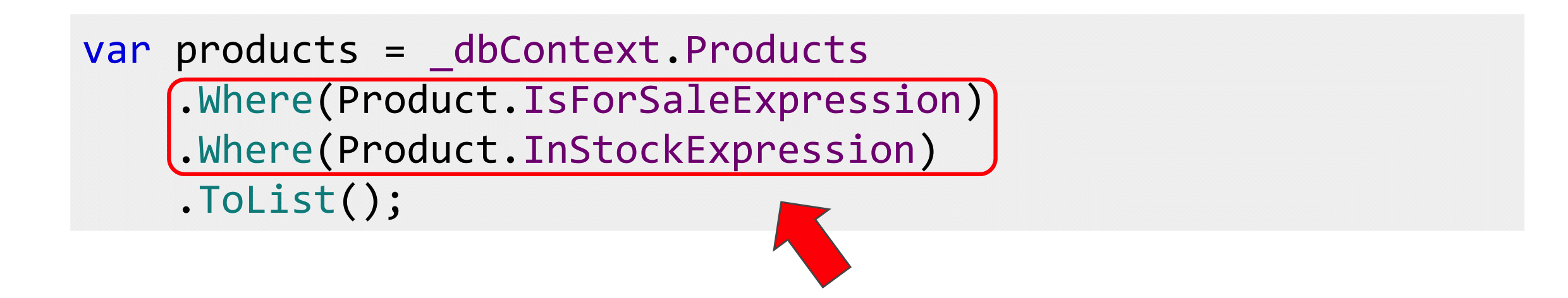

In the case of "AND" you can cheat and dump all the work on the query provider, that is, write several Where() calls in a row; it knows how to handle that.

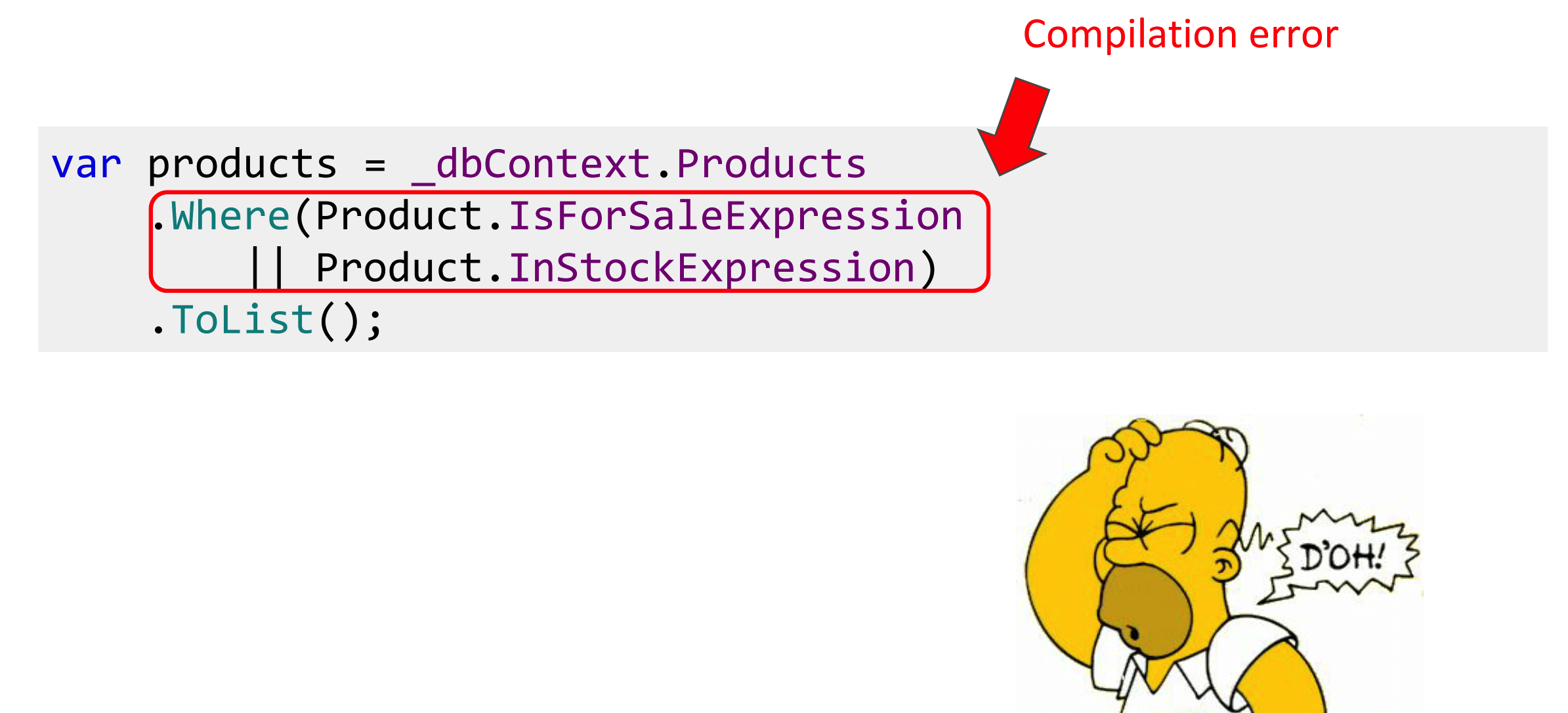

If an "OR" is required, this will not work, because LINQ has no WhereOr(), and expressions do not overload the "||" operator.

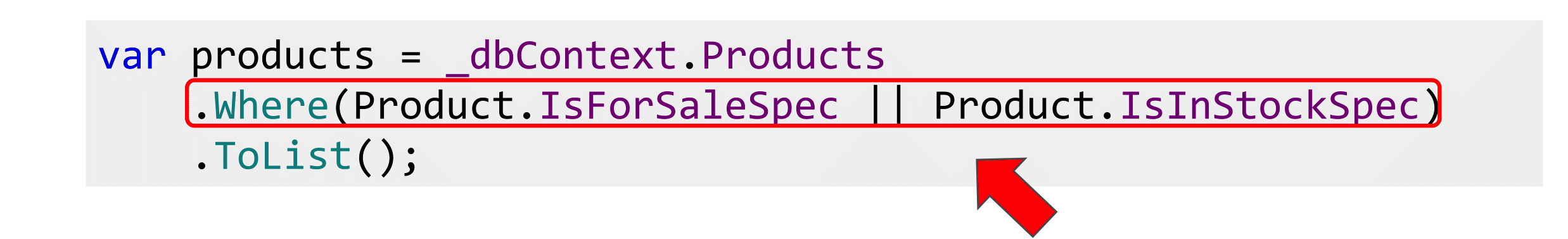

If you are familiar with Evans' DDD book, or simply know something about the Specification pattern, you know there is a design pattern meant for exactly this. When there are several business rules and you want to combine them with Boolean logic, implement a Specification.

Specification is an old pattern that comes from Java. Java, especially old Java, had no LINQ, so there the pattern is implemented only as an isSatisfiedBy() method, that is, only with delegates, and expressions do not come into it. There is an implementation on the Internet called LinqSpecs, which you can see on the slide. I tweaked it a little for my own needs, but the idea belongs to the library.

All the Boolean operators are overloaded here. The true and false operators are overloaded as well so that the double "&&" and "||" operators work; without them only the single ampersand would work.

Then we add implicit conversion operators that make the compiler treat the specification as both an expression and a delegate. Anywhere a function expects an Expression<> or a Func<>, you can pass the specification. Because the implicit conversions are defined, the compiler will figure it out and substitute either the Expression property or IsSatisfiedBy.

IsSatisfiedBy() can be implemented by caching the compiled expression. Either way, we start from the Expression, the delegate corresponds to it, and we have added support for Boolean operators. Now all of this can be composed. Business rules can be moved into static specifications, declared once, and combined.
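A condensed sketch of such a specification class, in the spirit of LinqSpecs but not copied from it. The names Spec, Predicate and the Product rules are assumptions made for the illustration. The operators combine the expression bodies and rebind the right-hand parameter to the left-hand one; why that rebinding is needed is exactly the problem the talk goes on to describe.

```csharp
using System;
using System.Linq.Expressions;

public class Spec<T>
{
    private readonly Lazy<Func<T, bool>> _compiled;

    public Spec(Expression<Func<T, bool>> predicate)
    {
        Predicate = predicate ?? throw new ArgumentNullException(nameof(predicate));
        _compiled = new Lazy<Func<T, bool>>(() => Predicate.Compile()); // compiled once, on demand
    }

    public Expression<Func<T, bool>> Predicate { get; }

    public bool IsSatisfiedBy(T candidate) => _compiled.Value(candidate);

    // A specification can be passed wherever an expression or a delegate is expected.
    public static implicit operator Expression<Func<T, bool>>(Spec<T> spec) => spec.Predicate;
    public static implicit operator Func<T, bool>(Spec<T> spec) => spec.IsSatisfiedBy;

    // Always returning false here forces && and || to call & and | below.
    public static bool operator true(Spec<T> spec) => false;
    public static bool operator false(Spec<T> spec) => false;

    public static Spec<T> operator &(Spec<T> left, Spec<T> right) => Combine(left, right, Expression.AndAlso);
    public static Spec<T> operator |(Spec<T> left, Spec<T> right) => Combine(left, right, Expression.OrElse);

    public static Spec<T> operator !(Spec<T> spec) =>
        new Spec<T>(Expression.Lambda<Func<T, bool>>(Expression.Not(spec.Predicate.Body), spec.Predicate.Parameters));

    private static Spec<T> Combine(Spec<T> left, Spec<T> right,
        Func<Expression, Expression, BinaryExpression> merge)
    {
        var parameter = left.Predicate.Parameters[0];
        // Make the right-hand body use the left-hand parameter object.
        var rightBody = new ReplaceParameter(right.Predicate.Parameters[0], parameter).Visit(right.Predicate.Body);
        return new Spec<T>(Expression.Lambda<Func<T, bool>>(merge(left.Predicate.Body, rightBody), parameter));
    }

    private sealed class ReplaceParameter : ExpressionVisitor
    {
        private readonly ParameterExpression _from;
        private readonly ParameterExpression _to;

        public ReplaceParameter(ParameterExpression from, ParameterExpression to)
        {
            _from = from;
            _to = to;
        }

        protected override Expression VisitParameter(ParameterExpression node) => node == _from ? _to : node;
    }
}

// Hypothetical business rules declared once as static specifications.
public class Product
{
    public static readonly Spec<Product> ForSale = new Spec<Product>(p => p.IsForSale);
    public static readonly Spec<Product> HasStock = new Spec<Product>(p => p.InStock > 0);
    public static readonly Spec<Product> Available = ForSale && HasStock;

    public bool IsForSale { get; set; }
    public int InStock { get; set; }
}
```

With this in place, Product.Available can be handed to a query's Where() as an expression, or called in memory through IsSatisfiedBy().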

Each business rule is written only once; it is not lost anywhere and not duplicated, and the rules can be combined. People joining the project can see what rules and conditions you have and understand the domain model.

There is a small problem: Expression does not have And(), Or(), and Not() methods. These are extension methods, and we have to implement them ourselves.

My first attempt went like this. There is very little documentation about expression trees on the Internet, and none of it is detailed. So I just took Expression, pressed Ctrl + Space, saw OrElse(), and read about it. I passed it two Expressions, expecting to build a lambda and compile it. It does not work.

The point is that each Expression consists of two parts: parameters and a body. The second one also consists of parameters and a body. OrElse() must be given the bodies of the expressions; it is pointless to join the lambdas themselves with "AND" or "OR". We fix that, and it still does not work.

But where last time there was a NotSupportedException saying that a lambda is not supported, now there is a strange story about parameter 1 and parameter 2: "something is wrong, I won't work".
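The second failed attempt can be reproduced with a short sketch (NaiveOr and the Product class are hypothetical names). The bodies are joined correctly, but the right-hand body still refers to its own parameter object, which the new lambda does not declare, so compilation fails with an InvalidOperationException about an undefined variable:

```csharp
using System;
using System.Linq.Expressions;

public class Product
{
    public bool IsForSale { get; set; }
    public int InStock { get; set; }
}

public static class NaiveOr
{
    // Joins the two bodies but keeps only the left-hand parameter.
    public static Expression<Func<Product, bool>> Combine(
        Expression<Func<Product, bool>> left,
        Expression<Func<Product, bool>> right)
        => Expression.Lambda<Func<Product, bool>>(
            Expression.OrElse(left.Body, right.Body), left.Parameters);

    // Returns "compiled" on success, otherwise the exception message.
    public static string TryCompile()
    {
        Expression<Func<Product, bool>> forSale = p => p.IsForSale;
        Expression<Func<Product, bool>> inStock = x => x.InStock > 0;
        try
        {
            Combine(forSale, inStock).Compile();
            return "compiled";
        }
        catch (InvalidOperationException e)
        {
            // The parameter 'x' of the second lambda is not in scope in the combined lambda.
            return e.Message;
        }
    }
}
```

Even if both lambdas named their parameter p, the result would be the same: parameters are matched by object identity, not by name.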

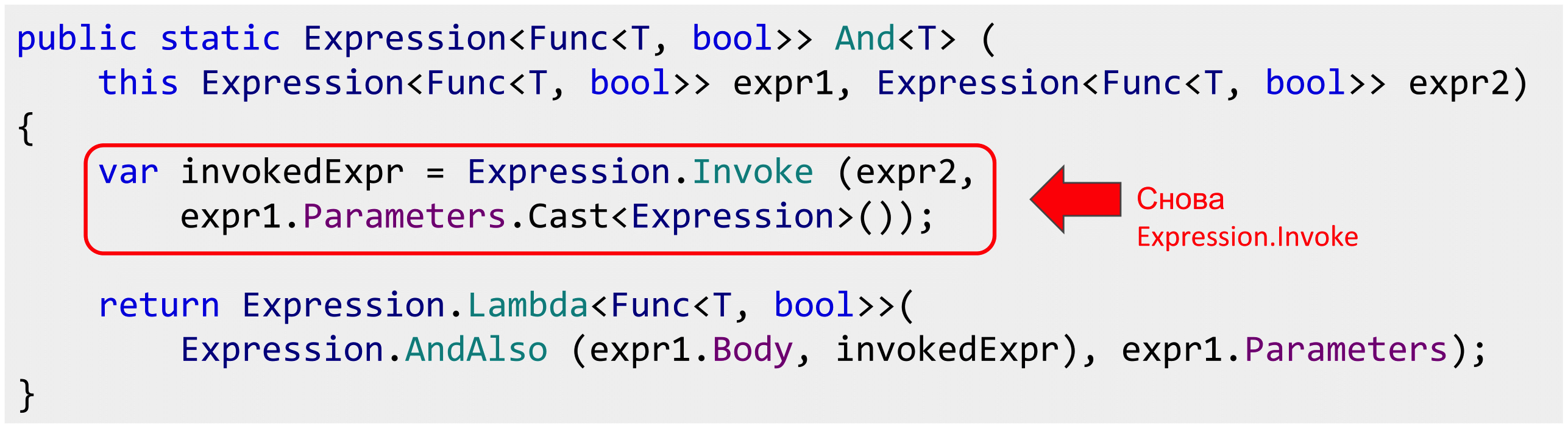

Then I decided that trial and error would not get me anywhere and I needed to actually understand the problem. I started googling and found the site for Albahari's book "C# 7.0 in a Nutshell".

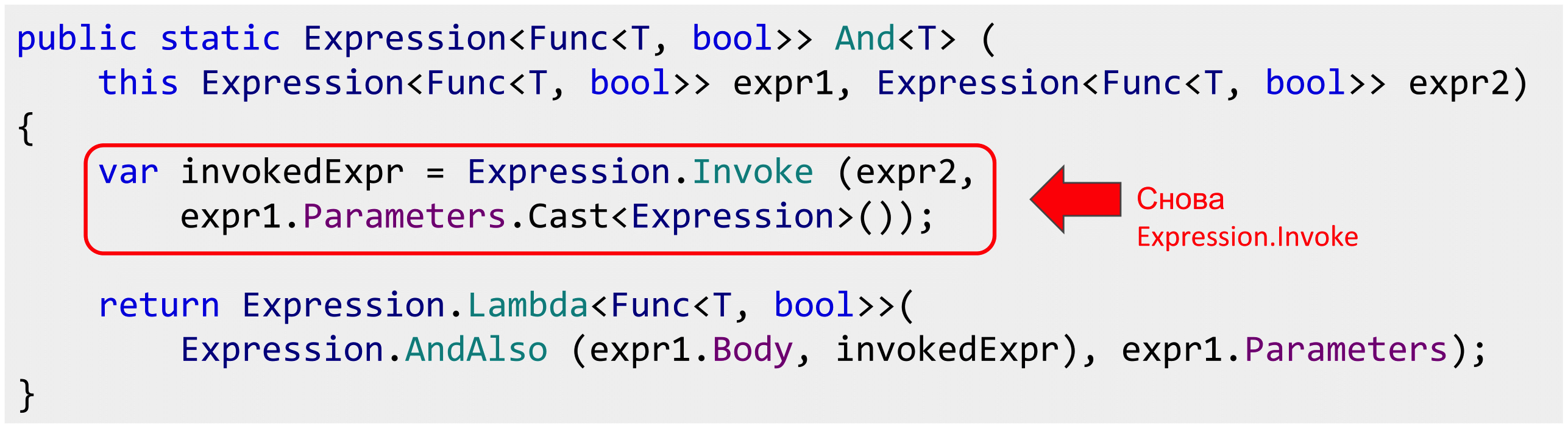

Joseph Albahari, who is also the developer of the popular LINQKit library and of LINQPad, describes exactly this problem: you cannot just take two Expressions and combine them, but if you use the magic Expression.Invoke(), it will work.

Question: what is Expression.Invoke()? Back to Google. It creates an InvocationExpression that applies a delegate or lambda expression to a list of arguments.

If I read this code to you now, saying that we take Expression.Invoke() and pass the parameters, I would just be repeating what is already written there in English. It does not get any clearer. There is some magic Expression.Invoke() that for some reason solves the problem with the parameters. You have to believe; you do not have to understand.
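A rough sketch of the Invoke-based approach, written from the description above rather than copied from LINQKit (the class name InvokeCombinators is an assumption). Instead of merging the bodies, the second lambda is kept whole and "called" with the parameters of the first one:

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;

public static class InvokeCombinators
{
    public static Expression<Func<T, bool>> Or<T>(
        this Expression<Func<T, bool>> left,
        Expression<Func<T, bool>> right)
    {
        // An InvocationExpression: apply the right-hand lambda to the left-hand parameters.
        var invokedRight = Expression.Invoke(right, left.Parameters.Cast<Expression>());
        return Expression.Lambda<Func<T, bool>>(
            Expression.OrElse(left.Body, invokedRight), left.Parameters);
    }
}
```

This compiles and runs in memory without any parameter mismatch, because the second lambda keeps its own parameter inside the invocation node. Whether a query provider can translate the invocation node is a separate matter.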

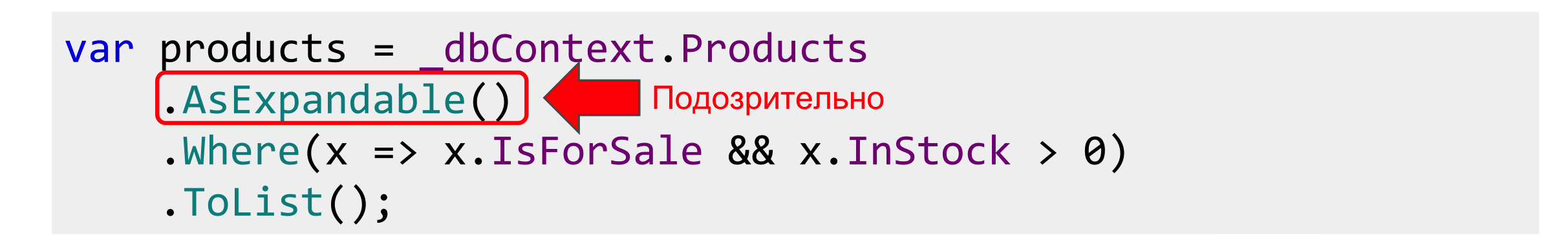

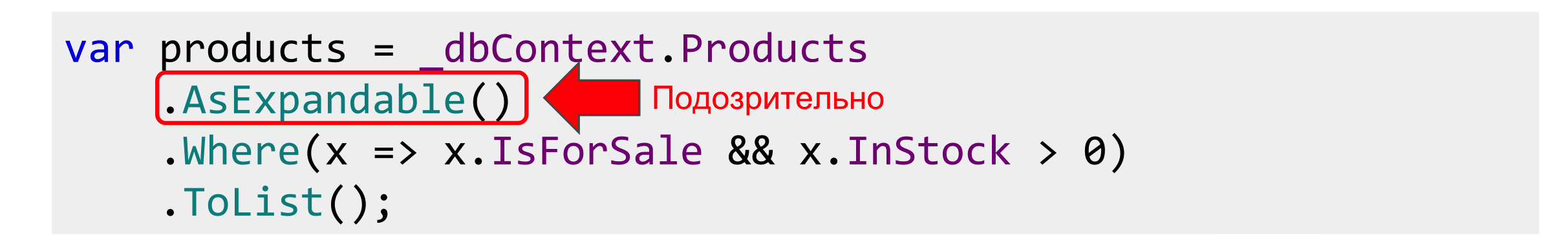

However, if you try to feed such a combined Expression to EF, it will fail again and say that Expression.Invoke() is not supported. By the way, EF Core has started to support it, but EF 6 does not. Albahari suggests simply writing AsExpandable(), and it will work.

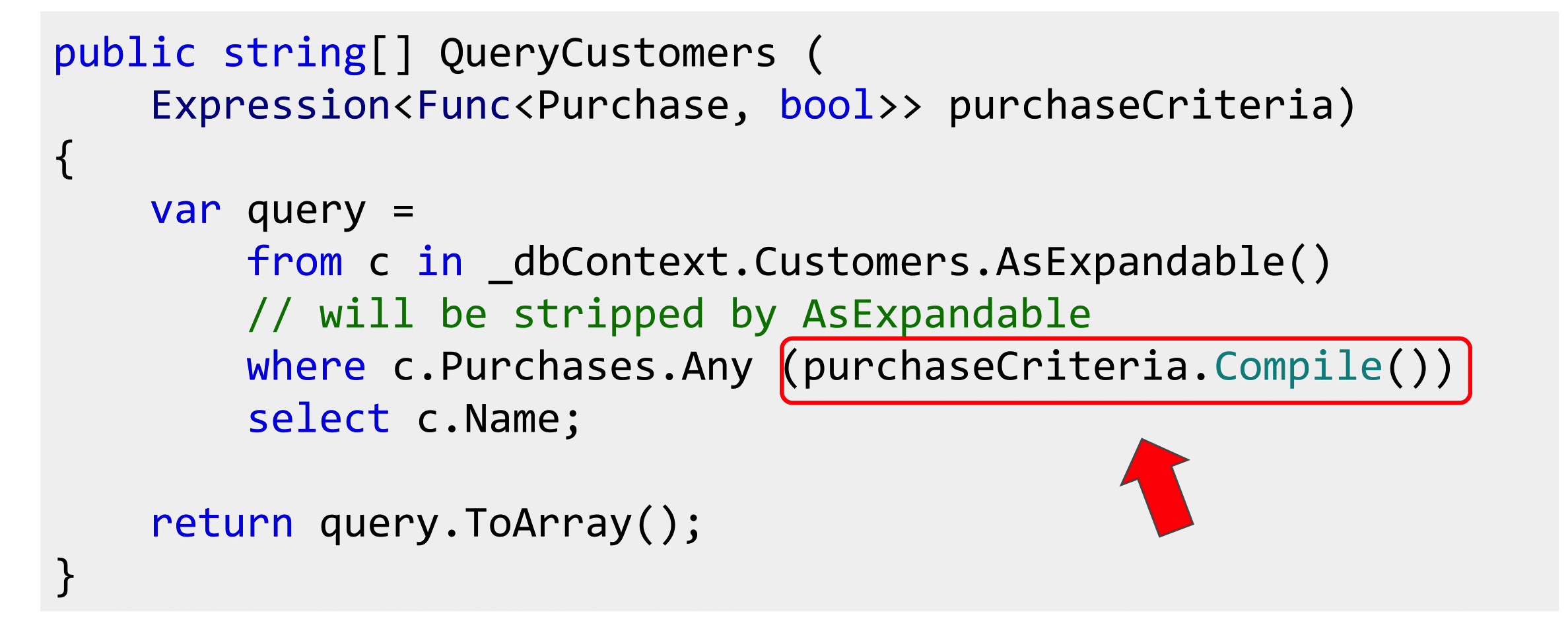

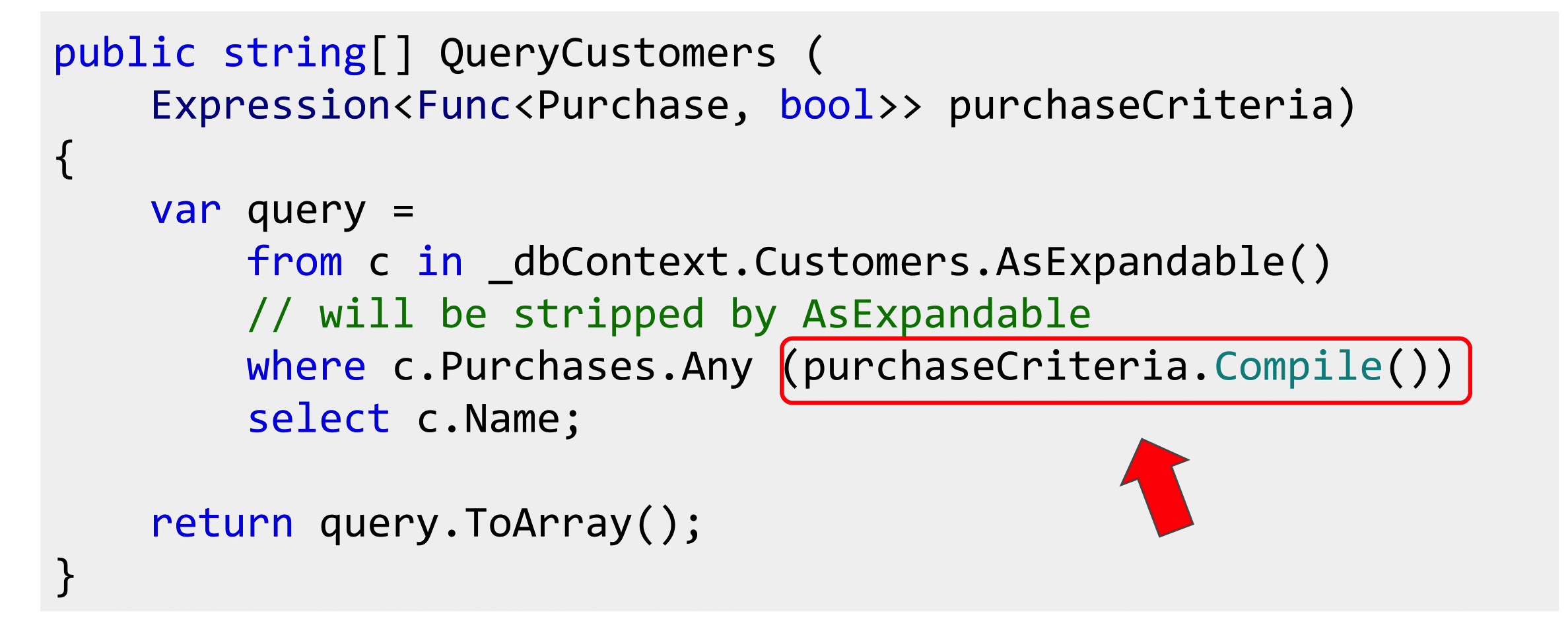

You can also use an Expression in subqueries where a delegate is required. To make the types match we write Compile(), but if you also write AsExpandable(), as Albahari suggests, this Compile() will not actually run, and somehow everything will magically be done correctly.

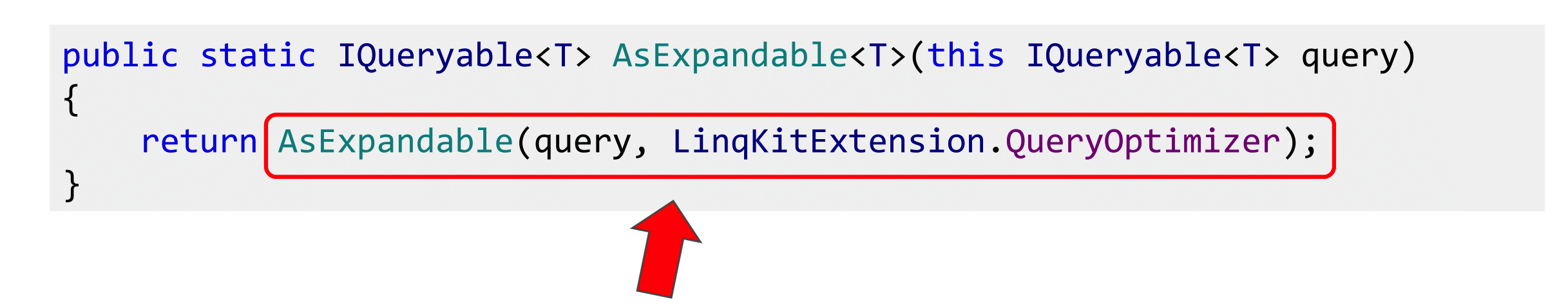

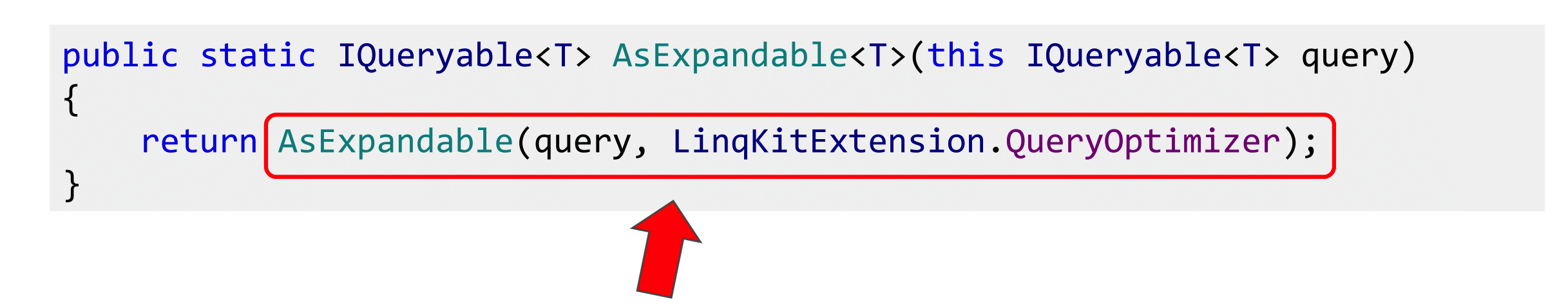

I did not take it on faith and went into the source. What is the AsExpandable() method? It takes a query and a QueryOptimizer. We will set the second one aside, since it is not interesting: it simply folds Expressions, so if there is 3 + 5, it puts in 8.

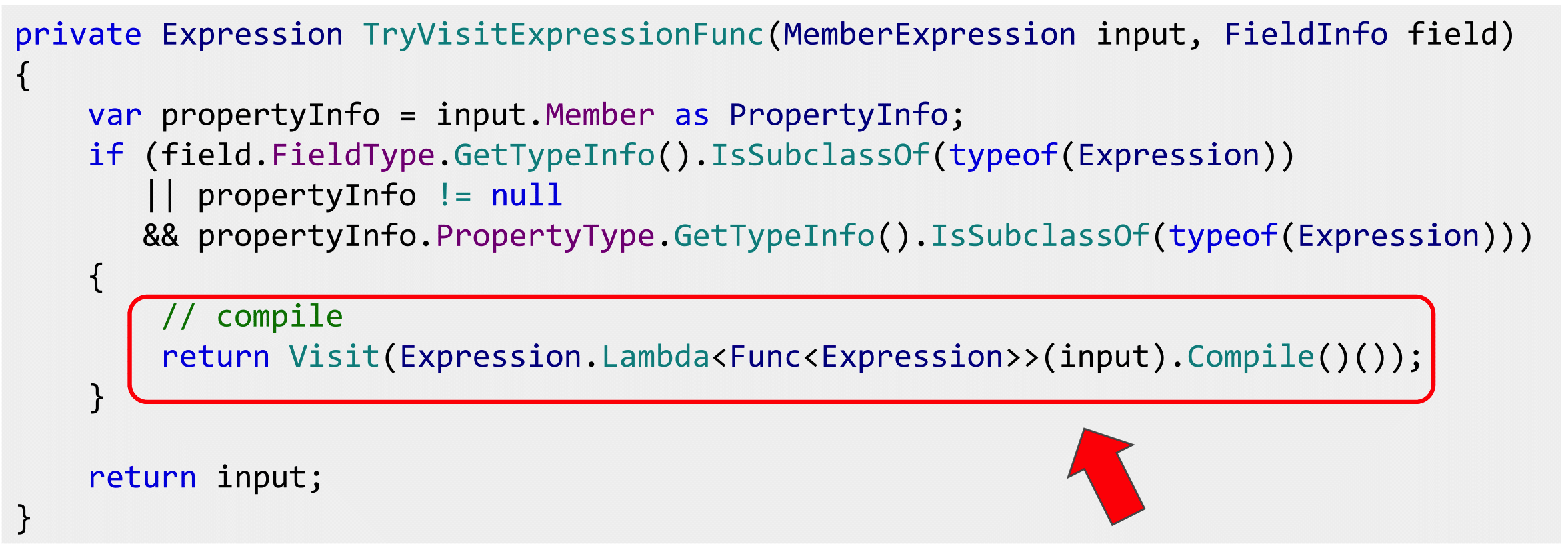

What is interesting is that the Expand() method is called first, then the queryOptimizer, and then everything is passed to the query provider in the form that Expand() has rewritten it into.

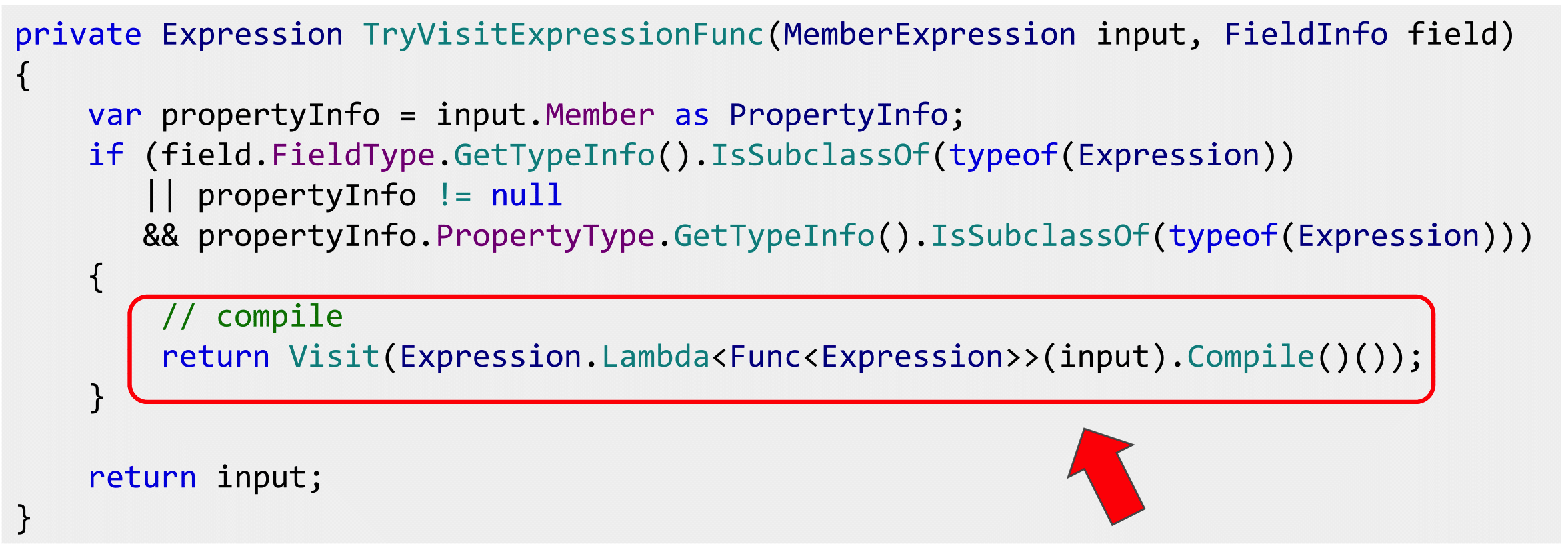

We open it, and it is a Visitor. Inside we see a Compile() call of its own that compiles something else. I will not go into what exactly it does, although it does make a certain sense, but in effect we remove one compilation and replace it with another. Great, but it smacks of level 80 marketing, because the performance impact does not go away.

I decided this would not do and started looking for another solution. And I found one. There is a certain Pete Montgomery, who also writes about this problem and claims that Albahari cheated.

Pete talked to the EF developers, and they taught him to combine everything without Expression.Invoke(). The idea is very simple: the catch was in the parameters. When two Expressions are combined, there is the parameter of the first expression and the parameter of the second, and they do not match. The bodies were glued together, but the parameters were left hanging in the air. They need to be bound correctly.

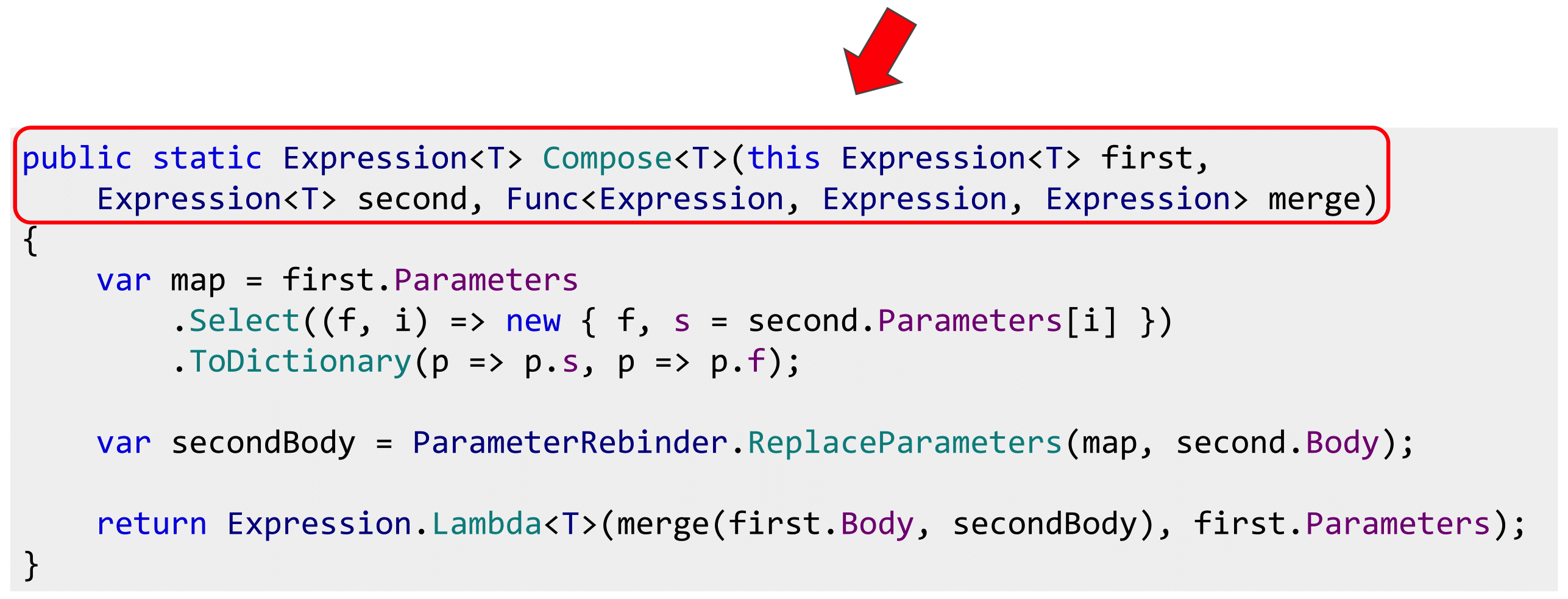

To do this, we build a dictionary by walking the parameters of both expressions (a lambda may have more than one parameter). Using the dictionary, we rebind every parameter of the second expression to the corresponding parameter of the first, so that only the original parameters appear throughout the body that we glued together.

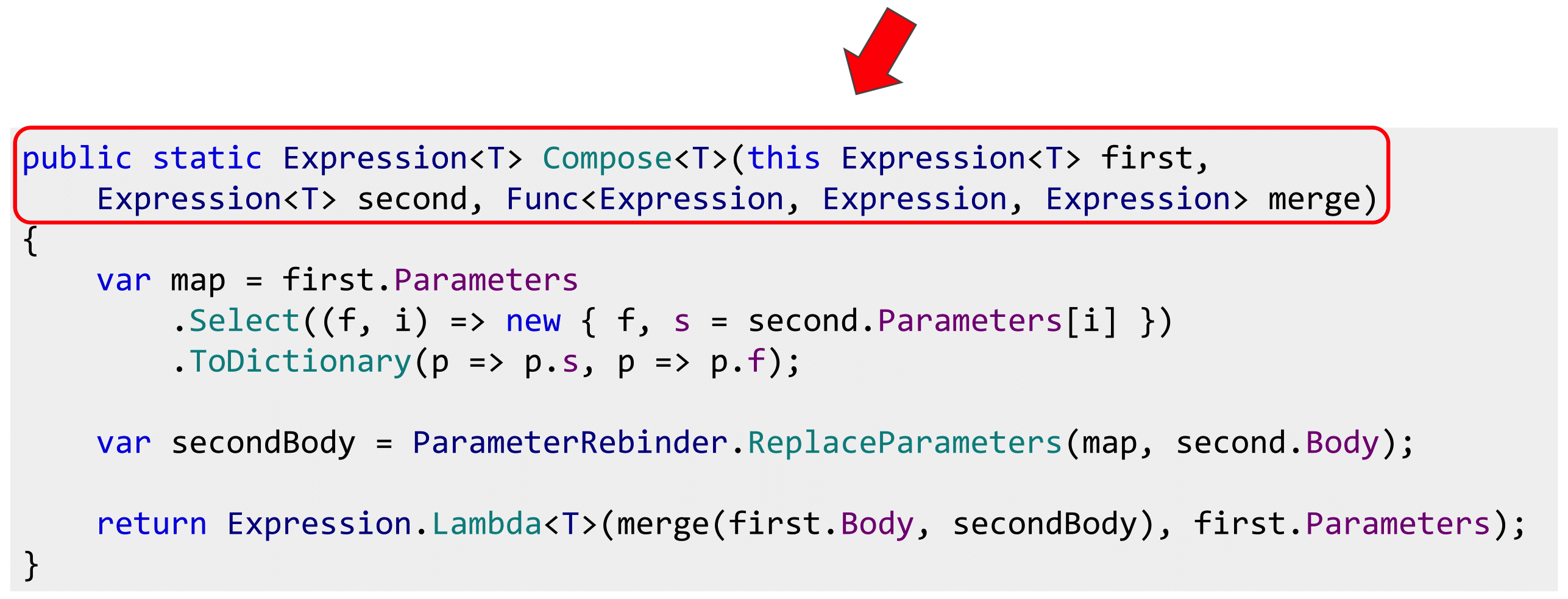

This simple technique gets rid of all the trouble with Expression.Invoke(). Moreover, Pete Montgomery's implementation goes one better: it has a Compose() method that lets you combine any expressions.

We take the expressions and join them with AndAlso, and it works without AsExpandable(). This is the implementation used in our Boolean operations.
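The parameter rebinding described above can be sketched like this. It follows the general shape of the approach attributed to Pete Montgomery, but the code is a reconstruction under assumptions, and the names ParameterRebinder and PredicateCombinators are chosen for the illustration:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Linq.Expressions;

// Rewrites an expression, replacing parameters according to a dictionary.
public sealed class ParameterRebinder : ExpressionVisitor
{
    private readonly Dictionary<ParameterExpression, ParameterExpression> _map;

    private ParameterRebinder(Dictionary<ParameterExpression, ParameterExpression> map) => _map = map;

    public static Expression Rebind(
        Dictionary<ParameterExpression, ParameterExpression> map, Expression body)
        => new ParameterRebinder(map).Visit(body);

    protected override Expression VisitParameter(ParameterExpression node)
        => _map.TryGetValue(node, out var replacement) ? replacement : node;
}

public static class PredicateCombinators
{
    // Merges two lambdas of the same delegate type into one that uses the first lambda's parameters.
    private static Expression<T> Compose<T>(
        Expression<T> first, Expression<T> second,
        Func<Expression, Expression, Expression> merge)
    {
        // Map each parameter of the second lambda to the parameter at the same position in the first.
        var map = second.Parameters
            .Select((parameter, index) => new { From = parameter, To = first.Parameters[index] })
            .ToDictionary(pair => pair.From, pair => pair.To);

        var secondBody = ParameterRebinder.Rebind(map, second.Body);
        return Expression.Lambda<T>(merge(first.Body, secondBody), first.Parameters);
    }

    public static Expression<Func<T, bool>> And<T>(
        this Expression<Func<T, bool>> first, Expression<Func<T, bool>> second)
        => Compose(first, second, Expression.AndAlso);

    public static Expression<Func<T, bool>> Or<T>(
        this Expression<Func<T, bool>> first, Expression<Func<T, bool>> second)
        => Compose(first, second, Expression.OrElse);

    public static Expression<Func<T, bool>> Not<T>(this Expression<Func<T, bool>> expression)
        => Expression.Lambda<Func<T, bool>>(Expression.Not(expression.Body), expression.Parameters);
}
```

The result contains only ordinary nodes (AndAlso, OrElse, Not, member access), with no invocation node for the query provider to reject.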

Everything was fine until it turned out that aggregates exist. For those who are not familiar with the term: if you have a domain model and you picture all the interconnected entities as trees, then each free-standing tree is an aggregate. An order together with its order items is an aggregate, and the order entity is the aggregate root.

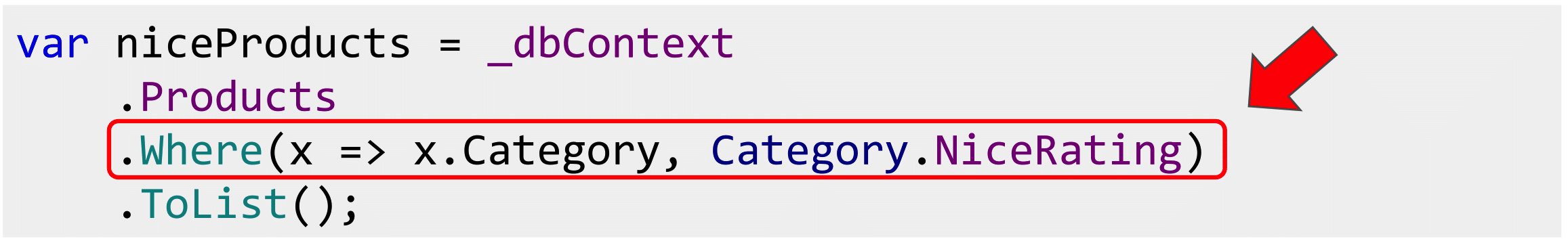

Suppose that besides products there are also categories, with a business rule declared for them as a specification: the rating must be over 50, as the marketers said. If we want to use it on categories directly, all is well.

But if we want to pull products from a good category, things are bad again, because the types do not match. The specification is for a category, but we need products.

Again we have to solve the problem somehow. The first option is to replace Select() with SelectMany(). I dislike three things here. First, I do not know how well SelectMany() is supported in all the popular query providers. Second, if someone writes a query provider of their own, the first thing they will do is throw a NotImplementedException from SelectMany(). And third, people think of SelectMany() as something either functional or join-related; they do not usually associate it with a SELECT query.

I would like to use Select(), not SelectMany().

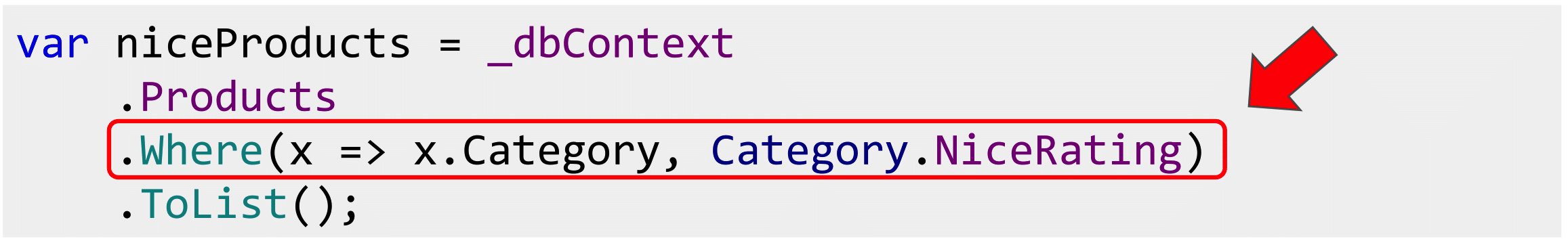

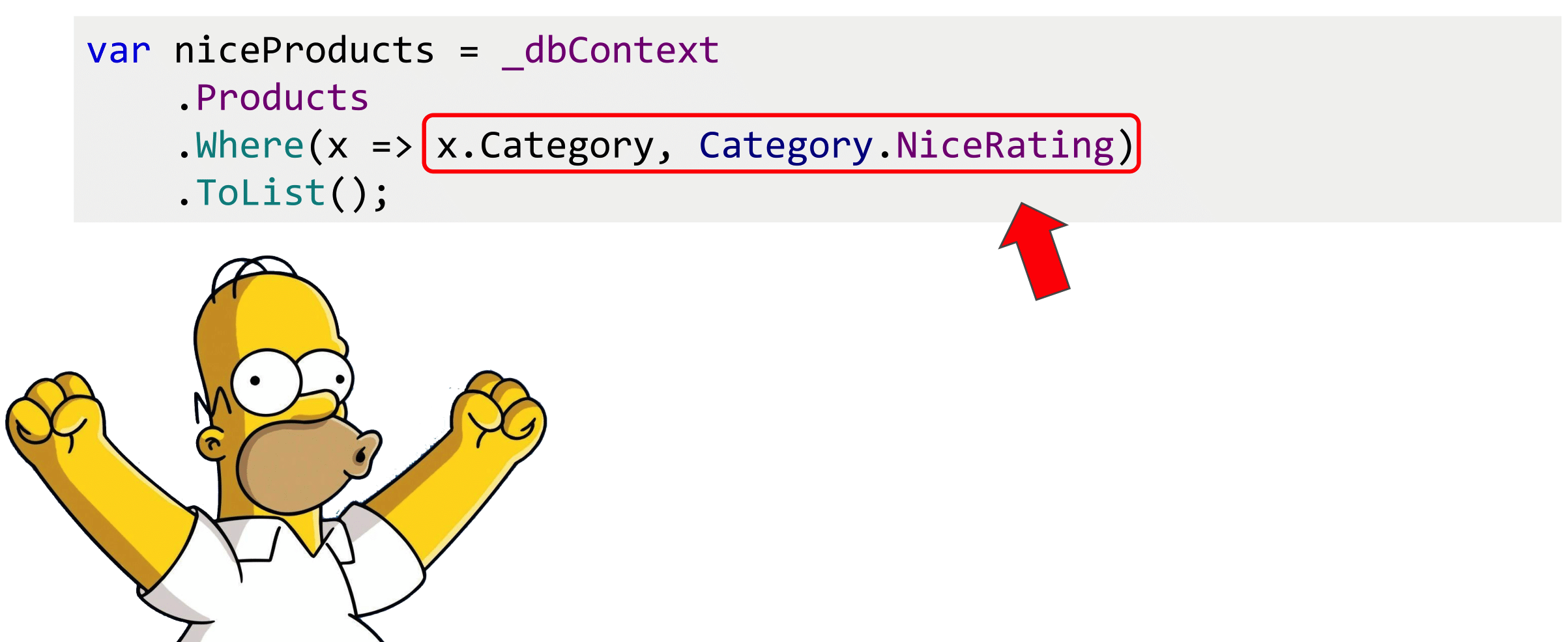

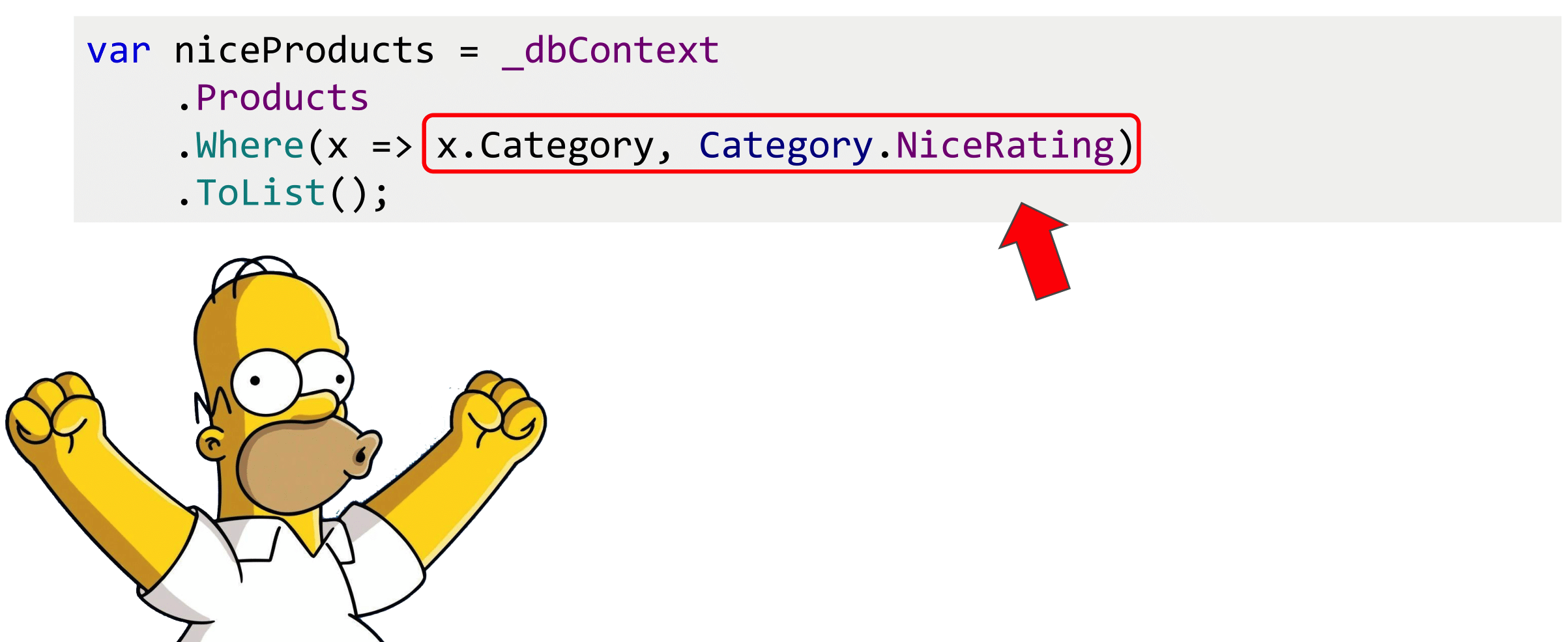

At about the same time I was reading about category theory and functional composition, and I thought: we have specifications that go from a product to bool; if there is a function that goes from a product to its category, and a specification over the category, then by feeding the output of the first function into the second we get what we need, a specification over the product. It is exactly how functional composition works, only for expression trees.

Then we could write a Where() overload that says: go from products to categories and apply the specification to that related entity. To my subjective taste, this syntax looks quite clear.

With the Compose() method this is also easy to do. We take the input Expression that goes from a product to a category, combine it with the specification over the category, and that is all.
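One way to sketch this composition, under the assumption that it works by substituting the body of the first lambda for the parameter of the second. The names NavigationComposition, HighRating and the two-argument Where() overload are illustrative, not the talk's actual code:

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;

public class Category
{
    // Hypothetical business rule: a "good" category has a rating over 50.
    public static readonly Expression<Func<Category, bool>> HighRating = c => c.Rating > 50;
    public int Rating { get; set; }
}

public class Product
{
    public string Name { get; set; }
    public Category Category { get; set; }
}

public static class NavigationComposition
{
    // (TIn -> TMid) composed with (TMid -> TOut) gives (TIn -> TOut).
    public static Expression<Func<TIn, TOut>> Compose<TIn, TMid, TOut>(
        this Expression<Func<TIn, TMid>> first,
        Expression<Func<TMid, TOut>> second)
    {
        // Replace the parameter of the second lambda with the body of the first.
        var body = new Substitute(second.Parameters[0], first.Body).Visit(second.Body);
        return Expression.Lambda<Func<TIn, TOut>>(body, first.Parameters);
    }

    // Where() that navigates to a related entity and applies a rule declared for it.
    public static IQueryable<T> Where<T, TRelated>(
        this IQueryable<T> source,
        Expression<Func<T, TRelated>> navigate,
        Expression<Func<TRelated, bool>> rule)
        => Queryable.Where(source, navigate.Compose(rule));

    private sealed class Substitute : ExpressionVisitor
    {
        private readonly ParameterExpression _parameter;
        private readonly Expression _replacement;

        public Substitute(ParameterExpression parameter, Expression replacement)
        {
            _parameter = parameter;
            _replacement = replacement;
        }

        protected override Expression VisitParameter(ParameterExpression node)
            => node == _parameter ? _replacement : node;
    }
}
```

A call such as products.Where(p => p.Category, Category.HighRating) then produces the predicate p => p.Category.Rating > 50, which is an ordinary member-access chain from the provider's point of view.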

Now you can write such a Where(). It works for an aggregate of any depth: if Category has a SuperCategory, you can chain as many further properties as you need.

"Since we have a tool for functional composition, and since we can compile it and build it dynamically, this smells of metaprogramming!" I thought.

Where can we apply metaprogramming so that we have less code to write?

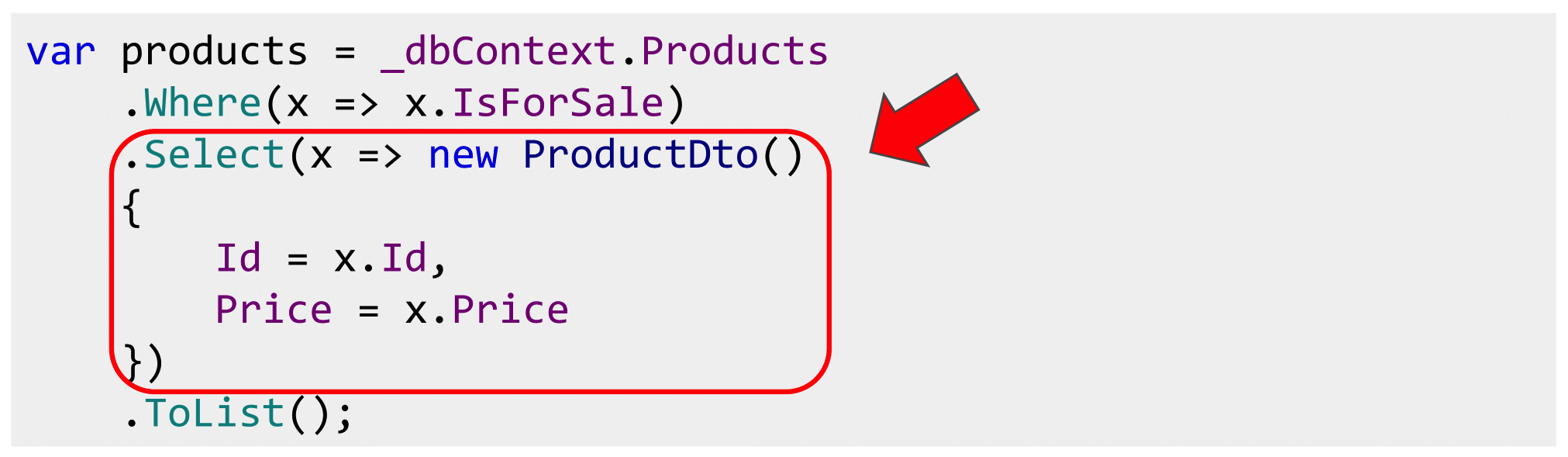

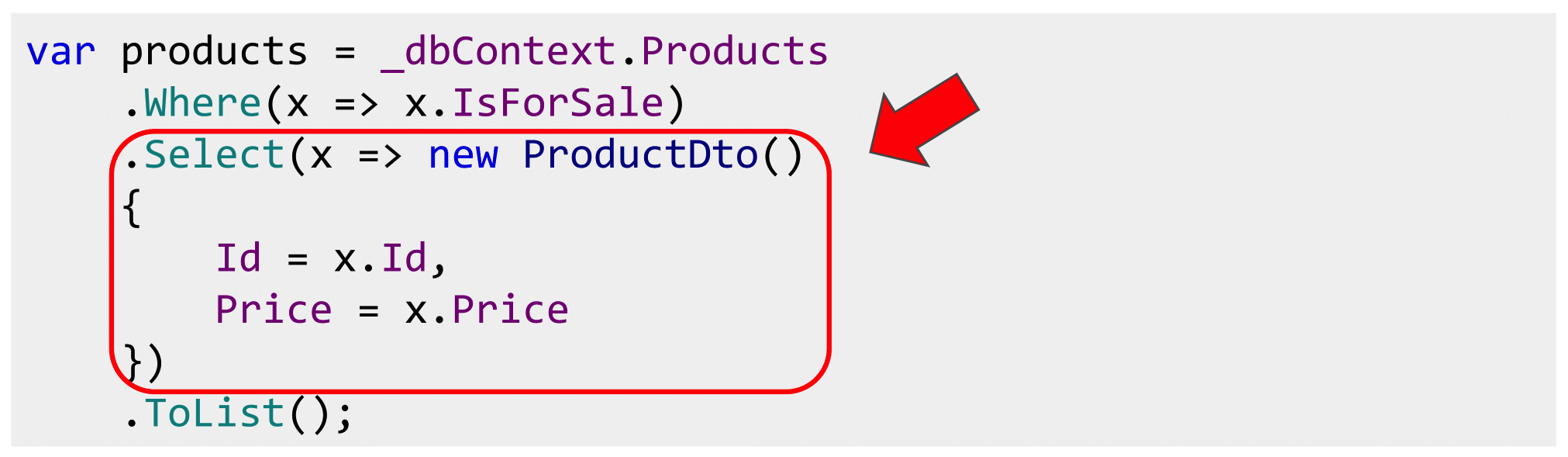

The first option is projections. Pulling an entire entity is often too expensive. Most often we send it to the front end serialized as JSON, and the front end does not need the whole entity with its aggregate. With LINQ you can do this as efficiently as possible by writing the Select() by hand. It is not difficult, but it is tedious.

Instead, I suggest everyone use ProjectToType(). There are at least two libraries that can do this: AutoMapper and Mapster. For some reason many people know that AutoMapper can map objects in memory, but not everyone knows that it has Queryable Extensions, which also work with Expressions and can build an expression that is translated to SQL. If you are still writing queries by hand and you use LINQ because you have no super-strict performance constraints, there is no point in doing this manually: it is work for a machine, not a person.

If we can do this for projections, why not do it for filtering?

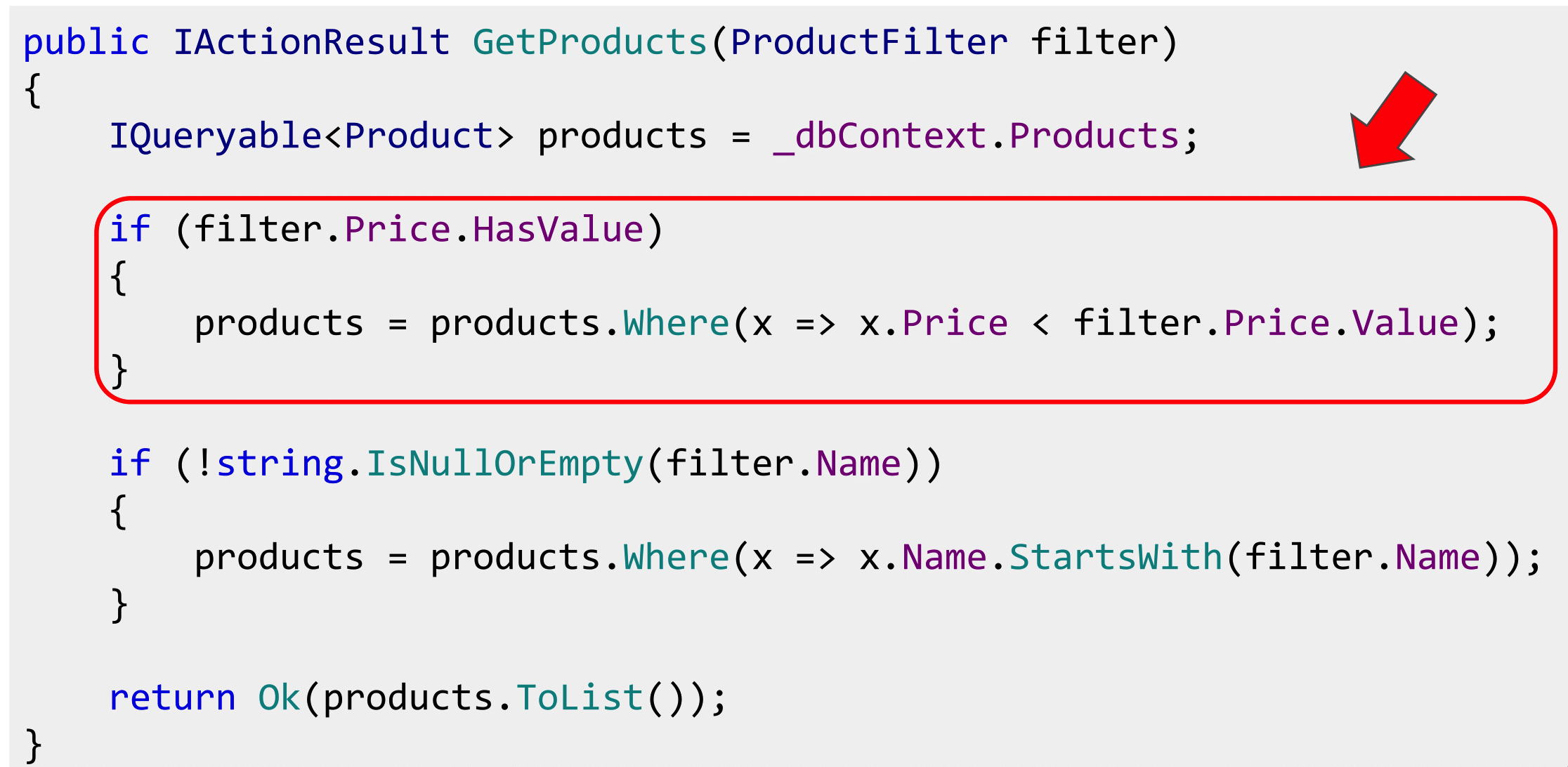

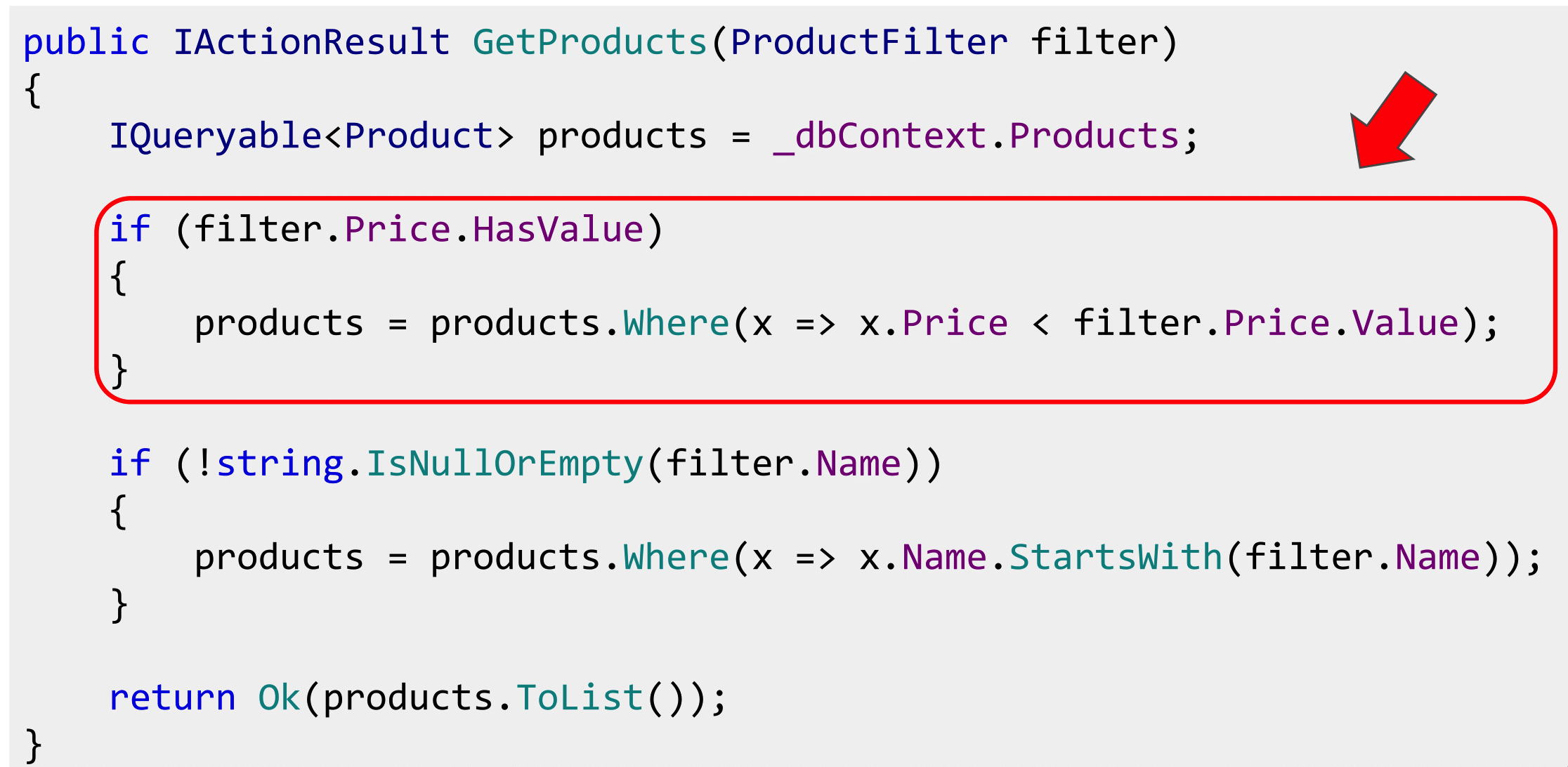

Here is the code again. Some filter comes in. A lot of business applications look like this: a filter arrived, so we add a Where(); another filter arrived, so we add another Where(). However many filters there are, that is how many times we repeat ourselves. Nothing complicated, but a lot of copy-paste.

It would be cool to do what AutoMapper does and write an AutoFilter, with Project and Filter methods, so that it does everything itself: less code.

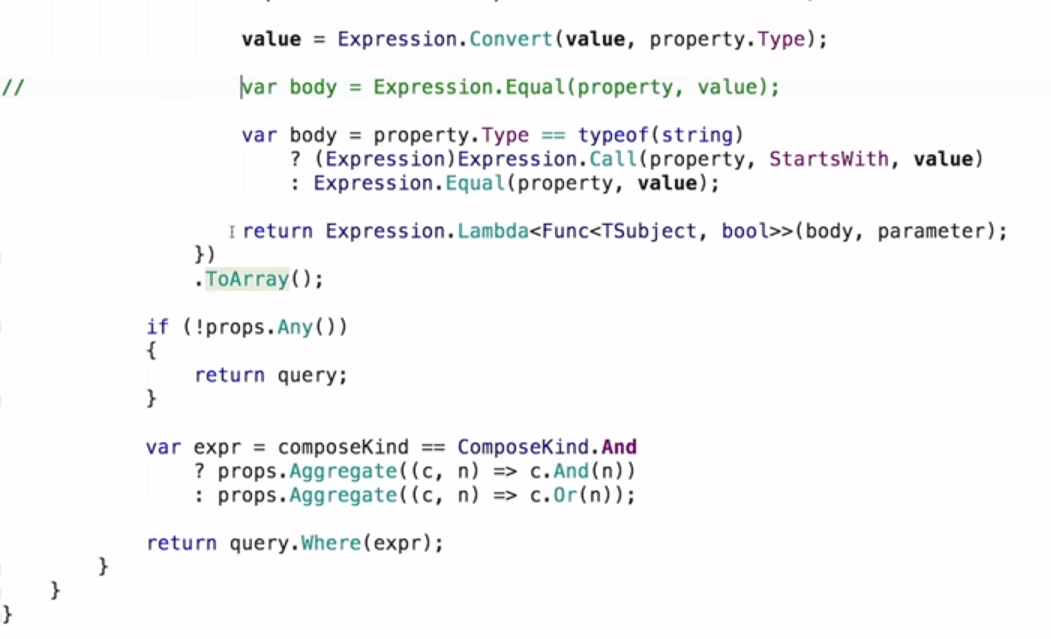

There is nothing complicated about it. We take Expression.Property and walk over the DTO and the entity. We find the common properties that have the same name. If the names match, it looks like a filter.

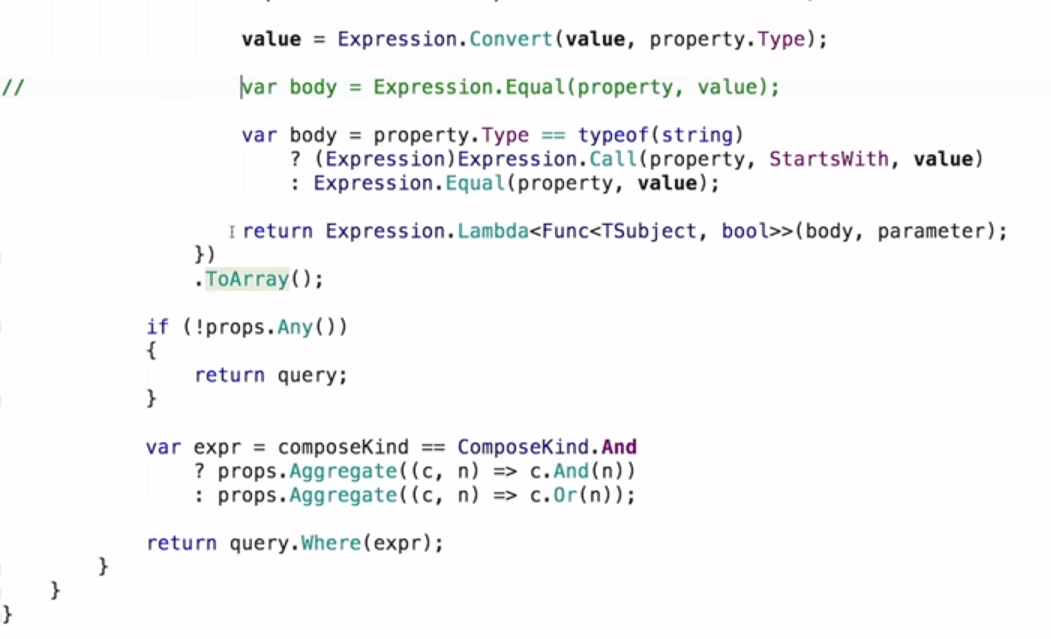

Next you need to check for null, use a constant to pull the value from the DTO, substitute it into the expression, and add a conversion so that the types match in case you have int and Nullable<int>, or another Nullable. Then apply, for example, Equals(), a filter that checks for equality.

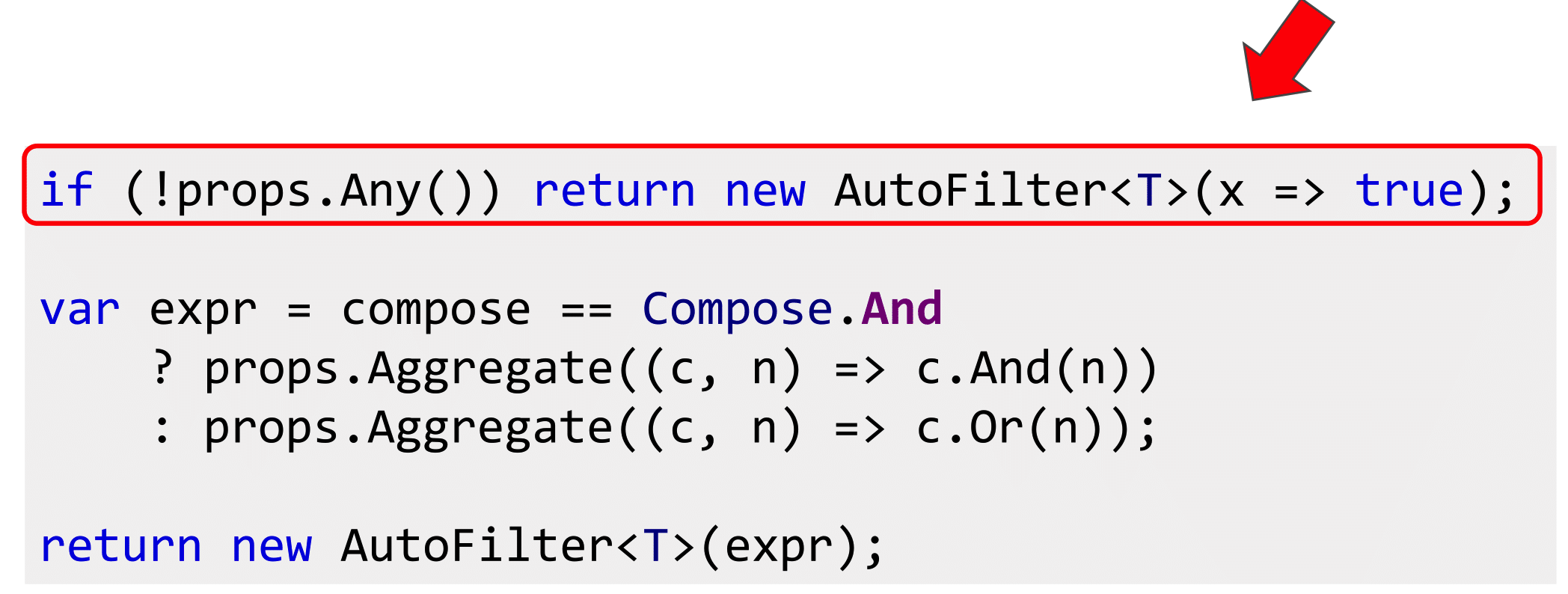

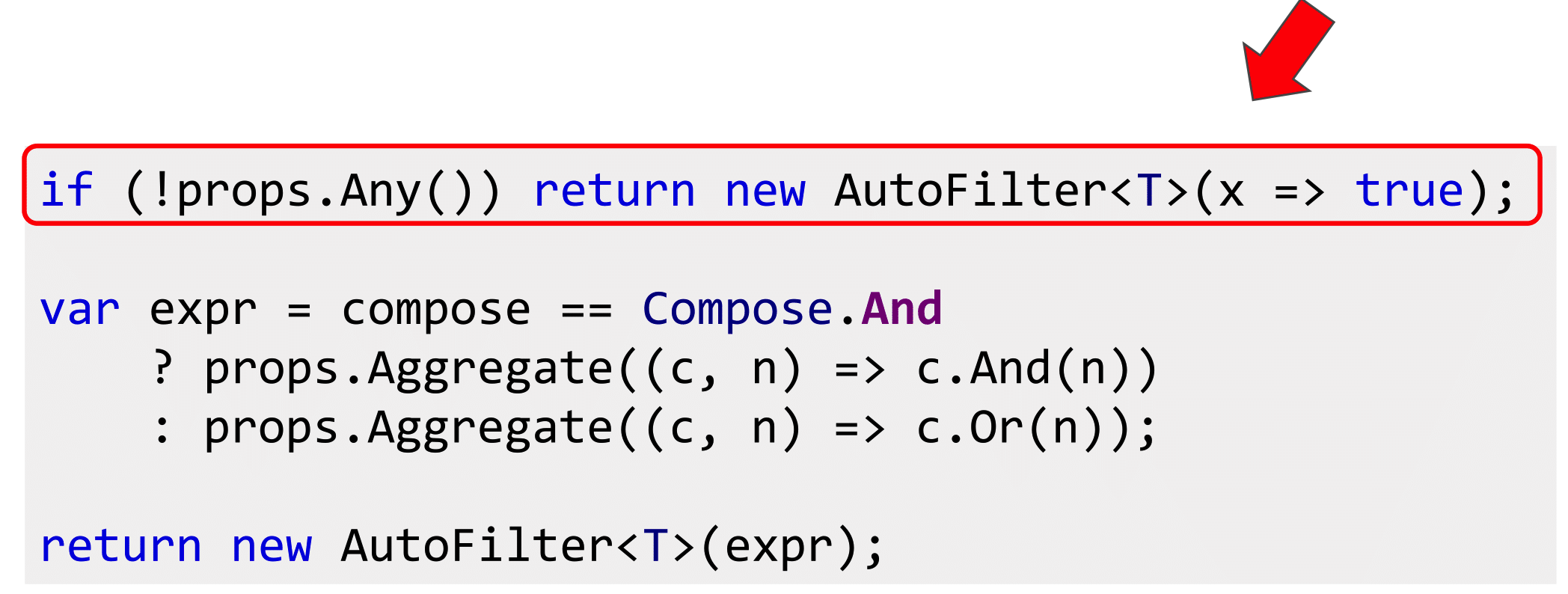

After that, assemble the lambda and repeat this for each property: if there are many of them, join the conditions with either "AND" or "OR", depending on how the filter should work for you.
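The steps above can be put together in a short sketch. This is an assumed reconstruction, not the code from the slides: the class AutoFilter, the method Filter and the sample Product and ProductFilter types are hypothetical, conditions are joined with "AND", and only equality is supported.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;

public class Product
{
    public string Name { get; set; }
    public decimal Price { get; set; }
}

// Filter DTO: a null property means "do not filter by this field".
public class ProductFilter
{
    public string Name { get; set; }
    public decimal? Price { get; set; }
}

public static class AutoFilter
{
    public static IQueryable<TEntity> Filter<TEntity, TFilter>(
        this IQueryable<TEntity> query, TFilter filter)
    {
        var parameter = Expression.Parameter(typeof(TEntity), "x");
        Expression body = null;

        foreach (var filterProperty in typeof(TFilter).GetProperties())
        {
            // Match properties of the DTO and the entity by name.
            var entityProperty = typeof(TEntity).GetProperty(filterProperty.Name);
            var value = filterProperty.GetValue(filter);
            if (entityProperty == null || value == null) continue;

            Expression left = Expression.Property(parameter, entityProperty);
            Expression right = Expression.Constant(value);

            // Align types, for example int on one side and Nullable<int> on the other.
            if (right.Type != left.Type) right = Expression.Convert(right, left.Type);

            var condition = Expression.Equal(left, right);
            body = body == null ? condition : Expression.AndAlso(body, condition);
        }

        return body == null
            ? query
            : query.Where(Expression.Lambda<Func<TEntity, bool>>(body, parameter));
    }
}
```

Swapping Expression.AndAlso for Expression.OrElse changes how the conditions are joined, and the equality check is the natural place to branch on the property type if strings or money should be matched differently.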

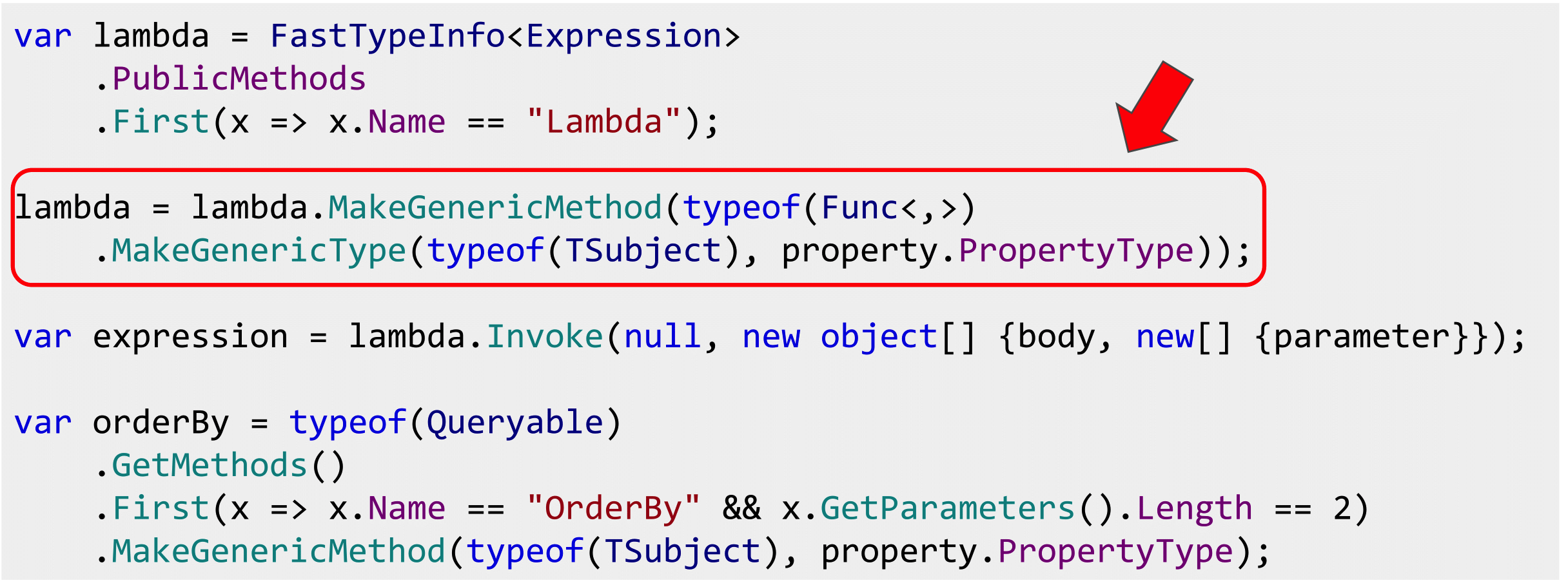

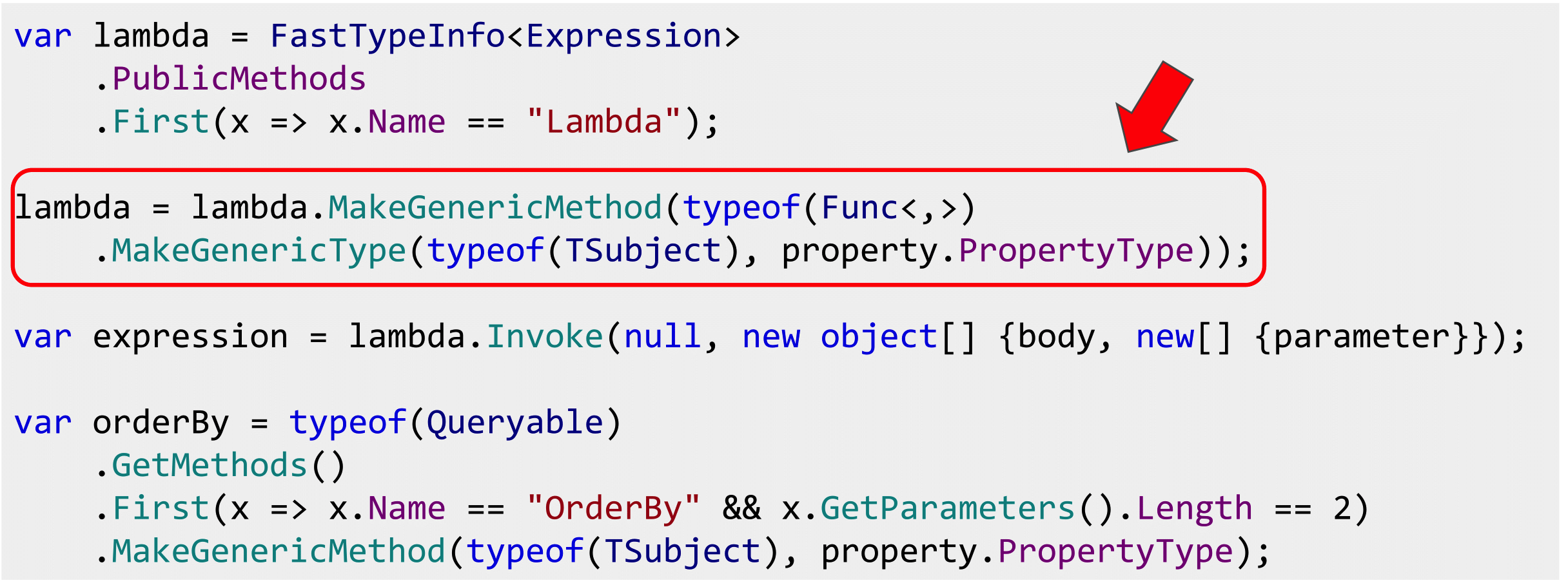

The same can be done for sorting, but it is a bit more complicated, because the OrderBy() method has two generic parameters. You have to fill them in by hand: use Reflection to construct OrderBy() with both generic arguments, namely the entity type and the type of the property being sorted on. All in all this is doable too, and not hard.
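A possible sketch of the sorting helper, assuming the property to sort by arrives as a string (AutoSort and OrderByProperty are hypothetical names). The two generic arguments of Queryable.OrderBy are supplied through Reflection, as described above:

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;

public class Product
{
    public string Name { get; set; }
    public decimal Price { get; set; }
}

public static class AutoSort
{
    public static IQueryable<T> OrderByProperty<T>(this IQueryable<T> query, string propertyName)
    {
        var property = typeof(T).GetProperty(propertyName)
            ?? throw new ArgumentException($"No property '{propertyName}' on {typeof(T).Name}");

        // x => x.Property, built without knowing the property type at compile time.
        var parameter = Expression.Parameter(typeof(T), "x");
        var keySelector = Expression.Lambda(Expression.Property(parameter, property), parameter);

        // Pick OrderBy(source, keySelector) and close it over <T, TKey>.
        var orderBy = typeof(Queryable).GetMethods()
            .Single(m => m.Name == nameof(Queryable.OrderBy) && m.GetParameters().Length == 2)
            .MakeGenericMethod(typeof(T), property.PropertyType);

        return (IQueryable<T>)orderBy.Invoke(null, new object[] { query, keySelector });
    }
}
```

The reflective lookup happens on every call in this sketch; in real code the constructed MethodInfo would be a natural candidate for caching.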

The question arises: where should Where() go, at the entity level, where the specifications are declared, or after the projection? It will work in both places.

Both are right. Specifications are business rules by definition; we must cherish them and make no mistakes with them. This is the domain layer. Filters are more about the UI, which means they filter by DTO. So you can have two Where() calls. The bigger question is how well the query provider will cope with this, but I believe that ORMs write bad SQL anyway, and this will not make it much worse. If that matters a great deal to you, then this story is not for you.

As they say, it is better to see once than to hear a hundred times.

Right now the store has three products: "Snickers", Subaru Impreza, and "Mars". A strange shop. Let's try to find "Snickers". There it is. Let's see what costs a hundred rubles. Also "Snickers". And for 500? Let's look closer: there is nothing. And for 100,500 there is the Subaru Impreza. Great; the same goes for sorting.

We sort alphabetically and by price. There is exactly as much code as I showed. These filters work for any classes you like. If you search by name, the Subaru is found too. But the presentation had Equals(). How come? The thing is that the code here differs a bit from the code in the presentation. I commented out the line with Equals() and added some special street magic: if the type is String, we call not Equals() but StartsWith(), whose MethodInfo I also obtained. So a different filter is built for strings.

This means that here you can press Ctrl + Shift + R, extract a method, and write a switch instead of an if, or even implement the Strategy pattern and then go completely overboard. You can implement any filter behavior you want. It all depends on the types you work with. Most importantly, the filters will work the same way everywhere.

You can agree that filters in all UI elements should work like this: strings are searched one way, money another. Once this is agreed and written, it will behave correctly in every interface, and other developers will not break it, because the code lives not at the application level but in an external library or in your core.

Besides filtering and projection, you can do validation. I got this idea from the tcomb-validation JS library. tcomb is short for Type Combinators; it is based on a type system and on so-called refinements.

First, primitives are declared that correspond to all the JS types, plus an additional type, Nil, which matches either undefined or null.

Then it gets interesting. Each type can be refined with a predicate. If we want numbers that are not less than zero, we declare the predicate x >= 0 and validate against the type Positive. From these building blocks you can assemble any validation you need. You have probably noticed that there is a lambda expression here too.

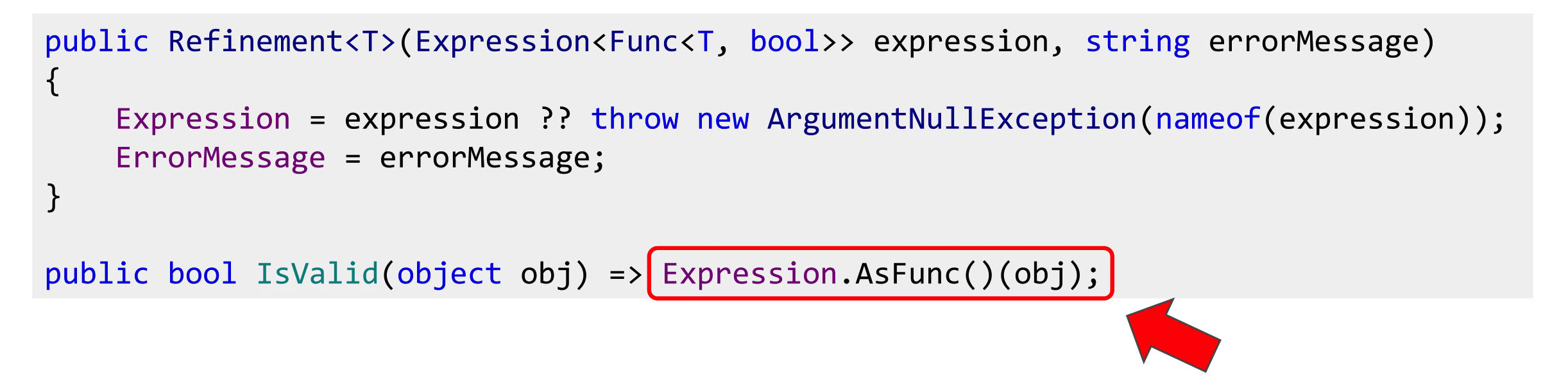

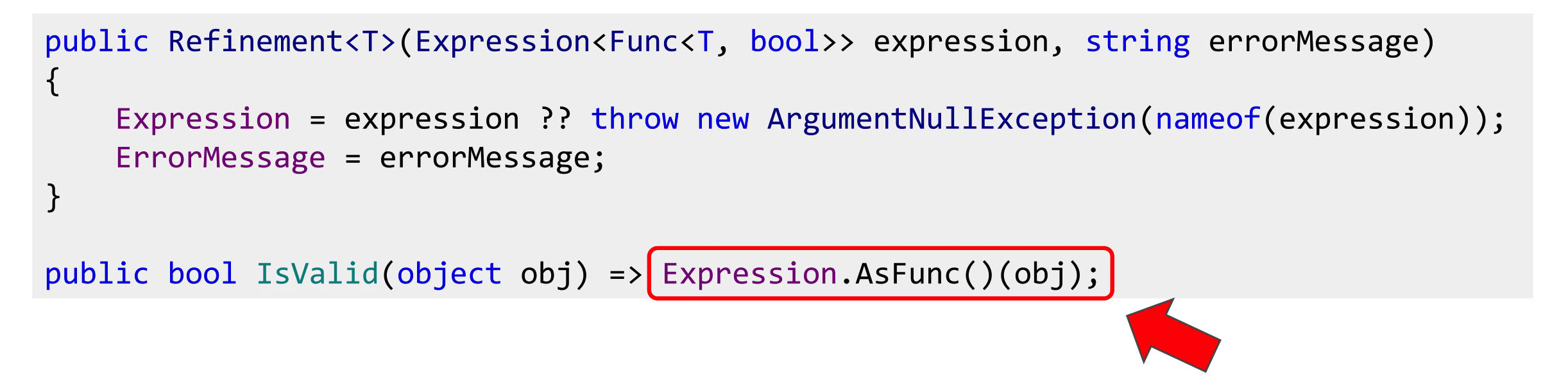

Challenge accepted. We take the same refinement and write it in C#: we write an IsValid() method, compile the Expression, and execute it. Now we are able to validate.

We integrate with the standard DataAnnotations system in ASP.NET MVC so that it all works out of the box. We declare a RefinementAttribute and pass a type to its constructor. The refinement itself is generic, so the type has to be passed this way, because unfortunately a generic attribute could not be declared in .NET at the time.

Then we mark the User class with a refinement: AdultRefinement, which says that the age is over 18.
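A possible shape for this, sketched under assumptions: the IRefinement interface and the exact members are hypothetical, and the age rule is written as age >= 18, which is one reading of "over 18". The attribute receives the refinement type and plugs into DataAnnotations through ValidationAttribute:

```csharp
using System;
using System.ComponentModel.DataAnnotations;
using System.Linq.Expressions;

// Non-generic view of a refinement so that an attribute can use it.
public interface IRefinement
{
    bool IsValid(object value);
    string ErrorMessage { get; }
}

public abstract class Refinement<T> : IRefinement
{
    private readonly Lazy<Func<T, bool>> _compiled;

    protected Refinement(Expression<Func<T, bool>> predicate, string errorMessage)
    {
        Predicate = predicate;
        ErrorMessage = errorMessage;
        _compiled = new Lazy<Func<T, bool>>(() => Predicate.Compile());
    }

    // Kept as an expression so it can also be inspected or translated elsewhere.
    public Expression<Func<T, bool>> Predicate { get; }
    public string ErrorMessage { get; }

    public bool IsValid(T value) => _compiled.Value(value);

    bool IRefinement.IsValid(object value) => value is T typed && IsValid(typed);
}

public sealed class AdultRefinement : Refinement<int>
{
    // Assumption: "adult" is modeled as age >= 18.
    public AdultRefinement() : base(age => age >= 18, "For adults only") { }
}

[AttributeUsage(AttributeTargets.Property)]
public sealed class RefinementAttribute : ValidationAttribute
{
    private readonly IRefinement _refinement;

    // The refinement is passed as a Type because the attribute itself is not generic.
    public RefinementAttribute(Type refinementType)
    {
        _refinement = (IRefinement)Activator.CreateInstance(refinementType);
        ErrorMessage = _refinement.ErrorMessage;
    }

    public override bool IsValid(object value) => _refinement.IsValid(value);
}

public class User
{
    [Refinement(typeof(AdultRefinement))]
    public int Age { get; set; }
}
```

Because RefinementAttribute derives from ValidationAttribute, model validation picks it up the same way as the built-in attributes such as Required or Range.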

To make it really nice, let's make validation the same on the client and the server. Node.js advocates suggest writing both the back end and the front end in JS. Well, I will write both the back end and the front end in C#, nothing wrong with that, and simply translate it into JS. JavaScript developers write in their JSX and ES6 and transpile it to JavaScript. Why can't we? We write a Visitor, go over the operators we need, and emit JavaScript.

A frequent validation case that deserves separate mention is regular expressions; they need to be translated as well. If you have a regexp, take a StringBuilder and assemble the regexp. Here I used two exclamation marks: since JS is a dynamically typed language, they make sure the expression is always converted to bool, so that everything is fine with the type. Let's see what it looks like.
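A deliberately small sketch of such a translator, assuming it only needs comparisons, arithmetic and logical operators over the lambda parameter and numeric or boolean constants. The class name JsTranslator and the supported operator set are assumptions, and regular expressions are left out:

```csharp
using System;
using System.Globalization;
using System.Linq.Expressions;
using System.Text;

public sealed class JsTranslator : ExpressionVisitor
{
    private readonly StringBuilder _js = new StringBuilder();

    // Produces a JS arrow function as text; "!!" forces the result to a boolean.
    public static string Translate(LambdaExpression lambda)
    {
        var translator = new JsTranslator();
        translator.Visit(lambda.Body);
        return $"{lambda.Parameters[0].Name} => !!{translator._js}";
    }

    // Reject every node kind this sketch does not know how to emit.
    public override Expression Visit(Expression node)
    {
        if (node is BinaryExpression || node is ParameterExpression || node is ConstantExpression)
            return base.Visit(node);
        throw new NotSupportedException($"Cannot translate node type {node?.NodeType}");
    }

    protected override Expression VisitBinary(BinaryExpression node)
    {
        _js.Append('(');
        Visit(node.Left);
        _js.Append(' ').Append(Operator(node.NodeType)).Append(' ');
        Visit(node.Right);
        _js.Append(')');
        return node;
    }

    protected override Expression VisitParameter(ParameterExpression node)
    {
        _js.Append(node.Name);
        return node;
    }

    protected override Expression VisitConstant(ConstantExpression node)
    {
        switch (node.Value)
        {
            case bool flag:
                _js.Append(flag ? "true" : "false");
                break;
            case int _:
            case long _:
            case double _:
            case decimal _:
                _js.Append(Convert.ToString(node.Value, CultureInfo.InvariantCulture));
                break;
            default:
                throw new NotSupportedException("Only numeric and boolean constants are supported");
        }
        return node;
    }

    private static string Operator(ExpressionType type)
    {
        switch (type)
        {
            case ExpressionType.AndAlso: return "&&";
            case ExpressionType.OrElse: return "||";
            case ExpressionType.Equal: return "===";
            case ExpressionType.NotEqual: return "!==";
            case ExpressionType.GreaterThan: return ">";
            case ExpressionType.GreaterThanOrEqual: return ">=";
            case ExpressionType.LessThan: return "<";
            case ExpressionType.LessThanOrEqual: return "<=";
            case ExpressionType.Add: return "+";
            case ExpressionType.Subtract: return "-";
            default: throw new NotSupportedException($"Operator {type} is not supported");
        }
    }
}
```

For the predicate age => age >= 18 this emits the text age => !!(age >= 18), which the client can turn into a function. Anything outside the whitelist fails loudly instead of producing JavaScript that silently differs from the C# rule.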

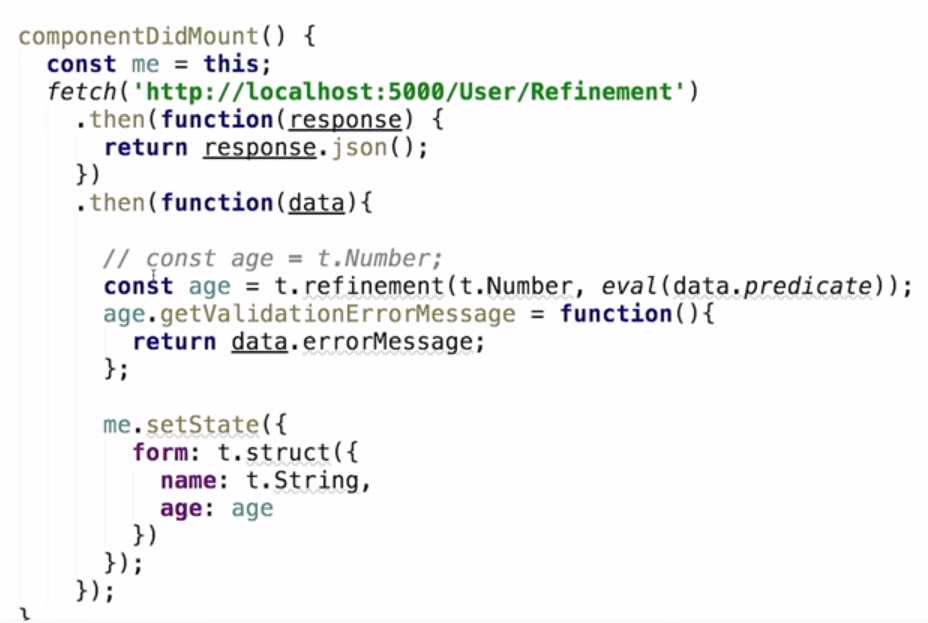

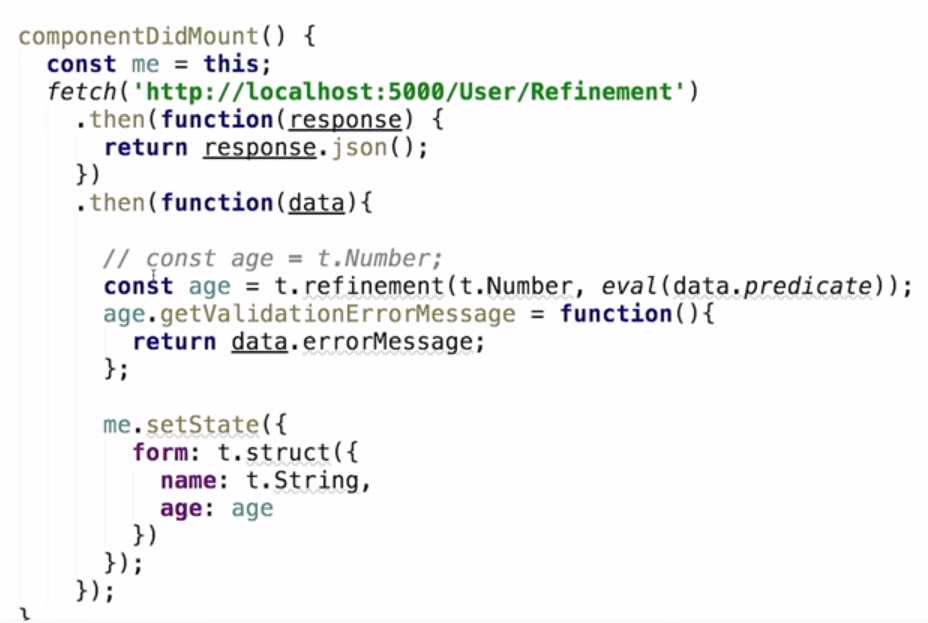

Here is our refinement as it comes from the back end: the predicate comes as a string, since a lambda cannot be passed as is, together with the errorMessage "For adults only". Let's try to fill in the form. It does not pass. Let's look at how it is made.

This is React. We request the Expression and the errorMessage from the UserRefinment() method on the back end, construct a refinement over number, and use eval to get a lambda. If I redo it and remove the refinement, replacing it with the plain number type, the validation in JS will drop out. I enter one and submit. I do not know whether you can see it, but false is displayed here.

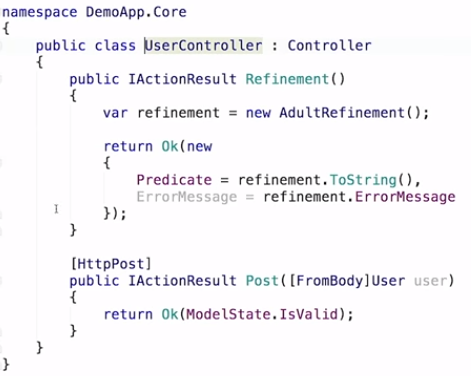

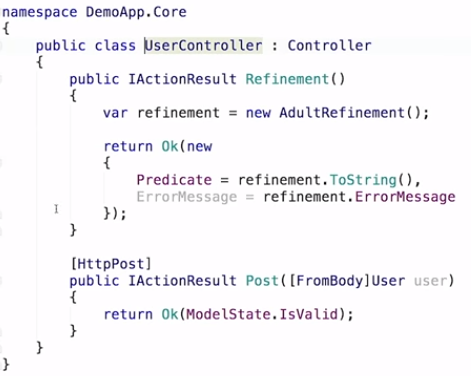

The code is just an alert. On onSubmit we alert whatever came back from the back end. And on the back end the code is this simple.

We simply return Ok(ModelState.IsValid) for the User class that we get from the JavaScript form. Here is the Refinement attribute.

That is, the validation declared in this lambda works on the back end, and we also translate it into JavaScript. So we write lambda expressions in C#, and the code runs both here and there. That is our answer to Node.js: we can do it too.

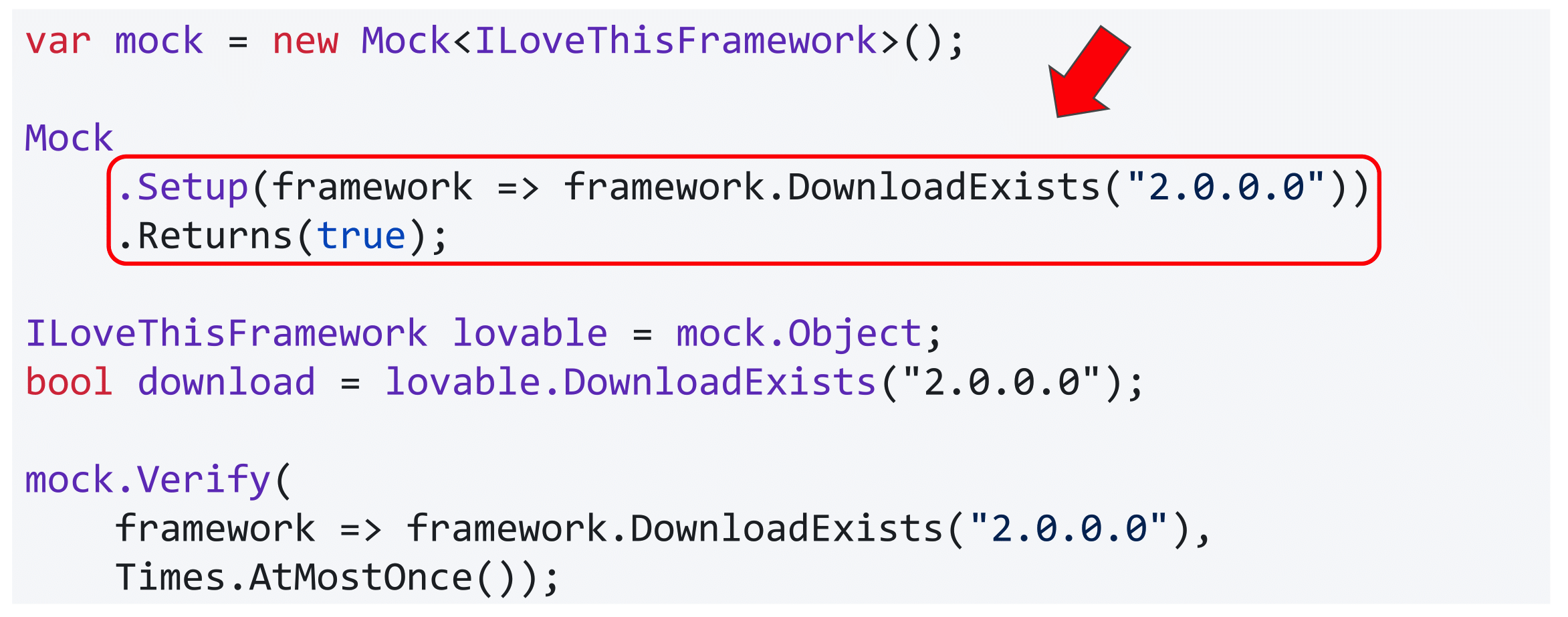

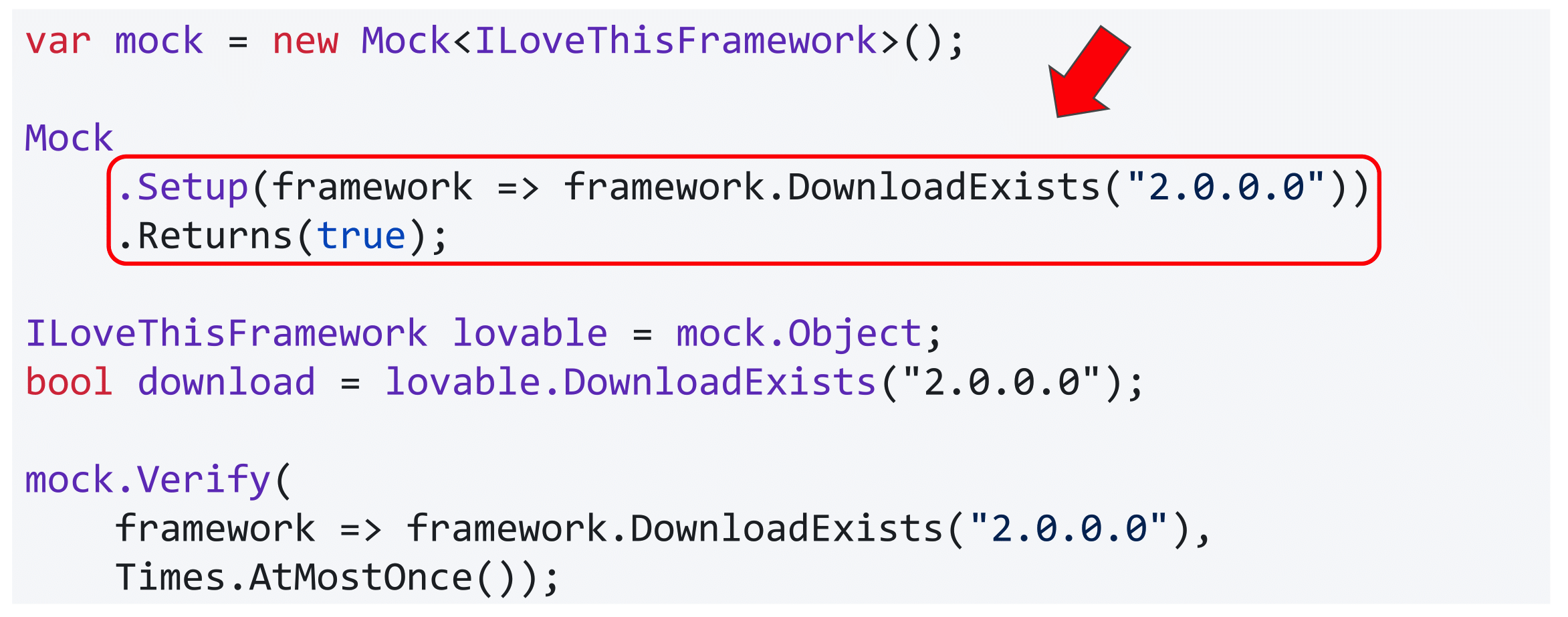

Usually it is the team lead who worries most about the number of errors in the code. Those who write unit tests know the Moq library. If you do not want to write a mock by hand or declare a class, there is Moq with its fluent syntax. You describe how you want the mock to behave and hand it to your application for testing.

These lambdas in Moq are also Expressions, not delegates. It walks the expression trees, applies its own logic, and feeds the result into Castle.DynamicProxy, which creates the necessary classes at runtime. But we can do that too.

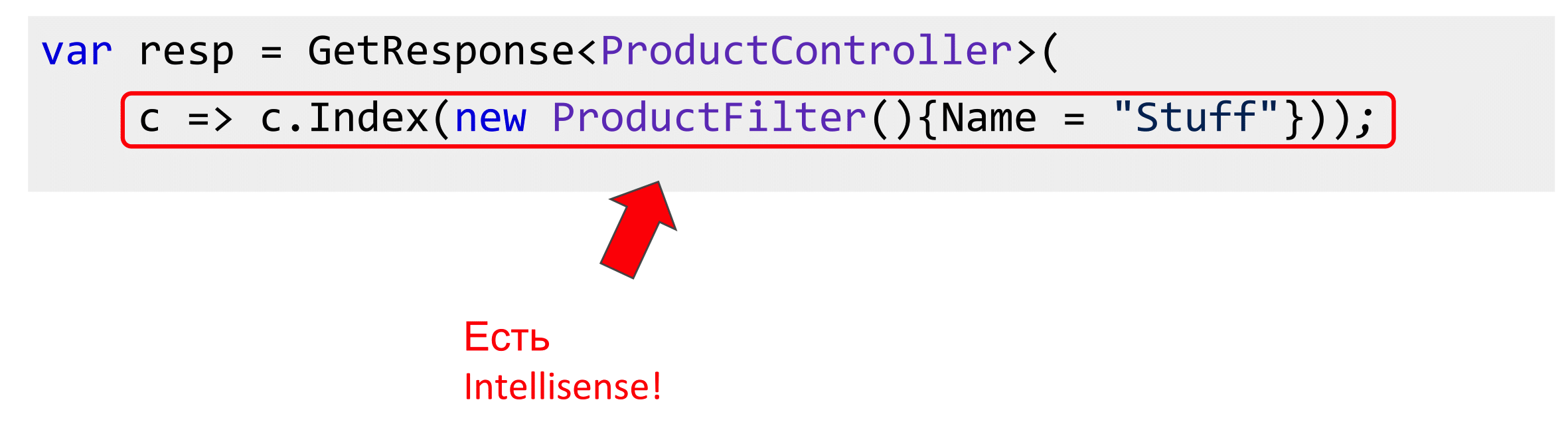

A friend of mine recently asked whether we have something like WCF in Core. I replied that there is Web API. He wanted to generate a proxy for Web API the way WCF does from WSDL. Web API has only Swagger. But Swagger is just text, and my friend did not want to keep track of every API change and what it breaks. Back with WCF he referenced the WSDL, and if the API contract changed, compilation broke.

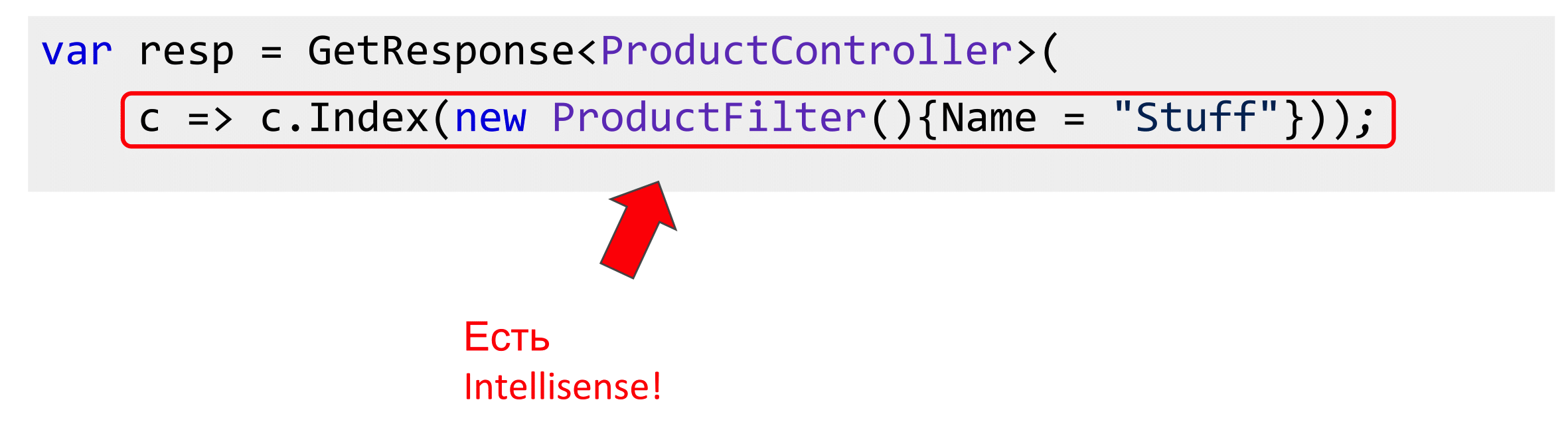

This makes sense: nobody wants to search by hand when the compiler can help. By analogy with Moq, you can declare a generic GetResponse<>() method parameterized with your ProductController, and the lambda that goes into this method takes the controller as its parameter. That is, as you start writing the lambda, you press Ctrl + Space and see all the methods this controller has, provided you have the library, the dll with the code. IntelliSense works, and you write all this as if you were calling the controller.

Then, like Moq, we do not call it; we just build an expression tree, walk it, and pull all the routing information from the API config. Instead of doing something with the controller, which we cannot run because it has to execute on the server, we simply make the POST or GET request we need, and on the way back we deserialize the response, because thanks to the expression tree we know all the return types. So we write code about controllers, but in reality we make web requests.
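The first half of that idea, turning a controller call written as a lambda into a request description, might look like the sketch below. Everything here is an assumption made for illustration: the /api/{controller}/{action} route convention, the ApiRequest and BuildUrl names, and passing arguments in the query string. A real client would read the routes from the API configuration and then send the request and deserialize the response.

```csharp
using System;
using System.Globalization;
using System.Linq;
using System.Linq.Expressions;

public class Product
{
    public int Id { get; set; }
}

// Stand-in for a server-side controller; the client never executes its methods.
public class ProductController
{
    public Product GetById(int id) => throw new NotSupportedException("Runs on the server only");
}

public static class ApiRequest
{
    public static string BuildUrl<TController>(Expression<Func<TController, object>> call)
    {
        // Value-type results are wrapped in a Convert node when boxed to object.
        var body = (call.Body is UnaryExpression unary && unary.NodeType == ExpressionType.Convert)
            ? unary.Operand
            : call.Body;

        var methodCall = body as MethodCallExpression
            ?? throw new ArgumentException("Expected a controller method call", nameof(call));

        var controller = typeof(TController).Name.Replace("Controller", "");

        // Pair each declared parameter with the evaluated argument expression.
        var query = methodCall.Method.GetParameters()
            .Zip(methodCall.Arguments, (p, a) => $"{p.Name}={Uri.EscapeDataString(Evaluate(a))}");

        return $"/api/{controller}/{methodCall.Method.Name}?{string.Join("&", query)}";
    }

    // Evaluates an argument (constant or captured variable) without invoking the controller.
    private static string Evaluate(Expression argument)
    {
        var value = Expression.Lambda(argument).Compile().DynamicInvoke();
        return Convert.ToString(value, CultureInfo.InvariantCulture);
    }
}
```

If GetById is renamed or its parameters change, the lambda at the call site stops compiling, which is the compile-time safety the WSDL proxy used to provide.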

Reflection Optimization

Metaprogramming has a lot in common with Reflection.

We know that Reflection is slow, and we would like to avoid it. Expressions have some good use cases here too. The first is Activator.CreateInstance. I would not use it at all, because there is Expression.New(), which you can simply wrap in a lambda, compile, and then use as a constructor delegate.
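A minimal sketch of that technique, assuming a type with a public parameterless constructor (the Factory name is hypothetical). The lambda is compiled once per type thanks to the static field of the generic class:

```csharp
using System;
using System.Linq.Expressions;

public static class Factory<T> where T : new()
{
    // () => new T(), built as an expression tree and compiled once per closed type.
    public static readonly Func<T> Create =
        Expression.Lambda<Func<T>>(Expression.New(typeof(T))).Compile();
}
```

After the one-time compilation, Factory<StringBuilder>.Create() is an ordinary delegate call.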

I borrowed this slide from a wonderful speaker and musician, Vagif. He ran a benchmark on his blog. Here is Activator, it is Communism Peak: you can see how hard it strains. Constructor_Invoke takes about half as long. And on the left are New and the compiled lambda. There is a slight difference in performance between them, because one is a delegate rather than a constructor, but the choice is obvious: it is clearly much better.

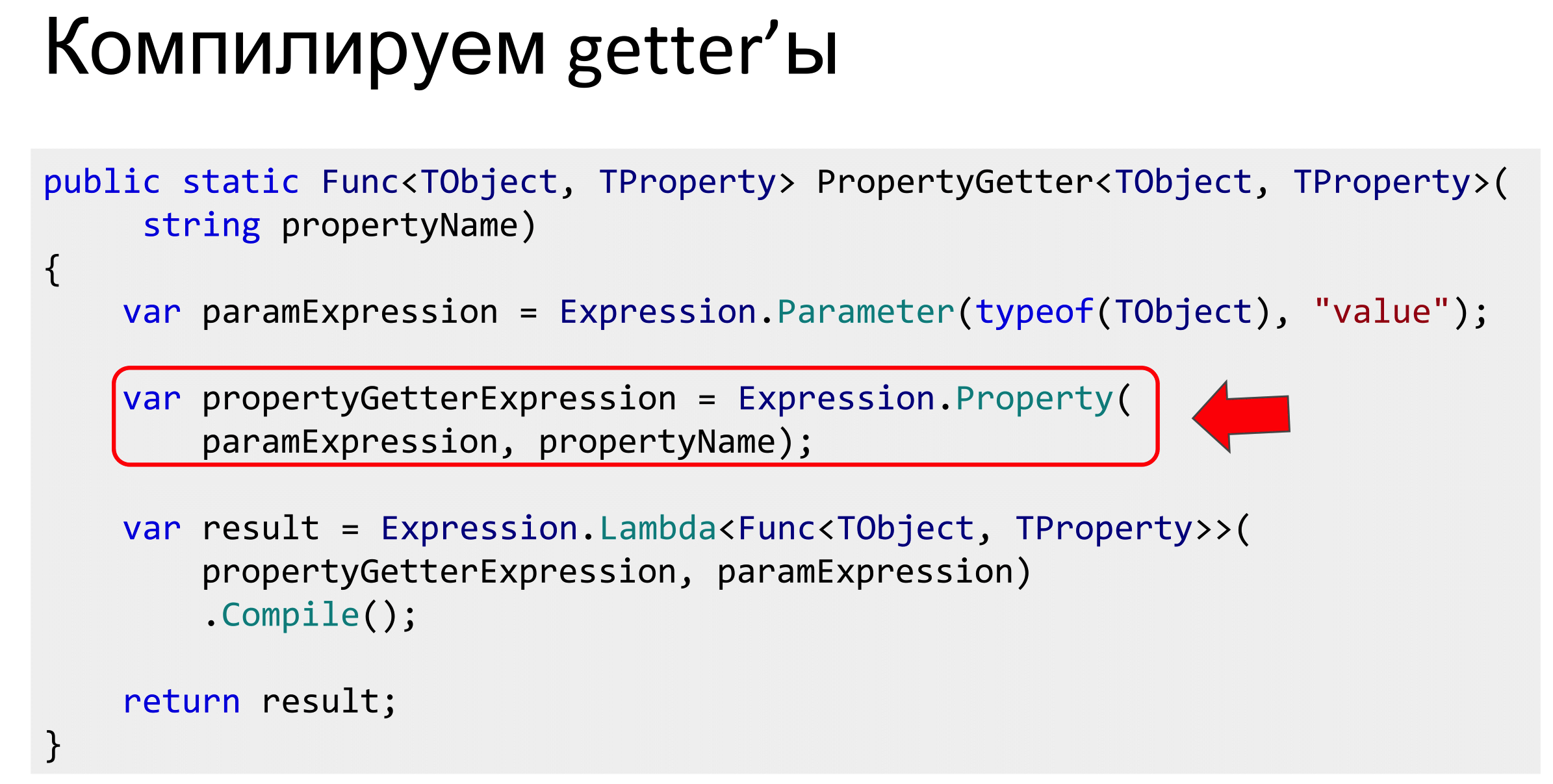

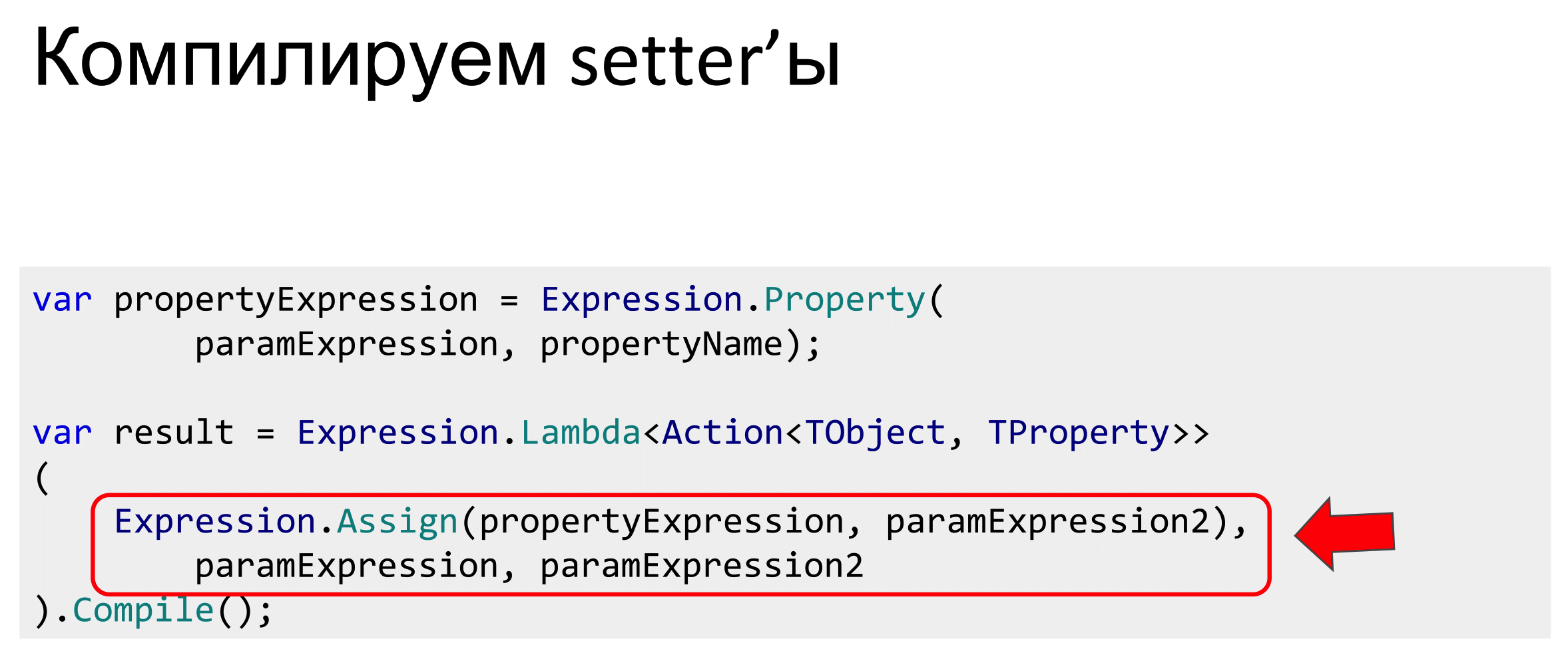

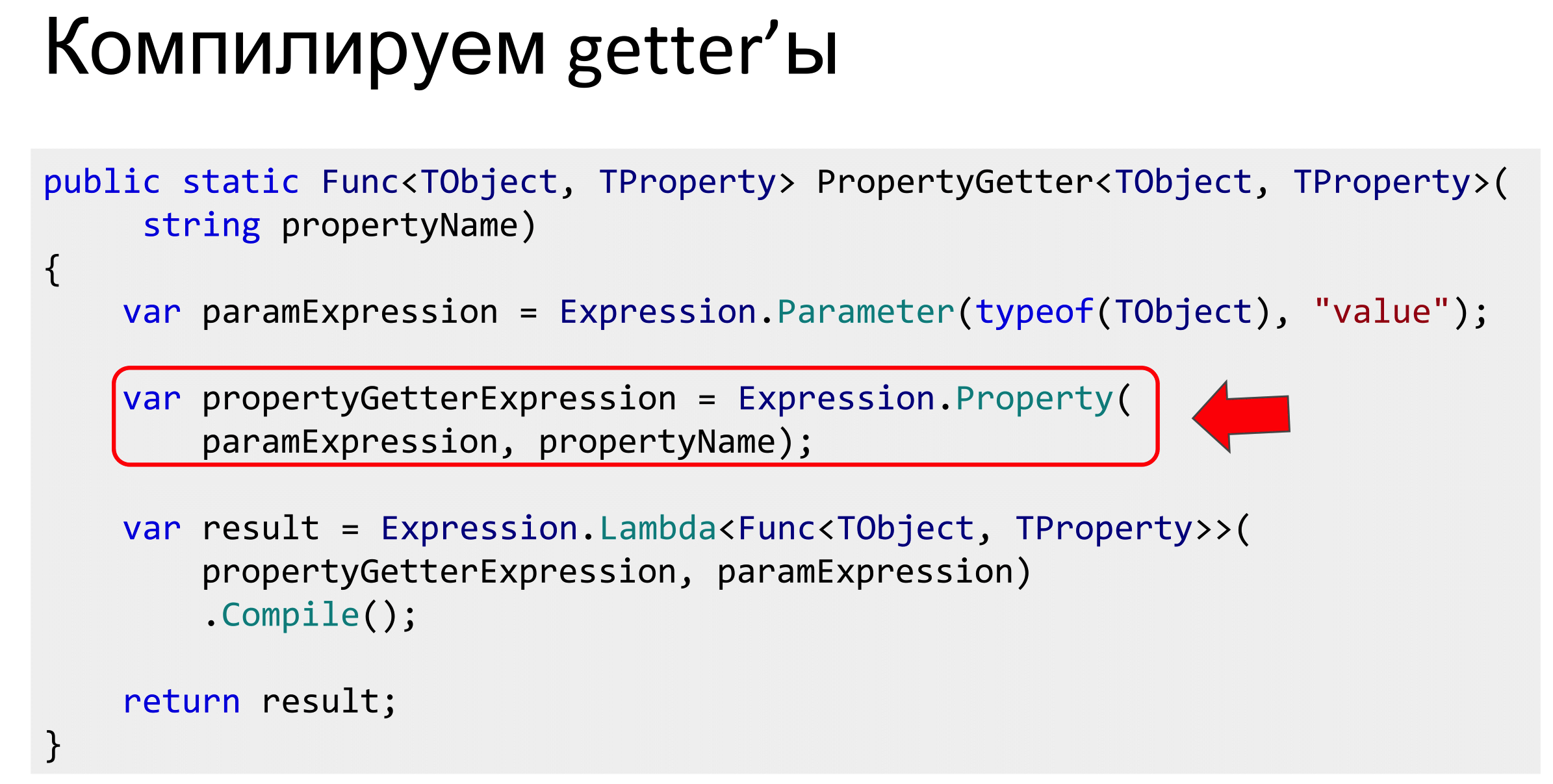

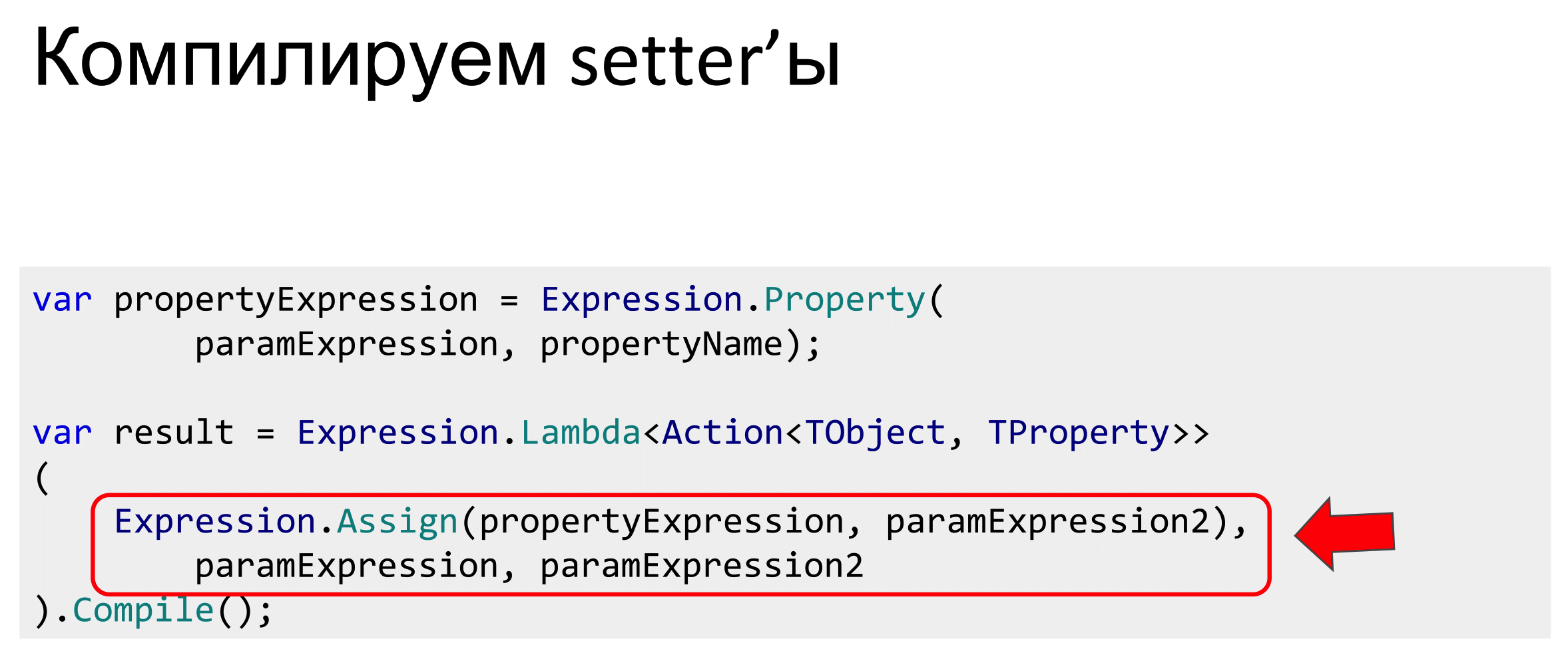

The same can be done with getters and setters.

It is done very simply. If for some reason you are not happy with Marc Gravell's FastMember or with Fast Reflect and do not want to drag in the dependency, you can do the same yourself. The only difficulty is that you need to keep track of all these compilations, store them somewhere, and warm up the cache. That is, if there are a lot of them, you need to compile them once at startup.
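A sketch of compiled property accessors, with hypothetical names (Accessors, Getter, Setter). Each call builds and compiles a small lambda, so in practice the returned delegates should be created once and stored, as noted above:

```csharp
using System;
using System.Linq.Expressions;

public static class Accessors
{
    // instance => instance.Property
    public static Func<T, TValue> Getter<T, TValue>(string propertyName) where T : class
    {
        var instance = Expression.Parameter(typeof(T), "instance");
        var body = Expression.Property(instance, propertyName);
        return Expression.Lambda<Func<T, TValue>>(body, instance).Compile();
    }

    // (instance, value) => instance.Property = value
    public static Action<T, TValue> Setter<T, TValue>(string propertyName) where T : class
    {
        var instance = Expression.Parameter(typeof(T), "instance");
        var value = Expression.Parameter(typeof(TValue), "value");
        var body = Expression.Assign(Expression.Property(instance, propertyName), value);
        return Expression.Lambda<Action<T, TValue>>(body, instance, value).Compile();
    }
}

public class Product
{
    public string Name { get; set; }
}
```

For example, Accessors.Setter<Product, string>("Name") returns a delegate that assigns the property, and Accessors.Getter<Product, string>("Name") returns one that reads it, with no Reflection involved at call time.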

Once we have constructors, getters, and setters, only behavior, that is, methods, remains. But methods can also be compiled into delegates, and you simply end up with a big zoo of delegates that you need to be able to manage. Knowing everything I have told you, someone might get the idea that if there are many delegates and many expressions, then maybe there is room for what is called a DSL, Little Languages, the Interpreter pattern, or a free monad.

These are all the same thing: for some task we come up with a set of commands and write our own interpreter for it. That is, inside the application we write yet another compiler or interpreter that knows how to execute these commands. This is exactly how it is done in the DLR, in the part that works with the IronPython and IronRuby languages. Expression trees are used there to execute dynamic code on the CLR. The same can be done in business applications, but so far we have not seen such a need, so this remains out of scope.

To wrap up, I want to talk about the conclusions we reached after implementing and testing all this. As I said, it happened on different projects. We do not use everything I have described everywhere, but where it was needed, some of these things were used.

The first plus is the ability to automate routine work. Say you have 100 thousand forms with filtering, pagination, and all that. Mozart had a joke that with dice, enough time, and a glass of red wine you can write waltzes in any quantity. Here, with expression trees and a bit of metaprogramming, you can write forms in any quantity.

The amount of code drops significantly. It is an alternative to code generation: if you do not like code generation because it produces a lot of code, you can skip writing that code and leave everything to runtime.

By using such code for simple tasks we further lower the requirements for the developers who implement them, because there is very little imperative code and little room for error. By pulling a large amount of code into reusable components, we eliminate that class of errors.

On the other hand, we greatly raise the qualification requirements for the designer, because questions arise that demand knowledge of Expressions, Reflection, their optimization, and the places where you can shoot yourself in the foot. There are many such nuances, so a person unfamiliar with this API will not immediately understand why Expressions do not simply combine. The designer has to be stronger.

In some cases Expression.Compile() can cause performance degradation. In the caching example I had the limitation that the Expressions are static, because a dictionary is used for caching. If someone who does not know how it works inside starts using it thoughtlessly and declares non-static specifications, the cache will not work, and we will get Compile() calls in random places. Exactly what I wanted to avoid.

The most unpleasant minus is that the code stops looking like C# code. It becomes less idiomatic: static calls appear, strange additional Where() methods, overloaded implicit operators. None of this is in the MSDN documentation or its examples. If, say, a person with little experience joins you, someone not used to digging into source code, they will most likely be somewhat at a loss at first, because it does not fit their picture of the world and there are no such examples on StackOverflow, yet they will have to work with it somehow.

That is essentially all I wanted to talk about today. Much of what I said is written up in more detail on Habr. The library code is published on GitHub, but it has one fatal flaw: a complete lack of documentation.

It is easy to add such a property, IsAvailable. Now the business rule is encapsulated, and we can reuse it.

Let's try to use it in the LINQ query. Is everything good here?

No, this will not work, because IsAvailable is not mapped to the database. It is our own code, and the query provider does not know how to parse it.

We can tell the provider what is inside our property. But now the same lambda is duplicated in both the LINQ expression and the property.

Where(x => x.IsForSale && x.InStock > 0)

IsAvailable => IsForSale && InStock > 0;

So, the next time this lambda changes, we will have to press Ctrl + Shift + F across the project. Naturally, we will not find every occurrence, which means bugs and lost time. I want to avoid this.

We could approach it from another side and put a ToList() call before Where(). This is a bad solution, because if there are a million products in the database, all of them are loaded into RAM and filtered there.

If you have three products in the store, the solution is fine, but in e-commerce there are usually more. It worked only because, although the two lambdas look alike, their types are completely different. In the first case it is a Func delegate, and in the second an expression tree. They look the same, but the types are different and the bytecode is completely different.

To go from an expression to a delegate, simply call the Compile() method. .NET provides this API: you have an expression, you compile it, you get a delegate.

But how do we go back? Is there something in .NET to go from a delegate to an expression tree? If you are familiar with LISP, for example, it has a quoting mechanism that lets code be treated as a data structure, but .NET has nothing like that.

Expressions or delegates?

Given that we have two kinds of lambdas, we can philosophize about which is primary: expression trees or delegates.

// so slo-o-o-o-o-o-o-ow
var delegateLambda = expressionLambda.Compile();

At first glance, the answer is obvious: since there is a wonderful Compile() method, the expression tree is primary, and we should get the delegate by compiling the expression. But compilation is a slow process, and if we do it everywhere we will get performance degradation. Worse, we will get it in random places: wherever an expression had to be compiled into a delegate, there will be a performance drop. You can hunt for these places, but they will affect the server's response time, and unpredictably.

Therefore, the compiled delegates must be cached somehow. If you listened to the talk about concurrent data structures, you know about ConcurrentDictionary (or you simply know about it). I will omit the details of caching approaches (with locks, without locks). ConcurrentDictionary has a simple GetOrAdd() method, so the simplest implementation is to put the delegate into a ConcurrentDictionary and cache it. The first call pays for compilation, but after that everything is fast, because the delegate has already been compiled.

Then we can use this extension method to refactor our code with IsAvailable: describe the expression once, and in the IsAvailable property compile it and call it on the current this object.
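A minimal sketch of this caching idea, not the code from the slide. The names CompiledExpressions, AsFunc and IsAvailableExpr are hypothetical; the cache is keyed by the expression object itself, which is why the expression has to live in a static field.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq.Expressions;

public static class CompiledExpressions
{
    // Keyed by the expression object (reference equality), so the cache only
    // helps when the same instance is reused, e.g. a static readonly field.
    private static readonly ConcurrentDictionary<LambdaExpression, Delegate> Cache =
        new ConcurrentDictionary<LambdaExpression, Delegate>();

    // Hypothetical helper: compiles on first use, returns the cached delegate afterwards.
    public static Func<T, TResult> AsFunc<T, TResult>(this Expression<Func<T, TResult>> expression)
        => (Func<T, TResult>)Cache.GetOrAdd(expression, _ => expression.Compile());
}

public class Product
{
    // The business rule is written once, as an expression tree.
    public static readonly Expression<Func<Product, bool>> IsAvailableExpr =
        x => x.IsForSale && x.InStock > 0;

    public bool IsForSale { get; set; }
    public int InStock { get; set; }

    // The property reuses the same rule through the cached delegate.
    public bool IsAvailable => IsAvailableExpr.AsFunc()(this);
}

public static class Program
{
    public static void Main()
    {
        var product = new Product { IsForSale = true, InStock = 3 };
        Console.WriteLine(product.IsAvailable);
    }
}
```

The same IsAvailableExpr can be passed to Where() on an IQueryable, so the query provider and the in-memory property share one definition.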

There are at least two packages that implement this: Microsoft.Linq.Translations and Signum Framework (an open source framework written by a commercial company). Both follow roughly the same approach of compiling delegates. The API is slightly different, but the idea is what I showed on the previous slide.

However, this is not the only approach: you can also go from delegates to expressions. There has long been an article on Habr about Delegate Decompiler, whose author claims that all compilation is bad because it takes a long time.

In general, delegates existed before expressions, and it is possible to go from delegates to expressions. To do this, the author uses methodBody.GetILAsByteArray() from Reflection, which really does return the entire IL code of a method as a byte array. If you feed that further into Reflection, you can get an object representation of the IL, walk through it and build an expression tree. So the reverse transition is also possible, but it has to be done by hand.
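To make the first step concrete, here is a small sketch that only reads the raw IL of a property getter through Reflection. It does not decompile anything; turning the bytes into an expression tree is the hard part that Delegate Decompiler does. The IlDump class and the Product model are illustrative assumptions.

```csharp
using System;
using System.Reflection;

public class Product
{
    public bool IsForSale { get; set; }
    public int InStock { get; set; }
    public bool IsAvailable => IsForSale && InStock > 0;
}

public static class IlDump
{
    // Returns the IL bytes of a public property getter.
    public static byte[] GetterIl(Type type, string propertyName)
    {
        MethodInfo getter = type.GetProperty(propertyName).GetGetMethod();
        MethodBody body = getter.GetMethodBody();
        return body.GetILAsByteArray();
    }

    public static void Main()
    {
        byte[] il = GetterIl(typeof(Product), "IsAvailable");
        // Prints the opcodes as hex; the exact bytes depend on the compiler and build settings.
        Console.WriteLine(BitConverter.ToString(il));
    }
}
```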

To avoid processing every property, the author suggests marking properties with the Computed attribute to indicate that the property should be inlined. Before the query runs, we go into IsAvailable, pull out its IL code, convert it into an expression tree, and replace the IsAvailable call with what is written in the getter. The result is a kind of manual inlining.

To make it work, call the special Decompile() method before passing everything to ToList(). It provides a decorator for the original queryable and performs the inlining. Only after that do we pass everything to the query provider, and everything works.

The only problem with this approach is that Delegate Decompiler 0.23.0 is not moving forward, there is no Core support, and the author himself says it is a deep alpha with a lot left unfinished, so you cannot use it in production. We will return to this topic, though.

Boolean operations

So we have solved the problem of duplicating specific conditions.

But conditions often need to be combined using Boolean logic. We had IsForSale and InStock > 0 with an “AND” between them. There may be some other condition, or an “OR” may be required.

In the case of “AND” you can cheat and push all the work onto the query provider, that is, write several Where() calls in a row; it knows how to handle that.

If an “OR” is required, this will not work, because LINQ has no WhereOr(), and the “||” operator is not overloaded for expressions.

Specifications

If you are familiar with Evans' DDD book, or simply know something about the Specification pattern, you know there is a design pattern intended for exactly this. When there are several business rules and you want to combine them with Boolean logic, implement a Specification.

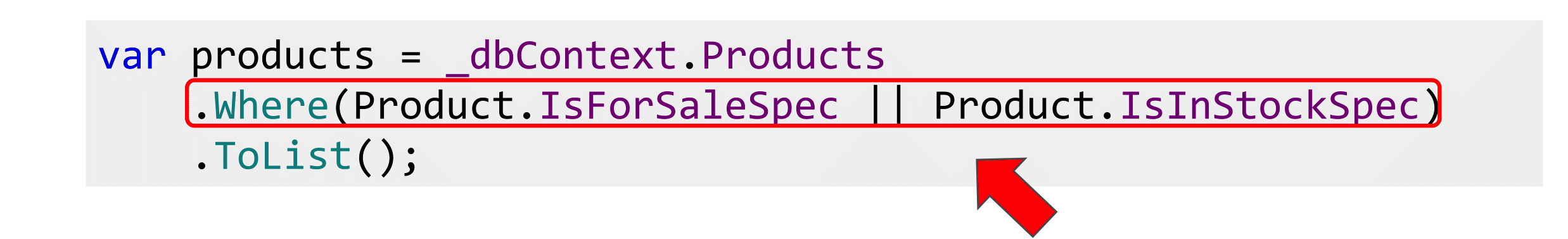

Specification is an old pattern that comes from Java. Java, especially old Java, had no LINQ, so there it is implemented only as an isSatisfiedBy() method, that is, only with delegates, with no mention of expressions. There is an implementation on the Internet called LinqSpecs, which you can see on the slide. I reworked it a little for my own needs, but the idea belongs to the library.

All the Boolean operators are overloaded here, and the true and false operators are overloaded too, so that the “&&” and “||” operators work; without them only the single ampersand will work.

Then we add implicit conversion operators that make the compiler treat a specification as both an expression and a delegate. Anywhere a function expects an Expression<> or Func<> type, you can pass a specification. Since the implicit operator is overloaded, the compiler works it out and substitutes either the Expression or the IsSatisfiedBy property.

IsSatisfiedBy() can be implemented by caching the compiled form of the expression that was passed in. Either way, we start from the Expression, a delegate corresponds to it, and we have added support for Boolean operators. Now all of this can be composed. Business rules can be moved into static specifications, declared once and combined.

public static readonly Spec<Product>
    IsForSaleSpec = new Spec<Product>(x => x.IsForSale);

public static readonly Spec<Product>
    IsInStockSpec = new Spec<Product>(x => x.InStock > 0);

Each business rule is written only once; it is not lost or duplicated anywhere, and rules can be combined. People joining the project can see what rules and conditions you have and understand the domain model.
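The following is a rough sketch of what such a Spec<T> class might look like, reconstructed from the description above rather than copied from LinqSpecs or from the talk's library. The private Combine helper and the Swap visitor are assumptions made to keep the example self-contained.

```csharp
using System;
using System.Linq.Expressions;

public class Spec<T>
{
    private readonly Expression<Func<T, bool>> _expression;
    private Func<T, bool> _compiled; // compiled lazily, once per Spec instance

    public Spec(Expression<Func<T, bool>> expression) => _expression = expression;

    public bool IsSatisfiedBy(T item) => (_compiled ?? (_compiled = _expression.Compile()))(item);

    // A Spec can be passed wherever an expression or a delegate is expected.
    public static implicit operator Expression<Func<T, bool>>(Spec<T> spec) => spec._expression;
    public static implicit operator Func<T, bool>(Spec<T> spec) => spec.IsSatisfiedBy;

    // Both return false, so "a && b" always calls operator & and "a || b" always calls operator |.
    public static bool operator true(Spec<T> spec) => false;
    public static bool operator false(Spec<T> spec) => false;

    public static Spec<T> operator &(Spec<T> a, Spec<T> b) => Combine(a, b, Expression.AndAlso);
    public static Spec<T> operator |(Spec<T> a, Spec<T> b) => Combine(a, b, Expression.OrElse);
    public static Spec<T> operator !(Spec<T> a) => new Spec<T>(
        Expression.Lambda<Func<T, bool>>(Expression.Not(a._expression.Body), a._expression.Parameters));

    private static Spec<T> Combine(Spec<T> a, Spec<T> b,
        Func<Expression, Expression, BinaryExpression> op)
    {
        // Make the right-hand body use the left-hand parameter before joining the bodies.
        ParameterExpression p = a._expression.Parameters[0];
        Expression right = new Swap(b._expression.Parameters[0], p).Visit(b._expression.Body);
        return new Spec<T>(Expression.Lambda<Func<T, bool>>(op(a._expression.Body, right), p));
    }

    private sealed class Swap : ExpressionVisitor
    {
        private readonly ParameterExpression _from, _to;
        public Swap(ParameterExpression from, ParameterExpression to) { _from = from; _to = to; }
        protected override Expression VisitParameter(ParameterExpression node)
            => node == _from ? _to : node;
    }
}

public class Product
{
    public bool IsForSale { get; set; }
    public int InStock { get; set; }
}

public static class Program
{
    public static readonly Spec<Product> IsForSaleSpec = new Spec<Product>(x => x.IsForSale);
    public static readonly Spec<Product> IsInStockSpec = new Spec<Product>(x => x.InStock > 0);

    public static void Main()
    {
        Spec<Product> available = IsForSaleSpec && IsInStockSpec;
        Expression<Func<Product, bool>> asExpression = available; // for query providers
        Func<Product, bool> asDelegate = available;               // for in-memory checks
        Console.WriteLine(asExpression);
        Console.WriteLine(asDelegate(new Product { IsForSale = true, InStock = 1 }));
    }
}
```

Why the parameter has to be swapped before the bodies are joined is exactly the problem the next part of the talk runs into.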

There is one small problem: Expression does not have And(), Or() and Not() methods. These are extension methods, and you have to implement them yourself.

The first implementation attempt was this. There is rather little documentation about expression trees on the Internet, and none of it is detailed. So I simply took Expression, pressed Ctrl + Space, saw OrElse() and read about it. I passed in the two expressions, intending to build a lambda and compile it. It did not work.

The thing is that the first Expression consists of two parts: a parameter and a body. The second also consists of a parameter and a body. OrElse() must be given the bodies of the expressions; it makes no sense to combine the lambdas themselves with “AND” and “OR”, so that cannot work. We fix it, and it fails again.

But whereas last time there was a NotSupportedException saying that the lambda is not supported, now there is a strange story about parameter 1 and parameter 2: “something is wrong, I won’t work”.
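The second failure can be reproduced in a few lines. This is a sketch with hypothetical names (NaiveCombine, TryCompile): the bodies are joined correctly, but the second body still refers to its own parameter object, which is not a parameter of the new lambda.

```csharp
using System;
using System.Linq.Expressions;

public static class NaiveCombine
{
    // Joins the two bodies but keeps only the first lambda's parameter.
    public static Expression<Func<T, bool>> Or<T>(
        Expression<Func<T, bool>> a, Expression<Func<T, bool>> b)
        => Expression.Lambda<Func<T, bool>>(Expression.OrElse(a.Body, b.Body), a.Parameters);

    // Returns "ok" or the message of the exception thrown by Compile().
    public static string TryCompile<T>(Expression<Func<T, bool>> expression)
    {
        try
        {
            expression.Compile();
            return "ok";
        }
        catch (InvalidOperationException ex)
        {
            return ex.Message;
        }
    }

    public static void Main()
    {
        Expression<Func<int, bool>> small = x => x < 10;
        Expression<Func<int, bool>> big = x => x > 100;
        // Both parameters are named x, yet they are two different ParameterExpression objects.
        Console.WriteLine(TryCompile(Or(small, big)));
    }
}
```

Parameters in an expression tree are matched by object identity, not by name, so two lambdas that both say x still have unrelated parameters.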

C# 7.0 in a Nutshell

Then I decided that trial and error would not do, and I needed to understand what was going on. I started googling and found the site of Albahari's book “C# 7.0 in a Nutshell”.

Joseph Albahari, who is also the developer of the popular LINQKit library and of LINQPad, describes exactly this problem: you cannot simply take two expressions and combine them, but if you use the magic Expression.Invoke(), it will work.

Question: what is Expression.Invoke()? Back to Google. It creates an InvocationExpression that applies a delegate or lambda expression to a list of arguments.

If I read this code aloud to you, saying that we take Expression.Invoke() and pass the parameters, it is the same thing the English description says. It does not become any clearer. There is some magic Expression.Invoke() that for some reason solves this problem with parameters. You have to believe; you do not have to understand.
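For reference, a sketch of the Invoke-based combination in the spirit of what Albahari describes. The InvokeCombine class name is made up for this example. The second lambda is not taken apart at all: it is called as a whole, with the first lambda's parameters as arguments.

```csharp
using System;
using System.Linq.Expressions;

public static class InvokeCombine
{
    public static Expression<Func<T, bool>> Or<T>(
        this Expression<Func<T, bool>> a, Expression<Func<T, bool>> b)
    {
        // "b(x)": invoke the second lambda with the first lambda's parameter.
        InvocationExpression invoked = Expression.Invoke(b, a.Parameters);
        return Expression.Lambda<Func<T, bool>>(Expression.OrElse(a.Body, invoked), a.Parameters);
    }

    public static void Main()
    {
        Expression<Func<int, bool>> small = x => x < 10;
        Expression<Func<int, bool>> big = y => y > 100;
        Func<int, bool> smallOrBig = small.Or(big).Compile();
        Console.WriteLine(smallOrBig(5));
        Console.WriteLine(smallOrBig(50));
    }
}
```

This compiles and runs in memory. Whether a given query provider can translate the resulting InvocationExpression is a separate question.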

However, if you try to feed such a combined Expression to EF, it will fail again and say that Expression.Invoke() is not supported. By the way, EF Core has started to support it, but EF 6 does not. Albahari suggests simply writing AsExpandable(), and then it will work.

You can also substitute expressions into subqueries where a delegate is needed. To make the types match, we write Compile(), but if you add AsExpandable(), as Albahari suggests, this Compile() will not actually be executed, and somehow everything will magically be done correctly.

I did not take his word for it and looked into the source. What is the AsExpandable() method? It has a query and a QueryOptimizer. We will leave the second one aside, since it is uninteresting: it simply folds expressions, so if there is 3 + 5, it puts 8.

It is interesting that the Expand() method is called first, then the queryOptimizer, and then everything is passed to the query provider in the form it was rewritten into by Expand().

We open it and see that it is a Visitor, and inside there is a non-obvious Compile() that compiles something else. I will not go into what exactly, although there is a certain sense to it, but we remove one compilation and replace it with another. Great, but it smacks of 80th-level marketing, because the performance impact does not go anywhere.

Looking for alternatives

I decided this would not do and began to look for another solution. And I found one. There is a man named Pete Montgomery, who also writes about this problem and claims that Albahari cheated.

Pete talked to the EF developers, and they taught him how to combine everything without Expression.Invoke(). The idea is very simple: the trouble was with the parameters. When two expressions are combined, there is the parameter of the first expression and the parameter of the second, and they do not match. The bodies were glued together, but the parameters were left hanging in the air. They need to be bound correctly.

To do this, you build a dictionary by walking over the parameters of the expressions (a lambda may have more than one parameter). We build the dictionary and rebind all the parameters of the second expression to the parameters of the first, so that the original parameters are used throughout the whole body that we glued together.

This simple method gets rid of all the trouble with Expression.Invoke(). Moreover, Pete Montgomery's implementation goes even further: it has a Compose() method that lets you combine any expressions.

We take the expressions and join them with AndAlso, and it works without AsExpandable(). This is the implementation used in the Boolean operations.
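A compact sketch of the rebinding technique as described above. It follows the general idea, not Pete Montgomery's exact code; the names ParameterRebinder, PredicateExtensions and Merge are assumptions for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Linq.Expressions;

public sealed class ParameterRebinder : ExpressionVisitor
{
    private readonly Dictionary<ParameterExpression, ParameterExpression> _map;

    private ParameterRebinder(Dictionary<ParameterExpression, ParameterExpression> map) { _map = map; }

    public static Expression Rebind(
        Dictionary<ParameterExpression, ParameterExpression> map, Expression body)
        => new ParameterRebinder(map).Visit(body);

    // Every parameter found in the dictionary is replaced; everything else is left alone.
    protected override Expression VisitParameter(ParameterExpression node)
        => _map.TryGetValue(node, out var replacement) ? replacement : node;
}

public static class PredicateExtensions
{
    private static Expression<T> Merge<T>(Expression<T> first, Expression<T> second,
        Func<Expression, Expression, Expression> merge)
    {
        // Map each parameter of the second lambda to the parameter at the same position in the first.
        var map = new Dictionary<ParameterExpression, ParameterExpression>();
        for (int i = 0; i < second.Parameters.Count; i++)
            map[second.Parameters[i]] = first.Parameters[i];

        Expression secondBody = ParameterRebinder.Rebind(map, second.Body);
        return Expression.Lambda<T>(merge(first.Body, secondBody), first.Parameters);
    }

    public static Expression<Func<T, bool>> And<T>(
        this Expression<Func<T, bool>> first, Expression<Func<T, bool>> second)
        => Merge(first, second, Expression.AndAlso);

    public static Expression<Func<T, bool>> Or<T>(
        this Expression<Func<T, bool>> first, Expression<Func<T, bool>> second)
        => Merge(first, second, Expression.OrElse);
}

public static class Program
{
    public static void Main()
    {
        Expression<Func<int, bool>> small = x => x < 10;
        Expression<Func<int, bool>> big = y => y > 100;
        // The combined tree contains no Invoke nodes, only one parameter and one body.
        Console.WriteLine(small.Or(big));
        Console.WriteLine(new[] { 5, 50, 200 }.AsQueryable().Where(small.Or(big)).Count());
    }
}
```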

Specifications and aggregates

Everything was fine until it turned out that aggregates exist. For those who are not familiar, I will explain: if you have a domain model and you picture all the entities that are connected to each other as trees, then a tree that stands on its own is an aggregate. An order together with its order items is an aggregate, and the order entity is the aggregate root.

Suppose that, in addition to products, there are categories with a business rule declared for them as a specification: a category has a rating that must be over 50, as the marketers said. If we want to use it on categories as it is, all is well.

But if we want to fetch products from a good category, it is bad again, because the types do not match. The specification is for a category, but we need products.

Again, the problem has to be solved somehow. The first option is to replace Select() with SelectMany(). I dislike a few things here. First, I do not know how SelectMany() support is implemented in all the popular query providers. Second, if someone writes a query provider, the first thing they will do is throw a NotImplementedException for SelectMany(). And third, people think of SelectMany() as either something functional or a join; it is usually not associated with a SELECT query.

Composition

I would like to use Select(), not SelectMany().

At about the same time I was reading about category theory and functional composition, and I thought: if there is a specification that goes from a product to bool, a function that goes from a product to its category, and a specification for the category, then by feeding the result of the first function into the category specification we get what we need, a specification for the product. It is exactly how functional composition works, but for expression trees.

Then we could write a Where() method that says we need to go from products to categories and apply the specification to that related entity. To my subjective taste, this syntax looks quite clear.

public static IQueryable<T> Where<T, TParam>(this IQueryable<T> queryable,
    Expression<Func<T, TParam>> prop, Expression<Func<TParam, bool>> where)
{
    return queryable.Where(prop.Compose(where));
}

With the Compose() method, this is also easy to do. We take the input expression that goes from the product to the related entity, combine it with the specification for that entity, and that is all.

Now you can write such a Where(). It will work for an aggregate of any depth: Category has a SuperCategory, and you can chain as many further properties as you like.
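One possible shape of Compose(), sketched under the assumption that it means plain function composition: the body of the property selector is substituted for the parameter of the specification. The ReplaceVisitor helper and the Category and Product classes are illustrative, not taken from the talk's library.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;

public static class ComposeExtensions
{
    // f: A -> B and g: B -> C give x => g(f(x)), built by inlining f's body into g.
    public static Expression<Func<TA, TC>> Compose<TA, TB, TC>(
        this Expression<Func<TA, TB>> f, Expression<Func<TB, TC>> g)
    {
        Expression body = new ReplaceVisitor(g.Parameters[0], f.Body).Visit(g.Body);
        return Expression.Lambda<Func<TA, TC>>(body, f.Parameters);
    }

    public static IQueryable<T> Where<T, TParam>(this IQueryable<T> queryable,
        Expression<Func<T, TParam>> prop, Expression<Func<TParam, bool>> where)
        => queryable.Where(prop.Compose(where));

    private sealed class ReplaceVisitor : ExpressionVisitor
    {
        private readonly ParameterExpression _from;
        private readonly Expression _to;
        public ReplaceVisitor(ParameterExpression from, Expression to) { _from = from; _to = to; }
        protected override Expression VisitParameter(ParameterExpression node)
            => node == _from ? _to : node;
    }
}

public class Category { public int Rating { get; set; } }

public class Product
{
    public string Name { get; set; }
    public Category Category { get; set; }
}

public static class Program
{
    // Business rule declared once, for categories.
    public static readonly Expression<Func<Category, bool>> NiceRating = c => c.Rating > 50;

    public static void Main()
    {
        var products = new[]
        {
            new Product { Name = "A", Category = new Category { Rating = 80 } },
            new Product { Name = "B", Category = new Category { Rating = 10 } },
        }.AsQueryable();

        // The category rule is applied to products through the Category property.
        foreach (var name in products.Where(p => p.Category, NiceRating).Select(p => p.Name))
            Console.WriteLine(name);
    }
}
```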

“Since we have a tool for functional composition, since we can compile it, and since we can build it dynamically, this smells like metaprogramming!” I thought.

Projections

Where can we apply metaprogramming so that there is less code to write?

The first option is projections. Pulling out an entire entity is often too expensive. Most often we send it to the front end, serialized as JSON, and the front end does not need the whole entity with its aggregate. With LINQ you can do this as efficiently as possible by writing the Select() by hand. It is not difficult, but it is tedious.

Instead, I suggest everyone use ProjectToType(). There are at least two libraries that can do this: AutoMapper and Mapster. For some reason, many people know that AutoMapper can map in memory, but not everyone knows that it has Queryable Extensions that also work with Expression and can build a SQL expression. If you still write queries by hand and you use LINQ because you have no super-serious performance constraints, there is no point in doing this by hand; this is work for a machine, not a person.

Filtering

If we can do this with projections, why not do it for filtering?

Here is some code, too. A filter arrives. A lot of business applications look like this: a filter arrives, we add a Where(); another filter arrives, we add another Where(). As many filters as there are, that many times we repeat it. Nothing complicated, but a lot of copy-paste.

If we do something like AutoMapper and write an AutoFilter, with Project and Filter methods that do everything themselves, that would be cool: less code.

There is nothing complicated about it. We take Expression.Property and walk over the DTO and the entity. We find the common properties that have the same name. If the names match, it looks like a filter.

Next, you need to check for null, use a constant to pull the value from the DTO, substitute it into the expression, and add a conversion in case you have int and int? or another Nullable, so that the types match. Then add, for example, Equals(), a filter that checks for equality.

After that, build the lambda and repeat for each property: if there are many of them, combine them with either “AND” or “OR”, depending on how your filter works.
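The steps above can be sketched roughly as follows. This is not the code from the talk: the AutoFilter class, its Filter method and the ProductFilter DTO are hypothetical, the conditions are joined with AND, and same-named properties are assumed to have convertible types.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;
using System.Reflection;

public static class AutoFilter
{
    public static IQueryable<TEntity> Filter<TEntity, TFilter>(
        this IQueryable<TEntity> query, TFilter filter)
    {
        ParameterExpression x = Expression.Parameter(typeof(TEntity), "x");
        Expression body = null;

        foreach (PropertyInfo filterProperty in typeof(TFilter).GetProperties())
        {
            object value = filterProperty.GetValue(filter);
            PropertyInfo entityProperty = typeof(TEntity).GetProperty(filterProperty.Name);
            if (value == null || entityProperty == null)
                continue; // filter field not set, or the entity has no property with that name

            // The constant has the runtime type of the value (e.g. int for an int? field),
            // so it is converted to the entity property type to make both sides match.
            Expression constant = Expression.Convert(
                Expression.Constant(value), entityProperty.PropertyType);
            Expression test = Expression.Equal(Expression.Property(x, entityProperty), constant);

            body = body == null ? test : Expression.AndAlso(body, test);
        }

        return body == null
            ? query
            : query.Where(Expression.Lambda<Func<TEntity, bool>>(body, x));
    }
}

public class Product
{
    public string Name { get; set; }
    public decimal Price { get; set; }
}

public class ProductFilter
{
    public string Name { get; set; }
    public decimal? Price { get; set; }
}

public static class Program
{
    public static void Main()
    {
        var products = new[]
        {
            new Product { Name = "Snickers", Price = 100m },
            new Product { Name = "Mars", Price = 100m },
            new Product { Name = "Subaru Impreza", Price = 100500m },
        }.AsQueryable();

        foreach (var p in products.Filter(new ProductFilter { Price = 100m }))
            Console.WriteLine(p.Name);
    }
}
```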

The same can be done for sorting, but it is a bit more complicated, because the OrderBy() method has two generic parameters, so you have to fill them in by hand: use Reflection to construct the OrderBy() method with the two generic arguments, the type of the entity and the type of the property being sorted by. All in all, this can also be done, and it is not hard.
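A sketch of that reflection step, with a hypothetical OrderByProperty extension method. It looks up the two-parameter Queryable.OrderBy overload and closes it over the entity type and the property type.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;
using System.Reflection;

public static class AutoSort
{
    public static IQueryable<T> OrderByProperty<T>(this IQueryable<T> query, string propertyName)
    {
        ParameterExpression x = Expression.Parameter(typeof(T), "x");
        MemberExpression property = Expression.Property(x, propertyName);
        // Non-generic Lambda() infers the delegate type Func<T, TKey> from the body.
        LambdaExpression keySelector = Expression.Lambda(property, x);

        // OrderBy<TSource, TKey>(source, keySelector): the overload without a comparer.
        MethodInfo orderBy = typeof(Queryable).GetMethods()
            .Single(m => m.Name == nameof(Queryable.OrderBy) && m.GetParameters().Length == 2)
            .MakeGenericMethod(typeof(T), property.Type);

        return (IQueryable<T>)orderBy.Invoke(null, new object[] { query, keySelector });
    }
}

public class Product
{
    public string Name { get; set; }
    public decimal Price { get; set; }
}

public static class Program
{
    public static void Main()
    {
        var products = new[]
        {
            new Product { Name = "Snickers", Price = 100m },
            new Product { Name = "Mars", Price = 90m },
        }.AsQueryable();

        foreach (var p in products.OrderByProperty("Name"))
            Console.WriteLine(p.Name);
    }
}
```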

The question arises: where do we put the Where(), at the entity level, where the specifications were declared, or after the projection? It will work in both places.

Both are right, because specifications are by definition business rules, and we have to cherish them and make no mistakes with them. That is the domain layer. Filters are more about the UI, which means they filter by DTO. Therefore you can have two Where() calls. The bigger question is how well the query provider will cope with this, but I believe that ORM solutions write bad SQL anyway, and this will not make it much worse. If that is very important to you, then this story is not for you.

As they say, it's better to see once than hear a hundred times.

Now the store has three products: “Snickers”, a Subaru Impreza and “Mars”. A strange shop. Let's try to find “Snickers”. There it is. Let's see what costs a hundred rubles. Also “Snickers”. And for 500? Let's zoom in: there is nothing. And for 100,500 there is the Subaru Impreza. Great, and the same goes for sorting.

We sort alphabetically and by price. There is exactly as much code as there was. These filters work for any class you like. If you search by name, Subaru is found as well. But I had Equals() in the presentation. How is that? The thing is that the code here and in the presentation is a bit different. I commented out the line with Equals() and added some special street magic. If the property is of type String, we need to call not Equals() but StartsWith(), which I also obtained. So a different filter is built for strings.
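The type-dependent choice might look like the sketch below. FilterConditions and BuildTest are made-up names, and the value is assumed to be non-null; only the branch between StartsWith() for strings and equality for everything else reflects the talk.

```csharp
using System;
using System.Linq.Expressions;
using System.Reflection;

public static class FilterConditions
{
    public static Expression<Func<T, bool>> BuildTest<T>(string propertyName, object value)
    {
        ParameterExpression x = Expression.Parameter(typeof(T), "x");
        MemberExpression property = Expression.Property(x, propertyName);
        Expression constant = Expression.Constant(value);
        Expression body;

        if (property.Type == typeof(string))
        {
            // Strings are matched by prefix: x.Name.StartsWith(value)
            MethodInfo startsWith = typeof(string).GetMethod(
                nameof(string.StartsWith), new[] { typeof(string) });
            body = Expression.Call(property, startsWith, constant);
        }
        else
        {
            // Everything else is matched by equality, after converting the value to the property type.
            body = Expression.Equal(property, Expression.Convert(constant, property.Type));
        }

        return Expression.Lambda<Func<T, bool>>(body, x);
    }
}

public class Product
{
    public string Name { get; set; }
    public decimal Price { get; set; }
}

public static class Program
{
    public static void Main()
    {
        var subaru = new Product { Name = "Subaru Impreza", Price = 100500m };
        Console.WriteLine(FilterConditions.BuildTest<Product>("Name", "Sub").Compile()(subaru));
    }
}
```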

This means that here you can press Ctrl + Shift + R, extract a method and write a switch instead of an if, or even implement the Strategy pattern and go completely wild. You can implement any filter behavior you want. It all depends on the types you work with. Most importantly, the filters will work the same way everywhere.

You can agree that the filters in all UI elements should work like this: strings are searched one way, money another. All of this is agreed on and written once, everything will be done correctly in different interfaces, and no other developer will break it, because this code lives not at the application level but in an external library or in your core.

Validation

In addition to filtering and projection, you can do validation. The idea came from the tcomb-validation JS library. tcomb is short for Type Combinators, and it is based on a type system and so-called refinements.

// null and undefined
validate('a', t.Nil).isValid(); // => false
validate(null, t.Nil).isValid(); // => true
validate(undefined, t.Nil).isValid(); // => true

// strings
validate(1, t.String).isValid(); // => false
validate('a', t.String).isValid(); // => true

// numbers
validate('a', t.Number).isValid(); // => false
validate(1, t.Number).isValid(); // => true

First, primitives are declared that correspond to all the JS types, plus the additional type Nil, which corresponds to either undefined or null.

// a predicate is a function with signature: (x) -> boolean
var predicate = function (x) { return x >= 0; };

// a positive number
var Positive = t.refinement(t.Number, predicate);

validate(-1, Positive).isValid(); // => false
validate(1, Positive).isValid(); // => true

Then it gets interesting. Each type can be refined with a predicate. If we want numbers greater than or equal to zero, we declare the predicate x >= 0 and validate against the Positive type. From such building blocks you can assemble any validation you need. You have probably noticed that there is a lambda expression here, too.

Challenge accepted. We take the same refinement and write it in C#: we write an IsValid() method, compile the Expression, and execute it. Now we can perform validation.
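A refinement of this kind could be sketched as below. The Refinement<T> base class and its members are guesses at the shape of the idea, not the library's actual types; only the AdultRefinement name, the x >= 18 rule and the error message come from the talk.

```csharp
using System;
using System.Linq.Expressions;

public class Refinement<T>
{
    private readonly Func<T, bool> _compiled;

    // The expression is kept so that it can be inspected or translated later.
    public Expression<Func<T, bool>> Predicate { get; }
    public string ErrorMessage { get; }

    public Refinement(Expression<Func<T, bool>> predicate, string errorMessage)
    {
        Predicate = predicate;
        ErrorMessage = errorMessage;
        _compiled = predicate.Compile(); // compiled once, when the refinement is created
    }

    public bool IsValid(T value) => _compiled(value);
}

public sealed class AdultRefinement : Refinement<int>
{
    public AdultRefinement() : base(x => x >= 18, "For adults only") { }
}

public static class Program
{
    public static void Main()
    {
        var adult = new AdultRefinement();
        Console.WriteLine(adult.IsValid(17) ? "valid" : adult.ErrorMessage);
    }
}
```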

public class RefinementAttribute : ValidationAttribute
{
    public IValidator<object> Refinement { get; }

    public RefinementAttribute(Type refinmentType)
    {
        Refinement = (IValidator<object>)
            Activator.CreateInstance(refinmentType);
    }

    public override bool IsValid(object value)
        => Refinement.Validate(value).IsValid();
}

We integrate with the standard DataAnnotations system in ASP.NET MVC so that everything works out of the box. We declare RefinementAttribute and pass a type to its constructor. The refinement is generic, so we have to pass a Type here, because, at the time of this talk, you unfortunately could not declare a generic attribute in .NET.

So we mark the user class with a refinement: AdultRefinement, meaning that the age is 18 or over.

To make it all good, let's make validation on the client and the server the same. Supporters of Node.js suggest writing both the back end and the front end in JS. Well, I will write both the back end and the front end in C#, nothing terrible, and simply transpile it to JS. JavaScript developers write in their JSX and ES6 and transpile it to JavaScript. Why can't we? We write a Visitor, go through the operators we need and emit JavaScript.
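A deliberately tiny sketch of such a Visitor, assuming only comparison and logical operators over a single parameter with numeric or boolean constants. JsTranslator is a hypothetical name; anything outside that subset (method calls, captured variables, strings, regular expressions) throws NotSupportedException instead of being translated.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Linq.Expressions;
using System.Text;

public sealed class JsTranslator : ExpressionVisitor
{
    private static readonly Dictionary<ExpressionType, string> Operators =
        new Dictionary<ExpressionType, string>
        {
            { ExpressionType.GreaterThan, ">" }, { ExpressionType.GreaterThanOrEqual, ">=" },
            { ExpressionType.LessThan, "<" }, { ExpressionType.LessThanOrEqual, "<=" },
            { ExpressionType.Equal, "===" }, { ExpressionType.NotEqual, "!==" },
            { ExpressionType.AndAlso, "&&" }, { ExpressionType.OrElse, "||" },
        };

    private readonly StringBuilder _js = new StringBuilder();

    public static string Translate<T>(Expression<Func<T, bool>> predicate)
    {
        var translator = new JsTranslator();
        translator._js.Append(predicate.Parameters[0].Name).Append(" => ");
        translator.Visit(predicate.Body);
        return translator._js.ToString();
    }

    protected override Expression VisitBinary(BinaryExpression node)
    {
        if (!Operators.TryGetValue(node.NodeType, out var op))
            throw new NotSupportedException(node.NodeType.ToString());

        _js.Append("(");
        Visit(node.Left);
        _js.Append(" ").Append(op).Append(" ");
        Visit(node.Right);
        _js.Append(")");
        return node;
    }

    protected override Expression VisitParameter(ParameterExpression node)
    {
        _js.Append(node.Name);
        return node;
    }

    protected override Expression VisitConstant(ConstantExpression node)
    {
        switch (node.Value)
        {
            case bool b: _js.Append(b ? "true" : "false"); break;
            case int i: _js.Append(i.ToString(CultureInfo.InvariantCulture)); break;
            case double d: _js.Append(d.ToString(CultureInfo.InvariantCulture)); break;
            default: throw new NotSupportedException("Unsupported constant: " + node.Type);
        }
        return node;
    }
}

public static class Program
{
    public static void Main()
    {
        Console.WriteLine(JsTranslator.Translate<int>(x => x >= 18)); // prints "x => (x >= 18)"
    }
}
```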

A separate, frequent validation case is regular expressions; they need to be handled too. If you have a regexp, take a StringBuilder and assemble the regexp. Here I used two exclamation marks: since JS is a dynamically typed language, this expression will always be converted to bool, so everything is fine with the type. Let's see what it looks like.

{
    predicate: "x => (x >= 18)",
    errorMessage: "For adults only"
}

Here is our refinement as it comes from the back end: the predicate as a string, since JSON has no lambdas, and the errorMessage “For adults only”. Let's try to fill in the form. It does not pass. Let's look at how it is done.

This is React. We request the Expression and the errorMessage from the UserRefinment() method on the back end, construct a refinement over number, and use eval to get a lambda. If I change it and remove the type restriction, replacing it with a plain number, validation on the JS side goes away. We enter one and submit. I do not know whether it is visible or not, but false is displayed here.

The code is an alert. On onSubmit we alert what came from the back end. And the back end has this simple code.

We simply return Ok(ModelState.IsValid) for the User class, which we receive from the JavaScript form. Here is the Refinement attribute.

using …

namespace DemoApp.Core
{
    public class User : HasNameBase
    {
        [Refinement(typeof(AdultRefinement))]
        public int Age { get; set; }
    }
}

That is, the validation declared in this lambda works on the back end, and we also translate it into JavaScript. So we write lambda expressions in C#, and the code is executed both on the server and on the client. That is our answer to Node.js: we can do it too.

Testing

Usually it is the team lead who worries most about the number of errors in the code. Those who write unit tests know the Moq library. If you do not want to write a mock by hand or declare a class, there is Moq with its fluent syntax. You describe how you want the mock to behave and hand it to the application under test.

These lambdas in Moq are also expressions, not delegates. Moq walks the expression trees, applies its logic and then feeds the result to Castle.DynamicProxy, which creates the necessary classes at runtime. But we can do that, too.

A friend of mine recently asked whether there is something like WCF in .NET Core. I replied that there is Web API. He wanted to generate a proxy for Web API, as WCF does from WSDL. Web API only has Swagger. But Swagger is just text, and my friend did not want to keep track of every API change and what it breaks. With WCF, he attached the WSDL, and if the API contract changed, compilation broke.

This makes sense: nobody wants to search by hand, and the compiler can help. By analogy with Moq, you can declare a generic GetResponse<>() method parameterized by your ProductController, and the lambda passed to this method takes the controller as its parameter. That is, when you start writing the lambda, you press Ctrl + Space and see all the methods this controller has, provided you have the library, the DLL with the code. There is IntelliSense, and you write all this as if you were calling the controller.

Then, like Moq, we do not call it but simply build an expression tree, walk it and pull all the routing information from the API configuration. Instead of doing something with the controller, which we cannot execute because it has to run on the server, we simply make the POST or GET request we need, and on the way back we deserialize the response, because thanks to IntelliSense and the expression tree we know all the return types. So we write code about controllers, but in fact we make web requests.
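The first half of that idea, reading a controller call out of a lambda without executing it, can be sketched like this. ApiCall.Describe is a hypothetical helper and ProductController here is a stand-in; the routing lookup and the actual HTTP request are left out.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;

public static class ApiCall
{
    public static (string Controller, string Action, object[] Args) Describe<TController, TResult>(
        Expression<Func<TController, TResult>> call)
    {
        if (!(call.Body is MethodCallExpression method))
            throw new ArgumentException("Expected a single controller method call.", nameof(call));

        // Each argument is evaluated locally; this assumes arguments are constants or
        // captured variables and do not refer to the controller parameter itself.
        object[] args = method.Arguments
            .Select(a => Expression.Lambda(a).Compile().DynamicInvoke())
            .ToArray();

        string controller = typeof(TController).Name.Replace("Controller", "");
        return (controller, method.Method.Name, args);
    }
}

public class ProductController
{
    // Never executed on the client; only its signature is used.
    public string GetById(int id) => throw new NotSupportedException("Runs on the server.");
}

public static class Program
{
    public static void Main()
    {
        int id = 42;
        var info = ApiCall.Describe((ProductController c) => c.GetById(id));
        Console.WriteLine($"{info.Controller}.{info.Action}({string.Join(", ", info.Args)})");
    }
}
```

From the controller name, the action name and the argument values, a client can then pick the route and build the request, while TResult tells it what type to deserialize the response into.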

Reflection Optimization

As for metaprogramming, it overlaps heavily with Reflection.

We know that Reflection is slow, and we would like to avoid it. Expressions have good use cases here too. The first is Activator.CreateInstance. You do not need to use it at all, because there is Expression.New(), which you can simply put into a lambda, compile, and then use to construct objects.
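A minimal sketch of that replacement, assuming the type has a public parameterless constructor. The Factory<T> class is a made-up name; the compiled delegate is stored in a static field so that compilation happens once per type.

```csharp
using System;
using System.Collections.Generic;
using System.Linq.Expressions;

public static class Factory<T>
{
    // () => new T(), compiled once for each closed generic type.
    public static readonly Func<T> Create =
        Expression.Lambda<Func<T>>(Expression.New(typeof(T))).Compile();
}

public static class Program
{
    public static void Main()
    {
        List<int> list = Factory<List<int>>.Create();
        list.Add(1);
        Console.WriteLine(list.Count);
    }
}
```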

I borrowed this slide from the wonderful speaker and musician Vagif. He ran a benchmark for his blog. Here is the Activator, the Communism Peak of this chart; you can see how hard it strains to do everything. Constructor_Invoke takes about half as long. And on the left are New and the compiled lambda. There is a slight difference between the two because the lambda is a delegate rather than a direct constructor call, but the choice is obvious: it is clearly much better.

The same can be done with getters and setters.

It is done very simply. If for some reason you are not satisfied with Marc Gravell's FastMember or with Fast Reflect and do not want to bring in the dependency, you can do the same yourself. The only difficulty is that you need to keep track of all these compilations, and store and warm up the cache somewhere. That is, if there are many of them, you need to compile them once at startup.
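For illustration, a getter and a setter compiled from expressions. The Accessors class is hypothetical; the sketch assumes a reference type with a public read-write property whose type matches TValue exactly, and it leaves the caching of the resulting delegates to the caller.

```csharp
using System;
using System.Linq.Expressions;

public static class Accessors
{
    // target => target.Property
    public static Func<T, TValue> Getter<T, TValue>(string propertyName)
    {
        ParameterExpression target = Expression.Parameter(typeof(T), "target");
        MemberExpression property = Expression.Property(target, propertyName);
        return Expression.Lambda<Func<T, TValue>>(property, target).Compile();
    }

    // (target, value) => target.Property = value
    public static Action<T, TValue> Setter<T, TValue>(string propertyName)
    {
        ParameterExpression target = Expression.Parameter(typeof(T), "target");
        ParameterExpression value = Expression.Parameter(typeof(TValue), "value");
        BinaryExpression assign = Expression.Assign(Expression.Property(target, propertyName), value);
        return Expression.Lambda<Action<T, TValue>>(assign, target, value).Compile();
    }
}

public class Product
{
    public int InStock { get; set; }
}

public static class Program
{
    // Compiled once and reused; compiling on every call would defeat the purpose.
    private static readonly Func<Product, int> GetInStock = Accessors.Getter<Product, int>("InStock");
    private static readonly Action<Product, int> SetInStock = Accessors.Setter<Product, int>("InStock");

    public static void Main()
    {
        var product = new Product();
        SetInStock(product, 5);
        Console.WriteLine(GetInStock(product));
    }
}
```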

Once we have constructors, getters and setters, only behavior, that is methods, remains. They can also be compiled into delegates, and you simply end up with a big zoo of delegates that you need to be able to manage. Knowing everything I have told you, someone may get the idea that if there are many delegates and many expressions, maybe there is room for what is called a DSL, Little Languages, the Interpreter pattern, or a free monad.

These are all the same thing: for some task we come up with a set of commands and write our own interpreter for it. That is, inside the application we write a compiler or interpreter that knows how to execute these commands. This is exactly how it is done in the DLR, in the part that works with IronPython and IronRuby. Expression trees are used there to execute dynamic code on the CLR. The same can be done in business applications, but so far we have not seen such a need, so it remains out of scope.

Results

In conclusion, I want to talk about the conclusions we came to after implementing and testing all this. As I said, it happened on different projects. We do not use everything I have described everywhere, but where it was needed, some of these things were used.

On November 22-23, Moscow will host DotNext 2018 Moscow. A preliminary schedule of talks has already been posted on the site, and tickets can be purchased there as well (ticket prices will increase from October 1).