Working with MS SQL from Powershell on Linux

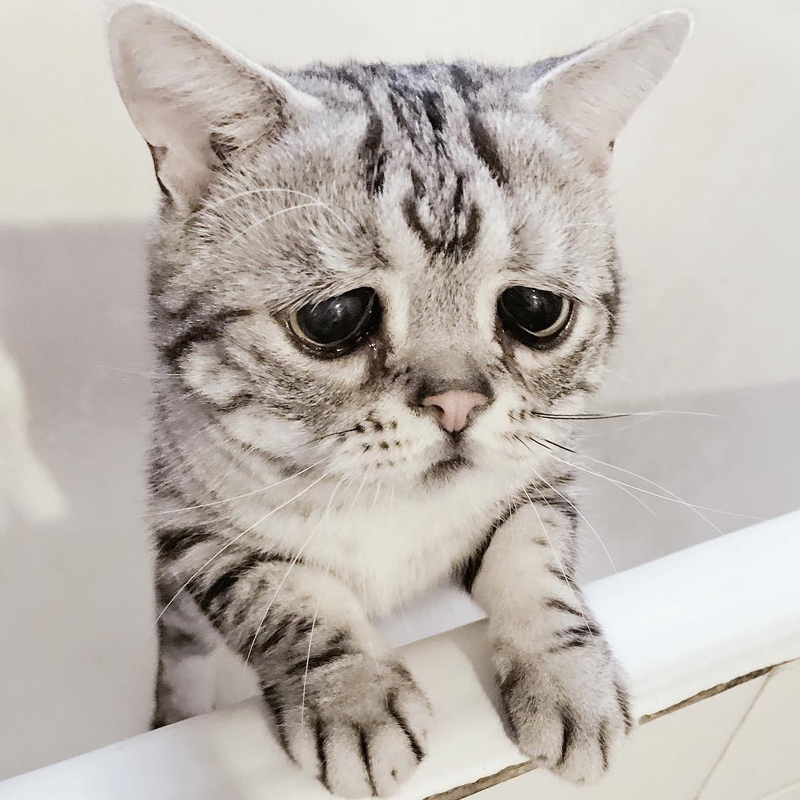

This article is purely practical and is dedicated to my sad story.

Preparing for Zero Touch PROD for RDS (MS SQL), about which all our ears were buzzing, I made a presentation (POC - Proof Of Concept) of automation: a set of powershell scripts. After the presentation, when the loud, prolonged applause died down, turning into an unceasing ovation, I was told that all this is good, but only for ideological reasons we all have Jenkins slaves running under Linux!

Is that possible? To take such a warm, tube DBA from under Windows and put it in the very heat of powershell under Linux? Isn't that cruel?

I had to dive into this strange combination of technology. Of course, all my 30+ scripts stopped working. To my surprise, in one working day I managed to fix everything. I am writing in hot pursuit. So, what pitfalls can you encounter when porting powershell scripts from Windows to Linux?

sqlcmd vs Invoke-SqlCmd

Let me remind you of the main difference between them. The good old sqlcmd utility also works under Linux, with almost identical functionality. To execute the query, we pass -Q, the input file as -i, and the output -o. Here are just the file names, of course, made case-sensitive. If you use -i, then write in the file at the end:

GO

EXITIf there is no EXIT at the end, then sqlcmd will go to wait for input, and if there is no GO before EXIT , the last command will not work. All output, selects, messages, print, etc., gets to the output file. Invoke-SqlCmd returns the result as a DataSet, DataTables or DataRows. Therefore, if you can process the result of a simple select through sqlcmd , parsing its output, then outputting something complicated is almost impossible: there is Invoke-SqlCmd for this . But this team has its own jokes:

- If you pass the file to it via -InputFile , then EXIT is not needed, moreover, it gives a syntax error

- -OutputFile not, the command returns you the result as an object

- There are two syntaxes for specifying a server: -ServerInstance -Username -Password -Database and through -ConnectionString . Oddly enough, in the first case, you cannot specify a port other than 1433.

- text output, of type PRINT, which is “caught” by sqlcmd in an elementary way , is an issue for Invoke-SqlCmd

- And most importantly: most likely this cmdlet is not in your Linux!

And this is the main problem. Only in March this cmdlet became available for non-windows platforms , and finally we can move forward!

Variable substitution

Sqlcmd has variable substitution with -v, like so:

# $conn содержит начало команды sqlcmd

$cmd = $conn + " -i D:\apps\SlaveJobs\KillSpid.sql -o killspid.res

-v spid =`"" + $spid + "`" -v age =`"" + $age + "`""

Invoke-Expression $cmdIn the SQL script, we use substitutions:

set @spid=$(spid)

set @age=$(age)So here. In * nix , variable substitutions do not work . The -v option is ignored. We Invoke-SqlCmd ignored -Variables . Although the parameter that sets the variables themselves is ignored, the substitutions themselves work - you can use any variables from Shell. However, I was offended by the variables and decided not to depend on them at all, and I acted roughly and primitively, since the scripts for sql are short:

# prepend the parameters

"declare @age int, @spid int" | Add-Content "q.sql"

"set @spid=" + $spid | Add-Content "q.sql"

"set @age=" + $age | Add-Content "q.sql"

foreach ($line in Get-Content "Sqlserver/Automation/KillSpid.sql") {

$line | Add-Content "q.sql"

}

$cmd = "/opt/mssql-tools/bin/" + $conn + " -i q.sql -o res.log"This, as you understand, is a test from the Unix version.

Upload files

In the Windows version, any operation I had was accompanied by an audit: we performed sqlcmd, got some kind of abuse in the output file, attached this file to the audit plate. Fortunately, SQL server worked on the same server as Jenkins, it was done something like this:

CREATE procedure AuditUpload

@id int, @filename varchar(256)

as

set nocount on

declare @sql varchar(max)

CREATE TABLE #multi (filer NVARCHAR(MAX))

set @sql='BULK INSERT #multi FROM '''+@filename

+''' WITH (ROWTERMINATOR = ''\0'',CODEPAGE = ''ACP'')'

exec (@sql)

select @sql=filer from #multi

update JenkinsAudit set multiliner=@sql where ID=@id

returnThus, we swallow the entire BCP file, and shove the audit table in the nvarchar (max) field. Of course, this whole system crumbled, because instead of SQL server I got RDS, and BULK INSERT doesn’t work on \\ UNC because of an attempt to take an exclusive lock to a file, and with RDS this is initially doomed. So I decided to change the design of the system, storing the audit line by line:

CREATE TABLE AuditOut (

ID int NULL,

TextLine nvarchar(max) NULL,

n int IDENTITY(1,1) PRIMARY KEY

)And write to this table like this:

function WriteAudit([string]$Filename, [string]$ConnStr,

[string]$Tabname, [string]$Jobname)

{

# get $lastid of the last execution -- проскипано для статьи

#create grid and populate it with data from file

$audit = Get-Content $Filename

$DT = new-object Data.DataTable

$COL1 = new-object Data.DataColumn;

$COL1.ColumnName = "ID";

$COL1.DataType = [System.Type]::GetType("System.Int32")

$COL2 = new-object Data.DataColumn;

$COL2.ColumnName = "TextLine";

$COL2.DataType = [System.Type]::GetType("System.String")

$DT.Columns.Add($COL1)

$DT.Columns.Add($COL2)

foreach ($line in $audit)

{

$DR = $dt.NewRow()

$DR.Item("ID") = $lastid

$DR.Item("TextLine") = $line

$DT.Rows.Add($DR)

}

# write it to table

$conn=new-object System.Data.SqlClient.SQLConnection

$conn.ConnectionString = $ConnStr

$conn.Open()

$bulkCopy = new-object ("Data.SqlClient.SqlBulkCopy") $ConnStr

$bulkCopy.DestinationTableName = $Tabname

$bulkCopy.BatchSize = 50000

$bulkCopy.BulkCopyTimeout = 0

$bulkCopy.WriteToServer($DT)

$conn.Close()

}

To select content, select by ID, choosing n (identity) in order.

In the next article I will dwell in more detail on how this all interacts with Jenkins.