PIFR: a method for generating a 3D face mask regardless of the face's rotation angle

This is a translation of the article "PIFR: Pose Invariant 3D Face Reconstruction".

In many real-world applications, including face detection and recognition and the generation of 3D emoticons and stickers, the geometry of the face must be recovered from flat images. This task remains difficult, especially when the face is turned so far that much of the information about it is not visible.

Jiang and Wu from Jiangnan University (China) and Kittler from the University of Surrey (United Kingdom) propose a new algorithm for 3D face reconstruction, PIFR, which significantly increases reconstruction accuracy even in difficult poses.

But let's first take a brief look at previous work on 3D masks and face reconstruction.

State-of-the-art research

The authors mention four publicly available 3D morphable face models:

- the Basel Face Model (BFM), proposed by the University of Basel;

- a 3DMM developed by Brunton et al.;

- the multi-resolution 3D face model provided by the University of Surrey (UK);

- the Large Scale Facial Model (LSFM), created by Imperial College.

The article uses the BFM, which is the most popular.

There are several approaches to reconstructing a 3D model from a flat image, including:

- the cascaded regression method;

- a combination of facial landmark detection and 3D face reconstruction, with feature indexing used to build a tree-based regression model;

- a method for normalizing the expression and pose of the face;

- an extension of 3DMM (E-3DMM) that accounts for changes in facial expression;

- weighted landmark 3DMM fitting based on a traditional regression method.

The proposed method: PIFR

The article by Jiang, Wu and Kittler proposes a new algorithm, Pose-Invariant 3D Face Reconstruction (PIFR), based on the 3DMM method.

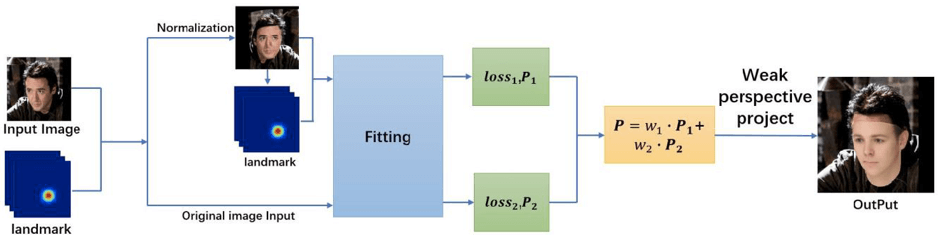

First, the authors propose generating a frontal image by normalizing the single input face image. This step recovers additional identity information about the face.

The next step is to use a weighted sum of the 3D features of the two images, the frontal and the original. This not only preserves the pose of the original image but also enriches the identity information.
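As a rough illustration of this weighted sum, the sketch below blends two 3DMM parameter vectors, one fitted to the original image and one to the generated frontal image. The function name `fuse_shape_params`, the choice of fusing shape coefficients, and the default weight of 0.5 are assumptions made for illustration; the article does not state which features are fused or which weights the authors use.

```python
import numpy as np

def fuse_shape_params(alpha_original, alpha_frontal, w_frontal=0.5):
    """Blend two 3DMM coefficient vectors with a convex weight.

    alpha_original: coefficients fitted to the input (posed) image.
    alpha_frontal:  coefficients fitted to the generated frontal image.
    w_frontal:      hypothetical weight of the frontal fit, in [0, 1].
    """
    if not 0.0 <= w_frontal <= 1.0:
        raise ValueError("w_frontal must lie in [0, 1]")
    alpha_original = np.asarray(alpha_original, dtype=float)
    alpha_frontal = np.asarray(alpha_frontal, dtype=float)
    if alpha_original.shape != alpha_frontal.shape:
        raise ValueError("both coefficient vectors must have the same shape")
    # Convex combination: the result stays between the two fits.
    return w_frontal * alpha_frontal + (1.0 - w_frontal) * alpha_original
```

With `w_frontal=0` the result is the fit from the original image alone; larger values pull the reconstruction toward the identity information recovered from the frontal view.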

The scheme of the proposed approach:

Experiments show that the PIFR algorithm significantly improves 3D face reconstruction performance compared to previous methods, especially in difficult poses.

Consider the proposed model in more detail.

Method Description

The PIFR method relies heavily on the 3DMM fitting process, which can be expressed as minimizing the error between the 2D projections of the 3D model points and their ground-truth coordinates in the image. However, the face produced by the 3D model has about 50,000 vertices, so iterating over all of them leads to slow and inefficient convergence.
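In a common formulation of 3DMM fitting, this objective can be written as follows. The notation is an assumption introduced here for illustration (the article gives no formulas): $\bar{S}$ is the mean shape, $A_{id}$ and $A_{exp}$ are identity and expression bases with coefficients $\alpha$ and $\beta$, $s$, $R$ and $t$ are scale, rotation and 2D translation, $P$ is an orthographic projection, and $x_i$ is the observed 2D position of point $i$.

$$
S(\alpha, \beta) = \bar{S} + A_{id}\,\alpha + A_{exp}\,\beta
$$

$$
\min_{s, R, t, \alpha, \beta} \; \sum_{i=1}^{N} \left\| x_i - \left( s\,P\,R\,S_i(\alpha, \beta) + t \right) \right\|_2^2,
\qquad
P = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \end{pmatrix}
$$

When the sum runs over every vertex, $N$ is about 50,000, which is what makes the iterative fit slow.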

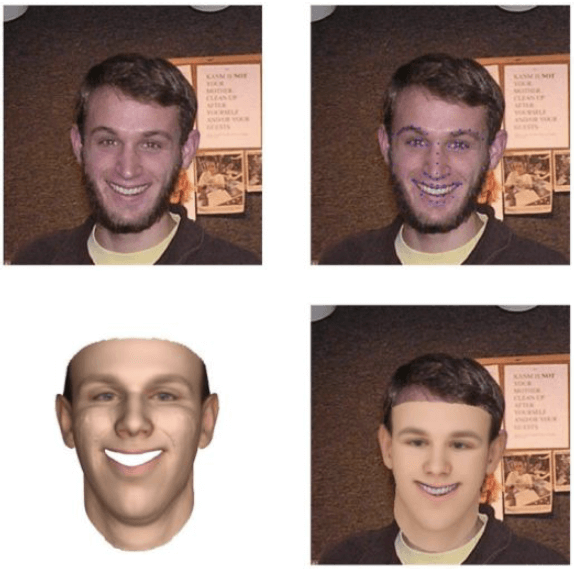

To overcome this problem, the researchers suggest using landmarks (for example, the centers of the eyes, the corners of the mouth and the tip of the nose) as the ground truth in the fitting process. Specifically, weighted landmark 3DMM fitting is used.

Top row: original image and landmarks. Bottom row: 3D face model and its alignment on the 2D image.

The next task is to reconstruct a 3D face in a large pose. To solve this problem, the researchers use the High-Fidelity Pose and Expression Normalization (HPEN) method, but normalize only the pose, not the facial expression. In addition, Poisson editing is used to recover the area of the face that is occluded because of the viewing angle.
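A minimal sketch of weighted landmark fitting, assuming the pose (projection and translation) is already known and only the shape coefficients are solved for. The function name, argument layout and ridge term `reg` are hypothetical and are not taken from the paper; the per-landmark weights would typically reflect how reliable each landmark is.

```python
import numpy as np

def fit_shape_weighted(landmarks_2d, mean_shape, basis, proj, trans, weights, reg=1e-6):
    """Weighted least-squares fit of shape coefficients to 2D landmarks.

    landmarks_2d: (N, 2) observed landmark positions.
    mean_shape:   (N, 3) mean 3D positions of the landmark vertices.
    basis:        (N, 3, K) shape basis restricted to those vertices.
    proj:         (2, 3) projection matrix (scale times two rotation rows).
    trans:        (2,) image-plane translation.
    weights:      (N,) non-negative weight per landmark.
    reg:          small ridge term that keeps the system well conditioned.
    """
    n, _, k = basis.shape
    # Project each basis vector into the image plane: (N, 2, K).
    a = np.einsum("ij,njk->nik", proj, basis)
    # Residual that the shape coefficients still have to explain: (N, 2).
    b = landmarks_2d - mean_shape @ proj.T - trans
    # Scaling rows by sqrt(w) turns ordinary least squares into weighted.
    sw = np.sqrt(np.asarray(weights, dtype=float))[:, None]
    a_w = (a * sw[:, :, None]).reshape(2 * n, k)
    b_w = (b * sw).reshape(2 * n)
    lhs = a_w.T @ a_w + reg * np.eye(k)
    rhs = a_w.T @ b_w
    return np.linalg.solve(lhs, rhs)
```

Because only a few dozen landmarks enter the system instead of tens of thousands of vertices, each solve is a small K-by-K linear system.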
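To give an idea of what Poisson editing does, here is a generic grayscale sketch, not the authors' implementation. It fills a masked region so that its gradients follow a `source` image while its boundary matches the `target` image. Which image supplies the gradients for the occluded side of the face is an assumption left open here; the function name and the Jacobi solver are illustrative choices, and the mask must not touch the image border.

```python
import numpy as np

def poisson_fill(target, source, mask, iters=500):
    """Fill target[mask] so its gradients follow source (Jacobi iterations).

    target, source: 2D arrays of equal shape (grayscale images).
    mask:           boolean array, True where pixels are to be filled;
                    it must not touch the image border.
    """
    f = target.astype(float).copy()
    g = source.astype(float)
    # Discrete Laplacian of the source acts as the guidance term.
    lap_g = (4.0 * g
             - np.roll(g, 1, axis=0) - np.roll(g, -1, axis=0)
             - np.roll(g, 1, axis=1) - np.roll(g, -1, axis=1))
    f[mask] = g[mask]  # initial guess inside the region
    for _ in range(iters):
        neighbours = (np.roll(f, 1, axis=0) + np.roll(f, -1, axis=0)
                      + np.roll(f, 1, axis=1) + np.roll(f, -1, axis=1))
        # Solve 4*f_p - sum(neighbours) = lap_g_p for f_p at masked pixels.
        f_new = (neighbours + lap_g) / 4.0
        f[mask] = f_new[mask]
    return f
```

Pixels outside the mask are never modified, so the filled region blends into the surrounding face without a visible seam in intensity.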

Performance comparison with other methods

The effectiveness of the PIFR method was evaluated for face reconstruction:

- in small and medium poses;

- in large poses;

- in extreme poses (deviation angles of ±90°).

For this, the researchers used three public datasets:

- The AFW dataset, built from Flickr images, contains 205 images with 468 annotated faces, with complex backgrounds and varied face poses.

- The LFPW dataset contains 224 face images in the test set and 811 face images in the training set; each image is annotated with 68 landmarks; 900 images from both sets were selected for testing in this study.

- The AFLW dataset is a large-scale face database that contains about 25,000 hand-annotated faces, each marked with up to 21 landmarks. In this study, only images with extreme face poses from this dataset were used, for qualitative analysis.

Quantitative analysis

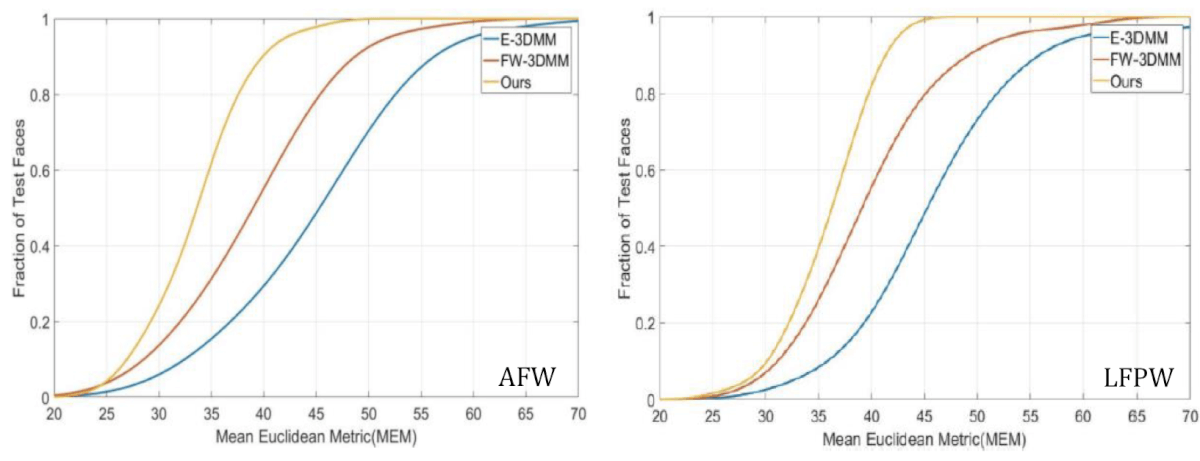

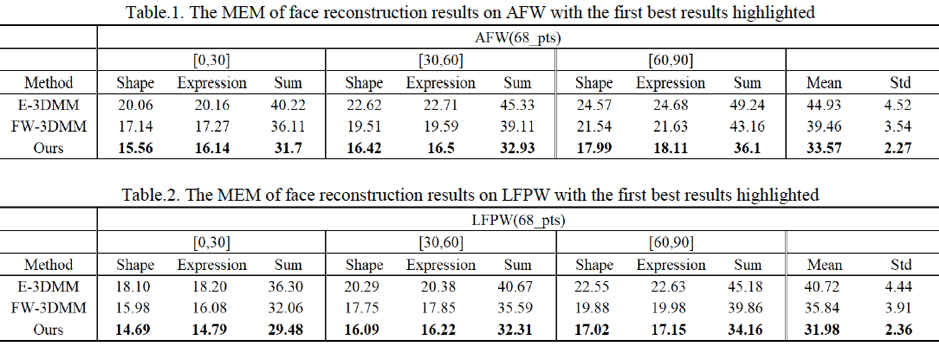

Using the Mean Euclidean Metric (MEM), the study compares the performance of the PIFR method with E-3DMM and FW-3DMM on the AFW and LFPW datasets. The cumulative errors distribution (CED) curves are as follows:

Comparison of the cumulative errors distribution (CED) curves on the AFW and LFPW datasets

As can be seen from these graphs and the tables below, the PIFR method clearly outperforms the other two methods. Reconstruction is especially good for large poses.
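The two quantities used in this comparison can be sketched as follows. The normalization length `norm` (for example, the size of the face bounding box) and the threshold grid are assumptions, since the article does not say how the authors normalize the error.

```python
import numpy as np

def mean_euclidean_error(pred, gt, norm=1.0):
    """Mean Euclidean distance between predicted and true points.

    pred, gt: (N, D) arrays of point coordinates.
    norm:     hypothetical normalization length, e.g. face box size.
    """
    pred = np.asarray(pred, dtype=float)
    gt = np.asarray(gt, dtype=float)
    return np.linalg.norm(pred - gt, axis=1).mean() / norm

def cumulative_error_distribution(errors, thresholds):
    """Fraction of samples whose error is at or below each threshold."""
    errors = np.asarray(errors, dtype=float)
    return np.array([(errors <= t).mean() for t in thresholds])
```

Plotting the returned fractions against the thresholds gives a CED curve; a curve that rises faster means more images are reconstructed with a small error.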

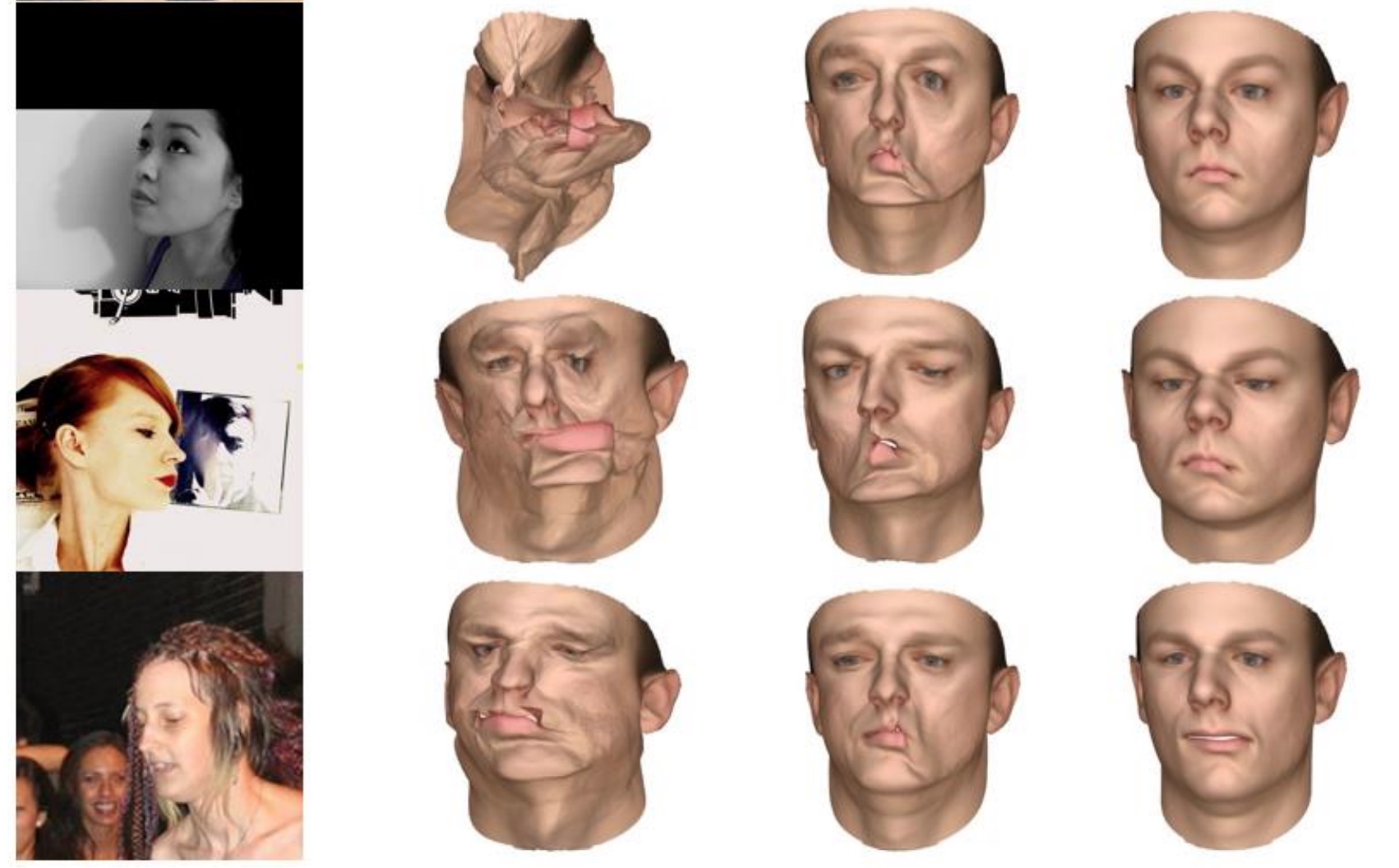

Qualitative analysis

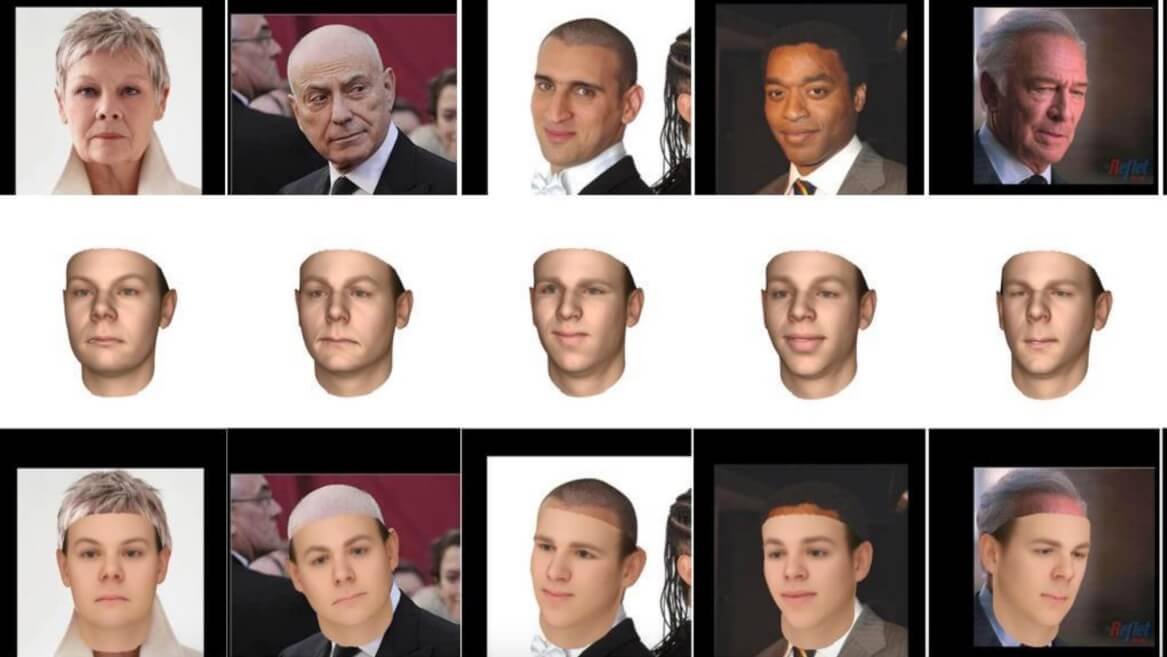

The method was also evaluated qualitatively on photographs of faces in various poses from the AFLW dataset. The results are shown in the figure below.

Comparison of 3D face reconstruction: (a) source image; (b) FW-3DMM; (c) E-3DMM; (d) the proposed approach.

Even when half of the landmarks are not visible because of an extreme pose, which leads to large errors and failures in other methods, the PIFR method still works well.

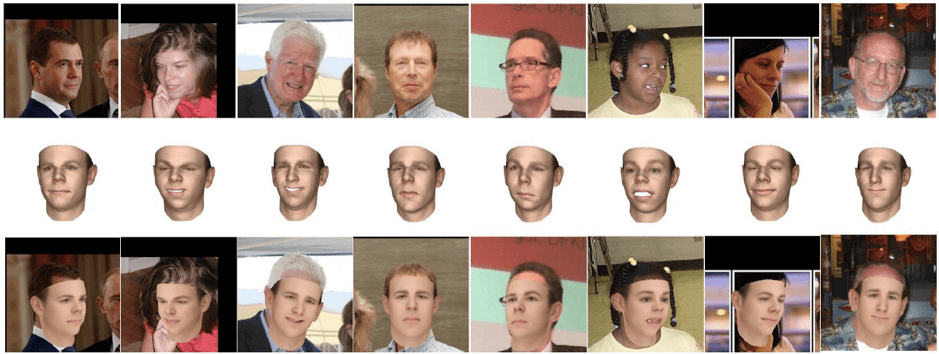

The following are additional examples of the effectiveness of the PIFR method, based on images from the AFW dataset.

Top row: input 2D images. Middle row: 3D mask. Bottom row: mask alignment

Summary

The new PIFR face reconstruction algorithm gives good reconstruction results even in difficult poses. By taking both the original and the frontal image for a weighted fusion, the method recovers enough information about the face to reconstruct the 3D mask.

In the future, the researchers plan to recover even more information about the face in order to improve the accuracy of the 3D mask reconstruction.

Original

Translated - Farid Gasratov