Mind Algorithms

- Translation

Science always accompanies technology: inventions give us new food for thought and create new phenomena that have yet to be explained.

So says Aram Harrow, professor of physics at MIT, in his article "Why now is the right time to study quantum computing".

He believes that, from a scientific point of view, entropy could not be fully studied until steam engine technology gave impetus to the development of thermodynamics. Quantum computing came about because of the need to simulate quantum mechanics on a computer. In the same way, the algorithms of the human mind can be studied now that neural networks have arrived. Entropy itself is used in many areas: for example, in smart cropping, in video and image encoding, and in statistics.

How is this related to machine learning?

Like the steam engine, machine learning is a technology designed to solve narrowly defined problems. Yet recent results in this field can help us understand how the human brain works, perceives the surrounding world and learns. Machine learning provides new food for thought about the nature of human thought and imagination.

Computer imagination

Five years ago, a pioneer of deep learning, Geoffrey Hinton (a professor at the University of Toronto and a Google employee), posted a video.

Hinton trained a five-layer neural network to recognize handwritten digits from their bitmaps. Using computer vision, the machine could read handwritten characters.

But, unlike other work, Hinton's neural network could not only recognize digits but also recreate, in its computer imagination, the image of a digit from its meaning. For example, given the digit 8 as input, the machine produces an image of it.

Everything happens in the intermediate layers of the network. They work as associative memory: from picture to value, from value to picture.
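
The two directions of such a memory can be illustrated with a toy sketch. This is not Hinton's network: instead of trained layers it simply stores one averaged prototype per digit, and the 5x3 bitmaps, the one-pixel "handwriting" noise and all function names are assumptions made up for the example.

```python
import numpy as np

# Hypothetical 5x3 bitmaps standing in for handwritten digits (assumption).
GLYPHS = {
    0: ["111", "101", "101", "101", "111"],
    1: ["010", "110", "010", "010", "111"],
    8: ["111", "101", "111", "101", "111"],
}

def to_array(rows):
    """Turn a list of '0'/'1' strings into a float pixel grid."""
    return np.array([[int(c) for c in row] for row in rows], dtype=float)

def one_pixel_variants(clean):
    """Imitate sloppy handwriting: every copy has exactly one pixel flipped."""
    variants = []
    for i in range(clean.size):
        flat = clean.flatten()          # flatten() returns a fresh copy
        flat[i] = 1.0 - flat[i]
        variants.append(flat.reshape(clean.shape))
    return variants

def train(samples):
    """Average all bitmaps seen for a label into a single prototype."""
    return {label: np.mean(images, axis=0) for label, images in samples.items()}

def recognize(prototypes, image):
    """Picture -> value: label of the prototype closest to the image."""
    return min(prototypes, key=lambda label: np.sum((prototypes[label] - image) ** 2))

def imagine(prototypes, label):
    """Value -> picture: threshold the stored prototype back into a bitmap."""
    return (prototypes[label] > 0.5).astype(int)

samples = {label: one_pixel_variants(to_array(rows)) for label, rows in GLYPHS.items()}
prototypes = train(samples)

# An unseen, damaged 8: two pixels are switched off.
noisy_eight = to_array(GLYPHS[8])
noisy_eight[0, 0] = 0.0
noisy_eight[4, 2] = 0.0

print(recognize(prototypes, noisy_eight))   # picture -> value
print(imagine(prototypes, 8))               # value -> picture
```

The same stored prototypes serve both calls, which is the point of the analogy: recognition and "imagination" read one shared encoding in opposite directions.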

Can the human imagination work the same way?

This machine vision technology is simplified, but very inspiring. From a scientific point of view, the main question is whether human imagination and visualization work according to the same algorithm.

Isn't the human mind doing the same? When a person sees a number, he recognizes it. Conversely, when someone talks about the number 8, the mind draws the number 8 in the imagination.

Is it possible that the human brain, like a neural network, moves from a concept to a picture (or a sound, a smell, a sensation) using information encoded in layers? After all, neural networks already paint pictures, write music and can even create internal connections.

Contemplation and Appearance

If recognition and imagination are really just a connection between a picture and a concept, what happens inside the layers? Can neural networks help us figure this out?

234 years ago, Immanuel Kant argued in his book Critique of Pure Reason (1781) that contemplation is only a representation of a phenomenon.

Kant believed that human knowledge is determined not only by rational and empirical thinking, but also by intuition (contemplation). In his view, without contemplation all knowledge would be deprived of objects and would remain empty and meaningless.

More recently, Alyosha Efros, a professor at UC Berkeley who specializes in visual understanding, noted that the visible world contains many more things than there are words to describe them. Using words as labels for training models can therefore impose the limits of language on them. Many things have no name in one language or another. A popular example is the famously succinct word Mamihlapinatapai.

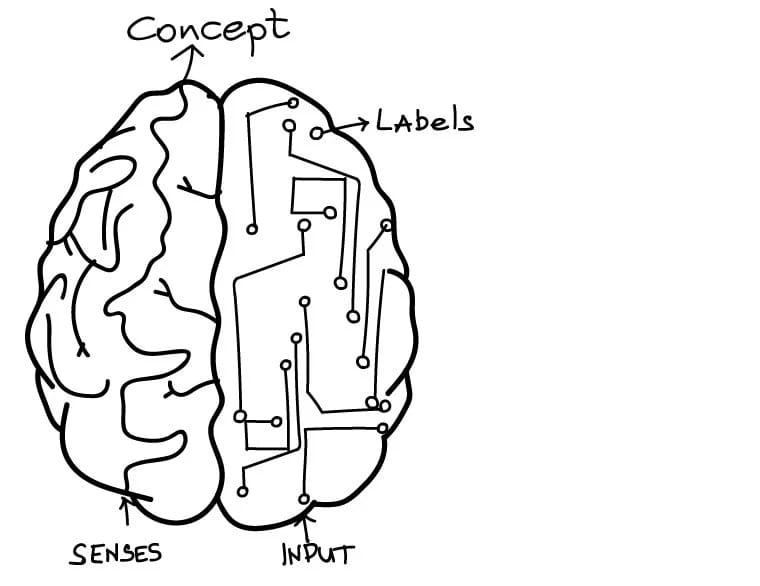

You can draw a parallel between machine labels and phenomena, and between encodings and intuition (contemplation).

When deep neural networks are trained, for example in work on recognizing cats, you can see that processing proceeds progressively from the lower layers to the upper ones.

An image recognition network encodes pixels at the lowest level, recognizes lines and corners at the next, then standard shapes, and so on. Each level builds more complex features out of the previous one. Intermediate levels do not necessarily have any connection with the final label, for example "cat" or "dog". Only the last layer corresponds to labels defined by people, and it is limited to those labels.
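
The low-to-high progression can be sketched in a few lines. This is a minimal illustration, not a trained network: the two edge filters are picked by hand (a real network would learn its own), and the 8x8 test image and the rule "a corner is where both edges respond" are assumptions chosen for the example.

```python
import numpy as np

def correlate2d(image, kernel):
    """Slide the kernel over the image ('valid' positions only)."""
    kh, kw = kernel.shape
    h, w = image.shape
    out = np.zeros((h - kh + 1, w - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def relu(x):
    """Keep positive responses, drop the rest."""
    return np.maximum(x, 0)

# Level 0: raw pixels, a bright 4x4 square on a dark background.
image = np.zeros((8, 8))
image[2:6, 2:6] = 1.0

# Level 1: hand-picked edge filters (assumption; normally learned).
dark_to_bright_across = np.array([[-1, 1], [-1, 1]], dtype=float)
dark_to_bright_down = np.array([[-1, -1], [1, 1]], dtype=float)

left_edges = relu(correlate2d(image, dark_to_bright_across))
top_edges = relu(correlate2d(image, dark_to_bright_down))

# Level 2: a "corner" unit fires only where both edge units fire.
corners = left_edges * top_edges

print(np.argwhere(corners > 0).tolist())   # -> [[1, 1]], the top-left corner
```

Nothing in levels 1 and 2 knows the word "square": the intermediate responses are encodings of the picture, and a name would only be attached by a final labeled layer.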

Encodings and labels correspond to the concepts that Kant called contemplation and phenomenon.

The hype around the Sapir-Whorf hypothesis

As Efros noted, there are far more conceptual models than words that describe them. If so, can words limit our thoughts?

This is the main idea of the Sapir-Whorf hypothesis of linguistic relativity. In its strongest form, the hypothesis claims that the structure of a language determines how its speakers perceive and comprehend the world.

Is that so? Does language completely define the boundaries of our consciousness, or are we free to comprehend anything, regardless of the language we speak?

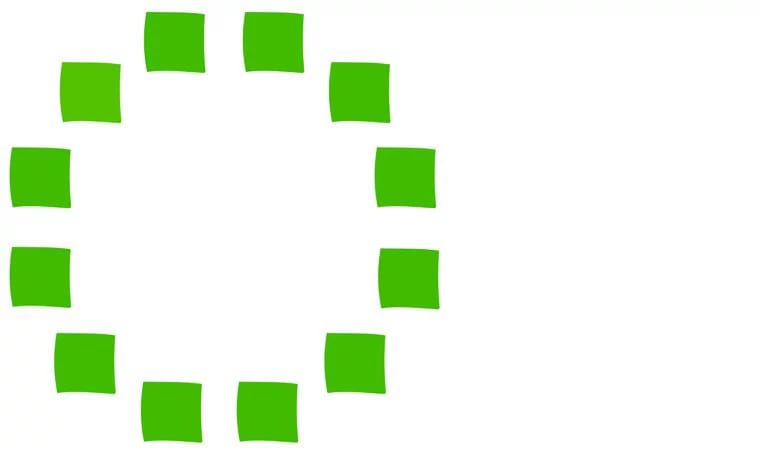

The picture shows 12 green squares, one of which differs in color. Try to guess which one. The Himba tribe has two separate words for these shades of light green, so its members give the correct answer much faster. Most of us have to work hard to find the odd square.

The theory is this: when a language has two words that distinguish one shade from another, the mind trains itself to tell these shades apart, and over time the difference becomes obvious. If you look with the mind, and not with the eyes, then language affects the result.

Another striking example: it was difficult for the millennial generation to get used to the CMYK color palette, since the colors cyan and magenta are not learned from birth. In Russian especially, these are complex composite colors that are hard to imagine accurately: aquamarine and purplish red.

Look with the mind, not the eyes

Something similar can be observed in machine learning. Models are trained to recognize pictures (or text, audio and so on) according to given labels or categories. Networks recognize labeled categories more effectively than unlabeled ones, which is not surprising in supervised learning. Language affects human perception of the world, and the presence of labels affects a neural network's ability to recognize categories.

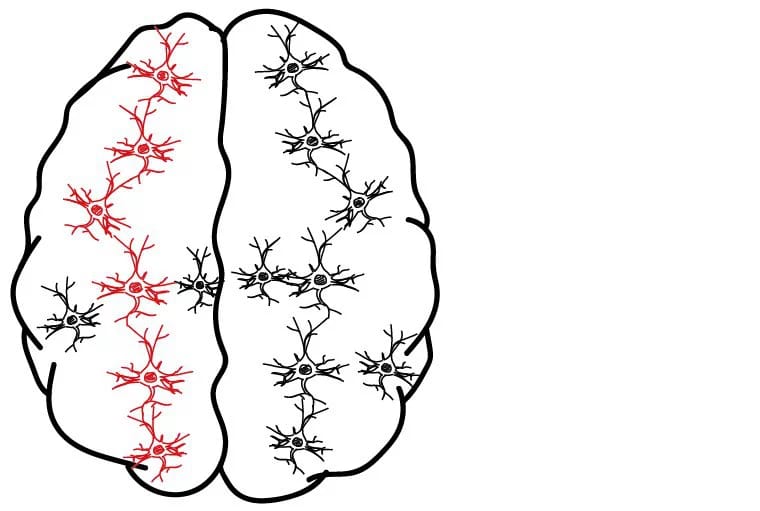

But labels are not a prerequisite. In Google's cat-recognizing "brain" experiment, the network formed the concepts of "cat", "dog" and others completely on its own, without being given the desired answers (labels).

The network was trained without supervision: it received only the inputs. If you feed it a picture that belongs to a certain category, for example "cats", only the cat-like neurons are activated. Having received a large number of training pictures as input, the network worked out the basic characteristics of each category and the differences between them.
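
How categories can emerge from unlabeled inputs is easy to show on a toy scale. The sketch below uses k-means clustering, a far simpler technique than the deep network in the Google experiment, and the two-number "pictures" (imagined as ear pointiness and snout length) are purely hypothetical features.

```python
import numpy as np

def kmeans(points, centroids, steps=10):
    """Alternate between assigning points to the nearest centroid
    and moving each centroid to the mean of its assigned points."""
    for _ in range(steps):
        dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
        assign = dists.argmin(axis=1)
        centroids = np.array([
            points[assign == k].mean(axis=0) if np.any(assign == k) else centroids[k]
            for k in range(len(centroids))
        ])
    return assign, centroids

rng = np.random.default_rng(0)

# Hypothetical 2-feature "pictures": 20 cat-like and 20 dog-like samples.
# The algorithm never sees which is which.
cat_like = rng.normal(loc=[0.0, 0.0], scale=0.3, size=(20, 2))
dog_like = rng.normal(loc=[5.0, 5.0], scale=0.3, size=(20, 2))
points = np.vstack([cat_like, dog_like])

# Start from two arbitrary samples; no labels are involved.
start = points[[0, -1]].copy()
assign, centroids = kmeans(points, start)

print(assign)      # cluster index chosen for each sample
print(centroids)   # the two "concepts" the algorithm settled on
```

The cluster indices are anonymous: the algorithm separates the two groups but has no name for either, much like the child with the plastic cup in the next paragraph.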

If you keep showing a child a plastic cup, the child will begin to recognize it even without knowing what the thing is called; that is, the image is not matched with a name. In this case the Sapir-Whorf hypothesis is incorrect: a person can explore and investigate various images even when there are no words to describe them.

Supervised and unsupervised machine learning suggest that the Sapir-Whorf hypothesis applies to human learning with a teacher and does not apply to self-learning. So it is time to stop arguing about it.

Philosophers, psychologists, linguists and neuroscientists have been studying this topic for many years. A connection with machine learning and computer science has been discovered relatively recently, with advances in big data and deep learning. Some neural networks show excellent results in language translation, picture classification, and speech recognition.

Each new discovery in machine learning helps to understand a little more about the human mind.

Summary

- The human brain, like a neural network, moves from a concept to a picture.

- Encodings correspond to contemplation in a person, and labels correspond to phenomena.

- If you look with the mind, and not with the eyes, then the language affects the result.

- The Sapir-Whorf hypothesis may turn out to be correct for learning with a teacher and fundamentally wrong for learning without one.