Lesser-known solutions for protecting business IT infrastructure

The classic approach in Russian business today is to install a firewall and then, after the first attempted targeted attacks, an intrusion prevention system. And then to go on sleeping peacefully. In practice, this gives good protection only against script kiddies. Any more or less serious threat (for example, an attack by competitors or ill-wishers, or a targeted attack by a foreign industrial espionage group) requires something beyond the classic tools.

I have already written about the profile of a typical targeted attack on a Russian civilian enterprise. Now I will talk about how the overall defense strategy in our country has changed in recent years, in particular as attack vectors have shifted toward 0-days and, in response, static code analyzers have been built directly into the IDE.

Plus a couple of examples for dessert: you will find out what can happen on a network completely isolated from the Internet and on the perimeter of a bank.

Development

Over the past two years, the market for large enterprise solutions has been moving quite actively. At first there was a surge of interest in DDoS protection, right when such attacks became cheaper. While medium-sized businesses were getting acquainted with IT through questions like “why is our website down and why are the cash registers in our stores not working,” large businesses were rebuilding their defenses to protect against non-standard attacks. Let me remind you that most targeted threats are carried out through a combination of a 0-day and social engineering. The logical step, therefore, was to introduce code analyzers at the stage where software is developed for the company.

Check before commit

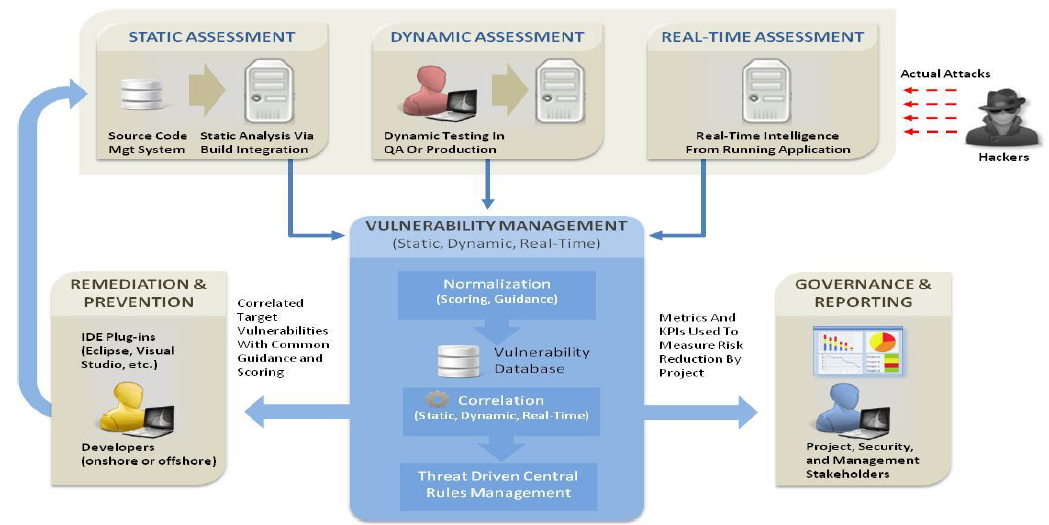

One of the most effective measures was to organize the process so that no commit goes through without static code analysis, and no release reaches the production servers until it has passed dynamic analysis. Moreover, this dynamic analysis is done in virtual machine “sandboxes” that closely mirror the different servers, user machines with different profiles, and so on. That is, the code is exercised in a realistic environment with the whole chain of interactions.

The most hardcore variant, of course, is integrating the analyzers with the IDE. You write a function, and you do not take another step until you have cleared all the analyzer warnings. To top it off, the security officer sees logs of what was happening, and every warning is copied to him. As colleagues say, in the West this has proved a very effective way to get people to write proper code from the start.

An example solution is HP Fortify Software Security Center.
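The "no commit without a clean analysis" gate described above can be sketched in a few lines. This is only an illustration under stated assumptions: the analyzer is assumed to print one warning per line, and `parse_warnings` and `gate_commit` are hypothetical names, not part of HP Fortify or any other product.

```python
from typing import List, Tuple


def parse_warnings(analyzer_output: str) -> List[str]:
    """Split analyzer output into warnings, assuming one warning per line."""
    return [line.strip() for line in analyzer_output.splitlines() if line.strip()]


def gate_commit(analyzer_output: str) -> Tuple[int, List[str]]:
    """Return (exit_code, warnings).

    A non-zero exit code is what a pre-commit hook would use to reject the
    commit. The returned warnings are what would be copied to the security
    officer's log in the process described in the text.
    """
    warnings = parse_warnings(analyzer_output)
    exit_code = 1 if warnings else 0
    return exit_code, warnings
```

In a real setup the hook script would run the analyzer, pass its output to `gate_commit`, and exit with the returned code, so that the version control system blocks the commit while any warning remains open.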

Third-party code

When third-party code is being brought in, the information security departments of large businesses often insist on static code analysis. With open source the situation is simple: run it through the analyzer and “suppress” anywhere from a hundred to a thousand warnings. When the code is commercial and the vendor is not ready to hand over the source code for its subsystem, a more interesting exchange follows.

If the sources are not handed over but can be read at the developer's office, representatives of the IT and information security departments travel there and run a static analyzer together with the developer. If even that is not possible, they either reject the code or, less often, order an intensified round of dynamic testing.

In addition to automated methods, a pentester is often brought in: either an information security or IT department employee with specialized training or, more often, an external specialist from a partner company.

Old code

If problems are found in legacy code, especially code compiled for some subsystem back in 1996 (a real case), rewriting it can of course be quite difficult. In that case, either a rule is written on the firewall that describes the way the vulnerability is exploited (in effect, a signature of the exploit packets), or one of the intermediate systems (more on them below) is configured to drop everything that is not a normal packet for the target system. It is a kind of DPI, but tied to a higher level in order to close the vulnerability.
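The second option, dropping everything that is not a normal packet for the target system, amounts to an allowlist check on message structure. Below is a minimal sketch. The message layout (a 2-byte command, a 2-byte big-endian length, then the payload), the command codes and the size limit are all invented for illustration and do not describe any real legacy protocol.

```python
import struct

# Hypothetical command codes the legacy subsystem is known to accept.
ALLOWED_COMMANDS = {b"RD", b"WR", b"ST"}
# Hypothetical payload size that the old parser handles safely.
MAX_PAYLOAD = 256


def is_normal_packet(packet: bytes) -> bool:
    """Accept only packets that match the expected legacy message layout.

    Anything else (unknown command, length mismatch, oversized payload)
    is treated as abnormal and should be dropped before it reaches the
    vulnerable system.
    """
    if len(packet) < 4:
        return False
    command = packet[:2]
    (declared_len,) = struct.unpack(">H", packet[2:4])
    payload = packet[4:]
    return (
        command in ALLOWED_COMMANDS
        and declared_len == len(payload)
        and declared_len <= MAX_PAYLOAD
    )
```

The design choice here is "describe what is normal" rather than "describe the exploit": a signature rule has to be updated for each new exploit variant, while an allowlist keeps working as long as the legitimate format does not change.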

Internal scans

A very large business has another characteristic problem: live infrastructure changes so often that even a large department cannot keep track of everything moving on the network. That is why banks, retailers and insurance companies use specialized tools such as HP WebInspect or MaxPatrol from our compatriots at Positive Technologies. These systems can test a wide variety of infrastructure components, including the firmware loaded onto the microcontrollers of low-voltage systems.

Traffic profiling systems have become very common. A typical profile of requests to each node is built from switch and router data, and then users are correlated with the systems they access. The result is an interaction matrix showing which service generates traffic to which user. Minor deviations reach the security officers as notifications; serious ones are blocked immediately. When malware gets onto the network, its characteristic multiple traces are visible, everything critical is “frozen,” and log analysis begins.

Such a system is configured in “application-user-server” terms through a GUI, directly by the security officer and without involving the IT department. Oddly enough, administrators like these systems too: I had a case where the Vkontakte application began generating 90% of the traffic on the link, and the admin spotted it very easily.

An example of a network anomaly search system is StealthWatch.
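The interaction matrix and the two-level reaction (notify on small deviations, block on serious ones) can be sketched as follows. This is not how StealthWatch or any other product works internally; the flow record shape, the function names and both thresholds are assumptions made for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Flow = Tuple[str, str, int]      # (user, service, bytes) - assumed record shape
Matrix = Dict[Tuple[str, str], int]

NOTIFY_RATIO = 2.0    # hypothetical: traffic at 2x baseline triggers a notification
BLOCK_RATIO = 10.0    # hypothetical: traffic at 10x baseline is blocked


def build_matrix(flows: Iterable[Flow]) -> Matrix:
    """Sum traffic per (user, service) pair to get an interaction matrix."""
    matrix: Matrix = defaultdict(int)
    for user, service, nbytes in flows:
        matrix[(user, service)] += nbytes
    return dict(matrix)


def classify(baseline: Matrix, current: Matrix) -> Dict[Tuple[str, str], str]:
    """Compare current traffic with the baseline profile, pair by pair."""
    verdicts = {}
    for pair, nbytes in current.items():
        expected = baseline.get(pair, 0)
        if expected <= 0:
            # Assumption: a pair never seen before is reported, not blocked.
            verdicts[pair] = "notify"
        elif nbytes >= expected * BLOCK_RATIO:
            verdicts[pair] = "block"
        elif nbytes >= expected * NOTIFY_RATIO:
            verdicts[pair] = "notify"
        else:
            verdicts[pair] = "ok"
    return verdicts
```

A real system would build the baseline over days or weeks of switch and router data and would likely use per-pair statistics rather than fixed ratios, but the structure (profile first, then compare) is the same.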

A comprehensive security analysis usually involves three procedures:

- Black-box analysis without user privileges, that is, simply probing from the outside. This covers open ports, analysis of every service exposed externally, and attempts to exploit the vulnerabilities found and to get through the DMZ. As a rule, a standard pentest.

- An audit from an administrator or privileged user account. The assumption is that the attacker has somehow obtained access credentials (for example, through social engineering), and the attack develops from that point. This audit walks through the entire network. Settings, configuration files, the correctness of system updates, and the checksums of every package and file are checked, and software versions are assessed against their known vulnerabilities (for example, to confirm that Windows on user machines is updated correctly from a certified source). An example package is RedCheck.

- The third mode checks compliance with a particular standard. This is an extended network scan against a template, for example a personal data processing model for a given class. Our vendors have pre-configured templates for Russia; foreign ones, as a rule, rarely go beyond PCI DSS.
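The checksum part of the privileged audit in the second item can be illustrated with a small sketch: compare the files on a host against a manifest of known-good SHA-256 hashes. The manifest format and the function names are assumptions for the example; this is not how RedCheck or MaxPatrol store or verify their data.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so that large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_mismatches(manifest: Dict[str, str], root: Path) -> List[str]:
    """Return relative paths that are missing or whose hash differs.

    `manifest` maps a relative path to its expected SHA-256 hex digest
    (an assumed format; it must come from a trusted source).
    """
    bad = []
    for rel_path, expected in sorted(manifest.items()):
        target = root / rel_path
        if not target.is_file() or sha256_of(target) != expected:
            bad.append(rel_path)
    return bad
```

The check is only as trustworthy as the manifest, which is why the text stresses updates from a certified source.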

We have examples of such audits here. But let's move on to the problems and a game of "find a friend."

Typical problems

As a rule, most information security problems in large businesses are no longer technical but everyday ones, at the organizational level:

- Outdated hardware and software. Large businesses often need certified solutions, and these fall behind new products quickly. Many simply cannot afford to install new software or a shiny new piece of hardware, so they deploy something with known holes or missing functionality and bolt on workarounds to solve the problem somehow. It is not uncommon for a bank to deploy a solution that is still undergoing certification and, in effect, to operate at its own risk for a month or two without fully meeting the regulators' requirements. The alternative is to have a horse-sized hole in information security while covering it with a piece of paper that says everything meets the standards and requirements.

- As a consequence, one box protects the entire network. From the outside the problem looks completely insane, but the entire internal network of a small bank can often depend on a single device that is five years behind the flagship model but runs under a certificate. When that piece of hardware fails, everything stays down until it is fixed. Naturally, more modern solutions support bypassing subsystems, parallel operation and redundancy, but reality differs somewhat from the ideal network architecture diagram.

- Or another variant. In general, everything needed is there. But DLP and the anti-bot cannot be activated, there are frequent problems updating the anti-bot signature database (updating is prohibited), and there are driver problems (in one case certified equipment was installed but did not work with the RAID array; fortunately, a new certified version was released a few days later).

- Hard-wired connections. Different devices need different types of traffic, and introducing new hardware solutions often means long and painful re-cabling. Tests of a single security tool can drag on for weeks simply because of this. In practice, it is enough to install a modern solution that works at the link level. That makes it possible, for example, to place the IPS on that link either before the firewall or after it (which greatly affects performance).

Gigamon GigaVUE-HC2

- Assorted security hardware. This is not really a problem in itself; the trend is simply that most vendors have concluded that all perimeter solutions fit into a single UTM device. The point is that each solution is a hardware-software appliance in the form of a “black box.” The modern version uses the same x86 architecture and can gain new functionality without the hardware being thrown out every two years. For example, there is a device that already contains a firewall, a streaming antivirus, an anti-bot system and so on. It is fully certified, and its hardware is an x86 machine divided at the software level into virtual software blades. If necessary, more software is added, a license is paid for, and the device carries on crunching traffic in its new role.

- Difficult integration of a zoo of systems. Again, a consequence of the previous point. A lot of different, poorly matched hardware makes it a problem to pass data from one part of the information security system to another. For example, it has long been the norm for the IPS to be tightly linked to the anti-botnet system. I am sure this is far from the case in every bank because, again, either the integration mechanics are difficult or there is no single piece of hardware with blades like the one described above. By the way, the latest generation can also “split its brain” in order to scan itself for configuration compliance with, say, PCI DSS. Previously this was done by separate external systems.

- Compliance analysis of the infrastructure does not take Russian realities into account. Put simply, you will have a perfect set of crawler utilities for verifying PCI DSS compliance. But only a small share of solutions can check against Federal Law No. 152-FZ "On Personal Data." For example, the domestic MaxPatrol runs these checks (and others) at Lukoil, Norilsk Nickel, Vimpelcom, Gazprom and so on.

- Sudden changes. This is generally beyond good and evil, but it happens. A common case: an IT specialist does something and sets up a new rule at the infrastructure level, which the security officer suddenly discovers a month or two later (often not on his own, but thanks to a tip, a bug-hunting program, a pentest or a real attack) and panics. Then he gives everyone a dressing-down. For example, on one of our integration projects we had to bring forward by a week the rollout of a heavy number-crunching server. During the rollout, not knowing all the details of the customer's IT kitchen, we were given permission to send a certain type of traffic directly to it. And then success was reported. In response, the security officers launched an internal investigation, as a result of which we ourselves nearly got into trouble. So the right way to set things up is this: when an IT specialist introduces something on the network, the rule does not take effect until the security officer confirms it. Had it been so, our colleagues would have received bonuses, because the problem was not the traffic permission itself but the fact that it bypassed the information security department.

- Untrusted sources. Many corporate environments often have the problem that end-user devices are half circus, half virus menagerie. For example, it is one thing when an insurance agent drives out to a site, photographs a dent in a car with his own camera, then gets home, photoshops in more severe damage and sends the result. It is another thing when the photo comes from a corporate application (under a certificate) over a secure channel and from a trusted device. Then you can trust the size of the dent. A more serious case is the sharp drop in trust in VPN at one company, where viruses on remote employees' machines handed all the access credentials to attackers. We solved the problem with a Java applet in the browser that provides a secure, trusted environment for VPN access.
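The workflow suggested under "Sudden changes" (a rule proposed by IT does not take effect until the security officer confirms it) is essentially a two-person approval queue. Here is a minimal sketch; the class and field names are hypothetical, and a real deployment would sit inside the firewall management or change management system.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RuleChange:
    rule_id: str
    description: str
    requested_by: str
    approved_by: str = ""  # stays empty until the security officer signs off


class ChangeQueue:
    """Holds requested rule changes; only approved ones become active."""

    def __init__(self) -> None:
        self._pending: Dict[str, RuleChange] = {}
        self.active: List[RuleChange] = []

    def request(self, change: RuleChange) -> None:
        # The IT specialist submits the change; nothing is applied yet.
        self._pending[change.rule_id] = change

    def approve(self, rule_id: str, security_officer: str) -> RuleChange:
        change = self._pending[rule_id]
        if security_officer == change.requested_by:
            # The requester must not be able to confirm their own change.
            raise PermissionError("requester cannot approve own change")
        del self._pending[rule_id]
        change.approved_by = security_officer
        self.active.append(change)
        return change

    def pending_ids(self) -> List[str]:
        return sorted(self._pending)
```

With such a queue in place, the traffic permission from the story would have waited in `pending_ids()` until the information security department had seen it, instead of being discovered after the fact.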

Security tools, examples

• NGFW-class systems (Check Point, Stonesoft, HP TippingPoint);

• systems for detecting potentially dangerous files (sandboxes) (Check Point, McAfee, FireEye);

• specialized security tools for web applications (WAF) (Imperva SecureSphere WAF, Radware AppWall, Fortinet FortiWeb);

• intelligent hub (bypass node) for connecting information security devices (Gigamon GigaVUE-HC2);

• systems for detecting anomalies in network traffic (StealthWatch, RSA NetWitness, Solera Networks);

• security and compliance analysis (MaxPatrol, HP WebInspect);

• code security audit (HP Fortify, Digital Security ERPScan CheckCode, IBM AppScan Source);

• DDoS protection systems (hardware: Radware DefensePro, Arbor Pravail, Check Point DDoS Protector; service: Kaspersky DDoS Prevention, QRATOR HLL).

Examples for dessert

Certified network

At one major government agency, it was decided to assess the security of a certified network segment designed to handle confidential information. In particular, users of this segment were strictly prohibited from accessing the Internet. Here is what was found:

- Traces of connecting USB-modems.

- One of the laptops was connected to the isolated network and a public network at the same time.

- There were quite a lot of messengers and games.

In general, you have probably seen such isolated networks in the army, where soldiers at headquarters sit on dating sites. Here the situation was somewhat more serious.

Network perimeter of a commercial bank

The task was to assess perimeter security. Such work is typically done as part of penetration testing, but in this case the customer was more interested in seeing what it could do on its own from the inside. To that end, the servers and telecommunication equipment in the external demilitarized zones were scanned with MaxPatrol in Audit and Compliance modes, and the reports were then analyzed. The first thing that caught the eye was a number of vulnerabilities associated, as usual, with outdated software or missing security updates (old OS versions, no patches on most servers, and so on), but that is not the worst of it: for most of these vulnerabilities there are no exploits available to hackers. But there were surprises. A pair of perimeter routers had no ACLs (as it turned out, they had been temporarily disabled while diagnosing communication problems between departments, and nobody remembered to turn them back on), so many more nodes were reachable from outside than the administrators imagined. A production DBMS was exposed to the open Internet. Telnet was used instead of SSH on a number of nodes. On a number of production servers the RDP settings had not been changed after setup (RDP traffic was protected with the default key). There were heroes who had not changed their default passwords since the day they started working for the company. Fortunately, nobody outside had time to notice any of this, so everything was closed quickly, with almost no casualties in the IT department.
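Several of these findings (Telnet in use, RDP and a DBMS reachable from outside) can be caught by a very simple check over a list of externally open ports. The sketch below assumes such a list has already been collected by a scanner. The host names and the `audit_exposure` function are hypothetical; the port numbers are the standard defaults for the named services.

```python
from typing import Dict, List

# Default ports of services that should normally not face the Internet.
RISKY_PORTS = {
    23: "Telnet in use (prefer SSH)",
    3389: "RDP reachable from outside",
    1433: "Microsoft SQL Server reachable from outside",
    1521: "Oracle DBMS reachable from outside",
    3306: "MySQL reachable from outside",
    5432: "PostgreSQL reachable from outside",
}


def audit_exposure(open_ports: Dict[str, List[int]]) -> List[str]:
    """Return one finding per risky port that is open on a perimeter host.

    `open_ports` maps a host name to the ports seen open from outside
    (assumed to come from an external scan).
    """
    findings = []
    for host in sorted(open_ports):
        for port in sorted(open_ports[host]):
            if port in RISKY_PORTS:
                findings.append(f"{host}:{port} {RISKY_PORTS[port]}")
    return findings
```

A check like this does not replace a full audit (it says nothing about missing ACLs or default passwords), but run regularly it would flag a forgotten temporary change much sooner than the next scheduled assessment.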

P.S. If you have a question that is not for the comments, my email is PLutsik@croc.ru.