Implementing gesture sequences in Unity 3D using the TouchScript library


In many games, especially on the small screens of mobile devices, it is important to minimize the area taken up by controls so that as much of the screen as possible is left for the main content. One way to do this is to configure touch targets to handle combinations of gestures, which keeps the number of touch targets on screen to a minimum. For example, two interface elements, one that fires a gun and one that rotates it, can be replaced by a single element that performs both actions with one continuous touch.

In this article, I describe how to set up a scene in which a first-person controller is driven by touch targets. First, the touch targets are configured for the controller's basic movement and rotation; then their set of functions is expanded. The expansion is done through the existing interface elements, without adding new objects. The resulting scene demonstrates the capabilities of Unity* 3D on Windows* 8 as a platform for processing various gesture sequences.

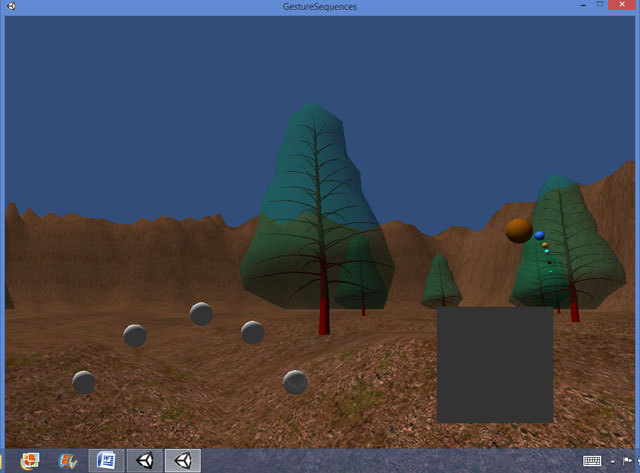

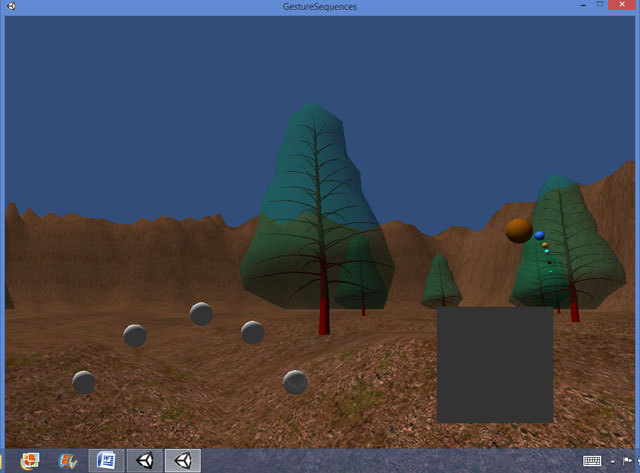


Scene Setup in Unity * 3D

First, set up the scene. Import into Unity* 3D a terrain asset in .fbx format, with mountains and trees, exported from Autodesk 3D Studio Max*. Place the controller in the center of the terrain. Set the depth of the main camera (it is part of the controller) to -1. Create a separate camera for the interface elements with orthographic projection, a width of 1 and a height of 0.5, and the Don't Clear flag. Then create a GUIWidget layer and set it as the culling mask of the interface camera.

Place the main interface elements that drive the controller in the scene, within the field of view of the orthographic camera. Add a sphere for each finger of the left hand. The little finger sphere moves the controller left, the ring finger sphere moves it forward, the middle finger sphere moves it right, and the index finger sphere moves it back. The thumb sphere makes the controller jump and launches spherical projectiles at an angle of 30 degrees clockwise.

For the right-hand interface element, create a cube (a square in the orthographic projection). Add pan gesture support to this cube and connect it to the MouseLook.cs script. This interface element works much like the touchpad of an Ultrabook.

Place these interface elements outside the field of view of the main camera and assign them to the GUIWidget layer. Figure 1 shows how the interface elements are used to launch projectiles and control the position of the controller in the scene.

Figure 1. First-person controller scene with terrain and spherical projectiles launched.

In this scene, projectiles launched from the controller fly through the trees. To fix this, add a mesh or a collider to each tree. Another problem in this scene, a low forward speed, occurs when you look down using the touchpad while moving forward with the ring finger sphere. To resolve it, you can limit how far the view can tilt down while the forward sphere is held.
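One way to apply such a limit is to clamp the camera pitch more tightly while the forward sphere is held. The sketch below is plain C# with no Unity dependencies. The class name LookLimiter, the sign convention (negative pitch looks down), and all three angle limits are assumptions made for illustration, not values taken from the scene.

```csharp
using System;

// Hypothetical helper: limits how far the camera may pitch up or down.
public static class LookLimiter
{
    // Assumed limits in degrees; negative pitch means looking down.
    public const float MaxUp = 60.0f;
    public const float MaxDown = -60.0f;
    public const float MaxDownWhileMovingForward = -20.0f;

    // Returns the pitch clamped to the range that applies right now.
    public static float ClampPitch(float pitch, bool movingForward)
    {
        float lower = movingForward ? MaxDownWhileMovingForward : MaxDown;
        return Math.Max(lower, Math.Min(MaxUp, pitch));
    }
}
```

In a scene, the script that rotates the camera could pass its computed pitch through ClampPitch before applying it, with movingForward set while the ring finger sphere is pressed.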

Multiple touch

The base scene contains a first-person controller that launches projectiles at a fixed angle away from the center (see Figure 1). The default angle is 30 degrees clockwise.

Now set up the scene so that touches repeated faster than a set interval change the launch angle before the projectile is fired. The angle can be made to depend on the number of touches by using two float variables in the script for the left thumb sphere. These variables hold the launch angle and the time since the last projectile was launched:

    private float timeSinceFire = 0.0f; // seconds since the last projectile was launched
    private float firingAngle = 30.0f;  // current launch angle in degrees

Next, configure the Update loop in the thumb sphere script so that the launch angle decreases when the thumb sphere is touched more often than once every half second. If touches come less often than once every half second, or if the launch angle drops to 0 degrees, the angle returns to 30 degrees. The resulting code looks like this:

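    // Accumulate the time elapsed since the last launch.
    timeSinceFire += Time.deltaTime;

    if (timeSinceFire <= 0.5f)
    {
        // Rapid touch: reduce the launch angle by one degree.
        firingAngle -= 1.0f;
    }
    else
    {
        // Slow touch: return to the default angle.
        firingAngle = 30.0f;
    }

    // Restart the timer for the next launch.
    timeSinceFire = 0.0f;

    // Wrap around once the angle reaches zero.
    if (firingAngle <= 0)
    {
        firingAngle = 30;
    }

    projectileSpawnRotation = Quaternion.AngleAxis(firingAngle, CH.transform.up);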
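As a minimal sketch, the same timing rule can be modelled without Unity so that it can be checked outside the editor. The class name RapidFireAngle and its methods Tick and Fire are hypothetical. Splitting the work into a per-frame timer and a per-touch decision is my assumption about how the snippet is meant to be wired up, not something the article states.

```csharp
using System;

// Hypothetical, Unity-free model of the thumb sphere firing logic.
public class RapidFireAngle
{
    public const float DefaultAngle = 30.0f; // degrees, as in the article
    public const float Window = 0.5f;        // seconds that separate rapid from slow touches

    private float timeSinceFire = 0.0f;
    private float firingAngle = DefaultAngle;

    // Call once per frame with the frame time (Time.deltaTime in Unity).
    public void Tick(float deltaTime)
    {
        timeSinceFire += deltaTime;
    }

    // Call once per touch; returns the angle to use for this launch.
    public float Fire()
    {
        if (timeSinceFire <= Window)
        {
            firingAngle -= 1.0f;        // rapid touch: narrow the angle
        }
        else
        {
            firingAngle = DefaultAngle; // slow touch: start over
        }

        if (firingAngle <= 0.0f)
        {
            firingAngle = DefaultAngle; // wrap around at zero
        }

        timeSinceFire = 0.0f;
        return firingAngle;
    }
}
```

In a scene, Tick would be called from Update with Time.deltaTime, and the value returned by Fire would be passed to Quaternion.AngleAxis in the handler that launches the projectile.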

This code produces a strafing effect in which rapid successive touches launch projectiles at an ever-decreasing angle (see Figure 2). The effect can be made user-configurable or unlocked under certain conditions in a game or simulation.

Figure 2. Rapid successive touches change the launch direction of the projectiles.

Scale followed by pan

The square in the lower right of the screen in Figure 1 is set up to work like a laptop touchpad. A pan gesture does not move the square; instead it rotates the scene's main camera up, down, left, and right through the MouseLook controller script. Similarly, a scale gesture (comparable to pinch and stretch on other platforms) does not resize the square but changes the field of view of the main camera, letting the user zoom in or out (see Figure 3). We will configure the controller so that a pan gesture immediately after a scale returns the camera's field of view to the default value of 60 degrees.

To do this, we add a boolean variable (panned) and float variables that track the time elapsed since the last scale and pan gestures:

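    private float timeSinceScale; // seconds since the last scale gesture
    private float timeSincePan;   // seconds since the last pan gesture
    private bool panned;          // set when a pan gesture has been handled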

Set the timeSinceScale variable to 0.0f when a scale gesture is handled, and set the panned variable to true when a pan gesture is handled. The field of view of the main camera is adjusted in the Update loop, as shown in the script for the touchpad rectangle:

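    timeSinceScale += Time.deltaTime;
    timeSincePan += Time.deltaTime;

    // A pan with no recent scale: widen the field of view once, half a second after the pan.
    if (panned && timeSinceScale >= 0.5f && timeSincePan >= 0.5f)
    {
        fieldOfView += 5.0f;
        panned = false;
    }

    // A pan within half a second of a scale: restore the default field of view.
    if (panned && timeSinceScale <= 0.5f)
    {
        fieldOfView = 60.0f;
        panned = false;
    }

    Camera.main.fieldOfView = fieldOfView;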

Now consider the onPanStateChanged and onScaleStateChanged handlers. Note that the float variable timeSincePan keeps the field of view from growing continuously while the touchpad is being used to control the camera:

    private void onPanStateChanged(object sender, GestureStateChangeEventArgs e)
    {
        switch (e.State)
        {
            case Gesture.GestureState.Began:
            case Gesture.GestureState.Changed:
                var target = sender as PanGesture;
                Debug.DrawRay(transform.position, target.WorldTransformPlane.normal);
                Debug.DrawRay(transform.position, target.WorldDeltaPosition.normalized);

                var local = new Vector3(transform.InverseTransformDirection(target.WorldDeltaPosition).x, transform.InverseTransformDirection(target.WorldDeltaPosition).y, 0);
                targetPan += transform.InverseTransformDirection(transform.TransformDirection(local));
                //if (transform.InverseTransformDirection(transform.parent.TransformDirection(targetPan - startPos)).y < 0) targetPan = startPos;

                // Record the pan so that Update can react to it.
                timeSincePan = 0.0f;
                panned = true;
                break;
        }
    }

    private void onScaleStateChanged(object sender, GestureStateChangeEventArgs e)
    {
        switch (e.State)
        {
            case Gesture.GestureState.Began:
            case Gesture.GestureState.Changed:
                var gesture = (ScaleGesture)sender;
                if (Mathf.Abs(gesture.LocalDeltaScale) > 0.01f)
                {
                    // Scale the field of view and keep it between 1 and 170 degrees.
                    fieldOfView *= gesture.LocalDeltaScale;
                    if (fieldOfView >= 170) { fieldOfView = 170; }
                    if (fieldOfView <= 1) { fieldOfView = 1; }
                    timeSinceScale = 0.0f;
                }
                break;
        }
    }

Figure 3. The main camera of the scene zoomed in using the touchpad rectangle on the right.
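The interaction between the two handlers and the Update loop is easier to follow when the state is isolated from Unity. The sketch below mirrors the article's rules in plain C#. The class name TouchpadZoom and the methods OnScale, OnPan, and Tick are hypothetical stand-ins for the gesture handlers and Update, and the scale delta is passed in directly instead of being read from a ScaleGesture.

```csharp
using System;

// Hypothetical, Unity-free model of the touchpad field-of-view logic.
public class TouchpadZoom
{
    public const float DefaultFov = 60.0f; // degrees, as in the article
    public const float Window = 0.5f;      // seconds

    private float fieldOfView = DefaultFov;
    private float timeSinceScale;
    private float timeSincePan;
    private bool panned;

    public float FieldOfView { get { return fieldOfView; } }

    // Stand-in for the scale handler: multiply the field of view and clamp it.
    public void OnScale(float deltaScale)
    {
        if (Math.Abs(deltaScale) > 0.01f)
        {
            fieldOfView = Math.Max(1.0f, Math.Min(170.0f, fieldOfView * deltaScale));
            timeSinceScale = 0.0f;
        }
    }

    // Stand-in for the pan handler: only records that a pan happened.
    public void OnPan()
    {
        timeSincePan = 0.0f;
        panned = true;
    }

    // Stand-in for Update: call once per frame with the frame time.
    public void Tick(float deltaTime)
    {
        timeSinceScale += deltaTime;
        timeSincePan += deltaTime;

        // Pan with no recent scale: widen the view once.
        if (panned && timeSinceScale >= Window && timeSincePan >= Window)
        {
            fieldOfView += 5.0f;
            panned = false;
        }

        // Pan shortly after a scale: restore the default view.
        if (panned && timeSinceScale <= Window)
        {
            fieldOfView = DefaultFov;
            panned = false;
        }
    }
}
```

For example, scaling by 0.5 narrows the view to 30 degrees, and a pan handled within the next half second brings it back to 60 on the following Tick.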

Press and release followed by a flick

If you press and release the little finger sphere and then flick it within half a second, the horizontal speed of the controller increases. To support this, we add a float variable that tracks the time since the little finger sphere was released and a boolean that records the flick gesture:

    private float timeSinceRelease; // seconds since the sphere was released
    private bool flicked;           // set when a flick gesture has been recognized

When I first set up the scene, I gave the little finger sphere script access to the InputController script so that the left little finger sphere could move the controller left. The variable that controls the horizontal speed of the controller is not in the InputController script, however, but in the CharacterMotor script. The little finger sphere script can be given access to the CharacterMotor script in the same way:

    CH = GameObject.Find("First Person Controller");
    CHFPSInputController = (FPSInputController)CH.GetComponent("FPSInputController");
    CHCharacterMotor = (CharacterMotor)CH.GetComponent("CharacterMotor");

The onFlick function in our script does nothing but set the flicked boolean to true.

The Update function in the script is called once per frame and changes the horizontal movement of the controller as follows:

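    private void Update()
    {
        // A flick within half a second of the release raises the sideways speed limit.
        if (flicked && timeSinceRelease <= 0.5f)
        {
            CHCharacterMotor.movement.maxSidewaysSpeed += 2.0f;
            flicked = false;
        }

        timeSinceRelease += Time.deltaTime;
    }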
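The sequence can be sketched without Unity as follows. The class name FlickBoost and its methods are hypothetical. The article does not show where timeSinceRelease is reset, so the OnRelease method here is an assumption. The sketch also discards a flick that arrives too late instead of leaving it pending, which is a deliberate deviation from the snippet above.

```csharp
// Hypothetical, Unity-free model of the press, release, and flick sequence.
public class FlickBoost
{
    public const float Window = 0.5f; // seconds allowed between release and flick
    public const float Boost = 2.0f;  // speed added per successful sequence

    private float maxSidewaysSpeed;
    private float timeSinceRelease;
    private bool flicked;

    public FlickBoost(float initialSpeed)
    {
        maxSidewaysSpeed = initialSpeed;
    }

    public float MaxSidewaysSpeed { get { return maxSidewaysSpeed; } }

    // Assumed release handler: restarts the timer.
    public void OnRelease()
    {
        timeSinceRelease = 0.0f;
    }

    // Flick handler: only records the flick, as onFlick does in the article.
    public void OnFlick()
    {
        flicked = true;
    }

    // Stand-in for Update: call once per frame with the frame time.
    public void Tick(float deltaTime)
    {
        if (flicked && timeSinceRelease <= Window)
        {
            maxSidewaysSpeed += Boost;
        }

        // Assumption: a flick is consumed every frame, so a late flick has no effect.
        flicked = false;
        timeSinceRelease += deltaTime;
    }
}
```

In a scene, the value of MaxSidewaysSpeed would be copied to CHCharacterMotor.movement.maxSidewaysSpeed after each Tick.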

With this code, the horizontal speed can be increased by pressing and releasing the little finger sphere and then flicking it within half a second. Horizontal deceleration can be set up in various ways; for example, by pressing and releasing the index finger sphere and then flicking it. Note that CHCharacterMotor.movement exposes not only maxSidewaysSpeed but also gravity, maxForwardsSpeed, maxBackwardsSpeed, and other parameters. Combining these parameters with the gestures of the TouchScript library and the objects that handle them opens up many options and strategies for building touch interfaces in Unity 3D scenes.

Gesture Issues

The gesture sequences used in this article depend heavily on Time.deltaTime. I use this value together with the gestures that come before and after it to decide which action to take. The two main problems I ran into when setting up these sequences were the size of the time interval and the nature of the gestures.

Time interval

When writing this article, I used an interval of half a second. When I chose an interval of one tenth of a second, the device could not recognize the gesture sequence. Although my touches seemed fast enough to me, they did not produce the expected actions on the screen. This may be due to latency in the device and its software, so I recommend taking the performance of the target platform into account when designing gesture sequences.

Gestures

When working on this example, I originally intended to use scale and pan gestures followed by tap and flick gestures. Scale and pan worked correctly but stopped working as soon as I added a tap gesture. Although I managed to set up a sequence of scale and pan gestures, it is not very user friendly. A better option would be to configure another touch target in the interface to handle tap and hold gestures after the scale and pan.

In this example, I use a half-second interval to decide whether an action is triggered. Several intervals can also be configured, although this makes the interface more complex. For example, a press and release followed by a flick within half a second could increase the horizontal speed, while the same sequence with a gap of half a second to one second could decrease it. Used this way, time intervals not only make the user interface more flexible but also let you hide secrets in the scene.
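The idea of several intervals can be sketched as a small classifier that maps the gap between the release and the flick to an action. The names SpeedAction and IntervalClassifier are hypothetical, and the boundaries simply restate the half-second and one-second figures from the paragraph above.

```csharp
// Hypothetical actions that a gesture sequence can trigger.
public enum SpeedAction { None, Increase, Decrease }

// Hypothetical classifier: picks an action from the gap between release and flick.
public static class IntervalClassifier
{
    public const float FastWindow = 0.5f; // up to half a second: speed up
    public const float SlowWindow = 1.0f; // half a second to one second: slow down

    public static SpeedAction Classify(float secondsSinceRelease)
    {
        if (secondsSinceRelease < 0.0f) return SpeedAction.None;
        if (secondsSinceRelease <= FastWindow) return SpeedAction.Increase;
        if (secondsSinceRelease <= SlowWindow) return SpeedAction.Decrease;
        return SpeedAction.None;
    }
}
```

Keeping the boundaries in one place makes it easier to tune them for the latency of the target device.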

Conclusion

In this article, I set up a scene with various gesture sequences in Unity* 3D using the TouchScript library on an Ultrabook running Windows 8. The purpose of these sequences is to reduce the screen area the user needs to control the application, which leaves more of the screen for the content itself.

Where a gesture sequence did not work correctly, we were able to find a suitable alternative. One of the configuration tasks was getting the sequences to work reliably with Time.deltaTime on the device at hand. The scene created in Unity 3D for this article thus confirms that Windows 8 on Ultrabook devices is a viable platform for developing applications that use gesture sequences.