Detection of sarcasm using convolutional neural networks

Hi, Habr! Here is a translation of the article "Detecting Sarcasm with Deep Convolutional Neural Networks" by Elvis Saravia.

Sarcasm detection is one of the key problems in natural language processing. It also matters in related areas such as affective computing and sentiment analysis, because sarcasm can flip the polarity of a sentence.

This article shows how sarcasm can be detected and also points to a neural network sarcasm detector.

Sarcasm can be viewed as an expression of stinging mockery or irony. Examples of sarcasm: "I work 40 hours a week to stay poor," or "If the patient really wants to live, doctors are powerless."

To understand and detect sarcasm, it is important to understand the facts related to the event. Doing so exposes the contradiction between the objective polarity of the event (usually negative) and the sentiment the author expresses sarcastically (usually positive).

Consider an example: “I like the pain of parting.”

It is difficult to tell whether this statement is sarcastic. Here, “I like the pain” conveys the sentiment expressed by the author (positive, in this case), while “parting” carries the opposite sentiment (negative).

Other challenges in understanding sarcastic statements include references to several events and the need to extract a large amount of factual knowledge, common sense, and logical reasoning.

Model

“Sentiment shifts” often occur in conversations that contain sarcasm, so the authors propose first training a sentiment model (based on a CNN) to extract sentiment features. The model picks up local features in its first layers, which are then converted into global features at higher levels. Sarcastic expressions are also user-specific: some users use more sarcasm than others.

The proposed sarcasm detection framework uses personality features, sentiment features, and emotion-based features. Together, this set of detectors forms a framework designed to detect sarcasm. Each feature set is learned by a separate pre-trained model.

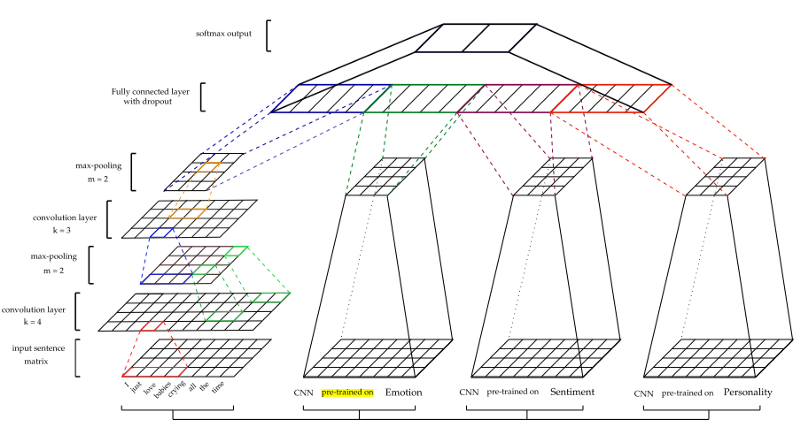

CNN Framework

CNNs are effective at modeling a hierarchy of local features in order to capture the global features needed to learn context. The input is represented as word vectors, which are initialized with Google's word2vec. The parameters of these vectors are learned during training. Max pooling is then applied to the feature maps to generate features. A fully connected layer is followed by a softmax layer that produces the final prediction.
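The pipeline described above (word vectors, convolution, max pooling, fully connected layer, softmax) can be sketched in a few lines of plain Python. This is only an illustrative forward pass, not the authors' implementation: the dimensions, the random weights, and the helper names `conv1d`, `softmax`, and `forward` are all assumptions, and the random vectors stand in for word2vec embeddings.

```python
import math
import random


def conv1d(embeddings, kernel, bias=0.0):
    """Slide one filter over consecutive word vectors, then apply ReLU."""
    width = len(kernel)  # number of adjacent words the filter covers
    feature_map = []
    for start in range(len(embeddings) - width + 1):
        window = embeddings[start:start + width]
        activation = bias + sum(
            w * x
            for kernel_row, word_vec in zip(kernel, window)
            for w, x in zip(kernel_row, word_vec)
        )
        feature_map.append(max(0.0, activation))
    return feature_map


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    shift = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def forward(embeddings, kernels, dense_weights, dense_bias):
    """Convolution -> max pooling -> fully connected layer -> softmax."""
    # Max pooling keeps the strongest response of each feature map.
    pooled = [max(conv1d(embeddings, kernel)) for kernel in kernels]
    logits = [
        bias + sum(w * p for w, p in zip(row, pooled))
        for row, bias in zip(dense_weights, dense_bias)
    ]
    return softmax(logits)


# Hypothetical sizes; the sentence must be at least FILTER_WIDTH words long.
EMBED_DIM, NUM_FILTERS, FILTER_WIDTH, NUM_WORDS = 4, 3, 2, 6
rng = random.Random(0)

# Random vectors stand in for word2vec embeddings of a six-word sentence.
sentence = [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in range(NUM_WORDS)]
kernels = [
    [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in range(FILTER_WIDTH)]
    for _ in range(NUM_FILTERS)
]
dense_weights = [[rng.uniform(-1, 1) for _ in range(NUM_FILTERS)] for _ in range(2)]
dense_bias = [0.0, 0.0]

# Two output classes: not sarcastic, sarcastic.
probs = forward(sentence, kernels, dense_weights, dense_bias)
print(probs)
```

In a real model the filter and layer weights would be trained rather than drawn at random, and several filter widths would typically be used side by side.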

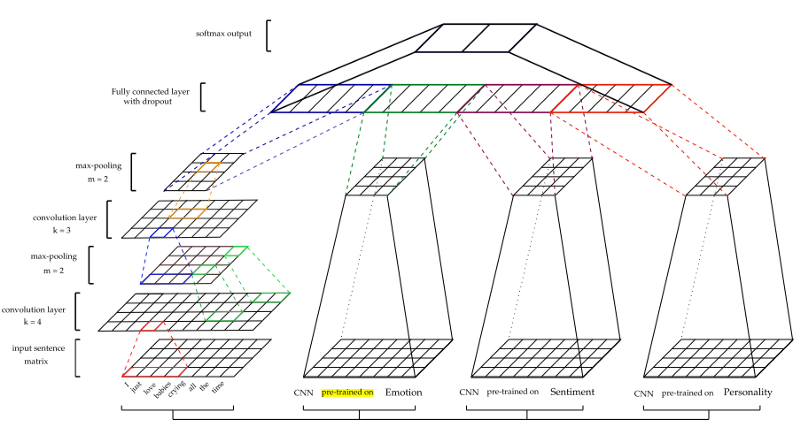

The architecture is shown in the accompanying figure.

For the other features, namely sentiment (S), emotion (E), and personality (P), the CNN models are pre-trained and then used to extract features from the sarcasm data sets. A different training data set was used for each model. (For details, see the paper.)

Two classifiers are tested: a pure CNN classifier (CNN), and an SVM classifier that receives the features extracted by the CNN (CNN-SVM).

A separate baseline classifier (B) is also trained. It consists only of the CNN model, without the other models (for example, emotion and sentiment).
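One way to picture how the separately extracted feature sets reach a single classifier is simple concatenation into one vector per tweet. The article does not spell out the merging step, so concatenation is an assumption here, and the feature values and the helper `concatenate_features` are made up for illustration.

```python
def concatenate_features(*feature_vectors):
    """Join several per-tweet feature vectors into one flat vector."""
    merged = []
    for vector in feature_vectors:
        merged.extend(vector)
    return merged


# Hypothetical outputs of the pre-trained models for a single tweet.
sarcasm_features = [0.12, 0.80, 0.05]    # from the CNN trained on sarcasm data
sentiment_features = [0.70, 0.10]        # S
emotion_features = [0.33, 0.41, 0.26]    # E
personality_features = [0.55, 0.45]      # P

# The baseline (B) would use only the sarcasm features.
baseline_input = concatenate_features(sarcasm_features)

# The combined model uses every feature set.
combined_input = concatenate_features(
    sarcasm_features, sentiment_features, emotion_features, personality_features
)

print(len(baseline_input), len(combined_input))
```

In the CNN-SVM setup, a vector like `combined_input` would be what the SVM is trained on, whereas the pure CNN variant would pass it through its own fully connected and softmax layers.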

Experiments

Data. Balanced and imbalanced data sets were obtained from (Ptacek et al., 2014) and The Sarcasm Detector. User names, URLs, and hashtags are removed, and then the NLTK Twitter tokenizer is applied.
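The preprocessing step can be sketched as follows. The regular expressions and the helper names `clean_tweet` and `tokenize` are assumptions (the article does not give the exact rules), and the sketch assumes that whole hashtags are dropped rather than just the `#` sign. `TweetTokenizer` is NLTK's tokenizer for tweets; if NLTK is not installed, the sketch falls back to whitespace splitting.

```python
import re

# Assumed patterns; the original preprocessing rules are not given in the article.
URL_RE = re.compile(r"https?://\S+|www\.\S+")
MENTION_RE = re.compile(r"@\w+")
HASHTAG_RE = re.compile(r"#\w+")


def clean_tweet(text):
    """Remove URLs, user names, and hashtags, then normalize whitespace."""
    # URLs go first because they may themselves contain '@' or '#'.
    for pattern in (URL_RE, MENTION_RE, HASHTAG_RE):
        text = pattern.sub(" ", text)
    return " ".join(text.split())


def tokenize(text):
    """Tokenize with NLTK's TweetTokenizer, or split on whitespace without NLTK."""
    try:
        from nltk.tokenize import TweetTokenizer
    except ImportError:
        return text.split()
    return TweetTokenizer().tokenize(text)


tweet = "@bob I work 40 hours a week to stay poor #sarcasm https://example.com/x"
cleaned = clean_tweet(tweet)
print(cleaned)
print(tokenize(cleaned))
```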

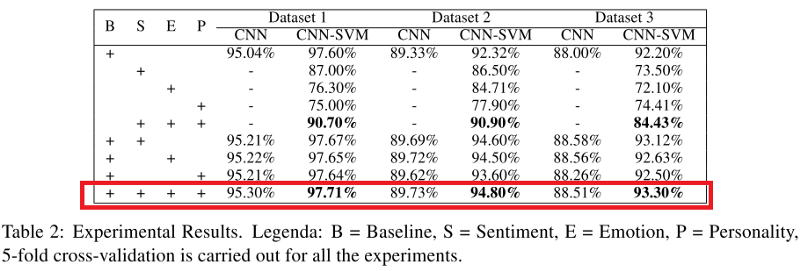

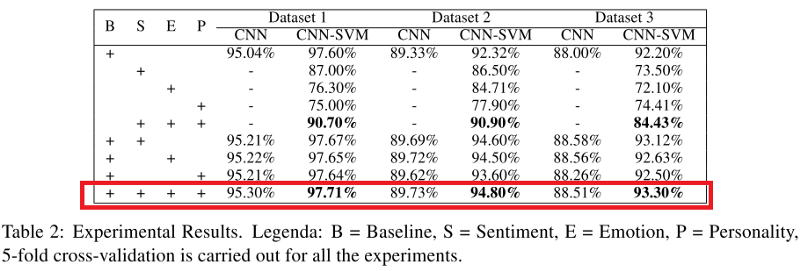

The results of both the CNN and CNN-SVM classifiers on all data sets are shown in the table below. Notably, when the model (CNN-SVM in particular) combines sarcasm, emotion, sentiment, and personality features, it outperforms all other models except the baseline model (B).

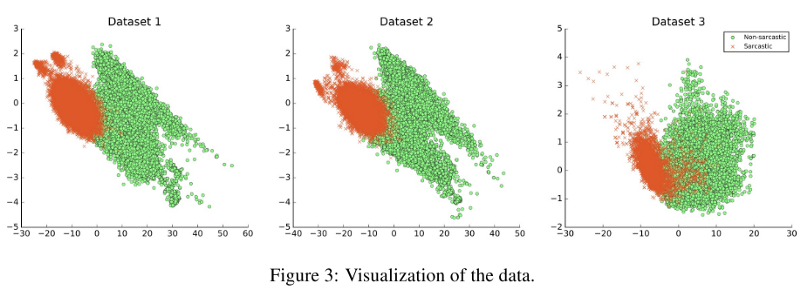

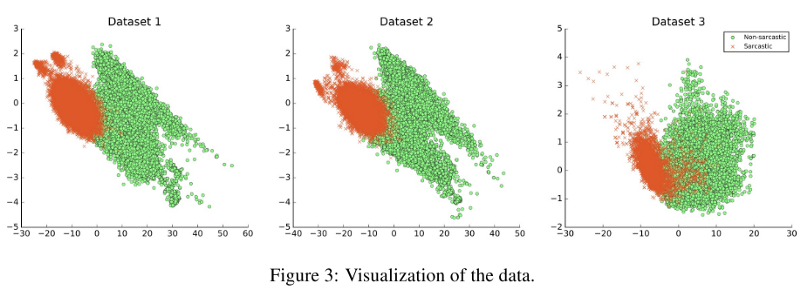

The generalizability of the models was also tested. The main finding was that when the data sets differ in nature, the results are significantly affected, as shown in the accompanying figure. For example, a model trained on data set 1 and tested on data set 2 reached an F1-score of 33.05%.
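For readers unfamiliar with the metric, the F1-score is the harmonic mean of precision and recall. The sketch below computes it for a binary task; the labels are invented for illustration and have nothing to do with the reported 33.05%.

```python
def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0  # no correct positive predictions
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Made-up labels: 1 = sarcastic, 0 = not sarcastic.
y_true = [1, 1, 1, 0, 0, 0]
y_pred = [1, 0, 0, 1, 0, 0]

# Here precision is 1/2 and recall is 1/3.
score = f1_score(y_true, y_pred)
print(round(score, 2))
```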
