Is it possible to render realistic images without floating point numbers?

- Transfer

Introduction

“What happens if we replace the floating point numbers with rational numbers and try to render the image?”

I asked myself this question after thinking about the tweet of a researcher and computer graphics teacher Morgan McGwire. He talked about how much computer science students are surprised when they first find out that to store the familiar floating-point numbers in modern computers, compromises must be made. And these compromises make simple tasks difficult, for example, checking whether a point belongs to a triangle. The problem, of course, is that checking for four points in the same plane (coplanarity) using a determinant or some kind of vector multiplication (but in fact this is the same thing) will never give a value exactly equal to zero, which is required these are mathematical methods. Even if the real calculations of being on the same plane were accurate,

This gave rise to the idea in me - if we assume that all incoming renderer data (vertex coordinates, 3D transforms, etc.) were set as rational numbers, then they would create all the operations, from creating a ray, traversing the accelerating structure to the intersection rays with triangles are only rational numbers? If that were the case, then we would be able to carry out a coplanarity test exactly! You might be wondering why a 3D scene expressed in rational numbers should only give results in rational numbers ...

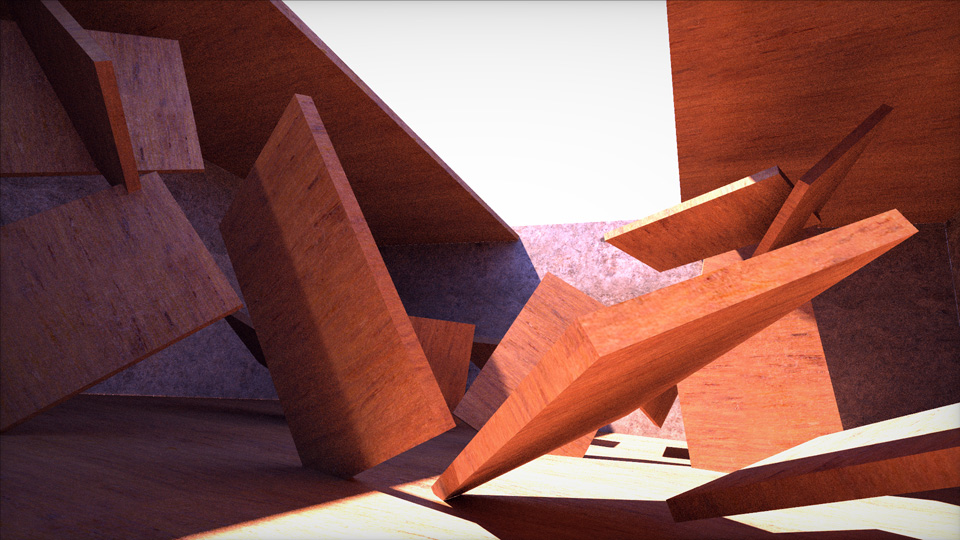

A simple scene, the path tracing in which is performed by rational arithmetic. It uses a system of numbers "floating feature shot " rather than floating point .

Firstly, a rational number is a number that can be expressed as the ratio of two integers, for example 1/2 or 355/113. Secondly, “normal rendering operations”, such as bounding box tests, checking the intersection of a ray with a triangle, reflection of a ray, etc., are based on vector and scalar products, as well as scalar division (this includes coordinate transformation and matrix inversion, quaternions, etc.), which in turn are based on four basic operations: addition, subtraction, multiplication and division. When adding, subtracting, multiplying and dividing rational numbers, rational numbers are also obtained. The mathematician would say that many rational numbers form a field that is closed under four basic arithmetic operations. For us, this means

Exceptions to the rule “actions on rational numbers give rational numbers” are square roots and trigonometric / transcendental functions. As for the latter, I always say that if you had to perform trigonometric calculations in the geometric interiors of your renderer, then most likely you are doing something wrong (and I showed how to fix the most standard cases ) [ translationon Habr.]. As for the square roots, with the exception of conical sections (spheres, cylinders, etc.) and performing shading / DFOS / coloring, it is not necessary to normalize the rays and normal to surfaces as often as is usually done. It certainly does not need to be done to create a ray, its passage, intersection, reflections, etc. Unfortunately, very often I see that programmers normalize values for no reason other than “well, I don’t know, I do it so I can play it safe.” In practice, in the part of the rendering where the geometry is traced, it is very rarely necessary to normalize the values, so I had the hope that it would be possible to trace the whole scene without leaving the world of rational numbers - this is what I would call "rational rendering".

To put this into practice, I need to create a number system based on rational numbers that a computer could use. Then, on top of it, I could implement the usual path tracing algorithms, calculate images without loss of accuracy, perform coplanarity checks that have accurate answers, and make all students studying computer graphics happy.

This article is a story about two nights of research into the realism of such an idea. I will talk about the many aspects that I learned, about what I came up with and about a few surprises that I discovered in the process. The article is written in a more or less chronological order of my work. In addition, it was written in my unusually informal and very unscientific style (which I am proud of). The image shown above is a kind of spoiler, but read the article to the end, because I will talk about the good and the bad.

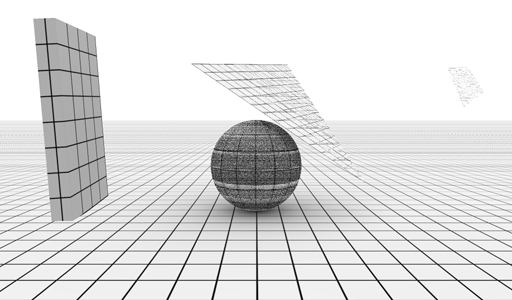

Training

The first thing I did was to implement in Shadertoy a minimally limited tracer for an extremely simple scene consisting of a plane, sphere, rectangular parallelepiped and a triangle - the building blocks of real renderers. Then I copied the code to a C ++ file and, after making a couple of minor changes, compiled it using my piLibs framework. So for comparison I got a traced image rendered to the CPU using regular numbers according to the IEEE754 standard with a floating point. I also removed all ray normalization from the trace code, because, as mentioned above, none of them are actually needed. Let me remind you that a square root is required for normalization, and rational numbers are not preserved when it is used (the square root of a rational number is not a rational number). A little later we will see that it is still possible to apply square roots, of course, I just wanted to make the code as mathematically clean as possible to see how far I can go with the exact arithmetic of rational numbers without rounding.

The final preparatory step - I took all vec3, mat4x4 and other basic algebra / math classes, and then changed them so that they used rational instead of float. Since my rational structure overloads all standard operators (add, sub, mul, div, sign reversal, comparisons, etc.), the replacement occurred without problems. I quickly implemented the remaining usual operations (abs, sign, mod, fract, floor, sqrt, etc.), which theoretically was enough to get beautiful rational renderings.

Test 1 - The Naive Solution

But let's see what this first implementation was like. At first I always try the simplest, and then I look at the results. And the simplest way to implement rational values was to use two integers. As the name of the section suggests, this will not be my final decision, but for the first attempt it was a reasonable decision. Thus, each number x should have been presented as a numerator N and a denominator D , which form the value of N / D . The x value is approximated by the best possible N / D pair (within the specified bit depth), which is closest to the true x value. I decided that both numbers must be positive, and the sign of the number should be stored in a separate bit in order to simplify the work and get rid of ambiguities, although this is not very important. At this stage, both numerators and denominators were of unsigned type. But even when separating the sign, N / D had a lot of redundancy: for example, 1/4 and 7/28 denote the same number, but have completely different bit representations. We will touch on this later, but for now, let's not focus our attention and see how the four basic arithmetic operations look in this rational form.

First, note that subtracting a - b is simply the addition of a and the value opposite to b, i.e., a + ( -b ), where -b can be computed by simply changing the sign of b . Similarly, dividing a / b is the same as multiplying a and the inverse of b . Or, in other words, a / b = a · (1 / b ), where (1 / b ) can be calculated by simply changing the places of the numerator b n and the denominator b d of the number b. So, here is the first interesting property of rational arithmetic - division and multiplication have the same costs, therefore, unlike the usual floating point rendering, in which divisions are usually avoided, delayed or hidden under the delays of slow texture requests, there is no need to be afraid of these operations in rational arithmetic .

We turn to addition with multiplication: we know that the opposite and inverse values are trivially simple to calculate, so we get:

Sign preservation during multiplication is trivial, it is just xor, because two positive values give a positive result, as well as two negative ones. Saving a sign for addition is a more complicated process, and for a quick solution I implemented it through three branches (addition is trivial if the signs a and b match, but when they do not match, then you need to select a smaller number and subtract it from the larger one - in the article I don’t I will describe such small details in more detail, but just lay out the source code somewhere).

I will also skip the implementation of fract () and floor (); if you decide to try to implement them yourself, you will see their simplicity and beauty. Attention should also be paid to comparison operators. Taking care of the signs and accepting that aand b are positive, we get

It is important to note here that even for comparison we need a couple of multiplication operations, which can lead to the transition to the next word size and will be important a little lower.

Finally, we look at the square roots in a separate section, knowing that for the most part we do not need them (except for the sphere from this first test).

This was enough to run the first render and trace the test scene (plane + sphere + triangle + rectangular box) to see what came of it. I generously used 65-bit rational numbers for this first test, which actually represents a large amount of data (comparable to the "double" data type): 32 bits are taken by the numerator, 32 bits are the denominator, and another bit is the sign. The first is the image obtained with this naive approach, the second is the image made using floating point numbers (reference):

"Naive" 65-bit rational numbers

Floating point reference

The result was pretty bad, the box and triangle did not even appear on the render, and the sphere and plane of the floor were too noisy. The problem, of course, was that every time my rational numbers performed any basic arithmetic operation at any of the algorithmic stages of rendering, the numerator and denominator became more and more uncontrollably, because integer multiplication was used. Think about the following: if the units of our initial world were meters, and we would attach the source geometry (vertices and camera) to millimeter accuracy, then only the source data would occupy a 16-bit volume for a rather small scene. At the same time, with standard HD screen resolution and 4X smoothing, rational beam direction numbers would easily require 12 bits. That is, during the first interaction of the beam and geometry, the simplest arithmetic operation, using both sets of input data, would turn the result into 28-bit lengths - close enough to the 32-bit limit that I set for myself in this first implementation. And this is even before we performed the very first vector or scalar product. By the time the scalar product is complete, the renderer would need rational numbers hundreds of bits long to represent numbers. Of course, this is the worst case, but the average case would be close to this. Considering that I allocated only a 32-bit capacity for the numerator and denominator, it is easy to understand how quickly the values go beyond the boundaries in this test - it is not surprising that with the exception of the floor plane and part of the sphere, almost nothing is visible.

Test 2 - Reduction by the largest common factor

Then I improved the system by using the property that I briefly mentioned above - different rational numbers can mean the same amount. And in fact, 6/12 is the same value as 1/2, but it uses a lot more bits than the last. Therefore, the idea was as follows: if after each basic arithmetic operation (or after it) I would extract all common divisors from the numerator and denominators, and bring the fraction to its simplest form, then perhaps I will be able to keep everything under control and continue operations longer with exact arithmetic without loss of accuracy. Perhaps you can do this long enough to get clean, rendered images? I will make a small digression to show another example: 588/910 can be simplified to 42/65, because 14 is a divisor of both 588 and 910. But to store 42/65, obviously, fewer bits are needed than 588/910. Finding the largest possible number that simultaneously divides the other two numbers can be done using the Great Common Divisor (GCD) algorithm, effective implementations of which you can find anywhere (I personally copied it directly from Wikipedia and accelerated it a bit by performing the scanning step bits using internal x64 operations). So, armed with the GCD algorithm, my rational class should constantly simplify fractions generated during the rendering process. This could be done in two ways: effective implementations of which you can find anywhere (I personally copied it directly from Wikipedia and accelerated it a bit by performing the stage of scanning bits using internal x64 operations). So, armed with the GCD algorithm, my rational class should constantly simplify fractions generated during the rendering process. This could be done in two ways: effective implementations of which you can find anywhere (I personally copied it directly from Wikipedia and accelerated it a bit by performing the stage of scanning bits using internal x64 operations). So, armed with the GCD algorithm, my rational class should constantly simplify fractions generated during the rendering process. This could be done in two ways:

The first is to convert the intermediate result of the addition and multiplication operators to the next type of bit data (in my current naive solution it is uin64_t), search for GCD in this more voluminous data type, and then reduce the result to the original bit length (32). The second way is to analyze how a n , a d , b n and b dcombine with each other in both arithmetic operators and extract common divisors from them before performing the multiplication. The second approach basically eliminated the need for large bit lengths. Knowing that it might be necessary to use them anyway, I decided to choose the first method, because it was easier to implement and it allowed me to speed up my work (the evening flies very quickly). Having done all this, let's see what render I can create now:

65-bit rational numbers reduced by GCD

Floating point

reference Much better! So far, far from ideal, of course, but it looks promising. I made the box and triangle appear, and the sphere now seems much more voluminous. However, a funny artifact appeared in the upper right corner, and rational numbers for many pixels still go beyond the boundaries, which leads to many points in the image. However, it is worth noting that for some (many) pixels, I started to get accurate and perfect results! That is, the tracer found mathematically accurate intersections of points and distances, which was the root cause of trying to use rational numbers.

Before proceeding to the next step in the process of proving the applicability of rational numbers, I want to briefly stop and share my findings regarding GCD and the reduction of rational numbers.

The first discovery is related to the bit volume of rational numbers. Even though I still can’t render beautiful images and this is more important than worrying about optimizing data volumes, and although this early implementation still used a large number of bits (1 + 32 + 32), I was already thinking about the waste mentioned earlier bits in the form of excess fractions. In particular, after adding a stage with a GCD, bit combinations like 2/4 are no longer applicable, because they are automatically reduced to 1/2 before writing to any register or variable. That is, in a sense, out of all 2 64combinations of bits, which could be the numerator and denominator, many remained unused. And you can't waste bits like that. Or is it possible? How much space do I actually lose? I made a small digression to explore this issue.

Digression - On Mutually Prime Numbers

The illustrations below show the use of bits for rational numbers in 5/5 bits and 7/7 bits. The horizontal and vertical axis of the graphs represent the values of the numerator and denominator of all possible rational numbers having numerators and denominators up to 5 bits (31) and 7 bits (127). Black pixels are unused combinations, and white pixels are fractions used. For example, the entire diagonal is black, except for the 1/1 pixel, because all fractions of the form n / n are reduced to 1/1.

Using bits for 5/5 rational

Using bits for 7/7 rational

If you count the pixels, as I did, you can quickly understand that the proportion of useful pixels with an increase in the number of bits tends to 60.8%. A little online research showed me that this ratio is exactly equal to 6 / π 2, because it is also likely to be coprime (not to have common divisors) for two random numbers. You may ask, where did the pi come from? It turns out that “six by pi squared” is a value equal to unity divided by the Riemann zeta function calculated at point 2, 1 / ζ (2). This should not surprise us very much, because the Riemann zeta function often pops up in problems involving simple and mutually prime numbers.

Be that as it may, in my rational view, I wasted about 40% of the bit combinations. And although this seems like a large number, I decided to look at it as if it was actually a bit less ... thanks to which I could not be very upset. With this in mind, I decided to move on, using other, completely different approaches, instead of trying to optimize this single issue locally. However, nevertheless, I briefly learned about the Stern-Brokaw and Calkin-Wilf trees, which could allow me to fully use all the available bits, but the interval of values obtained with their help is very small, so I quickly abandoned this idea and moved on. I think at this point I should express my gratitude to Wikipedia as a constant source of my knowledge.

Let us return to the analysis of what we got: I can render images with distortions, but very much depend on the distribution of primes in the calculations. It depends on these primes whether the GCD algorithm can simplify the expression - as soon as any prime number or a multiple of it falls into any of the renderer numbers (vectors, scalars, matrices), it “pollutes” all the numbers following it in further arithmetic manipulations, and remains in them forever. Therefore, gradually everything is guaranteed to begin to grow, it is only a matter of time. In addition to the fact that this is inevitable, it is also necessary, because it is mutually simple divisors that carry information about the value of a number. But at the same time, large primes break everything very quickly. So there is a conflict.

The last thing worth noting is that I still used twice as many bits as the standard floating-point number, so there are no real advantages here so far. Of course, I tried to use 16/16 bit rational numbers, which would be a more honest comparison with the true requirements for floating point arithmetic, but with a precision of 16/16, the system I wrote with the numerator + denominator + GCD created completely illegible images.

Test 3 - Normalization of Rational Numbers

So, at this point I needed something really significant. It seemed that in order to prevent numbers from going out of bounds, one had to start trimming the numbers and losing accuracy. My whole experiment began with the idea of researching accurate rendering, but by this moment I felt that I was ready to abandon this idea and continue to explore other areas, if only for fun, and see what it would lead me to (the initial idea stimulating the research process “This is exactly the idea to start the process, and after it you often end up in a completely unexpected place. Or, as John Cleese once said, bad ideas can lead to good ideas, the creative process is not always always edovatelnostyu or logically true progression of steps).

Be that as it may, I decided to check what would happen to the renders if I somehow protected the numerator and denominator from overflowing. The easiest way would be to shift both the numerator and the denominator, if necessary, by a sufficient number of bits to the right, until they again appear in the bit space allocated to them. In fact, this means an integer division of both the numerator and the denominator by one value, which means that the value of the number remains approximately unchanged. And here I deviated from the original goal of the experiment.

In my first implementation, I looked at the number of bits needed for the numerator and denominator, took the maximum for both, and shifted both by this number of bits (rounding to the nearest integer). When this was implemented in the addition and multiplication operators, everything began to look quite acceptable:

65-bit rational numbers reduced by GCD and normalization

Floating-point standard

Since everything looked pretty good, at this stage I proceeded to solve the problem of a large amount of bits used in the current implementation. I tried using 16/16 (33 bits) instead of 32/32 (65 bits), and the images turned out to be surprisingly good! I still saw that in some edges of the sphere there are small holes, and in the figure of the texture of the triangle there are small gaps. But this is not bad for quantities close enough to floating point numbers. It gave me energy to learn new ideas.

Test 4 - Floating Slash

At this stage, I decided to distract myself and stop looking for excuses - if I want to find something interesting for rendering in rational numbers, then they should occupy 32 bits and no more. It is better to find a good idea or stop, and end there (this was at the beginning of the second evening of experiments).

At first, I thought that it was worth sticking to the ideas of GCD and normalization, but it was wiser to approach the storage and use of bits. The first thing that occurred to me was that even though the numerator and denominator can get large, this often does not happen. Or, at least, this does not happen simultaneously. Therefore, when the numerator is small, you can let the denominator be large, and vice versa. Unused bits of one of two integer values can be used to represent larger values. Then I realized that similarly, a floating point number is essentially a fixed point format, where the “fixed” point is made variable. I can take my rational numbers and also make the bit layout of the fraction fraction variable. That is, it is not hard to set the fraction as 16/16, but to allow the same 32-bit variable to sometimes be 16/16,

It was worth checking out. Anyway, a few lines of packing / unpacking code in internal setters and getters can be written quickly. The most logical scheme for me seemed 1 | 5 | 26, that is:

1 bit:

5-bit sign : fraction line position (B)

26-bit: combined numerator and denominator data; the numerator is the upper 26-B bit, the denominator is the lower B bit,

where the fraction bar (B) determines the size of the denominator. For example, the number 7/3 will be written as

7/3 = 0 00010 000000000000000000000111 11,

where the sign 0 means a positive value, the line of fraction 2 denotes the denominator (number 3), which requires 2 bits to represent, and the rest of the bits go to the numerator.

Those readers who have worked with the IEEE754 standard may find this observation familiar: the binary representation of the denominator always starts with “1” because the fraction line number always truncates it to the shortest representation. That is, the first bit of the denominator is optional. In this case, the number “3” can be represented only by the binary value “1” and the value of the fraction line “1”:

7/3 = 0 00001 0000000000000000000000111 1

This trick not only saved me one precious bit, but it also has one excellent side effect: when the slash value of a fraction is zero, this naturally means at the same time that the denominator is 1 and that no space is needed to store it. This means that my rational representation of numbers suddenly turned out to be completely compatible with the usual integer representation and arithmetic, until the values of the numbers rise above 2 26, that is, to a sufficiently large threshold. What a wonderful surprise! That is, theoretically, I can use exactly the same data type, “rational”, to perform standard rendering and shading operations, but also perform all the logic and tasks of the command flow in the path tracer - I no longer need to use two data types, as it happens in most renderers ("int" and "float") and perform conversions in one direction and another! However, time was running out for me, so I did not change all loop indices from “int” to “rational”. The evening was drawing to a close, and I still had to check a lot of things to improve the quality of renderings.

Having created the implementation, I was able to verify it:

32-bit fractional rational numbers (1 | 5 | 26)

32-bit floating point reference Ohhhh

, not bad! I still have artifacts in the sphere, which for now I will blame for my poor implementation of the square root, but the box and triangle have become really clean. The number of precisely resolved image pixels has also increased. I think due to the fact that before the overflow in the denominator or numerator, more numbers have time to appear, I increased the likelihood that the GCD will find common divisors and perform a reduction. That is, the floating line of the fraction not only increased the interval of the represented numbers and postponed the moment of normalization (with loss of accuracy) caused by overflow, but also took the next step in improving quality by increasing the likelihood of reductions.

At this stage, I was ready to conduct a more serious test (but still still experimental - the system is still far from ready for operation). I implemented a path tracer with a minimal set of functions (not necessarily physically accurate or even taking into account physics) and created a scene with several rectangular parallelepipeds and two light sources, the reference implementation of which on the GPU is here: https://www.shadertoy.com/view/Xd2fzR .

I again converted the scene to the C ++ framework, again removed some unnecessary ray normalizations, and ran the render. Here is what I got:

32-bit fractional rational numbers

32-bit floating point standard

Wow, this is really good! Although light leaks are clearly visible in the corners where the edges of the floor and ceiling are connected. Look at them in the approximation:

Perhaps they are caused by a problem in my implementation of the intersection of a ray and a rectangular box, which is only expressed in rational numbers; I would not be surprised. Or maybe I ran into the boundaries of what rational numbers are capable of. Be that as it may, I am quite pleased. In addition, I have other changes and experiments that I wanted to test for the remaining short time:

Some other experiments

Precise arithmetic in 64 bits

The idea of exact arithmetic cannot be realized either in naive 64-bit rational numbers or in 32-bit (1 | 5 | 26) rational numbers with a floating line fraction. And will 64-bit floating-point numbers of a fraction work?

I quickly implemented rational numbers 1 | 6 | 57 (although I had to learn new internal x64 mechanisms for shifting bits). These 57 bits of numerator / denominator allowed tracing a much larger distance interval. I actually managed to trace a scene with several triangles with all the exactarithmetic (not the above-mentioned scene with rectangular parallelepipeds and global lighting, but just a few triangles in front of the camera). And success awaited me! However, the coplanarity test, which I implemented to check the correctness, required several scalar and vector product operations, which caused the numbers to begin to normalize themselves. Therefore, although I knew that the render was accurate, I could not “prove” it experimentally. What an irony. In general, this means that 64 bits was enough for several triangles, but more complex scenes will still fall apart. However, this made me think about another question: is there any algorithm that can be used to test coplanarity, based not on absolute values, but on modular arithmetic? Maybe, in modular arithmetic, rational numbers should not “explode” in size? I did not have time to investigate all this, and I am not an expert in number theory.

Square roots

On the last (second) evening of research, I decided to briefly dwell on this topic and study new information. I wanted to implement the best possible square root function for rational numbers. My current naive decision ( bad ) took the integer square root of the numerator (with corresponding rounding), and then did the same with the denominator. Since the square root of a fraction is a fraction of the square roots of the numerator and denominator, in general, this approach returns decent results, not too different from the best answer. But he certainly does not return the best rational approximation of the square root of a rational number. He performs two instead of one approximation.

I tried the following: in the end, here we are looking for two integers xand y such that

Then we can rewrite this expression as finding a solution (non-trivial) of the following Diophantine equation (“Diophantine” means that we are only interested in integer solutions):

After searching Wikipedia, I found that this particular equation is the so-called “Modified Pell's equation”. There are algorithms that find the smallest x and y values to solve this equation. Unfortunately, my attention quickly shifted to other curious Diophantine mathematics, and I did not proceed with the implementation of any of these algorithms.

More effective reduction

In the last minutes of the evening, I thought about exploring the idea of using multiple members that combine in complex geometric operators, for example, in a vector product. Let's say the first component of a vector product was

on the assumption that sy = a / b, tz = c / d, ty = e / f, sz = g / h.

This meant that now I can try to find common factors, for example, between a and d, or e and h , and use them for preliminary reduction.

I had another idea: if at some stage the rendering speed becomes a problem, then you can completely disable the steps of searching for GCD and apply only normalization. A quick check showed that in this case, the image renders still remain acceptable and work well at a much higher speed. However, in this case, of course, we get fewer arithmetically accurate results.

As a compromise, you can refuse to implement a procedure or a GCD scheme, and instead use something mathematically simple, hard-coded in the code, and effective, determining divisibility by only 2, 3, and 5. Although this way we won’t find an exhaustive number of divisors, by in practice, this would lead to finding a large number of abbreviations. Think about it - divisibility by 2 occurs three times more often than divisibility by 7, and 20 times more often than divisibility by 41!

Conclusion

After this experiment, I began to believe that it is quite possible for a representation of numbers to be based on rational numbers, similar to what I call the “floating line fraction”. A representation compatible with integers and capable of performing many operations in exact arithmetic for many tasks (provided that the input data is presented in a rational form). The 64-bit version (1 | 6 | 57) has great potential, although the 32-bit version (1 | 5 | 26) already creates interesting renderings.

If this were not an experiment for two evenings, but something professional created in a studio or company, then the following steps could be taken:

* Get a histogram of the number of accurately and not exactly traced pixels (in other words, the normalization frequencies)

* Try to implement a hard-coded reduction by divisors 2, 3, and 5 and measure the percentage of exact pixels lost

* Show the pixel difference between floating point rendering and fraction floating-point rendering

* Find ingenious ways to use unused fractions of the floating-point fraction bit format, for example , to indicate Inf and NaN

* Implement detection of NaN, Inf, underflow, overflow.

All in all, it was a fascinating study. In the process, I discovered a few surprises, came up with one small invention and learned a lot about the Pell equation, square roots, GCD, x86_64 internal mechanisms, the Riemann zeta function, and some other aspects. I am very pleased with this!