Comparison of tick-to-trade delays of CEPappliance and Solarflare TCPDirect

In this article we present latency figures measured for two environments: the FPGA-based CEPappliance (the "hardware") and a server with a Solarflare network card in TCPDirect mode. We also explain how we obtained these measurements: the methodology and its technical implementation. A link to GitHub with the results and part of the source code is given at the end of the article.

We think the results may interest high-frequency traders, algorithmic traders, and anyone who cares about low-latency data processing.

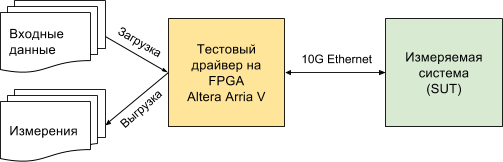

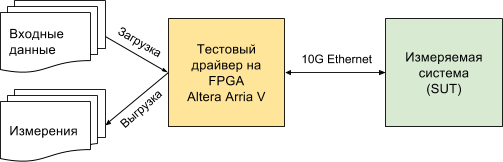

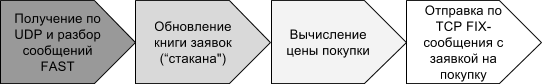

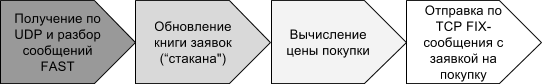

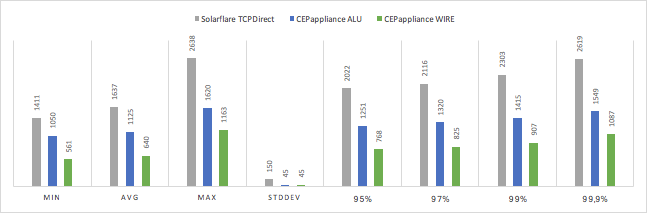

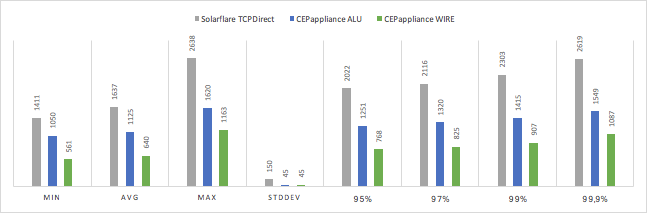

Measurement method: what we measured and how

The test bench is laid out as follows:

The SUT (System Under Test) is either a CEPappliance or a server with a Solarflare card (the characteristics of both systems are given below).

CEPappliance and Solarflare share an area of application: high-frequency and algorithmic trading. We therefore based the test on a scenario from this area and measured the so-called tick-to-trade delay: the time from the moment the test driver sends the last byte of a packet with market data (the tick) to the moment it receives the first byte of the packet with the trade order. The delay of the driver's MAC and PHY layers is the same for both test environments and has been subtracted from the values reported below. Because we start the clock when the driver sends the last byte, the result does not depend on the receive/transmit speed of the physical layer.

The delay can also be measured another way: from the moment the driver sends the first byte to the moment it receives the first byte from the system under test. Such a delay is longer and can be calculated from our measurements with the formula

$$\text{latency}_{1\text{-}1} = \text{latency}_{N\text{-}1} + 6.4 \cdot \operatorname{int}\!\left(\frac{N + 7}{8}\right),$$

where $\text{latency}_{N\text{-}1}$ is the delay we measured (from the moment the driver sends the last byte until it receives the first byte), $N$ is the Ethernet frame length in bytes, and $\operatorname{int}(x)$ converts a real number to an integer by discarding its fractional part. All delays are in nanoseconds; 6.4 ns is the time needed to transmit 8 bytes at 10 Gbit/s.
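The conversion can be sketched in a few lines of Python. This is only an illustration of the formula above; the function name and the 100-byte frame in the example are our own assumptions, not part of the test code.

```python
NS_PER_8_BYTES = 6.4  # coefficient from the formula: ns per 8 bytes on a 10G link


def latency_first_to_first(latency_last_to_first_ns: float, frame_len_bytes: int) -> float:
    """Convert a last-byte-to-first-byte delay into a first-byte-to-first-byte delay."""
    # int((N + 7) / 8): the frame length in 8-byte words, rounded up
    words = (frame_len_bytes + 7) // 8
    return latency_last_to_first_ns + NS_PER_8_BYTES * words


if __name__ == "__main__":
    # Hypothetical example: a 640 ns measured delay and a 100-byte frame
    print(latency_first_to_first(640, 100))
```

The longer the incoming frame, the larger the correction, which is why the measured values are stored together with the packet size.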

The diagram below shows the processing whose run time is the delay we are interested in:

What are the testing stages?

Preparation:

- Input data: a recorded dump of the order flow from the ASTS trading system of the Moscow Exchange, in the form of messages packed according to the FAST protocol and transmitted over UDP

- The data is loaded into the memory of the test driver with a utility

Testing:

- Test driver

  - sends the recorded UDP packets (replays the dump) with market data: information about buy or sell orders accepted by the exchange. This includes the action on the order (adding a new order, changing or deleting a previously added one), the identifier of the traded instrument, the buy/sell price, the number of lots, etc.;

  - one packet may carry information about one or several orders (after the exchange changed its data packing rules in March 2017, we saw packets with information on 128 orders!);

  - records the time T1 at which the packet was sent.

- System under test

  - receives a packet with order information;

  - unpacks it according to the packing rules specified by the exchange (the X-OLR-CURR message of the Orders feed of the Moscow Exchange currency market);

  - updates its internal order book using all the data from the received packet;

  - if the best (lowest) sell price in the book has changed, sends a buy order at that price via the FIX protocol.

- Test driver

  - receives the TCP packet with the order;

  - records the time of receipt, T2;

  - computes the delay (T2 - T1) and stores it.

- Testing is performed on a set of 90,000 market-data packets, and statistics (mean, variance, percentiles) are computed over the resulting delay values. Packets are sent strictly one at a time: after sending a packet, the driver waits for a response or for a timeout to expire (when the algorithm is not supposed to react to that packet), and only then sends the next packet.
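The decision made by the system under test can be illustrated with a toy model. This is a minimal sketch, not the code that was measured: the `AskBook` class, the `on_packet` function and the tuple format of the updates are hypothetical, and FAST decoding and FIX encoding are left out entirely.

```python
from typing import Dict, List, Optional, Tuple


class AskBook:
    """Toy sell-side order book: order id -> price (hypothetical structure)."""

    def __init__(self) -> None:
        self.asks: Dict[int, float] = {}

    def best_ask(self) -> Optional[float]:
        """Lowest sell price in the book, or None if the book is empty."""
        return min(self.asks.values()) if self.asks else None

    def apply(self, action: str, order_id: int, price: float = 0.0) -> None:
        """Apply one order update: add, change or delete."""
        if action in ("add", "change"):
            self.asks[order_id] = price
        elif action == "delete":
            self.asks.pop(order_id, None)


def on_packet(book: AskBook, updates: List[Tuple]) -> Optional[float]:
    """Apply every update from one packet, then compare the best ask.

    Returns the new best ask if it changed (the point at which the SUT
    would send a buy order at that price), otherwise None.
    """
    before = book.best_ask()
    for update in updates:
        book.apply(*update)
    after = book.best_ask()
    if after is not None and after != before:
        return after
    return None


if __name__ == "__main__":
    book = AskBook()
    print(on_packet(book, [("add", 1, 100.0), ("add", 2, 99.5)]))  # best ask appears
    print(on_packet(book, [("add", 3, 101.0)]))                    # best ask unchanged
```

Note that the comparison happens once per packet, after all of its updates have been applied, which matches the description above: a packet with 128 orders still produces at most one response.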

Processing test results:

- The measured delay values are downloaded from the memory of the test driver

- Each delay value is stored together with the size of the input packet for which it was measured
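Turning the stored (packet size, delay) pairs into summary statistics could look roughly like this. It is a sketch under our own assumptions: the nearest-rank percentile definition and the population standard deviation are choices made for the example, and the actual processing scripts may use different definitions.

```python
import math
import statistics
from typing import Dict, List, Tuple


def summarize(samples: List[Tuple[int, float]]) -> Dict[str, float]:
    """Summarize delays from (packet_size_bytes, delay_ns) pairs."""
    delays = sorted(delay for _size, delay in samples)

    def percentile(p: float) -> float:
        # Nearest-rank percentile: the smallest value such that at least
        # p percent of the samples are less than or equal to it.
        rank = max(1, math.ceil(round(p * len(delays) / 100, 9)))
        return delays[rank - 1]

    summary = {
        "min": delays[0],
        "avg": statistics.mean(delays),
        "max": delays[-1],
        "stddev": statistics.pstdev(delays),  # population standard deviation (assumption)
    }
    for p in (95, 97, 99, 99.9):
        summary[f"p{p}"] = percentile(p)
    return summary


if __name__ == "__main__":
    # Made-up sample values, only to show the call
    demo = [(64, 100), (64, 200), (128, 300), (128, 400)]
    for name, value in summarize(demo).items():
        print(name, value)
```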

Test bench for Solarflare

The SUT is a server with an Asus P9X79 WS motherboard, an Intel Core i7-3930K CPU @ 3.20GHz, and an SFN8522-R2 Flareon Ultra 8000 Series 10G network adapter that supports TCPDirect.

For this bench we wrote a C program that receives UDP packets through the Solarflare TCPDirect API, parses them, builds an order book, and generates and sends a buy order over the FIX protocol.

Message parsing, order book building and order message generation are hard-coded, with no support for variations and no checks, to keep the delay to a minimum. The code is available on GitHub.
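For readers unfamiliar with FIX, here is a rough idea of what "generating an order message" involves. The sketch is in Python rather than C and is not taken from the measured program. The FIX version string, the choice of tags and all field values are assumptions made for illustration, and mandatory header fields such as sender, target, sequence number and sending time are omitted. Only the framing rules (BodyLength in tag 9, CheckSum in tag 10) follow the standard.

```python
from typing import List, Tuple

SOH = "\x01"  # FIX field delimiter


def fix_message(msg_type: str, fields: List[Tuple[int, object]]) -> bytes:
    """Frame a FIX message: header, body, BodyLength and CheckSum."""
    # Body: everything after the BodyLength field up to the CheckSum field
    body = f"35={msg_type}{SOH}" + "".join(f"{tag}={value}{SOH}" for tag, value in fields)
    # "FIX.4.4" is an assumed protocol version for this example
    head = f"8=FIX.4.4{SOH}9={len(body)}{SOH}"
    # CheckSum: sum of all preceding bytes modulo 256, always three digits
    checksum = sum((head + body).encode("ascii")) % 256
    return (head + body + f"10={checksum:03d}{SOH}").encode("ascii")


if __name__ == "__main__":
    # Hypothetical limit buy order: 55=Symbol, 54=Side (1 = buy),
    # 38=OrderQty, 40=OrdType (2 = limit), 44=Price
    order = fix_message("D", [(55, "USD000UTSTOM"), (54, 1), (38, 1), (40, 2), (44, "57.25")])
    print(order.replace(b"\x01", b"|").decode("ascii"))
```

In a hard-coded implementation most of this message is a constant byte template, and only the price digits, the sequence number and the checksum need to be patched in on each send.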

Test bench for the CEPappliance hardware

Here the SUT is the CEPappliance, or the "piece of hardware" as we call it: a DE5-Net board with an Altera Stratix V FPGA, inserted into a server PCIe slot from which it draws power and nothing else. The board is managed and exchanges data over a 10G Ethernet connection.

As we have said before, our firmware for the FPGA contains many different components, including everything needed to implement the test scenario described here.

The program that implements the scenario on the CEPappliance consists of two files. One file contains the data processing logic, which we call a circuit. The other describes the adapters through which the circuit (or the hardware that runs it) interacts with the outside world. It is that simple!

For the CEPappliance we implemented two versions of the circuit and took measurements for each. In one version (CEPappliance ALU) the logic is written in the high-level built-in language (see lines 47-67). In the other (CEPappliance WIRE) it is written in Verilog (see lines 47-54).

Results

Measured tick-to-trade delays in nanoseconds:

| SUT | min | avg | max | stddev | 95% | 97% | 99% | 99.9% |
|---|---|---|---|---|---|---|---|---|
| Solarflare TCPDirect | 1411 | 1637 | 2638 | 150 | 2022 | 2116 | 2303 | 2619 |
| CEPappliance ALU | 1050 | 1125 | 1620 | 45 | 1251 | 1320 | 1415 | 1549 |
| CEPappliance WIRE | 561 | 640 | 1163 | 45 | 768 | 825 | 907 | 1087 |

Conclusions

No miracle happened: the FPGA-based hardware turned out to be faster than the server-based solution with Solarflare TCPDirect. The higher the percentile, the greater the difference in speed. The CEPappliance delays also vary much less (a standard deviation of 45 ns versus 150 ns).

The CEPappliance variant with the data processing logic implemented in Verilog is 60-70% faster than the same algorithm implemented in the built-in CEPappliance language.
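How large the relative difference looks depends on which column is compared and on how "faster" is defined. The following sketch derives both common definitions from the results table; the numbers are copied from the table, while the function names are our own.

```python
# Delays in nanoseconds, copied from the results table
TABLE_NS = {
    "Solarflare TCPDirect": {"min": 1411, "avg": 1637, "max": 2638,
                             "95%": 2022, "97%": 2116, "99%": 2303, "99.9%": 2619},
    "CEPappliance ALU": {"min": 1050, "avg": 1125, "max": 1620,
                         "95%": 1251, "97%": 1320, "99%": 1415, "99.9%": 1549},
    "CEPappliance WIRE": {"min": 561, "avg": 640, "max": 1163,
                          "95%": 768, "97%": 825, "99%": 907, "99.9%": 1087},
}


def latency_reduction_pct(slow_ns: float, fast_ns: float) -> float:
    """By how many percent the faster delay is shorter than the slower one."""
    return 100.0 * (slow_ns - fast_ns) / slow_ns


def speedup_pct(slow_ns: float, fast_ns: float) -> float:
    """By how many percent the faster system is quicker (ratio of delays minus one)."""
    return 100.0 * (slow_ns / fast_ns - 1.0)


if __name__ == "__main__":
    alu, wire = TABLE_NS["CEPappliance ALU"], TABLE_NS["CEPappliance WIRE"]
    for column in alu:
        print(f"{column:>6}: "
              f"delay shorter by {latency_reduction_pct(alu[column], wire[column]):.0f}%, "
              f"speed higher by {speedup_pct(alu[column], wire[column]):.0f}%")
```

The same two functions can be applied to the Solarflare row to compare the server-based solution with either CEPappliance variant.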

Source code

We have published almost all of the source code used in the testing on GitHub, in this repository.

Only the test driver code remains closed, since we hope to monetize it. After all, it lets you measure a system's reaction time very accurately, and without that information building a high-quality HFT solution is almost impossible.

What's next?

The logical next step is to find out whether the observed difference in delays between the solutions actually matters, for example when trading on the Moscow Exchange. That will be the subject of the next article. Looking ahead, though, we can say that even half a microsecond matters!