Trust me, I know what I'm doing: a modular robot that adapts itself to its task environment

It is impossible to imagine the sci-fi world of the future without robots: the androids of the Alien universe, the transforming robots of Transformers, the robot dog Axel, or the huge killer robot ED-209 from “RoboCop”, which many viewers thought looked like a chicken. But what do they have in common, apart from strength, speed, endurance and other, so to speak, physical traits? Intelligence. And what is intelligence? Put crudely and in a nutshell, it is the ability to think, analyze data and make decisions. Today we will get acquainted with the world's first modular robot that can assess the situation on site and rearrange itself to accomplish its task. How did the scientists teach the robot to think quickly and make the right decisions? How does the robot work, and how well? The research team describes all of this in its paper, which we are about to dive into. Let's go.

The basics

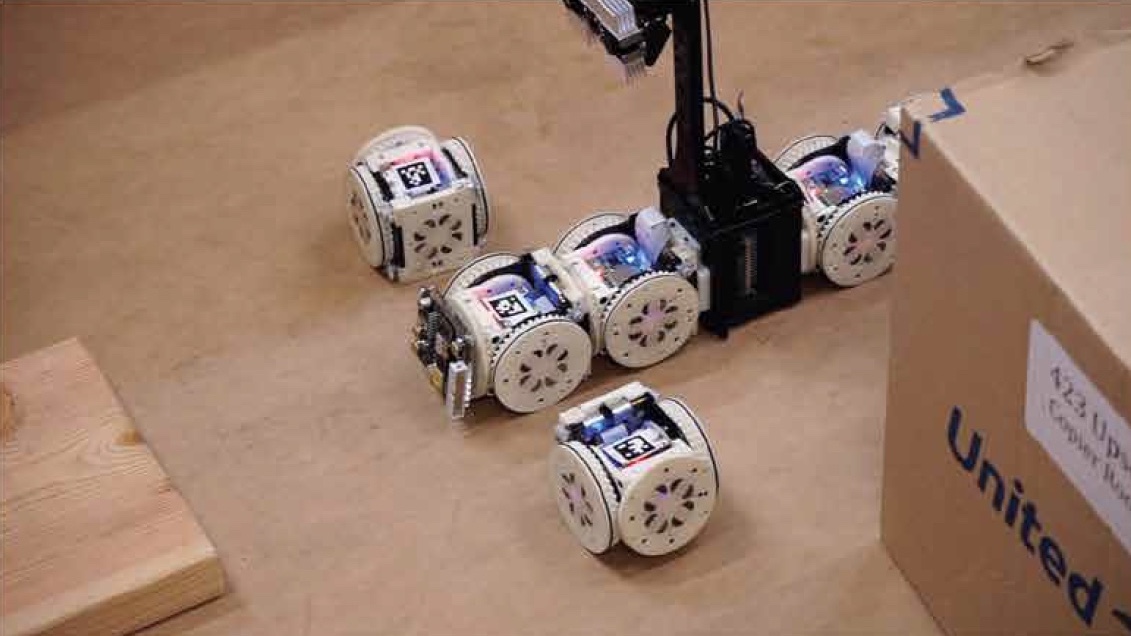

To start, it is worth saying a little about what the robot looks like. It is rather unusual, and its design is revealed by its name: a modular self-reconfigurable robot (MSRR). We all understand the word "robot", so let's look at what the other two words mean. Modular: the robot consists of modules that are essentially independent robots themselves. By connecting these modules you can build a structure of (almost) any complexity, depending on the task at hand. That is, the individual mini-robots (modules) can handle some tasks by themselves, and for more complex tasks they combine, just as the Power Rangers unite into the Megazord (children of the 90s will understand what kind of nonsense I have just written :) ).

What the modular robot looks like.

The researchers note that modular robots capable of solving certain problems have been studied before. However, earlier robots of this kind could solve either simple tasks or complex tasks whose solutions had been pre-programmed by humans. In effect, they did not make decisions on their own by assessing the situation and the complexity of the task.

The scientists ran a series of tests, each different from the previous one. In each, the robot had to perform a specific task (more on which below) while reconfiguring itself for a new environment. Of course, nobody asked it to perform a double toe loop, but the results still impressed the scientists. Let's take a look at them.

Testing the "Megazord"

The tests took place in three stages, each with its own task. They were carried out in a room where the robot's “working environment” was built out of boxes, and the researchers rearranged it at every stage. Imagine a maze that changes every time you enter it. The robot was not pre-programmed for any of these environments; each one was completely new to it. The only thing the robot knew was its own abilities. First it assesses the environment, then it picks from its library of capabilities those that contribute most effectively to the task.

As I said, there were three testing stages, each with its own task and environment:

- Explore the environment, find the pink and green objects and the blue marker, and move the objects to the drop-off point;

- Explore the environment, find the mailbox and put the breadboard into it;

- Explore the environment, find the parcel and put a stamp on it.

The tasks seem pretty simple, but only to us: we walk into the room, look around, find everything we need, and we're done. But it is not fair to compare one of the most complex computers in the world (our brain) with a small robot.

In the first stage, the robot had to collect two pieces of “metal trash”, marked pink and green, and deliver them to the drop-off point for “recycling”. The drop-off point was marked by a blue square on the wall.

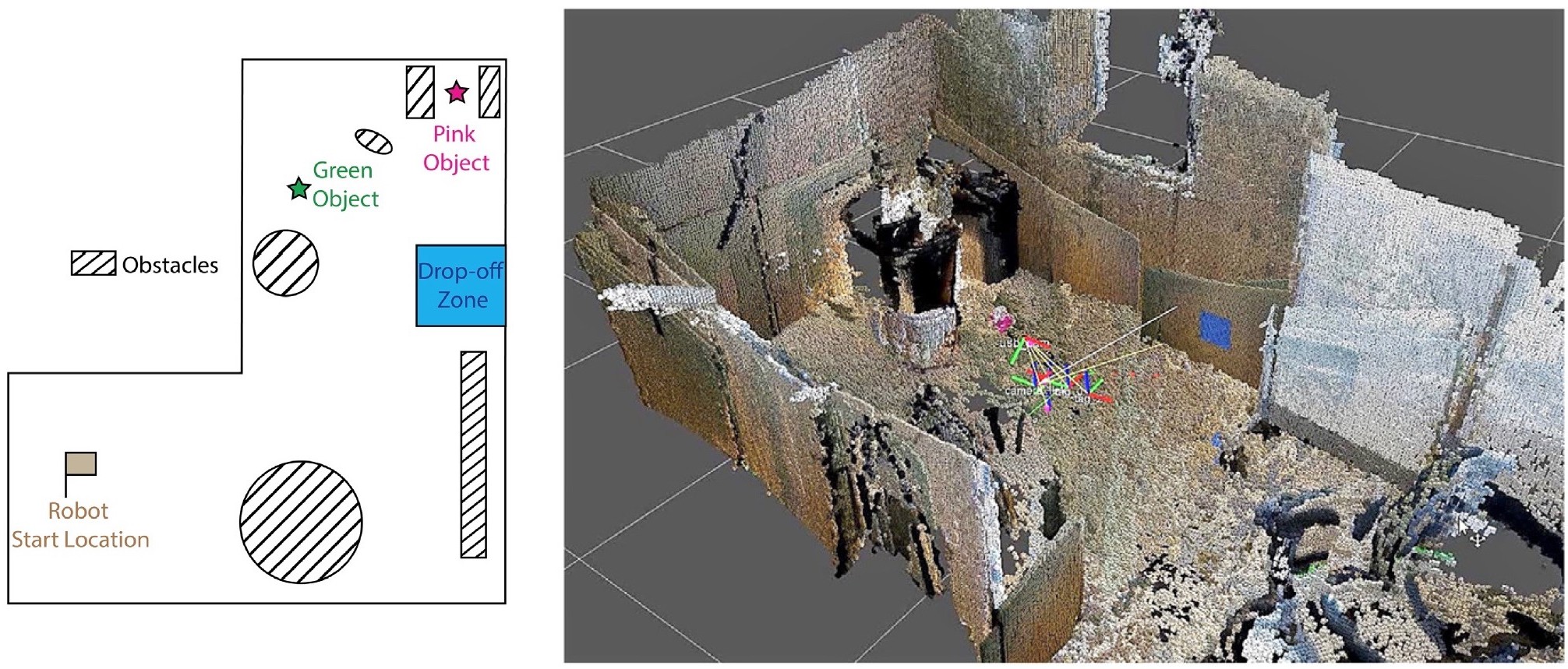

With the task set, the environment ready and the robot switched on, the first thing it does is scan the space and build a three-dimensional map that it will navigate by from then on.

Diagram of the first-stage test area (left) and the modular robot's three-dimensional map of its working environment (right).

Let's look at the robot's actions using the first stage of testing as an example; its details are shown in the diagram and image above.

The green label in the diagram marks an ordinary soda can that is freely accessible. The pink label marks a coil of wire lying in a narrow gap between two trash cans. Various obstacles were also scattered around the area.

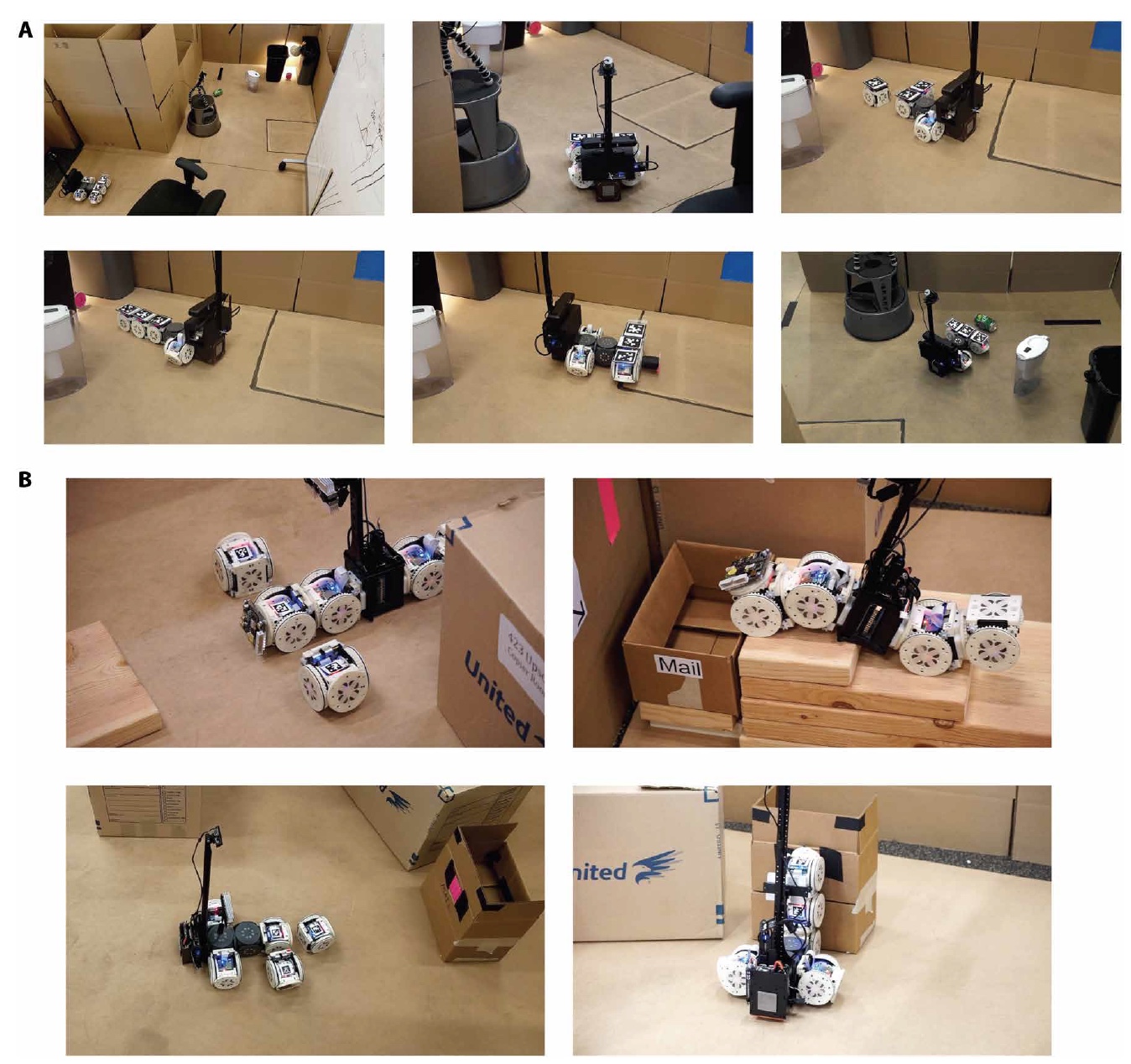

First, the robot chose the shape best suited for getting around: the “car”. On finding the pink object, the robot analyzed its position and concluded that this shape would not let it reach the object. So it rebuilt itself into a “trunk” shape and pulled the object out. Returning to the car shape, the robot picked up the object and carried it to the drop-off point, whose position it already knew from the map it had built while scanning.

A: the first stage (left to right: the working environment, scanning the environment, reconfiguring the robot, grabbing the pink object, carrying the object, grabbing the green object); B: the second stage (top two pictures) and the third stage (bottom two pictures).

In the second stage the environment was different, so the robot's previous tactics would no longer work. In the pictures above, the robot has switched to the “snake” shape to climb the steps and leave the object in the mailbox. In other words, having assessed the situation, the robot realized that some of its modules would not only be superfluous but could even cause it to fail, so it detached them.

In the third stage, the robot had trouble finding its target (the label where it was supposed to place the postage stamp), but after a couple of minutes it found it. The label was 25 cm above the floor, so the robot's initial “car” configuration was not suitable. The robot rebuilt itself into a vertical configuration and stuck the stamp on.

Recipe for a robot puzzle

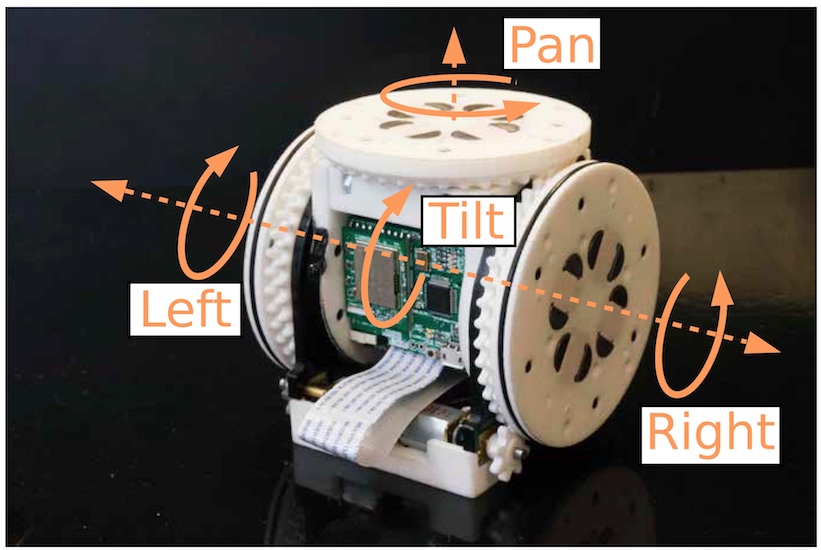

As we have already seen, our Megazord consists of several small robot modules, each able to move on its own, which gives the larger robot mobility and, of course, the ability to reconfigure.

The module. The arrows show how the module can move (horizontal and vertical rotation and tilt).

Each module (a cube with 80 mm faces) is fitted with electro-permanent magnets that let modules connect to each other on any side. The same magnets can also hold ferromagnetic objects, for example to carry them to the drop-off point or to clear the robot's path. Each module also has its own battery (roughly 1 hour of runtime), a microcontroller and a Wi-Fi chip. All modules were controlled wirelessly from a central computer, with an ordinary household router providing the Wi-Fi network.

The main part of the robot (camera, stand, RGB-D camera and base).

The robot's base is a small box (90 × 70 × 70 mm) made of thin metal plates, so the modules can attach to it magnetically. Computation was handled by a 1.92 GHz Intel Atom processor with 4 GB of RAM and 64 GB of storage. The base also houses a USB Wi-Fi adapter.

The most important step in carrying out a task is understanding it. This rule applies to humans and small smart robots alike. To work out what to do and how, the robot scans its environment with the RGB-D camera.
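The article does not describe the mapping code itself, so the following is only a minimal sketch, assuming the RGB-D scan has already been turned into a list of 3D points in metres with z pointing up. It shows one common way to collapse such a point cloud into a 2D floor grid: every point inside an obstacle height band marks its grid cell as blocked. The cell size, the height band and the function name `occupancy_grid` are hypothetical choices for illustration.

```python
import math

# Hypothetical parameters: 5 cm cells; points between 2 cm and 40 cm above the
# floor count as obstacles for a ground robot (illustrative values only).
CELL_SIZE_M = 0.05
MIN_OBSTACLE_Z_M = 0.02
MAX_OBSTACLE_Z_M = 0.40


def occupancy_grid(points, width_m, depth_m):
    """Project 3D points (x, y, z in metres, z up) onto a 2D floor grid.

    Returns a list of rows; True marks a cell blocked by an obstacle.
    """
    cols = math.ceil(width_m / CELL_SIZE_M)
    rows = math.ceil(depth_m / CELL_SIZE_M)
    grid = [[False] * cols for _ in range(rows)]
    for x, y, z in points:
        if not (MIN_OBSTACLE_Z_M <= z <= MAX_OBSTACLE_Z_M):
            continue  # floor points and points far overhead do not block the robot
        col, row = int(x // CELL_SIZE_M), int(y // CELL_SIZE_M)
        if 0 <= row < rows and 0 <= col < cols:  # ignore points outside the map
            grid[row][col] = True
    return grid
```

In this simplified model, points on the floor itself or well above the robot are ignored, so a table top at 1 m would not block a ground-level path.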

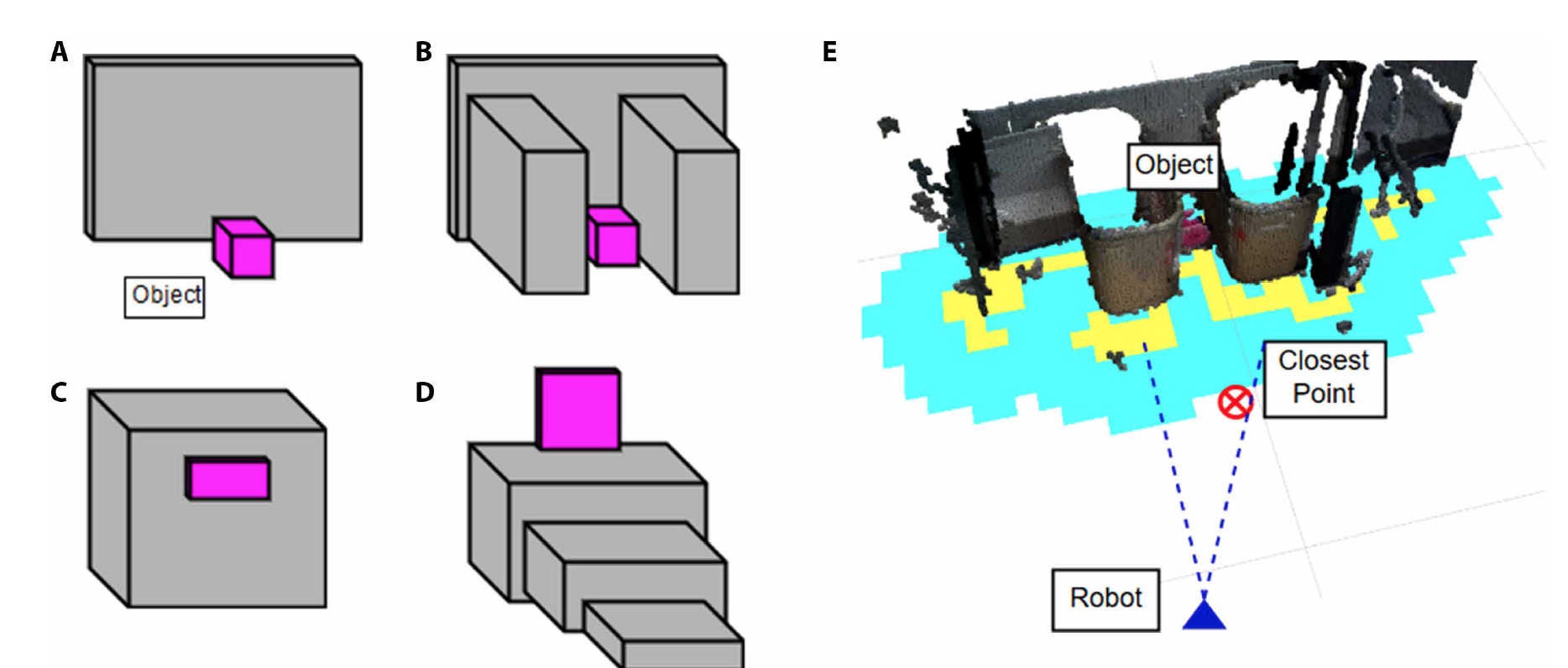

Positions of the objects of interest (A, B: first stage; C: second stage; D: third stage), and an example of how the robot “sees” during a test with an object inside a narrow opening (E).

When the perception system recognizes the target object, an environment characterization function is run on the three-dimensional map. It builds a grid of the space on which areas inaccessible to the robot are marked in yellow. Next, the system finds the accessible point nearest to the object (at an angle of 20° relative to the robot). If the distance from this point to the object exceeds a threshold and the object is on the floor, the system concludes that the object is inside an opening. If the distance exceeds the threshold but the object is above the floor, the system classifies the situation as steps. If, however, the distance is below the threshold, the system chooses the “free” configuration (that is, the original one) or the “high” configuration (for reaching up to a certain height).
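The decision rules above can be sketched in a few lines of Python. This is a hypothetical reconstruction, not the authors' code: the threshold values, the `reachable_cells` input and the names `characterize` and `choose_configuration` are assumptions, the 20° constraint on the access point is left out, and the environment-to-configuration mapping simply follows the three test stages described earlier (“car” for free space, “trunk” for an opening, “snake” for steps, a vertical shape for high targets).

```python
import math

# Hypothetical thresholds, chosen only for illustration.
REACH_THRESHOLD_M = 0.10  # max gap between reachable cell and target
FLOOR_HEIGHT_M = 0.05     # targets lower than this count as "on the floor"

# Environment type -> configuration, following the test stages in the article.
CONFIGURATION_FOR = {
    "free": "car",
    "tunnel": "trunk",     # object inside a narrow opening
    "stairs": "snake",     # object up some steps
    "high": "vertical",    # object raised above the floor but close by
}


def characterize(target_xy, target_z, reachable_cells):
    """Classify the target's surroundings with the rules described in the text.

    reachable_cells: (x, y) centres of grid cells the robot can drive to.
    """
    nearest = min(reachable_cells, key=lambda cell: math.dist(cell, target_xy))
    gap = math.dist(nearest, target_xy)
    on_floor = target_z < FLOOR_HEIGHT_M
    if gap > REACH_THRESHOLD_M:
        return "tunnel" if on_floor else "stairs"
    return "free" if on_floor else "high"


def choose_configuration(target_xy, target_z, reachable_cells):
    """Map the environment type to a configuration from the library."""
    return CONFIGURATION_FOR[characterize(target_xy, target_z, reachable_cells)]
```

Keeping the classification separate from the lookup table means a new configuration can be added to the library without touching the perception rules.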

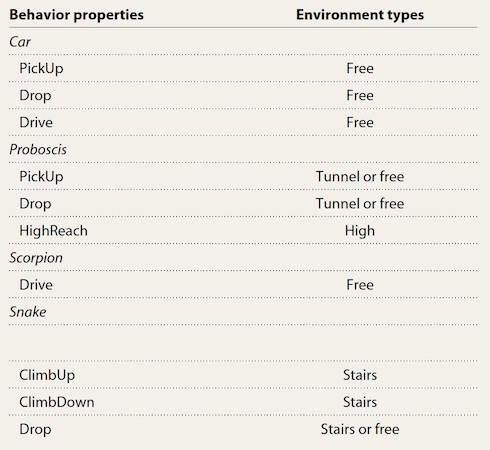

The robot's configuration table and what each configuration is used for.

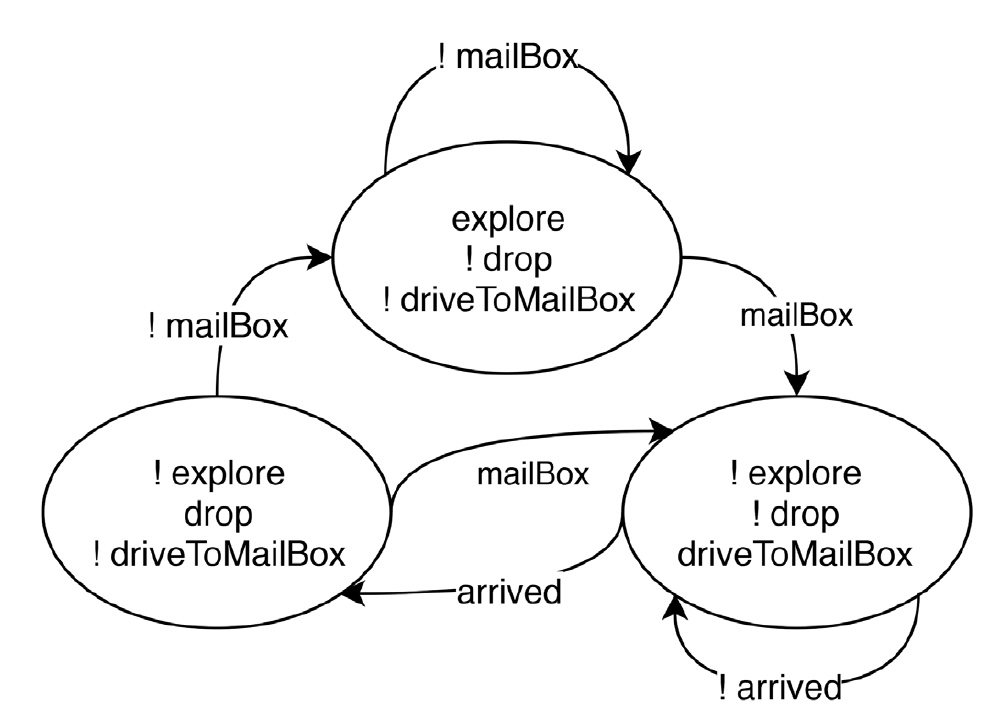

The robot's task specification is quite simple. Take the mailbox task as an example:

- perform “explore” if and only if the robot does not see the mailbox;

- perform “move to the box” if and only if the robot sees the box and has not yet reached it;

- perform “drop” (put the object into the box) if and only if the robot sees the box and has reached it.
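These three “if and only if” rules can be read as a lookup from sensor state to action. Below is a minimal sketch, assuming two boolean sensor readings per step (`sees_box`, `at_box`) and hypothetical action names. The check that exactly one rule fires mirrors the fact that the three conditions cover every possible sensor state without overlapping.

```python
# Hypothetical action names; each rule is "do ACTION iff CONDITION".
RULES = {
    "explore": lambda sees_box, at_box: not sees_box,
    "move_to_box": lambda sees_box, at_box: sees_box and not at_box,
    "drop": lambda sees_box, at_box: sees_box and at_box,
}


def mailbox_action(sees_box, at_box):
    """Return the single action whose condition holds for this sensor state."""
    enabled = [name for name, cond in RULES.items() if cond(sees_box, at_box)]
    if len(enabled) != 1:
        raise ValueError(f"rules are not mutually exclusive: {enabled}")
    return enabled[0]


def run(readings):
    """Apply the rules to a sequence of (sees_box, at_box) sensor readings."""
    actions = []
    for sees_box, at_box in readings:
        action = mailbox_action(sees_box, at_box)
        actions.append(action)
        if action == "drop":
            break  # the object is in the box, so the task is complete
    return actions
```

For example, `run([(False, False), (True, False), (True, True)])` yields the sequence explore, move_to_box, drop.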

Schematically, these rules are written with the “!” sign denoting negation, that is, the proposition it precedes must be false.
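One possible way to write the same three rules in linear temporal logic is shown below. The proposition names are hypothetical: $s_{\mathrm{box}}$ means "the robot sees the box", $r_{\mathrm{box}}$ means "the robot has reached the box", the $a$ terms are the actions, $\square$ means "always", and $\lnot$ is the negation written as "!" in the specification. This is a simplified reading; an actual LTLMoP specification may relate sensor readings and actions across consecutive time steps, which is omitted here.

$$
\begin{aligned}
&\square\,\big(a_{\mathrm{explore}} \leftrightarrow \lnot s_{\mathrm{box}}\big)\\
&\square\,\big(a_{\mathrm{move}} \leftrightarrow s_{\mathrm{box}} \land \lnot r_{\mathrm{box}}\big)\\
&\square\,\big(a_{\mathrm{drop}} \leftrightarrow s_{\mathrm{box}} \land r_{\mathrm{box}}\big)
\end{aligned}
$$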

Reconfiguration begins when the system decides it is needed. If it is, the downward-facing camera looks for a 0.75 m × 0.5 m patch of floor where reconfiguration can be carried out successfully, without interference from any objects. The controller determines the initial and final configurations and then sends commands to the modules, each of which carries an AprilTag marker (it looks like a QR code). The modules receive commands to disconnect, move to the required positions and reconnect in the new configuration.
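The search for a clear 0.75 m × 0.5 m area can be illustrated with a standard 2D prefix-sum (summed-area table) scan over an occupancy grid. This is a sketch under assumptions, and the paper's actual method may differ: the downward camera's view is taken to be already converted into a grid of booleans (`True` = obstacle) with a hypothetical 5 cm cell size, the function name `find_free_patch` is invented for illustration, and both orientations of the rectangle are tried.

```python
CELL_SIZE_M = 0.05  # hypothetical grid resolution


def find_free_patch(grid, length_m=0.75, width_m=0.5):
    """Return (row, col, rows, cols) of the first obstacle-free patch, or None.

    grid[r][c] is True where the downward camera saw an obstacle.
    """
    n_rows, n_cols = len(grid), len(grid[0])
    # prefix[r][c] = number of occupied cells in the block grid[0:r][0:c]
    prefix = [[0] * (n_cols + 1) for _ in range(n_rows + 1)]
    for r in range(n_rows):
        for c in range(n_cols):
            prefix[r + 1][c + 1] = (int(grid[r][c]) + prefix[r][c + 1]
                                    + prefix[r + 1][c] - prefix[r][c])
    a = round(length_m / CELL_SIZE_M)
    b = round(width_m / CELL_SIZE_M)
    for h, w in ((a, b), (b, a)):  # try both orientations of the rectangle
        for r in range(n_rows - h + 1):
            for c in range(n_cols - w + 1):
                occupied = (prefix[r + h][c + w] - prefix[r][c + w]
                            - prefix[r + h][c] + prefix[r][c])
                if occupied == 0:
                    return r, c, h, w
    return None
```

The prefix sums let each candidate rectangle be checked in constant time, so each orientation costs time linear in the number of grid cells.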

This video shows the whole testing process, from scanning the environment to completing the task.

Still, the most interesting thing about this transforming robot is not its ability to rearrange its modules but its ability to decide on its own how to rearrange them to suit the circumstances.

The system is built on a framework that lets any user give the robot a task in everyday vocabulary and generates a central controller from it, which in turn directs the modules according to the task environment. At its core is LTLMoP (Linear Temporal Logic MissiOn Planning), which synthesizes controllers from high-level instructions provided by the user.
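To give a feel for how plain-language instructions can become controller rules, here is a toy parser for sentences of the form "Do ACTION if and only if you are sensing CONDITION". It is explicitly not LTLMoP's grammar or API: the sentence pattern, the `not`/`and` keywords and the functions `parse_rule`, `holds` and `step` are all hypothetical, and real LTLMoP additionally synthesizes a correct-by-construction controller from the resulting temporal-logic formulas, which this sketch does not attempt.

```python
import re

# Toy sentence pattern (hypothetical, not LTLMoP's real structured English).
SENTENCE = re.compile(r"do (\w+) if and only if you are sensing (.+)", re.IGNORECASE)


def parse_rule(sentence):
    """Turn one sentence into (action, [(sensor_name, required_value), ...])."""
    match = SENTENCE.fullmatch(sentence.strip())
    if match is None:
        raise ValueError(f"cannot parse: {sentence!r}")
    action, condition = match.groups()
    literals = []
    for term in condition.split(" and "):
        term = term.strip()
        negated = term.startswith("not ")
        literals.append((term[4:] if negated else term, not negated))
    return action, literals


def holds(literals, sensors):
    """True if every sensor has the required value (missing sensors read False)."""
    return all(sensors.get(name, False) == wanted for name, wanted in literals)


def step(rules, sensors):
    """List the actions whose conditions hold for the current sensor readings."""
    return [action for action, literals in rules if holds(literals, sensors)]
```

With the three mailbox sentences parsed into rules, `step` returns exactly one action for each combination of `box` and `at_box` readings.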

For a closer look at the study, I recommend the researchers' paper and its supplementary materials.

Epilogue

The system is very interesting, though not without its flaws. For example, the user tells the robot to put an object into the mailbox but not to drop the object until the box has been found. In other words, the robot cannot simply abandon the task with an “F*ck it, I quit!”. And if the robot fails to find the box, the system asks the user for clarifying input. So the robot isn't all that independent after all? Of course, it is no T-1000 yet, but the first steps in that direction have been taken. After all, the robot itself decides which of its available configurations is best suited to the task. You can't really call this a thought process; it is all quite simple and linear.

Still, even though this little transformer needs human help, it can make decisions on its own. Let's hope we aren't witnessing the birth of a future Ultron. :)

Friday off-topic (have a good weekend, everyone!):

Video for those who can't decide which they like more: cool samurai or cool robots.

And a second off-topic item (sorry, couldn't resist) for music lovers.

Thank you for staying with us. Do you like our articles? Want to see more interesting content? Support us by placing an order or recommending us to your friends. Habr users get a 30% discount on a unique analogue of entry-level servers that we invented for you: The whole truth about VPS (KVM) E5-2650 v4 (6 Cores) 10GB DDR4 240GB SSD 1Gbps from $20, or how to share a server? (Options are available with RAID1 and RAID10, up to 24 cores and up to 40GB DDR4.)

VPS (KVM) E5-2650 v4 (6 Cores) 10GB DDR4 240GB SSD 1Gbps free until December when you pay for six months; you can order here.

Dell R730xd 2 times cheaper? Only we have 2 x Intel Dodeca-Core Xeon E5-2650v4 128GB DDR4 6x480GB SSD 1Gbps 100 TB from $249 in the Netherlands and the USA! Read about How to build corporate-class infrastructure using Dell R730xd E5-2650 v4 servers worth 9,000 euros for pennies?