Everyday Service Plan - Part 3: Automatically Create Backups

- Tutorial

There are a large number of posts in which they insistently call for one simple truth - you need to do backups on an ongoing basis. But people will always be divided into two categories: who does not do backups yet, and who already does them. The first category, which neglects such advice, can often be found on specialized forums with approximately the same questions:

There are a large number of posts in which they insistently call for one simple truth - you need to do backups on an ongoing basis. But people will always be divided into two categories: who does not do backups yet, and who already does them. The first category, which neglects such advice, can often be found on specialized forums with approximately the same questions: - my disks flew / someone deleted my database ... how can I recover my data?

- Do you have a fresh backup?

- no

In order not to become the hero of such a situation, you need to spend a minimum of effort. First, allocate a disk array on which to store backups. Since storing backups along with database files is clearly not our choice. The second ... is to create a database backup maintenance plan.

What we will do next, and after that we will discuss some of the subtleties associated with backups.

Create a table in which error messages will be recorded when creating backups:

USE [master]

GO

IF OBJECT_ID('dbo.BackupError', 'U') IS NOT NULL

DROP TABLE dbo.BackupError

GO

CREATE TABLE dbo.BackupError (

db SYSNAME PRIMARY KEY,

dt DATETIME NOT NULL DEFAULT GETDATE(),

msg NVARCHAR(2048)

)

GO

The script for backing up databases for every day I use this:

USE [master]

GO

SET NOCOUNT ON

TRUNCATE TABLE dbo.BackupError

DECLARE

@db SYSNAME

, @sql NVARCHAR(MAX)

, @can_compress BIT

, @path NVARCHAR(4000)

, @name SYSNAME

, @include_time BIT

--SET @path = '\\pub\backup' -- можно задать свой путь для бекапа

IF @path IS NULL -- либо писать в папку для бекапов указанную по умолчанию

EXEC [master].dbo.xp_instance_regread

N'HKEY_LOCAL_MACHINE',

N'Software\Microsoft\MSSQLServer\MSSQLServer',

N'BackupDirectory', @path OUTPUT, 'no_output'

SET @can_compress = ISNULL(CAST(( -- вопросы сжатия обсуждаются ниже

SELECT value

FROM sys.configurations

WHERE name = 'backup compression default') AS BIT), 0)

DECLARE cur CURSOR FAST_FORWARD READ_ONLY LOCAL FOR

SELECT d.name

FROM sys.databases d

WHERE d.[state] = 0

AND d.name NOT IN ('tempdb') -- базы для которых не надо делать бекапов

OPEN cur

FETCH NEXT FROM cur INTO @db

WHILE @@FETCH_STATUS = 0 BEGIN

IF DB_ID(@db) IS NULL BEGIN

INSERT INTO dbo.BackupError (db, msg) VALUES (@db, 'db is missing')

END

ELSE IF DATABASEPROPERTYEX(@db, 'Status') != 'ONLINE' BEGIN

INSERT INTO dbo.BackupError (db, msg) VALUES (@db, 'db state != ONLINE')

END

ELSE BEGIN

BEGIN TRY

SET @name = @path + '\T' + CONVERT(CHAR(8), GETDATE(), 112) + '_' + @db + '.bak'

SET @sql = '

BACKUP DATABASE ' + QUOTENAME(@db) + '

TO DISK = ''' + @name + ''' WITH NOFORMAT, INIT' +

CASE WHEN @can_compress = 1 THEN ', COMPRESSION' ELSE '' END

--PRINT @sql

EXEC sys.sp_executesql @sql

END TRY

BEGIN CATCH

INSERT INTO dbo.BackupError (db, msg) VALUES (@db, ERROR_MESSAGE())

END CATCH

END

FETCH NEXT FROM cur INTO @db

END

CLOSE cur

DEALLOCATE cur

If the Database Mail component is configured on the server , then you can add a notification by mail about the problems encountered in the script:

IF EXISTS(SELECT 1 FROM dbo.BackupError) BEGIN

DECLARE @report NVARCHAR(MAX)

SET @report =

'' +

CAST((

SELECT td = db, '', td = dt, '', td = msg

FROM dbo.BackupError

FOR XML PATH('tr'), TYPE

) AS NVARCHAR(MAX)) +

'database date message

'

EXEC msdb.dbo.sp_send_dbmail

@recipients = 'your_account@mail.ru',

@subject = 'Backup Problems',

@body = @report,

@body_format = 'HTML'

END

Actually, at this stage, a working script for automatically creating backups is ready. It remains to create it job , which would run this script on a schedule.

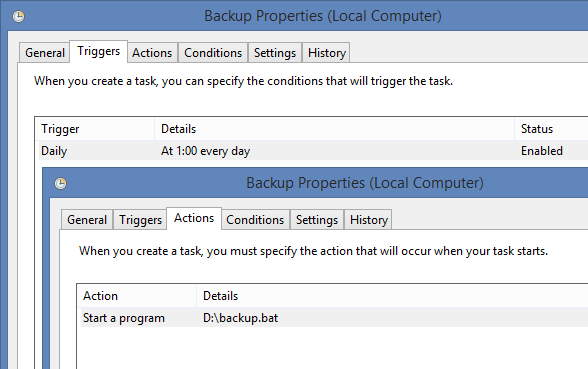

Owners of Express editions need to be mentioned separately, since there is no way to use SQL Server Agent in SQL Server Express edition . Whatever sadness comes after these words, in fact, everything is solved. The easiest way is to create a bat file with roughly similar contents:

sqlcmd -S \ -i D:\backup.sql

Next, open the Task Scheduler and create a new task in it.

The second alternative is to use third-party developments that allow you to run tasks on a schedule. Among them, you can highlight SQL Scheduler - a convenient and free tool. My installer was lost, so I would be grateful if someone would share a working link for readers.

Now let's talk about useful little things related to backups.

Compression ... The

ability to compress backups was first introduced in SQL Server 2008 . I remember with nostalgia the time when working on the 2005 version I had 7Zip th compress backups. Now everything has become much easier.

But you need to remember that backup compression will be used only if you run the BACKUP command with the COMPRESSION parameter or enable default compression with the following command:

USE [master]

GO

EXEC sp_configure 'backup compression default', 1

RECONFIGURE WITH OVERRIDE

GO

By the way, it will be said that compressed backups have some advantages: you need less space to store them, restoring a database from compressed backups is usually done a little faster, they are also created faster because they require fewer I / O operations. By the way, there are also disadvantages - when working with compressed backups, the load on the processor increases.

With this query, you can return the size of the last FULL backup with and without compression:

SELECT

database_name

, backup_size_mb = backup_size / 1048576.0

, compressed_backup_size_mb = compressed_backup_size / 1048576.0

, compress_ratio_percent = 100 - compressed_backup_size * 100. / backup_size

FROM (

SELECT

database_name

, backup_size

, compressed_backup_size = NULLIF(compressed_backup_size, backup_size)

, RowNumber = ROW_NUMBER() OVER (PARTITION BY database_name ORDER BY backup_finish_date DESC)

FROM msdb.dbo.backupset

WHERE [type] = 'D'

) t

WHERE t.RowNumber = 1

Typically, compression reaches 40-90%, if you do not take binary data into account:

database_name backup_size_mb compressed_backup_size_mb compress_ratio_percent

------------------------------- ---------------- -------------------------- ------------------------

AdventureWorks2012 190.077148437 44.652716636 76.50810894222767

DB_Dev 1530.483398437 295.859273910 80.66890015190163

BinDocuments 334.264648437 309.219978332 7.49246748707956

locateme 37.268554687 17.247792243 53.72025454546944

master 3.643554687 0.654214859 82.04459888434736

model 2.707031250 0.450525283 83.35721895292208

msdb 17.147460937 2.956551551 82.75807967958028

OnlineFormat 125.078125000 23.639108657 81.10052524545207

Refactoring 286.076171875 35.803841590 87.48450758543927

ReportServer$SQL_2012 4.045898437 0.696615219 82.78218719828627

ReportServer$SQL_2012TempDB 2.516601562 0.428588867 82.96953822273962

If you modify the previous request, then you can monitor for which databases backups were made:

SELECT

d.name

, rec_model = d.recovery_model_desc

, f.full_time

, f.full_last_date

, f.full_size

, f.log_time

, f.log_last_date

, f.log_size

FROM sys.databases d

LEFT JOIN (

SELECT

database_name

, full_time = MAX(CASE WHEN [type] = 'D' THEN CONVERT(CHAR(10), backup_finish_date - backup_start_date, 108) END)

, full_last_date = MAX(CASE WHEN [type] = 'D' THEN backup_finish_date END)

, full_size = MAX(CASE WHEN [type] = 'D' THEN backup_size END)

, log_time = MAX(CASE WHEN [type] = 'L' THEN CONVERT(CHAR(10), backup_finish_date - backup_start_date, 108) END)

, log_last_date = MAX(CASE WHEN [type] = 'L' THEN backup_finish_date END)

, log_size = MAX(CASE WHEN [type] = 'L' THEN backup_size END)

FROM (

SELECT

s.database_name

, s.[type]

, s.backup_start_date

, s.backup_finish_date

, backup_size =

CASE WHEN s.backup_size = s.compressed_backup_size

THEN s.backup_size

ELSE s.compressed_backup_size

END / 1048576.0

, RowNum = ROW_NUMBER() OVER (PARTITION BY s.database_name, s.[type] ORDER BY s.backup_finish_date DESC)

FROM msdb.dbo.backupset s

WHERE s.[type] IN ('D', 'L')

) f

WHERE f.RowNum = 1

GROUP BY f.database_name

) f ON f.database_name = d.name

If you have SQL Server 2005, then this line: need to be changed to: The results of this query can help prevent many problems:

backup_size = CASE WHEN s.backup_size = s.compressed_backup_size THEN ...

backup_size = s.backup_size / 1048576.0

name rec_model full_time full_last_date full_size log_time log_size

------------------- --------- --------- ------------------- ----------------- --------- ------------

master SIMPLE 00:00:01 2015-11-06 15:08:12 0.654214859 NULL NULL

tempdb SIMPLE NULL NULL NULL NULL NULL

model FULL 00:00:00 2015-11-06 15:08:12 0.450525283 NULL NULL

msdb SIMPLE 00:00:00 2015-11-06 15:08:12 2.956551551 NULL NULL

DB_Dev FULL 00:00:13 2015-11-06 15:08:26 295.859273910 00:00:04 72.522538642

BinDocuments FULL 00:00:05 2015-11-06 15:08:31 309.219978332 00:00:01 2.012338638

Refactoring SIMPLE 00:00:02 2015-11-06 15:08:33 35.803841590 NULL NULL

locateme SIMPLE 00:00:01 2015-11-06 15:08:34 17.247792243 NULL NULL

AdventureWorks2012 FULL 00:00:02 2015-11-06 15:08:36 44.652716636 NULL NULL

OnlineFormat SIMPLE 00:00:01 2015-11-06 15:08:39 23.639108657 NULL NULL

You can immediately see that for all databases there are FULL backups for the current date.

Next, you can look at the backup creation time. Why is it asking? Suppose that earlier backup of the DB_Dev database took 5 seconds, and then began to take 1 hour. There can be many reasons for this: the disks cannot cope with the load, the data in the database has grown to indecent volumes, the disk has flown in RAID and the write speed has decreased.

If the database has the recovery model FULL or BULK_LOGGED , then it is advisable to backup the log from time to time so as not to doom the server to the torment of constant growth of the LDF file. The degree of filling the data file and log for databases can be viewed with this query:

IF OBJECT_ID('tempdb.dbo.#space') IS NOT NULL

DROP TABLE #space

CREATE TABLE #space (

database_id INT PRIMARY KEY,

data_used_size DECIMAL(18,6),

log_used_size DECIMAL(18,6)

)

DECLARE @SQL NVARCHAR(MAX)

SELECT @SQL = STUFF((

SELECT '

USE [' + d.name + ']

INSERT INTO #space (database_id, data_used_size, log_used_size)

SELECT

DB_ID()

, SUM(CASE WHEN [type] = 0 THEN space_used END)

, SUM(CASE WHEN [type] = 1 THEN space_used END)

FROM (

SELECT s.[type], space_used = SUM(FILEPROPERTY(s.name, ''SpaceUsed'') * 8. / 1024)

FROM sys.database_files s

GROUP BY s.[type]

) t;'

FROM sys.databases d

WHERE d.[state] = 0

FOR XML PATH(''), TYPE).value('.', 'NVARCHAR(MAX)'), 1, 2, '')

EXEC sys.sp_executesql @SQL

SELECT

database_name = DB_NAME(t.database_id)

, t.data_size

, s.data_used_size

, t.log_size

, s.log_used_size

, t.total_size

FROM (

SELECT

database_id

, log_size = SUM(CASE WHEN [type] = 1 THEN size END) * 8. / 1024

, data_size = SUM(CASE WHEN [type] = 0 THEN size END) * 8. / 1024

, total_size = SUM(size) * 8. / 1024

FROM sys.master_files

GROUP BY database_id

) t

LEFT JOIN #space s ON t.database_id = s.database_id

The query results on my local instance:

database_name data_size data_used_size log_size log_used_size total_size

--------------------- ------------- --------------- ------------- -------------- --------------

master 4.875000 3.562500 1.750000 0.781250 6.625000

tempdb 8.000000 4.500000 0.500000 0.632812 8.500000

model 4.062500 2.562500 1.250000 0.609375 5.312500

msdb 16.687500 16.062500 26.187500 2.804687 42.875000

DB_Dev 1782.812500 1529.562500 7286.125000 42.570312 9068.937500

BinDocuments 334.000000 333.500000 459.125000 12.031250 793.125000

Refactoring 333.125000 285.625000 127.882812 0.851562 461.007812

locateme 591.000000 36.500000 459.125000 8.585937 1050.125000

AdventureWorks2012 205.000000 189.125000 0.750000 0.453125 205.750000

OnlineFormat 125.375000 124.437500 1.015625 0.414062 126.390625

I also wanted to show a couple of interesting tricks that can make life easier. If several paths are specified when executing the BACKUP command , the resulting backup file will be cut into pieces of approximately the same size.

BACKUP DATABASE AdventureWorks2012

TO

DISK = 'D:\AdventureWorks2012_1.bak',

DISK = 'D:\AdventureWorks2012_2.bak',

DISK = 'D:\AdventureWorks2012_3.bak'

Once it came in handy when I had to copy backup to a USB flash drive with the FAT32 file system , which has a limit on the maximum file size.

Another interesting opportunity is to create a backup copy. From personal experience I’ll say that I’ve met people who first created backup in the default folder, and then copied it to a disk ball with their hands or a script. And you just had to use this command:

BACKUP DATABASE AdventureWorks2012

TO DISK = 'D:\AdventureWorks2012.bak'

MIRROR TO DISK = 'E:\AdventureWorks2012_copy.bak'

WITH FORMAT

Previous posts for automating everyday tasks:

Part 1: Automatically defragment indexes

Part 2: Automatically update statistics