Chrome 70 supports [feature list] and AV1 - why is support for this codec so important?

Chrome 69 was a big upgrade because it introduced a new interface for the desktop and mobile versions. Chrome 70 is not as radical, but its new features are very important. We made an adapted translation and added material about what we consider the most important part of the new version: support for the AV1 codec, which sets a new performance bar. For now, the codec is used only for video playback, but we hope it will reach WebRTC; that would let us use advanced coding in video calls and conferences (for example, with our Web SDK).

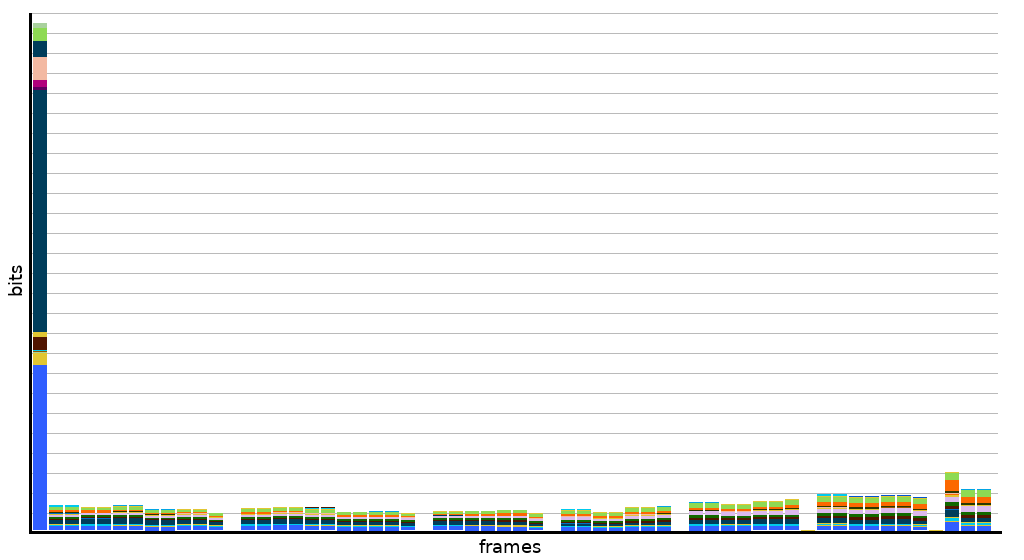

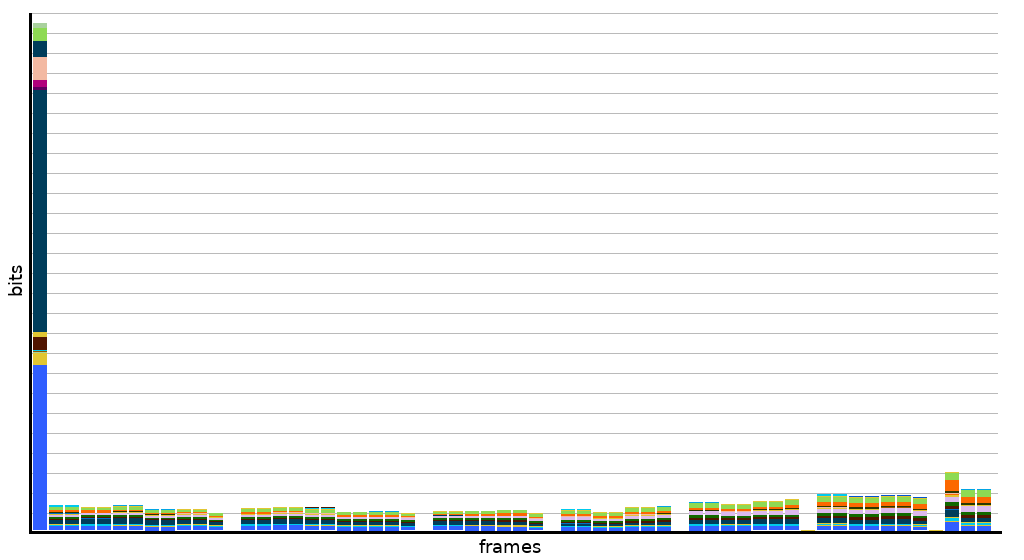

The first 60 frames of the test video. The histogram begins with a reference frame that is about 20 times larger than the rest.

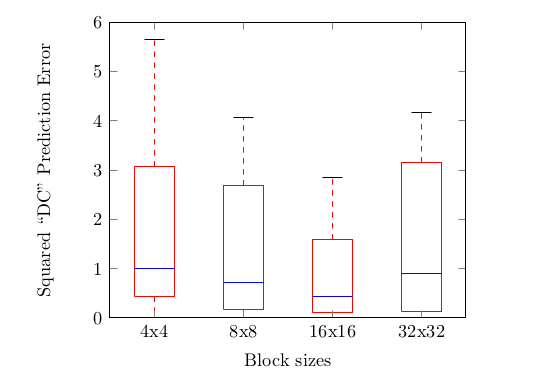

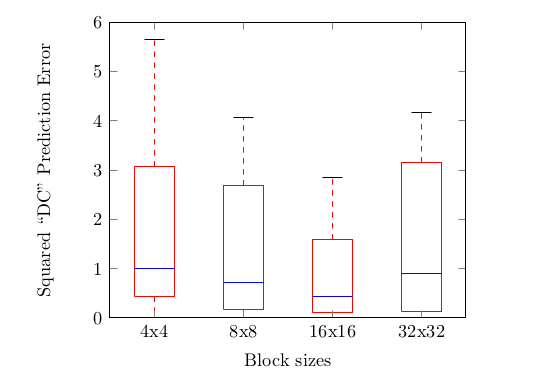

Splitting into blocks to maximize coding accuracy.

Comparing the default DC prediction (calculation based on neighboring pixels) with the calculated β-value (calculation based on pixels in the current block).

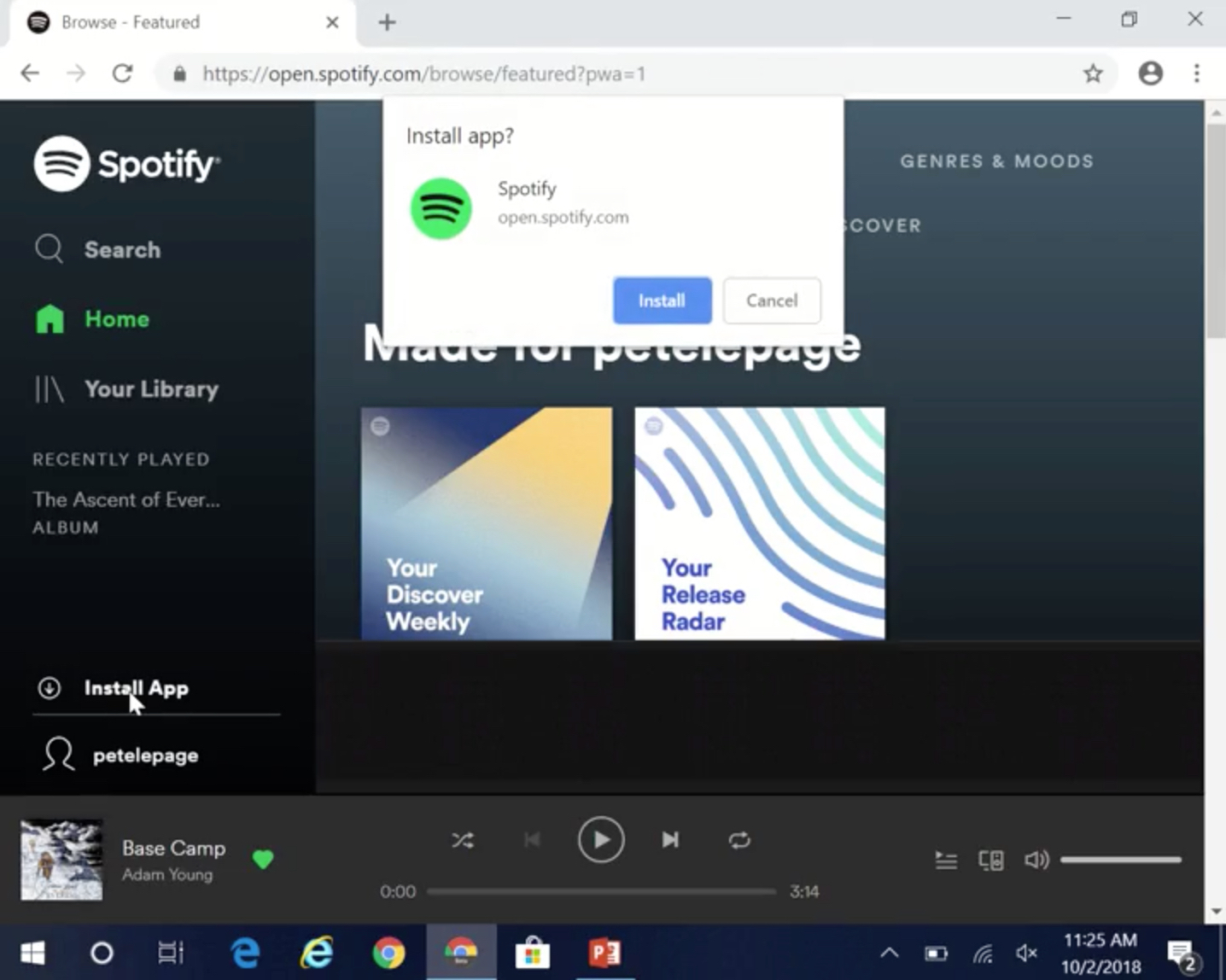

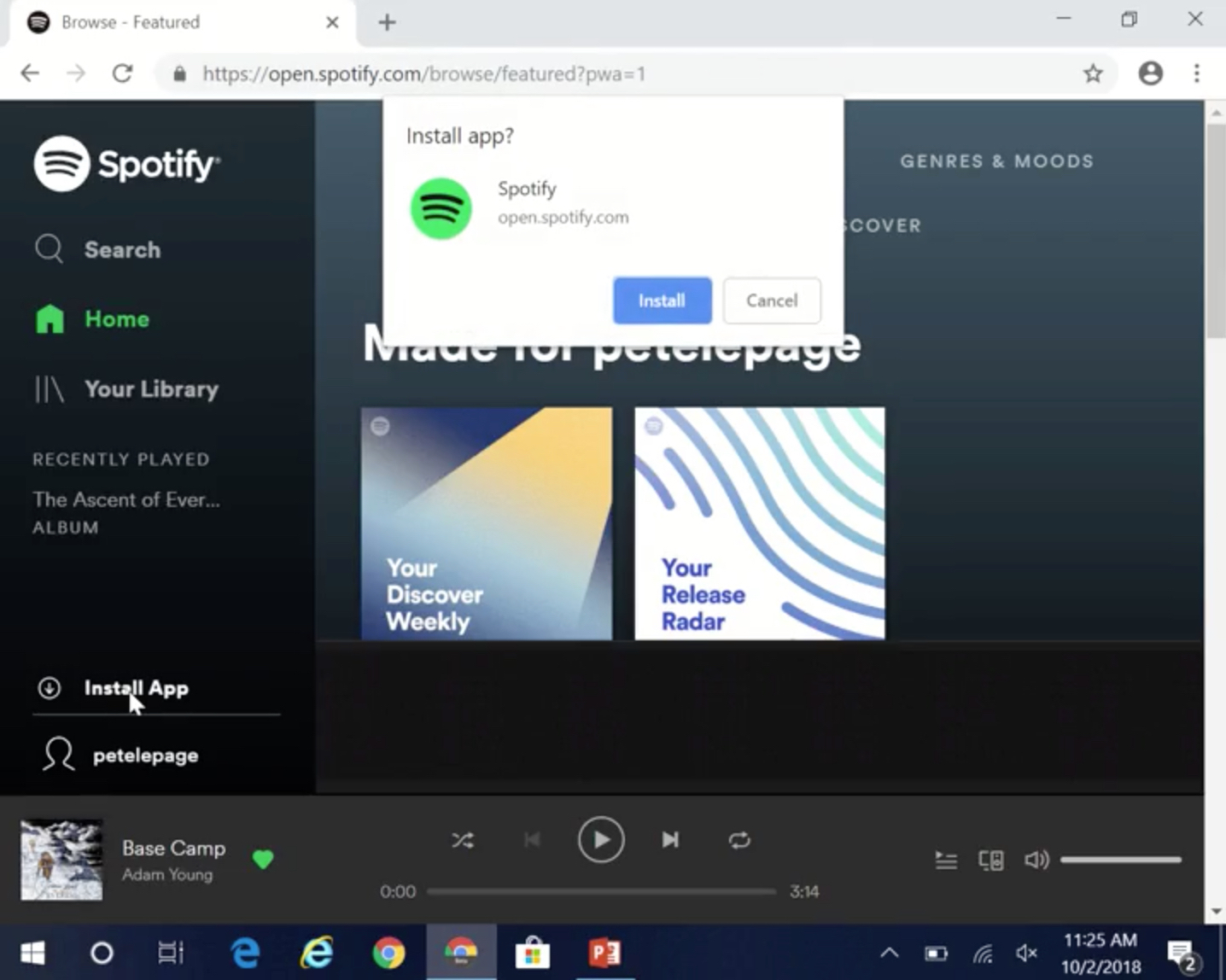

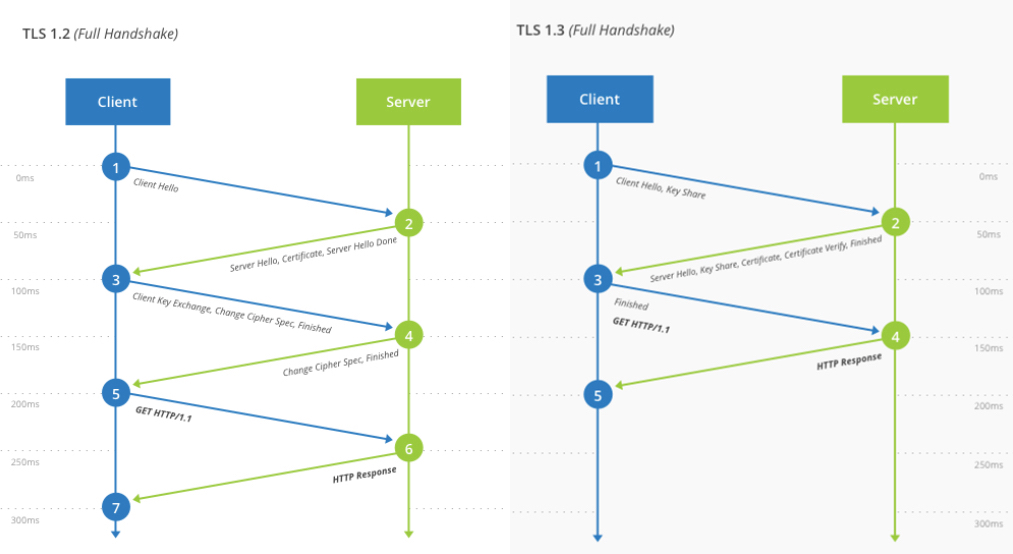

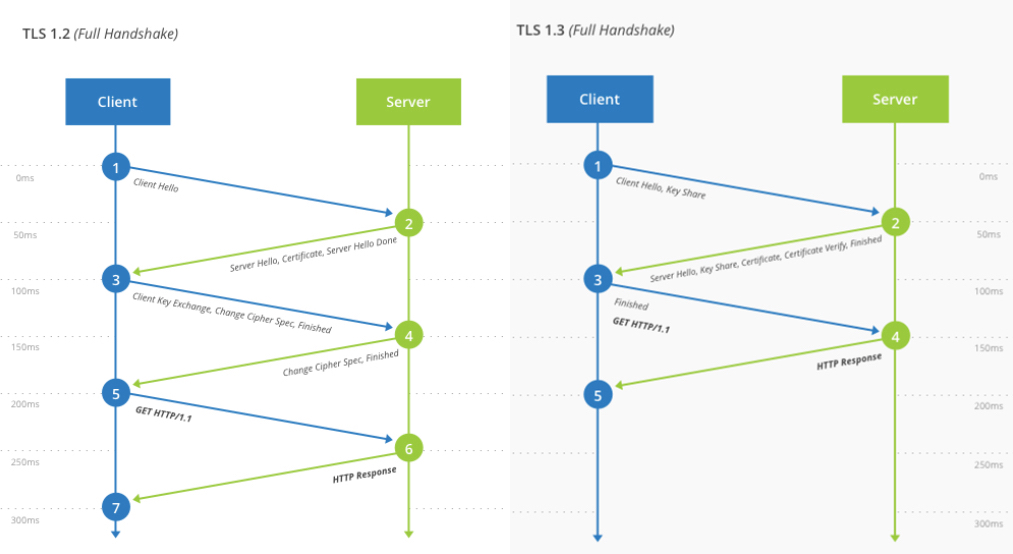

AV1 support

Almost 10 years ago, Google rolled out VP8, its own rival to the H.264 codec. Technically the two competitors were not very different, but VP8 was free, while H.264 required a license. Android has supported VP8 out of the box since 2.3 Gingerbread, and all major browsers (with the exception of Safari) can play VP8 video.

Google is now part of the Alliance for Open Media, a group of companies developing AV1, the successor to VP8 / VP9. Facebook has already tested the codec on thousands of popular videos and found that it improves compression by more than 30% compared to VP9: the gains were 50.3%, 46.2% and 34% compared to the x264 main profile, the x264 high profile and libvpx-vp9, respectively.
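To put these percentages in perspective, here is a small arithmetic sketch. It assumes the figures are bitrate reductions at equal quality, and the 10 Mbps starting bitrate is a made-up number for illustration, not a value from Facebook's tests.

```python
# Illustrative arithmetic only: the savings are the figures quoted above,
# interpreted (as an assumption) as bitrate reductions at equal quality.
SAVINGS_PERCENT = {
    "x264 main profile": 50.3,
    "x264 high profile": 46.2,
    "libvpx-vp9": 34.0,
}


def av1_bitrate(reference_kbps: float, saving_percent: float) -> float:
    """Bitrate AV1 would need if it saves `saving_percent` over the reference."""
    return reference_kbps * (1.0 - saving_percent / 100.0)


if __name__ == "__main__":
    reference_kbps = 10_000  # hypothetical 10 Mbps stream
    for codec, saving in SAVINGS_PERCENT.items():
        kbps = av1_bitrate(reference_kbps, saving)
        print(f"{codec}: {reference_kbps} kbps -> about {kbps:.0f} kbps with AV1")
```

Under that assumption, a stream that needs 10 Mbps with the x264 main profile would need roughly half of that with AV1.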

Starting with Chrome 70, AV1 support is enabled by default on desktop and Android. Although it will take time for the codec to become widely used, this is still an important step, because no other browser supports AV1 yet.

AV1 in detail

Note: this section is an excerpt from the article "next generation video: Introducing AV1".

Chroma from luma

Chroma from Luma prediction (hereinafter CfL) is one of the new prediction methods used in AV1. CfL predicts the colors in an image (chroma) from the brightness values (luma). The luma values are encoded / decoded first, and then CfL attempts to predict the colors from them. If the attempt is successful, less color information has to be encoded, which saves space.

It is worth noting that CfL did not first appear in AV1. The founding paper on CfL dates back to 2009; around the same time, LG and Samsung proposed an early implementation of CfL called LM Mode, but it was dropped during the development of HEVC / H.265. Cisco's Thor codec uses a similar technique, and HEVC implemented an improved version called Cross-Channel Prediction (CCP).

Improved intraframe prediction

Until recently, progress in video compression was based mainly on inter-frame prediction, i.e. on coding a frame as its difference from other frames, with reference frames serving as the basis for the prediction. Although this technique has become very powerful, it still requires reference frames that do not rely on other frames. As a result, reference frames use only intra-frame prediction.

Reference frames are much larger than intermediate frames, so encoders try to use them as rarely as possible. Still, the more reference frames a video contains, the higher its bitrate. To cope with this and reduce the size of reference frames, codec researchers focused on improving intra-frame prediction (which can also be applied to intermediate frames).
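The difference between the two kinds of frames can be illustrated with a toy sketch. The "frames" below are short lists of made-up pixel values, and the cost measure (the sum of absolute values to be coded) is a crude stand-in for bitrate, not how a real codec measures it.

```python
from typing import List

# Toy illustration: inter-frame prediction codes only the difference from a
# reference frame, while the reference frame itself has no earlier frame to
# lean on. All pixel values here are invented.


def residual(frame: List[int], prediction: List[int]) -> List[int]:
    """Per-pixel difference between a frame and its prediction."""
    return [pixel - predicted for pixel, predicted in zip(frame, prediction)]


def coding_cost(values: List[int]) -> int:
    """Crude proxy for bitrate: sum of absolute values that must be coded."""
    return sum(abs(v) for v in values)


reference_frame = [120, 122, 125, 130, 128, 126, 121, 119]
next_frame = [121, 122, 126, 131, 128, 125, 121, 120]  # almost the same scene

# The reference frame cannot borrow from other frames.
reference_cost = coding_cost(reference_frame)

# The next frame is predicted from the reference; only the residual is coded.
inter_residual = residual(next_frame, reference_frame)
inter_cost = coding_cost(inter_residual)

if __name__ == "__main__":
    print("residual:", inter_residual)
    print("reference frame cost:", reference_cost)
    print("inter-predicted frame cost:", inter_cost)
```

In this toy setup the residual is tiny compared to the reference frame, which is why anything that shrinks reference frames, such as better intra-frame prediction, pays off.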

In summary, CfL can be seen as an advanced intra-frame prediction technique, since it works from the luma within the same frame.

Colored chalk

CfL essentially colors a monochrome image using a well-founded, accurate prediction. The prediction is made easier by the fact that the image is split into small blocks, each of which is encoded independently.

Since the encoder works not with the whole image but with its pieces, it only needs to identify correlations within small areas, and that is enough to predict the colors from the actual luma. Take a small block of an image:

Based on this fragment, the encoder will establish that bright means green, and that the darker a pixel is, the less saturated it is. The same is done for the rest of the blocks.
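As a rough sketch of how such a per-block correlation could be found, the snippet below fits a straight line (chroma ≈ α · luma + β) to one small block using least squares. The pixel values are invented, the function name is hypothetical, and this is not the exact fitting procedure of the AV1 encoder.

```python
from typing import List, Tuple


def fit_chroma_from_luma(luma: List[float], chroma: List[float]) -> Tuple[float, float]:
    """Least-squares fit of chroma = alpha * luma + beta over one block."""
    n = len(luma)
    mean_l = sum(luma) / n
    mean_c = sum(chroma) / n
    cov = sum((l - mean_l) * (c - mean_c) for l, c in zip(luma, chroma))
    var = sum((l - mean_l) ** 2 for l in luma)
    alpha = cov / var if var else 0.0  # flat luma: fall back to a constant
    beta = mean_c - alpha * mean_l
    return alpha, beta


# One flattened 2x2 block: brighter pixels have higher chroma (made-up values).
block_luma = [40.0, 80.0, 120.0, 160.0]
block_chroma = [110.0, 120.0, 130.0, 140.0]

alpha, beta = fit_chroma_from_luma(block_luma, block_chroma)
predicted = [alpha * l + beta for l in block_luma]

if __name__ == "__main__":
    print("alpha:", alpha, "beta:", beta)
    print("predicted chroma:", predicted)
```

Because the fit is done per block, each block gets its own slope, so a relationship that holds only locally (bright means green here, something else elsewhere) can still be captured.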

CfL in AV1

CfL in AV1 does not use the PVQ algorithm, so its cost is about the same in the pixel and frequency domains. In addition, AV1 uses the discrete sine transform and a pixel-domain identity transform, so running CfL in the frequency domain would not be easy. But, surprisingly, AV1 does not need CfL in the frequency domain at all, because the basic CfL equations work the same way in both domains.

CfL in AV1 is designed to make reconstruction as simple as possible. To do this, α has to be encoded explicitly and β calculated from it. However, β does not have to be calculated: the encoder can instead use the DC chroma offset that has already been predicted (this is less accurate, but still suitable).

Thus, the approximation on the encoder side is kept as simple as possible by relying on prediction. If the prediction alone is not enough, the remaining transformations are performed; if the prediction does not save any bits, it is not used at all.
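The reconstruction described above can be sketched as follows, assuming the commonly described form of the model: the chroma prediction is α times the zero-mean luma of the block plus a DC term. The DC term is either the block's own chroma average (the calculated β) or the DC predicted from neighbouring pixels. The block contents, neighbour values and function names are all made up for illustration; real AV1 additionally works on subsampled luma and a quantized α.

```python
from typing import List


def cfl_predict(recon_luma: List[float], alpha: float, dc: float) -> List[float]:
    """Predict chroma as alpha * (luma - mean(luma)) + dc for one block."""
    mean_luma = sum(recon_luma) / len(recon_luma)
    return [alpha * (l - mean_luma) + dc for l in recon_luma]


def dc_from_neighbours(neighbour_chroma: List[float]) -> float:
    """DC prediction: average of already decoded chroma pixels next to the block."""
    return sum(neighbour_chroma) / len(neighbour_chroma)


# Made-up data for one flattened 2x2 block.
recon_luma = [40.0, 80.0, 120.0, 160.0]     # luma is decoded before chroma
true_chroma = [110.0, 120.0, 130.0, 140.0]  # known only to the encoder
alpha = 0.25                                # encoded explicitly

# Option 1: beta computed from the pixels of the current block.
beta = sum(true_chroma) / len(true_chroma)
# Option 2: reuse the DC prediction from neighbouring pixels instead.
dc = dc_from_neighbours([123.0, 125.0, 126.0, 122.0])

pred_with_beta = cfl_predict(recon_luma, alpha, beta)
pred_with_dc = cfl_predict(recon_luma, alpha, dc)

# The residual is what still has to be coded after prediction.
residual_with_dc = [c - p for c, p in zip(true_chroma, pred_with_dc)]

if __name__ == "__main__":
    print("prediction with beta:", pred_with_beta)
    print("prediction with DC:  ", pred_with_dc)
    print("residual with DC:    ", residual_with_dc)
```

With these invented numbers, the DC-based prediction is off by a constant of one level per pixel, which illustrates the trade-off: slightly less accuracy in exchange for not having to compute β.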

Some tests

The Alliance for Open Media uses a series of tests that are also available on the Are We Compressed Yet? site.

The table below shows the change in bitrate (BD-rate) for different quality metrics. Pay attention to CIE delta-E 2000 (CIEDE2000), a perceptually uniform color-error metric. Notice how much bitrate is saved? Up to 8%!

| BD-rate (%) | PSNR | PSNR-HVS | SSIM | CIEDE2000 | PSNR Cb | PSNR Cr | MS SSIM |
|---|---|---|---|---|---|---|---|
| Average | -0.43 | -0.42 | -0.38 | -2.41 | -5.85 | -5.51 | -0.40 |
| 1080p | -0.32 | -0.37 | -0.28 | -2.52 | -6.80 | -5.31 | -0.31 |
| 1080p-screen | -1.82 | -1.72 | -1.71 | -8.22 | -17.76 | -12.00 | -1.75 |
| 720p | -0.12 | -0.11 | -0.07 | -0.52 | -1.08 | -1.23 | -0.12 |
| 360p | -0.15 | -0.05 | -0.10 | -0.80 | -2.17 | -6.45 | -0.04 |

... and other news in Chrome 70

PWA on Windows

Although Progressive Web Apps (PWAs) are supported mainly on mobile platforms, Google has not forgotten about the desktop. Chrome 67 for desktop introduced a PWA install button, and Chrome 70 brings several improvements for Windows users.

Chrome now shows an “Install app?” pop-up for a PWA (after you have interacted with it for a while). If you install the PWA, the browser creates a shortcut for it in the Start menu. As on mobile, the browser interface is hidden when the PWA is open.

Google promises to roll out this functionality for Mac and Linux in version 72.

Shape Detection API

Web applications can already read barcodes and recognize faces in various ways, usually with machine-learning JS libraries, but these can be very slow. To make this capability more accessible and faster, Google is introducing native functionality in Chrome: shape detection.

The Shape Detection API in Chrome 70 is an experimental feature (an origin trial), i.e. it is not yet ready for widespread use. The API can detect three types of objects in images: faces, barcodes and text. At the moment, compatibility varies across platforms, because the API relies on the operating system's own detection functionality. You can try the demo here.

TLS 1.3

Transport Layer Security (TLS) is a protocol that lets you transfer data securely over the Internet. When you visit a site over HTTPS, the data is sent via TLS. Chrome 70 supports TLS 1.3, which was finalized shortly before this release (RFC 8446, published in August 2018).

A list of changes is available here, but in general, version 1.3 improves both efficiency and security. For example, it removes TLS-level compression, the mechanism exploited by the CRIME attack (thanks to menstenebris for the pointer); note that BREACH targets HTTP-level compression, which TLS 1.3 does not change. Establishing a connection takes fewer steps, so you may notice a slight improvement in load time (if the site you are visiting supports TLS 1.3, of course). Cloudflare has published a visual comparison of the difference.

With the release of TLS 1.3, support for old features such as SHA-1 and MD5 also ends. Google announced this on the Status Page:

TLS 1.3 was a multi-year project that brought together stakeholders from various industries, research groups and other participants to work on the standard. We previously experimented with draft versions of the standard, and now that the standard is final, we can finally ship it in Chrome.

Firefox 60, released in May of this year, added support for TLS 1.3 (draft 23); Cloudflare then began using it as well.
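For readers who want to check whether a given server negotiates TLS 1.3, here is a minimal sketch using Python's standard ssl module. It assumes Python 3.7+ built against an OpenSSL version with TLS 1.3 support; the function names are hypothetical, and the host name in the usage note is only a placeholder.

```python
import socket
import ssl
from typing import Optional


def make_tls13_only_context() -> ssl.SSLContext:
    """Client context that refuses anything older than TLS 1.3."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_3
    return context


def negotiated_tls_version(host: str, port: int = 443,
                           timeout: float = 5.0) -> Optional[str]:
    """Connect to host and return the negotiated protocol name.

    Raises ssl.SSLError if the server cannot speak TLS 1.3, because the
    context above does not allow a fallback to older versions.
    """
    context = make_tls13_only_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls_sock:
            return tls_sock.version()
```

Calling `negotiated_tls_version("example.com")` against a server of your choice either returns the protocol string reported by the ssl module or fails the handshake, which is a quick way to see whether the site benefits from the changes described above.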

Other features

As always, Chrome 70 includes new features for users and developers. Here is a list of other changes in this update:

- the Speech Synthesis API will not work until the user has interacted with the page at least once. This API has often been abused by spam pop-ups on mobile devices ever since the new autoplay policy arrived in Chrome 66;

- Touch ID on the MacBook Pro can be used as an authentication method in the Web Authentication API;

- if a page is in full-screen mode, a pop-up will take the page out of full screen;

- AppCache no longer works on non-HTTPS pages;

- on Android devices, the OS build number (for example, “NJH47F”) is no longer included in the user agent, to make browser fingerprinting harder. Chrome on iOS will keep the build number frozen at "15E148" instead of removing it completely, in order to match Safari's implementation;

- Opus audio is now supported in MP4, Ogg and WebM containers;

- WebUSB now works in the context of dedicated workers, which should improve performance;

- Web Bluetooth now works on Windows 10;

- a new sync dialog on desktop;

- service workers can be given names;

- the Credential Management API now supports PublicKeyCredential;

- the initial implementations of Custom Elements, HTML Imports, navigator.getGamepads and the Shadow DOM API are now deprecated;

- Lazy Loading can now be enabled using the #enable-lazy-frame-loading and #enable-lazy-image-loading flags.
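As an illustration of the user agent change in the list above, the sketch below removes the Build/... token from an Android user agent string. The sample string is made up around the build number quoted above, and the regular expression is a hypothetical approximation, not Chrome's actual implementation.

```python
import re

# Matches the " Build/<id>" token inside the platform part of the user agent.
BUILD_TOKEN = re.compile(r"\s*Build/[^;)\s]+")


def strip_build_number(user_agent: str) -> str:
    """Return the user agent without the OS build token."""
    return BUILD_TOKEN.sub("", user_agent)


# Illustrative string only; device, OS and browser versions are assumptions.
old_ua = (
    "Mozilla/5.0 (Linux; Android 7.1.2; Nexus 5X Build/NJH47F) "
    "AppleWebKit/537.36 (KHTML, like Gecko) "
    "Chrome/69.0.3497.100 Mobile Safari/537.36"
)
new_ua = strip_build_number(old_ua)

if __name__ == "__main__":
    print(new_ua)
```

Server-side code that parses the build token out of the user agent should therefore treat it as optional.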