How to live with Docker, or why is it better with it than without it?

This article is intended for those who already know about Docker, know what it is for. But he does not know what to do next. The article is advisory in nature and does not infringe on the title of "best practice".

So, maybe you went through the docker tutorial , the docker seems simple and useful, but you don’t know yet how it can help you with your projects.

There are usually three problems with deployment:

- How do I deliver the code to the server?

- How do I run code on servers?

- How can I ensure the same environment in which my code starts and runs?

How Docker will help with this under a cat. So, let's start not with docker, but with how we lived without it.

How do I deliver the code to the server?

The first thing that comes to mind is to pack everything in a package. RPM or DEB does not matter.

Packages

The system already has a batch manager that can manage files, usually we expect the following from it:

- Deliver project files to the server, from some storage

- Satisfy dependencies (libraries, interpreter, etc.)

- Delete files of the previous version and deliver the files of the current.

In practice, it works, for now ... Imagine a situation. You are DevOps on an ongoing basis. Those. you spend all day automating the routine activities that admins are involved in. You have a small fleet of cars on which you do not breed a zoo from distributions. For example, you love Centos. And she is everywhere. But here comes the problem: for one of the projects you need to replace libyy (which is a quest under Centos itself) with libxx. Why replace - you do not know. Most likely the developer wrote “as usual krivoruky” only for libxx, and with libyy it does not work. Well, we take vim into our hands and write rpmspec for libxx, in parallel for new versions of the libraries that are needed for assembly. And about 2-3 days of exercise, and here is one of the results:

- libxx is going, everything is fine, the task is completed

- libxx was not built, not because, say, libyy is installed on the system, which will have to be removed, but something else on the server depends on it

- libxx was not built, there is not enough library which will conflict with some other library

The total probability of coping with the task is approximately 30% (although in practice options 2 or 3 always drop out). And if the second situation can still be somehow managed (the virtual machine is there or lxc), then the third situation will either add another beast to your menagerie, or collect the nulls / slakvari greetings in / usr / local with handles, etc.

In general, this situation is called dependency hell . There are tons of solutions to this, from simple chroot to complex projects like NixOs .

Why am I doing this. Oh yes. As soon as you start to collect everything in packages and drive them on the same system, you will definitely have a dependency hell situation. It can be solved in different ways. Either put a new repository for each project plus a new virtual machine / hardware, or introduce restrictions. By limitations, I mean a certain set of company policies, like: “We only have libyy-vN.N here. Whoever doesn’t like it, to the personnel department with a statement. ” I didn’t just say that about NN, some libraries cannot live on the same server in two versions. Limitations do not give anything in the end. Business, and common sense, will quickly destroy them all.

Think for yourself which is more important: to make a feature in the product, which depends on updating a third-party component, or to give devops time to enjoy the heterogeneous environment.

Deploy via GIT

Good for interpreted languages. Not suitable for something that needs to be compiled. And also do not get rid of dependency-hell.

How do I run code on servers?

A lot of things have been invented for this question: daemontools, runit, supervisor. We believe that this question has at least one correct answer.

How can I ensure the same environment in which my code starts and runs?

Imagine a banal situation, you are still the same DevOps, the task comes to you, you need to deploy the “Eniac” project (the name is taken from the name generator for projects) on N servers, where N is greater than 20.

You drag Eniac from GIT, it’s on the well-known to you technology (Django / RoR / Go /

Understand the problem. Go ahead, you manage to run Eniac on the same server, and it doesn’t work on N-1 yet.

How does Docker help me?

Well, firstly, let's figure out how to use Docker as a means of delivering projects to hardware.

Private Docker Registry

What is the Docker Registry ? This is what stores your data, like a WEB server that distributes deb packages and metadata files for them. You can install the Docker Hub on a virtual machine in Amazon and store data in Amazon S3, or you can install it on your infrastructure and store data in Ceph or on the FS.

Docker registry itself can also be started by docker. This is just an application in python (apparently, go did not manage to write libraries for storages, of which a large number of registry supports).

And we will analyze later how our project gets into this repository.

How do I run code on servers?

Now let's compare how we deliver the project with the usual package manager. For example apt:

apt-get update && apt-get install -y myuperprojectThis command is suitable for automation. She herself will do everything quietly, it remains to restart our application.

Now an example for Docker:

docker run -d 192.168.1.1:80/reponame/mysuperproject:1.0.5rc1 superproject-run.shAnd that’s all. Docker will download from your docker registry located at 192.168.1.1 and listening on port 80 (I have nginx here), all that is needed to start, and run superproject-run.sh inside the image.

What happens if in the image there are 2 programs that we run, and, say, in the next release, one of them crashes, but the other, on the contrary, scores features that need to be rolled out. No problems:

docker run -d 192.168.1.1:80/reponame/mysuperproject:1.0.10 superproject-run.sh

docker run -d 192.168.1.1:80/reponame/mysuperproject:1.0.5rc1 broken-program.sh

And now we already have 2 different containers, and inside them there may be completely different versions of system file libraries, etc.

How can I ensure the same environment in which my code starts and runs?

In the case of standard virtual machines or generally real systems, you will have to follow everything yourself. Well, either study Chef / Ansible / Puppet or something else like that. Otherwise, there is no guarantee that xxx-utils is the same version on all N servers.

In the case of docker, you don’t need to do anything, just expand the containers from the same images.

Container assembly

In order for your code to get into the docker registry (yours or global does not matter), you have to push it there.

You can force the developer to build the container yourself:

- Developer writes Dockerfile

- Does docker tag $ imageId 192.168.1.1:80/reponame/mysuperproject:1.0.5rc1

- And pushing docker push 192.168.1.1:80/reponame/mysuperproject:1.0.5rc1

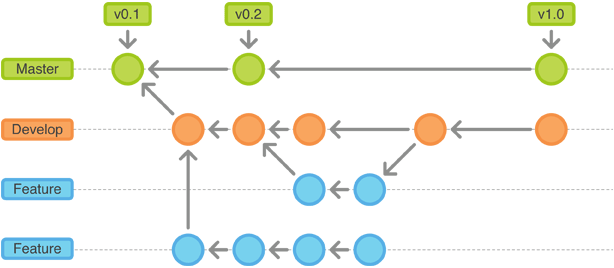

Or collect automatically. I did not find anything that collects containers, so here, I have assembled a bicycle called lumper

The bottom line is:

- Install 3 components lumper and RabbitMQ on the server

- Listening to the port for github hooks.

- Configure github web-hook on our lumper installation

- Developer makes git tag v0.0.1 && git push --tags

- Receive email with build results

Dockerfile

In fact, there is nothing complicated. For example, build a lumper using the Dockerfile.

FROM ubuntu:14.04

MAINTAINER Dmitry Orlov

RUN apt-get update && \

apt-get install -y python-pip python-dev git && \

apt-get clean

ADD . /tmp/build/

RUN pip install --upgrade --pre /tmp/build && rm -fr /tmp/build

ENTRYPOINT ["/usr/local/bin/lumper"]

Here, FROM talks about which image the image is based on. Next, RUN runs the commands preparing the environment. ADD places the code in / tmp / build, then ENTRYPOINT indicates the entry point to our container. So at startup, by writing docker run -it --rm mosquito / lumper --help we see the output --help entrypoint.

Conclusion

In order to run containers on servers there are several ways, you can either write an init-script or look at Fig which can deploy entire solutions from your containers.

I also deliberately ignored the topic of port forwarding, and ip routing of containers.

Also, since the technology is young, docker has a huge community. And great documentation . Thanks for attention.