Slowest x86 instruction

Everyone knows and loves x86 assembly. A modern processor executes most of its instructions in a few nanoseconds or even a fraction of a nanosecond. Some operations, those that decode into a long microcode sequence or wait on memory, can take much longer: up to hundreds of nanoseconds. This post is about the champions. A hit parade of four instructions follows, but for those too lazy to read the whole text: the main villain is `[memory]++` under certain conditions.

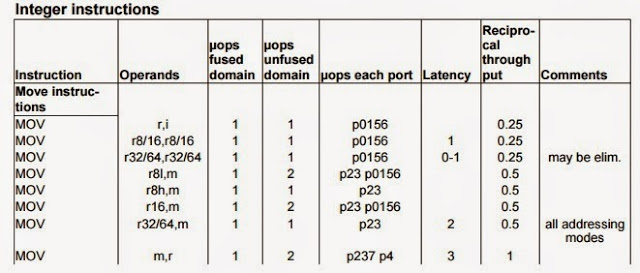

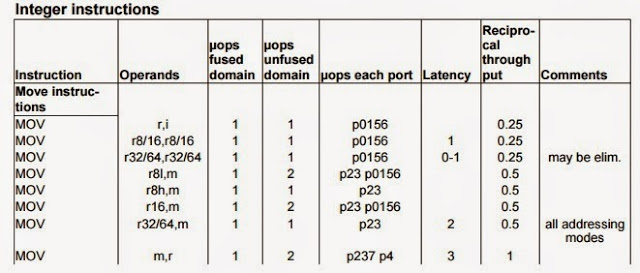

The picture at the top is taken from a document by Agner Fog, which, along with two documents from Intel (the optimization guide and the architecture software developer's manual), contains a lot of useful and interesting material.

To begin with, there are instructions that are expected to take microseconds: for example IN, OUT, or RSM (return from SMM). VMEXIT has sped up dramatically in recent years and takes a fraction of a microsecond on new processors. There is MWAIT, which by definition runs for as long as possible. In general, ring 0 has many "heavy" instructions that consist entirely of microcode: WRMSR, writes to control registers, and so on (CPUID is just as heavy, although it is not privileged). The examples below, however, can all run at ring 3, that is, in any ordinary program. You don't even need to program in C: the virtual machines of some popular languages can generate code containing these operations. These are not exotic processor instructions but ordinary ones, sometimes under special conditions.

Because they take so long, any sane profiler will find them with an ordinary time-based profile, provided of course that they occur in reasonably "hot" code. There are also dedicated performance counters (PMU registers) that respond exclusively to such cases; with their help you can find these operations in a large program even if they do not account for much absolute time (though why would you?). The most popular tools for this are VTune and Linux perf. You can also use PCM, but it will not show where the instruction is located.

Villain number four. The x86 instruction (x87, actually) that can take almost 700 clock cycles is FYL2X. It computes the binary logarithm of one operand multiplied by the second operand, y · log2(x). SSE has no equivalent, so it is still found in the wild. There is no dedicated counter for it.
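To make the operation concrete, here is a minimal sketch of a wrapper around FYL2X using GCC-style inline assembly. The function name `fyl2x` is hypothetical and not from the original post; the operand constraints follow the usual x87 pattern, where `"t"` is ST(0) and `"u"` is ST(1). It assumes an x86 target and a compiler that accepts GCC extended asm.

```c
/* Hypothetical helper (not from the original post): returns y * log2(x)
   computed by the x87 FYL2X instruction. x86 only, GCC-style inline asm. */
double fyl2x(double x, double y)
{
    double result;
    /* FYL2X computes ST(1) * log2(ST(0)), pops the register stack and
       leaves the result in the new ST(0). x is loaded into ST(0) ("0" ties
       it to the output register), y into ST(1) ("u"), which is popped. */
    __asm__ ("fyl2x" : "=t" (result) : "0" (x), "u" (y) : "st(1)");
    return result;
}
```

Calling `fyl2x(8.0, 2.0)` should give a value very close to 6, since log2(8) = 3. Timing a single call tells you little; to see the cost, run it in a loop over varying inputs.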

Villain number three. A somewhat artificial example, perhaps, but one that is used often; fortunately, mostly in drivers.

The MFENCE instruction (or its subsets, LFENCE and SFENCE; by the way, LFENCE + SFENCE != MFENCE). If MFENCE is preceded by a long memory operation or a PCIe write, for example a non-temporal store (MOVNTI, MOVNTPS, MASKMOVDQU, etc.) or an access to an operand in a write-through or write-combining memory region, then the “fence” itself will take almost a microsecond or longer. A performance counter exists for this situation, but it lives in the “uncore” rather than in the processor core, so it is easier to work with it through PCM.
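The situation can be sketched in user space with compiler intrinsics. The helper below is hypothetical (its name and signature are not from the original post): it issues a run of non-temporal stores with `_mm_stream_si32` (MOVNTI) and then reads the TSC around the `_mm_mfence()` that follows. It assumes an x86 target with SSE2 and a GCC or Clang toolchain that provides `<x86intrin.h>`. On ordinary write-back memory the delay may be far smaller than on the write-combining or PCIe regions described above, so treat the number as a rough indication only.

```c
#include <stddef.h>
#include <stdint.h>
#include <emmintrin.h>   /* _mm_stream_si32, _mm_mfence (SSE2) */
#include <x86intrin.h>   /* __rdtsc */

/* Hypothetical helper: fills buf[0..n) with value using non-temporal
   stores, then returns the TSC delta measured around the MFENCE alone. */
uint64_t fence_after_stream(int *buf, size_t n, int value)
{
    for (size_t i = 0; i < n; i++)
        _mm_stream_si32(&buf[i], value);   /* MOVNTI: store that bypasses the cache */

    uint64_t t1 = __rdtsc();
    _mm_mfence();                          /* orders all earlier loads and stores */
    uint64_t t2 = __rdtsc();
    return t2 - t1;
}
```

After the call returns, the buffer is guaranteed to hold the stored values; the returned cycle count varies from machine to machine and from run to run.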

Villain number two. Here is a very simple piece of code.

    double fptest = 3000000000.0f;  // Same with float.
    // TSC1
    int inttest = 2 + fptest;
    // TSC2
    time = TSC2 - TSC1;

What do you think time will be, roughly? (It doesn't matter whether this code compiles to x87 or scalar SSE.) This single instruction takes 1-2 microseconds. The sum, 3000000002, does not fit in a 32-bit int, and the conversion becomes a so-called denormal operation: a special case handled by a long microcode sequence. It is easy to catch with the PMU performance counter FP_ASSIST.ALL. By the way, it should be obvious that measuring a TSC difference across one instruction (or even a few dozen) is almost always pointless. This case is an exception, because we are measuring a long run of microcode.
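In standard C, converting an out-of-range double to int is undefined behavior, so a compiler is free to fold or rearrange the snippet above. A minimal sketch that pins the conversion to a real CVTTSD2SI instruction, assuming SSE2 and a GCC or Clang toolchain, might look like this. The helper name `timed_convert` is hypothetical; the volatile copy is there only to stop the compiler from evaluating the conversion at compile time.

```c
#include <limits.h>
#include <stdint.h>
#include <emmintrin.h>   /* _mm_set_sd, _mm_cvttsd_si32 (SSE2) */
#include <x86intrin.h>   /* __rdtsc */

/* Hypothetical helper: converts x to a 32-bit int with CVTTSD2SI and
   stores the TSC delta measured around the conversion in *cycles. */
int timed_convert(double x, uint64_t *cycles)
{
    volatile double v = x;                    /* prevents constant folding */
    uint64_t t1 = __rdtsc();
    int r = _mm_cvttsd_si32(_mm_set_sd(v));   /* truncating double -> int32 */
    uint64_t t2 = __rdtsc();
    *cycles = t2 - t1;
    return r;
}
```

With floating-point exceptions masked (the default), an out-of-range input such as 3000000002.0 returns the "integer indefinite" value 0x80000000, which is `INT_MIN`. Comparing the cycle count for that input against an in-range input such as 102.0 shows how much the special case costs on a given processor; the figure will differ between microarchitectures.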

The main villain: an atomic increment of a variable that straddles a cache-line boundary.

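    static unsigned char array[128];
    int i;

    // Find a byte whose address is 63 modulo 64 (needs <stdint.h>), so that
    // a 4-byte integer placed there straddles two cache lines.
    for (i = 0; i < 64; i++)
        if ((uintptr_t)(array + i) % 64 == 63) break;

    volatile unsigned int *lock = (volatile unsigned int *)(array + i);

    for (i = 0; i < 1024; i++) (*lock)++;  // prime

    // TSC1
    unsigned int one = 1;
    asm volatile (
        "lock xaddl %0, (%1)\n"
        : "+r" (one)      // XADD writes the old value back into the register
        : "r" (lock)
        : "memory", "cc");
    // or in Windows, just InterlockedIncrement(lock);
    // TSC2

    time = TSC2 - TSC1;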

And as a bonus: unlike the other entries in the hit parade, this code also makes all the other cores stop for a smoke break lasting several thousand clock cycles.

This too is caught with VTune, perf, PCM, etc., using the counter LOCK_CYCLES.SPLIT_LOCK_UC_LOCK_DURATION. The example may seem far-fetched, but over the past year I have run into this problem at clients twice. In one case, LOCK_CYCLES.SPLIT_LOCK_UC_LOCK_DURATION went off the scale during initialization of a huge program written in .NET. I never found out whether it was the runtime or the client code that had placed a mutex so unluckily, but performance suffered badly: another, independent program running on a different core slowed down thirtyfold.
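When hunting for a badly placed lock variable like this, a simple address check can help. The sketch below is an illustration rather than anything from the original investigation: `straddles_cache_line` and `CACHE_LINE` are hypothetical names, and the 64-byte line size is an assumption that matches the modulo-64 test in the example code.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define CACHE_LINE 64u   /* assumption: 64-byte cache lines */

/* Hypothetical helper: true if the object occupying [p, p + size) crosses
   a cache-line boundary, in which case a LOCK-prefixed access to it would
   be a split lock. size must be greater than zero. */
bool straddles_cache_line(const void *p, size_t size)
{
    uintptr_t first = (uintptr_t)p / CACHE_LINE;               /* line of first byte */
    uintptr_t last  = ((uintptr_t)p + size - 1) / CACHE_LINE;  /* line of last byte  */
    return first != last;
}
```

A debug-build assertion such as `assert(!straddles_cache_line(&counter, sizeof counter))` next to each atomic variable would flag the problem early. A naturally aligned 4-byte or 8-byte integer never straddles a line, so the check matters mostly for packed structures and hand-computed offsets.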

Does anyone know an even slower instruction? (Don't suggest REP MOVS.)