How we built analytics for a high-traffic site

Recently there was a publication on Habr about how analytics is implemented at ivi.ru. After reading it, I wanted to describe the analytics we built for one large site. Unfortunately, the customer did not allow us to publish a link to the site in this article. If Alexa Rank is to be believed, the site we built the analytics for gets about 10 times more traffic than ivi.ru.

Reasons and goals for creating analytics

Because of the large number of visits to the site, at some point a letter came from Google asking us to stop using the service or to reduce the number of requests to it. In addition, some of the data we needed could not be obtained through Google Analytics.

Information we collected about users:

- page views (referrer, IP, User-Agent, screen size);

- active / passive time;

- buffering;

- rewinds.

About 70% of the views were on pages with a video player, so the main task was to collect information from those pages. We needed the active / passive figures: how many seconds the user was active on the page and how many seconds the page was inactive, that is, open in a background tab. We were also interested in buffering (whether the video stalls and how long it takes to load for the user) and in rewinds: how many there were, and from which second to which second users rewound. To collect this, JavaScript code was placed on every page; every 30 seconds it sent information from the page open in the browser to the server.

Client part

The script is quite simple: it requests two one-pixel images from the analytics server and passes parameters in the URLs of these images. Why this way? In our opinion it is the most reliable solution, one that works in absolutely any browser and on any platform. With AJAX we would have had to deal with cross-domain requests and with making it work across different browsers. There are two images, stat.gif and p.gif. The first is requested when the page loads and transmits basic information about the user; the second is requested every 15 seconds and transmits the information that can change over time (active / passive, buffering, rewinds).

This image is requested when the page is first opened:

/stat.gif?pid=p0oGejy139055323022216801050bny0&l=http%3A%2F%2Fsite.ru%2F8637994&r=http%3A%2F%2Fsite.ru%2F&w=1680&h=1050&a=Mozilla%2F5.0%20(Windows%20NT%206.1%3B%20rv%3A26.0)%20Gecko%2F20100101%20Firefox%2F26.0&k=1390553230222&i=30000&vr=3.0

This image is requested every 30 seconds:

/p.gif?pid=p0oGejy139055323022216801050bny0&rand=6752416&b=1&time=2-188x190-57x50-349x251-83x0-235x&pl=29&fpl=46&ld=552&efsc=true&tfsc=19&tac=89&tpas=70&vr=3.0

Parameter names are abbreviated to reduce traffic. PID (the pid parameter) is a unique identifier of a page view; it is used to match the data that arrived with stat.gif and p.gif.
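
As a rough illustration of how such an image URL can be assembled on the client, here is a minimal sketch. The function name buildBeaconUrl and the host stat.example.com are assumptions made for the example and are not the site's actual code; only the parameter names are taken from the requests shown above.

```javascript
// Hypothetical helper: builds the URL of a tracking image from a parameter map.
function buildBeaconUrl(base, file, params) {
  var pairs = Object.keys(params).map(function (key) {
    return encodeURIComponent(key) + '=' + encodeURIComponent(String(params[key]));
  });
  return base + '/' + file + '?' + pairs.join('&');
}

// Example with a few of the stat.gif parameters shown above
// (the host name is a placeholder).
var statUrl = buildBeaconUrl('http://stat.example.com', 'stat.gif', {
  pid: 'p0oGejy139055323022216801050bny0',
  l: 'http://site.ru/8637994',
  w: 1680,
  h: 1050
});

// In a browser the request is triggered simply by loading the image:
//   new Image().src = statUrl;
console.log(statUrl);
```

Loading an image this way needs no cross-domain setup, which is the reliability argument made above.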

Server part

We decided on the database right away: MongoDB (fast inserts, data stored as documents, non-relational structure). The first implementation was written in PHP, and the very first tests under heavy load revealed serious problems:

- php-fpm together with nginx consumed a lot of resources to process each request;

- when stat.gif was requested, a new document was inserted into MongoDB, and then every 15 seconds that document was updated with the data arriving with p.gif.

It became obvious that the data from stat.gif and p.gif had to be aggregated and inserted into MongoDB only after requests to p.gif stopped arriving. This reduced the number of calls to MongoDB by an order of magnitude, and the calls themselves became inserts only (no updates). We could not solve this in PHP, so the question of choosing a new platform arose. We needed the ability to process requests at the web server level, so our choice fell on NodeJS fairly quickly. The reasons: asynchrony, promise, familiar syntax (extensive JavaScript experience), and the relative ease of writing code. The publication “Million simultaneous connections on Node.js” by ashtuchkin strongly influenced the choice in favor of NodeJS: we repeated the experiment it describes on our own server.

A little about the traffic and the nature of the requests: this script is on every open page and sends data to the server every 15 seconds. One user can have several such pages open at once, and all of them will send data regardless of whether the user is currently looking at the page. And all this with about 40 million views per day!

Server architecture on NodeJS

First, for testing, we made a single-threaded version of the server. The script is very simple: its request handler received the requests for the stat.gif and p.gif images and wrote the data to an array.

    Array
    (
        [PID] => Array
        (
            [stat] => data sent with the stat.gif image when the page is first loaded
            [pgif] => latest data sent with the p.gif image (sent every 15 seconds)
            [time] => Unix timestamp of the last data update for this PID
        )
    )

Next, a handler runs on a timer; it iterates over the whole array of PIDs and checks the time of the last data change for each PID (Array [PID] [time]). If more than 90 seconds have passed since the last change (data normally arrives every 15 seconds, so this means the user closed the page or lost the Internet connection), the record is inserted into MongoDB and deleted from the array. After testing the single-threaded version, we decided to implement a multi-process version in order to make the most of all CPU cores.
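
The timer pass described above can be sketched roughly as follows. This is a minimal illustration under assumptions: the names flushStale, store and insert are made up for the example, and insert stands in for whatever actually writes a record to MongoDB.

```javascript
// Hypothetical sketch of the flush pass. `store` maps PID -> { stat, pgif, time },
// where `time` is a Unix timestamp in seconds, as in the structure above.
// `insert` stands in for whatever writes one record to MongoDB.
function flushStale(store, nowSec, timeoutSec, insert) {
  var flushed = 0;
  Object.keys(store).forEach(function (pid) {
    var entry = store[pid];
    if (nowSec - entry.time > timeoutSec) {
      insert({ pid: pid, stat: entry.stat, pgif: entry.pgif });
      delete store[pid]; // free memory once the record is handed over
      flushed++;
    }
  });
  return flushed;
}

// Small in-memory demonstration with a fake insert function.
var written = [];
var store = {
  a: { stat: { w: '1680' }, pgif: { tac: '89' }, time: 1000 },
  b: { stat: { w: '1280' }, pgif: undefined, time: 1080 }
};
var count = flushStale(store, 1100, 90, function (doc) { written.push(doc); });
console.log(count, Object.keys(store)); // 1 [ 'b' ]
```

In a server this function would be called from setInterval; how often the real timer fired is not stated in the article.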

NodeJS makes this very easy thanks to the wonderful Cluster module. I will not go into the details of the cluster code in this article (plenty has been written about it already); I will only say that this module lets you run the same code in several instances as separate worker processes, and provides a way for the workers to exchange messages with the master process.

The logic of the single-threaded application was divided between the master and the worker processes: the workers received the HTTP requests and returned the one-pixel image, and the data received in the GET request for the image was passed on to the master process.

Example code for a worker (child process):

    // Part of the worker code that parses incoming requests
    var fs = require('fs');
    var url = require('url');

    var image; // one-pixel GIF, cached in memory after the first read

    // Sends the one-pixel image; if it has not been read since the server
    // started, reads it from disk, caches it in memory and then sends it.
    // The same p.gif file is served for both URLs, as in the original code.
    function sendPixel(res) {
        if (image !== undefined) {
            res.end(image);
            return;
        }
        fs.readFile('p.gif', function(err, data) {
            if (err) {
                res.end();
                return;
            }
            image = data;
            res.end(image);
        });
    }

    // `server` is the worker's http.Server instance, created elsewhere
    server.on('request', function(req, res) { // handle a GET request to the server
        var url_parts = url.parse(req.url, true);
        var url_string = url_parts.pathname.slice(1);

        switch (url_string) { // very primitive, because only two URLs are handled: /p.gif and /stat.gif
            case 'p.gif':
                process.send({ routeType: 'p.gif', params: url_parts.query }); // send the data to the master process
                sendPixel(res);
                break;
            case 'stat.gif':
                url_parts.query.ip = req.connection.remoteAddress;
                process.send({ routeType: 'stat.gif', params: url_parts.query }); // send the data to the master process
                sendPixel(res);
                break;
            default:
                res.end('No file');
                break;
        }
    });

Data is sent to the master process using process.send({}). In the master process, data from the workers is received using worker.on('message', function (data) {}) and written to the array.

Sample master process code:

    // Part of the master code: attach a message handler to each worker process.
    // dataObject (PID -> record) and counter are declared elsewhere in the master.
    worker.on('message', function(data) {
        var pid = data.params && data.params.pid;
        var now = Math.ceil(Date.now() / 1000); // Unix time in seconds
        switch (data.routeType) {
            case 'p.gif':
                counter++;
                // Check that a PID was passed and that a record for it already exists
                if (pid != undefined && dataObject[pid] != undefined) {
                    dataObject[pid]['pgif'] = data.params; // store in the second, overwritable slot
                    dataObject[pid]['time'] = now; // time of the last overwrite
                }
                break;
            case 'stat.gif':
                counter++;
                if (pid != undefined) {
                    if (dataObject[pid] == undefined) // create the record if it does not exist yet
                        dataObject[pid] = {};
                    dataObject[pid]['stat'] = data.params; // store in the first slot
                    // Time of the first write, so that views closed before
                    // the first p.gif request are still detected
                    dataObject[pid]['time'] = now;
                }
                break;
            default:
                break;
        }
    });

Also, a timer runs in the master process; it examines the entries in the array and inserts into MongoDB those that have not changed for more than 90 seconds.

Data storage

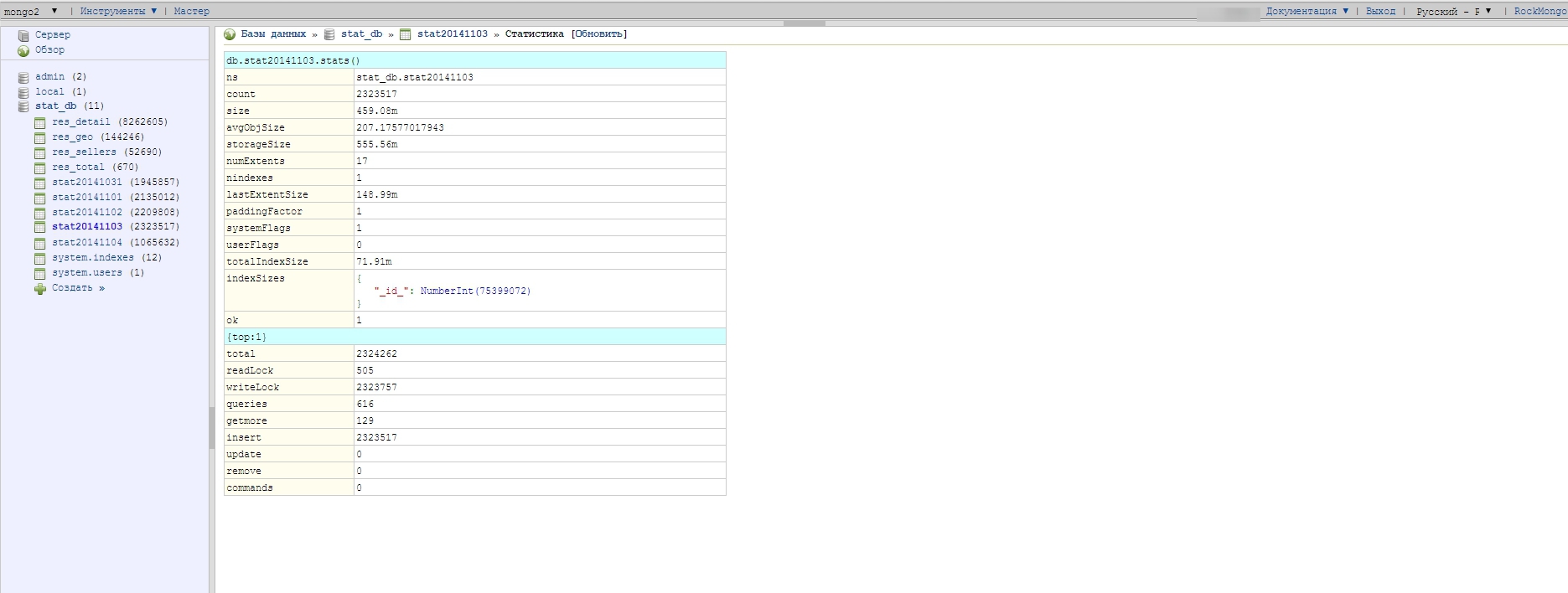

Data storage has its own nuances too. After various experiments we concluded that storing all the data in one collection (the analog of a table in MySQL) is a bad idea. We decided to create a new collection every day, which is easy in MongoDB: if a collection does not exist and you try to write to it, it is created automatically. As a result, while running, the server writes data to a collection with the date in its name: stat20141102, stat20141103, stat20141104.
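
Deriving the collection name from the date can be sketched as below. The helper name dailyCollectionName is hypothetical, and UTC is used only to keep the example deterministic; which time zone the real server used is not stated.

```javascript
// Hypothetical helper: returns the name of the daily collection for a date,
// in the "stat" + YYYYMMDD form used in the collection names above.
function dailyCollectionName(date) {
  function pad(n) { return (n < 10 ? '0' : '') + n; }
  return 'stat' + date.getUTCFullYear() + pad(date.getUTCMonth() + 1) + pad(date.getUTCDate());
}

console.log(dailyCollectionName(new Date(Date.UTC(2014, 10, 3)))); // stat20141103
```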

Database structure:

Structure of one document (one document corresponds to one view):

The data for one day is fairly large: about 500 megabytes with 1/10 sampling (statistics are collected for only 10% of visitors), so without sampling a collection would take about 5 gigabytes per day. Collections with raw data are kept for only 5 days and then deleted as no longer needed, because aggregator scripts run from cron, process the raw data and write it in a more compact, precomputed form to other collections, which are used to build graphs and reports.

Report Building

Initially, reports were built using find() and Map-Reduce. The collection.find() method was used for simple selections, while more complex ones were built with Map-Reduce. The second approach is the harder one: it required a solid understanding of distributed computing and practical experience. Tasks that are solved in MySQL with AVG, SUM and ORDER BY required certain tricks in Map-Reduce to get the result. The release of the stable MongoDB 2.2 was a welcome gift at that moment: it introduced the Aggregation Framework, which made it easy and fast to build complex selections from the database without resorting to Map-Reduce.

An example of a query using aggregate (it groups the data by video id and computes sums and averages of the metrics):

    db.stat20141103.aggregate([
        { $match: { $nor: [{ ap: { $gt: 20 }, loaded: 0 }] } },
        { $group: {
            _id: "$video_id",
            sum: { $sum: 1 },
            active: { $sum: "$active" },
            passive: { $sum: "$passive" },
            buffer: { $sum: "$buffer" },
            rewind: { $avg: "$rewindn" },
            played: { $sum: "$played" }
        } }
    ]);
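
To make the logic of this pipeline easier to follow, here is a plain-JavaScript sketch of roughly what it computes over an in-memory array. The function name aggregateByVideo and the sample documents are invented for the illustration, the fields are assumed to be numeric, and this is in no way a replacement for running the query in MongoDB.

```javascript
// Plain-JavaScript sketch of what the pipeline above computes,
// assuming every document carries the numeric fields used by the query.
function aggregateByVideo(docs) {
  var groups = {};
  docs.forEach(function (doc) {
    // $match with $nor: drop documents where ap > 20 AND loaded is 0
    if (doc.ap > 20 && doc.loaded === 0) return;
    var g = groups[doc.video_id];
    if (!g) {
      g = groups[doc.video_id] = {
        _id: doc.video_id, sum: 0, active: 0, passive: 0,
        buffer: 0, rewindTotal: 0, played: 0
      };
    }
    g.sum += 1; // {$sum: 1} counts the views in the group
    g.active += doc.active;
    g.passive += doc.passive;
    g.buffer += doc.buffer;
    g.rewindTotal += doc.rewindn;
    g.played += doc.played;
  });
  return Object.keys(groups).map(function (id) {
    var g = groups[id];
    return {
      _id: g._id, sum: g.sum, active: g.active, passive: g.passive,
      buffer: g.buffer, rewind: g.rewindTotal / g.sum, played: g.played
    };
  });
}

// Invented sample data: the third document is filtered out by the $nor condition.
var sample = [
  { video_id: 7, ap: 5, loaded: 1, active: 60, passive: 30, buffer: 2, rewindn: 1, played: 80 },
  { video_id: 7, ap: 0, loaded: 1, active: 40, passive: 10, buffer: 0, rewindn: 3, played: 45 },
  { video_id: 7, ap: 25, loaded: 0, active: 1, passive: 0, buffer: 0, rewindn: 0, played: 0 }
];
var report = aggregateByVideo(sample);
console.log(report);
```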

Deploy and Debug

For all this to work well under high load, the operating system and the database need some tuning:

- In the OS itself, we had to increase the number of file descriptors. On Ubuntu this is:

    # /etc/security/limits.conf
    # Raise the file descriptor limit (each connection needs one).
    * - nofile 1048576

  On other Linux systems, the corresponding settings are in the /etc/sysctl.conf file.

- To speed up MongoDB, the database files were placed on an SSD. We also had to adjust the database config: we turned off journaling and tuned how often data is flushed to disk (storage.syncPeriodSecs, which sets how often MongoDB flushes data from RAM to disk).

    # /etc/mongodb.conf
    journal:
        enabled: false

P.S. I decided to supplement the article with answers to questions from the comments:

What was the rationale for choosing MongoDB as the database?

We chose MongoDB because we already had positive experience with it. I think PostgreSQL could also have handled this task, possibly with even better results.

How and where was the PID generated?

PID is a unique field; it should identify a unique view. It is generated from the unique user id (which is itself generated from the IP and some other information) plus the current Unix timestamp, the User-Agent, the screen resolution and a random value.

If you open a page, a PID is created; if you immediately reload the same page, you get a completely different PID.

It is generated in JavaScript on the user's page; the unique user id comes from the server via cookies.
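
A sketch of what such generation might look like is given below. The exact format is not described in the article; this version simply concatenates the user id, a millisecond timestamp, the screen size and a short random suffix, which resembles the sample pid in the requests shown earlier but is an assumption. The User-Agent component is left out for brevity, and the name makePid is hypothetical.

```javascript
// Hypothetical sketch of PID generation. `userId` would come from a cookie set
// by the server; `now` and `random` can be injected to make the result predictable.
function makePid(userId, screen, now, random) {
  now = now === undefined ? Date.now() : now;
  random = random || Math.random;
  // Four random base-36 characters as the suffix
  var suffix = '';
  for (var i = 0; i < 4; i++) {
    suffix += Math.floor(random() * 36).toString(36);
  }
  return String(userId) + now + screen.width + screen.height + suffix;
}

// Deterministic demonstration with a fixed timestamp and a fixed "random" source.
var pid = makePid('p0oGejy', { width: 1680, height: 1050 }, 1390553230222, function () { return 0.5; });
console.log(pid); // p0oGejy139055323022216801050iiii
```

Because the timestamp and the random suffix change on every call, reloading the page yields a different PID, as described above.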

Was journaling enabled? Backup, fault tolerance? And fault tolerance at Node level?

Journaling is off: losing a few minutes of this statistics data is not critical for this project.

Fault tolerance of Node: if you have worked with it, you know that any minor error in a worker process brings that process down, and an error in the master brings down the whole web server.

We solved it as follows: the master process monitored the workers and restarted them if they crashed.

For crashes of the master process we used forever: if the server crashed, forever restarted it immediately and wrote the error it crashed with to the log.
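
The worker restart logic can be sketched as follows. This is a minimal illustration, not the project's code: the helper name superviseWorkers is made up, and the cluster object is passed in as a parameter so that the function can be tried without forking real processes. It relies only on the fork() method and the 'exit' event that Node's cluster module provides.

```javascript
// Hypothetical helper: forks `count` workers and forks a replacement whenever
// one exits. `clusterApi` is expected to behave like Node's `cluster` module:
// a fork() method returning a worker, and an 'exit' event on the module itself.
function superviseWorkers(clusterApi, count, onMessage) {
  function startWorker() {
    var worker = clusterApi.fork();
    worker.on('message', onMessage); // data sent by the worker via process.send()
    return worker;
  }
  for (var i = 0; i < count; i++) {
    startWorker();
  }
  clusterApi.on('exit', function (worker, code, signal) {
    console.error('worker exited (code ' + code + ', signal ' + signal + '), restarting');
    startWorker();
  });
}
```

In a real server this might be called from the master as superviseWorkers(require('cluster'), require('os').cpus().length, handler); the choice of one worker per CPU core is an assumption, since the article does not say how many workers were started.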

What is the normal delay to receiving reports? Last day aggregation or as close to realtime as possible?

There are internal statistics that record some basic traffic figures and are available in real time. The statistics described in this article are aggregated at 1-hour intervals, which was quite enough.

What was wrong with Google Analytics (or Yandex.Metrica)? From what you wrote, it seems everything could be done with them.

There was a lot of traffic, so Google was already using sampling to build the reports; in addition, a letter came from them asking us to reduce the load on their services.

Why not the Apache Storm / Hadoop / Spark stack, which is standard for this class of tasks?

I am not familiar with Apache Storm / Hadoop / Spark, and I do not write Java.

Initially, I had a lot of experience writing PHP / MySQL / JS / HTML code, and NodeJS uses JS syntax for the most part, so the choice fell on it.

During the discussion on Habr an interesting idea also came up: to replace the NodeJS part with nginx, which would write all the data to its log in the required format, while a separate application would read this log as it is written, process the data and insert it into the database. Under the current conditions of the task this could well be implemented. The main argument for this option: nginx is a very reliable solution, proven over the years.