As I wrote my bike to rotate tables in Oracle and Postgre

Greetings, Habr!

In this article I will talk about how we struggled with the problem of rapidly growing the size of tables in the database in a highly loaded EMS system. The highlight is that the problem was solved for two databases: Oracle and Postgre. Interested, I ask for cat.

So, there is a certain EMS system that receives and processes messages from network elements. A record of each message is entered in the database tables. According to customer requirements, the number of incoming messages (and, accordingly, the number of entries in the tables) is on average 100 per second, while the peak load can increase up to 1500.

It is easy to calculate that more than 8 million entries per table are typed on average. The problem appeared when it turned out that with data volumes of more than 20 million lines, some system requests begin to slow down and go beyond the time limits requested by the customer.

Thus, it was necessary to figure out what to do with the data, so that no information was lost and the queries worked quickly. At the same time, the system initially worked on Postgre, but in the near future it was planned to switch to Oracle and I wanted to have a minimum of problems with the transfer of functionality during the transition.

The option of using partitioning disappeared immediately, because it was known that Oracle Partitioning would definitely not be included in the license, and I didn’t really want to redo from one parting to another, so we began to think about implementing some kind of bicycle.

Significantly facilitated the problem that logs older than a couple of days are not needed to display in the system, because to investigate the vast majority of problems should have been enough messages two days ago. But to store them "just in case of a fireman" is still necessary. It was then that the idea was born to implement procedures for periodically “rotating” data in tables, i.e. transferring them from tables to display in some historical tables.

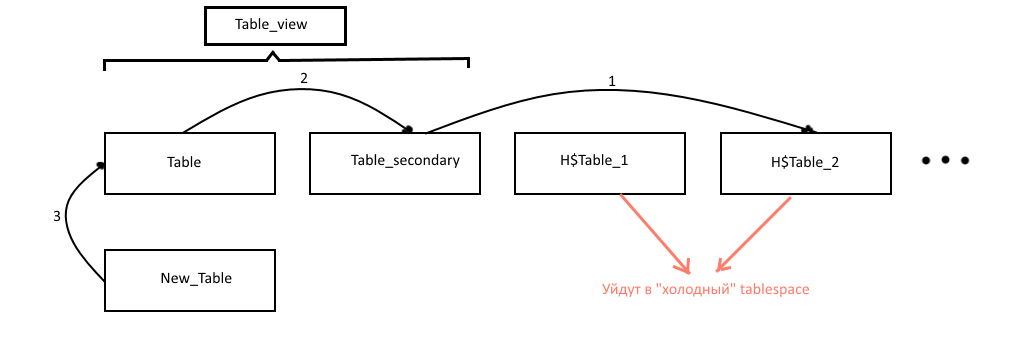

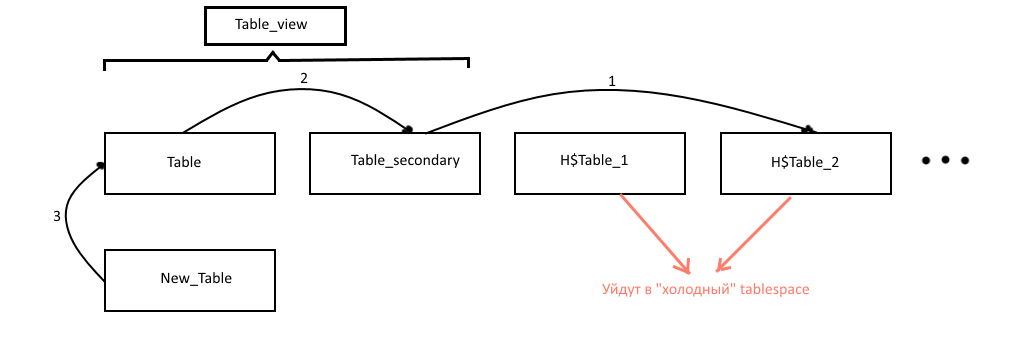

It was decided to keep 2 tables with the most relevant data for display (let's call them table - the main and table_secondary - additional). The table_view view from which the data for display was taken was hung on these two tables: it is necessary so that after the moment of data transfer to the UI all records would not disappear sharply. Older records are transferred to historical tables with names like H $ table_NUM, where NUM is the number of the historical table (the older the data, the higher the number). Historical tables, so as not to clog the main tablespace, are also periodically dragged into the “cold” tablespace, whose tables can be stored on slow disks. This operation, generally speaking, is difficult, therefore it is less often done as a separate procedure. In addition, this same procedure removes too old tables from the "cold" tablespace.

Regarding how the data is migrated: due to the large number of indexes on the tables, directly transferring records using insert worked slowly, therefore, the approach was chosen with renaming tables and re-creating indexes and triggers.

Schematically, the operation of the procedures is shown in the figure:

So, the operation algorithm turned out to be something like this (I give the algorithm and examples of the procedure code for oracle, for postgre you can look at github):

Procedure rotate_table (primary_table_name). Running, say, every hour.

Procedure move_history_logs_to_cold_ts (primary_table_name). It is carried out, for example, once a day.

Scheduled procedures were started using Quartz Sheduler in the case of Postgre, and with the help of Oracle Scheduler in the case of Oracle, the scripts for the configuration of which are also in the sources.

The full source of procedures and scripts for configuring the sheduler can be viewed on GitHub .

Thanks for attention!

In this article I will talk about how we struggled with the problem of rapidly growing the size of tables in the database in a highly loaded EMS system. The highlight is that the problem was solved for two databases: Oracle and Postgre. Interested, I ask for cat.

Initial conditions

So, there is a certain EMS system that receives and processes messages from network elements. A record of each message is entered in the database tables. According to customer requirements, the number of incoming messages (and, accordingly, the number of entries in the tables) is on average 100 per second, while the peak load can increase up to 1500.

It is easy to calculate that more than 8 million entries per table are typed on average. The problem appeared when it turned out that with data volumes of more than 20 million lines, some system requests begin to slow down and go beyond the time limits requested by the customer.

Task

Thus, it was necessary to figure out what to do with the data, so that no information was lost and the queries worked quickly. At the same time, the system initially worked on Postgre, but in the near future it was planned to switch to Oracle and I wanted to have a minimum of problems with the transfer of functionality during the transition.

The option of using partitioning disappeared immediately, because it was known that Oracle Partitioning would definitely not be included in the license, and I didn’t really want to redo from one parting to another, so we began to think about implementing some kind of bicycle.

Significantly facilitated the problem that logs older than a couple of days are not needed to display in the system, because to investigate the vast majority of problems should have been enough messages two days ago. But to store them "just in case of a fireman" is still necessary. It was then that the idea was born to implement procedures for periodically “rotating” data in tables, i.e. transferring them from tables to display in some historical tables.

Solution and implementation

It was decided to keep 2 tables with the most relevant data for display (let's call them table - the main and table_secondary - additional). The table_view view from which the data for display was taken was hung on these two tables: it is necessary so that after the moment of data transfer to the UI all records would not disappear sharply. Older records are transferred to historical tables with names like H $ table_NUM, where NUM is the number of the historical table (the older the data, the higher the number). Historical tables, so as not to clog the main tablespace, are also periodically dragged into the “cold” tablespace, whose tables can be stored on slow disks. This operation, generally speaking, is difficult, therefore it is less often done as a separate procedure. In addition, this same procedure removes too old tables from the "cold" tablespace.

Regarding how the data is migrated: due to the large number of indexes on the tables, directly transferring records using insert worked slowly, therefore, the approach was chosen with renaming tables and re-creating indexes and triggers.

Schematically, the operation of the procedures is shown in the figure:

So, the operation algorithm turned out to be something like this (I give the algorithm and examples of the procedure code for oracle, for postgre you can look at github):

Procedure rotate_table (primary_table_name). Running, say, every hour.

- Check that the number of rows in the main table has exceeded a certain limit;

- Check that there is a “cold” tablespace:

SELECT COUNT(*) INTO if_cold_ts_exists FROM USER_TABLESPACES WHERE tablespace_name = 'EMS_HISTORICAL_DATA'; - Create an auxiliary empty table new_table based on the current main table. For this, postgre has a convenient CREATE TABLE ... (LIKE ... INCLUDING ALL) functional, but for Oracle it was necessary to write its own analogue - create_tbl_like_including_all (primary_table_name, new_table_name, new_idx_trg_postfix, new_idx_trg_prefix), which creates a similar empty table

SELECT replace(dbms_metadata.get_ddl('TABLE', primary_table_name), primary_table_name, new_table_name) INTO ddl_query FROM dual; ddl_query := substr(ddl_query, 1, length(ddl_query) - 1); EXECUTE IMMEDIATE ddl_query;

And also triggers and indexes to it:FOR idx IN (SELECT idxs.index_name FROM user_indexes idxs WHERE idxs.table_name = primary_table_name) LOOP ddl_query := REPLACE( REPLACE(dbms_metadata.get_ddl('INDEX', idx.index_name), primary_table_name, new_table_name), idx.index_name, new_idx_trg_prefix || idx.index_name || new_idx_trg_postfix); ddl_query := substr(ddl_query, 1, length(ddl_query) - 1); EXECUTE IMMEDIATE ddl_query; END LOOP; - Rename the tables:

EXECUTE IMMEDIATE 'alter table ' || secondary_table_name || ' rename to ' || history_table_name; EXECUTE IMMEDIATE 'alter table ' || primary_table_name || ' rename to ' || secondary_table_name; EXECUTE IMMEDIATE 'alter table ' || new_table_name || ' rename to ' || primary_table_name; - Rename triggers and indexes for them;

- If the cold tablespace does not exist, then we consider that we do not need to store historical data, and delete the corresponding table:

EXECUTE IMMEDIATE 'drop table ' || history_table_name || ' cascade CONSTRAINTS'; - Rebuild the view (only for oracle):

EXECUTE IMMEDIATE 'select * from ' || view_name || ' where 1=0';

Procedure move_history_logs_to_cold_ts (primary_table_name). It is carried out, for example, once a day.

- If a “cold” tablespace exists, look for all historical tables that are not in this tablespace:

EXECUTE IMMEDIATE 'select table_name from user_tables where table_name like ''' || history_table_pattern || ''' and (tablespace_name != ''EMS_HISTORICAL_DATA'' or tablespace_name is null)' BULK COLLECT INTO history_tables; - Move each table to the “cold” tablespace:

EXECUTE IMMEDIATE 'ALTER TABLE ' || history_tables(i) || ' MOVE TABLESPACE ems_historical_data'; - We delete triggers and indexes for the moved tables;

- We delete too old tables from the "cold" tablespace.

Scheduled procedures were started using Quartz Sheduler in the case of Postgre, and with the help of Oracle Scheduler in the case of Oracle, the scripts for the configuration of which are also in the sources.

Conclusion

The full source of procedures and scripts for configuring the sheduler can be viewed on GitHub .

Thanks for attention!