FAQ on data center cooling: how to make it cheap and cheerful, reliable, and sized to fit the site

- What is the usual choice for cooling a data center?

- Freon cooling. Well established, simple and affordable, but the main disadvantage is limited room to maneuver on energy efficiency. Physical limits on the length of the pipe run between the outdoor and indoor units also often get in the way.

- Systems with water and glycol solutions. That is, freon is still the refrigerant, but a different substance acts as the coolant that carries the heat. The pipe run can be longer, but the main point is that a wide range of options opens up for adjusting the system's operating modes.

- Combined systems: the air conditioner can use both freon and water cooling (there are a lot of nuances).

- Cooling with outdoor air, that is, the various free cooling options: rotary heat exchangers, direct and indirect coolers, and so on. The general idea is either direct heat removal with filtered air, or a closed system where outdoor air cools the indoor air through a heat exchanger. You need to look at what the site allows, since unexpected solutions are possible.

- So let's look at classic freon systems. What's the problem?

Classic freon systems work fine in small server rooms and, less often, in mid-sized data centers. Once the machine room goes above 500-700 kW, problems arise with placing the outdoor units of the air conditioners: there is simply not enough space for them. You have to look for free areas away from the data center, but then the maximum pipe run length gets in the way (it is not long enough). Of course, you can design the system at the limit, but then losses in the circuit increase, efficiency drops, and operation becomes more complicated. As a result, purely freon systems are often a poor deal for medium and large data centers.

- How about using an intermediate coolant?

That's right! If an intermediate coolant is used (for example, water, propylene glycol or ethylene glycol), the length of the run becomes almost unlimited. The freon circuit does not go away, of course, but it now cools the coolant rather than the machine rooms themselves. The coolant then travels along the main line to the consumer.

- What are the pros and cons of intermediate coolants?

Well, first of all, a lot of space is saved on the roof or the adjacent grounds. A freon line for a future load increase has to be laid in advance and capped off, whereas with water you can add new units as they are needed (or suddenly become necessary), provided a pipeline of the required diameter was installed beforehand. You can make the branch connections in advance and then just hook up to them. The system is more flexible and easier to scale. The downside of a system with an intermediate coolant is higher heat losses and a larger number of energy consumers. For small data centers, the specific cooling cost per rack is higher than with freon systems. You also need to keep freezing in mind: water cannot tolerate frost, ethylene glycol is considered hazardous at a number of sites, and propylene glycol is less efficient. We look for a compromise depending on the task.

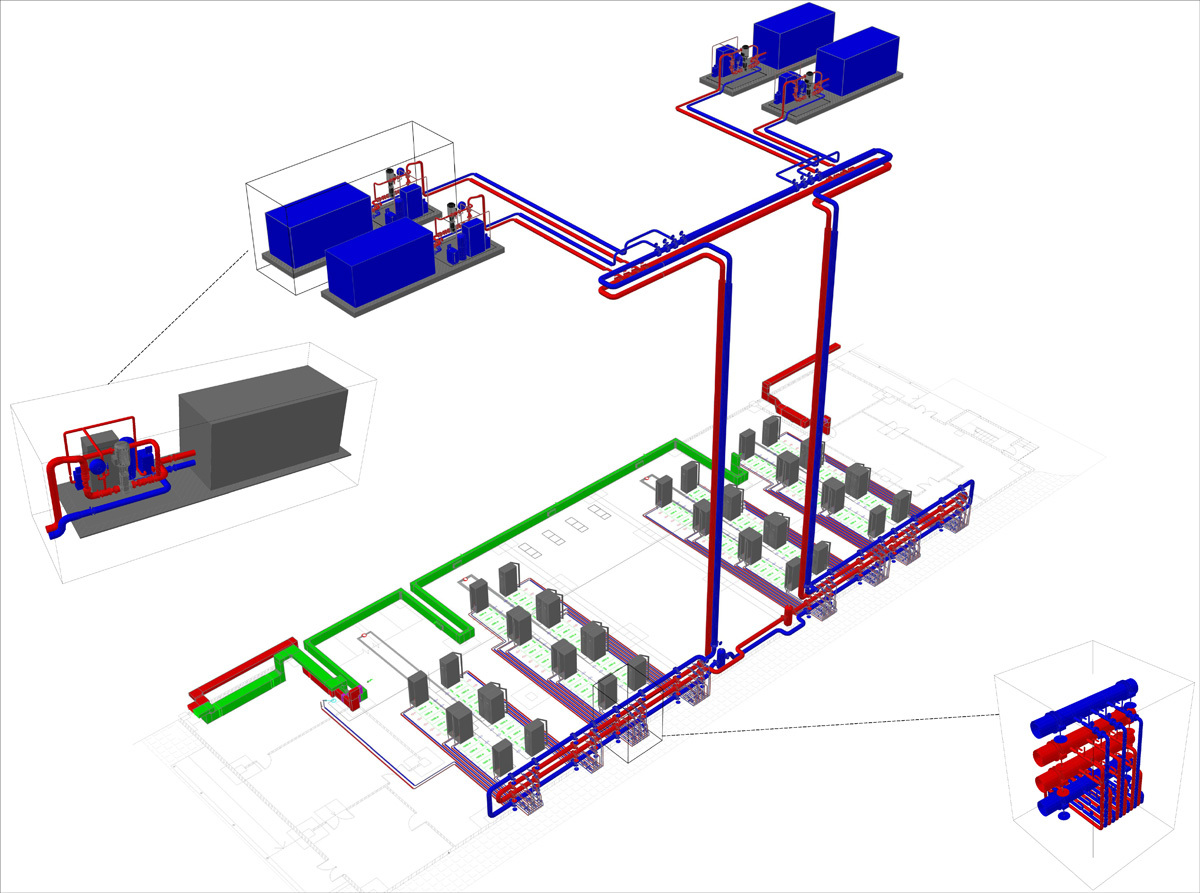

A typical project looks like this

- Are there passive cooling systems?

Yes, but as a rule they are not used for data centers. Passive cooling is feasible for small rooms with 1-3 racks. So-called passive heat dissipation copes with a total load of no more than 2 kW per room, whereas data centers usually run at 6 kW per rack already, with high placement density, so it is not applicable.

- How about cooling through a heat exchanger in a nearby lake?

Great technology. We did a similar project for a major customer in Siberia. It is very important to calculate the mode and the operating point you can count on all year round, and to consider risks that are not directly related to the operation of the data center. Roughly speaking, the idea is this: a heat exchanger is immersed in a lake or river, and a coolant line is run to it. From there it is ordinary free cooling, only with water instead of air in the external circuit.

- You mentioned some kind of life hack with outdoor cooling.

Yes, there are alternative options, such as using the recirculated process water of industrial plants. It is not exactly a life hack, but such opportunities should not be overlooked.

- I heard that geothermal probes are used at small sites...

Yes, you can see such solutions at base stations and similar facilities with a couple of racks at most. The principle is the same as with the lake: a heat exchanger is buried underground, and thanks to the constant temperature difference it can cool the hardware inside the container directly. The technology is well established and well tested, and if necessary it can be supplemented with a backup freon unit.

- And for large sites, the only way to save is free cooling, right?

Yes, from an economic standpoint, free cooling systems are among the most interesting options. Their main feature is that we rely on the external environment, so there can still be days when the outdoor air is too warm. In that case it has to be cooled further using traditional methods. It is rarely possible to do without backup systems, but that is the main direction in designing such systems: dropping the backup freon circuit entirely.

- And what is the optimal solution?

There is no universal optimal solution, trite as that sounds. If the climate allows, we use "pure" free cooling 350 days a year, and on the 15 days when it is especially hot we cool the air with some additional traditional system. On transition days, when free cooling alone is not enough, we switch to mixed mode. The desire to build a system with many modes runs up against its growing cost. Simply put, given how rarely such a traditional system is in demand, it should be cheap to purchase and install, without stringent requirements for efficiency under load. Ideally, we always try to size the system so that in a specific region, under normal climate conditions (as over the last 10 years), the traditional system never switches on at all. It’s clear that the climate of some regions,

- And how do energy costs compare across the system's operating modes?

The scheme is simple: we take cold air from outside and blow it into the data center (with or without a heat exchanger, it does not matter). If the air is cold enough, everything is fine and the system consumes, say, 20% of its power. If the air is warm, it has to be cooled further, and we add another 80% of power consumption. Naturally, you want to "cut out" that part and run on cold air.
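As a rough illustration of these figures, the year-round average power draw of the cooling system can be sketched as a day-weighted average. The sketch assumes the 350/15 day split mentioned earlier and reads "20% plus another 80%" as 20% of full power in free cooling mode and 100% in traditional mode. Mixed-mode transition days are ignored, and the function name is made up for the example.

```python
def average_cooling_power_fraction(free_days, traditional_days,
                                   free_fraction=0.20,
                                   traditional_fraction=1.00):
    """Day-weighted average power draw as a fraction of full cooling power.

    Assumption (not from the article's measurements): the system draws
    free_fraction on free cooling days and traditional_fraction otherwise.
    """
    total_days = free_days + traditional_days
    if total_days <= 0:
        raise ValueError("total number of days must be positive")
    weighted = (free_days * free_fraction
                + traditional_days * traditional_fraction)
    return weighted / total_days


average = average_cooling_power_fraction(free_days=350, traditional_days=15)
print(f"Average draw: {average:.1%} of full cooling power")
```

Under these assumptions the 15 hot days contribute 15 of the 85 "full-power day equivalents" per year, which is why it is so tempting to cut the traditional mode out entirely.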

- But in summer the temperature is not always right for pure free cooling. Can its operating time be extended?

For example, we always have a margin of several degrees because the air can be passed through an evaporation chamber. A few degrees means hundreds of operating hours a year. This is a rough example of how to make the scheme more economical by complicating the system; the essence of design is to reach the optimal solution by weighing dozens of such engineering techniques. Additional resources (water, for example) are often needed. And it depends heavily on the climate.

- And what are the temperature losses across the heat exchangers with free cooling?

An example. In Moscow and St. Petersburg, at an outdoor air temperature of 21 degrees Celsius, the coolant will be at approximately 23 degrees, and the temperature in the hall will be 25 degrees. The details matter, of course. You can do without an intermediate coolant and save a couple of degrees. The adiabatic humidification mentioned above, for instance, gains 7 degrees in some regions, but it does not work in a humid climate (the air is already quite humid).
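The temperature chain in this example can be written down as a small sketch. It assumes that each heat-exchange stage costs a fixed 2 degrees (inferred from the 21, 23, 25 figures above) and that adiabatic humidification simply lowers the effective outdoor temperature. Real heat exchangers are not this linear, and all names here are hypothetical.

```python
# Assumption inferred from the example: about 2 degrees lost per stage
# (outdoor air -> coolant, coolant -> hall air).
APPROACH_PER_STAGE_C = 2.0


def hall_temperature(outdoor_c, stages=2, adiabatic_gain_c=0.0):
    """Estimated hall temperature for a given outdoor air temperature."""
    return outdoor_c - adiabatic_gain_c + stages * APPROACH_PER_STAGE_C


def max_outdoor_for_free_cooling(hall_limit_c, stages=2, adiabatic_gain_c=0.0):
    """Warmest outdoor air at which pure free cooling still holds the limit."""
    return hall_limit_c - stages * APPROACH_PER_STAGE_C + adiabatic_gain_c


print(hall_temperature(21.0))                      # with intermediate coolant
print(hall_temperature(21.0, stages=1))            # without intermediate coolant
print(max_outdoor_for_free_cooling(25.0, adiabatic_gain_c=7.0))
```

The third call uses the 7-degree gain quoted for some regions, so it is a best case for a dry climate rather than a general figure.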

- It has become clearer. Freon for small data centers; freon plus water or similar systems for large sites. Free cooling is better if the climate allows. Right?

In general, yes. But all of this has to be calculated, because each system has a lot of nuances. For example, if the data center happens to be powered by an autonomous energy center with trigeneration, it is much more logical to use the heat from electricity generation in an absorption chiller (ABCM) and cool the data center that way. In this case you can worry less about energy costs. But a host of other questions arises, such as the continuity of electricity generation and whether the recovered heat is sufficient.

- How much does the efficiency of a chiller and fan coil system vary?

This is a very good question, especially nice when it comes from customers who know the subject. It all depends on the specific site. In some places a high-EER chiller is unjustifiably expensive and simply never pays off. Keep in mind that manufacturers are constantly experimenting with compressors, refrigeration circuits and loading modes. At the moment there is a certain efficiency ceiling for the chiller and fan coil scheme, which can be assumed in the calculations and approached from below. Within the same scheme, quite large swings in price and efficiency are possible depending on the selection of units and their compatibility. Unfortunately, it is hard to give figures, because they differ for each region. But, for example, for Moscow a PUE of 1.1 for the air conditioning system is considered a success.

- A silly question about free cooling: if it is -38 degrees Celsius outside, will it be the same in the hall?

Of course not. That is what the system is for: to hold the set parameters all year round under any outdoor conditions. Too low a temperature is, of course, harmful to the hardware: the dew point is close, with all the consequences. As a rule, the temperature in the cold aisle is set in the range from +17 to +28 degrees Celsius. Commercial data centers have an SLA range, for example 18-24, and dropping below the lower limit is as unacceptable as exceeding the upper one.

- And what are the problems of combined systems at subzero temperatures?

If part of the water circuit runs outdoors, it will definitely freeze, destroying the pipeline and stopping the circuit. That is why propylene glycol or ethylene glycol is used. But this is not always enough. At our Compressor data center, for example, idle outdoor units are "warmed up" before being switched on by a line with coolant at working temperature that passes through them.

- Once more: what is the usual practice for choosing a cooling system?

Up to 500 kW, usually freon. From 500 kW to 1 MW, we move to "water", but not always. Above 1 MW, the economic case for a compressor-free cooling system (rather heavy in terms of capital costs) becomes positive. Today few can afford to build a data center of 1 MW or more without free cooling if long-term operation is expected.
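This rule of thumb can be captured in a few lines, purely as a starting point for discussion. The function name and the returned labels are invented for the illustration, the exact handling of the 500 kW and 1 MW boundaries is an assumption, and the answer's own caveats ("usually", "not always") still apply.

```python
def usual_cooling_choice(load_kw):
    """Rule-of-thumb first guess at a cooling scheme by data center load.

    Thresholds follow the 500 kW / 1 MW figures from the text; treating
    the boundaries as inclusive is an assumption of this sketch.
    """
    if load_kw <= 0:
        raise ValueError("load must be positive")
    if load_kw <= 500:
        return "freon"
    if load_kw <= 1000:
        return "freon plus water/glycol loop (not always)"
    return "compressor-free cooling (free cooling)"


for load in (200, 800, 2500):
    print(load, "kW ->", usual_cooling_choice(load))
```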

- Can you go over it once more in simple terms?

Yes. Direct freon is cheap and cheerful to implement, but very expensive to operate. Freon plus water means space savings, scalability and reasonable costs for large sites. Compressor-free cooling is expensive to implement, but very cheap to operate. The calculation uses, for example, a 10-year case and considers capital and operating costs together.
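A minimal sketch of such a comparison over a 10-year horizon is shown here. The cost figures are made-up numbers in arbitrary units chosen only to show the mechanics, and the sketch ignores discounting, energy price growth and equipment replacement, all of which a real calculation would include.

```python
def total_cost(capex, annual_opex, years=10):
    """Capital plus operating cost over the horizon (no discounting)."""
    return capex + annual_opex * years


def breakeven_years(capex_a, opex_a, capex_b, opex_b):
    """Years after which option B (higher capex, lower opex) beats option A.

    Returns None if B never catches up.
    """
    if opex_a <= opex_b:
        return None
    return (capex_b - capex_a) / (opex_a - opex_b)


# Hypothetical figures, arbitrary units: not taken from any real project.
freon = {"capex": 100.0, "annual_opex": 60.0}
free_cooling = {"capex": 300.0, "annual_opex": 20.0}

print("freon, 10 years:       ", total_cost(**freon))
print("free cooling, 10 years:", total_cost(**free_cooling))
print("break-even, years:     ", breakeven_years(
    freon["capex"], freon["annual_opex"],
    free_cooling["capex"], free_cooling["annual_opex"]))
```

With these invented numbers the more expensive system pays for itself halfway through the horizon; with a shorter planned lifetime the cheaper-to-build option would win.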

- Why is free cooling so cheap to operate?

Because modern free cooling is economical in itself, drawing on an external, unlimited resource, and it combines the most energy-efficient solutions for the data center with a minimum of moving parts. And power accounts for about 20% of a data center's budget. Raise efficiency by a couple of percent and you save a million dollars a year.

- And what about redundancy?

Depending on the required level of data center resilience, N+1 or 2N redundancy of the cooling system components may be required. With standard freon systems this is fairly simple and has been refined over the years, but with recuperators, duplicating the whole system gets rather expensive. So each system is always evaluated for a specific data center.

- OK, what about power supply?

Not everything is trivial here either. Let's start with a simple thing: if the power goes out, you would need an incredible number of UPS batteries to keep the cooling system running until the diesel generator starts. For this reason, emergency power for the air conditioning system is often heavily cut back, so to speak, limited.

- And what are the options?

The most common way is a pool of pre-chilled water (as we have at the Compressor data center). It can be open or closed. The pool acts as a cold accumulator. When the power goes out, the water in the pool lets you keep cooling the data center in normal mode for another 10-15 minutes. Only the pumps and the air conditioner fans keep drawing electricity. That time is enough, with a large margin, to start the diesel generators.

Behind the lamps you can see the edge of the open pool. We have 90 tons of water there, which is 15 minutes of cooling with the chillers unpowered.
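The order of magnitude of that figure can be checked with a simple energy balance: stored cold equals mass times specific heat times the usable temperature rise, divided by the heat load. Only the 90 tons comes from the text. The 5 K usable temperature rise and the 2000 kW heat load are assumptions picked for illustration, and the sketch assumes ideal mixing with no losses.

```python
WATER_SPECIFIC_HEAT_KJ_PER_KG_K = 4.186  # approximate value for liquid water


def ride_through_minutes(water_mass_kg, usable_delta_t_k, heat_load_kw):
    """Minutes a chilled-water pool can absorb the heat load.

    Energy balance: m * c * dT [kJ] / P [kW = kJ/s], converted to minutes.
    """
    if heat_load_kw <= 0:
        raise ValueError("heat load must be positive")
    stored_kj = (water_mass_kg
                 * WATER_SPECIFIC_HEAT_KJ_PER_KG_K
                 * usable_delta_t_k)
    return stored_kj / heat_load_kw / 60.0


# 90 t of water (from the text); 5 K and 2000 kW are hypothetical inputs.
minutes = ride_through_minutes(water_mass_kg=90_000,
                               usable_delta_t_k=5.0,
                               heat_load_kw=2000.0)
print(f"Ride-through: {minutes:.1f} min")
```

The same formula shows why careful sizing matters: halving the required ride-through time or doubling the usable temperature rise halves the pool volume.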

- Open or closed pool: is there a difference?

An open pool is cheaper, does not need to be registered with the regulatory authorities (pressure vessels are subject to inspection under Russian standards), and deaerates naturally. A closed tank, on the other hand, can be located anywhere, and there are no special restrictions on the pumps. It does not bloom and does not smell. You pay for this with significantly higher capital and operating costs.

- What else do you need to know about the pools?

The simple fact is that few people size them correctly without long operating experience. We have cut our teeth on such systems and know that with the right design and a combination of approaches, the capacity can be reduced several times over. The result is cheaper to operate and smaller in volume and footprint. It is a matter of engineering approach.

- But what about the walls and the racks themselves? Do they also accumulate heat?

Practice shows that in design calculations, walls, racks and other objects in the machine room should not be counted as accumulators of cold or heat. Not that they are hard to calculate, but they are treated as an emergency reserve. Operators know that thanks to the cold stored in the walls, raised floor and ceiling, in a serious accident they will have roughly another 40 to 90 seconds to react. On the other hand, one customer had a case where an overheated data center room took several hours to cool down to the standard temperature with the air conditioning running at full capacity: the walls were giving back the heat they had stored. They were lucky: a room like that will surely last not half a minute after the cooling shuts off, but a full 3-5 minutes.

- And what if our data center has a diesel rotary UPS (DDIBP)?

A DDIBP is an excellent option for emergency power. In short, you keep a heavy flywheel spinning all the time, and maintaining its rotation costs almost nothing. As soon as the power goes out, the same flywheel starts feeding its stored energy into the network without interruption, which lets you switch circuits quickly. Accordingly, there is no problem of a long backup start (provided the diesel is ready for a quick start), and you can forget about the pool.

- What does the design process look like?

As a rule, the customer hands over preliminary data on the site and needs a rough estimate of several options. Often it is simply important to understand where costs and organizational difficulties are expected, or where the client already has them. Cooling is rarely ordered separately, so there are usually already input data on both power and redundancy. Since our systems end up far from the top lines of the data center budget, colleagues joke that we deal with "odds and ends". Nevertheless, a lot in operation depends on the quality of the design and its implementation. Then, as a rule, the customer or the contractor (that is, we) settles on one of the options for the whole set of systems. We calculate the details and "tune" each link by selecting the right equipment and modes. A fragment of the schematic is shown above.

- Are there any peculiarities with tenders?

Yes. A number of our solutions are cheaper and more efficient than those of other bidders. Experience tells: after all, we have built more than a hundred data centers across the country. But people are surprised and ask where such calculations come from. We have to come and explain everything with temperature graphs. Then they pass all of this on to our competitors so that they can revise their bids too. And a new round begins. Then come further questions: choosing the right pumps, refrigeration machines and dry coolers so that they are affordable and work efficiently.

- And in more detail?

Take the chiller, for instance. The first thing everyone looks at is cooling capacity, then they fit it into the required dimensions, and the third step is comparing the options (if any) by annual energy consumption. This is the classic approach, and as a rule people go no further. We started paying attention to how the chiller operates at full and partial load. Its efficiency varies with how heavily it is loaded, so it is important, among other things, to choose the redundancy of the whole system by the number of chillers in such a way that, with all chillers constantly running, each one sits at its most efficient operating point. This is a very crude example, but there is a sea of such details.
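The idea of picking the number of chillers around the most efficient load point can be sketched roughly like this. The sketch assumes identical units, N+1 redundancy with all units running and sharing the load equally, and a single "best efficiency" load fraction supplied by the caller. In practice that value would come from the manufacturer's part-load data, and every name and number here is hypothetical.

```python
def pick_chiller_count(load_kw, unit_capacity_kw, best_load_fraction,
                       max_units=12):
    """Return (unit count, per-unit load fraction) closest to the best point.

    Only counts that still cover the load with one unit out of service
    (N+1) are considered; all installed units are assumed to run.
    """
    if load_kw <= 0 or unit_capacity_kw <= 0:
        raise ValueError("load and unit capacity must be positive")
    best = None
    for n_units in range(2, max_units + 1):
        # N+1 check: the remaining units must carry the full load.
        if unit_capacity_kw * (n_units - 1) < load_kw:
            continue
        load_fraction = load_kw / (unit_capacity_kw * n_units)
        distance = abs(load_fraction - best_load_fraction)
        if best is None or distance < best[0]:
            best = (distance, n_units, load_fraction)
    if best is None:
        raise ValueError("no unit count up to max_units covers the load")
    return best[1], best[2]


# Hypothetical inputs: 1000 kW load, 400 kW units, best efficiency at 60% load.
count, fraction = pick_chiller_count(1000.0, 400.0, best_load_fraction=0.6)
print(f"{count} chillers, each at {fraction:.1%} load")
```

A fuller version would weigh the efficiency curve over the whole year rather than a single point, but the structure of the decision stays the same.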

Another typical example is a chiller with built-in free cooling versus one with an external cooling tower. Here, at the expense of footprint, you can significantly increase efficiency and controllability, which is often more important economically. At this point you need to find the right approach with the customer.

Cold air comes out from under the raised floor, cools the rack and rises to the ceiling, where the heated air is drawn out of the hall for cooling.

Preparing air for the machine room in the ventilation chamber.

Cooling tests at the Compressor data center. 100 kW heaters were placed in the hall and heated the air for 72 hours.

Chillers and dry coolers on the roof.

- What is the most common mistake when starting a data center?

Water, air and other media in the pipelines and rooms of the data center must be clean. The water in the mains is specially treated, positive pressure is maintained in the halls so that dust is not sucked in through the doors (this is shown very well in "When Sysadmins Ruled the Earth", the story about the epidemic), and nobody is allowed inside without shoe covers. The required level of cleanliness, I think, is clear. So before the data center goes into operation, you need to call in a cleaning company that will scrub the machine room as if processors were going to be assembled in it. Otherwise, by evening the cooling system will go into emergency mode because of clogged air filters. "The installers left, we started up the data center" is a fairly common case, despite our warnings. This happens about once a year: we come and change the filters. And if someone forgot to install the filters, we change the air conditioners themselves.

Questions

I hope I have helped you understand the basics of data center cooling. If you are interested in the details, ask here in the comments or write directly to astepanov@croc.ru. You can ask about specific sites, and I can describe the options and suggest non-obvious equipment solutions if needed.