Library for automation of acceptance testing in mobile applications

Preamble

I work for a company that makes a fairly large and, I am not afraid to say, bulky mobile application. It has a history of several years, which is a long time for a mobile application, and accordingly a fairly substantial and monstrous codebase.

The flow of customer wishes is diverse and plentiful, so from time to time we have to change even those parts of the code that were never designed for change. Some of the resulting problems, namely regression bugs, regularly cost us quite a few difficult hours.

At the same time, for one reason or another, the project has only manual testing and a rather impressive number of testers. Rather naive attempts at automation never got beyond a few trivial unit tests at the “Hello world” level.

In particular, the testing department has an impressive regression test cycle, which is run quite regularly and takes a decent amount of time. So at some point the task arose to somehow optimize this process. That is what this article is about.

Honestly, I no longer remember which automated acceptance testing tools I looked at or why they didn’t suit me. (I would be very grateful if someone in the comments suggested interesting options here - I probably missed something very worthwhile.) One thing is certain: since our application is essentially a thin client, there are a great many cases that are impossible (or at least I don’t know how) to cover with unit tests, and something else is needed. One way or another, it was decided to write a library to automate acceptance testing.

About the system itself and its use

So, this system must satisfy the following requirements:

- The system should be able to run tests at application runtime.

- The system should enable testers to translate tests from the human language to something that can be performed automatically.

- The system should cover acceptance tests in the sense that it should provide access in one way or another to the data that the user sees.

- The system must be portable. (It is desirable that it can be adapted to platforms other than iOS and, possibly, to other projects.)

- The system should make it possible to synchronize tests with an external source.

- The system should enable sending test results to an external service.

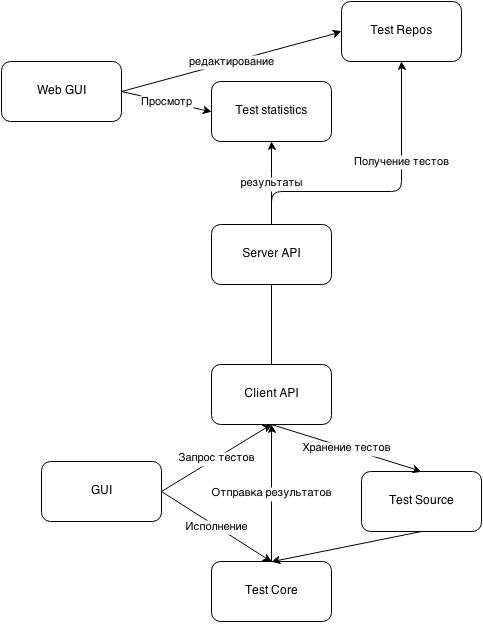

In large blocks, the testing system should consist of the following blocks:

A potential tester should act according to the following scheme:

- Go to the webGUI, log in, and write or edit some test cases and test plans

- Launch the application on the device and open the test interface (for example, with a three-finger tap at any point in the application)

- Get the test plans and test cases of interest from the server

- Run the tests. Some of them will pass, some will fail, and some (for example, UI tests) will require additional validation

- In the webGUI, open the execution history, find the tests that require additional validation and, based on the additional data (for example, screenshots taken at those moments), decide manually whether each test passed or not

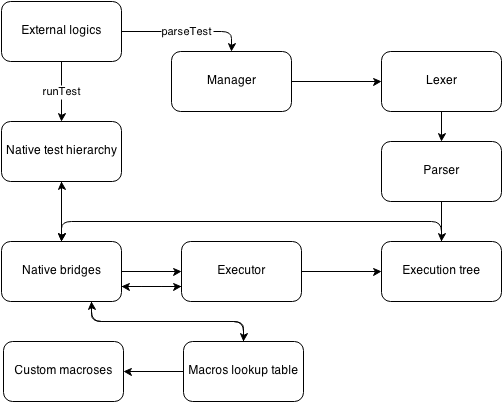

In this article, I would like to dwell on the TestCore module. It can be described in approximately the following way:

Procedure:

- Native macros are read and placed in a macro table, keyed by the names under which they can be called from a test case.

- Test case files are read and run through the lexer and parser, which build a syntax tree for execution.

- Test cases are placed in a table of test cases, keyed by name.

- A command to execute a test plan arrives from outside, and the user sees the test result.
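The first step of this procedure, a table of native macros keyed by name, can be sketched in plain C. This is only an illustration under my own assumptions: the names `macro_table`, `macro_table_register`, `macro_table_find`, and `demo_log` are hypothetical and are not taken from the TestCore sources, and a real table would probably use a hash map rather than a linear scan.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical macro signature: receives already evaluated string
   parameters and returns 1 on success, 0 on failure. */
typedef int (*macro_fn)(const char **params, size_t count);

typedef struct {
    const char *name;  /* key used in test cases, e.g. "log" */
    macro_fn fn;       /* native implementation */
} macro_entry;

#define MAX_MACROS 32

typedef struct {
    macro_entry entries[MAX_MACROS];
    size_t count;
} macro_table;

/* Finds a macro by name; returns NULL when the name is unknown. */
macro_fn macro_table_find(const macro_table *t, const char *name) {
    assert(t != NULL && name != NULL);
    for (size_t i = 0; i < t->count; i++)
        if (strcmp(t->entries[i].name, name) == 0)
            return t->entries[i].fn;
    return NULL;
}

/* Registers a macro; returns 0 on success, -1 when the table is full
   or the name is already taken. */
int macro_table_register(macro_table *t, const char *name, macro_fn fn) {
    assert(t != NULL && name != NULL && fn != NULL);
    if (t->count == MAX_MACROS || macro_table_find(t, name) != NULL)
        return -1;
    t->entries[t->count].name = name;
    t->entries[t->count].fn = fn;
    t->count++;
    return 0;
}

/* Example macro: succeeds only when it gets at least one parameter. */
int demo_log(const char **params, size_t count) {
    return count > 0 && params[0] != NULL;
}
```

With such a table, the executor only needs the name from the test case text to reach the native code: look it up with `macro_table_find` and call the returned pointer with the evaluated parameters.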

And from this point on, in more detail:

The test case is a structure of the following form:

Test header and the parameters passed to it

List of test actions

Terminal symbol.

A test action can be a macro call, arithmetic, or assignment. Here, for example, are some of the simplest examples that I used to verify the correctness of the system:

#simpleTest

/*simple comment*/

send("someSignal")

waitFor("response")->timeOut(3.0)->failSignals("signalError")->onFail("log fail")

#end

#someTest paramA,paramB

log("we have #paramA and #paramB")

#end

#mathTest

foo = 1 + 2

bar = foo * 3

failOn(bar == 9, "calculation is failed")

failOn(((1 + 2) < 5),"1 + 2 < 5 : false")

failOn(NOT((1 + 2) > 5), "1 + 2 > 5 : true")

failOn(NOT("abc" == "def"), "true equality of abc and def")

failOn("abc" == "abc", "false equality of abc and abc")

failOn(1 == 2, "compeletly wrong equality")

#end

The send, log, waitFor, and other operators are the macros I mentioned above. They are, in fact, native methods, and writing them is the job of the programmer, not the tester.

Here, for example, is the code of the logging macro:

@implementation LogMacros

-(id)executeWithParams:(NSArray *)params success:(BOOL *)success

{

NSLog(@"TESTLOG: %@",[params firstObject]);

return nil;

}

+(NSString *)nameString

{

return @"log";

}

@end

And here is the code of the failOn macro, which is essentially an assert: the test fails when the checked expression evaluates to zero.

@implementation FailOnMacros

-(id)executeWithParams:(NSArray *)params success:(BOOL *)success

{

id assertion = [params firstObject];

NSString *message = nil;

if (params.count > 1)

message = params[1];

if ([assertion isKindOfClass:[NSNumber class]])

{

if ([assertion intValue] == 0)

{

*success = NO;

TCLog(@"FAILED: %@",message);

}

}

return nil;

}

+(NSString*)nameString

{

return @"failOn";

}

@end

Thus, by writing a number of custom macros (the ones from the example above and several others), it is possible to give the tests access to application data for checking, to perform some UI actions, and to send screenshots to the server.

One of the key macros is waitFor, which waits for the application to react to the test's actions. At the moment it is one of the main points where the application code affects the execution of test cases. That is, for the library to work comfortably, it is not enough to write a certain number of project-specific macros; the application code also has to emit various status signals about state changes, sending a request, receiving a response, and so on. In other words, the application has to be prepared for being tested in this way.
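To make the waitFor semantics more concrete, here is a small C sketch. It is a simplification under my own assumptions: instead of actually blocking a thread, it replays a time-ordered log of signals and decides how a call like `waitFor("response")->timeOut(3.0)->failSignals("signalError")` would end. The types `signal_event` and `wait_result` and the function `wait_for` are hypothetical names, not part of the library.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* One status signal emitted by the application (hypothetical model). */
typedef struct {
    double time;       /* seconds since the wait started */
    const char *name;  /* signal name, e.g. "response" */
} signal_event;

typedef enum { WAIT_OK, WAIT_FAILED, WAIT_TIMED_OUT } wait_result;

/* Replays a time-ordered signal log and reports the outcome of the wait:
   - WAIT_OK        the expected signal arrived within the timeout
   - WAIT_FAILED    the fail signal arrived first
   - WAIT_TIMED_OUT neither arrived in time
   fail_signal may be NULL when no fail signal is configured. */
wait_result wait_for(const signal_event *events, size_t n,
                     const char *expected, const char *fail_signal,
                     double timeout) {
    assert(expected != NULL);
    for (size_t i = 0; i < n; i++) {
        if (events[i].time > timeout)
            break;  /* everything after this point is too late */
        if (strcmp(events[i].name, expected) == 0)
            return WAIT_OK;
        if (fail_signal != NULL && strcmp(events[i].name, fail_signal) == 0)
            return WAIT_FAILED;
    }
    return WAIT_TIMED_OUT;
}
```

The sketch also shows why the application has to cooperate: if it never emits "response" or "signalError", the only possible outcome is a timeout, which tells the tester very little.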

Under the hood

And under the hood is where the fun begins. The main part (lexer, parser, executor, execution tree) is written in C, YACC, and Lex, so it can be compiled and run, and can quite successfully interpret tests, not only on iOS but also on any other system with a C toolchain. If there is interest, I could write a separate article about the intricacies of combining languages that are not native to iOS with my favorite IDE, Xcode, where all the development was done; in this article I will only talk about the code itself.

Lex

As you have already gathered, a small but very proud interpreted scripting language was written to solve the problem, which means the task of interpreting it arises in full. There are not that many tools for properly interpreting a homemade language like this, and I used the YACC and Lex pair.

There have been several articles on this subject (to be honest, they were not enough for me to get started: a not too complicated but not too obvious usage example was missing, and I would like to believe that if someone faces a similar task, my code will help them get going):

- A series of articles on writing a compiler, with a deep dive into how it works;

- A short article about one simple example;

- Wiki about lexers;

- Wiki about YACC.

And there are plenty of other links, some useful and some less so...

In short, the lexer's job is to make sure the parser receives not a raw stream of characters but a prepared sequence of tokens with already determined types.

In order not to litter the article with long listings, here is the code of one of the lexers:

Lexer

In fact, it distinguishes arithmetic signs, numbers and names and passes them to the parser as input.
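For readers who have never seen what a Lex specification boils down to, here is a hand-written C tokenizer that does roughly the same classification. It is a stand-in written for this explanation, not the generated lexer from the project; the token types and the function `next_token` are my own hypothetical names.

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>
#include <string.h>

typedef enum { TOK_NUMBER, TOK_NAME, TOK_SIGN, TOK_END, TOK_ERROR } token_type;

typedef struct {
    token_type type;
    const char *start;  /* points into the source string */
    size_t length;      /* number of characters in the token */
} token;

/* Reads one token starting at *cursor and moves the cursor past it. */
token next_token(const char **cursor) {
    assert(cursor != NULL && *cursor != NULL);
    const char *p = *cursor;
    while (*p == ' ' || *p == '\t')
        p++;                              /* skip blanks between tokens */
    token t = { TOK_END, p, 0 };
    if (*p == '\0') {                     /* end of input */
        *cursor = p;
        return t;
    }
    if (isdigit((unsigned char)*p)) {     /* number: 12 or 3.5 */
        t.type = TOK_NUMBER;
        while (isdigit((unsigned char)*p)) p++;
        if (*p == '.' && isdigit((unsigned char)p[1])) {
            p++;
            while (isdigit((unsigned char)*p)) p++;
        }
    } else if (isalpha((unsigned char)*p) || *p == '_') {  /* name: foo */
        t.type = TOK_NAME;
        while (isalnum((unsigned char)*p) || *p == '_') p++;
    } else if (strchr("+-*/()=<>,", *p) != NULL) {  /* one-character sign */
        t.type = TOK_SIGN;
        p++;
    } else {                              /* anything else is an error */
        t.type = TOK_ERROR;
        p++;
    }
    t.length = (size_t)(p - t.start);
    *cursor = p;
    return t;
}
```

Calling `next_token` repeatedly on `foo = 12 + 3.5` yields a name, a sign, a number, a sign, a number, and finally the end marker, which is the kind of typed sequence a parser expects.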

Yacc

Essentially, YACC is a magical thing that turns a language description, written once in Backus-Naur Form, into a parser for that language and, together with the actions attached to the rules, into an interpreter.

Here is the code of the main parser:

Parser

Let's look at a piece of it to get the idea:

program:

alias module END_TERMINAL {finalizeTestCase($2);}

;

module: /*this is not good, but it can be nil*/ {$$ = NULL;}

| expr new_line {$$ = listWithParam($1);}

| module expr new_line {$$ = addNodeToList($1,$2);}

;

With these rules YACC builds a syntax tree: the entire test case is reduced to a single program node, which in turn consists of a case declaration (alias), a list of actions (module), and the final terminal. The list of actions, in turn, is reduced from function calls, arithmetic expressions, and so on. For instance:

func_call:

NAME_TOKEN '(' param_list ')' {$$ = functionCall($3,$1);}

| '(' func_call ')' {$$ = $2;}

| func_call '->' func_call {$$ = decorateCodeNodeWithCodeNode($1,$3);}

;

param_list: /*May be null param list */ {$$ = NULL;}

| math {$$ = listWithParam($1);}

| param_list ',' math {$$ = addNodeToList($1,$3);}

;

math:

param {$$ = $1;}

| '(' math ')' {$$ = $2;}

| math sign math {$$ = mathCall($2,$1,$3);}

| NOT '(' math ')' {$$ = mathCall(signNOT,$3, NULL);}

| MINUS math {$$ = mathCall(signMINUS,$2, NULL);}

;

In particular, a function call consists of its name, its parameters, and its modifiers (the calls chained to it with ->).

In general, YACC by itself simply walks over the tokens and reduces them according to the rules. To get something useful out of this, an action is attached to each syntactic construction; as the rules are reduced, these actions build a tree in memory that can be used later. For reference, in YACC notation:

$$ is the result associated with the rule being reduced;

$1, $2, $3 ... are the results associated with the corresponding components on the right-hand side of the rule.

Calls such as listWithParam, mathCall, and so on create the nodes and link them together in memory.
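What the actions in the grammar might do can be illustrated with a minimal C sketch of node constructors. The real signatures of listWithParam, addNodeToList, and mathCall are in the repository and may look quite different; the `node` layout and the functions `node_number`, `node_binary`, `list_with`, and `list_append` below are assumptions made only for this example.

```c
#include <assert.h>
#include <stdlib.h>

typedef enum { NODE_NUMBER, NODE_BINARY, NODE_LIST } node_kind;

/* Hypothetical node layout:
   NODE_NUMBER uses value;
   NODE_BINARY uses sign, left, right (the two operands);
   NODE_LIST   uses left (the item) and right (the rest of the list). */
typedef struct node {
    node_kind kind;
    double value;
    char sign;
    struct node *left, *right;
} node;

static node *node_alloc(node_kind kind) {
    node *n = calloc(1, sizeof *n);
    assert(n != NULL);  /* a sketch: real code should handle this properly */
    n->kind = kind;
    return n;
}

node *node_number(double v) {
    node *n = node_alloc(NODE_NUMBER);
    n->value = v;
    return n;
}

/* Roughly what an action like {$$ = mathCall($2,$1,$3);} has to produce. */
node *node_binary(char sign, node *l, node *r) {
    node *n = node_alloc(NODE_BINARY);
    n->sign = sign;
    n->left = l;
    n->right = r;
    return n;
}

/* One-element list, in the spirit of {$$ = listWithParam($1);}. */
node *list_with(node *item) {
    node *n = node_alloc(NODE_LIST);
    n->left = item;
    return n;
}

/* Appends to the tail and returns the head, like {$$ = addNodeToList($1,$2);}. */
node *list_append(node *list, node *item) {
    if (list == NULL) return list_with(item);
    node *tail = list;
    while (tail->right != NULL) tail = tail->right;
    tail->right = list_with(item);
    return list;
}

size_t list_length(const node *list) {
    size_t n = 0;
    for (; list != NULL; list = list->right) n++;
    return n;
}

/* Frees a node together with everything reachable from it. */
void node_free(node *n) {
    if (n == NULL) return;
    node_free(n->left);
    node_free(n->right);
    free(n);
}
```

Because every action returns a pointer that becomes `$$` for its rule, the pointer that reaches the top-level rule is the root of the whole tree.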

Nodes

The source code that generates the nodes can be read here: The logic of node generation, The node header

In essence, all that is required of the nodes is that the graph they form together can be traversed and that a verdict on the test can be derived from that traversal. In other words, they should form an abstract syntax tree.

After the program rule has been reduced into $$, we have exactly this tree and can evaluate it.

Executor

The executor’s code lives here: Code

It is a recursive, depth-first, left-to-right traversal of the tree. Depending on its type, a node is processed either as a mathNode (arithmetic) or as an operationalNode (calling a macro, building a list of parameters).
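A depth-first, left-to-right evaluation of the arithmetic part of such a tree fits in a few lines of C. This is a sketch under my own assumptions (the `expr` type, the one-character sign codes, and the function `evaluate` are hypothetical); the real executor also handles macro calls, strings, and parameter lists.

```c
#include <assert.h>
#include <stddef.h>

typedef enum { EXPR_CONST, EXPR_MATH } expr_kind;

/* Hypothetical expression node. Signs: '+', '-', '*', '/', '<', '>',
   '=' for equality and '!' for the unary NOT (right is unused). */
typedef struct expr {
    expr_kind kind;
    double value;               /* used by EXPR_CONST */
    char sign;                  /* used by EXPR_MATH */
    const struct expr *left;
    const struct expr *right;
} expr;

/* Evaluates the left subtree first, then the right one, then applies
   the sign: a recursive depth-first, left-to-right walk. */
double evaluate(const expr *e) {
    assert(e != NULL);
    if (e->kind == EXPR_CONST)
        return e->value;
    double l = evaluate(e->left);
    if (e->sign == '!')
        return l == 0.0;        /* logical NOT of the only operand */
    double r = evaluate(e->right);
    switch (e->sign) {
    case '+': return l + r;
    case '-': return l - r;
    case '*': return l * r;
    case '/': return l / r;
    case '<': return l < r;
    case '>': return l > r;
    case '=': return l == r;
    default:
        assert(!"unknown sign");
        return 0.0;
    }
}
```

For the mathTest case shown earlier, the tree for `(1 + 2) * 3` evaluates to 9, so the comparison `bar == 9` yields a non-zero value and failOn does not fail the test.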

The leaves of this tree are constants (string or numeric), variable names, or macro calls. Variable names are collected into a lookup table during the initial parsing stage and are given indexes for quick access to their memory cells, so at evaluation time they simply read or write those cells. A macro call goes through the bridge module, which requests execution of the macro with the given name and list of parameters (keep in mind that the parameters must already be evaluated by this point). And there are plenty of other details, routine and not so routine, related to memory management, data structures, and so on.
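The idea of resolving variable names to cell indexes once, at parse time, can be sketched in C as follows. The structure `var_table` and the functions `var_slot`, `var_store`, and `var_load` are hypothetical names chosen for this example, and the fixed-size arrays are a simplification.

```c
#include <assert.h>
#include <string.h>

#define MAX_VARS 16

/* Names are only looked at while parsing; at run time the executor
   works with integer slots that index straight into cells. */
typedef struct {
    const char *names[MAX_VARS];
    double cells[MAX_VARS];
    int count;
} var_table;

/* Parse time: returns the slot for a name, creating it on first use.
   Returns -1 when the table is full. */
int var_slot(var_table *t, const char *name) {
    assert(t != NULL && name != NULL);
    for (int i = 0; i < t->count; i++)
        if (strcmp(t->names[i], name) == 0)
            return i;
    if (t->count == MAX_VARS)
        return -1;
    t->names[t->count] = name;
    t->cells[t->count] = 0.0;
    return t->count++;
}

/* Run time: no string comparison, just an array access. */
void var_store(var_table *t, int slot, double value) {
    assert(t != NULL && slot >= 0 && slot < t->count);
    t->cells[slot] = value;
}

double var_load(const var_table *t, int slot) {
    assert(t != NULL && slot >= 0 && slot < t->count);
    return t->cells[slot];
}
```

In terms of the mathTest example, `foo` and `bar` would each get a slot while the test case is parsed, and executing `bar = foo * 3` then becomes one load, one multiplication, and one store.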

Call example

Well, as a conclusion, here is an example of how this miracle is actually called from native code:

-(void) doWork

{

TestReader *reader = [[TestReader alloc] init];

[reader processTestCaseFromFile:[[NSBundle mainBundle] pathForResource:@"testTestSuite" ofType:@"tc"]];

[reader processTestHierarchyFromFile:[[NSBundle mainBundle] pathForResource:@"example" ofType:@"th"]];

[self performSelectorInBackground:@selector(run) withObject:nil];

}

-(void) run

{

[[[TestManager sharedManager] hierarchyForName:@"TestFlow"] run];

}

The whole test plan is executed on a separate thread, not the main one, so keep this in mind when writing macros that need access to application data. The result of the run method is an object of the TestHierarchy class, which contains a tree of TestCase objects with their names and execution statuses, and of course there will be a lot of text in the logs.

P.S.

Oddly enough, the testers welcomed this thing with joy, and it is now slowly being prepared for rollout on the project. It would be great to write about that wonderful process later.

You can find the source code of the TestCore module for iOS on GitHub: github.com/trifonov-ivan/testSuite

In general, a significant part of the work was done for self-education, but it was still brought to a logical conclusion, so I will be very grateful if you point out weaknesses in the idea, or perhaps tools that solve this problem more effectively. What do you think: is it worth developing the idea into a full-fledged test service?

Well, and yes: if any of the parts needs a more detailed explanation, I will try to write about it, because a great many details came up along the way. “But the margins of this article are too narrow for them” (c)