How to build VDI for complex graphics

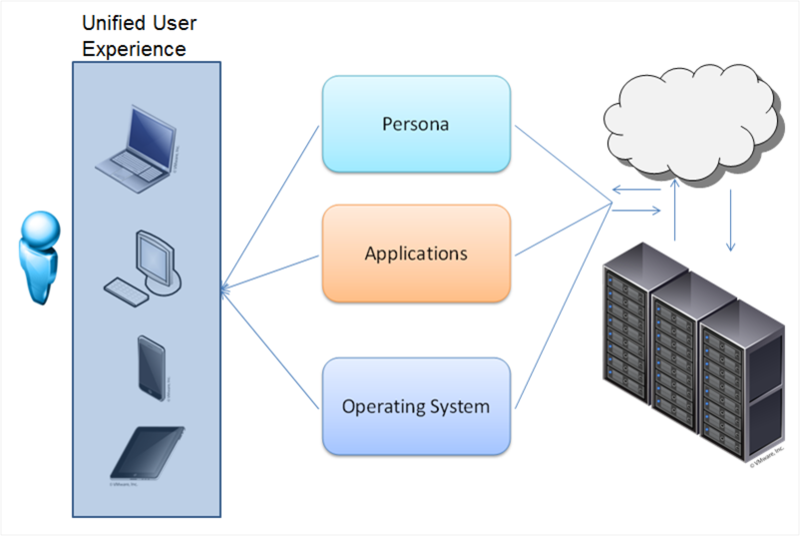

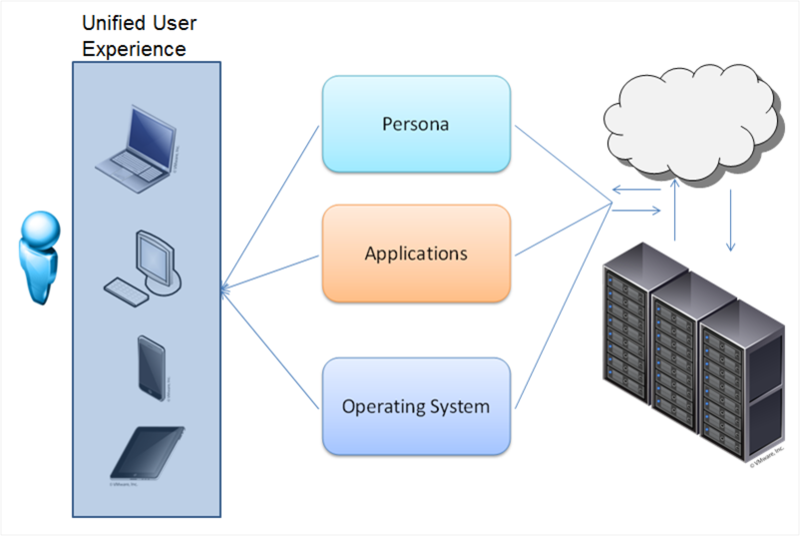

Centralizing desktops and client PCs in the data center is an increasingly frequent topic of discussion in the IT community, and the approach is especially interesting for large organizations. One of the hottest technologies in this area is VDI (virtual desktop infrastructure). It lets you centralize the servicing of client environments and simplifies deploying, configuring, and updating applications, as well as monitoring compliance with security requirements.

VDI decouples the user's desktop from the hardware. You can deploy either a persistent virtual desktop or, most commonly, a non-persistent one. A non-persistent virtual machine applies an individual set of applications and settings on top of the base OS when the user logs in. After the user logs out, the OS returns to a “clean” state, discarding any changes and malware.

This is very convenient for the system administrator: manageability, security, and reliability are at their best, and applications can be updated centrally rather than on every PC. Office suites, database front ends, web browsers, and other graphically undemanding applications can run on any server (the well-known 1C terminal clients are one example).

But what if you want to virtualize a more serious graphics station?

That requires virtualizing the graphics subsystem.

There are three ways to do this:

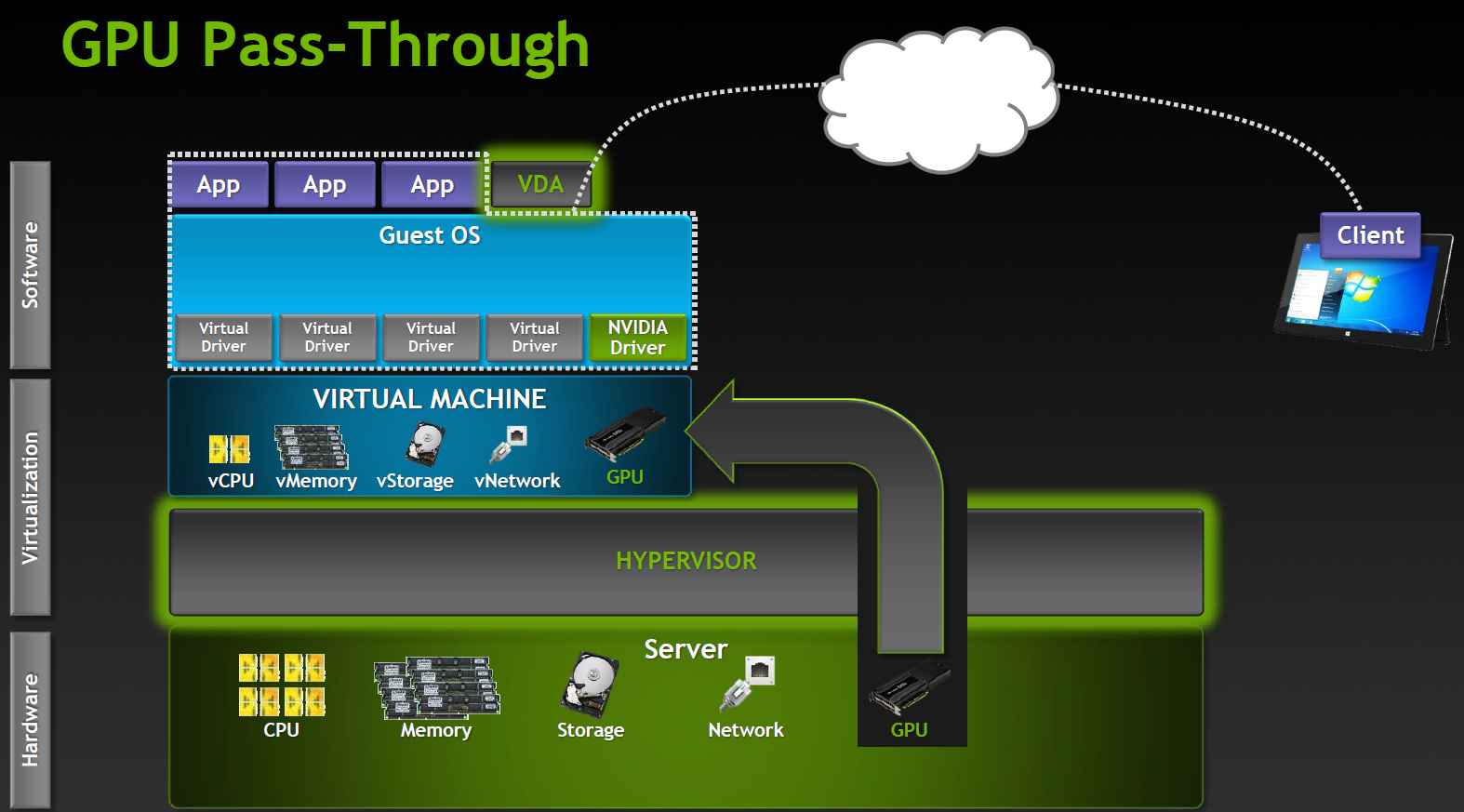

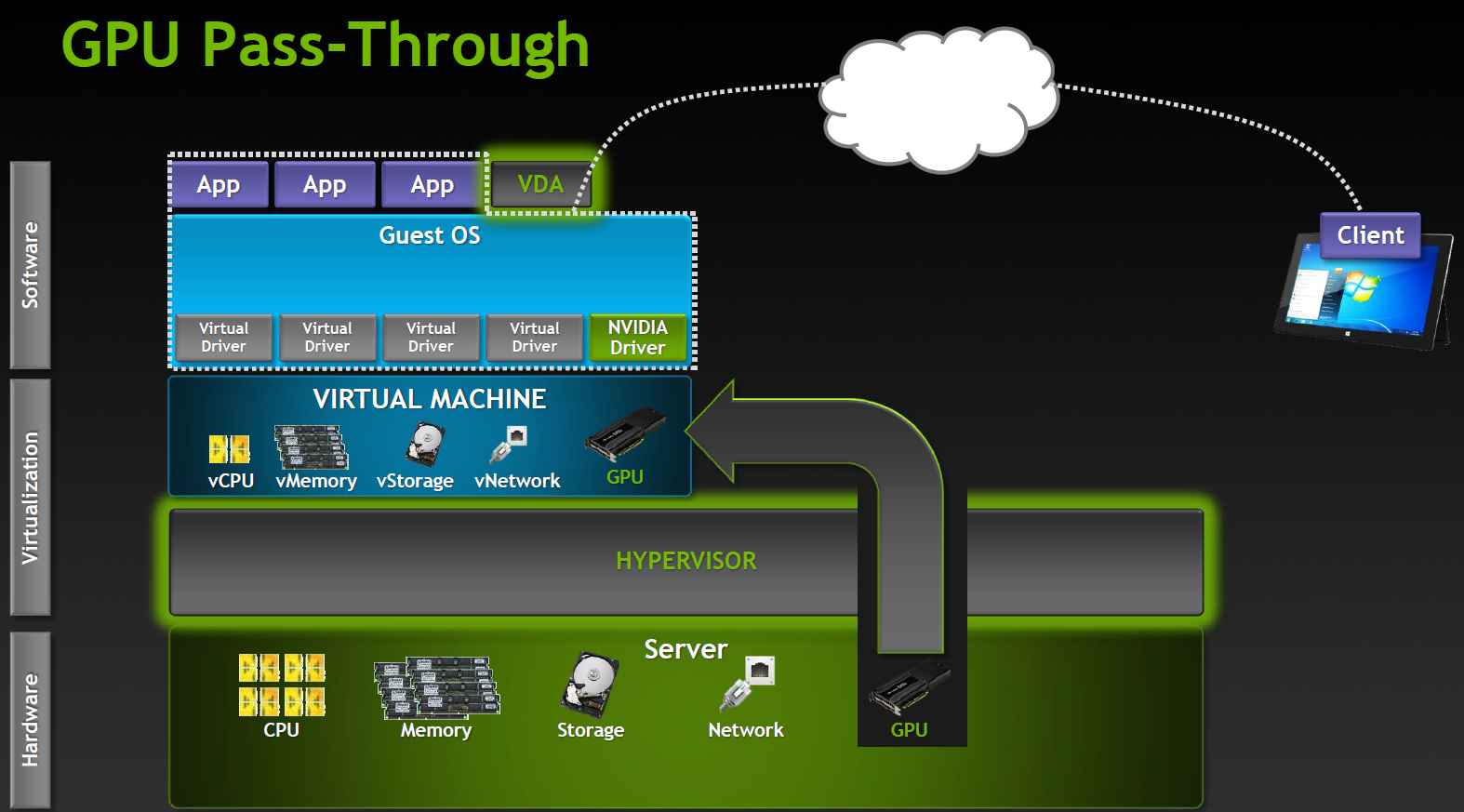

Let's take a closer look.

Dedicated GPU

The most productive operating mode, supported in Citrix XenDesktop 7 VDI delivery and in VMware Horizon View (5.3 or higher) with vDGA. NVIDIA CUDA, DirectX 9, 10, and 11, and OpenGL 4.4 are fully functional. All other components (processors, memory, drives, network adapters) are virtualized and shared between virtual machines, but one GPU remains one GPU. Each virtual machine gets its own GPU with virtually no performance penalty. The obvious limitation is that the number of such virtual machines cannot exceed the number of graphics cards available in the system.

Shared GPU

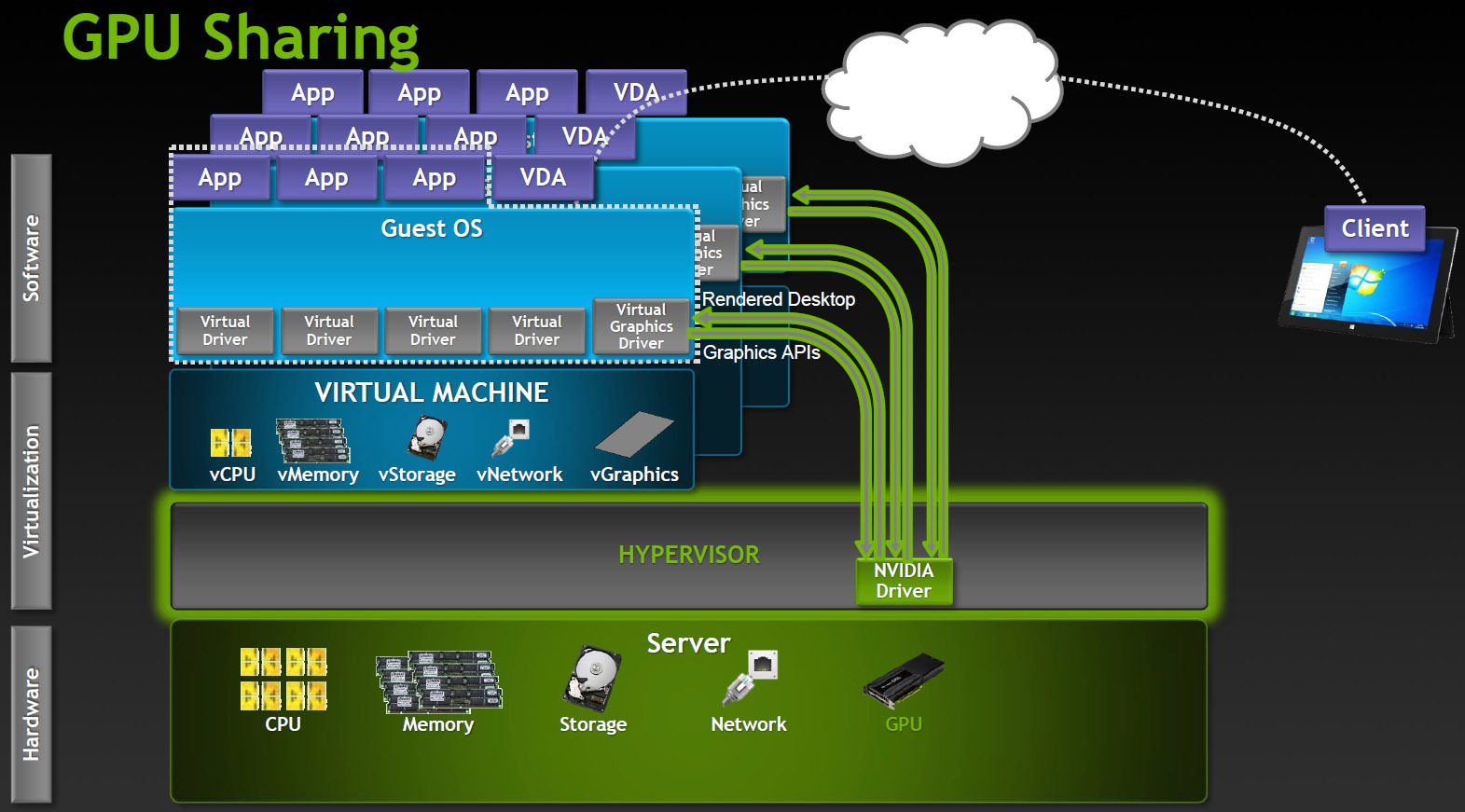

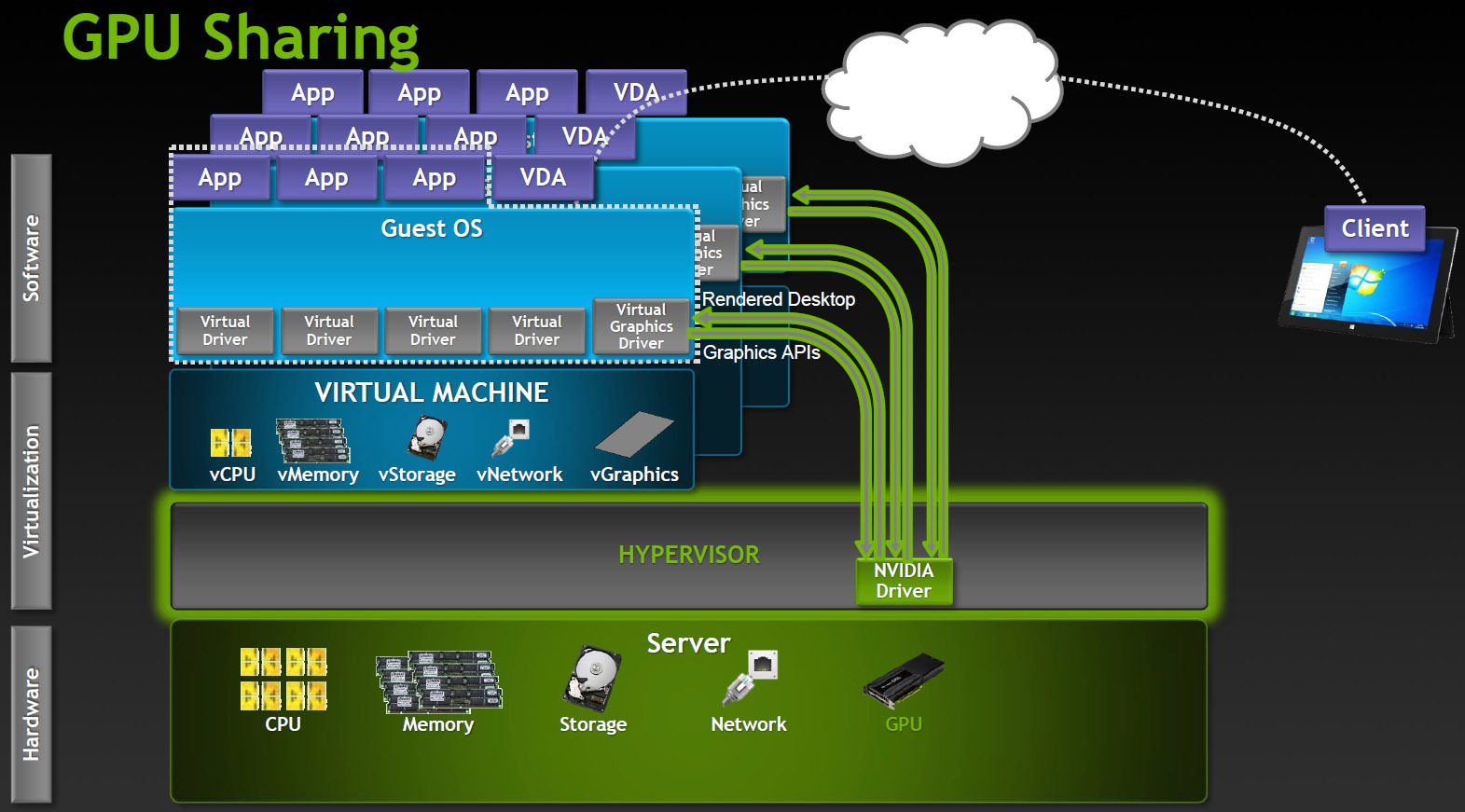

This mode is provided by Microsoft RemoteFX and VMware vSGA. It relies on the capabilities of the VDI software: the virtual machine appears to work with a dedicated adapter, and the server GPU likewise believes it is serving a single host, although both are really talking to an abstraction layer. The hypervisor intercepts API calls and translates all commands, rendering requests, and so on before passing them to the graphics driver, while the virtual machine works with a virtual graphics card driver.
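The interception step can be pictured with a toy model. The sketch below is not how RemoteFX or vSGA are implemented; the class names, the call names, and the `HOST_*` commands are all hypothetical and exist only to show the idea of a layer that sits between guest API calls and the host graphics driver.

```python
from dataclasses import dataclass, field


@dataclass
class HostGpuDriver:
    """Stand-in for the real graphics driver on the host (hypothetical)."""
    log: list = field(default_factory=list)

    def submit(self, vm_id: str, command: str) -> None:
        # Record which VM the translated command came from.
        self.log.append((vm_id, command))


class ApiTranslator:
    """Toy hypervisor layer: intercepts guest API calls and translates them."""

    # Hypothetical mapping from guest-visible calls to host driver commands.
    SUPPORTED = {
        "draw_triangles": "HOST_DRAW",
        "clear_screen": "HOST_CLEAR",
    }

    def __init__(self, driver: HostGpuDriver) -> None:
        self.driver = driver

    def call(self, vm_id: str, api_call: str) -> bool:
        """Translate one guest call; return False if no translation exists."""
        host_command = self.SUPPORTED.get(api_call)
        if host_command is None:
            return False  # a call this layer was never taught to translate
        self.driver.submit(vm_id, host_command)
        return True
```

Every guest call passes through `call`, which is where the translation overhead comes from in this model, and any call missing from the mapping simply cannot be served.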

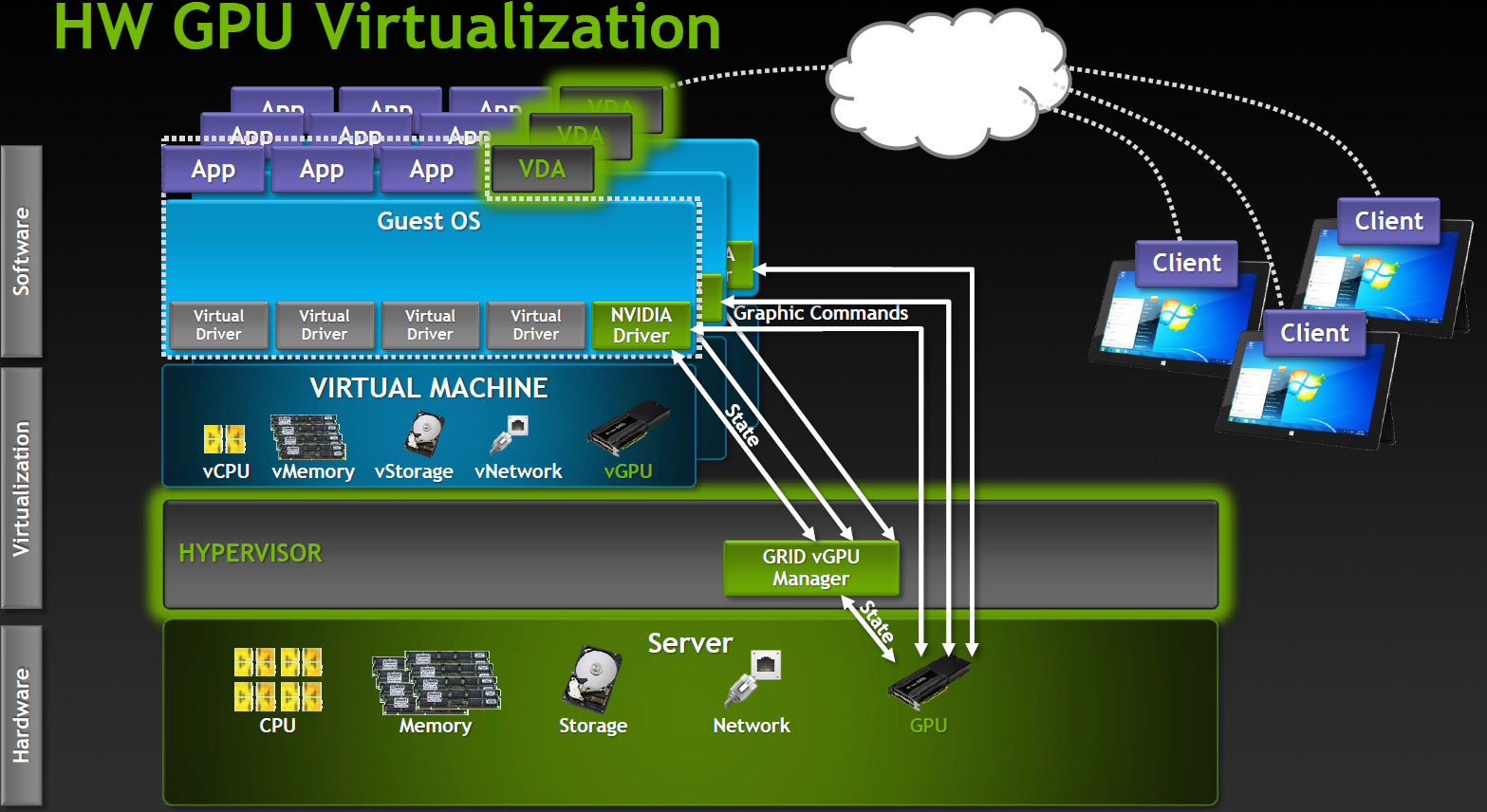

Shared GPU is a reasonable solution in many cases. If the applications are not too complex, a significant number of users can work at the same time. On the other hand, translating the API consumes a lot of resources, and compatibility with applications cannot be guaranteed, especially for applications that use API versions newer than those that existed when the VDI product was developed.

Virtual GPU

The most advanced option for sharing GPUs between users, currently supported only in Citrix products.

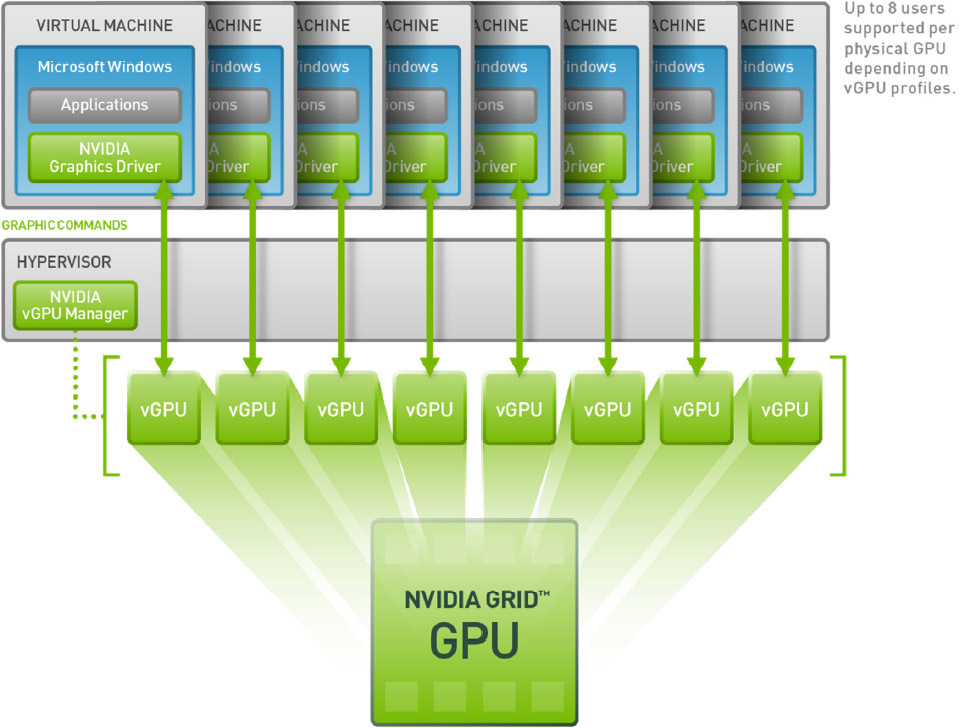

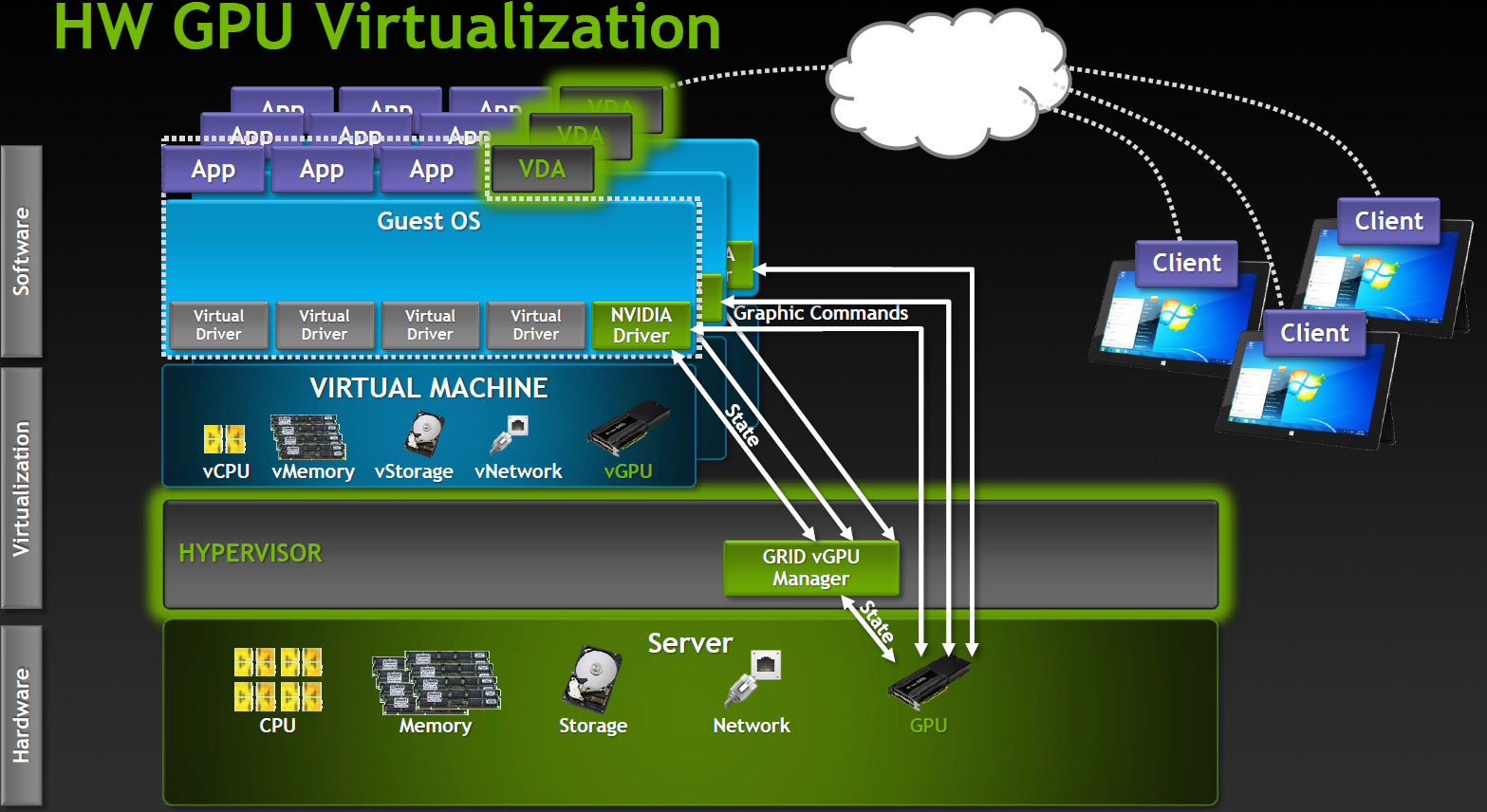

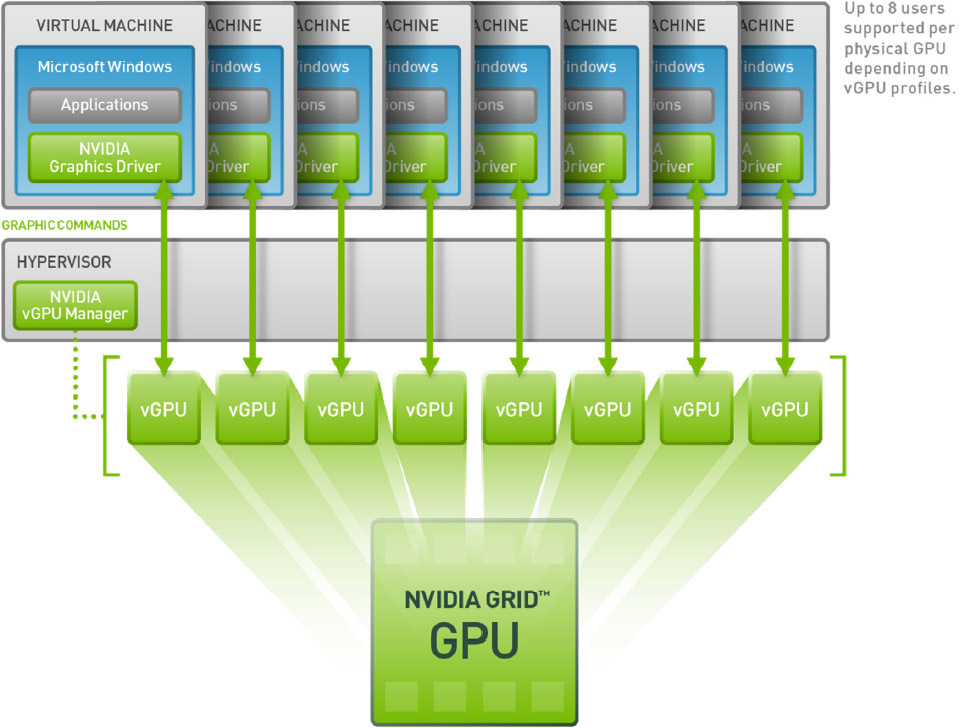

How does it work? In a VDI environment with vGPU, each virtual machine runs on the hypervisor with a dedicated vGPU driver installed in the virtual machine. Each vGPU driver sends commands to and controls one physical GPU over a dedicated channel.

Processed frames are returned by the driver to the virtual machine for sending to the user.

This mode of operation became possible with NVIDIA's latest GPU generation, Kepler. Kepler has a Memory Management Unit (MMU) that translates virtual host addresses to physical system addresses. Each process works in its own virtual address space, and the MMU keeps their physical addresses separate, so processes neither overlap nor compete for resources.
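The isolation idea can be illustrated with a toy address translator. This is a simplified sketch, not Kepler's actual MMU: the class name, the megabyte-granular addresses, and the linear allocation scheme are assumptions made for illustration.

```python
class ToyGpuMmu:
    """Toy model: each VM gets a private virtual address space backed by
    a disjoint slice of physical GPU memory (sizes in MB)."""

    def __init__(self, total_mb: int) -> None:
        self.total_mb = total_mb
        self.next_free_mb = 0
        self.regions = {}  # vm_id -> (physical base, size)

    def attach(self, vm_id: str, size_mb: int) -> None:
        """Reserve a physical slice for a VM; slices never overlap."""
        if vm_id in self.regions:
            raise ValueError(f"{vm_id} is already attached")
        if self.next_free_mb + size_mb > self.total_mb:
            raise MemoryError("not enough GPU memory for another vGPU")
        self.regions[vm_id] = (self.next_free_mb, size_mb)
        self.next_free_mb += size_mb

    def translate(self, vm_id: str, virtual_mb: int) -> int:
        """Map a VM-local address to a physical one inside its own slice."""
        base, size = self.regions[vm_id]
        if not 0 <= virtual_mb < size:
            raise ValueError("address outside this VM's address space")
        return base + virtual_mb
```

With an illustrative 4096 MB of GPU memory and 1024 MB per VM, four VMs can attach. Each one sees addresses starting at 0, while the physical addresses they map to never overlap, and a fifth `attach` fails.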

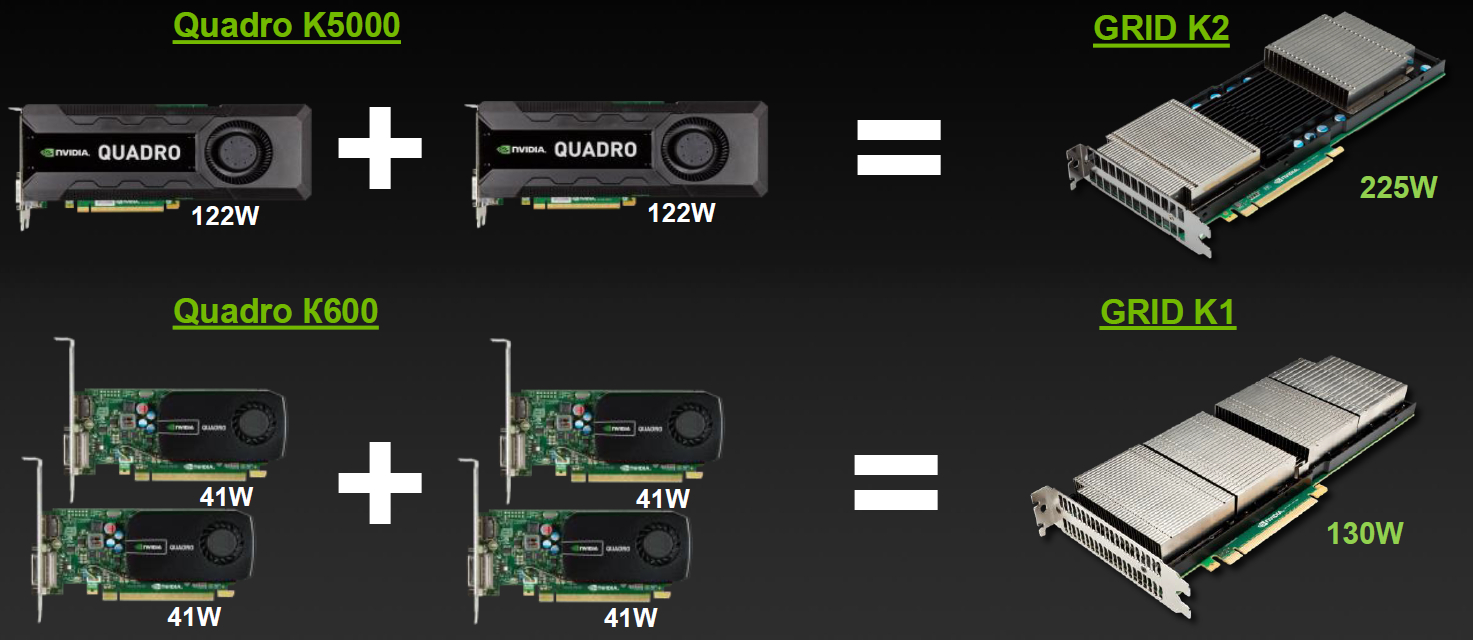

Embodied in the cards

Lineup

Varieties for VDI

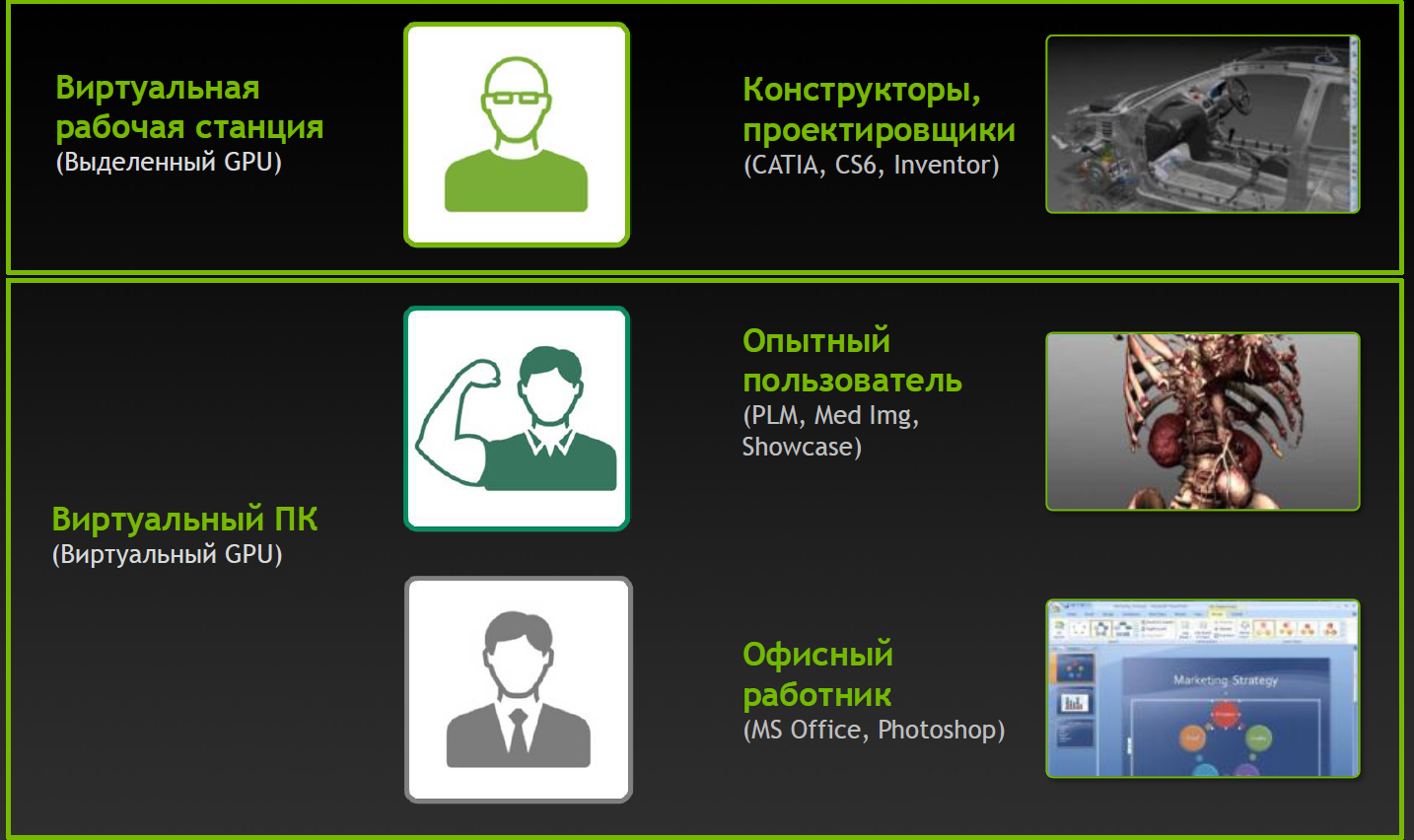

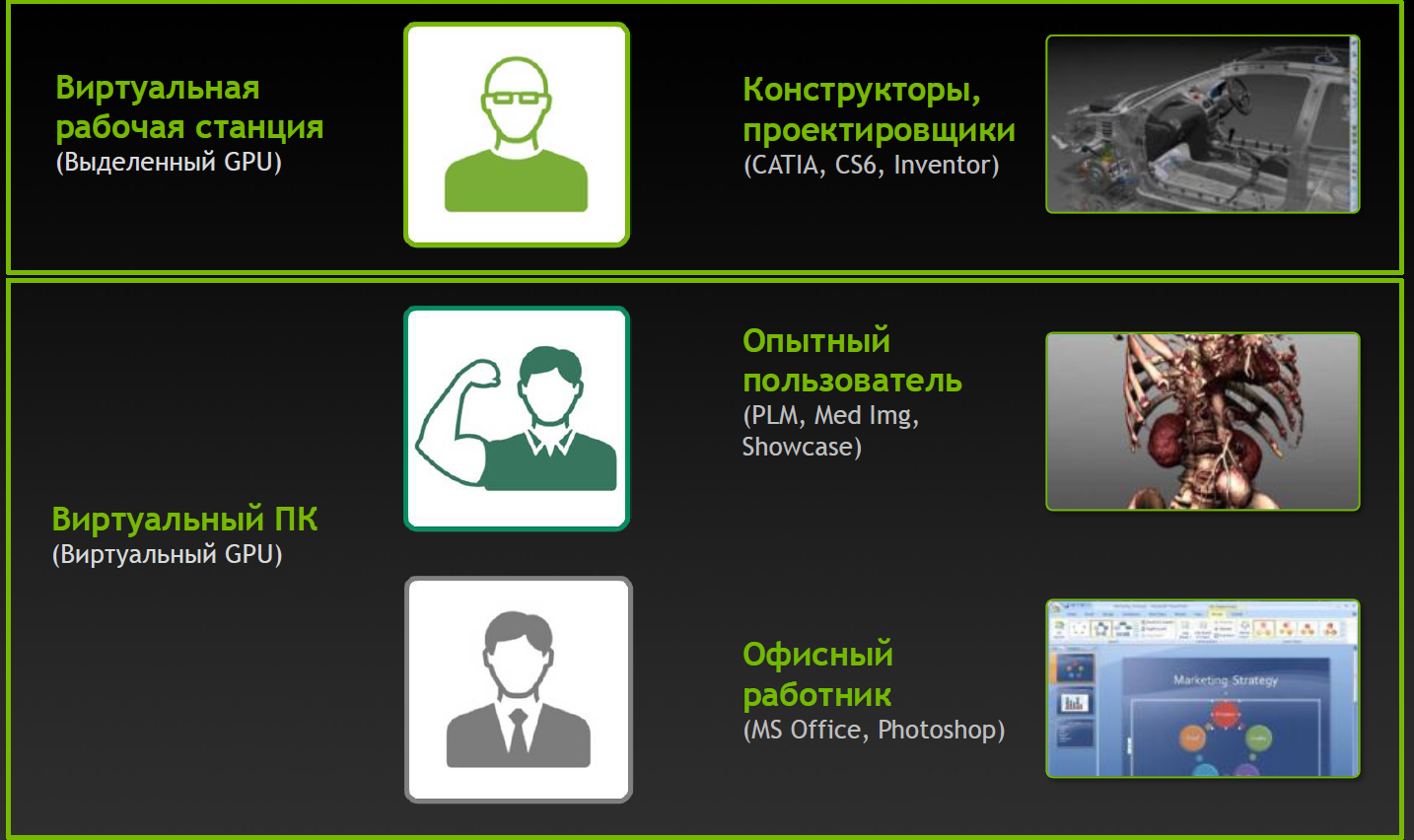

Division by hardware characteristics alone is not enough: GRID cards are also divided into virtual vGPU profiles by user type.

Profiles and Applications

Profiles with the Q suffix are certified for a number of professional applications (for example, Autodesk Inventor 2014 and PTC Creo) in the same way as Quadro cards.

Complete virtual happiness!

NVIDIA would not be true to itself if it had not thought of additional varieties. On-demand gaming services (Gaming-as-a-Service, GaaS) are gaining popularity, and they too benefit considerably from virtualization and the ability to split a GPU between users.

Varieties for gaming in the clouds

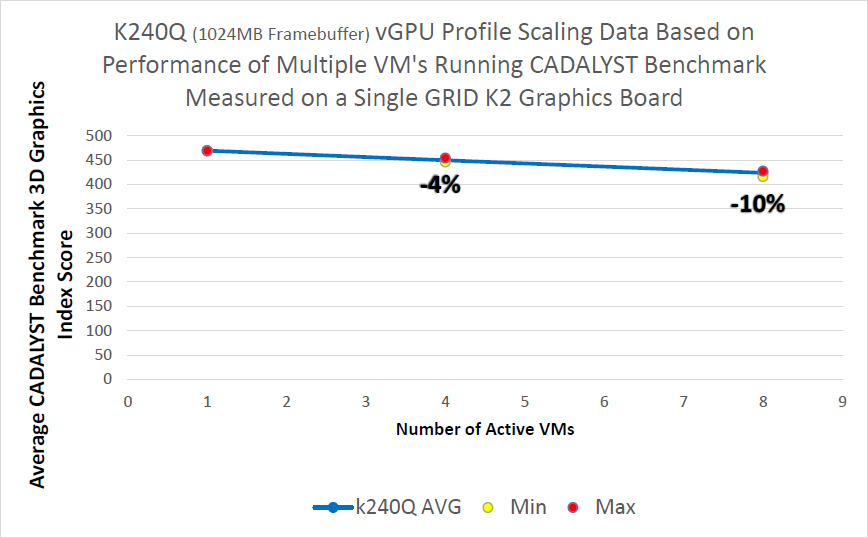

Impact of virtualization on performance

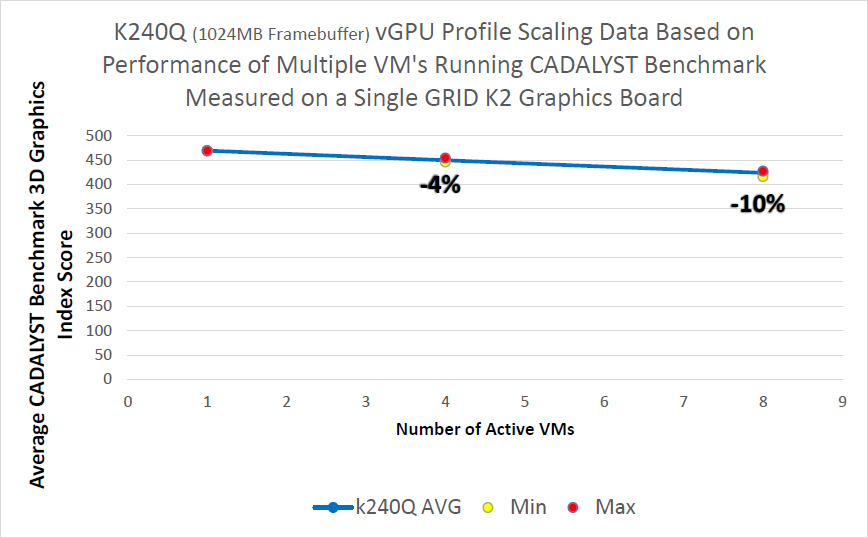

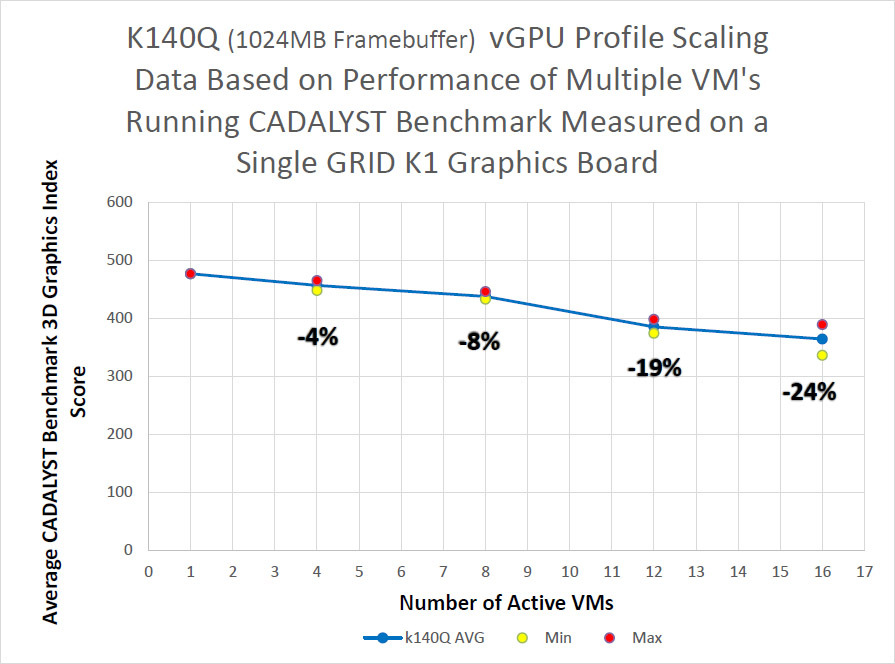

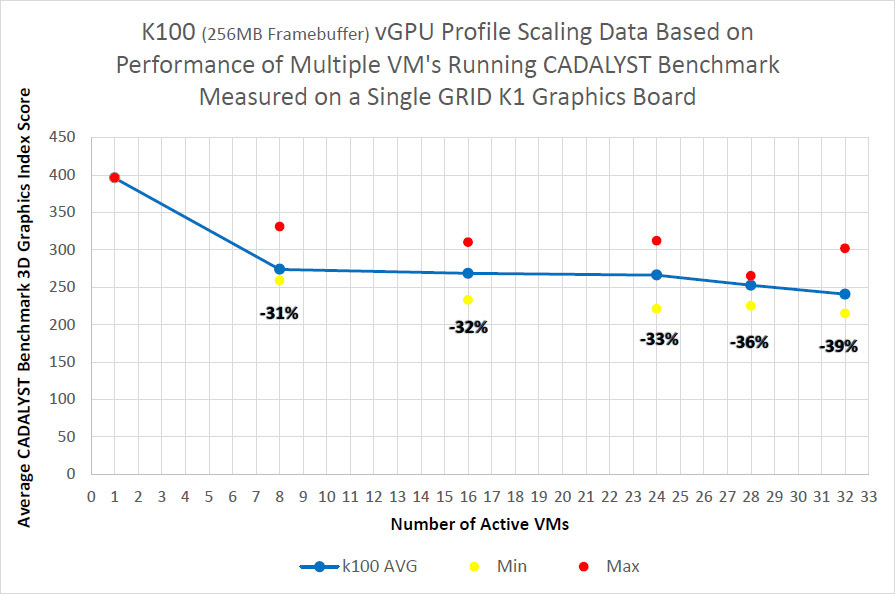

Server configuration:

- Intel Xeon CPU E5-2670 2.6 GHz, dual socket (16 physical cores, 32 logical CPUs with HT)
- Memory: 384 GB
- XenServer 6.2 Tech Preview Build 74074c

Virtual machine configuration:

- vCPUs: 4
- Memory: 11 GB
- XenDesktop 7.1 RTM HDX 3D Pro
- AutoCAD 2014
- Benchmark: CADALYST C2012
- NVIDIA driver: vGPU Manager 331.24, guest driver 331.82
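As a rough sanity check of this configuration, the sketch below estimates how heavily a given number of such virtual machines loads the host. The figures come from the configuration above; the function name is hypothetical, and the model deliberately ignores hypervisor overhead, which is an assumption.

```python
HOST_LOGICAL_CPUS = 32   # from the server configuration (with HT)
HOST_MEMORY_GB = 384
VM_VCPUS = 4             # from the virtual machine configuration
VM_MEMORY_GB = 11


def host_load(vm_count: int) -> dict:
    """Estimate CPU oversubscription and memory use for vm_count VMs."""
    vcpus = vm_count * VM_VCPUS
    memory_gb = vm_count * VM_MEMORY_GB
    return {
        "vcpus": vcpus,
        "cpu_oversubscription": vcpus / HOST_LOGICAL_CPUS,
        "memory_gb": memory_gb,
        "memory_fits": memory_gb <= HOST_MEMORY_GB,
    }


for count in (8, 16):
    print(count, host_load(count))
```

Under these assumptions, 8 virtual machines use exactly the 32 logical CPUs and 88 GB of memory, while 16 virtual machines oversubscribe the CPUs 2:1 and use 176 GB.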

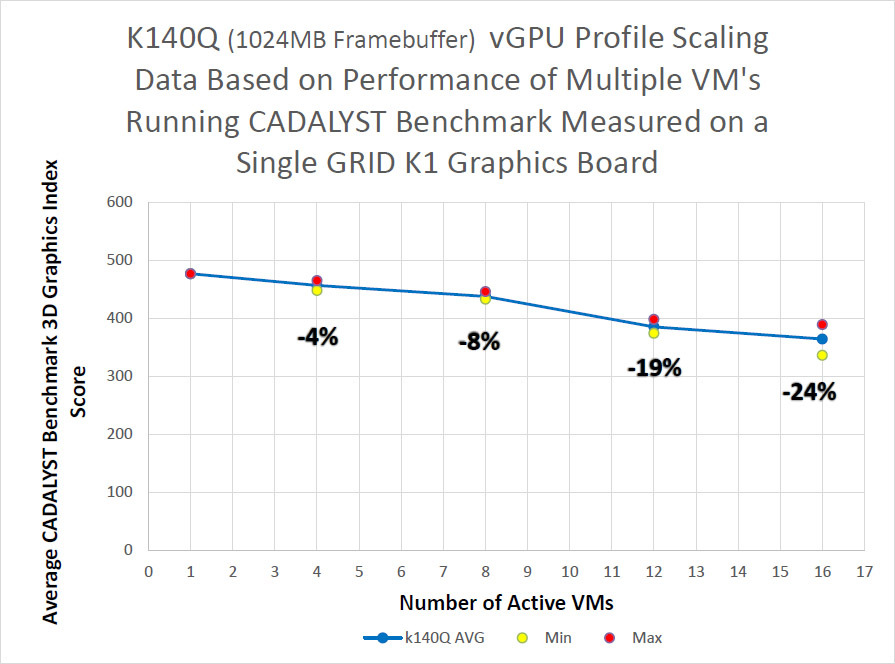

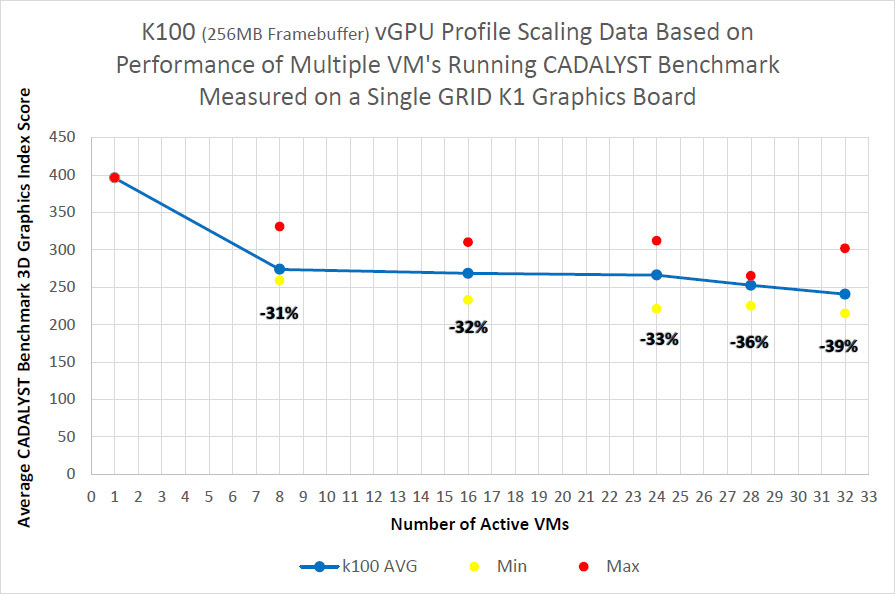

The measurement procedure is simple: the CADALYST test was run in the virtual machines, and performance was compared as more virtual machines were added.

As the results show, for the higher-end K2 model with a certified profile the drop is about 10% when 8 virtual machines are running. For the K1 model the drop is larger, but it runs twice as many virtual machines.
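For clarity, this is how such a drop can be computed from two benchmark scores. The scores in the example are hypothetical placeholders, not measurements from this test, and the function name is illustrative.

```python
def relative_drop(baseline: float, loaded: float) -> float:
    """Return the performance drop as a percentage of the baseline score."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - loaded) / baseline * 100.0


# Hypothetical scores, NOT results from the article:
single_vm_score = 200.0   # one VM running the benchmark alone
eight_vm_score = 180.0    # same VM with 8 VMs running concurrently

print(f"{relative_drop(single_vm_score, eight_vm_score):.1f}%")  # prints 10.0%
```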

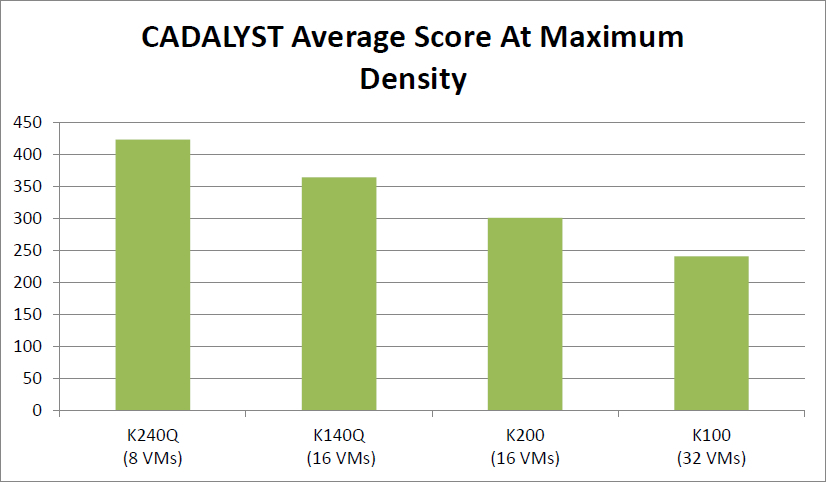

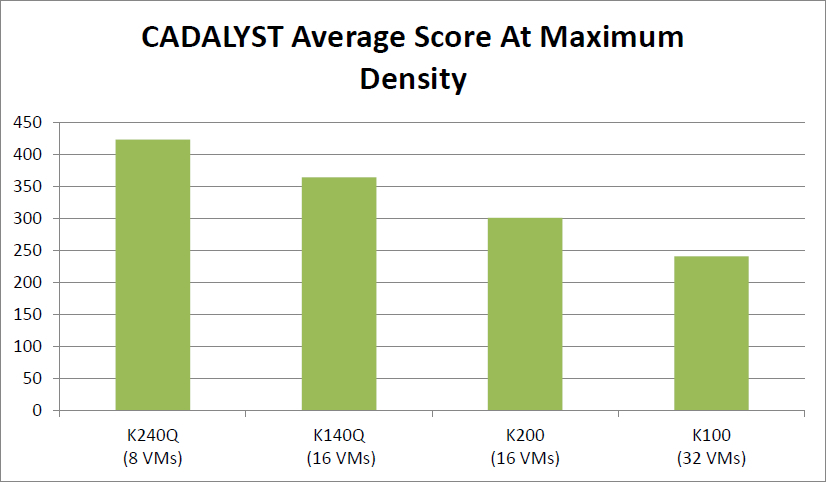

The absolute result of cards with the maximum number of virtual machines:

Where to put the cards?

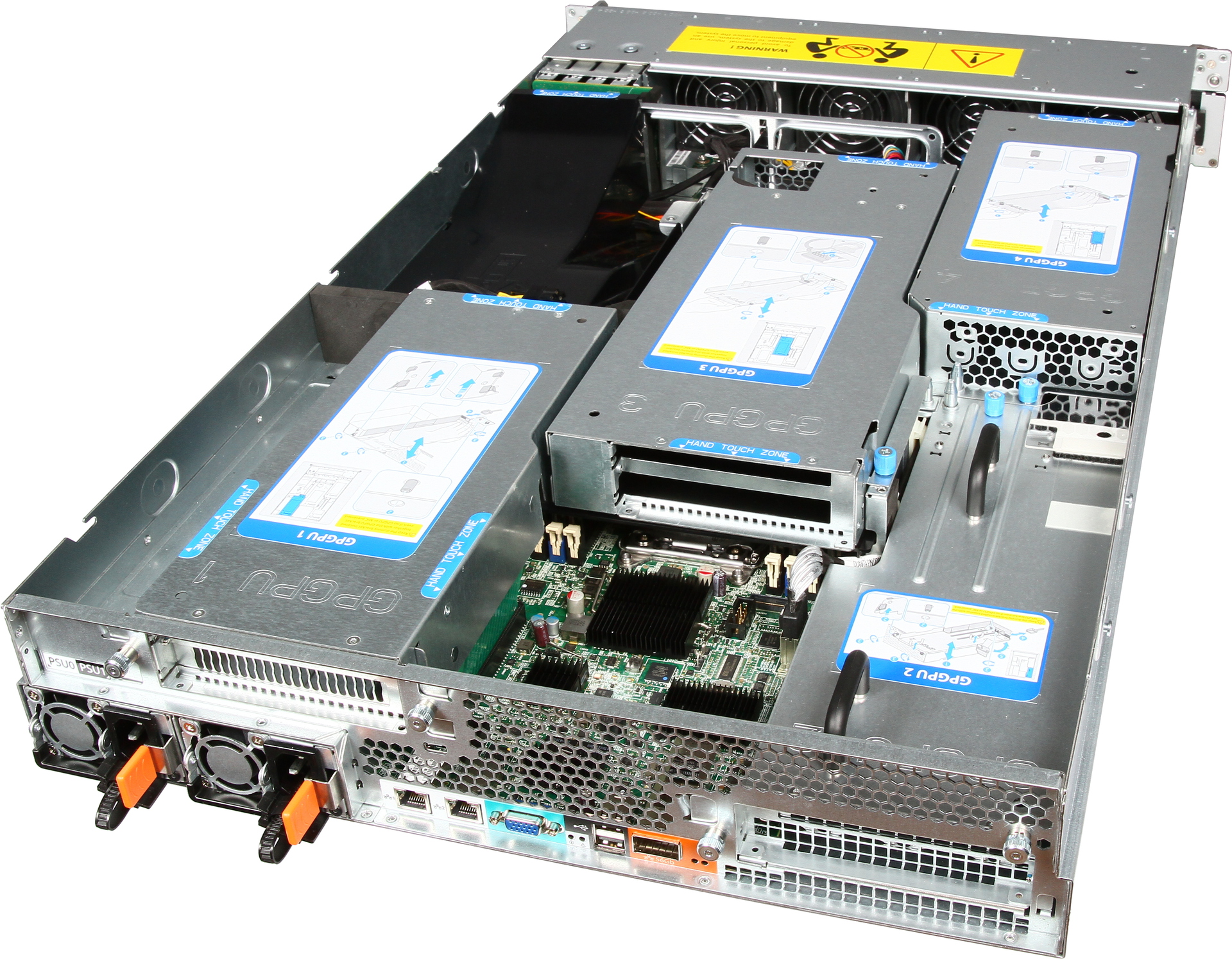

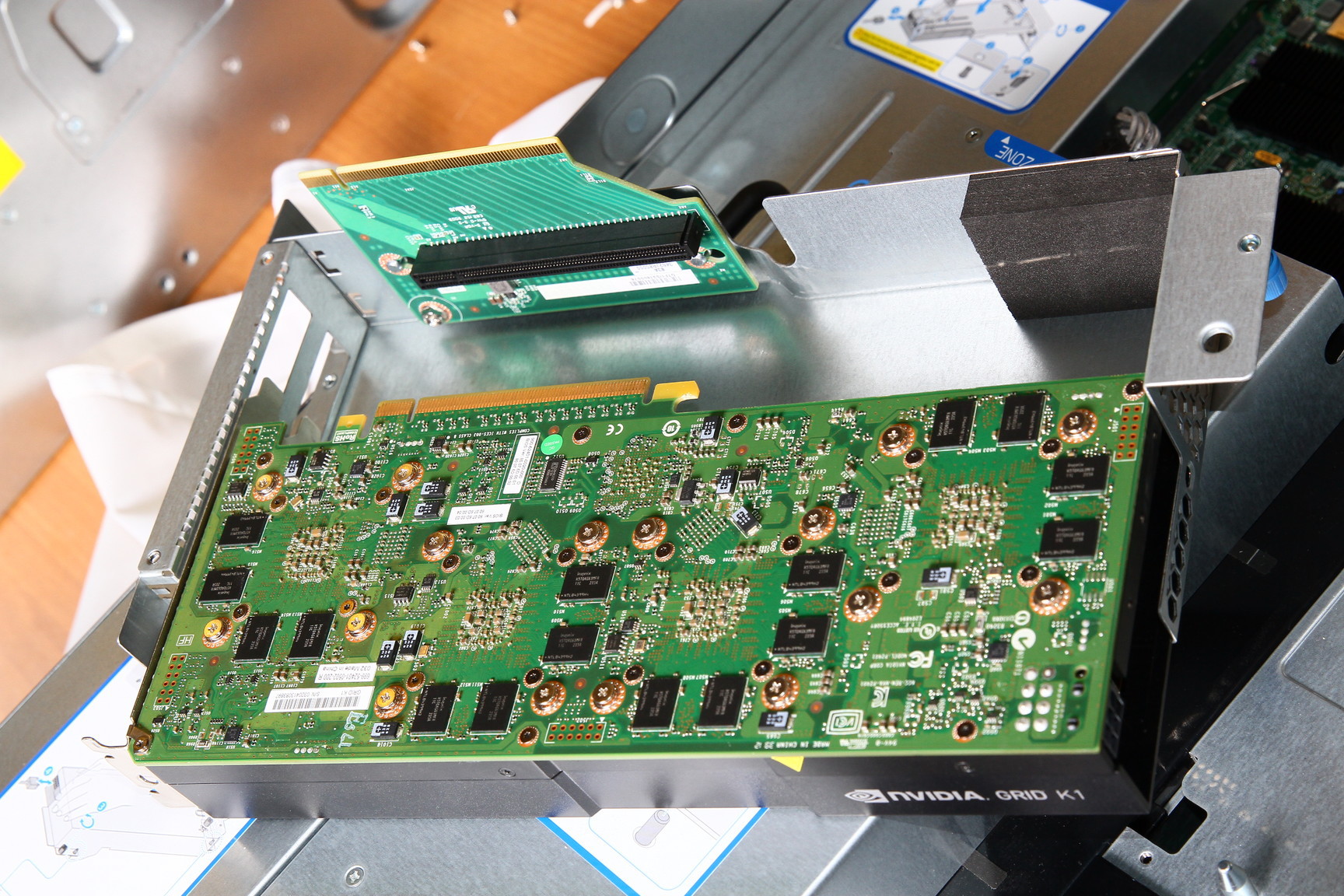

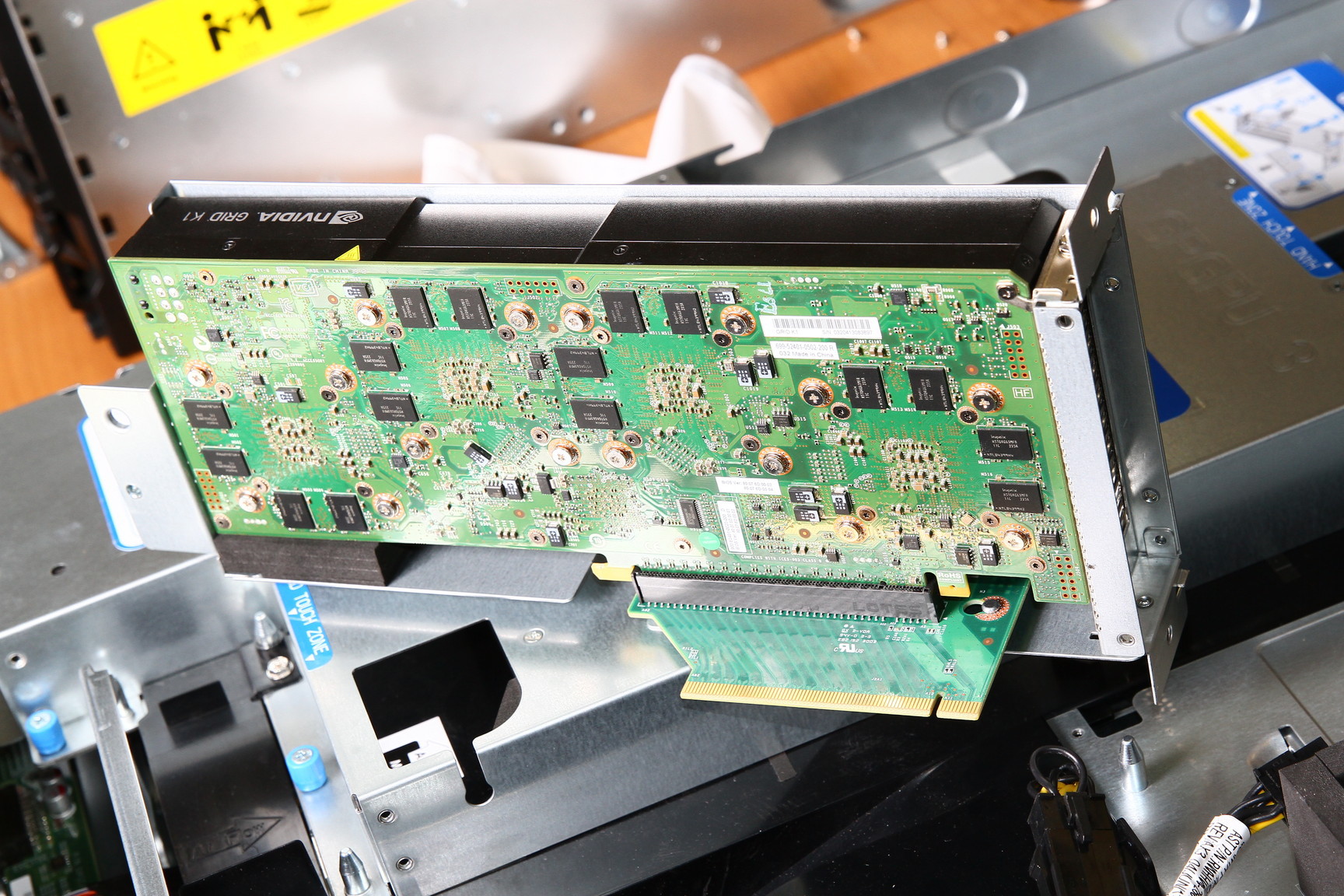

We have introduced the Hyperion RS225 G4 model, designed for installing 4 Intel Xeon Phi or GPGPU cards. It has two Xeon E5-2600 v2 processors, up to 1 terabyte of RAM, 4 hard drive bays, InfiniBand FDR or 40G Ethernet for connecting to a high-speed network, and a pair of standard gigabit network ports.

Pictured: installing a card in the server and the card in place.

There are also several standard solutions for various occasions.


The three options are:

- GPU pass-through: a dedicated GPU mapped 1:1 to a VM
- Shared GPU: software GPU virtualization
- Virtual GPU: hardware virtualization (HW & SW)


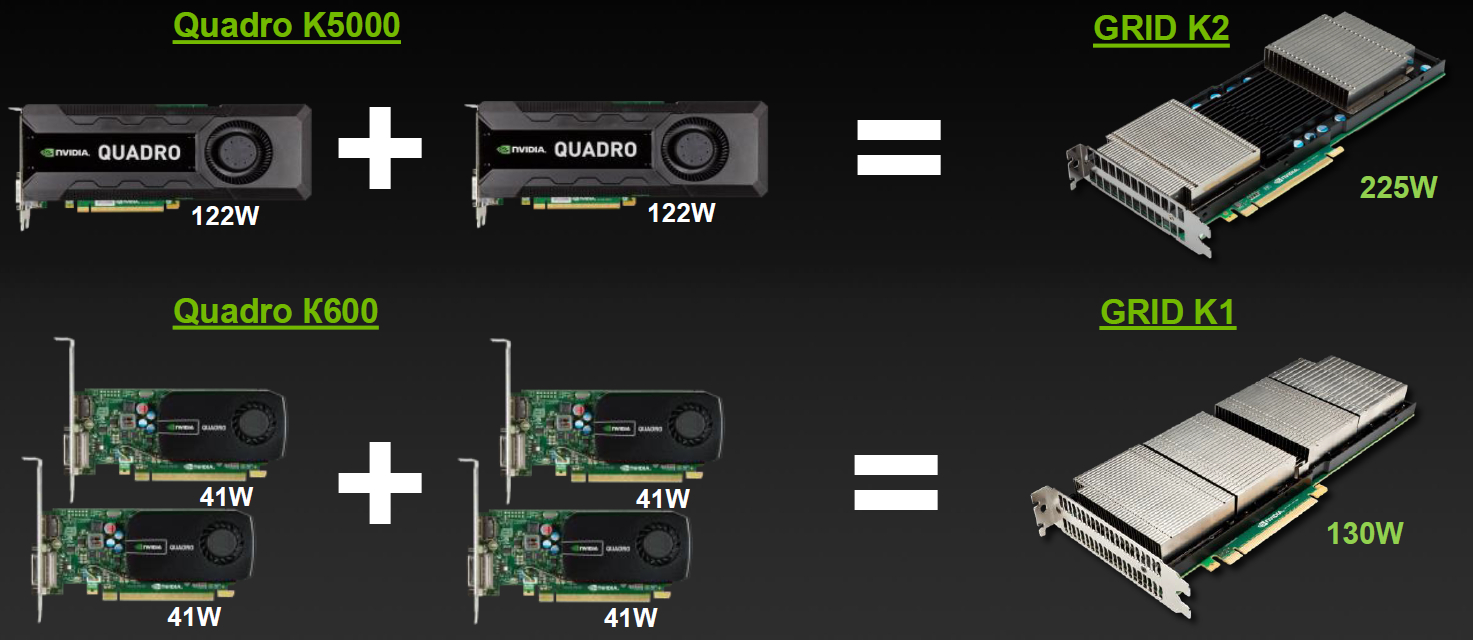

Lineup

| | GRID K1 | GRID K2 |
| --- | --- | --- |
| Number of GPUs | 4 x entry Kepler GPUs | 2 x high-end Kepler GPUs |
| Total NVIDIA CUDA cores | 768 | 3072 |
| Total memory size | 16 GB DDR3 | 8 GB GDDR5 |

Varieties for VDI

| Card | Number of GPUs | Virtual GPU | User type | Memory size (MB) | Number of virtual screens | Maximum resolution | Max number of vGPUs per GPU / per card |
| --- | --- | --- | --- | --- | --- | --- | --- |
| GRID K2 | 2 | GRID K260Q | Designer | 2048 | 4 | 2560x1600 | 2 / 4 |
| GRID K2 | 2 | GRID K240Q | Mid-level designer | 1024 | 2 | 2560x1600 | 4 / 8 |
| GRID K2 | 2 | GRID K200 | Office employee | 256 | 2 | 1920x1200 | 8 / 16 |
| GRID K1 | 4 | GRID K140Q | Entry-level designer | 1024 | 2 | 2560x1600 | 4 / 16 |
| GRID K1 | 4 | GRID K100 | Office employee | 256 | 2 | 1920x1200 | 8 / 32 |
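The per-card limits in the profile table are simply the number of GPUs on the card multiplied by the vGPUs allowed per GPU. The sketch below encodes the table and extends the arithmetic to a whole server. The class and function names are hypothetical, and the four-cards-per-server figure assumes every slot holds the same GRID card with the same profile, which the article does not state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VgpuProfile:
    card: str
    gpus_per_card: int
    name: str
    memory_mb: int
    vgpus_per_gpu: int

    @property
    def vgpus_per_card(self) -> int:
        # Each physical GPU on the card hosts the same number of vGPUs.
        return self.gpus_per_card * self.vgpus_per_gpu


# Values copied from the profile table.
PROFILES = [
    VgpuProfile("GRID K2", 2, "GRID K260Q", 2048, 2),
    VgpuProfile("GRID K2", 2, "GRID K240Q", 1024, 4),
    VgpuProfile("GRID K2", 2, "GRID K200", 256, 8),
    VgpuProfile("GRID K1", 4, "GRID K140Q", 1024, 4),
    VgpuProfile("GRID K1", 4, "GRID K100", 256, 8),
]


def users_per_server(profile: VgpuProfile, cards_per_server: int) -> int:
    """Upper bound on users, assuming identical cards and one profile."""
    return profile.vgpus_per_card * cards_per_server


for p in PROFILES:
    print(p.name, p.vgpus_per_card, users_per_server(p, cards_per_server=4))
```

For example, under these assumptions a server with four K2 cards would top out at 16 K260Q users or 32 K240Q users. CPU and memory limits on the host may lower the practical number.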


Varieties for gaming in the clouds

| Product name | GRID K340 | GRID K520 |
| --- | --- | --- |
| Target market | High-density gaming | High-performance gaming |
| Concurrent users | 4-24 | 2-16 |
| Driver support | GRID Gaming | GRID Gaming |
| Total GPUs | 4 GK107 GPUs | 2 GK104 GPUs |
| Total NVIDIA CUDA cores | 1536 (384 / GPU) | 3072 (1536 / GPU) |
| GPU core clocks | 950 MHz | 800 MHz |
| Memory size | 4 GB GDDR5 (1 GB / GPU) | 8 GB GDDR5 (4 GB / GPU) |
| Max power | 225 W | 225 W |


Where to put the cards?

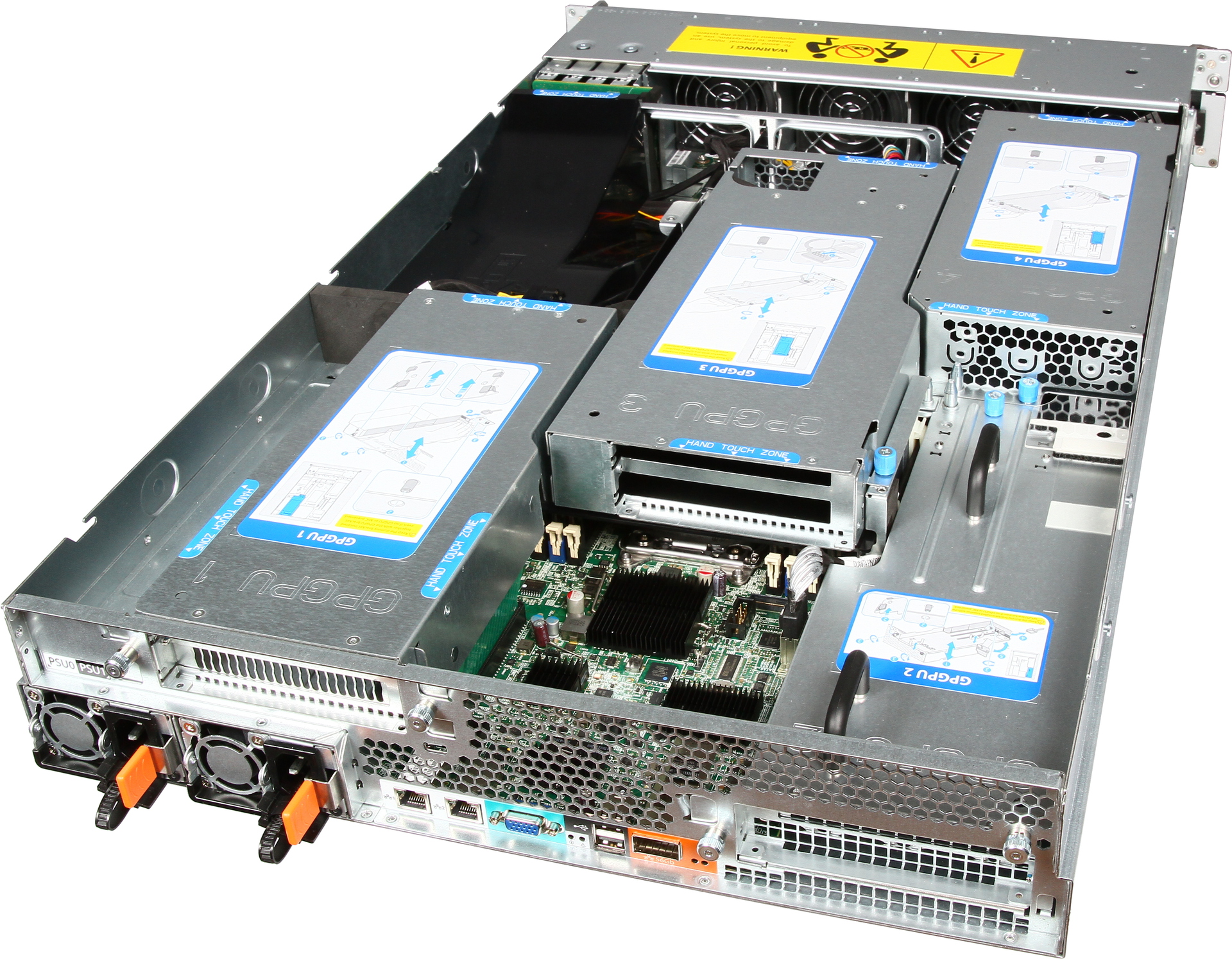

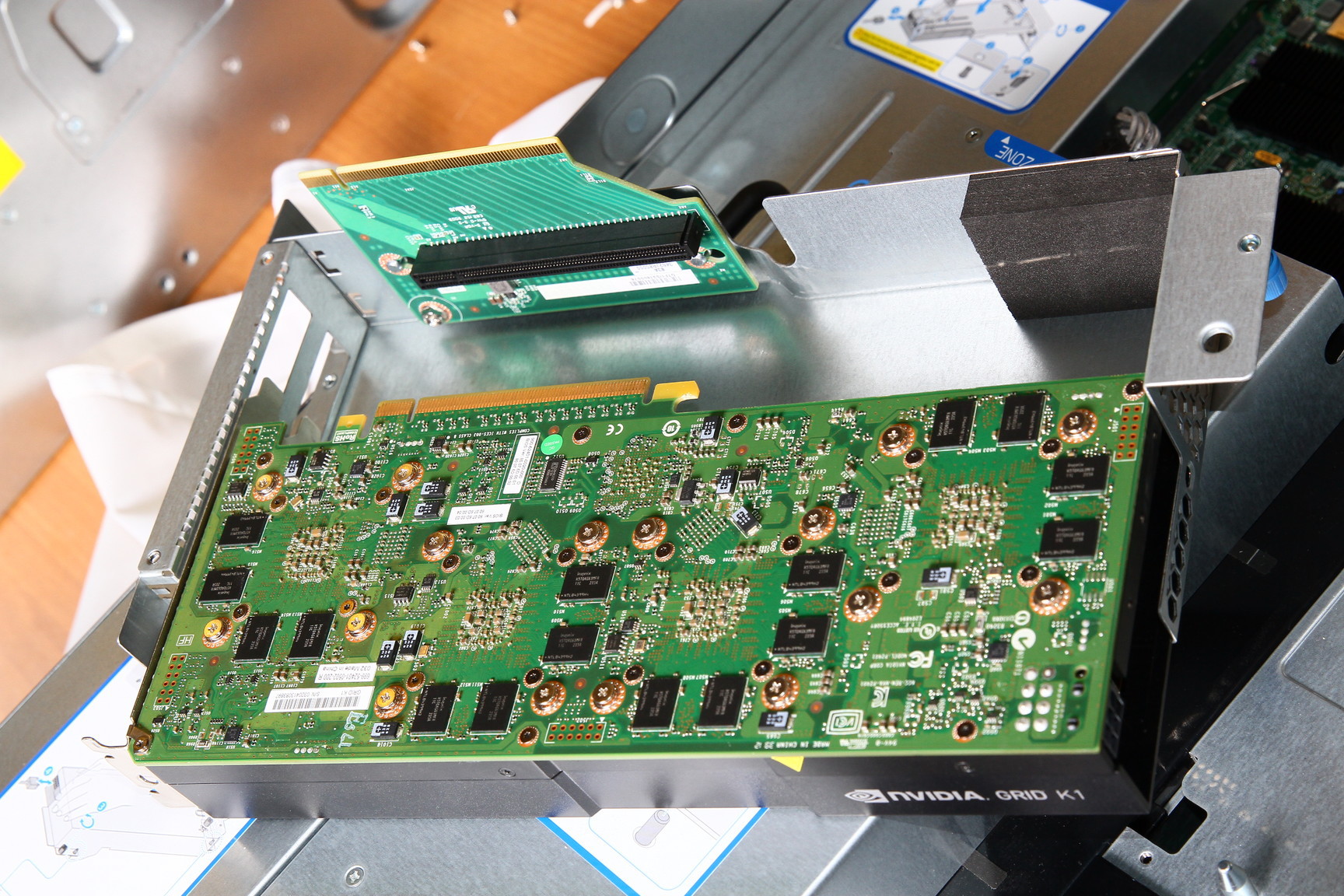

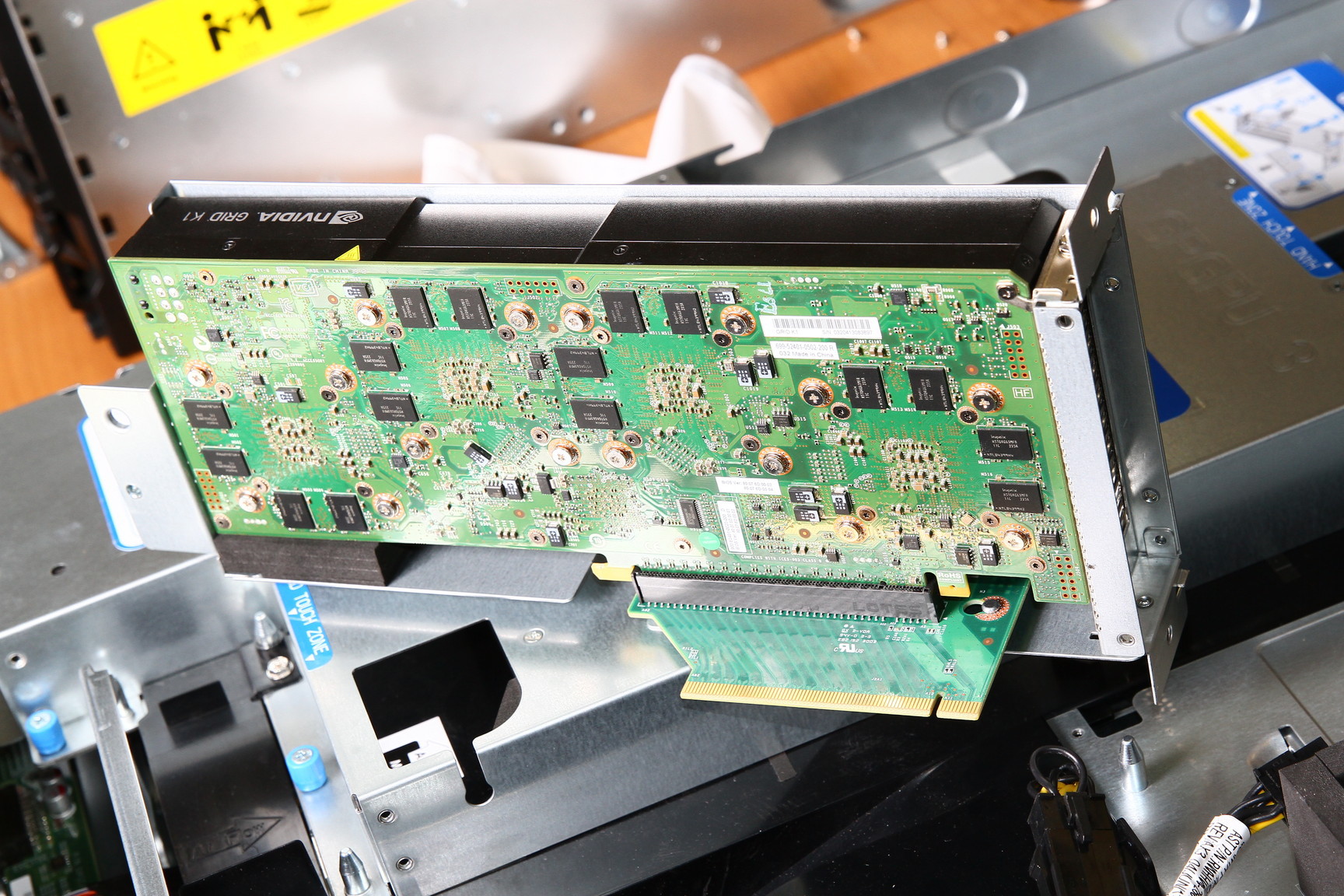
