PACT 2013 (Parallel Architectures and Compilation Techniques): Trip Report

On September 7-11, 2013, Edinburgh, Scotland hosted the 22nd International Conference on Parallel Architectures and Compilation Techniques (PACT). The conference consisted of two parts: workshops and tutorials, and the main program. I attended the main program, which is what I would like to talk about.

The PACT conference is one of the largest and most significant in its field. The list of conference topics is very extensive:

- Parallel architectures and computational models

- Tools (compilers, etc.) for parallel computer systems

- Architectures: multi-core, multi-threaded, superscalar and VLIW

- Languages and algorithms for parallel programming

- And much more related to parallelism in software and hardware

The conference is sponsored by organizations such as IEEE, ACM and IFIP. The list of sponsors also includes some very well-known corporations.

A few words about the venue. The conference was held at Surgeons' Hall in the Old Town, the historical part of Edinburgh. The city impresses with both its beauty and the number of famous people associated with it: Arthur Conan Doyle, Walter Scott, Robert Louis Stevenson, J. K. Rowling, Sean Connery. You will gladly be told about them and other historical figures here. The first thing that comes to mind when you hear the word Scotland is whisky, and here you understand that whisky is an integral part of Scottish culture. It is everywhere: even the conference attendee kit included a small bottle of single malt whisky.

Keynote speakers and winners of the student competition received a full-sized bottle of excellent whisky.

Keynote Talks

A Comprehensive Approach to HW/SW Codesign

In his talk, David J. Kuck (Intel Fellow) addressed the analysis of computer systems by formal methods. Today, the parameters of computer systems are optimized mainly by empirical methods. Kuck proposes a methodology for building mathematical models of a HW/SW system, described by a system of nonlinear equations. The methodology is implemented in the Cape software system, which speeds up the search for optimal solutions to computer system design problems.

Parallel programming for mobile computing

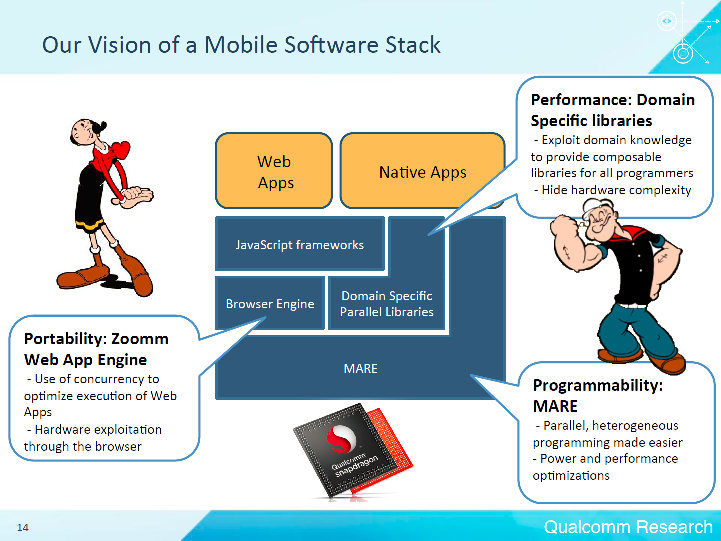

Câlin Caşcaval (Director of Engineering at the Qualcomm Silicon Valley Research Center) talked about the problems of parallelizing computing on mobile devices. Having researched the scenarios of using mobile devices, Qualcomm offers its vision of the mobile software stack:

A key component of the system is MARE, the Multicore Asynchronous Runtime Environment. MARE provides a C++ API for concurrent programming and a runtime environment that optimizes program execution on heterogeneous SoCs. It can be seen as an analog of Intel Threading Building Blocks (Intel TBB).

Towards Automatic Resource Management in Parallel Architectures

Per Stenström (Chalmers University of Technology, Sweden) addressed some of the challenges posed by current trends in the development of parallel architectures.

According to forecasts, the number of cores per processor will grow linearly in the coming years and reach 100 by 2020. At the same time, power constraints will tighten. While the number of cores keeps increasing, the growth of memory bandwidth is slowing down. To use processor resources efficiently, programs need to be parallelized as much as possible.

The programmer parallelizes the program, the compiler maps it onto a particular parallel computing model, and the runtime performs load balancing. Heterogeneous processors have good potential for energy efficiency. For parallel architectures, the speed of the memory subsystem will become an acute problem. According to studies, a large percentage of the data in memory and caches is duplicated. Per Stenström suggests using a compressed cache as the last-level cache (LLC). With such caches, a twofold speedup in program execution is observed.

Main Talks

The talks were divided into sessions:

- Compilers

- Power and energy

- GPU and Energy

- Memory system management

- Runtime and scheduling

- Caches and memory hierarchy

- GPU

- Networking, Debugging and Microarchitecture

- Compiler optimization

Since some of the sessions ran in parallel, I had to choose which ones to attend.

Below are the sessions I attended and the talks that interested me.

Compilers

An interesting talk was “Parallel Flow-Sensitive Pointer Analysis by Graph-Rewriting” (Indian Institute of Science, Bangalore), on parallelizing a pointer alias analysis algorithm. Flow-sensitive pointer analysis takes into account the order in which operations are executed: a points-to set is computed at each point in the program. In contrast, flow-insensitive pointer analysis builds a single set of points-to information for the entire program. Pointer alias analysis can be carried out on a graph whose vertices are program variables and whose edges are relations between variables of one of four kinds: taking an address, copying a pointer, loading data through a pointer, and storing data through a pointer. The authors showed that by modifying this graph according to certain rules, you can get a graph,
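As a rough illustration of the four edge kinds, here is a minimal sketch of the simpler flow-insensitive variant (an inclusion-based analysis iterated to a fixed point). It is not the parallel flow-sensitive algorithm from the paper; the `(kind, dst, src)` statement encoding and the name `points_to` are assumptions made for this example.

```python
from collections import defaultdict


def points_to(statements):
    """Flow-insensitive points-to sets for (kind, dst, src) statements.

    Hypothetical encoding, chosen for this sketch only:
      ("addr",  "p", "a")  means  p = &a
      ("copy",  "p", "q")  means  p = q
      ("load",  "p", "q")  means  p = *q
      ("store", "p", "q")  means  *p = q
    """
    pts = defaultdict(set)
    changed = True
    while changed:  # repeat until no set grows; statement order is ignored
        changed = False
        for kind, dst, src in statements:
            if kind == "addr":
                updates = {dst: {src}}
            elif kind == "copy":
                updates = {dst: set(pts[src])}
            elif kind == "load":
                loaded = set()
                for target in list(pts[src]):
                    loaded |= pts[target]
                updates = {dst: loaded}
            elif kind == "store":
                updates = {target: set(pts[src]) for target in list(pts[dst])}
            else:
                raise ValueError(f"unknown statement kind: {kind}")
            for var, targets in updates.items():
                if not targets <= pts[var]:
                    pts[var] |= targets
                    changed = True
    # Drop variables whose points-to set stayed empty.
    return {var: targets for var, targets in pts.items() if targets}


program = [
    ("addr", "p", "a"),   # p = &a
    ("addr", "q", "b"),   # q = &b
    ("store", "p", "q"),  # *p = q
    ("load", "r", "p"),   # r = *p
    ("copy", "s", "r"),   # s = r
]
result = points_to(program)
```

In this sketch, `p = &a` followed by `p = &b` yields `{a, b}` for `p`, because statement order is ignored. A flow-sensitive analysis would report only `b` after the second assignment, which is the extra precision the talk was about.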

GPU and Energy

An interesting talk was “Parallel Frame Rendering: Trading Responsiveness for Energy on a Mobile GPU” (Universitat Politècnica de Catalunya, Intel), on parallelizing frame rendering on mobile processors with integrated graphics and the problems developers face.

The talk “Exploring Hybrid Memory for GPU Energy Efficiency through Software-Hardware Co-Design” (Auburn University, College of William and Mary, Oak Ridge National Laboratory) studied a hybrid DRAM + PCM memory that consumes less power than DRAM or PCM alone while performing only 2% worse than DRAM. Phase-change memory (PCM) is better than DRAM in power consumption but worse in performance.

Runtime and scheduling

“Fairness-Aware Scheduling on Single-ISA Heterogeneous Multi-Cores” (Ghent University, Intel) - traditional thread scheduling methods are not well suited to heterogeneous multi-core processors. The talk describes dynamic thread scheduling that lets every thread make the same progress, which has a positive effect on the performance of the application as a whole.
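The idea of equal progress can be sketched with a toy model. This is not the scheduler from the paper: the function name, the per-quantum speeds, and the rule of giving the big cores to the threads that are furthest behind are all assumptions made for illustration.

```python
def equal_progress_schedule(big_speed, small_speed, n_big, quanta):
    """Toy scheduler for big and small cores sharing one ISA.

    big_speed[i] and small_speed[i] are hypothetical amounts of work that
    thread i completes per quantum on a big or a small core. Progress is
    normalized to what the thread would achieve running on a big core all
    the time, so 1.0 means no slowdown. The sketch assumes there are enough
    cores for every thread to run in every quantum.
    """
    n = len(big_speed)
    progress = [0.0] * n
    for _ in range(quanta):
        # Threads with the least normalized progress get the big cores.
        order = sorted(range(n), key=lambda i: progress[i])
        on_big = set(order[:n_big])
        for i in range(n):
            speed = big_speed[i] if i in on_big else small_speed[i]
            progress[i] += speed / big_speed[i]
    return [p / quanta for p in progress]


# Four identical threads, one big core that is twice as fast as a small one.
result = equal_progress_schedule([2.0] * 4, [1.0] * 4, n_big=1, quanta=4)
```

With these inputs every thread ends up with a normalized progress of 0.625. If thread 0 were pinned to the big core instead, it would reach 1.0 while the other three stayed at 0.5, so the slowest thread would hold back the application.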

“An Empirical Model for Predicting Cross-Core Performance Interference on Multicore Processors” (Institute of Computing Technology, University of New South Wales, Huawei) - machine learning methods are used to obtain a function that predicts the drop in an application's performance when it runs alongside other applications.

Networking, Debugging and Microarchitecture

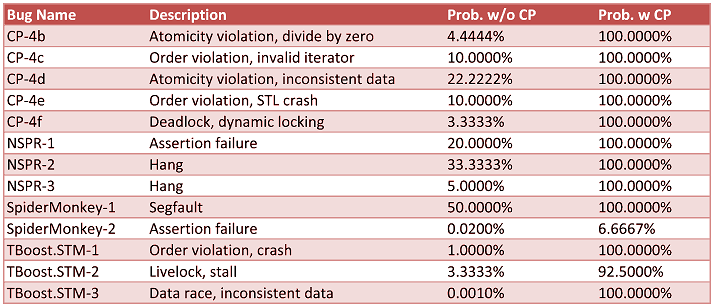

A report by A Debugging Technique for Every Parallel Programmer (Intel) showed how using the Concurrent Predicates (CP) technique can significantly increase the reproducibility of bugs in multi-threaded applications. I was impressed with the results of applying this technique for analyzing real bugs:

The third column is the probability of reproducing a bug without the CP technique. The fourth column is using the CP technique.

Compiler optimization

“Vectorization Past Dependent Branches Through Speculation” (University of Texas at San Antonio, University of Colorado) is a talk on SIMD vectorization of loops with simple conditional branches in the loop body. The loop contains a path that can be vectorized and that is taken in consecutive iterations. A new, vectorized loop is created from this path. If, during execution, some consecutive iterations take different paths, those iterations are executed by the original unmodified loop.
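The control flow described above can be sketched in plain Python, with a list comprehension standing in for a SIMD instruction. The loop body, the vector width `VL`, and the function names are hypothetical and chosen only to show the speculate-then-fall-back structure, not the transformation the authors implemented.

```python
VL = 4  # hypothetical SIMD width (elements per vector)


def scalar_loop(a, out, start, stop):
    """Original loop: the branch depends on the data in each iteration."""
    for i in range(start, stop):
        if a[i] >= 0:        # frequently taken path
            out[i] = a[i] * 2
        else:                # rarely taken path
            out[i] = -a[i]


def speculative_loop(a):
    """Run the frequent path VL iterations at a time, else fall back."""
    out = [0] * len(a)
    vector_chunks = 0
    for start in range(0, len(a), VL):
        stop = min(start + VL, len(a))
        chunk = a[start:stop]
        if len(chunk) == VL and all(x >= 0 for x in chunk):
            # Speculation holds: every iteration in the chunk takes the
            # frequent path, so one "vector" operation handles all of them.
            out[start:stop] = [x * 2 for x in chunk]
            vector_chunks += 1
        else:
            # Paths diverge (or the chunk is a short tail): execute these
            # iterations with the original unmodified loop.
            scalar_loop(a, out, start, stop)
    return out, vector_chunks


a = [1, 2, 3, 4, 5, -6, 7, 8, 9, 10]
out, vector_chunks = speculative_loop(a)
```

Here only the first chunk is executed by the vectorized path; the second contains a negative element and the third is a partial tail, so both go through the scalar loop. The result is the same as running the original loop over the whole array.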

Best Papers

Apart from the main talks, a best paper session was held. From it, I would like to highlight two papers:

- “A Unified View of Non-monotonic Core Selection and Application Steering in Heterogeneous Chip Multiprocessors” (Qualcomm, North Carolina State University) - a study on finding the optimal parameters of a heterogeneous processor. It also describes a system that dynamically detects application bottlenecks and migrates application threads from one core to another to eliminate them.

- “SMT-Centric Power-Aware Thread Placement in Chip Multiprocessors” (IBM) - in multi-core processors, energy consumption can be reduced by dynamically changing the voltage/frequency of the cores or by switching cores off. The talk examined how these techniques, used together and individually, can significantly improve the power-performance ratio.

Conclusion

My overall impression of the conference is positive: a large number of interesting talks, an understanding of what is happening in academia and in large corporations, interesting people to chat with, and useful contacts made. I was pleased with the well-organized social program: dinners, city tours, museums. The after-party at the Museum of Surgery stirred up a storm of emotions with its extensive collection of surgical exhibits. For people with nerves of steel, a night tour of the dungeons of Edinburgh was organized. It was nice to meet people from Russia (MIPT and ICST) at the student research competition.

Next year the conference will be held in Edmonton, Canada. The organizers have promised to keep it at the same high level.
