DNS Records from NetXMS

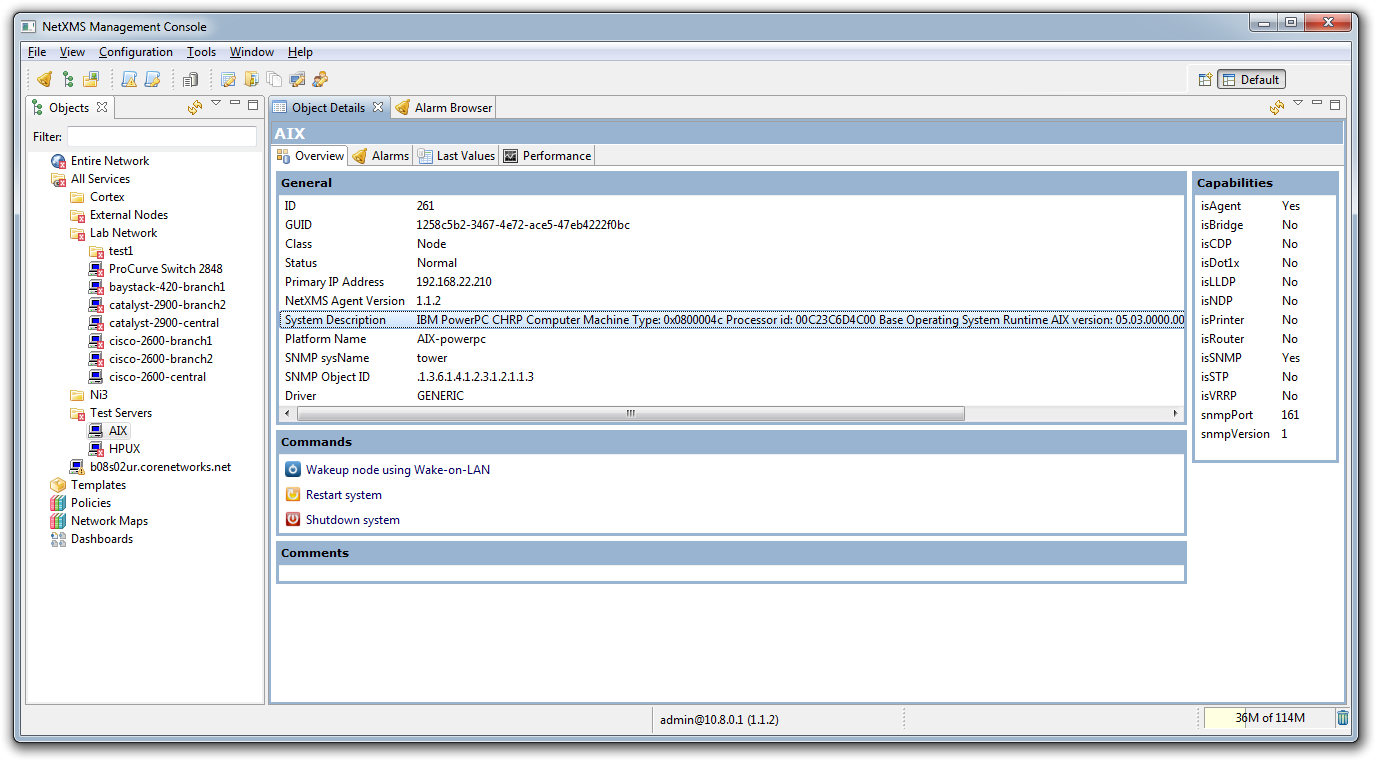

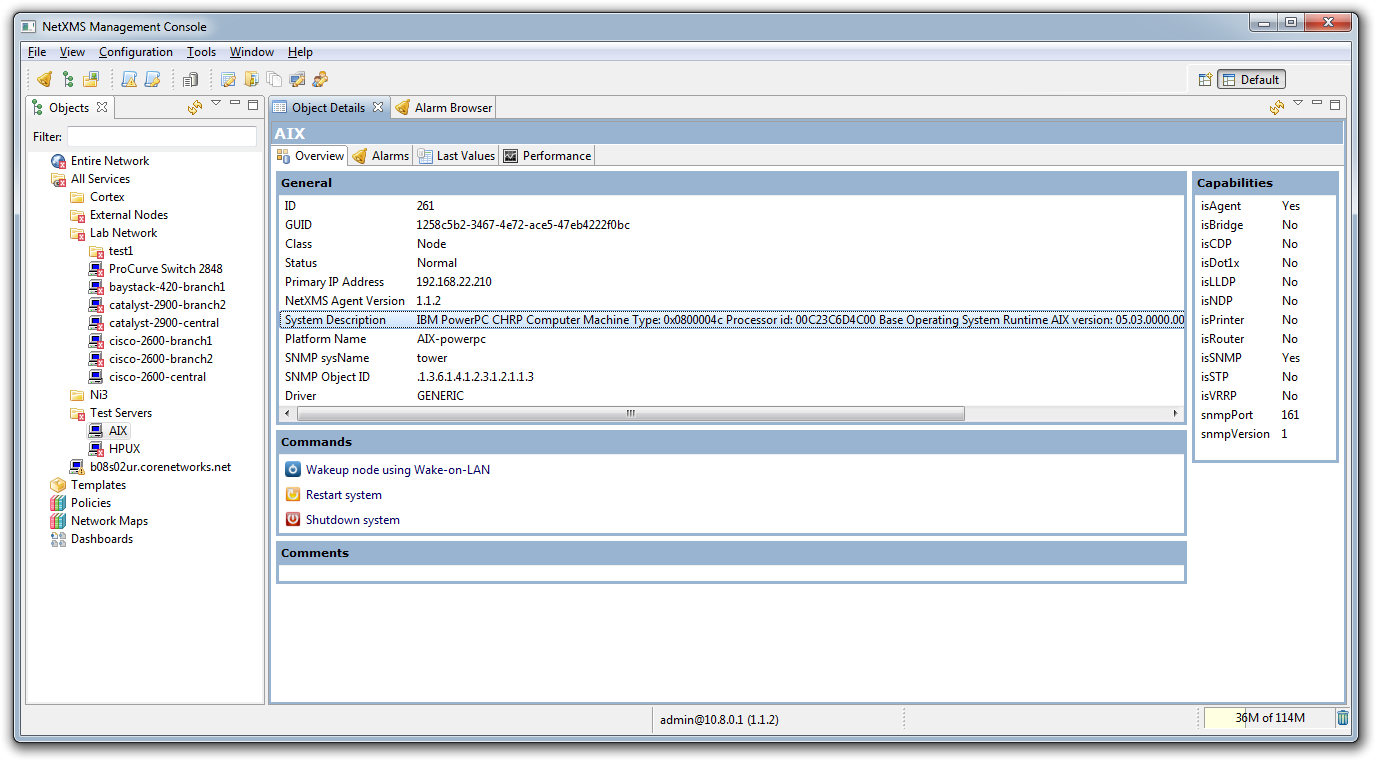

Some time ago, our team of engineers got hooked on the NetXMS monitoring system . The killer feature turned out to be a graphical console:

There is nothing so intuitive and convenient (yes, this is a very subjective criterion) in the familiar OpenNMS and Zabbix that are close. It has become possible to use the monitoring system not just as a mechanism for notifying of emerging problems, but primarily to analyze the current state of the network.

We have already used several completely unrelated databases, in which on one side or another all or part of the equipment operated by us was taken into account. There was a temptation to reduce the number of these databases, taking the NetXMS database as the primary source of information. The first victim was an internal DNS server.

Indeed, what is the point for each of several hundred hosts to have corresponding A and PTR records? We may not be too tense, just editing the / etc / hosts file for dnsmasq , but you can avoid this completely unnecessary work.

We chose PostgreSQL as the DBMS for NetXMS; accordingly, we needed a DNS server that could get at least A and PTR from PostgreSQL. The following options were immediately found:

We discarded too exotic solutions, we have an irrational aversion to bind, so PowerDNS remains. It was required to add to its configuration:

PowerDNS expects to see the following tables in the nxdb database:

Of course, such tables are not there, but instead of them there is the following:

Therefore, the required tables are easily replaced by views:

Result:

The reverse zone can be implemented in a similar way, but it can be a little more interesting. Any host can have several interfaces with different addresses, so the name corresponding to the address can be composed of the host name itself and the interface name. Then the last view will change as follows:

Result:

The reverse_address function is mentioned in the code, its implementation looks like this:

And this is the only part of the implementation specific to PostgreSQL. Everything else can be done in a similar way on other DBMSs supported by NetXMS and PowerDNS.

Finally, performance: this solution, of course, is not suitable for public DNS servers. For internal use - completely. At the first execution, all requests fit into 100 msec, then the cache works. There is a field for optimization using the gpgsql - * - query parameters instead of representations, but for now we have enough.

There is nothing so intuitive and convenient (yes, this is a very subjective criterion) in the familiar OpenNMS and Zabbix that are close. It has become possible to use the monitoring system not just as a mechanism for notifying of emerging problems, but primarily to analyze the current state of the network.

We have already used several completely unrelated databases, in which on one side or another all or part of the equipment operated by us was taken into account. There was a temptation to reduce the number of these databases, taking the NetXMS database as the primary source of information. The first victim was an internal DNS server.

Indeed, what is the point for each of several hundred hosts to have corresponding A and PTR records? We may not be too tense, just editing the / etc / hosts file for dnsmasq , but you can avoid this completely unnecessary work.

We chose PostgreSQL as the DBMS for NetXMS; accordingly, we needed a DNS server that could get at least A and PTR from PostgreSQL. The following options were immediately found:

- Dynamically Loadable Zones for BIND

- Generic PgSQL backend for PowerDNS

- Relative exotic - implementations of perl or python DNS servers specially designed for extension by native handlers include PipeBackend and RemoteBackend for PowerDNS in the same category

We discarded too exotic solutions, we have an irrational aversion to bind, so PowerDNS remains. It was required to add to its configuration:

load-modules=gpgsql

launch=gpgsql

gpgsql-host=nxhost

gpgsql-dbname=nxdb

gpgsql-user=nxreader

gpgsql-password=nxreaderpwd

recursor=8.8.8.8

PowerDNS expects to see the following tables in the nxdb database:

CREATETABLE domains (

idSERIAL PRIMARY KEY,

nameVARCHAR(255) NOTNULL,

masterVARCHAR(128) DEFAULTNULL,

last_check INTDEFAULTNULL,

typeVARCHAR(6) NOTNULL,

notified_serial INTDEFAULTNULL,

accountVARCHAR(40) DEFAULTNULL

);

CREATETABLErecords (

idSERIAL PRIMARY KEY,

domain_id INTDEFAULTNULL,

nameVARCHAR(255) DEFAULTNULL,

typeVARCHAR(10) DEFAULTNULL,

contentVARCHAR(65535) DEFAULTNULL,

ttl INTDEFAULTNULL,

prio INTDEFAULTNULL,

change_date INTDEFAULTNULL

);

Of course, such tables are not there, but instead of them there is the following:

nxdb=> SELECTid, name, primary_ip, last_modified FROM nodes INNERJOIN object_properties ONid = object_id;

id | name | primary_ip | last_modified

------+------------------------+-----------------+---------------

1 | ns | 10.1.1.1 | 1376221181

Therefore, the required tables are easily replaced by views:

CREATEORREPLACEVIEW domains ASSELECT0::INTASid,

'domain.local'::TEXTASname,

NULL::TEXTASmaster,

NULL::INTas last_check,

'NATIVE'::TEXTastype,

NULL::INTas notified_serial,

NULL::TEXTasaccount;

CREATEORREPLACEVIEWrecordsASSELECT0::INTASid,

0AS domain_id,

'domain.local'ASname,

'SOA'::TEXTAStype,

'ns.domain.local'::TEXTAScontent,

0AS ttl,

NULL::INTAS prio,

NULL::TEXTAS change_date

UNIONSELECTid,

0AS domain_id,

LOWER(name) ASname,

'A'::TEXTAStype,

primary_ip::TEXTAScontent,

0AS ttl,

NULL::INTAS prio,

last_modified::TEXTAS change_date

FROM nodes

INNERJOIN object_properties ONid = object_id;

Result:

$ nslookup

> ns

Server: 10.1.1.1

Address: 10.1.1.1#53

Name: ns.domain.local

Address: 10.1.1.1

The reverse zone can be implemented in a similar way, but it can be a little more interesting. Any host can have several interfaces with different addresses, so the name corresponding to the address can be composed of the host name itself and the interface name. Then the last view will change as follows:

CREATEORREPLACEVIEW domains ASSELECT0::INTASid,

'domain.local'::TEXTASname,

NULL::TEXTASmaster,

NULL::INTas last_check,

'NATIVE'::TEXTastype,

NULL::INTas notified_serial,

NULL::TEXTasaccount;

CREATEORREPLACEVIEWrecordsASSELECT0::INTASid,

0AS domain_id,

'domain.local'ASname,

'SOA'::TEXTAStype,

'ns.domain.local'::TEXTAScontent,

0AS ttl,

NULL::INTAS prio,

NULL::TEXTAS change_date

UNIONSELECTid,

0AS domain_id,

LOWER(name) ASname,

'A'::TEXTAStype,

primary_ip::TEXTAScontent,

0AS ttl,

NULL::INTAS prio,

last_modified::TEXTAS change_date

FROM nodes

INNERJOIN object_properties ONid = object_id

UNIONSELECT0::INTASid,

0AS domain_id,

'in-addr.arpa'ASname,

'SOA'::TEXTAStype,

'ns.domain.local'::TEXTAScontent,

0AS ttl,

NULL::INTAS prio,

NULL::TEXTAS change_date

UNIONSELECT

interfaces.id,

0AS domain_id,

reverse_address(interfaces.ip_addr::INET) ASname,

'PTR'::TEXTAStype,

(object_properties.name||'.'||interfaces.description)::TEXTAScontent,

0AS ttl,

NULL::INTAS prio,

last_modified::TEXTAS change_date

FROM interfaces

INNERJOIN nodes on nodes.id = interfaces.node_id

INNERJOIN object_properties on nodes.id = object_id;

Result:

$ nslookup

> 10.7.1.1

Server: 10.1.1.1

Address: 10.1.1.1#53

1.1.1.10.in-addr.arpa name = ns.eth0.

The reverse_address function is mentioned in the code, its implementation looks like this:

CREATEORREPLACEFUNCTION reverse_address(addr inet)

RETURNStextAS

$t$

DECLAREmaskinteger;

parts text[];

part text;

retval text;

rounds integer;

host text;

BEGIN

retval := '';

rounds := 0;

mask := masklen(addr);

host := host(addr);

IF (family(addr) = 4) THEN

IF (mask < 8) THEN

rounds := 4;

ELSIF (mask < 16) THEN

rounds := 3;

ELSIF (mask < 24) THEN

rounds := 2;

ELSIF (mask < 32) THEN

rounds := 1;

ENDIF;

FOREACH part in ARRAY regexp_split_to_array(host, '\.') LOOP

rounds := rounds + 1;

IF (rounds <= 4) THEN

retval := part || '.' || retval;

ENDIF;

ENDLOOP;

RETURN retval || 'in-addr.arpa';

ELSE

RETURN 'ip6.arpa';

ENDIF;

END;

$t$

LANGUAGE 'plpgsql'

IMMUTABLE;

And this is the only part of the implementation specific to PostgreSQL. Everything else can be done in a similar way on other DBMSs supported by NetXMS and PowerDNS.

Finally, performance: this solution, of course, is not suitable for public DNS servers. For internal use - completely. At the first execution, all requests fit into 100 msec, then the cache works. There is a field for optimization using the gpgsql - * - query parameters instead of representations, but for now we have enough.