Worldwide Data Center Distribution Network - The Heart of the Google Empire

"Googol: a number written in the decimal system as a one followed by one hundred zeros."

(Wikipedia).

Many people know Google's many faces: a popular search engine, a social network, and a host of other useful and innovative services. Few, however, have asked how the company manages to deploy and support them with such speed and fault tolerance. How is the thing that makes this possible, the Google data center, organized, and what are its features? That is the subject of this article.

Google's rapid growth stopped surprising anyone long ago. An active champion of "green energy", a holder of many patents in various fields, open and user-friendly: these are the first associations many people have with Google. The company's data center infrastructure is no less impressive: it is a whole network of data centers around the world with a total capacity of 220 MW (as of last year). Given that investment in data centers amounted to $2.5 billion over the past year alone, the company clearly considers this area strategically important and promising. At the same time there is a certain contradiction: for all the publicity surrounding Google's activities, its data centers are proprietary know-how, a closely guarded secret. The company believes

To begin with, Google does not build its data centers itself. It has a department responsible for design and maintenance, and it works with local integrators who carry out the construction. The exact number of data centers is not disclosed; press estimates range from 35 to 40 worldwide. Most are concentrated in the United States, Western Europe and East Asia. Some equipment is also housed in leased space in commercial data centers with good network connectivity: Google is known to have used space in facilities operated by Equinix (EQIX) and Savvis (SVVS). Strategically, Google aims to move exclusively to its own data centers. The corporation attributes this to growing confidentiality requirements for the data of users who trust the company, and to the inevitability of information leaks in commercial data centers. Following recent trends, Google announced this summer that it will lease "cloud" infrastructure to third-party companies and developers under the IaaS model, as the Compute Engine service, which offers computing capacity in fixed configurations with hourly billing.

The defining feature of the data center network is not so much the high reliability of any single data center as geo-clustering. Each data center has many high-capacity links to the outside world and replicates its data to several other data centers distributed around the world. As a result, even a force majeure event such as a meteorite strike will not significantly affect data integrity.
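
The effect of replicating data across several sites can be illustrated with a rough calculation. The sketch below assumes, purely for illustration, that each site is lost independently and with the same probability; the function name and the 1% figure are hypothetical and are not Google data.

```python
def prob_all_replicas_lost(p_site_failure: float, replicas: int) -> float:
    """Probability that every copy is lost at once.

    Assumes site failures are independent and equally likely,
    which is a simplification made only for this illustration.
    """
    if not 0.0 <= p_site_failure <= 1.0:
        raise ValueError("p_site_failure must be between 0 and 1")
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return p_site_failure ** replicas


if __name__ == "__main__":
    # Hypothetical 1% chance of losing any single site.
    for n in (1, 2, 3, 4):
        print(f"{n} site(s): {prob_all_replicas_lost(0.01, n):.0e}")
```

Under these assumptions each additional replica site multiplies the risk of total loss by the single-site failure probability, which is why the reliability of one building matters less than the number of independent copies.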

Geography of data centers

The first public data center is located in Douglas, USA. This container data center (Fig. 1) was opened in 2005, and it is also the one shown most openly to the public. It is essentially a hangar-like frame structure with containers arranged inside in two rows: 15 containers in a single tier in one row, and 30 containers in two tiers in the other. The containers are 20-foot shipping containers. To date, 45 containers have been put into operation; the facility houses approximately 45,000 Google servers, and the IT load is 10 MW. Each container has its own connection to the water cooling loop and its own power distribution unit which, in addition to circuit breakers, contains power meters for groups of loads so that PUE can be calculated. Pumping stations, chiller cascades, diesel generator sets and transformers are housed separately. The declared PUE is 1.25. The cooling system has two loops; the second uses economizers with cooling towers, which significantly reduces chiller operating time. The economizer is essentially an open cooling tower: water carrying heat from the server room is fed to the top of the tower, where it is sprayed and flows down. Spraying transfers heat to the air that fans draw in from outside, and some of the water evaporates. Interestingly, treated drinking-quality tap water was initially used to top up the external loop. Google quickly realized that the water does not have to be that clean, so a system was created,

Inside each container, the server racks are arranged around a common “cold” aisle. Along it, under the raised floor, are a heat exchanger and fans that blow cooled air up through grilles to the server air intakes. Heated air from the rear of the racks is drawn under the raised floor, passes through the heat exchanger and is cooled, forming a recirculation loop. The containers are equipped with emergency lighting, EPO (emergency power off) buttons, and smoke and temperature sensors for fire safety.
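
The figures quoted for this facility allow a quick back-of-the-envelope check. The sketch below assumes that the 45,000 servers and the 10 MW IT load both refer to the 45 containers in operation, and that the whole IT load is drawn by servers (network equipment is ignored); the function names are illustrative.

```python
CONTAINERS = 45        # containers in operation (from the article)
SERVERS = 45_000       # approximate server count (from the article)
IT_LOAD_MW = 10.0      # IT equipment load (from the article)
DECLARED_PUE = 1.25    # declared PUE (from the article)


def servers_per_container(servers: int, containers: int) -> float:
    """Average number of servers housed in one container."""
    return servers / containers


def watts_per_server(it_load_mw: float, servers: int) -> float:
    """Average IT power per server, assuming servers draw the whole IT load."""
    return it_load_mw * 1_000_000 / servers


def facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Total facility power implied by the IT load and the PUE."""
    return it_load_mw * pue


if __name__ == "__main__":
    print(servers_per_container(SERVERS, CONTAINERS))   # servers per container
    print(round(watts_per_server(IT_LOAD_MW, SERVERS), 1))  # W per server
    print(facility_power_mw(IT_LOAD_MW, DECLARED_PUE))  # MW for the whole site
```

On these assumptions the numbers work out to roughly 1,000 servers per container, a little over 220 W per server, and about 12.5 MW for the facility as a whole.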

Fig. 1. Container data center in Douglas

Interestingly, Google later patented the idea of a “container tower”: containers are stacked on top of one another, with an entrance on one side and utilities (power supply, air conditioning and external communication links) connected on the other (Fig. 3).

Fig. 2. The principle of cooling the air in the container

Fig. 3. The patented “container tower”

A year later, in 2006, a data center opened in The Dalles (USA), on the banks of the Columbia River. It consisted of three separate buildings, two of which (6,400 square meters each) were built first (Fig. 4) and house the machine rooms. Next to them stand buildings housing the refrigeration units, each with an area of 1,700 square meters. The complex also includes an office building (1,800 square meters) and a dormitory for temporary staff accommodation (1,500 square meters).

The project was previously known under the code name Project 02. The site was not chosen by chance: an 85 MW aluminum smelter had operated here before it was shut down.

Fig. 4. Construction of the first phase of The Dalles data center

In 2007, Google began building a data center in southwestern Iowa, in Council Bluffs, not far from the Missouri River. The concept resembles the previous facility, but there are visible differences: the buildings are joined together, and refrigeration equipment, instead of cooling towers, is placed along both sides of the main structure (Fig. 5).

Fig. 5. Data Center in Council Bluffs - a typical concept for building Google data centers

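This concept was apparently adopted as best practice, since it recurs in later projects. Examples include the following data centers in the USA:

- Lenoir (North Carolina): a building of 13,000 square meters, built in 2007-2008 (Fig. 6);

- Moncks Corner (South Carolina): opened in 2008; consists of two buildings, with a site reserved between them for a third; has its own high-voltage substation;

- Mayes County (Oklahoma): construction took three years, between 2007 and 2011; the data center was built in two phases, each comprising a building of 12,000 square meters; power is supplied by a wind farm.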

Fig. 6. Data center in Lenoir

But the lead in secrecy belongs to the data center in Saint-Ghislain (Fig. 7a). Built in Belgium in 2007-2008, it is larger than the Council Bluffs data center. It is also notable because, if you look for it on Google's satellite map, you will see only an empty field (Fig. 7b). Google says the operation of this data center has no negative impact on the surrounding settlements: the cooling towers use treated water. A dedicated multi-stage treatment plant was built nearby for this purpose, and water is supplied to it from an industrial shipping canal.

Fig. 7a. Data center in Saint-Ghislain on the Bing map

Fig. 7b. But on the Google map it does not exist!

During construction, Google's engineers noted that it would make sense to take the water for the external cooling loop from a natural source rather than from the water supply. For its next data center the corporation bought an old paper mill in Hamina, Finland, and converted it into a data center (Fig. 8). The project took 18 months and involved 50 companies, and it was completed successfully in 2010. The scale of the effort was no accident: the cooling concept developed there allowed Google to claim that its data centers are environmentally friendly as well. The northern climate, combined with the low freezing point of salt water, made it possible to cool the facility without any chillers, using only pumps and heat exchangers. The design is a typical two-loop water-to-water scheme with an intermediate heat exchanger: sea water is pumped into the heat exchangers, cools the data center, and is then discharged into a bay of the Baltic Sea.

Fig. 8. Reconstruction of the old paper mill in Hamina turns it into an energy-efficient data center

In 2011, a data center with an area of 4,000 square meters was built in the northern part of Dublin (Ireland) by converting an existing warehouse building. It is the most modest of the known data centers, built as a springboard for deploying the company's services in Europe. In the same year, development of a data center network in Asia began: three data centers are planned, in Hong Kong, Singapore and Taiwan. And this year, Google announced the purchase of land for a data center in Chile.

Notably, in the data center under construction in Taiwan, Google's engineers took a different approach, deciding to exploit the cheaper night-time electricity tariff. At night, chillers cool the water in huge cold-storage tanks, and the stored cold is used for cooling during the day. It is not known, however, whether a phase-change coolant will be used or whether the company will limit itself to chilled-water tanks. Google may disclose this once the data center is commissioned.

At the opposite extreme is an even bolder project: a floating data center, which Google patented in 2008. According to the patent, the IT equipment is housed on a floating vessel, cooled by cold sea water, and powered by floating generators that produce electricity from wave motion. The pilot project is expected to use floating generators made by Pelamis: 40 such generators, floating over an area of 50x70 meters, would generate up to 30 MW, enough to run the data center.

Incidentally, Google regularly publishes the PUE energy-efficiency figures for its data centers, and its measurement method is itself of interest. In the classical definition from The Green Grid, PUE is the ratio of the total power consumed by the data center to the power consumed by its IT equipment. Google measures PUE for the whole facility, including not only the data center's supporting systems but also conversion losses in transformer substations and cables, energy consumed in office space, and so on, that is, everything inside the site perimeter. The measured PUE is reported as an average over one year. As of 2012, the average PUE across all Google data centers was 1.13.
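
The PUE calculation described above can be sketched as follows. The breakdown into cooling, conversion losses and office consumption mirrors the whole-site boundary described in the text, but the function names and sample readings are hypothetical. The energy-weighted yearly average is also an assumption, since the article does not say how the annual average is formed.

```python
from typing import Iterable, Tuple


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """PUE = total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def whole_site_pue(it_kwh: float, cooling_kwh: float,
                   conversion_losses_kwh: float, office_kwh: float) -> float:
    """PUE with everything inside the site perimeter counted in the numerator."""
    total = it_kwh + cooling_kwh + conversion_losses_kwh + office_kwh
    return pue(total, it_kwh)


def annual_pue(readings: Iterable[Tuple[float, float]]) -> float:
    """Energy-weighted PUE over a series of (total_kwh, it_kwh) readings."""
    readings = list(readings)
    total = sum(t for t, _ in readings)
    it = sum(i for _, i in readings)
    return pue(total, it)


if __name__ == "__main__":
    # Hypothetical readings, chosen only to show the arithmetic.
    print(whole_site_pue(it_kwh=1000, cooling_kwh=90,
                         conversion_losses_kwh=30, office_kwh=10))
    print(annual_pue([(1200, 1000), (1100, 1000)]))
```

Widening the measurement boundary can only raise the numerator, so a PUE measured for the whole site is never lower than one measured for the machine rooms alone.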

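Features of choosing a data center construction site

Clearly, when building data centers on this scale, Google does not choose their locations at random. What criteria do the company's specialists consider first?

- Reasonably cheap electricity, the ability to deliver it to the site, and its environmentally friendly origin. In keeping with its environmental policy, the company uses renewable energy sources; a single large Google data center consumes about 50-60 MW, enough to make it the sole customer of an entire power plant. Renewable sources also provide independence from energy prices. Hydroelectric plants and wind farms are currently used.

- A large supply of water for the cooling system, either a canal or a natural body of water.

- Buffer zones separating the site from roads and settlements, so that a secure perimeter can be built and the facility kept as confidential as possible. At the same time, highways must be available for normal transport access to the data center.

- A land plot large enough to allow later expansion and the construction of auxiliary buildings or the company's own renewable power sources.

- Communication links. There should be several, and they must be reliably protected. This requirement became especially pressing after recurring link outages at a data center in Oregon (USA). The overhead communication lines ran along power lines whose insulators became something like shooting targets for local hunters. During hunting season the data center's connectivity was therefore repeatedly cut, and restoring it took considerable time and effort. The problem was eventually solved by laying the communication lines underground.

- Tax incentives. A logical requirement, given that the "green technologies" used are much more expensive than traditional ones. In payback calculations, tax breaks should therefore offset the already high capital costs of the first stage.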

Let us start with the server fleet. The number of servers has not been disclosed, but various sources put it at one to two million, adding that even the higher figure is not the limit and that the existing data centers are not yet full (given the floor area of the server rooms, this is hard to dispute). Servers are chosen for value for money rather than for absolute quality or performance. The server platform is x86, and the operating system is a modified version of Linux. All servers are combined into clusters.

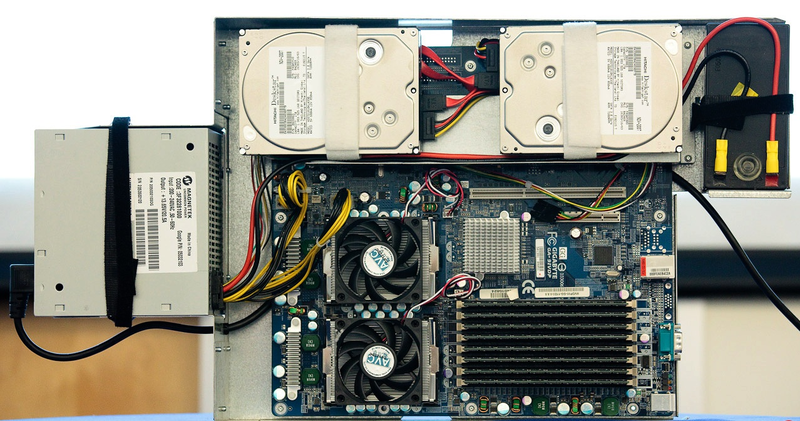

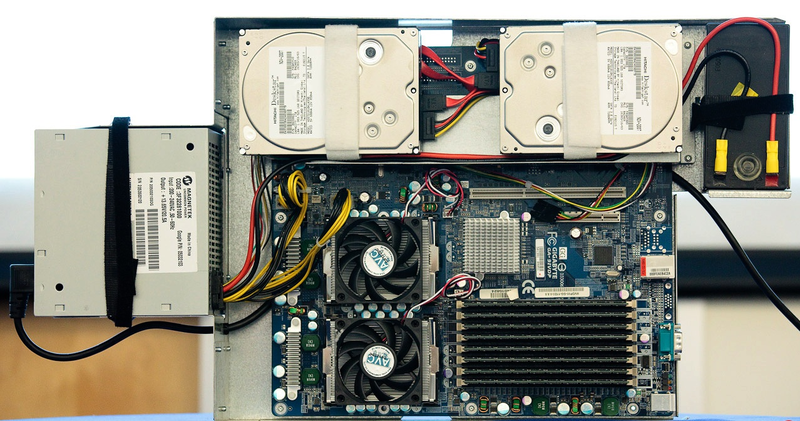

Back in 2000, the company began thinking about reducing power transmission and conversion losses in servers. As a result, the power supplies meet the Gold level of the Energy Star standard, with an efficiency of at least 90%. All components not needed to run the applications were also removed from the servers: for example, there are no graphics adapters, the fans are speed-controlled, and the components can scale their power consumption in proportion to load. Interestingly, for large and container data centers, where servers are essentially consumables, it was apparently decided that a server's lifetime is comparable to a battery's. So instead of a UPS, a battery is installed in the server itself. This reduced UPS losses and eliminated the problem of low UPS efficiency at light load. A dual-processor x86 platform is known to have been used, with Gigabyte manufacturing motherboards specifically for Google. Curiously, the server has no conventional enclosed case: there is only a bottom tray that holds the hard drives, motherboard, battery and power supply (Fig. 9). Installation is very simple: the administrator pulls a metal blanking panel out of the slot and slides in a server, through which air flows freely from front to back. After installation, the battery and power supply are connected.

The condition and performance of every server hard drive are monitored, and data is additionally archived to tape. Failed storage media are disposed of in a distinctive way. First, each disk goes through a kind of press: a metal punch pierces the drive and crushes the platter chamber so that the platters cannot be read by any currently available means. The disks then go into a shredder, and only after being shredded may they leave the data center grounds.

Security for personnel is equally strict: the perimeter is guarded, rapid-response teams are on duty around the clock, and employees are identified first by a badge made with lenticular printing, which reduces the likelihood of forgery, and then by biometric iris scanning.

Fig. 9. A typical “Spartan” Google server: nothing superfluous

All servers are mounted in 40-inch two-post open racks arranged in rows with a common "cold" aisle. Interestingly, Google does not use purpose-built structures to contain the “cold” aisle. Instead it uses hinged, rigid, movable polymer slats, which it describes as a simple and inexpensive solution that makes it quick to add racks to existing rows and, where needed, to fold the slats up over the top of a rack.

Beyond hardware, Google is known to use the Google File System (GFS), designed for large data sets. The system is clustered: data is split into 64 MB blocks, and each block is stored in at least three places at once, with the ability to locate the replicas. If any of the systems fails, the replicas are found automatically by specialized programs built on the MapReduce model, which parallelizes operations and runs tasks on several machines simultaneously. Data is encrypted inside the system. BigTable uses distributed storage to hold large volumes of data with fast access, for example for web indexing, Google Earth and Google Finance. The basic web applications are Google Web Server (GWS) and Google Front-End (GFE), which use an optimized Apache core. All these systems are closed and custom-built; Google explains that closed, custom systems are highly resistant to external attacks and have significantly fewer vulnerabilities.
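
The block-and-replica scheme described above can be illustrated with a small sketch. It takes the 64 MB block size and three copies from the text; the round-robin placement, the function names and the server labels are assumptions made for illustration and do not represent GFS's actual placement policy.

```python
from typing import List, Sequence

CHUNK_SIZE = 64 * 1024 * 1024   # 64 MB blocks, as described in the article
REPLICAS = 3                    # each block is stored in at least three places


def chunk_count(file_size_bytes: int) -> int:
    """Number of 64 MB blocks needed to hold a file of the given size."""
    if file_size_bytes < 0:
        raise ValueError("file size must be non-negative")
    return (file_size_bytes + CHUNK_SIZE - 1) // CHUNK_SIZE


def place_replicas(num_chunks: int, servers: Sequence[str],
                   replicas: int = REPLICAS) -> List[List[str]]:
    """Assign each block to `replicas` distinct servers.

    Round-robin is a stand-in policy chosen for simplicity (hypothetical).
    """
    if len(servers) < replicas:
        raise ValueError("need at least as many servers as replicas")
    return [
        [servers[(chunk + k) % len(servers)] for k in range(replicas)]
        for chunk in range(num_chunks)
    ]


if __name__ == "__main__":
    size = 200 * 1024 * 1024  # a hypothetical 200 MB file
    layout = place_replicas(chunk_count(size), ["a", "b", "c", "d"])
    for index, holders in enumerate(layout):
        print(index, holders)
```

Because every block lives on three different machines, the loss of any single server leaves at least two copies of each block it held.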

In summary, several points stand out. Google plans the costs and development strategy of its data centers sensibly, following a "best value for money" approach rather than a "best solution" one. There is no unnecessary functionality and no decorative excess, only “Spartan” internals, even if some may find them unattractive. The company actively uses green technologies, not as an end in themselves but as a way to reduce electricity costs and fines for environmental pollution. In building data centers it does not rely on large numbers of redundant systems; instead, the data center itself is backed up, which minimizes the influence of external factors. The main emphasis is on software and non-standard solutions. The focus on renewable energy sources and natural water resources suggests that the company is trying to be as independent as possible of rising energy prices. The environmental friendliness of its solutions correlates well with their energy efficiency. All this suggests that Google not only has strong technical expertise but also knows how to invest money and look ahead, keeping pace with market trends.

Konstantin Kovalenko, TsODy.RF magazine, issue No. 1

Features of choosing a data center construction site

Clearly, when building data centers on this scale, Google does not choose their locations at random. Which criteria do the company's specialists consider first?

- Fairly cheap electricity, the ability to deliver it to the site, and its environmentally friendly origin. In keeping with its environmental policy, the company uses renewable energy sources. One large Google data center consumes about 50-60 MW, enough to make it the sole customer of an entire power plant. Moreover, renewable sources reduce dependence on energy prices. Currently, hydroelectric power stations and wind farms are used.

- A large amount of water available for the cooling system. This can be either a canal or a natural body of water.

- Buffer zones separating the site from roads and settlements, so that a secure perimeter can be built and maximum confidentiality of the facility maintained. At the same time, highways must be available for normal transport links with the data center.

- The plot of land purchased for a data center should allow for further expansion and for the construction of auxiliary buildings or the company's own renewable power sources.

- Communication channels. There should be several of them, and they must be reliably protected. This requirement became especially relevant after recurring losses of connectivity at the data center in Oregon (USA). The overhead communication lines ran along power lines, whose insulators became something like shooting targets for local hunters. As a result, during the hunting season the connection to the data center was constantly cut off, and restoring it took a lot of time and considerable effort. The problem was eventually solved by laying underground communication lines.

- Tax exemptions. A logical requirement, given that the "green technologies" used are much more expensive than traditional ones. Accordingly, in payback calculations, tax incentives should offset the already high capital costs of the first stage.

Features in detail

Let's start with the server fleet. The number of servers has not been disclosed, but various sources put the figure at one to two million, adding that even the higher number is not the limit and that the existing data centers are not completely filled (given the area of the server rooms, it is hard to disagree). Servers are selected for their price/performance ratio rather than for absolute quality or performance. The server platform is x86, and a modified version of Linux is used as the operating system. All servers are combined into clusters.

Back in 2000, the company began thinking about reducing power transmission and conversion losses in its servers. As a result, the power supplies comply with the Gold level of the Energy Star standard, with an efficiency of at least 90%. In addition, all components not required by the applications running on the servers were removed. For example, the servers have no graphics adapters, the fans are speed-controlled, and the components are energy-proportional, drawing less power at lower load. Interestingly, in large data centers and container data centers, where servers are essentially consumables, it was apparently decided that the service life of a server is comparable to that of a battery. Hence, instead of a central UPS, a battery is installed in each server. This reduced UPS losses and eliminated the problem of low UPS efficiency at low load.

It is known that a dual-processor x86 platform was used, and that the well-known company Gigabyte produced motherboards specifically for Google. Curiously, the server has no conventional enclosed case: there is only the bottom tray, which holds the hard drives, motherboard, battery and power supply (Fig. 9). Installation is very simple: the administrator pulls a metal blanking plate out of the rack slot and inserts a server in its place, leaving it open to airflow from front to back. After installation, the battery and power supply are connected.

The status and performance of every server hard drive are monitored. In addition, data is archived to tape. Failed storage media (hard drives) are disposed of in a distinctive way. First, the drives are fed one at a time into a kind of press: a metal punch pierces the hard drive and crushes the chamber containing the platters, making them unreadable by any method currently available. The drives then go into a shredder, and only after they have been shredded may they leave the data center premises.

Security with respect to staff is equally strict: the perimeter is guarded, rapid response teams are on duty around the clock, and employees are identified first by a badge made with lenticular printing, which reduces the likelihood of counterfeiting, and then by biometric iris scanning.

Fig. 9. A typical “Spartan” Google server - nothing superfluous

All servers are installed in 40-inch double-frame open racks, which are placed in rows with a common “cold” aisle. Interestingly, Google does not use special structures to enclose the “cold” aisle in its data centers. Instead, it uses hinged, rigid, movable polymer lamellas, maintaining that this is a simple and inexpensive solution that makes it possible to quickly add lamellas to existing rows and fold them up above the top of the rack.

It is known that, in addition to its own hardware, Google uses the Google File System (GFS), a file system designed for large data sets. Its distinguishing feature is that it is clustered: information is divided into 64 MB blocks and stored in at least three places at the same time, with the ability to locate the replicated copies. If any machine fails, the replicated copies are found automatically. Data is processed by specialized programs built on the MapReduce model, which implies parallelizing operations and running tasks on several machines simultaneously. Information is encrypted inside the system. BigTable is a distributed storage system for large volumes of data that require fast access, used for example for web indexing, Google Earth and Google Finance. The basic web applications are Google Web Server (GWS) and Google Front-End (GFE), which use an optimized Apache core. All these systems are closed and customized. Google explains this by the fact that closed, customized systems are very resistant to external attacks and have significantly fewer vulnerabilities.
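The idea of 64 MB blocks stored in three places can be sketched in a few lines. This is a simplified illustration, not the actual GFS algorithm: the round-robin placement policy, the function names and the server names are all assumptions made for the example.

```python
import math

CHUNK_SIZE = 64 * 1024 * 1024   # 64 MB blocks, as described in the article
REPLICAS = 3                    # each block is stored in at least three places


def chunk_count(file_size: int) -> int:
    """Number of 64 MB chunks needed to hold a file of the given size in bytes."""
    return math.ceil(file_size / CHUNK_SIZE)


def place_chunks(file_size: int, servers: list, replicas: int = REPLICAS) -> dict:
    """Assign every chunk to `replicas` distinct servers.

    Round-robin placement is an assumption for illustration only;
    the real placement policy is not described in the article.
    """
    if len(servers) < replicas:
        raise ValueError("not enough servers for the requested replica count")
    return {
        idx: [servers[(idx + r) % len(servers)] for r in range(replicas)]
        for idx in range(chunk_count(file_size))
    }


def live_replicas(placement: dict, failed: set) -> dict:
    """For each chunk, list the replicas that are still reachable."""
    return {idx: [s for s in locs if s not in failed]
            for idx, locs in placement.items()}


# Hypothetical cluster of five servers and a 200 MB file.
servers = ["s1", "s2", "s3", "s4", "s5"]
placement = place_chunks(200 * 1024 * 1024, servers)
survivors = live_replicas(placement, failed={"s3"})

for idx, locs in survivors.items():
    print(f"chunk {idx}: still available on {locs}")
```

With three replicas per chunk, the loss of a single server leaves at least two readable copies of every chunk, which is what allows such a system to keep working while the missing copy is restored elsewhere.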

In conclusion, several important points deserve mention. Google plans the costs and development strategy of its data centers sensibly, following the principle of "best price/quality ratio" rather than "best solution." There is no unnecessary functionality and no decorative excess - only a “Spartan” interior, even if some may not find it aesthetically pleasing. The company actively uses green technologies, not as an end in itself but as a means of reducing operating costs for electricity and fines for environmental pollution. When building data centers, the emphasis is not on a large number of redundant systems; instead, the data center itself is backed up as a whole (thereby minimizing the influence of external factors). The main emphasis is on the software level and on non-standard solutions. The focus on renewable energy sources and natural water resources suggests that the company is trying to be as independent as possible of rising energy prices. The environmental friendliness of the solutions used correlates well with their energy efficiency. All this suggests that Google not only has strong technical competence but also knows how to invest money and look ahead, keeping pace with market trends.

Konstantin Kovalenko, TsODy.RF magazine, issue No. 1