Postmortem doubles: how to defeat Cthulhu and another 2,000 people

Hello everyone, my name is Olya. Two weeks ago, another contest took place at CodinGame, a contest for programming bots for the game. I hit the top 300 of the world leaderboard, so I want to tell why the contests are cool, and share my secrets. And Ivan will share the secrets of spaceorc , who got into the top 100 of the same competition.

You will learn how to successfully perform at competitions in programming game artificial intelligence.

What is CodinGame

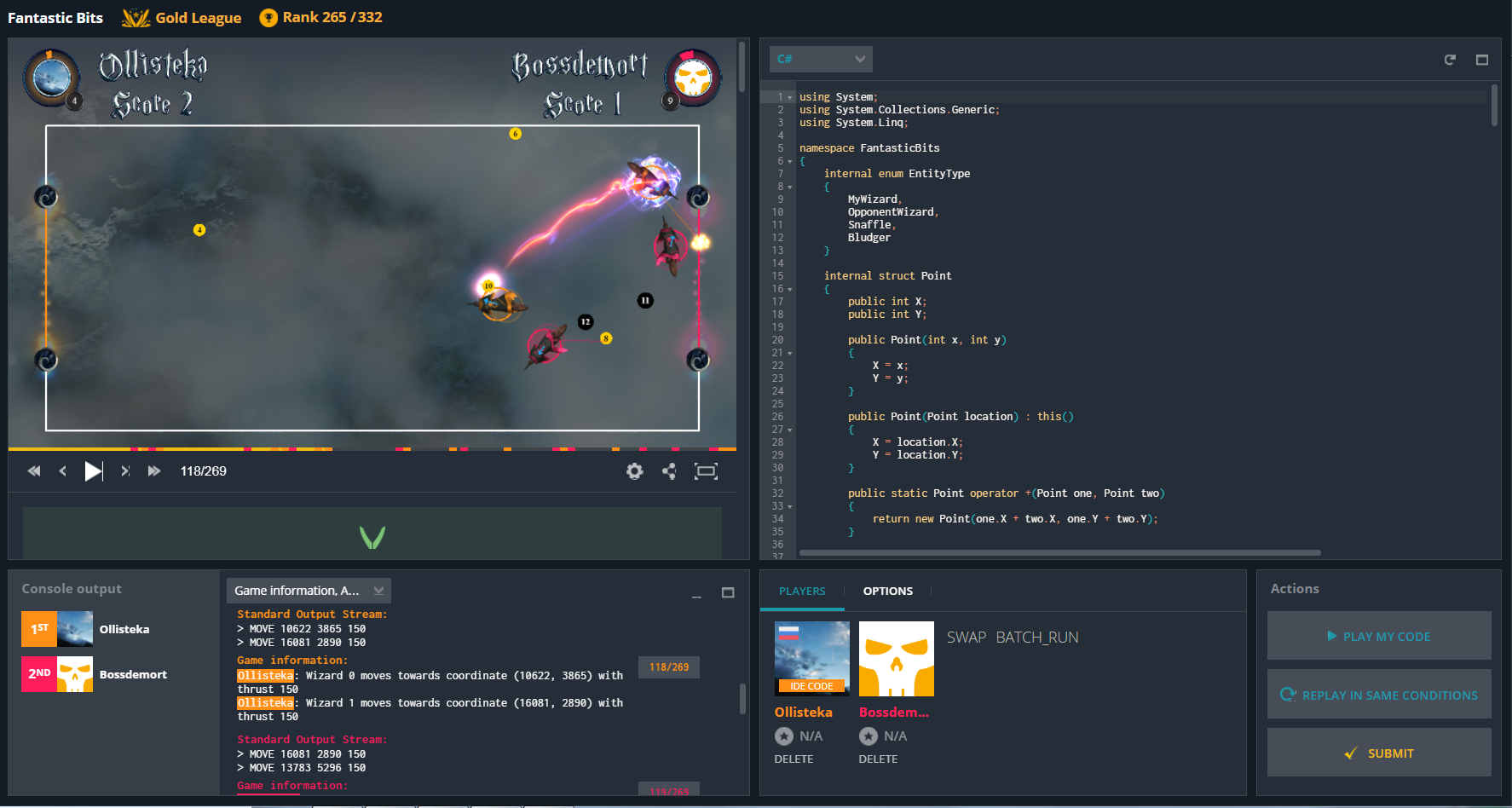

codingame.com is an educational platform for developers of all ages and levels of training. You can write in 26 languages : from C # and Python to Bash and Haskell. The coolest thing is that the puzzles there are not boring and incomprehensible, but real games with a decent GUI:

The contest is a 10-day competition that is held every couple of months. Anyone can participate, not necessarily be a finalist of ACM ICPC. Of course, to get to the very top, you need at least to understand the algorithms that are typical for artificial intelligence.

But in order to spend a couple of evenings with interest, the most basic knowledge is enough. In each contest there is a ready code for reading the input data. It remains only to read the rules, write unpretentious if-else - and into battle!

Code of kutulu

The Code of Cthulhu is the last contest held from June 15 to June 25. To learn the plot and purpose, enough descriptions:

PH'NGLUI MGLW'NAFH CTHULHU R'LYEH WGAH'NAGL FHTAGN

What can lie forever is not at all dead. And Death dies in a mysterious eon.

You and your team of researchers have discovered the tomb of Cthulhu. This is the worst decision in your life, as he was not ready to wake up and is now eager for your death. But the crypt is a real labyrinth, and you do not remember where the exit was ... If it still exists!

Oh ... and it seems that Cthulhu was not alone, and now he is sending the Depths after you.

Try to stay alive the longest, but remember that you alone will not last long ...

rules

I’ll say right away that instead of reading a textual description of the rules, you can watch a videotape of the parsing of rules and basic tactics from Tinsane :

Otherwise read on.

Map

In the game, four players walk on a generated map — more precisely, a graph of cells connected with each other. More on the map are running enemy minions, whose goal is to catch up and scare the players.

Characters

- The researcher is one of the players. Walks in any direction on one cell. Possesses superpowers, but about them later.

- Wanderer - green monsters. It appears on the map once every 5 turns, from previously known points. Spawn in 3-6 moves, look for the nearest player and start the pursuit. Goes only one cell per turn. If you do not catch up with anyone in N steps, it disappears from the map.

- Slasher - similar to Death with a scythe. Appear on the player's place, whose health fell below 200 units, and remain on the map until the end of the game. “Sees” a player if there are no walls between them. If the goal is not out of sight in 2 moves, on the next move it jumps to the place where the player was last seen.

Survival

If a vanderer or slasher gets into a cell with a player, the player loses 20 health points. Players also lose a certain amount of health each turn just like that. But if there are living researchers in a radius of two cells, then health is lost a little less.

Superpowers researchers

- PLAN - increases health by 2 points for 5 turns. If other researchers are in range, the effect extends to them, and the effect creator gets +2 health per person. During the game you can use 2 times.

- YELL - scares the player in the next cell. The action planned for the next move turns into WAIT (the player will simply stand still). It is useful if the vanderer is chasing the enemy and you want to substitute him.

- LIGHT - illuminates the cells in a radius of 5, you can use 3 times per game. It helps to scare away the Wanderers: when they count the way to the nearest target, each lit cell counts as 4.

End of the game

A player dies if health drops to zero. After 200 moves, the surviving players begin to lose their health faster, and the game ends almost immediately.

Contest developers give players rules, but successful players go to Github and read the referee code .

Tactics

I must say that I did not start from scratch. On June 16, Kontur conducted coding hubs in seven cities - meetings for those who want to discuss the contest and give them a pleasant atmosphere ( photo ).

I passed the exam at the university and did not get to the hub in Yekaterinburg, but I took advantage of the bonus from the organizers - the starter kit. It is available in two languages - C # and TypeScript , and it has already implemented the entire infrastructure: the logic of reading the state of the game at each turn, as well as classes that characterize the game itself (such as state and possible actions), and some auxiliary (for example, custom timer) . I was able to immediately start writing the most interesting thing - my brainbot the researcher.

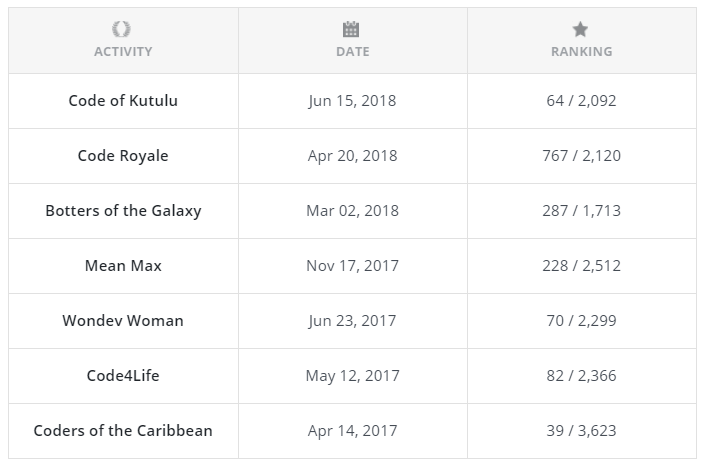

One of the leaders of the hub in Yekaterinburg is Vanya Dashkevich ( spaceorc ). He has been sitting on CodinGame for more than a year, has participated in seven contests, and in five has hit the world top 100:

I learned from Vanya details of the decision that secured him 64th place in the world leaderboard, and compared his decision with mine.

[1] Come to the hubs: there you can get links to starters-whales, discuss the rules, come up with tactics and meet interesting people.

What is at the beginning of the contest, what is at the end, the algorithm for selecting the next move looks the same:

- Generate all available bot actions;

- Apply them to the current state;

- Evaluate the results of these moves;

- Choose the best.

Generating available actions

Ollisteka : Already at this step I began to apply heuristics - I imagined myself in the place of this researcher, and decided what would be good and what was not. Can I go to the next free cell? We add such a move. I still have an unused plan, but there is no vanderer or slasher around who will attack me on the next turn? We add.

According to the same scheme, LIGHT and YELL fell into the list of possible actions, but their use only lowered me lower in the leaderboard. Therefore, I finally removed them from the final implementation.

[2] Do not be afraid to include fantasy: for a start, quite simple heuristics and a pair of conditional operators.

Use stroke

The process of applying a turn to the state of the game is called a simulation. The presence of the simulation allows the use of advanced AI programming techniques: minimax , genetic algorithms , Monte Carlo Tree Search and others.

Ollisteka : It is this step that applies to my “non-stimulation”. "Nedo" - because after I went, the other players must go, the enemies will go or fall back. However, I was too lazy to do a full simulation for four players and a large number of enemies with non-trivial logic. Therefore, I only changed my health depending on whether I was alone or in a group, and didn’t come across a vanderer on this move.

spaceorc : My usual approach recently consists of two steps:

- one can reach the stage when the organizers open all the rules and drop the source code of the “referee” on Github;

- you take the referee and write, looking at him, a simulation.

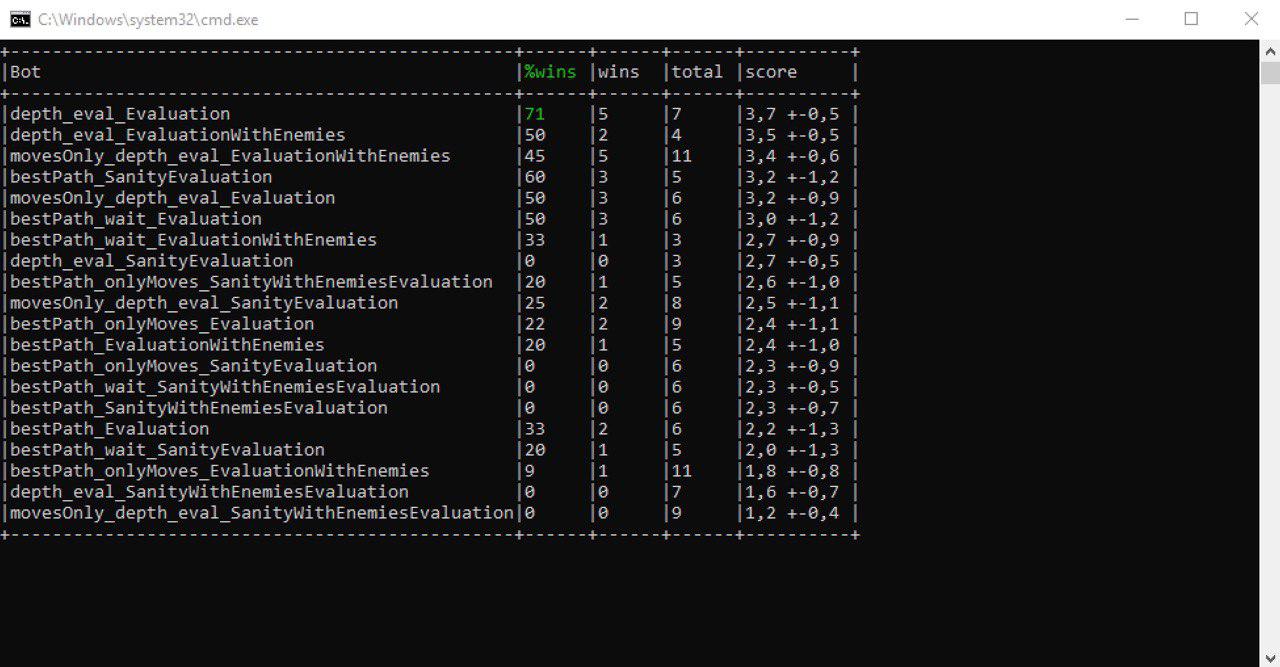

The simulation is complete, with all the nuances, but ineffective. I usually bet on the speed and depth of the search ... But the full simulation allows you to run many matches locally and compare different strategies. I tested the full simulation on CodinGame - predicted the positions of the minions, knowing how the rivals descended (that is, on the next turn), and dealt with discrepancies. When the complete simulation was ready, I began to write fast - it is easy to do it, having a working one.

[3] Want to get to the top? We'll have to deal with the rules and write a simulation.

Simulation of opponents

spaceorc : Posted by Monte Carlo Tree Search, but he played worse because there was too little brunt time. In general, this approach came to me more or less only in Ultimate Tic-Tac-Toe . The opponents played the same way - a simulation for a move plus a score, my moves are MCTS and we play the game to the end. It was possible in this way to simulate about 50 games to the end for 50 ms. This is not enough for MCTS, so I cut it down and returned to the original idea.

As a result, the fast simulation became incomplete:

- I stopped looking at Wanderers more than 8 cells from me;

- I stopped spawning vandererov, because they already spawn in 5 moves, and this is my search depth approximately.

Due to this increased depth busting. I also tried to simulate only moves for opponents (without YELL, LIGHT, PLAN), but it turned out worse.

Evaluation function

Evaluation function helps to choose the best from all available moves. She takes the state of the game to the input, and the output gives a number with an estimate - the bigger it is, the better the state of the game for the current player.

Ollisteka : What parameters were included in my assessment:

- the health of my researcher;

- Wanderers:

- if he most likely comes here next turn, then this is a bad move;

- if I'm on the same line with him - the farther from him, the better;

- if he also hunts me, the distance is even more important.

- free cells around, so as not to run into a dead end;

- other researchers, to which it is better to stay closer;

- current PLAN: if my health drops below 230, then treatment is a good idea.

At some point I tried to evaluate the slashers, but when I removed them, I picked up a couple of dozen places in the leaderboard. If I had worked their logic better, then perhaps they would have brought more benefits.

As a result, my estimate could have been smaller, but, as they say, it works - do not touch.

spaceorc : I tried to play with evaluative functions, but it didn’t work out very well ... In general, some of those who turned out to be significantly taller than me in the leaderboard didn’t do so much, but came up with good features for evaluation. I did not cope. My final evaluation function included:

- the number of points (tour, which died + health);

- Black ;

- distance to other researchers.

[4] Experiment: change the coefficients of the parameters of the evaluation function, add new parameters and delete old ones.

Choosing the best move

Sort moves by descending grade, take the first, use.

Optimization

In order to compete for a place in the top hundred, it is not enough to have a good evaluation function and a complete simulation. The faster the bot works, the more games will be simulated per time slice. The more games, the greater the chance that the current move is the most optimal. The more optimal the move, the higher the place in the leaderboard.

Ollisteka : I took advantage of the fact that the card can be represented as a graph - I calculated in advance the lengths of all the paths from cell to cell and did not spend time on it at each turn.

spaceorc : The simulation worked quickly on CodinGame, in 50 ms it made several tens of thousands of moves. Due to which:

- Bitmasks and unsafe code.

- Explorer - int, wanderer - int, slasher - int.

- All changeable state fits into 128 bytes, so everything works very fast with it.

- The coordinate is byte because the largest map had 222 free cells.

- You need a queue - var queue = stackalloc byte [255].

- Preference paths, distances and other things.

I have been doing it all the time lately, it turns out very well. By the way, I always write a lot of tests for such a simulation, without it I just can’t debug it.

[5] Want to compete for a place in the top 100 - get rid of inefficient code.

What did it lead to

Ollisteka : Throughout the entire contest, my bot steadily stayed in the top 300. At one point, I was even at the 84th place in the world leaderboard, but then I stopped the new version and didn’t come back ¯ \ () / ¯ Having finished at the 290th place, I am very pleased for three reasons:

- This is the first contest in which I took a full part, because during the past I was too busy with my studies.

- I liked the game itself - it was clear how to play and what to do to win.

- World top 15% - that sounds cool :)

It was obvious that to get to the top you need to do a complete and fast simulation. But I didn’t want to do this, so I just added parameters to the evaluation function and changed the magic constants.

spaceorc : I am rather satisfied with the result, I went to the top 100 ... But still I had to work more on the evaluation function, a strong bias towards the simulation turned out. And still a little tired at the end, not enough imagination ...

Finally

Come on CodinGame and try your hand! In July they promise a new contest - come to the hubs, we will code bots together.

Useful links:

- CodinGame accounts: mine and Vanya Dashkevich ;

- the next contest Legends of Code and Magic (at the time of this writing, there are already 1392 registered);

- Contour blog and channel in Telegram - wait for the announcements of new hubs in them.

UPD . Thanks to dbf for the comment: Code of Kutulu was uploaded to multiplayer . So you can apply the knowledge gained in the article in practice! :)