Arithmetic coding

Now there are many algorithms for compressing information. Most of them are widely known, but there are some very effective, but, nevertheless, little-known algorithms. This article talks about the method of arithmetic coding, which is the best of the entropy, but nevertheless few people know about it.

Before we talk about arithmetic coding, I must say a few words about the Huffman algorithm. This method is effective when the symbol frequencies are proportional to 1/2 n (where n is a positive integer). This statement becomes obvious if we recall that the Huffman codes for each character always consist of an integer number of bits. Consider a situation where the frequency of occurrence of a character is 0.2, then the optimal code for encoding this character should be –log2 (0.2) = 2.3 bits. It is clear that the Huffman prefix code cannot have such a length, i.e. this ultimately leads to poor data compression.

Arithmetic coding is intended to solve this problem. The main idea is to assign codes not to individual characters, but to their sequences.

First, consider the idea behind the algorithm, then consider a small practical example.

As in all entropy algorithms, we have information about the frequency of use of each symbol of the alphabet. This information is the source for the method under consideration. Now we introduce the concept of a working segment. A half-interval [a; b) with points located on it is called a worker. Moreover, the points are located so that the lengths of the segments formed by them are equal to the frequency of use of the characters. When the algorithm is initialized, a = 0 b = 1.

One coding step consists in a simple operation: the encoded character is taken, for it the corresponding section on the working segment is searched. The found section becomes a new working segment (i.e., it also needs to be divided using dots).

This operation is performed for a number of characters of the source stream. The result of encoding a character string is any number (as well as the length of its bit record) from the final working segment (most often the left boundary of the segment is taken).

That sounds pretty complicated. Let's try to figure it out with a small example. Encode the message "THIS_METHOD_BETTER_HAFFMANA" using the described method.

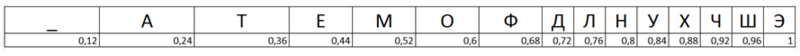

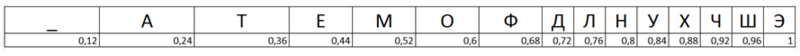

Having compiled a table of the frequency of occurrence of characters, we can start coding. At the first stage we will make a working segment. It will look like this:

We take the first symbol from the stream, this is the symbol "E". The corresponding segment is the segment [0.96; 1). If we wanted to encode one character, then the encoding result would be any number from this segment. But we will not stop at one symbol, but add another one. The symbol "T". To do this, we compose a new working segment with a = 0.96 and b = 1. We break this line with dots in the same way as we did for the original line and read the new symbol “T”. The “T” symbol corresponds to the range [0.24; 0.36), but our working segment has already been reduced to the segment [0.96; 1). Those. borders we need to recount. You can do this using the following two formulas: High = Low old + (High old- Low old ) * Range High (x), Low = Low old + (Highold -Low old ) * Range Low (x), where Low old is the lower boundary of the interval, High old is the upper boundary of the Range High range and Range Low is the upper and lower boundaries of the encoded character.

Using these formulas, we

encode the first word of the message as a whole: The encoding result will be any number from the half-interval [0.97218816; 0.97223424).

Assume that the left boundary of the half-interval, i.e. number 0.97218816.

Consider the decoding process. The code lies in the half-interval [0.96; 1). Those. the first character of the message "E". To decode the second character (which was encoded in the half-interval [0.96; 1)), the half-interval needs to be normalized, i.e. cast to the form [0; 1). This is done using the following formula: code = (code-Range Low (x)) / (Range High (x) -Range Low (x)), where code is the current code value.

Applying this formula, we obtain a new value code = (0.97218816-0.96) / (1-0.96) = 0.3004704. Those. the second character of the sequence "T".

Again we apply the formula: code = (0.304704-0.24) / (0.36-0.24) = 0.5392. The third character of the sequence is “O”.

Continuing decoding, according to the described scheme, we can completely restore the source text.

T.O. we have examined the encoding and decoding algorithm using the most efficient of all entropy algorithms. I hope you learned something new from this article and understood the basic ideas that lie in the arithmetic coding algorithm.

Before we talk about arithmetic coding, I must say a few words about the Huffman algorithm. This method is effective when the symbol frequencies are proportional to 1/2 n (where n is a positive integer). This statement becomes obvious if we recall that the Huffman codes for each character always consist of an integer number of bits. Consider a situation where the frequency of occurrence of a character is 0.2, then the optimal code for encoding this character should be –log2 (0.2) = 2.3 bits. It is clear that the Huffman prefix code cannot have such a length, i.e. this ultimately leads to poor data compression.

Arithmetic coding is intended to solve this problem. The main idea is to assign codes not to individual characters, but to their sequences.

First, consider the idea behind the algorithm, then consider a small practical example.

As in all entropy algorithms, we have information about the frequency of use of each symbol of the alphabet. This information is the source for the method under consideration. Now we introduce the concept of a working segment. A half-interval [a; b) with points located on it is called a worker. Moreover, the points are located so that the lengths of the segments formed by them are equal to the frequency of use of the characters. When the algorithm is initialized, a = 0 b = 1.

One coding step consists in a simple operation: the encoded character is taken, for it the corresponding section on the working segment is searched. The found section becomes a new working segment (i.e., it also needs to be divided using dots).

This operation is performed for a number of characters of the source stream. The result of encoding a character string is any number (as well as the length of its bit record) from the final working segment (most often the left boundary of the segment is taken).

That sounds pretty complicated. Let's try to figure it out with a small example. Encode the message "THIS_METHOD_BETTER_HAFFMANA" using the described method.

Having compiled a table of the frequency of occurrence of characters, we can start coding. At the first stage we will make a working segment. It will look like this:

We take the first symbol from the stream, this is the symbol "E". The corresponding segment is the segment [0.96; 1). If we wanted to encode one character, then the encoding result would be any number from this segment. But we will not stop at one symbol, but add another one. The symbol "T". To do this, we compose a new working segment with a = 0.96 and b = 1. We break this line with dots in the same way as we did for the original line and read the new symbol “T”. The “T” symbol corresponds to the range [0.24; 0.36), but our working segment has already been reduced to the segment [0.96; 1). Those. borders we need to recount. You can do this using the following two formulas: High = Low old + (High old- Low old ) * Range High (x), Low = Low old + (Highold -Low old ) * Range Low (x), where Low old is the lower boundary of the interval, High old is the upper boundary of the Range High range and Range Low is the upper and lower boundaries of the encoded character.

Using these formulas, we

encode the first word of the message as a whole: The encoding result will be any number from the half-interval [0.97218816; 0.97223424).

Assume that the left boundary of the half-interval, i.e. number 0.97218816.

Consider the decoding process. The code lies in the half-interval [0.96; 1). Those. the first character of the message "E". To decode the second character (which was encoded in the half-interval [0.96; 1)), the half-interval needs to be normalized, i.e. cast to the form [0; 1). This is done using the following formula: code = (code-Range Low (x)) / (Range High (x) -Range Low (x)), where code is the current code value.

Applying this formula, we obtain a new value code = (0.97218816-0.96) / (1-0.96) = 0.3004704. Those. the second character of the sequence "T".

Again we apply the formula: code = (0.304704-0.24) / (0.36-0.24) = 0.5392. The third character of the sequence is “O”.

Continuing decoding, according to the described scheme, we can completely restore the source text.

T.O. we have examined the encoding and decoding algorithm using the most efficient of all entropy algorithms. I hope you learned something new from this article and understood the basic ideas that lie in the arithmetic coding algorithm.