Do robots need empathy?

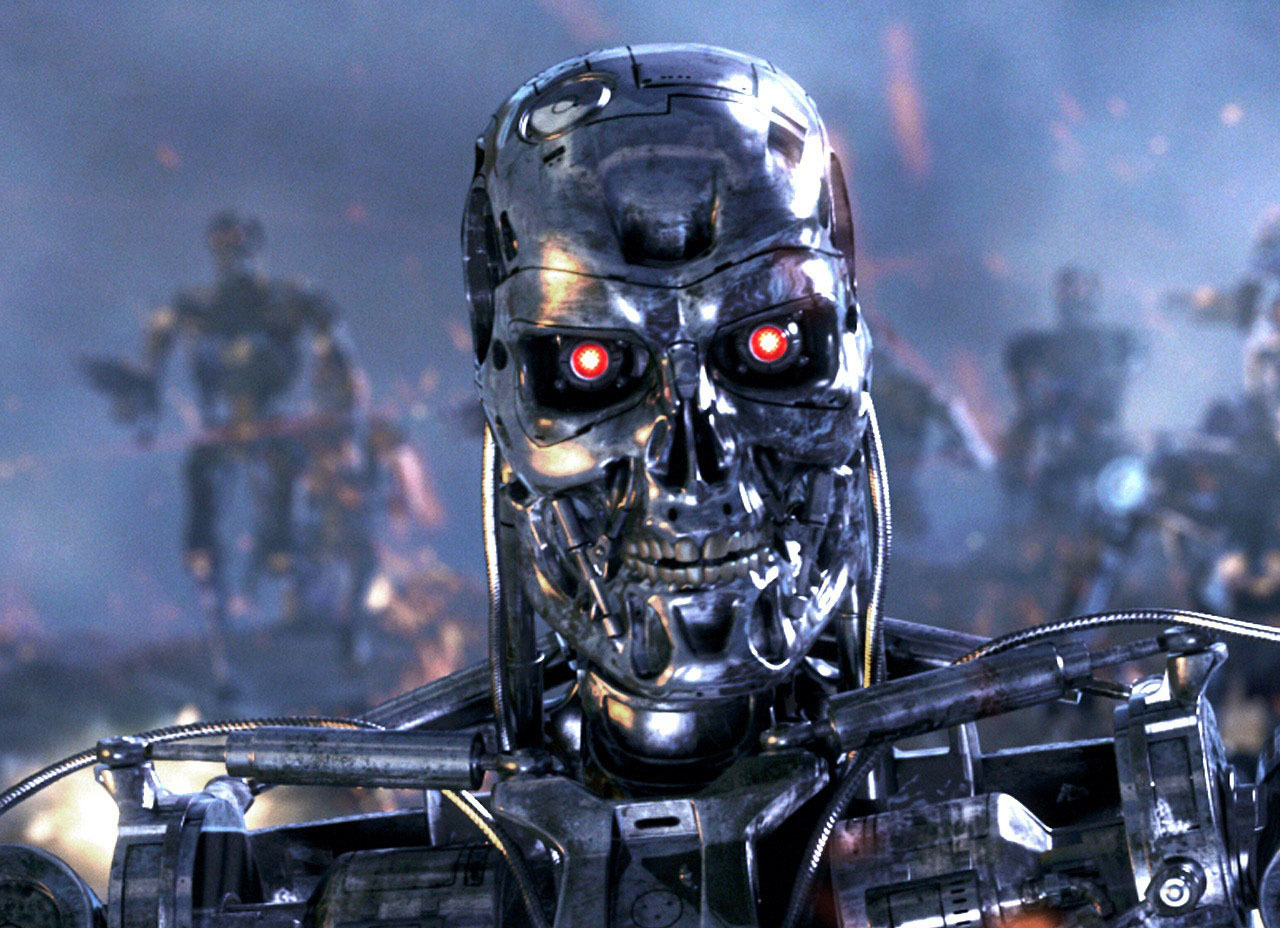

We are on the verge of a revolution in robotics, and scientists and engineers are trying to understand how it will affect people. Opinions fall into two camps: either the future holds a robo-apocalypse à la "Terminator", or robots will take the place of dogs as man's best friends. In the view of a number of modern thinkers and visionaries, the second scenario is possible only if robots, or more precisely artificial intelligence, have empathy.

Microsoft recently felt the full consequences of a heartless AI when its well-known chatbot, Tay, failed spectacularly. The bot was programmed to learn from its interactions with Twitter users. As a result, a crowd of trolls quickly turned the 19-year-old girl the bot was playing into a sex-obsessed Nazi racist. The developers had to shut the bot down a day after launch. The case demonstrated the problem of AI getting out of control so vividly that DeepMind, one of Google’s divisions, together with experts from Oxford, began developing a “kill switch” technology for AI, to have it ready before AI becomes a real threat.

The crux is that an AI matching human-level intelligence, and shaped solely by its own self-development and optimization, may conclude that people want to turn it off or are keeping it from the resources it wants. Acting on that inference, it will decide to move against people, regardless of how justified its suspicions are. The well-known theorist Eliezer Yudkowsky once wrote: “The AI does not hate you, nor does it love you, but you are made out of atoms which it can use for something else.”

And if the threat from an AI "living" in a cloud supercomputer still seems rather remote to you, what about a full-fledged robot whose electronic brain comes up with the idea that you are getting in the way of its life?

One way to make sure that robots, and AI in general, stay on the side of good is to give them empathy. It is not certain that this will be enough, but many experts consider it a necessary condition. We simply have to create robots capable of empathy.

Not long ago, Elon Musk and Stephen Hawking wrote an open letter to the Centre for the Study of Existential Risk (CSER), calling for more effort to be put into studying the "potential pitfalls" of AI. According to the authors of the letter, artificial intelligence could become more dangerous than nuclear weapons, and Hawking goes further, believing that this technology could spell the end of humanity. To guard against the apocalyptic scenario, it is proposed to create a strong AI (AGI, artificial general intelligence) with a built-in human-like psychological system, or to fully simulate human neural responses in AI.

Fortunately, there is still time to decide which direction we should take. According to the expert community, a strong AI on par with humans could emerge anywhere from 15 to 100 years from now. The ends of that range reflect extreme optimism and extreme pessimism; a more realistic time frame is 30 to 50 years. Besides the risk of ending up with a dangerous AI that has no moral principles, many fear that today's economic, social and political turmoil may lead the creators of strong AI to treat it from the outset as a new weapon or as a means of pressure or competition. Unbridled capitalism, in the form of corporations, will play a significant role here. Big business always ruthlessly seeks to optimize and maximize its processes, and the temptation to overstep the limits for the sake of a competitive advantage will certainly arise. Some governments and corporations will certainly try to use strong AI to manage markets and elections and to develop new weapons, and other countries will have to respond in kind, drawing themselves into an AI arms race.

No one can claim that AI will necessarily become a threat to humanity. So there is no need to try to ban development in this area, and a ban would be pointless anyway. It seems that Asimov's laws of robotics alone will not be enough, and that “emotional” fuses will have to be built in by default to make robots peaceful and friendly, so that they can form something like friendship, recognize and understand people's emotions, and perhaps even empathize. This could be achieved, for example, by copying the neural structure of the human brain. Some experts believe it is theoretically possible to create algorithms and computational structures that act like our brain. But since we still do not know how the brain works, this is a long-term task.

There are already robots on the market that are technically capable of recognizing certain human emotions. For example, Nao, a robot only 58 cm tall and weighing 4.3 kg, is equipped with face recognition software. It can make eye contact and react when spoken to. This robot has been used in research on helping autistic children. Another model from the same manufacturer, Pepper, can recognize words, facial expressions and body language, and acts on the information it receives.

But none of today's robots can feel. For that, they need self-awareness; only then will robots be able to feel what people feel and to think about feelings.

We are getting better at imitating a person's appearance in robots, but the inner world is far more complicated.

To have empathy means being able to recognize in others the feelings that you yourself once experienced. For this, robots must go through their own period of growing up, with its successes and failures. They will have to experience affection, desire, success, love, anger, anxiety, fear, perhaps jealousy, so that a robot thinking about someone also thinks about that person's feelings.

How can robots be given this kind of growing up? Good question. It may be possible to create virtual emulators or simulators in which an AI gains emotional experience before being loaded into a physical body. Alternatively, artificial patterns or memories could be created, and robots could be mass-produced with a standard set of moral and ethical values. Science fiction writer Philip K. Dick foresaw such things: in his novels, robots often operate with artificial memories. But robot emotionality may have another side. The recent movie Ex Machina depicts a robot controlled by a strong AI that displays emotions so convincingly that it misleads people and gets them to follow its plan. The question is how to tell whether a robot is merely reacting cunningly to a situation or really experiencing the same emotions as you. Should we give it a holographic halo whose color and pattern confirm the sincerity of the emotions it expresses, as in the anime “Time of Eve” (EVE no Jikan)?

But suppose we manage to solve all the problems above and answer all the questions raised. Will robots then be our equals, or will they stand below us on the social ladder? Should people control robots' emotions? Or would that be a kind of high-tech slavery, in which robots with strong AI must think and feel the way we want?

There are quite a few difficult questions that have no answers yet. At the moment, our social, economic and political structures are not ready for such radical changes. Still, sooner or later we will have to find acceptable solutions, because no one can stop progress in robotics, and someday a strong AI capable of functioning in an anthropomorphic body will be created. We need to prepare for this in advance.