GPU Ray Tracing in Unity - Part 3

- Translation

[The first and second parts.]


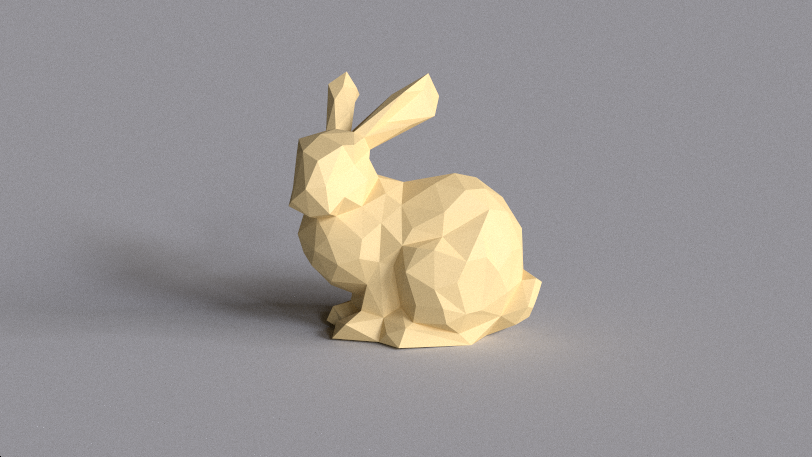


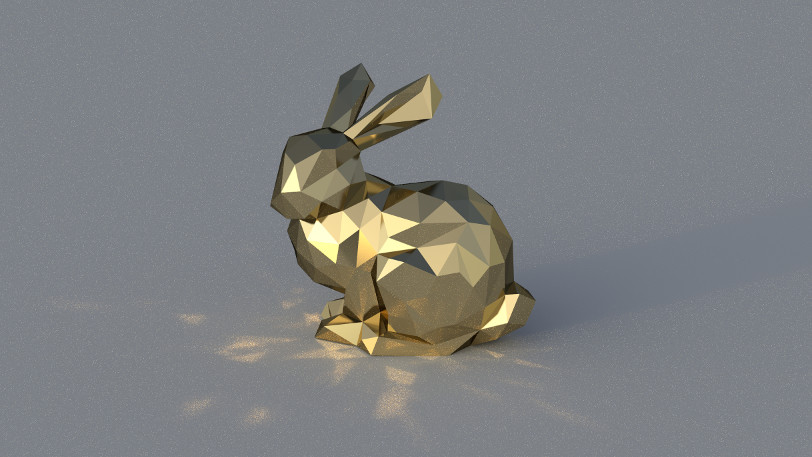

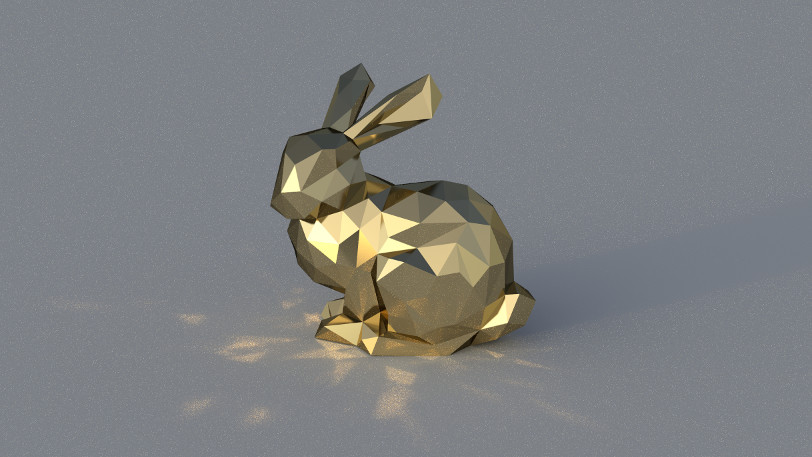


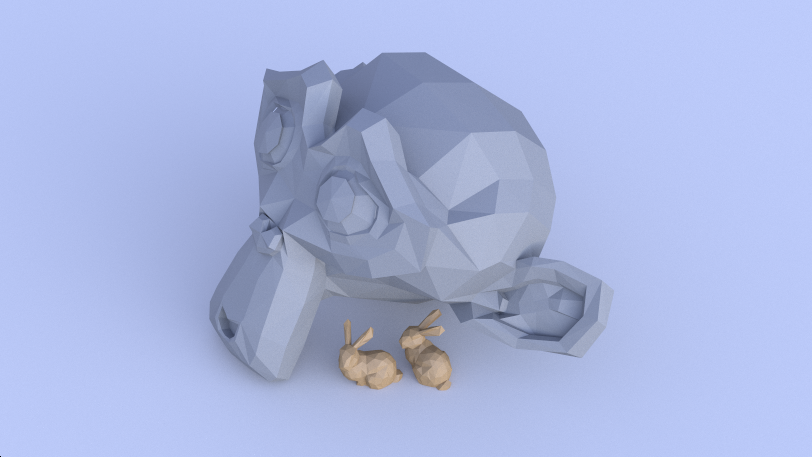

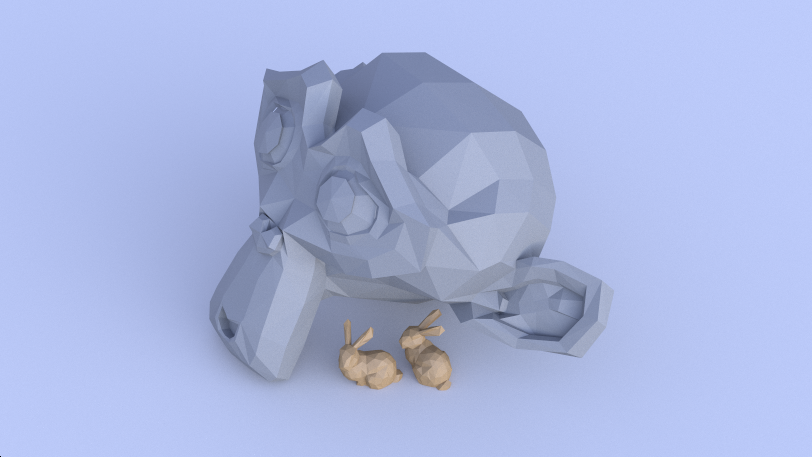


Today we will make a big leap. We will move beyond the spheres and the infinite plane that we have traced so far and add triangles: the essence of modern computer graphics, the element that all virtual worlds are made of. If you want to continue where we left off last time, use the code from part 2. The finished code for what we will do today is available here. Let's get started!

Triangles

A triangle is just a list of three connected vertices, each of which stores its position and sometimes a normal. The order in which the vertices are traversed, as seen from your point of view, determines whether you are looking at the front or the back side of the triangle. Traditionally, counterclockwise order is considered the front.
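Since the front/back distinction will be used for backface culling, here is a small standalone C++ sketch of how it can be detected (not Unity or shader code; Vec3 and TriangleSide are hypothetical helper names). The sign of det = edge1 · (dir × edge2), the same quantity the intersection code in this section computes, tells which side of the triangle a ray arrives from. It flips when the vertex order or the ray direction is reversed. Which side appears counterclockwise on screen also depends on the handedness of the coordinate system, so the sketch only reports the sign.

```cpp
#include <cassert>
#include <cmath>

// Minimal 3D vector type standing in for HLSL's float3.
struct Vec3 { float x, y, z; };

Vec3 Sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
float Dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 Cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}

// Returns +1 if det > 0 (the side the culling test keeps), -1 if det < 0
// (the side it rejects), and 0 if the ray is parallel to the triangle's plane.
int TriangleSide(Vec3 dir, Vec3 v0, Vec3 v1, Vec3 v2) {
    Vec3 edge1 = Sub(v1, v0);
    Vec3 edge2 = Sub(v2, v0);
    float det = Dot(edge1, Cross(dir, edge2));
    if (std::fabs(det) < 1e-8f) return 0;
    return det > 0.0f ? 1 : -1;
}
```

Reversing the order of two vertices turns a triangle that is seen from the front into one that is seen from the back, even though its position in space has not changed.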

First, we need to be able to determine whether a ray intersects a triangle, and if so, where. A very popular (but certainly not the only) ray-triangle intersection algorithm was proposed in 1997 by Tomas Akenine-Möller and Ben Trumbore. You can read more about it in their paper “Fast, Minimum Storage Ray-Triangle Intersection” here.
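The idea behind the algorithm fits in a short derivation, roughly as in the paper. The symbols were chosen here to mirror the shader variables that follow (edge1 = E₁, edge2 = E₂, tvec = T, pvec = P, qvec = Q). A point on the ray is set equal to a point on the triangle written in barycentric coordinates, and the resulting 3×3 linear system is solved with Cramer's rule:

$$\mathbf{O} + t\,\mathbf{D} = (1 - u - v)\,\mathbf{V}_0 + u\,\mathbf{V}_1 + v\,\mathbf{V}_2$$

With $\mathbf{E}_1 = \mathbf{V}_1 - \mathbf{V}_0$, $\mathbf{E}_2 = \mathbf{V}_2 - \mathbf{V}_0$ and $\mathbf{T} = \mathbf{O} - \mathbf{V}_0$, this becomes

$$\begin{bmatrix} -\mathbf{D} & \mathbf{E}_1 & \mathbf{E}_2 \end{bmatrix} \begin{bmatrix} t \\ u \\ v \end{bmatrix} = \mathbf{T}$$

and Cramer's rule with $\mathbf{P} = \mathbf{D} \times \mathbf{E}_2$ and $\mathbf{Q} = \mathbf{T} \times \mathbf{E}_1$ gives

$$\begin{bmatrix} t \\ u \\ v \end{bmatrix} = \frac{1}{\mathbf{P} \cdot \mathbf{E}_1} \begin{bmatrix} \mathbf{Q} \cdot \mathbf{E}_2 \\ \mathbf{P} \cdot \mathbf{T} \\ \mathbf{Q} \cdot \mathbf{D} \end{bmatrix}$$

A hit requires $u \ge 0$, $v \ge 0$ and $u + v \le 1$. The determinant $\mathbf{P} \cdot \mathbf{E}_1$ is close to zero when the ray is parallel to the triangle's plane.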

The code from the paper ports easily to HLSL:

```hlsl
static const float EPSILON = 1e-8;

bool IntersectTriangle_MT97(Ray ray, float3 vert0, float3 vert1, float3 vert2,
    inout float t, inout float u, inout float v)
{
    // find vectors for two edges sharing vert0
    float3 edge1 = vert1 - vert0;
    float3 edge2 = vert2 - vert0;

    // begin calculating determinant - also used to calculate U parameter
    float3 pvec = cross(ray.direction, edge2);

    // if determinant is near zero, ray lies in plane of triangle
    float det = dot(edge1, pvec);

    // use backface culling: reject back faces (negative det) and near-parallel rays
    if (det < EPSILON)
        return false;
    float inv_det = 1.0f / det;

    // calculate vector from vert0 to ray origin
    float3 tvec = ray.origin - vert0;

    // calculate U parameter and test bounds
    u = dot(tvec, pvec) * inv_det;
    if (u < 0.0 || u > 1.0f)
        return false;

    // prepare to test V parameter
    float3 qvec = cross(tvec, edge1);

    // calculate V parameter and test bounds
    v = dot(ray.direction, qvec) * inv_det;
    if (v < 0.0 || u + v > 1.0f)
        return false;

    // calculate t, ray intersects triangle
    t = dot(edge2, qvec) * inv_det;

    return true;
}
```

To use this function, we need a ray and the three vertices of a triangle. The return value tells us whether the triangle was hit. In the case of a hit, three additional values are calculated:

t is the distance along the ray to the intersection point, and u and v are two of the three barycentric coordinates that define where the intersection point lies on the triangle (the last one can be calculated as w = 1 - u - v). If you are not familiar with barycentric coordinates yet, read the excellent explanation on Scratchapixel. Without further ado, let's trace a single triangle with hard-coded vertices! Find the Trace function in the shader and add the following code fragment to it:

```hlsl
// Trace single triangle
float3 v0 = float3(-150, 0, -150);
float3 v1 = float3(150, 0, -150);
float3 v2 = float3(0, 150 * sqrt(2), -150);
float t, u, v;
if (IntersectTriangle_MT97(ray, v0, v1, v2, t, u, v))
{
    if (t > 0 && t < bestHit.distance)
    {
        bestHit.distance = t;
        bestHit.position = ray.origin + t * ray.direction;
        bestHit.normal = normalize(cross(v1 - v0, v2 - v0));
        bestHit.albedo = 0.00f;
        bestHit.specular = 0.65f * float3(1, 0.4f, 0.2f);
        bestHit.smoothness = 0.9f;
        bestHit.emission = 0.0f;
    }
}
```

As I said, t stores the distance along the ray, so we can use this value directly to calculate the intersection point. The normal, which is important for calculating correct reflections, can be obtained from the cross product of any two edges of the triangle. Enter Play Mode and admire your first traced triangle.

Exercise: Try to calculate the position using the barycentric coordinates instead of the distance. If you get it right, the shiny triangle will look exactly as before.
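To check the math outside of Unity, the HLSL function can be ported almost line by line to C++. This is a sketch for experimentation, not part of the tutorial's code. Vec3 and the helper functions are hypothetical stand-ins for HLSL's float3 and its intrinsics. BarycentricPoint is one possible answer to the exercise: it weights vert0 with w = 1 - u - v, vert1 with u and vert2 with v. For a hit, its result should agree with origin + t * direction.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 Add(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 Sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 Scale(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float Dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 Cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}

const float kEpsilon = 1e-8f;

// CPU port of IntersectTriangle_MT97, including the same backface culling.
bool IntersectTriangleMT97(Vec3 origin, Vec3 dir, Vec3 v0, Vec3 v1, Vec3 v2,
                           float& t, float& u, float& v) {
    Vec3 edge1 = Sub(v1, v0);
    Vec3 edge2 = Sub(v2, v0);
    Vec3 pvec = Cross(dir, edge2);
    float det = Dot(edge1, pvec);
    if (det < kEpsilon) return false;  // back face or (nearly) parallel ray
    float invDet = 1.0f / det;
    Vec3 tvec = Sub(origin, v0);
    u = Dot(tvec, pvec) * invDet;
    if (u < 0.0f || u > 1.0f) return false;
    Vec3 qvec = Cross(tvec, edge1);
    v = Dot(dir, qvec) * invDet;
    if (v < 0.0f || u + v > 1.0f) return false;
    t = Dot(edge2, qvec) * invDet;
    return true;
}

// Exercise: rebuild the hit point from the barycentric coordinates.
Vec3 BarycentricPoint(Vec3 v0, Vec3 v1, Vec3 v2, float u, float v) {
    float w = 1.0f - u - v;
    return Add(Add(Scale(v0, w), Scale(v1, u)), Scale(v2, v));
}
```

Take the tutorial's triangle and a ray travelling along -z from (0, 50, 0). Both ways of computing the hit point give (0, 50, -150). The same triangle approached along +z from behind is rejected by the culling test.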

Triangle Meshes

We have cleared the first hurdle, but tracing whole triangle meshes is a different story. First we need some basic information about meshes. If you are already familiar with them, you can safely skip the next paragraph.

In computer graphics, a mesh is defined by several buffers, the most important of which are the vertex buffer and the index buffer. The vertex buffer is a list of 3D vectors describing the position of each vertex in object space. This means these values do not need to change when the object is moved, rotated or scaled; they are transformed from object space to world space on the fly using matrix multiplication. The index buffer is a list of integers that point into the vertex buffer, and every three indices make up a triangle. For example, if the index buffer is [0, 1, 2, 0, 2, 3], it contains two triangles: the first consists of the first, second and third vertices in the vertex buffer, and the second consists of the first, third and fourth vertices. Therefore, the index buffer also defines the traversal order mentioned above. In addition to the vertex and index buffers, there may be further buffers that attach other information to each vertex. The most common ones store normals, texture coordinates (called texcoords or just UVs) and vertex colors.
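The buffer layout described above can be sketched in a few lines of standalone C++. The names are hypothetical, and the row-major 4×4 matrix is applied as M · (x, y, z, 1), matching the mul(matrix, vector) convention the shader uses later. The sketch walks the index buffer three entries at a time, looks up each corner in the vertex buffer and moves it from object space to world space. It assumes the bottom row of the matrix is (0, 0, 0, 1), so the resulting w is not computed.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

// Row-major 4x4 matrix, applied to column vectors: M * (x, y, z, w).
using Mat4 = std::array<std::array<float, 4>, 4>;

// Transforms a position (w = 1), so the translation column is applied.
Vec3 TransformPoint(const Mat4& m, Vec3 p) {
    float in[4] = {p.x, p.y, p.z, 1.0f};
    float out[3] = {0.0f, 0.0f, 0.0f};
    for (int r = 0; r < 3; ++r)
        for (int c = 0; c < 4; ++c) out[r] += m[r][c] * in[c];
    return {out[0], out[1], out[2]};
}

// Every three indices form one triangle; its corners are looked up in the
// object-space vertex buffer and transformed to world space.
std::vector<std::array<Vec3, 3>> BuildWorldTriangles(
        const std::vector<Vec3>& vertices, const std::vector<int>& indices,
        const Mat4& localToWorld) {
    std::vector<std::array<Vec3, 3>> triangles;
    for (std::size_t i = 0; i + 2 < indices.size(); i += 3) {
        std::array<Vec3, 3> tri = {{
            TransformPoint(localToWorld, vertices[indices[i]]),
            TransformPoint(localToWorld, vertices[indices[i + 1]]),
            TransformPoint(localToWorld, vertices[indices[i + 2]])}};
        triangles.push_back(tri);
    }
    return triangles;
}
```

The example uses the index buffer [0, 1, 2, 0, 2, 3] and the unit quad (0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0). It yields two triangles that share the first and third vertices. A translation of 5 along x moves every corner without touching the vertex buffer itself.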

Using GameObjects

First of all, we need to find out which GameObjects should become part of the ray tracing process. A naive solution would be to simply use FindObjectOfType(), but we will do something more flexible and faster. Let's add a new component, RayTracingObject:

```csharp
using UnityEngine;

[RequireComponent(typeof(MeshRenderer))]
[RequireComponent(typeof(MeshFilter))]
public class RayTracingObject : MonoBehaviour
{
    private void OnEnable()
    {
        RayTracingMaster.RegisterObject(this);
    }

    private void OnDisable()
    {
        RayTracingMaster.UnregisterObject(this);
    }
}
```

This component is added to each object that we want to use for ray tracing, and it registers the object with RayTracingMaster. Add the following functions to RayTracingMaster:

```csharp
// Requires "using System.Collections.Generic;" at the top of RayTracingMaster.cs
private static bool _meshObjectsNeedRebuilding = false;
private static List<RayTracingObject> _rayTracingObjects = new List<RayTracingObject>();

public static void RegisterObject(RayTracingObject obj)
{
    _rayTracingObjects.Add(obj);
    _meshObjectsNeedRebuilding = true;
}

public static void UnregisterObject(RayTracingObject obj)
{
    _rayTracingObjects.Remove(obj);
    _meshObjectsNeedRebuilding = true;
}
```

So far, so good: now we know which objects need to be traced. Then comes the hard part. We are going to collect all the data from the Unity meshes (matrix, vertex and index buffers; remember them?), write it into our own data structures and upload it to the GPU so that the shader can use it. Let's start by defining the data structures and buffers on the C# side, in RayTracingMaster:

```csharp
struct MeshObject
{
    public Matrix4x4 localToWorldMatrix;
    public int indices_offset;
    public int indices_count;
}

private static List<MeshObject> _meshObjects = new List<MeshObject>();
private static List<Vector3> _vertices = new List<Vector3>();
private static List<int> _indices = new List<int>();
private ComputeBuffer _meshObjectBuffer;
private ComputeBuffer _vertexBuffer;
private ComputeBuffer _indexBuffer;
```

... and now let's do the same in the shader. You're used to this by now, aren't you?

```hlsl
struct MeshObject
{
    float4x4 localToWorldMatrix;
    int indices_offset;
    int indices_count;
};

StructuredBuffer<MeshObject> _MeshObjects;
StructuredBuffer<float3> _Vertices;
StructuredBuffer<int> _Indices;
```

The data structures are ready, and we can fill them with real data. We collect all vertices of all meshes into one big List<Vector3>, and all indices into one big List<int>. The vertices pose no problem, but the indices have to be adjusted so that they keep pointing to the right vertex in our big buffer. Imagine that we have already added objects with 1000 vertices in total, and now we add a simple cube mesh. Its first triangle might consist of the indices [0, 1, 2]. Since the buffer already held 1000 vertices before we added the cube's vertices, we need to shift these indices by 1000, turning them into [1000, 1001, 1002]. Here's what that looks like in code:

```csharp
// Requires "using System.Linq;" (for Select) at the top of RayTracingMaster.cs
private void RebuildMeshObjectBuffers()
{
    if (!_meshObjectsNeedRebuilding)
    {
        return;
    }

    _meshObjectsNeedRebuilding = false;
    _currentSample = 0;

    // Clear all lists
    _meshObjects.Clear();
    _vertices.Clear();
    _indices.Clear();

    // Loop over all objects and gather their data
    foreach (RayTracingObject obj in _rayTracingObjects)
    {
        Mesh mesh = obj.GetComponent<MeshFilter>().sharedMesh;

        // Add vertex data
        int firstVertex = _vertices.Count;
        _vertices.AddRange(mesh.vertices);

        // Add index data - if the vertex buffer wasn't empty before, the
        // indices need to be offset
        int firstIndex = _indices.Count;
        var indices = mesh.GetIndices(0);
        _indices.AddRange(indices.Select(index => index + firstVertex));

        // Add the object itself
        _meshObjects.Add(new MeshObject()
        {
            localToWorldMatrix = obj.transform.localToWorldMatrix,
            indices_offset = firstIndex,
            indices_count = indices.Length
        });
    }

    // Strides in bytes: MeshObject = 16 floats (64) + 2 ints (8) = 72,
    // Vector3 = 3 floats = 12, int = 4
    CreateComputeBuffer(ref _meshObjectBuffer, _meshObjects, 72);
    CreateComputeBuffer(ref _vertexBuffer, _vertices, 12);
    CreateComputeBuffer(ref _indexBuffer, _indices, 4);
}
```

We will call RebuildMeshObjectBuffers in OnRenderImage, and we must not forget to release the new buffers in OnDisable. Here are the two helper functions I used in the code above to simplify buffer handling a bit:

```csharp
private static void CreateComputeBuffer<T>(ref ComputeBuffer buffer, List<T> data, int stride)
    where T : struct
{
    // Do we already have a compute buffer?
    if (buffer != null)
    {
        // If no data or buffer doesn't match the given criteria, release it
        if (data.Count == 0 || buffer.count != data.Count || buffer.stride != stride)
        {
            buffer.Release();
            buffer = null;
        }
    }

    if (data.Count != 0)
    {
        // If the buffer has been released or wasn't there to
        // begin with, create it
        if (buffer == null)
        {
            buffer = new ComputeBuffer(data.Count, stride);
        }

        // Set data on the buffer
        buffer.SetData(data);
    }
}

private void SetComputeBuffer(string name, ComputeBuffer buffer)
{
    if (buffer != null)
    {
        RayTracingShader.SetBuffer(0, name, buffer);
    }
}
```

Great, we have created the buffers and filled them with the necessary data! Now we just need to pass them to the shader. Add the following code to SetShaderParameters (thanks to the new helper function, we can also shorten the code for the sphere buffer):

```csharp
SetComputeBuffer("_Spheres", _sphereBuffer);
SetComputeBuffer("_MeshObjects", _meshObjectBuffer);
SetComputeBuffer("_Vertices", _vertexBuffer);
SetComputeBuffer("_Indices", _indexBuffer);
```

That was tedious work, but let's look at what we just did. We collected all the internal data of the meshes (matrix, vertices and indices) and put it into a simple, convenient structure. We then sent it to the GPU, which is now eagerly waiting to use it.
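The index shift and the byte strides passed to CreateComputeBuffer (72, 12 and 4) can be double-checked with a small standalone C++ sketch. It only mirrors the logic of RebuildMeshObjectBuffers. MeshData and MergeMeshes are hypothetical names, and the matrix is filled with an identity placeholder instead of a real transform.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Mirrors the layout of the C#/HLSL MeshObject: 16 floats, then two 32-bit ints.
struct MeshObject {
    float localToWorldMatrix[16];
    int32_t indices_offset;
    int32_t indices_count;
};
static_assert(sizeof(MeshObject) == 72, "stride used for _MeshObjects");
static_assert(3 * sizeof(float) == 12, "stride used for _Vertices (Vector3)");
static_assert(sizeof(int32_t) == 4, "stride used for _Indices");

struct Vec3 { float x, y, z; };

struct MeshData {  // hypothetical stand-in for one Unity mesh
    std::vector<Vec3> vertices;
    std::vector<int32_t> indices;
};

// Appends all meshes to shared lists; each mesh's indices are shifted by the
// number of vertices that were already in the shared vertex list.
void MergeMeshes(const std::vector<MeshData>& meshes, std::vector<Vec3>& vertices,
                 std::vector<int32_t>& indices, std::vector<MeshObject>& objects) {
    vertices.clear();
    indices.clear();
    objects.clear();
    for (const MeshData& mesh : meshes) {
        int32_t firstVertex = static_cast<int32_t>(vertices.size());
        int32_t firstIndex = static_cast<int32_t>(indices.size());
        vertices.insert(vertices.end(), mesh.vertices.begin(), mesh.vertices.end());
        for (int32_t index : mesh.indices) indices.push_back(index + firstVertex);

        MeshObject obj{};  // all fields zero-initialized
        for (int i = 0; i < 4; ++i) obj.localToWorldMatrix[i * 5] = 1.0f;  // identity placeholder
        obj.indices_offset = firstIndex;
        obj.indices_count = static_cast<int32_t>(mesh.indices.size());
        objects.push_back(obj);
    }
}
```

Suppose a first mesh with 1000 vertices is followed by a small second mesh. The second mesh's [0, 1, 2] comes out as [1000, 1001, 1002], and its indices_offset points at the first of its entries in the shared index list.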

Mesh tracing

Let's not keep it waiting. In the shader we already have the code for tracing a single triangle, and a mesh is really just a lot of triangles. The only new aspect is that we use a matrix to transform the vertices from object space to world space with the built-in function mul (short for multiply). The matrix contains the translation, rotation and scale of the object. It is 4×4, so for the multiplication we need a 4D vector. The first three components (x, y, z) are taken from the vertex buffer. We set the fourth component (w) to 1 because we are dealing with a point. If it were a direction, we would set it to 0, so that the translation in the matrix is ignored (rotation and scale still apply). Does this sound confusing? Then read this tutorial at least eight times. Here is the shader code:

```hlsl
void IntersectMeshObject(Ray ray, inout RayHit bestHit, MeshObject meshObject)
{
    uint offset = meshObject.indices_offset;
    uint count = offset + meshObject.indices_count;
    for (uint i = offset; i < count; i += 3)
    {
        // Transform the triangle's vertices from object space to world space (w = 1: points)
        float3 v0 = (mul(meshObject.localToWorldMatrix, float4(_Vertices[_Indices[i]], 1))).xyz;
        float3 v1 = (mul(meshObject.localToWorldMatrix, float4(_Vertices[_Indices[i + 1]], 1))).xyz;
        float3 v2 = (mul(meshObject.localToWorldMatrix, float4(_Vertices[_Indices[i + 2]], 1))).xyz;

        float t, u, v;
        if (IntersectTriangle_MT97(ray, v0, v1, v2, t, u, v))
        {
            if (t > 0 && t < bestHit.distance)
            {
                bestHit.distance = t;
                bestHit.position = ray.origin + t * ray.direction;
                bestHit.normal = normalize(cross(v1 - v0, v2 - v0));
                bestHit.albedo = 0.0f;
                bestHit.specular = 0.65f;
                bestHit.smoothness = 0.99f;
                bestHit.emission = 0.0f;
            }
        }
    }
}
```

We are just one step away from seeing it all in action. Let's restructure the Trace function a bit and add tracing of mesh objects:

```hlsl
RayHit Trace(Ray ray)
{
    RayHit bestHit = CreateRayHit();
    uint count, stride, i;

    // Trace ground plane
    IntersectGroundPlane(ray, bestHit);

    // Trace spheres
    _Spheres.GetDimensions(count, stride);
    for (i = 0; i < count; i++)
    {
        IntersectSphere(ray, bestHit, _Spheres[i]);
    }

    // Trace mesh objects
    _MeshObjects.GetDimensions(count, stride);
    for (i = 0; i < count; i++)
    {
        IntersectMeshObject(ray, bestHit, _MeshObjects[i]);
    }

    return bestHit;
}
```

Results

That's it! Let's add some simple meshes (Unity primitives are fine), give them a RayTracingObject component and watch the magic happen. Don't use detailed meshes (more than a few hundred triangles) yet! Our shader lacks optimization, and if you overdo it, tracing even a single sample per pixel can take seconds or even minutes. The operating system may then reset the GPU driver, Unity may crash, and you may have to restart your computer.
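Why is a few hundred triangles already risky? IntersectMeshObject tests every triangle for every ray, so the work grows linearly with the triangle count. The C++ sketch below gives a rough upper bound. The resolution and the number of rays per pixel (primary ray plus bounces) are hypothetical values for illustration, and early ray termination, the spheres and the ground plane are ignored.

```cpp
#include <cassert>
#include <cstdint>

// Upper bound on ray-triangle tests for one sample per pixel with brute-force
// tracing: every ray of every pixel is tested against every triangle.
std::uint64_t TriangleTestsPerSample(std::uint64_t width, std::uint64_t height,
                                     std::uint64_t raysPerPixel,
                                     std::uint64_t triangleCount) {
    return width * height * raysPerPixel * triangleCount;
}
```

For example, at 1920×1080 with up to 8 rays per pixel, a single Unity cube (12 triangles) already means about 199 million triangle tests per sample, and a 500-triangle mesh about 8.3 billion. This is the cost that the spatial acceleration structures mentioned at the end of this part are meant to cut down.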

Note that our meshes have flat rather than smooth shading. Since we have not yet loaded the vertex normals into a buffer, we have to compute each triangle's normal with a cross product, and we cannot interpolate normals across the surface of the triangle. We will deal with this problem in the next part of the tutorial.

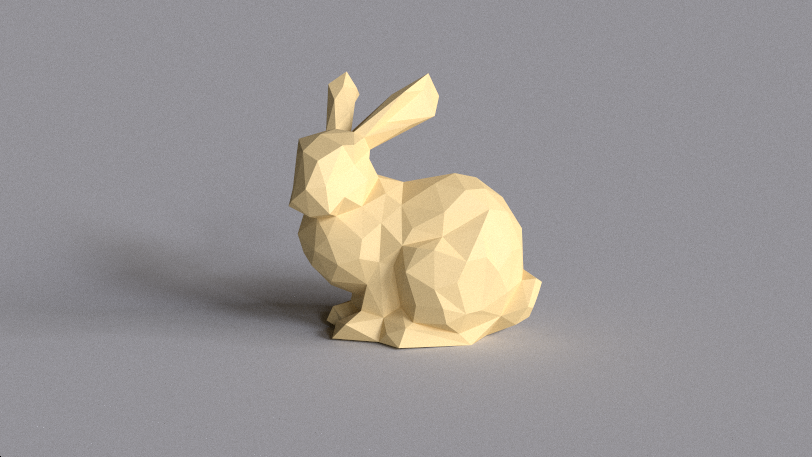
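The difference between what the shader does now and what smooth shading needs can be sketched in standalone C++ (hypothetical helper names). FaceNormal mirrors the cross product used for bestHit.normal and ignores u and v, which is why every triangle looks faceted. InterpolatedNormal shows one common way to get smooth shading once per-vertex normals are available: blend them with the barycentric weights. It is an illustrative assumption, not necessarily how the next part implements it.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 Sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 Cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
Vec3 Normalize(Vec3 a) {
    float len = std::sqrt(a.x * a.x + a.y * a.y + a.z * a.z);
    return {a.x / len, a.y / len, a.z / len};
}

// Flat shading: one normal per triangle, identical for every hit point on it.
Vec3 FaceNormal(Vec3 v0, Vec3 v1, Vec3 v2) {
    return Normalize(Cross(Sub(v1, v0), Sub(v2, v0)));
}

// Smooth shading (needs per-vertex normals, which are not uploaded yet):
// weights follow the MT97 convention, w for vertex 0, u for vertex 1, v for vertex 2.
Vec3 InterpolatedNormal(Vec3 n0, Vec3 n1, Vec3 n2, float u, float v) {
    float w = 1.0f - u - v;
    return Normalize({w * n0.x + u * n1.x + v * n2.x,
                      w * n0.y + u * n1.y + v * n2.y,
                      w * n0.z + u * n1.z + v * n2.z});
}
```

At a corner (u = v = 0), the interpolated normal equals that corner's vertex normal, and in between it varies continuously. The face normal, by contrast, jumps from one triangle to the next.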

Just for fun, I downloaded the Stanford Bunny from Morgan McGuire's archive and reduced it to 431 vertices with Blender's Decimate modifier. You can experiment with the lighting settings and the hard-coded material in the shader function IntersectMeshObject. Here's a dielectric bunny with beautiful soft shadows and a little diffuse global illumination in Graffiti Shelter:

... and here's a metallic bunny under the strong directional light of Cape Hill, casting disco-like highlights onto the floor plane:

... and here are two little bunnies hiding under a big stone Suzanne beneath the blue sky of Kiara 9 Dusk (I hard-coded an alternative material for the second object by checking whether the index offset is zero):

What's next?

It's great to see a real mesh in your own ray tracer for the first time, isn't it? Today we processed some data, learned about ray-triangle intersection with the Möller-Trumbore algorithm, and set everything up so that you can use Unity GameObjects right away. We also saw one of the advantages of ray tracing: as soon as you add a new intersection routine, all the beautiful effects (soft shadows, specular and diffuse global illumination, and so on) just work.

Rendering the shiny bunny took a long time, and I still had to apply a little filtering to remove the most obvious noise. To speed things up, scenes are usually organized in a spatial acceleration structure, such as a grid, a k-d tree or a bounding volume hierarchy, which makes rendering large scenes considerably faster.

But first things first: next we will fix the normals so that our meshes (even low-poly ones) look smoother than they do now. It would also be nice to update the matrices automatically when objects move, and to reference Unity materials directly instead of hard-coding them in the shader. That is what we will do in the next part of this tutorial series. Thanks for reading, and see you in part 4!