EXAM - State-of-the-art text classification method

- Transfer

Text classification is one of the most common tasks in NLP and teacher training, when datasets contain text documents, and tags are used to train a text classifier.

From the NLP point of view, the text classification problem is solved by learning to represent words at the word level using word embedding and then learning to represent the text level used as a function for classification.

The type of coding-based methods ignores fine details and keys for classification (since the general representation at the text level is studied by compressing the representations at the word level).

Methods for classifying text with text-level matching based on coding

EXAM - new text classification method

Researchers from Shandong University and the National University of Singapore have proposed a new text classification model , which includes word-level matching signals in the text classification task. Their method uses an interaction mechanism to introduce detailed word-level clues into the classification process.

To solve the problem of including more accurate word-level matching signals, the researchers proposed to explicitly calculate the correspondence estimates between words and classes .

The basic idea is to calculate the interaction matrix from a word-level view that will carry the corresponding keys at the word level. Each entry in this matrix is an assessment of the correspondence between a word and a specific class.

The proposed text classification structure, called EXAM - EXplicit interAction Model ( GitHub ), contains three main components:

- word level encoder

- interaction layer and

- aggregation layer.

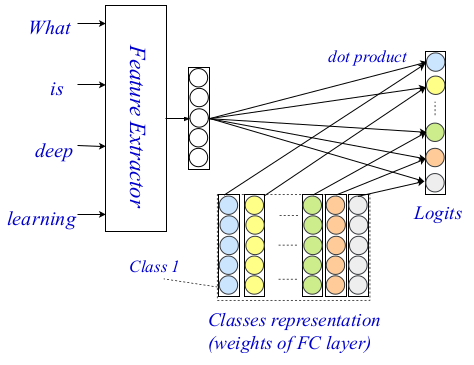

This three-tier architecture allows you to encode and classify text using both small and generalized signals and hints. The entire architecture is shown in the image below.

EXAM Architecture

In the past, word level coders were extensively researched in the NLP community, and very powerful coders appeared. The authors use the advanced method as an encoder at the word level, and in their work they describe in detail the other two components of their architecture: the level of interaction and aggregation.

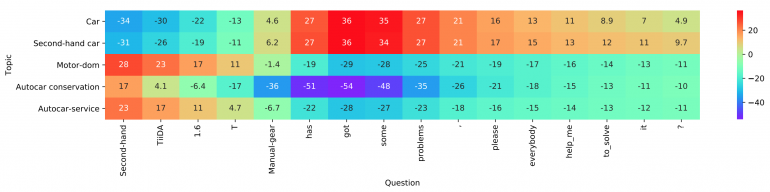

The interaction layer, the main contribution and the novelty of the proposed method are based on the well-known interaction mechanism. Researchers use a student representation matrix to encode each of the classes.to later be able to calculate inter-class interaction scores. The final scores are put using the dot product as a function of the interaction between the target word and each class. More complex functions were not considered due to the increase in computational complexity.

Visualization of the layer operation.

Finally, they use a simple fully connected two-layer MLP as an aggregation layer. They also mention that a more complex level of aggregation can be used here, including CNN or LSTM. MLP is used to calculate the final classification logits using the interaction matrix and word level encodings. Cross-entropy is used as a loss function for optimization.

Ratings

To evaluate the proposed text classification framework, the researchers conducted extensive experiments both in multi-class and multi-tag conditions. They show that their method is far superior to modern relevant modern methods.

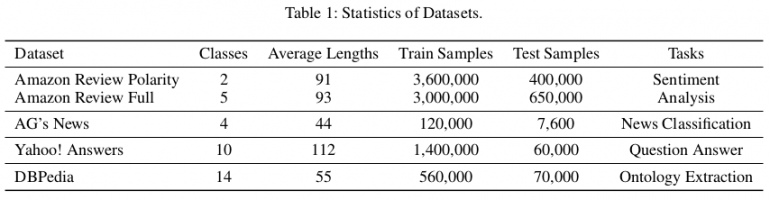

Statistics of datasets used for evaluation

For the evaluation they establish three different basic types of models:

- Models based on feature development;

- Deep character based models;

- Deep word-based patterns.

The authors used publicly available reference data sets (Zhang, Zhao and LeCun 2015) to evaluate the proposed method. In total there are six sets of classification textual data corresponding to the tasks of analyzing moods, classifying news, questions and answers, and extracting ontologies, respectively. In the article, they show that EXAM achieves the best performance among the three data sets: AG, Yah. A. and dad. Evaluation and comparison with other methods can be seen in the tables below.

![Test Set Accuracy [%] on multi-class document classification tasks](https://habrastorage.org/getpro/habr/post_images/de7/8d8/b60/de78d8b6017c9624c347b0fb645ae0ae.png)

findings

This work is an important contribution to the field of natural language processing (NLP). This is the first work that introduces more accurate word-level matching hints into the text classification in the deep neural network model. The proposed model provides state-of-the-art metrics for multiple data sets.

Translation - Stanislav Litvinov