How to stay in the TOP when search algorithms change (a guide for beginner SEO specialists)

After every search engine algorithm update, some SEO specialists declare it a failure. There are more than 200 site ranking factors, so it is reasonable to expect a large number of possible updates. In this article, we describe how search algorithms work, why they change constantly, and how to respond to the changes.

They are based on formulas that determine the position of the site in the search results for a specific request. They are aimed at selecting web pages that most closely match the phrase entered by the user. At the same time, they try not to take into account irrelevant sites and those that use various types of spam.

Search engines look at the texts of the site, in particular, the presence of keywords in them. Content is one of the most important factors when deciding on a site’s ranking. Yandex and Google have different algorithms, and therefore the results of search results for the same phrase may differ. Nevertheless, the basic ranking rules laid down in the algorithm are the same for them. For example, they both follow the originality of the content.

Initially, when few sites were registered on the Internet, search engines took into account a very small number of parameters: headings, keywords, text size, etc. But soon the owners of web resources began to actively use unscrupulous methods of promotion. This made search engines develop algorithms in the direction of spam tracking. As a result, now it is often spammers with their ever new methods that dictate the development trends of search algorithms.

The main difficulty in SEO-optimization of the site is that Yandex and Google do not talk about the principles of their work. Thus, we have only an approximate idea of the parameters that they take into account. This information is based on the findings of SEO experts who are constantly trying to determine what affects the ranking of sites.

For example, it became known that search engines determine the most visited pages on a site and calculate the time that people spend on them. The systems assume that if the user has been on the page for a long time, then the published information is useful to him.

Google algorithms were the first to take into account the number of links on other resources leading to a particular site. The innovation made it possible to use the natural development of the Internet to determine the quality of sites and their relevance. This was one of the reasons why Google has become the most popular search engine in the world.

Then he began to take into account the relevance of information and its relation to a specific location. Since 2001, the system has learned to distinguish information sites from selling sites. Then she began to attach more importance to links posted on better and more popular resources.

In 2003, Google began to pay attention to the too frequent use of key phrases in texts. This innovation significantly complicated the work of SEO-optimizers and forced them to look for new methods of promotion.

Soon, Google began to take into account the quality of ranked sites.

Yandex talks more about how its algorithms work than Google. Since 2007, he began to publish information about the changes in his blog.

For example, in 2008, a company reported that a search engine began to understand abbreviations and learned to translate words in a query. Then Yandex started working with foreign resources, as a result of which it became more difficult for Russian-language sites to occupy high positions on requests with foreign words.

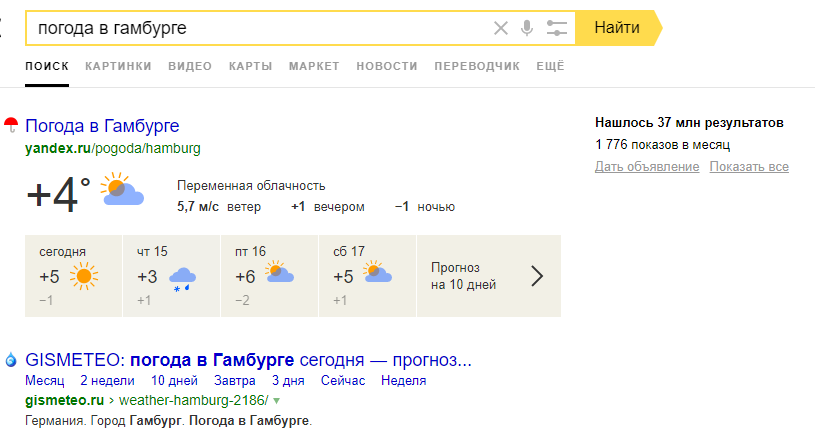

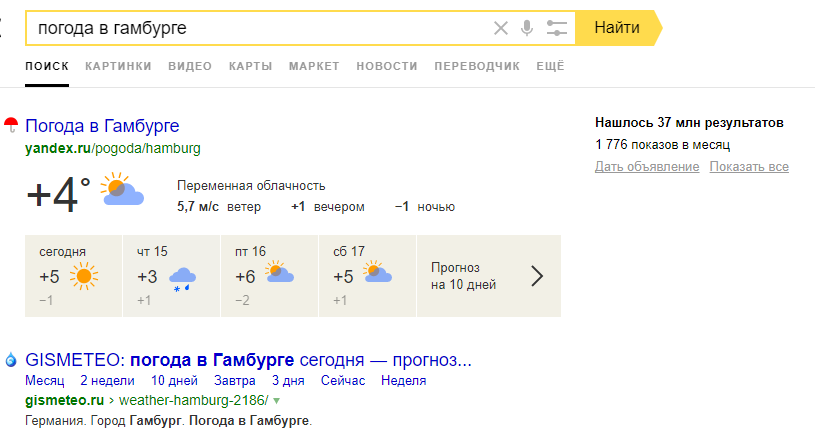

In the same year, users began to receive answers to some queries directly on the results pages. For example, to find out the weather, it was not necessary to go to the site.

Yandex drew attention to the originality of the texts.

Further, the search engine began to better process queries with negative keywords, to understand when the user made a grammatical error. In addition, SEO-specialists concluded that Yandex began to give preference to older sites, raising their ranking.

In most cases, when we talk about updates, we mean changes in the main search engine algorithm. It consists of traditional ranking factors, as well as algorithms designed to track and remove spam.

Therefore, when a change in the algorithm occurs, and this happens almost daily, the search engine can make many adjustments at once. And they can seriously affect the ranking of your site on the issuance page.

Google updates are designed to help users. However, this does not mean that they help site owners.

There are two types of updates. Some of them relate to UX, that is, the convenience of using the site: ads, pop-ups, download speed, etc. This includes, for example, updates on spamming and evaluating the usability of the mobile version of the site.

Other updates are needed to evaluate the site’s content: how valuable it is to the user. Poor quality content has the following symptoms:

We can say that often updates relate to the quality of content, because problems with the perception of information are closely related to its content.

Requirements for sites are quite extensive, which causes dissatisfaction with SEO-optimizers. Some believe that Google defends the power of its brand and is biased towards small sites.

To get started, determine if they contribute to raising or lowering your rating. If the rating fell, it could not have happened like this: it means something is done wrong.

Many algorithms are aimed at tracking spam. And yet it is possible that the site is losing ground without doing anything wrong. Then the reason usually consists in changing the selection criteria for sites for TOP. In other words, instead of downgrading the site for some errors, the algorithm can promote other resources that do something better.

Therefore, you need to constantly analyze why sites bypass you. For example, if a user enters an information request, and your site is commercial, then the page with information content will be higher than your site.

Here are some reasons why competitors can overtake you:

In general, don’t worry if your site is still on the first page of search results. But if he was on the second or further, this indicates the presence of serious problems. Sometimes the reason is the SEO changes you made recently.

In other cases, a downgrade may be due to the introduction of a new ranking factor. It is important to know the difference between innovations that contribute to the promotion of other pages, and innovations that lead to the loss of position of your site. Your SEO strategy may need to be updated.

Are search algorithms wrong?

Yes, sometimes the algorithm does not work correctly. But this happens very rarely.

Algorithm errors are usually visible in long searches. Examine their results to better understand the innovations in the algorithm. A mistake can be considered cases when the page with the results clearly does not match the query.

Of course, at first you will come up with the most obvious explanation, but it is not always true. If you decide that the search engine prefers certain brands or the problem lies solely in the UX site, then dig a little deeper. You may find a better explanation.

It is not so easy to understand the principles of operation of algorithms, but to track their changes you need to be constantly included in the information field. However, not every company has an SEO specialist, often the task of promotion in search engines is given to freelance. Then you need to configure SEO so that the position of the site remains consistently high regardless of the innovations of search engines.

Here are some ways to do this.

1. Properly use the promotion using links.

The main goal of the innovations of Yandex and Google is protection against spam. For example, before SEO-algorithms raised higher sites to which there were most of links on other web pages. As a result, this type of spam appeared as commenting on blogs. Fake recordings usually begin with a ridiculous stamped greeting like “What a wonderful and informative blog you have” and end with a link to a page whose contents are in no way related to this blog.

As a result, search engines began to actively introduce ranking factors to take into account this type of spam. Links to your site are still important now, but clearly unnatural comments can hurt your SEO promotion.

2. Interact with the audience.

Communication with potential buyers will not only help your SEO, but also directly increase sales. Interaction refers to advertising, PR, SMM and other ways to “hook” users on the Internet.

The natural dissemination of information about your brand leads to an increase in links and links to your site. Thus, using different methods of promotion, you win twice: in terms of SEO and direct sales.

3. Clearly define your SEO goals.

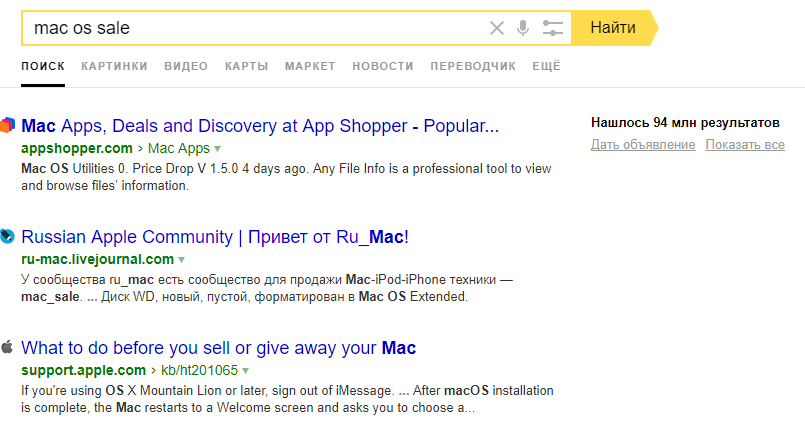

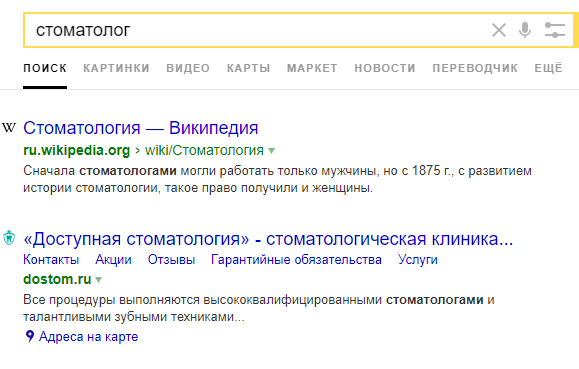

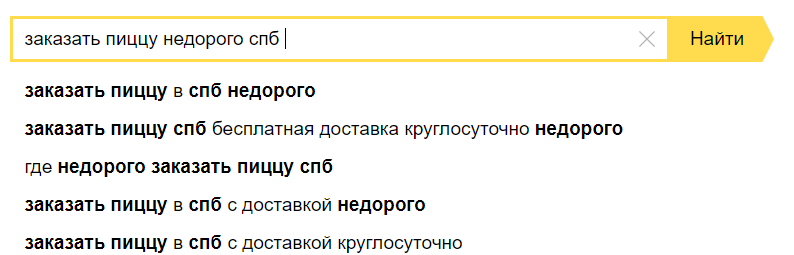

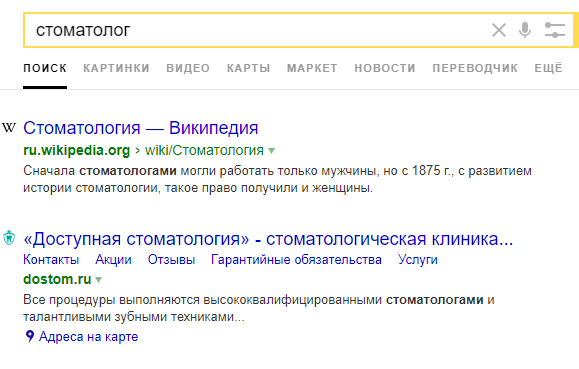

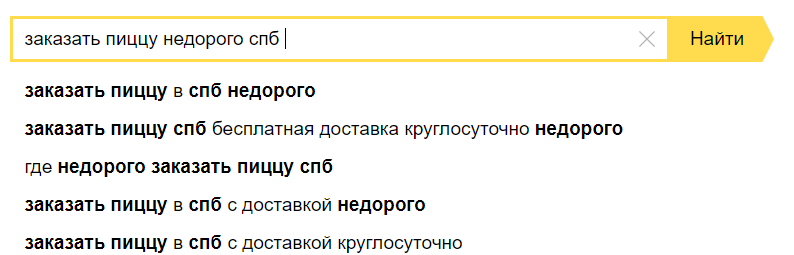

Often, company representatives believe that search engine promotion is necessary in order to occupy high positions on one-word queries, such as “lawyer”, “dentistry”, “sushi”. However, it is not. More and more often, users enter long search phrases, for example: “an inexpensive coffee shop in Samara”. In addition, search engines give hints that can make the final query even longer.

It is important to occupy high positions precisely on such narrow low- and mid-frequency queries.

The basic principle of working with SEO, which is not affected by changes in the algorithms, is to focus not on increasing the number of clicks to the site, but on attracting real customers. That is why the development of SEO should not be reduced to just buttons and texts written specifically for algorithms. Of course, you cannot do without this, but such elements are meaningless if they do not lead buyers.

SEO-optimizers should not only select key phrases for each individual page of the site and take into account the hints that the search engine offers users, but also understand the competitive advantages of the company, which must be used when designing and filling the site.

One of the popular mistakes made by SEO-specialists is the selection of keywords manually, without using special programs. As a result, they miss some valuable requests and, at the same time, leave them ineffective. This, of course, still improves the position of the site, but not significantly.

The use of automated services can increase the efficiency and speed of collection of the semantic kernel. With their help, the formation process does not take more than two minutes. The optimizer can only make sure that all requests are suitable for the company's goals.

Such programs include, for example, SEMrush and Key Collector. These services are paid - access to them is provided for a period of a month.

The second common mistake is the site structure inconvenient for the user. For example, there may be insufficient filters, tags, and subcategories. This affects the usability of the product search. When the process is too complicated for the user, then your potential client will most likely go to another site.

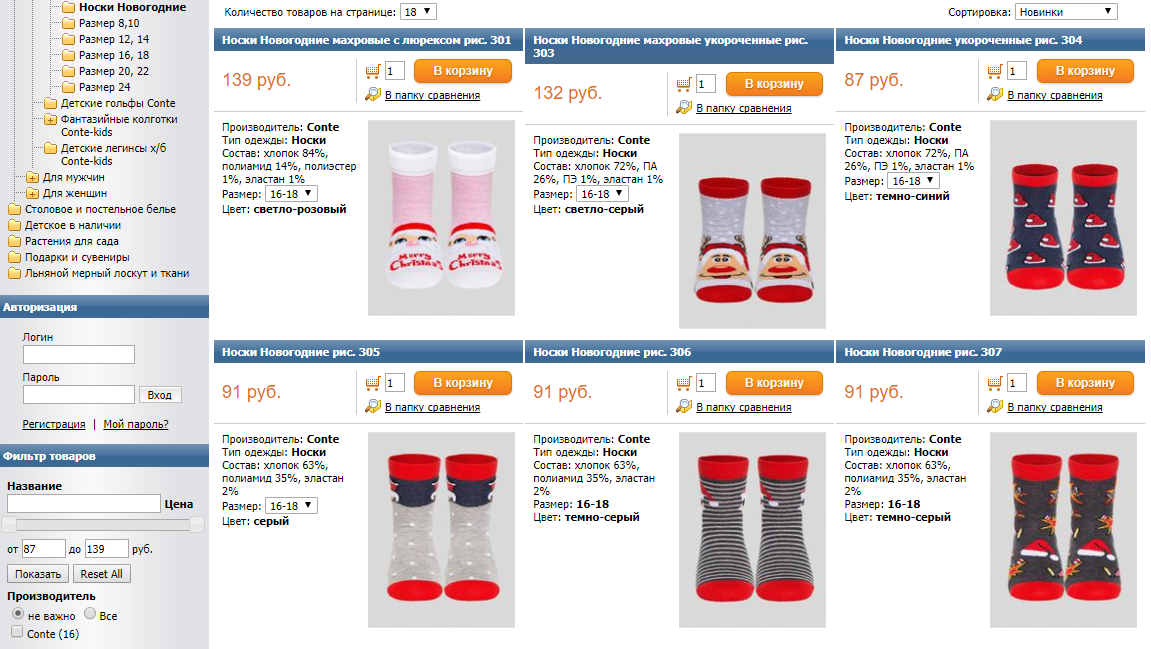

So, if you have two hundred pairs of socks in your online store, then you should not add all of them to the same category “Socks” without the ability to filter. No need to force users to scroll your entire assortment for a long time. It will be much more convenient for them to have a separate page for highly specialized queries. For example, the search phrase “New Year socks size 36” should match a page with several matching pairs.

Many sites already use this technique, and users are used to finding the most detailed and convenient answer to their request. Now people quickly understand when the site structure is poorly thought out.

Although it seems that search engines, daily changing their algorithms, are trying to do everything to complicate the lives of SEO-specialists, this is not so. The changes, for the most part, are explained by the fight against spam, which means they help sites that are promoted using honest methods. Innovations are also aimed at improving the ranking of quality sites, which is an excellent reason for you to become better.

Regardless of the changes, the algorithms are always based on the same principles. Therefore, having tuned SEO well once, you can almost forget about constant innovations.

What are search algorithms?

Search algorithms are based on formulas that determine a site’s position in the search results for a specific query. They aim to select the web pages that most closely match the phrase the user entered, while filtering out irrelevant sites and sites that use various kinds of spam.

Search engines analyze a site’s texts, in particular the presence of keywords in them. Content is one of the most important factors in deciding a site’s ranking. Yandex and Google use different algorithms, so the results for the same phrase may differ. Nevertheless, the basic ranking rules built into the algorithms are the same. For example, both check the originality of content.

In the early days, when there were few sites on the Internet, search engines took only a handful of parameters into account: headings, keywords, text length, and so on. But site owners soon began to make active use of unscrupulous promotion methods, which pushed search engines to develop their algorithms toward spam detection. As a result, it is now often spammers, with their ever-changing methods, who dictate how search algorithms evolve.

The main difficulty in SEO is that Yandex and Google do not fully disclose how they work. We therefore have only an approximate idea of the parameters they take into account. This information comes from the findings of SEO experts, who constantly try to determine what affects site rankings.

For example, it has emerged that search engines identify the most visited pages on a site and measure the time people spend on them. The systems assume that if a user stays on a page for a long time, the information published there is useful to them.

Comparison of Google and Yandex algorithms

Google’s algorithms were the first to take into account the number of links from other resources pointing to a particular site. This innovation made it possible to use the natural growth of the Internet to judge the quality and relevance of sites, and it was one of the reasons Google became the most popular search engine in the world.

Google then began to take into account the relevance of information and its relation to a specific location. Since 2001, the system has been able to distinguish informational sites from commercial ones. It then began to give more weight to links placed on higher-quality and more popular resources.

In 2003, Google began to pay attention to the excessive use of key phrases in texts. This innovation made the work of SEO specialists considerably harder and forced them to look for new promotion methods.
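
To make "too frequent use of key phrases" concrete, here is a minimal sketch that measures what share of a text's words belongs to one key phrase. The function name `keyword_density` and the sample text are hypothetical, and search engines do not publish the measure or threshold they actually use, so this is only a rough self-check for your own texts.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Return the share of words in `text` covered by occurrences of `phrase` (0.0 to 1.0)."""
    words = re.findall(r"\w+", text.lower())
    target = re.findall(r"\w+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Count every position where the phrase's words appear consecutively.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)


# Hypothetical sample text, written to be obviously over-optimized.
sample = "Buy socks online. Cheap socks, warm socks, best socks for winter."
density = keyword_density(sample, "socks")
print(f"Keyword share: {density:.0%}")
```

A text where one phrase takes up a large share of the words, as in this sample, is the kind of page this change was aimed at.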

Soon afterwards, Google also began to take into account the quality of the sites it ranked.

Yandex says more about how its algorithms work than Google does. Since 2007, it has published information about changes on its blog.

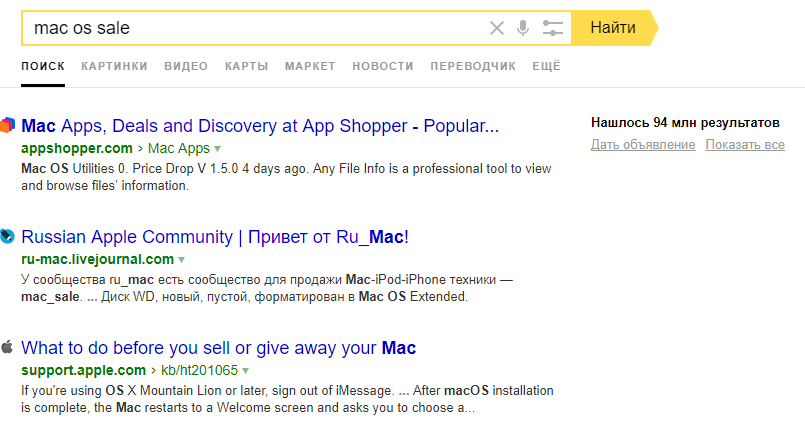

For example, in 2008 the company reported that its search engine had begun to understand abbreviations and had learned to translate words in a query. Yandex then started working with foreign resources, which made it harder for Russian-language sites to rank highly for queries containing foreign words.

In the same year, users began to receive answers to some queries directly on the results page. For example, finding out the weather no longer required visiting a site.

Yandex also began to pay attention to the originality of texts.

Later, the search engine learned to process queries with negative keywords better and to recognize when the user had made a grammatical error. In addition, SEO specialists concluded that Yandex had begun to favor older sites by raising their rankings.

Types of changes in algorithms

In most cases, when we talk about updates, we mean changes to the search engine’s main algorithm. It consists of traditional ranking factors as well as algorithms designed to detect and remove spam.

So when the algorithm changes, which happens almost daily, the search engine may make many adjustments at once, and these can seriously affect your site’s position on the results page.

Google’s updates are designed to help users. However, that does not mean they help site owners.

There are two types of updates. Some relate to UX, that is, how convenient the site is to use: ads, pop-ups, loading speed, and so on. These include, for example, updates that target spam and updates that evaluate the usability of a site’s mobile version.

Other updates evaluate a site’s content, that is, how valuable it is to the user. Poor-quality content has the following signs:

- The same text is repeated several times on the page.

- It does not help the user find an answer to the query.

- It misleads visitors into buying a product.

- It often contains false information.

- It is not reliable.

- It has no clear structure, with no division into sections and paragraphs.

- It does not match the interests of the site’s target audience.

- It has low uniqueness.

Updates often concern content quality, because problems with how information is perceived are closely tied to the content itself.

The requirements for sites are quite extensive, which causes dissatisfaction among SEO specialists. Some believe that Google defends the power of its brand and is biased against small sites.

How to respond to search engine updates?

To begin, determine whether an update has raised or lowered your ranking. A drop in ranking does not happen by accident: it usually means something has been done wrong.

Many algorithms are aimed at detecting spam. Still, a site can lose ground without doing anything wrong. In that case, the cause is usually a change in the criteria for selecting sites for the TOP. In other words, instead of demoting a site for its errors, the algorithm may promote other resources that do something better.

You therefore need to keep analyzing why other sites outrank you. For example, if a user enters an informational query and your site is commercial, a page with informational content will rank above yours.

Here are some reasons why competitors may overtake you:

- A more accurate match to the user’s query.

- A large number of links to the page from other resources.

- A recent update to the page that has made it more relevant and valuable.

In general, there is no need to worry if your site is still on the first page of search results. But if it has ended up on the second page or lower, this points to serious problems. Sometimes the cause is an SEO change you made recently.

In other cases, a drop may be caused by the introduction of a new ranking factor. It is important to distinguish between innovations that promote other pages and innovations that cause your own site to lose positions. Your SEO strategy may need updating.
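
The first step described above, checking whether an update moved you up or down and whether you are still on the first page, can be sketched as a small comparison of positions before and after an update. This is only an illustration: the function name, the sample queries and positions, and the assumption of 10 results per page are all hypothetical, and the position data would have to come from whatever rank-tracking tool you use.

```python
def classify_position_changes(before: dict, after: dict, page_size: int = 10) -> dict:
    """Group queries by how their position changed (1 is the top result)."""
    report = {"improved": [], "dropped_on_first_page": [], "dropped_beyond_first_page": []}
    for query, old in before.items():
        new = after.get(query)
        if new is None or new == old:
            continue  # no data after the update, or no change
        if new < old:
            report["improved"].append(query)
        elif new <= page_size:
            report["dropped_on_first_page"].append(query)
        else:
            report["dropped_beyond_first_page"].append(query)
    return report


# Hypothetical positions before and after an update.
before = {"buy socks": 3, "warm socks": 5, "new year socks": 8, "socks size 36": 2}
after = {"buy socks": 1, "warm socks": 7, "new year socks": 14, "socks size 36": 2}

report = classify_position_changes(before, after)
print(report)
```

Queries in the last group are the ones the article treats as a sign of serious problems, so they are the first to investigate.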

Can search algorithms be wrong?

Yes, sometimes an algorithm does not work correctly, but this happens very rarely.

Algorithm errors usually show up in long queries. Examine the results for such queries to better understand what has changed in the algorithm. A case where the results page clearly does not match the query can be considered an error.

Of course, the most obvious explanation will come to mind first, but it is not always the right one. If you have decided that the search engine prefers certain brands or that the problem lies solely in the site’s UX, dig a little deeper. You may find a better explanation.

How to avoid depending on algorithm changes?

Understanding how algorithms work is not easy, and tracking their changes means constantly following industry news. However, not every company has an in-house SEO specialist, and search engine promotion is often handed to freelancers. In that case, SEO needs to be set up so that the site’s position stays consistently high regardless of search engine innovations.

Here are some ways to do this.

1. Use link building properly.

The main goal of Yandex’s and Google’s innovations is protection against spam. For example, algorithms used to rank higher the sites that had the most links from other web pages. This gave rise to a type of spam in the form of blog comments. Fake comments usually begin with an absurd boilerplate greeting such as “What a wonderful and informative blog you have” and end with a link to a page whose content has nothing to do with the blog.

In response, search engines began to actively introduce ranking factors that account for this type of spam. Links to your site still matter, but clearly unnatural comments can hurt your SEO.

2. Interact with the audience.

Communicating with potential buyers will not only help your SEO but also directly increase sales. Interaction here means advertising, PR, SMM, and other ways to “hook” users on the Internet.

The natural spread of information about your brand leads to more mentions of your site and more links to it. So by using different promotion methods, you win twice: in SEO and in direct sales.

3. Clearly define your SEO goals.

Company representatives often believe that search engine promotion is needed to rank highly for one-word queries such as “lawyer”, “dentistry”, or “sushi”. However, this is not the case. Users increasingly enter long search phrases, for example “an inexpensive coffee shop in Samara”. In addition, search engines offer suggestions that can make the final query even longer.

It is precisely for such narrow low- and mid-frequency queries that it is important to rank highly.
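
As a rough illustration of picking out low- and mid-frequency queries, the sketch below sorts queries into tiers by monthly search volume. The thresholds (10,000 and 1,000 searches per month) and the volume figures are made up for the example, not taken from the article or from real statistics; in practice the boundaries depend on the niche and on the data your keyword tool provides.

```python
def frequency_tier(monthly_searches: int, high: int = 10_000, mid: int = 1_000) -> str:
    """Classify a query as high-, mid-, or low-frequency using assumed thresholds."""
    if monthly_searches >= high:
        return "high"
    if monthly_searches >= mid:
        return "mid"
    return "low"


# Made-up volumes for illustration only, not real search statistics.
example_queries = {
    "sushi": 250_000,
    "sushi delivery samara": 4_200,
    "an inexpensive coffee shop in samara": 320,
}

# Keep the narrow queries the article recommends targeting.
target_queries = [
    query for query, volume in example_queries.items()
    if frequency_tier(volume) in ("mid", "low")
]
print(target_queries)
```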

Common SEO mistakes

The basic principle of SEO, which algorithm changes do not affect, is to focus not on increasing the number of clicks to the site but on attracting real customers. That is why SEO work should not be reduced to buttons and texts written specifically for algorithms. Of course, you cannot do without them, but such elements are pointless if they do not bring in buyers.

SEO specialists should not only select key phrases for each page of the site and take into account the suggestions the search engine offers users, but also understand the company’s competitive advantages, which should be reflected in the site’s design and content.

One common mistake SEO specialists make is selecting keywords manually, without specialized tools. As a result, they miss some valuable queries while keeping ineffective ones. This still improves the site’s position, of course, but not significantly.

Automated services can make collecting the semantic core faster and more efficient. With their help, building it takes no more than two minutes. All that remains for the specialist is to make sure that every query suits the company’s goals.

Such tools include, for example, SEMrush and Key Collector. These services are paid; access to them is provided for a period of one month.

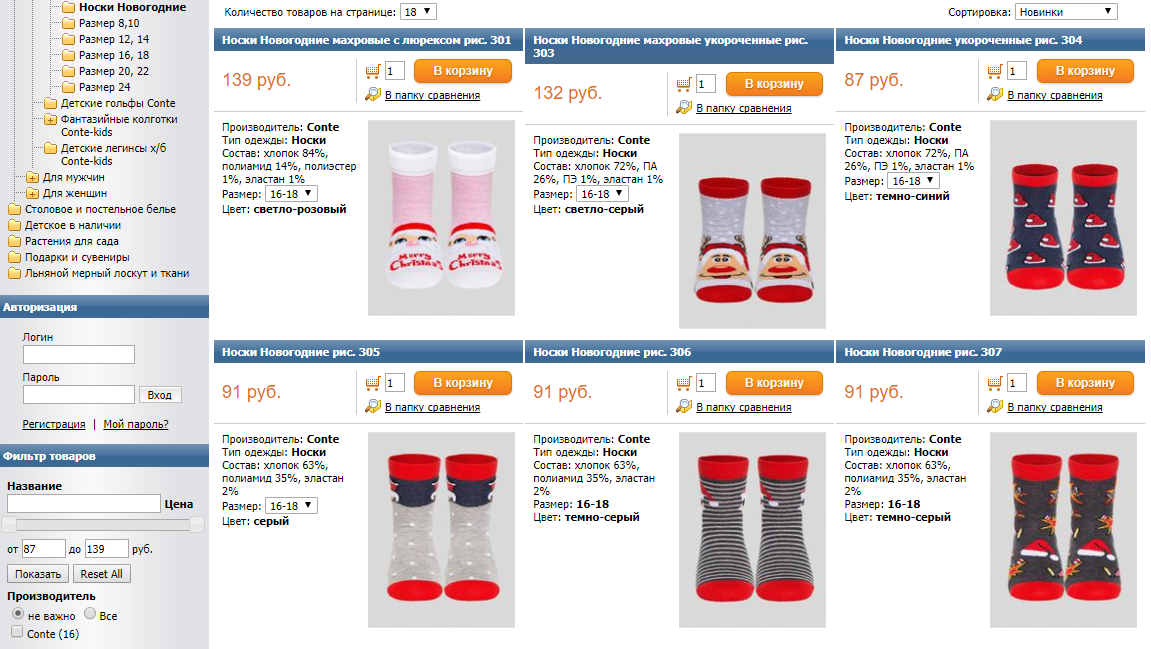

The second common mistake is a site structure that is inconvenient for the user. For example, there may not be enough filters, tags, and subcategories, which makes searching for products harder. When the process is too complicated, your potential customer will most likely go to another site.

So, if your online store has two hundred pairs of socks, you should not put all of them in a single “Socks” category without any way to filter. There is no need to make users scroll through your entire assortment. It is much more convenient for them to have a separate page for highly specific queries. For example, the search phrase “New Year socks size 36” should lead to a page with a few matching pairs.

Many sites already use this technique, and users have become used to finding the most detailed and convenient answer to their query. People now quickly notice when a site’s structure is poorly thought out.
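
The filtering idea from the socks example can be sketched in a few lines. The `Sock` class, its `theme` and `size` attributes, and the tiny catalog are hypothetical stand-ins for a real product database; the point is only that each combination of filter values defines a small, specific result set that can back its own landing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sock:
    name: str
    theme: str
    size: int


def filter_products(products, **facets):
    """Keep only products whose attributes equal every requested facet value."""
    return [
        product for product in products
        if all(getattr(product, key) == value for key, value in facets.items())
    ]


# Hypothetical catalog; a real store would load this from its database.
catalog = [
    Sock("Reindeer", "new-year", 36),
    Sock("Snowflake", "new-year", 40),
    Sock("Plain black", "classic", 36),
]

# Products for a page answering the query "New Year socks size 36".
matches = filter_products(catalog, theme="new-year", size=36)
print([sock.name for sock in matches])
```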

Conclusions

Although it may seem that search engines, which change their algorithms daily, are doing everything they can to complicate the lives of SEO specialists, this is not so. The changes are mostly explained by the fight against spam, which means they help sites that are promoted by honest methods. Innovations are also aimed at raising the rankings of quality sites, which is an excellent reason for you to improve.

Regardless of the changes, the algorithms always rest on the same principles. So once you have set up your SEO well, you can almost forget about the constant innovations.