NetApp ONTAP & VMware vVOL

In this article, I look at the internal design and architecture of vVol technology as implemented in NetApp storage systems running ONTAP.

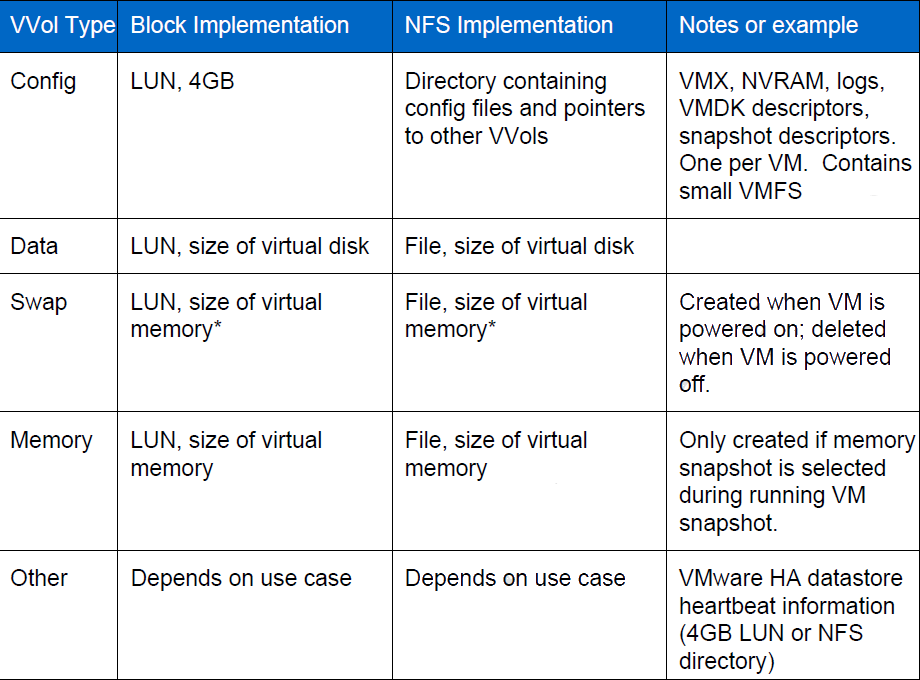

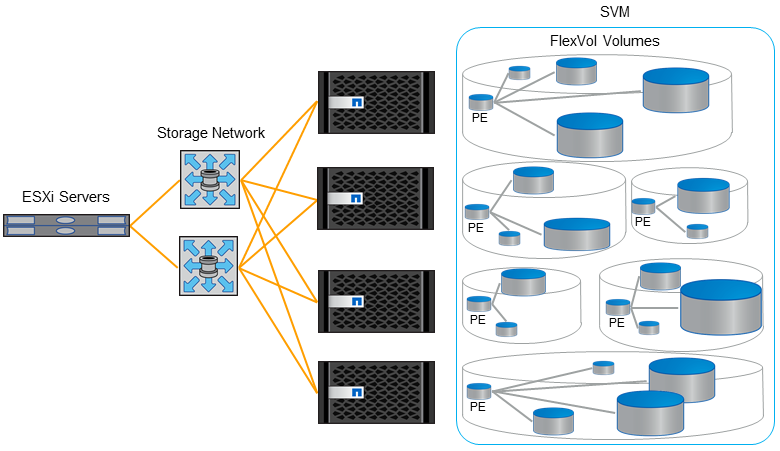

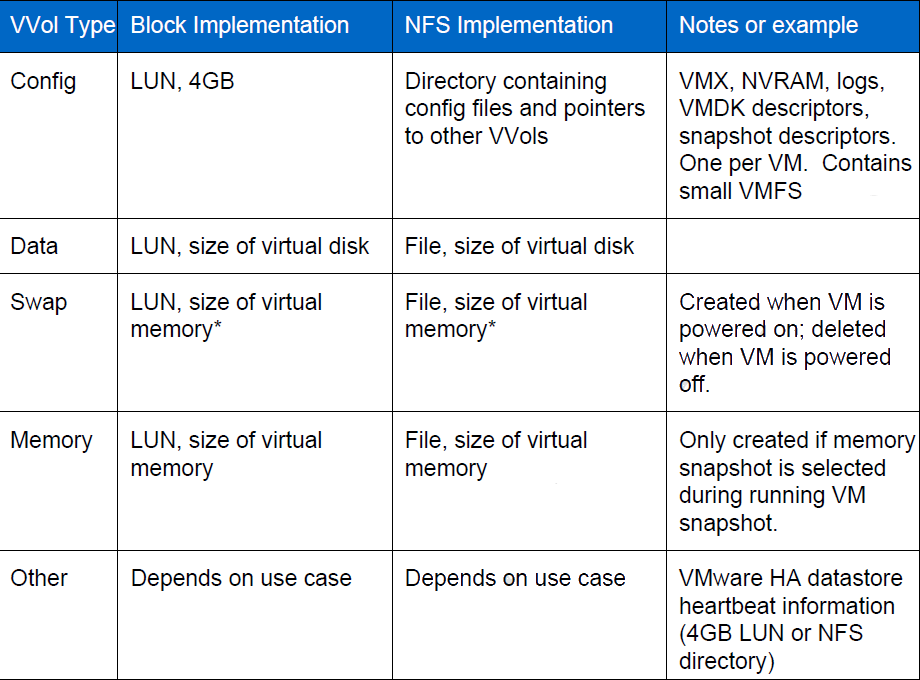

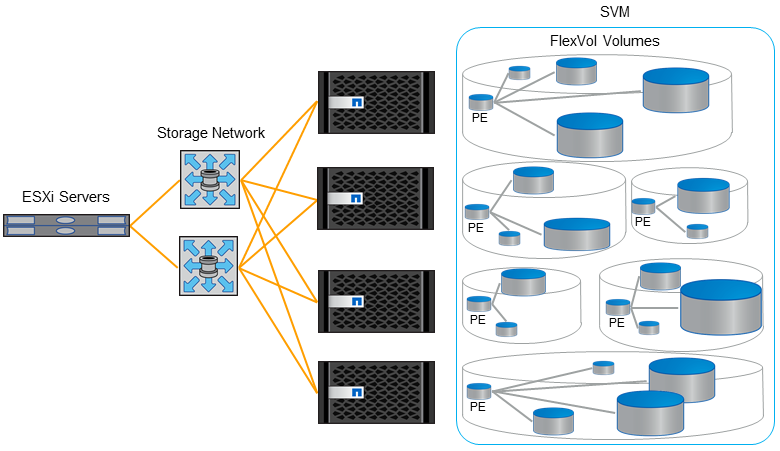

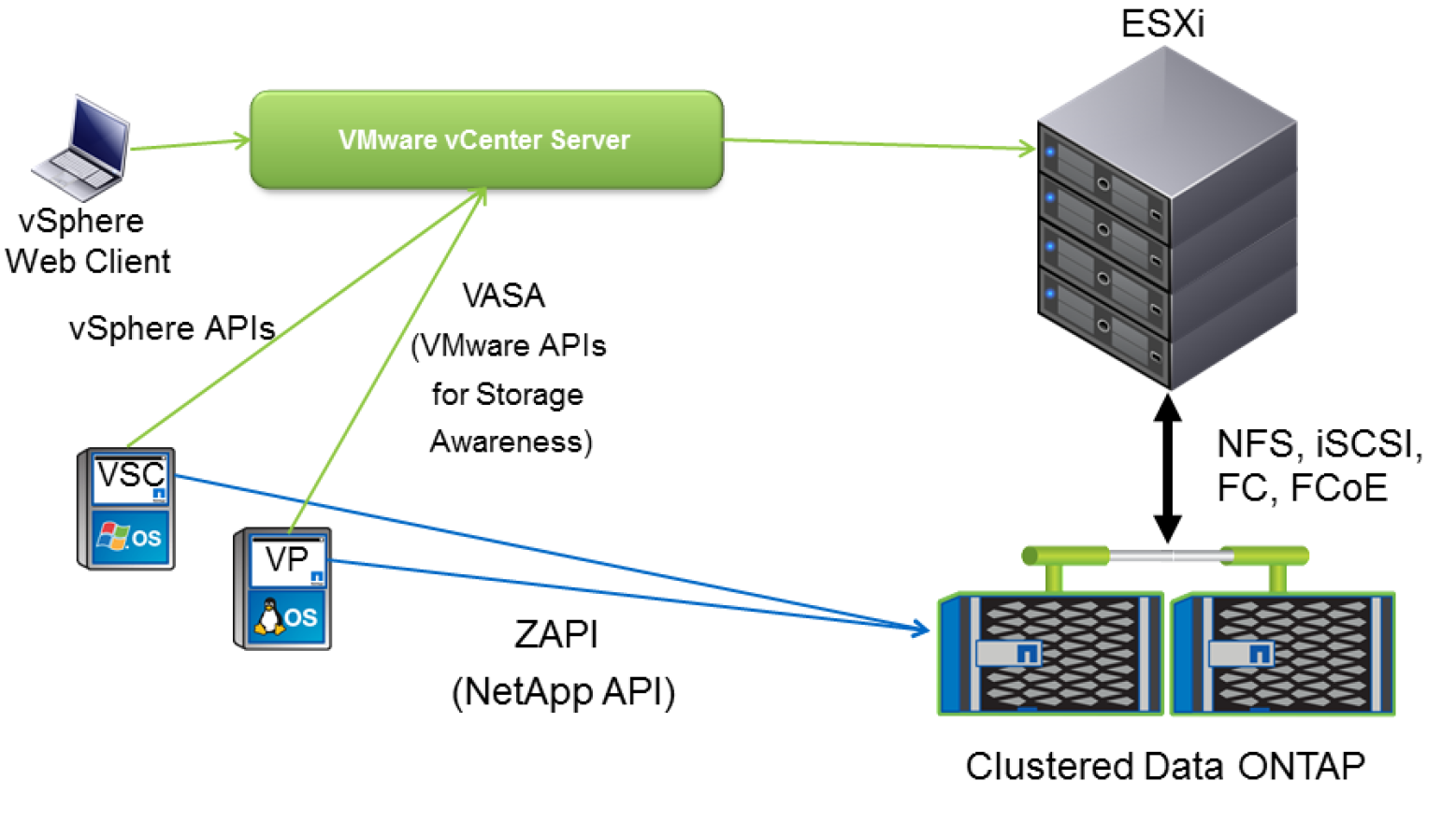

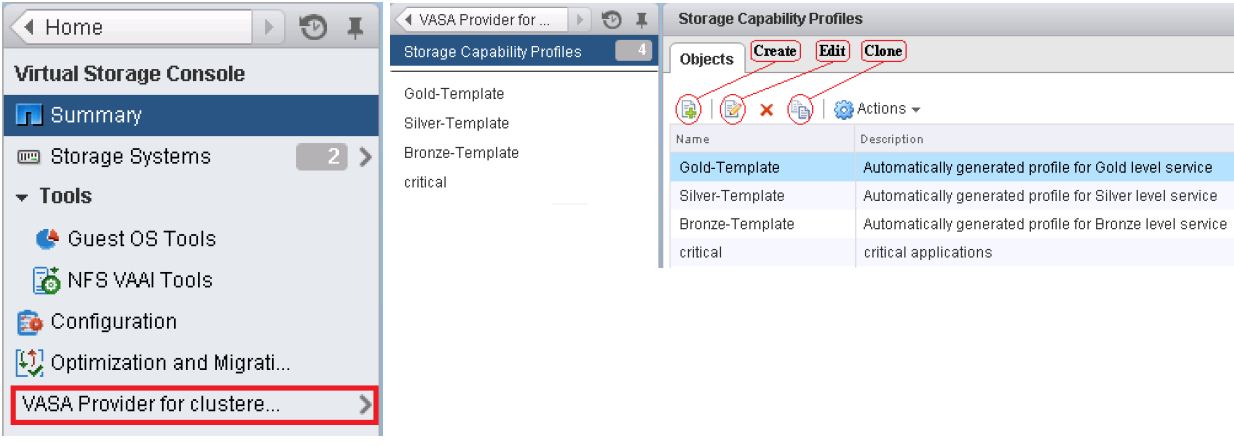

Why did this technology appear, what is it for, and why will it be in demand in modern data centers? I will try to answer these and other questions in this article.

vVol technology introduces storage profiles (policies): when a VM is created, its virtual disks are created with the characteristics the profile specifies. In such an environment, the virtualization administrator can quickly check, directly in vCenter, which virtual machines live on which media and see their storage-side characteristics: the media type, whether caching is enabled, whether the disk is replicated for DR, and whether compression, deduplication, encryption and so on are configured. With vVol, storage becomes simply a pool of resources for vCenter, and the two are integrated far more deeply than before.

Snapshots

The cost of consolidating VMware snapshots and the extra load they put on the disk subsystem may go unnoticed in small virtual infrastructures, but even those can feel the impact as slower virtual machines or as a failure to consolidate (delete) a snapshot. vVol supports hardware QoS for individual VM virtual disks, as well as hardware-assisted replication and NetApp snapshots, which are architecturally different from VMware COW snapshots and do not affect performance. Who uses VMware snapshots, and why does this matter? The fact is that practically every backup scheme in a virtualized environment is architecturally forced to use VMware COW snapshots in order to get consistent data.

vVol

What is vVol in general? vVol is an abstraction layer over the datastore, that is, a datastore virtualization technology. Previously, a datastore lived on a LUN (SAN) or a file share (NAS), and as a rule each datastore hosted several virtual machines. vVol makes the datastore more granular by effectively creating a separate datastore for each virtual machine disk. On the one hand, vVol unifies NAS and SAN access for the virtualization environment: the administrator no longer cares whether LUNs or files sit underneath. On the other hand, each virtual disk of a VM can live on a different volume, LUN or disk pool (aggregate), with different caching and QoS settings, on a different controller, and with its own policy that follows the disk. With a traditional SAN, all the storage system could "see" and manage was the datastore as a whole, not each individual virtual disk. With vVol, each disk of a new virtual machine can be created according to its own policy. Each vVol policy, in turn, selects free space from the part of the available storage resource pool that meets the conditions defined in the policy.

This makes it possible to use the resources and capabilities of the storage system more efficiently and conveniently. Each virtual machine disk sits on its own dedicated virtual datastore, much like an RDM LUN. At the same time, sharing one common space is no longer a problem with vVol, because hardware snapshots and clones are taken per virtual disk rather than per datastore, and VMware COW snapshots are not used. Because the storage system can now "see" each virtual disk separately, storage capabilities (snapshots, for example) can be delegated to vSphere, which gives deeper integration and makes disk resources more transparent to the virtualization layer.

VMware started developing vVol in 2012 and demonstrated the technology in a preview two years later. At the same time, NetApp announced vVol support in its ONTAP storage systems over all protocols supported by VMware: NAS (NFS) and SAN (iSCSI, FCP).
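The policy-driven placement described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Pool` and `Policy` classes, their attributes, and the "most free space wins" tie-break are hypothetical and do not reflect how the VASA Provider actually evaluates policies.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass(frozen=True)
class Pool:
    """A storage resource pool, e.g. a FlexVol on some aggregate (hypothetical model)."""
    name: str
    media: str        # illustrative values: "ssd" or "hdd"
    dedup: bool
    replicated: bool
    free_gb: int


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-disk policy; None means "don't care"."""
    media: Optional[str] = None
    dedup: Optional[bool] = None
    replicated: Optional[bool] = None


def matches(pool: Pool, policy: Policy) -> bool:
    # Every condition that is set in the policy must equal the pool's attribute.
    return all(
        want is None or getattr(pool, attr) == want
        for attr, want in asdict(policy).items()
    )


def place_disk(pools, policy: Policy, size_gb: int) -> Pool:
    # Keep only pools that satisfy the policy and have enough free space.
    candidates = [p for p in pools if matches(p, policy) and p.free_gb >= size_gb]
    if not candidates:
        raise LookupError("no pool satisfies the policy")
    # Assumed tie-break: prefer the pool with the most free space.
    return max(candidates, key=lambda p: p.free_gb)


pools = [
    Pool("vol_ssd", "ssd", True, False, 500),
    Pool("vol_hdd_dr", "hdd", False, True, 2000),
    Pool("vol_ssd_dr", "ssd", True, True, 300),
]
# Each disk of the same VM can be placed under a different policy.
os_disk = place_disk(pools, Policy(media="ssd"), 100)
data_disk = place_disk(pools, Policy(replicated=True), 400)
```

The point of the sketch is that the policy is attached to each virtual disk, not to the datastore, so two disks of one VM can end up in different pools.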

Protocol endpoints

Let's move from the abstract description to specifics. vVols reside on a FlexVol; for NAS they are files and for SAN they are LUNs. Each VMDK disk lives on its own dedicated vVol. When a vVol moves to another storage node, it is transparently rebound to a PE on the new node. The hypervisor can trigger a snapshot separately for each disk of a virtual machine. A snapshot of a virtual disk is a full copy of it, that is, another vVol. Even a new virtual machine without snapshots consists of several vVols, which come in several types depending on their purpose.

Now for the PE (Protocol Endpoint). A PE is the entry point to vVols, a kind of proxy. Both PEs and vVols reside on the storage system. For NAS, each data LIF with its IP address on a storage port is such an entry point. For SAN, it is a special 4 MB LUN.

A PE is created at the request of the VASA Provider (VP). vVols are attached to a PE through a Binding request from the VP to the storage; the most common trigger for such a request is starting a VM.

ESXi always connects to a PE, never directly to a vVol. For SAN, this sidesteps the limit of 255 LUNs per ESXi host and the limit on the number of paths to a LUN. All vVols of a virtual machine must use a single protocol: NFS, iSCSI or FCP. All PEs have LUN IDs of 300 and above.

Despite small differences, NAS and SAN are arranged very similarly as far as vVol in a virtualized environment is concerned.

    cDOT::*> lun bind show -instance

                Vserver: netapp-vVol-test-svm
                PE MSID: 2147484885
            PE Vdisk ID: 800004d5000000000000000000000063036ed591
              vVol MSID: 2147484951
          vVol Vdisk ID: 800005170000000000000000000000601849f224
      Protocol Endpoint: /vol/ds01/vVolPE-1410312812730
                PE UUID: d75eb255-2d20-4026-81e8-39e4ace3cbdb
                PE Node: esxi-01
                   vVol: /vol/vVol31/naa.600a098044314f6c332443726e6e4534.vmdk
              vVol Node: esxi-01
              vVol UUID: 22a5d22a-a2bd-4239-a447-cb506936ccd0
          Secondary LUN: d2378d000000
        Optimal binding: true
        Reference Count: 2
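If you need to check bindings from a script, output in this "Field: value" shape is easy to turn into a dictionary. The sketch below is a minimal text parser written against the sample output above only; the function name is hypothetical, and it assumes a single record with one field per line. It is not a NetApp API.

```python
def parse_instance_output(text: str) -> dict:
    """Parse 'Field: value' lines into a dict, ignoring the prompt and blank lines."""
    record = {}
    for line in text.splitlines():
        # Split on the first ": " only; the prompt line has no colon followed by a space.
        key, sep, value = line.partition(": ")
        if sep:
            record[key.strip()] = value.strip()
    return record


# A shortened copy of the output shown in the article.
SAMPLE = """\
cDOT::*> lun bind show -instance
Vserver: netapp-vVol-test-svm
Protocol Endpoint: /vol/ds01/vVolPE-1410312812730
vVol: /vol/vVol31/naa.600a098044314f6c332443726e6e4534.vmdk
Optimal binding: true
Reference Count: 2
"""

binding = parse_instance_output(SAMPLE)
is_optimal = binding["Optimal binding"] == "true"
```

The record ties one vVol to the PE through which ESXi reaches it, which is exactly the relationship the Binding request establishes.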

iGroup & Export Policy

iGroups and export policies are mechanisms for hiding information on the storage system from hosts, that is, for showing each host only what it needs to see. For both NAS and SAN, LUN mapping and file share export are performed automatically by the VP. An iGroup is mapped not to every vVol but only to the PE, since ESXi uses the PE as a proxy. For NFS, the export policy is applied to the file share automatically. iGroups and export policies are created and populated automatically on request from vCenter.

SAN & IP SAN

For iSCSI, each storage node needs at least one data LIF connected to the same network as the corresponding VMkernel port on the ESXi host. iSCSI and NFS LIFs must be separate, but they can coexist on the same IP network and the same VLAN. For FCP, each storage node needs at least one data LIF per fabric; in practice this usually means two data LIFs per storage node, each on its own target port. Use soft zoning on the FC switches.

NAS (NFS)

For NFS, each storage node needs at least one data LIF with an IP address in the same subnet as the corresponding VMkernel port on the ESXi host. The same IP address cannot be used for iSCSI and NFS at once, but the two can coexist on the same VLAN, the same subnet, and the same physical Ethernet port.
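The LIF rules for iSCSI and NFS lend themselves to a simple pre-flight check. The sketch below is an assumption-laden illustration: the `Lif` record and `check_lifs` function are hypothetical, the LIF inventory is typed in by hand rather than read from ONTAP, and only two rules are checked (one LIF per node for the protocol, and no IP address shared between iSCSI and NFS).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lif:
    """Hypothetical description of a data LIF."""
    node: str
    protocol: str   # "iscsi" or "nfs"
    ip: str


def check_lifs(lifs, nodes, protocol):
    """Return a list of human-readable problems; an empty list means the rules hold."""
    problems = []
    # Rule 1: every storage node needs at least one data LIF for the protocol.
    covered = {lif.node for lif in lifs if lif.protocol == protocol}
    for node in nodes:
        if node not in covered:
            problems.append(f"{node}: no {protocol} data LIF")
    # Rule 2: iSCSI and NFS must not share an IP address.
    iscsi_ips = {lif.ip for lif in lifs if lif.protocol == "iscsi"}
    nfs_ips = {lif.ip for lif in lifs if lif.protocol == "nfs"}
    for ip in sorted(iscsi_ips & nfs_ips):
        problems.append(f"{ip}: shared by iSCSI and NFS LIFs")
    return problems


lifs = [
    Lif("node1", "iscsi", "10.0.0.11"),
    Lif("node1", "nfs", "10.0.0.11"),   # deliberately wrong: same IP as the iSCSI LIF
    Lif("node2", "nfs", "10.0.0.22"),
]
problems = check_lifs(lifs, ["node1", "node2"], "iscsi")
```

Subnet and VLAN membership are left out on purpose, since the text allows both protocols to share them.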

VASA Provider

The VP is an intermediary between vCenter and the storage system: it explains to the storage what vCenter wants from it and, in the other direction, reports important alerts and the storage resources that are physically available to vCenter. In other words, vCenter now knows how much free space there really is, which is especially convenient when the storage system presents thin LUNs to the hypervisor.

The VP is not a single point of failure in the sense that, if it fails, running virtual machines keep working normally. However, it will not be possible to create or edit policies or virtual machines on vVol, or to start or stop a VM on vVol. In other words, after a complete restart of the entire infrastructure the virtual machines will not be able to start, because the VP cannot issue the Binding request that tells the storage to bind vVols to a PE. It is therefore clearly desirable to make the VP redundant. For the same reason, the VP virtual machine must not be placed on a vVol that it manages. Why only "desirable"? Because, starting with NetApp VP 6.2, the VP can rebuild its vVol information simply by reading the metadata from the storage itself. Read more about configuration and integration in the VASA Provider and VSC documentation.

Disaster recovery

The VP supports disaster recovery: if the VP is deleted or damaged, the vVol environment database can be restored. Metadata about the vVol environment is stored in two places: in the VP database and alongside the vVol objects themselves on ONTAP. To restore the VP, it is enough to unregister the old VP, deploy a new VP, register ONTAP in it, run the vp dr_recoverdb command there, connect it to vCenter, and then run "Rescan Storage Provider" in vCenter. More details are in the KB.

The DR functionality of the VP will make it possible to replicate vVols with SnapMirror hardware replication and to recover at the DR site once vVol supports storage replication (VASA 3.0).

Virtual Storage Console

VSC is a plug-in that brings storage management into the vCenter GUI, including management of VP policies. VSC is a required component for vVol to work.

Snapshot & FlexClone

Let's draw a line between snapshots from the hypervisor's point of view and snapshots from the storage point of view. This is essential for understanding how vVol on NetApp handles snapshots internally. As many already know, snapshots in ONTAP are always taken at the volume level (and the aggregate level), never at the file or LUN level. vVols, on the other hand, are files or LUNs, so the storage system cannot take a snapshot of each VMDK file separately. This is where FlexClone comes to the rescue: it can clone not only a whole volume but also individual files and LUNs. So when the hypervisor takes a snapshot of a virtual machine living on vVol, the following happens under the hood: vCenter contacts VSC and the VP, which locate the relevant VMDK files and instruct ONTAP to clone them. Yes, what looks like a snapshot to the hypervisor is a clone on the storage system. In other words, a FlexClone license is required for hardware-assisted snapshots on vVol. For this and similar purposes ONTAP has a new FlexClone autodelete feature that lets you set policies for deleting old clones.

Because snapshots are taken at the storage level, the ESXi snapshot consolidation problem and the negative impact of snapshots on disk subsystem performance are eliminated entirely, thanks to the internal cloning and snapshot mechanism in ONTAP.
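To see why a clone-based snapshot needs no later consolidation, it helps to picture a file as a list of pointers to blocks. The toy model below is an assumption made for illustration and is not a description of ONTAP internals; the `Volume` class and its methods are hypothetical. A clone copies only the pointer list, and later writes go to new blocks.

```python
class Volume:
    """Toy volume: files are lists of pointers into a shared block store."""

    def __init__(self):
        self.blocks = {}     # block id -> data
        self.files = {}      # file name -> list of block ids
        self._next_id = 0

    def _alloc(self, data):
        block_id = self._next_id
        self._next_id += 1
        self.blocks[block_id] = data
        return block_id

    def write_file(self, name, chunks):
        self.files[name] = [self._alloc(chunk) for chunk in chunks]

    def clone_file(self, src, dst):
        # The clone copies only the pointer list; no data blocks are duplicated.
        self.files[dst] = list(self.files[src])

    def overwrite(self, name, index, data):
        # New data lands in a new block; other files keep pointing at the old one.
        self.files[name][index] = self._alloc(data)

    def read(self, name):
        return [self.blocks[block_id] for block_id in self.files[name]]


vol = Volume()
vol.write_file("vm.vmdk", ["a", "b", "c"])
vol.clone_file("vm.vmdk", "vm-snap.vmdk")    # the hypervisor "snapshot"
blocks_after_clone = len(vol.blocks)
vol.overwrite("vm.vmdk", 1, "B")             # the VM keeps writing
```

In this model the running disk never accumulates a delta file that has to be merged back, so deleting the "snapshot" is just dropping a pointer list.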

Consistency

Because the hypervisor itself takes the snapshots by means of the storage system, they are automatically consistent from the outset, which fits the NetApp ONTAP backup paradigm very well. vSphere also supports snapshotting VM memory to a dedicated vVol.

Cloning and VDI

Cloning is especially popular in VDI environments, where many virtual machines of the same type need to be deployed quickly. Hardware-assisted cloning requires a FlexClone license. It is worth noting that FlexClone not only dramatically speeds up the deployment of large numbers of virtual machine copies and dramatically reduces disk space consumption, but also indirectly speeds up the machines themselves. Cloning essentially works like deduplication: it reduces the amount of space occupied. NetApp FAS always places data in the system cache, and the system and SSD caches are in turn dedup-aware: they do not load duplicate copies of blocks that are already cached for other virtual machines, so logically they hold much more than their physical size. This dramatically improves performance in normal operation and especially during boot storms, because more reads are served from the cache.
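The effect of a dedup-aware cache during a boot storm can be estimated with a toy model. The sketch below assumes a cache keyed by physical block id with no eviction, and assumes that every clone reads exactly the same shared boot blocks; the class and function names are hypothetical and the numbers are illustrative, not measurements.

```python
class BlockCache:
    """Toy read cache keyed by physical block id (no eviction)."""

    def __init__(self):
        self.cached = set()
        self.hits = 0
        self.misses = 0

    def read(self, physical_block):
        if physical_block in self.cached:
            self.hits += 1
        else:
            # First access comes from disk, then the block stays in the cache.
            self.misses += 1
            self.cached.add(physical_block)


def boot_storm(cache, vm_count, boot_blocks):
    # Every clone reads the same physical blocks, because clones share them.
    for _ in range(vm_count):
        for block in boot_blocks:
            cache.read(block)


cache = BlockCache()
boot_storm(cache, vm_count=100, boot_blocks=range(1000))
hit_ratio = cache.hits / (cache.hits + cache.misses)
```

With 100 clones only the first VM's reads miss; the cache holds 1000 physical blocks while serving 100,000 logical reads.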

UNMAP

Starting with VMware vSphere 6.0, VM hardware version 11 and Windows 2012 with NTFS, vVol supports automatically releasing space inside the thin LUN that holds the virtual machine. This significantly improves the utilization of usable space in a SAN infrastructure that uses thin provisioning and hardware-assisted snapshots. Starting with VMware vSphere 6.5, a Linux guest OS with SPC-4 support can likewise free space from inside the virtual machine back to the storage system, saving expensive storage capacity. More about UNMAP.
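What UNMAP buys you on a thin LUN can be shown with a minimal model. The `ThinLun` class below is hypothetical and tracks only which logical blocks are backed by storage; it illustrates the idea of space reclamation, not the SCSI command format.

```python
class ThinLun:
    """Toy thin LUN: storage is consumed only for blocks that have been written."""

    def __init__(self, size_blocks):
        self.size_blocks = size_blocks
        self.allocated = set()

    def write(self, lba):
        if not 0 <= lba < self.size_blocks:
            raise IndexError("LBA out of range")
        self.allocated.add(lba)

    def unmap(self, lba, count):
        # The guest tells the storage that these blocks no longer hold useful data.
        self.allocated.difference_update(range(lba, lba + count))

    @property
    def used_blocks(self):
        return len(self.allocated)


lun = ThinLun(size_blocks=1000)
for lba in range(100):
    lun.write(lba)            # the guest writes 100 blocks
used_before = lun.used_blocks
lun.unmap(20, 50)             # the guest deletes files and issues UNMAP
used_after = lun.used_blocks
```

Without the `unmap` step the storage system would keep all 100 blocks allocated even after the guest file system had freed half of them.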

Minimum System Requirements

- NetApp VASA Provider 5.0 and later
- VSC 5.0 and later
- Clustered Data ONTAP 8.2.1 and later
- VMware vSphere 6.0 and later
- VSC and VP must have the same version, for example, both 5.x or both 6.x
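The version rules in this list can be checked mechanically. The sketch below covers only the VSC and VP entries and interprets "the same version" as "the same major version", as the 5.x / 6.x example suggests; the function names are hypothetical, and the official compatibility matrix remains the authority.

```python
def major(version: str) -> int:
    """Return the major component of a dotted version string such as '6.2'."""
    return int(version.split(".")[0])


def vsc_vp_compatible(vsc_version: str, vp_version: str) -> bool:
    # Both components must be 5.0 or later and share the same major version.
    return (
        major(vsc_version) >= 5
        and major(vp_version) >= 5
        and major(vsc_version) == major(vp_version)
    )


ok = vsc_vp_compatible("6.2", "6.0")
mixed = vsc_vp_compatible("5.0", "6.0")
```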

For more details, contact your authorized NetApp partner or consult the compatibility matrix.

SRM and hardware replication

Unfortunately, Site Recovery Manager does not yet support vVol, because replication, and therefore SRM, is not supported in VMware's current implementation of the VASA protocol. NetApp FAS systems do integrate with SRM, but without vVol. vVol is also supported with vMSC (MetroCluster) for building geo-distributed, highly available storage.

Backup

vVol technology will dramatically change the approach to backup, shortening backup windows and using storage space more efficiently. One reason is the fundamentally different approach to snapshots: since vVol uses hardware snapshots, VMware snapshots no longer need to be consolidated and deleted. With the consolidation problem gone, taking application-aware (hardware) snapshots and backups is no longer a headache for administrators, so backups and snapshots can be taken more often. Because hardware snapshots do not affect performance, many of them can be kept right in the production environment, and hardware replication makes it more efficient to replicate data for archiving or to a DR site.

VASA API 3.0

Do not confuse the storage vendor's plug-in (for example, NetApp VASA Provider 6.0) with the VMware VASA API (for example, VASA API 3.0). The most important and most anticipated addition to the VASA API in the near future is support for hardware replication; its absence is what has kept vVol from being widely adopted. The new version will support hardware replication of vVol disks by the storage system to provide DR. Replication by the storage system was possible before, but the hypervisor could not work with the replicated data because VASA API 2.x and earlier in vSphere lacked the functionality. PowerShell cmdlets for managing DR on vVol will also appear, along with, more importantly, the ability to start replicated machines for testing. For a planned migration from a DR site, replication of a virtual machine can be requested. All of this will make it possible to use SRM with vVol.

Oracle RAC will be validated to run on vVol, which is good news.

Licensing

The following components are required for vVol to work: VP, VSC, and storage with firmware that supports vVol. These components themselves require no licenses for vVol. On the NetApp ONTAP side, a FlexClone license is required for vVol; the same license is needed for hardware snapshots and clones (taking and restoring them). Data replication, if needed, requires a NetApp SnapMirror / SnapVault license on both storage systems, at the main and backup sites. vSphere requires a Standard or Enterprise Plus license.

Conclusions

vVol technology was designed to integrate the ESXi hypervisor more deeply with storage and to simplify management. It enables more rational use of storage capabilities and resources such as thin provisioning, where UNMAP makes it possible to free space in thin LUNs. The hypervisor is also kept informed about the real state of storage space and resources. Together, this makes thin provisioning usable not only with NAS but also in production with SAN, abstracts away the access protocol, and makes the infrastructure transparent. Storage policies, thin cloning, and snapshots that do not affect performance make it faster and more convenient to deploy virtual machines in a virtualized infrastructure.