How we built our mini data center. Part 1 - Colocation

Hello colleagues and residents of Habr. We want to tell you the story of one small company, from its founding to the present day. To be clear up front: this material is written for people who are interested in the topic. Before writing it (and long before that), we read many publications on Habr about building data centers, and they helped us a lot in implementing our ideas. The series is divided into several stages of our work, from renting a cabinet to finishing our own mini data center. We hope this article helps at least one person. Let's go!

In 2015, a hosting company was founded in Ukraine with big ambitions for the future and virtually no start-up capital. We rented equipment in Moscow from one of the well-known providers (through an acquaintance), and that gave us our start. Thanks to our experience, and partly to luck, within the first months we had gained enough customers to buy our own equipment and make good connections.

We spent a long time choosing equipment for our needs. At first we compared price against quality, but that did not narrow the choice: the well-known brands (HP, Dell, Supermicro, IBM) are all very good in quality and similar in price.

Then we took a different approach: we looked at what was available in Ukraine in large quantities (in case of breakdowns or replacements) and chose HP. Supermicro servers (as it seemed to us) come in very good configurations, but unfortunately their price in Ukraine was beyond any reasonable limit, and spare parts were scarce. So we chose what we chose.

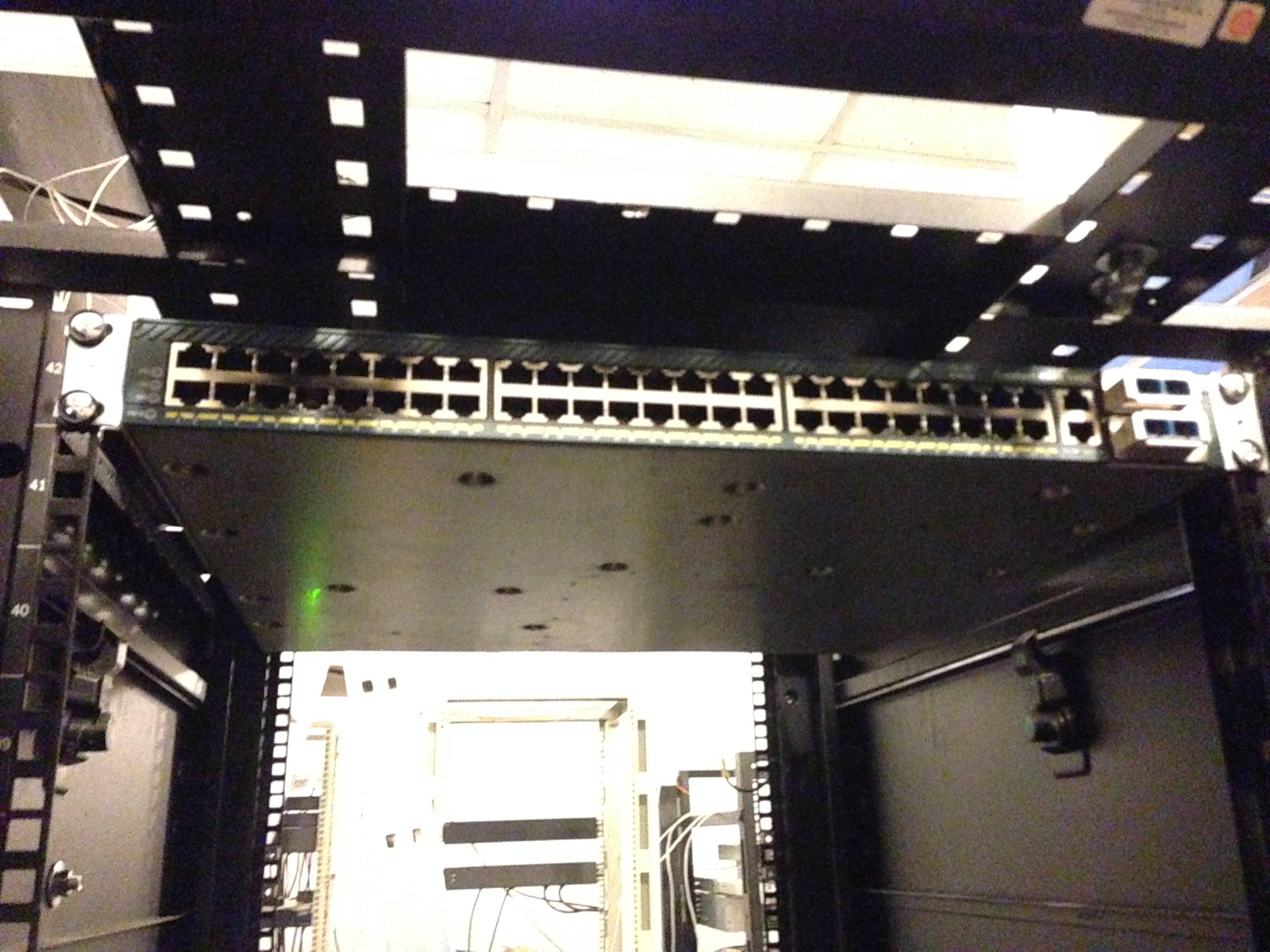

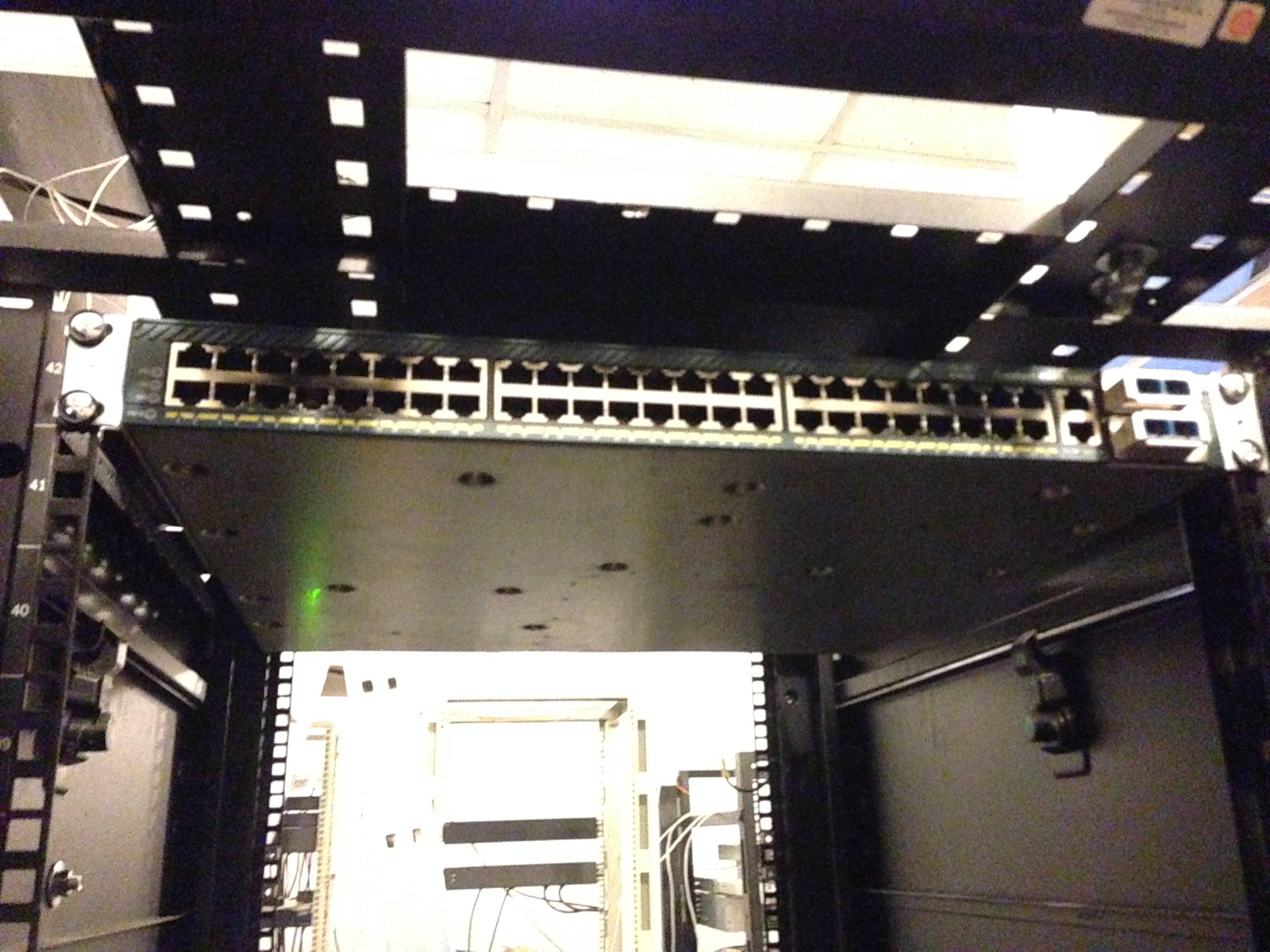

We rented a cabinet in a data center in Dnepropetrovsk and purchased a Cisco 4948 10GE (with room to grow) and our first three HP Gen 6 servers.

Choosing HP equipment helped us a lot later on, thanks to its low power consumption. And off we went. We purchased managed power distribution units (PDUs): we needed them to meter energy consumption and to control the servers (for automated OS installation).
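To illustrate what "counting energy consumption" from a metering PDU can look like, here is a minimal sketch. It assumes the PDU's power readings have already been collected as (timestamp in seconds, watts) pairs; the function name and the sample values are hypothetical and do not come from our setup.

```python
from typing import Sequence, Tuple


def energy_kwh(samples: Sequence[Tuple[float, float]]) -> float:
    """Integrate (timestamp_seconds, watts) samples into kWh.

    Uses the trapezoidal rule between consecutive samples.
    """
    joules = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        joules += (p0 + p1) / 2.0 * (t1 - t0)
    return joules / 3_600_000.0  # 1 kWh = 3.6 MJ


# Hypothetical readings: a steady 400 W load sampled every 30 minutes.
readings = [(0, 400.0), (1800, 400.0), (3600, 400.0)]
print(energy_kwh(readings))
```

With a steady 400 W load over one hour, the result is about 0.4 kWh. Real readings fluctuate, which is why the sketch integrates between samples instead of multiplying one reading by the elapsed time.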

Later we realized that the PDUs we had bought were not managed (switched) but merely metered. Fortunately, HP servers have built-in iLO (IPMI), and that saved us. We built all of the system automation on IPMI (through DCImanager). After that we bought some tools for laying structured cabling (SCS) and slowly began to build something bigger.

Here are the APC PDUs (which turned out to be unmanaged) being connected:
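For readers who have not used IPMI for this, the sketch below shows the general idea of triggering a network reinstall without a switched PDU. It is not how DCImanager works internally. It only assumes that the `ipmitool` CLI is installed and that IPMI over LAN is enabled on the server's management controller. The host address, credentials, and function names are hypothetical.

```python
from typing import List


def ipmi_command(host: str, user: str, password: str, *action: str) -> List[str]:
    """Build an ipmitool command line for a remote BMC (IPMI v2.0, lanplus)."""
    return ["ipmitool", "-I", "lanplus",
            "-H", host, "-U", user, "-P", password, *action]


def reinstall_sequence(host: str, user: str, password: str) -> List[List[str]]:
    """Commands that make a server boot from the network on its next start."""
    return [
        # Ask the BMC to use PXE as the boot device for the next boot.
        ipmi_command(host, user, password, "chassis", "bootdev", "pxe"),
        # Power-cycle the server so it starts the network installer.
        ipmi_command(host, user, password, "chassis", "power", "cycle"),
    ]


# Hypothetical management address and credentials; commands are only printed.
for cmd in reinstall_sequence("192.0.2.10", "admin", "secret"):
    print(" ".join(cmd))
```

To actually run the commands, each list could be passed to `subprocess.run(cmd, check=True)`. Note that `chassis power cycle` expects the server to be powered on; a machine that is off would need `chassis power on` instead.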

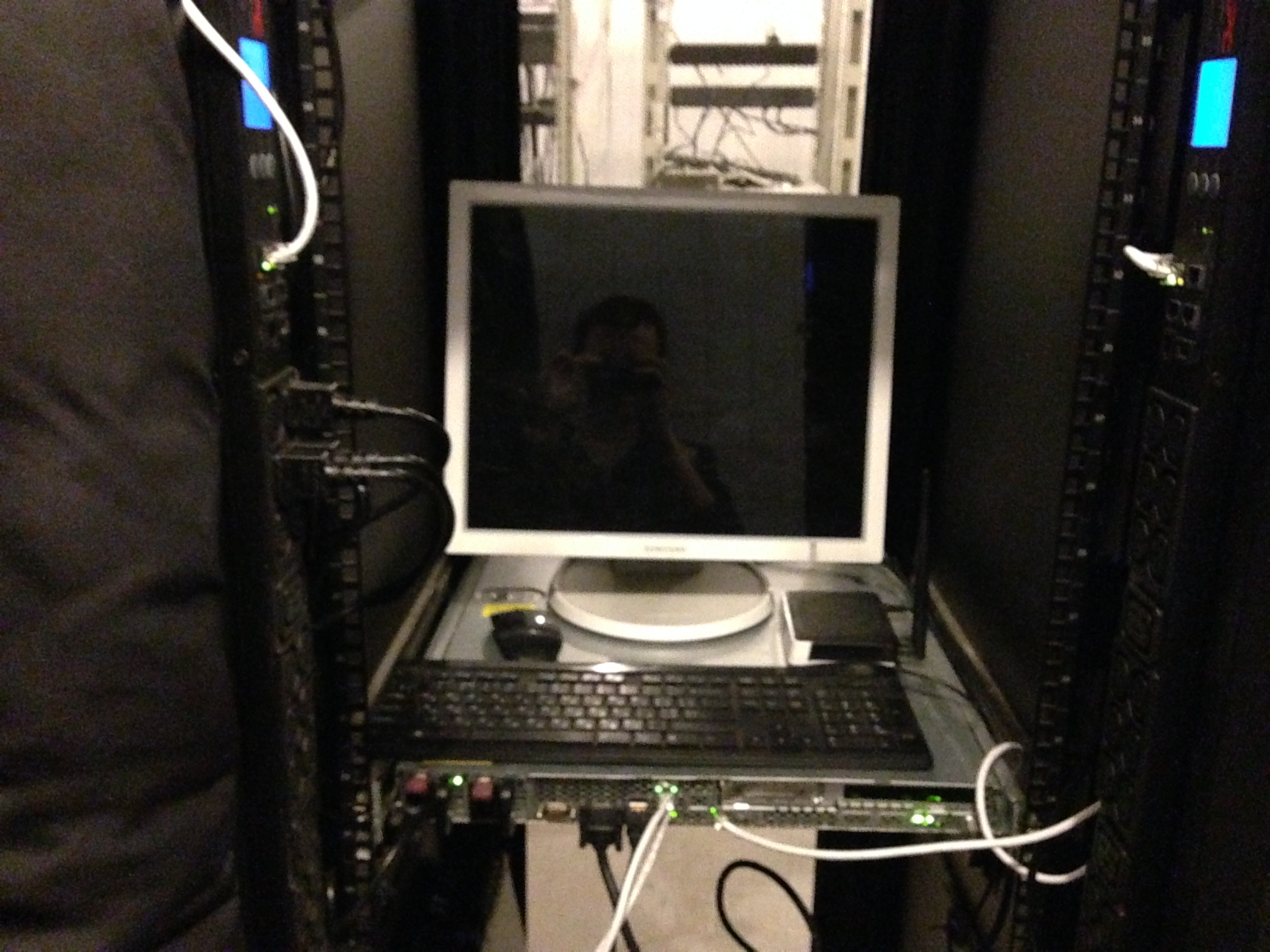

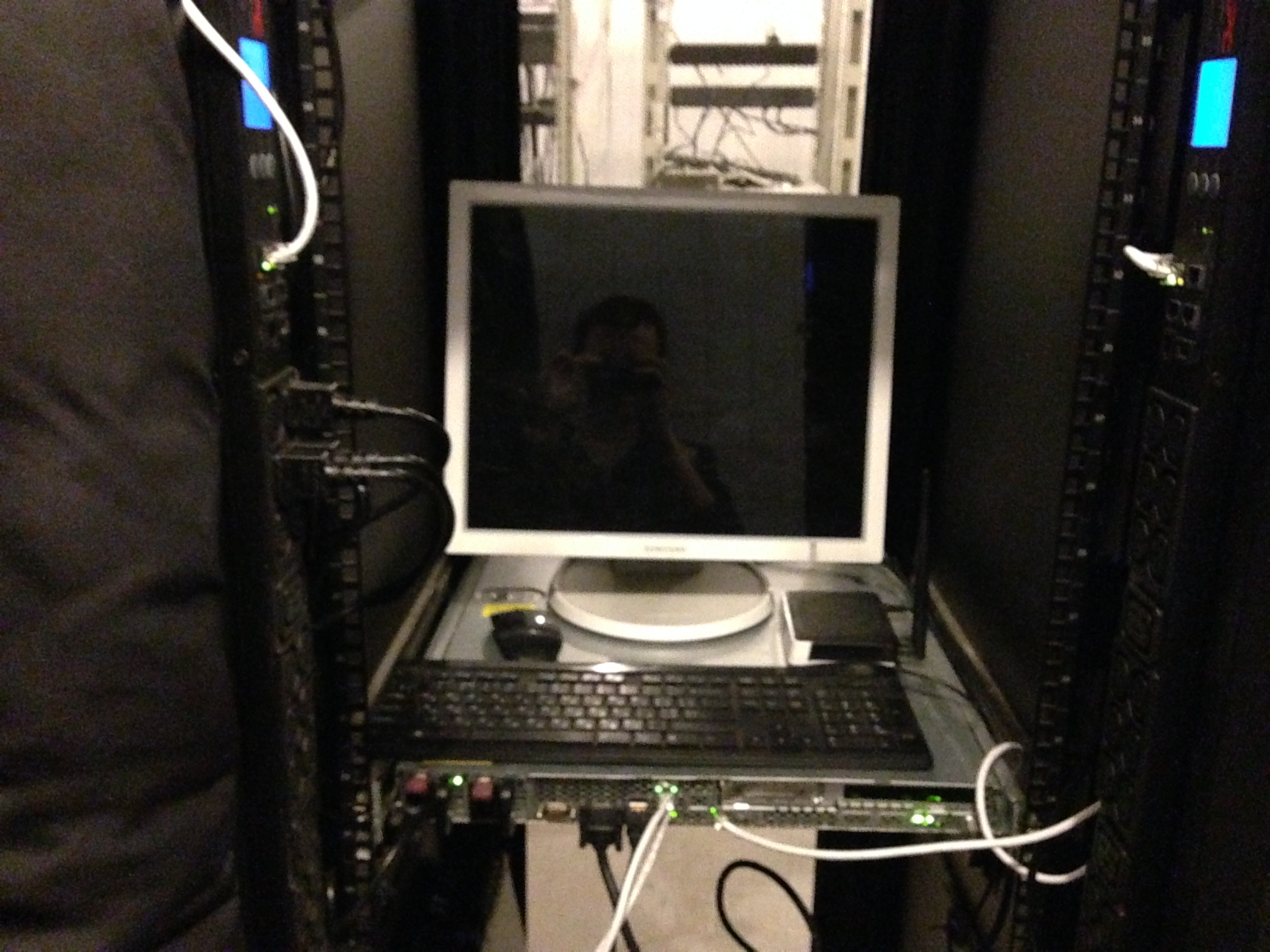

This, by the way, is how we installed the third server and the PDUs (right and left). That server served us for a long time as a stand for the monitor, keyboard, and mouse.

On this equipment we set up a cloud service using VMmanager Cloud from ISPsystem. We had three servers: one was the master and another was the file server. We had real redundancy in case one of the servers crashed (except for the file server). We realized that three servers were not enough for a cloud, so additional equipment was already on its way. Fortunately, adding it to the cloud caused no problems and needed no special configuration.

When one of the servers crashed (the master or the second, backup node), the cloud quietly migrated back and forth without failures. We tested this specifically before going into production. The file server ran on good disks in hardware RAID 10 (for speed and fault tolerance).
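As a quick illustration of the RAID 10 trade-off, the sketch below computes usable capacity and failure tolerance for a simple RAID 10 layout (a stripe across two-disk mirrors). The disk count and disk size are made-up numbers, not our actual configuration, and the function names are hypothetical.

```python
def _check_disk_count(disk_count: int) -> None:
    """RAID 10 needs an even number of disks, at least four."""
    if disk_count < 4 or disk_count % 2 != 0:
        raise ValueError("RAID 10 needs an even number of disks, at least 4")


def raid10_usable_tb(disk_count: int, disk_tb: float) -> float:
    """Usable capacity: every disk is mirrored, so half the raw space."""
    _check_disk_count(disk_count)
    return (disk_count // 2) * disk_tb


def raid10_max_survivable_failures(disk_count: int) -> int:
    """Best case: one failed disk in every mirror pair.

    Only a single failure is guaranteed to be survivable, because
    losing both disks of the same pair destroys the array.
    """
    _check_disk_count(disk_count)
    return disk_count // 2


# Hypothetical array: four 2 TB disks.
print(raid10_usable_tb(4, 2.0))           # usable capacity in TB
print(raid10_max_survivable_failures(4))  # best-case failed disks
```

The price of the speed and fault tolerance is capacity: half of the raw disk space goes to the mirrors.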

At that time we had several nodes in Russia on rented equipment, and we carefully began moving them to our new cloud service. We had plenty of complaints for ISPsystem support, since they had not provided a proper migration path from VMmanager KVM to VMmanager Cloud, and in the end we were told that such a migration was not supposed to be done at all. So we moved everything by hand, slowly and painstakingly. Of course, we moved only those customers who agreed to the public offer and to the transfer.

That's how we worked for about a month, until we had filled half of the cabinet with equipment.

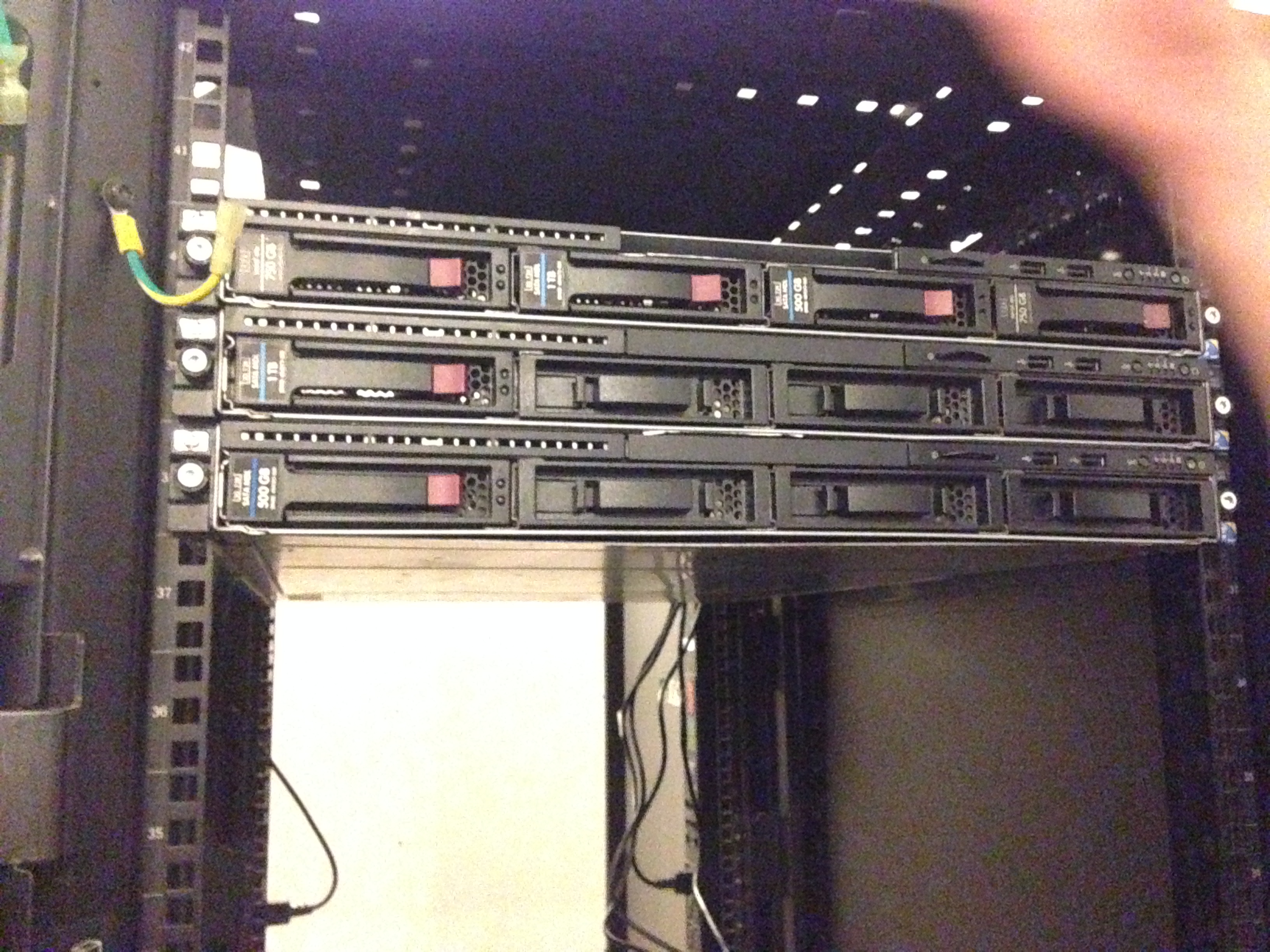

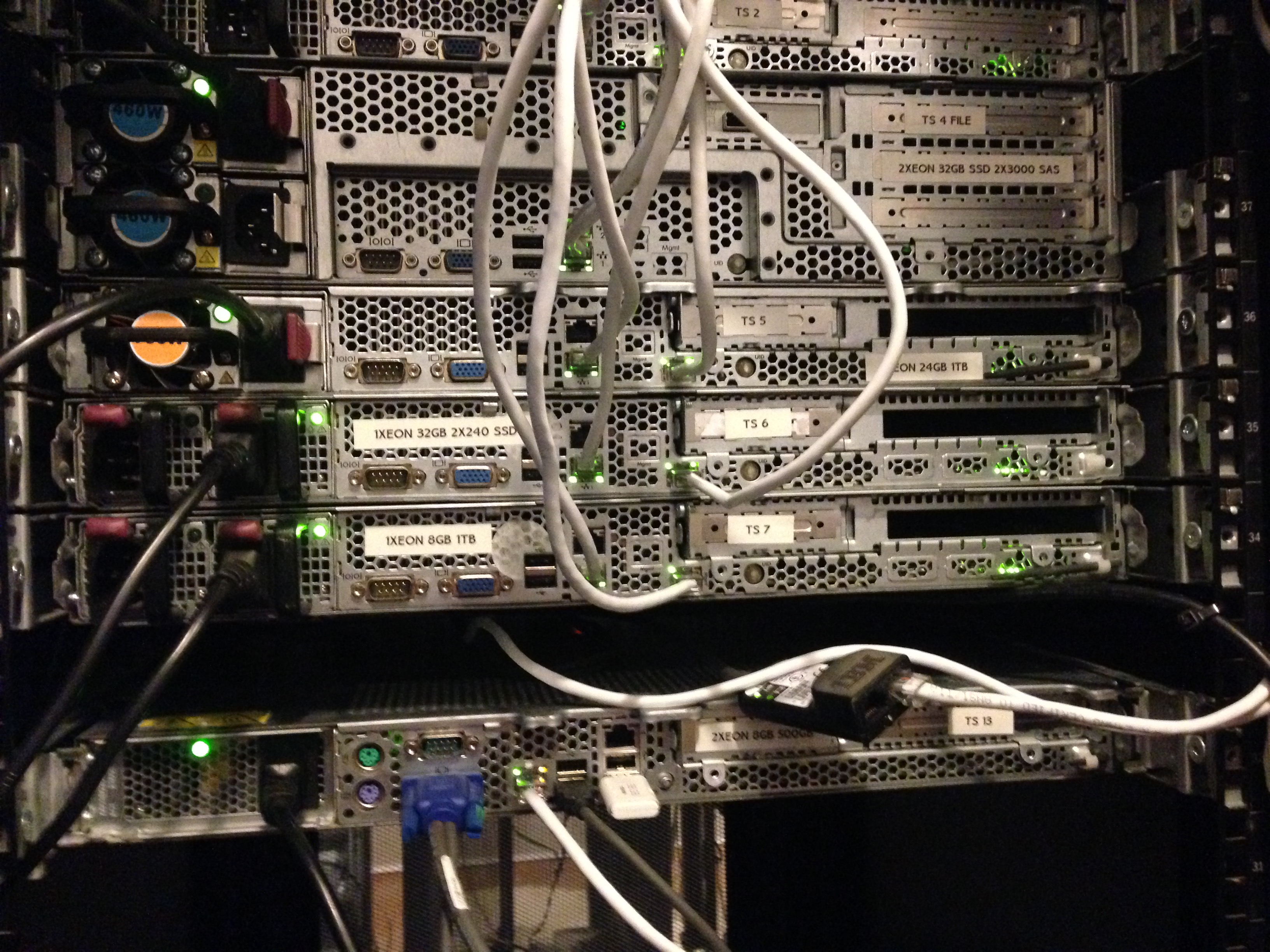

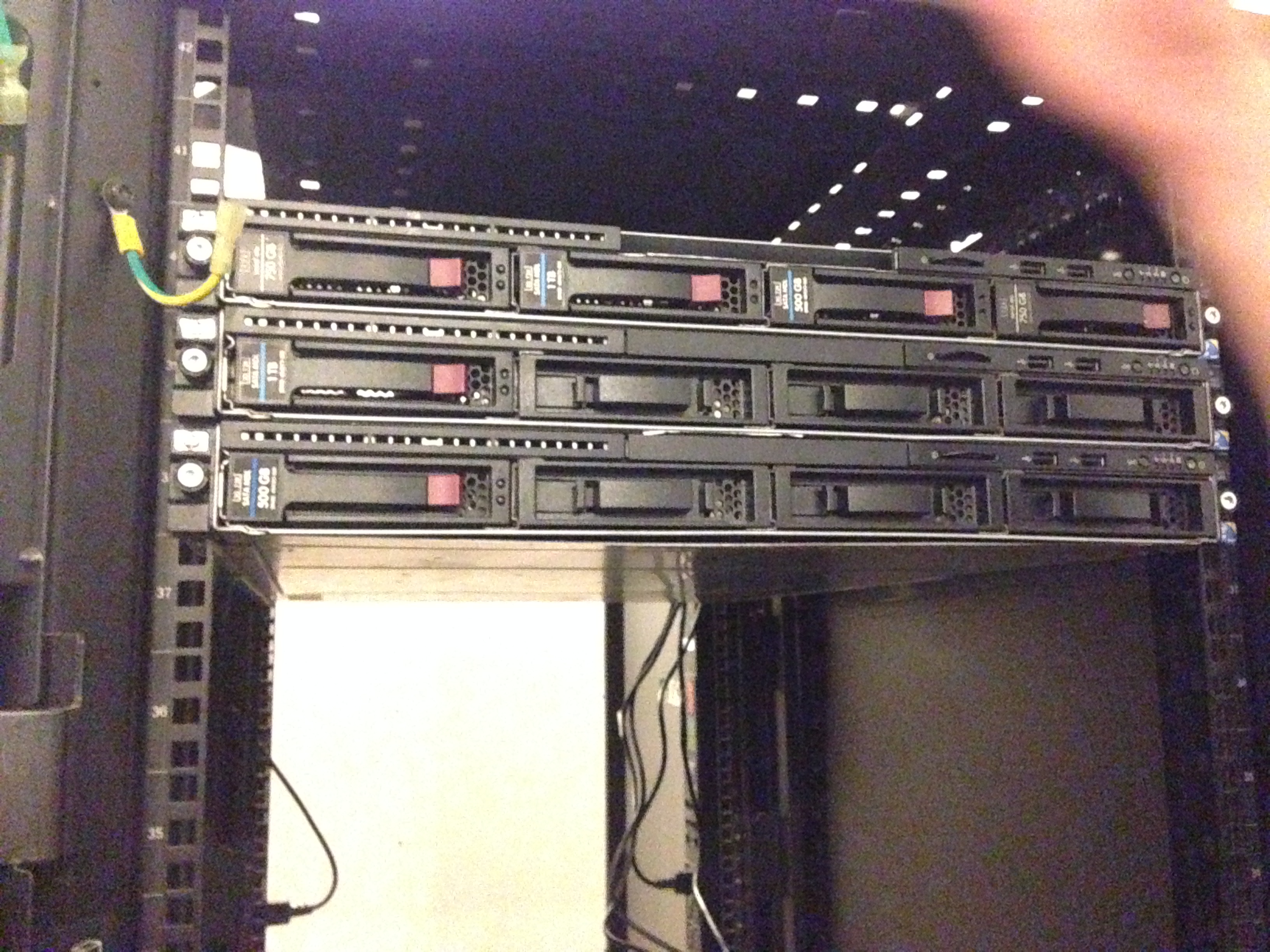

Then we received the second batch of our equipment, HP again (this is not an ad). It is simply better to have servers that are interchangeable than ones that are not.

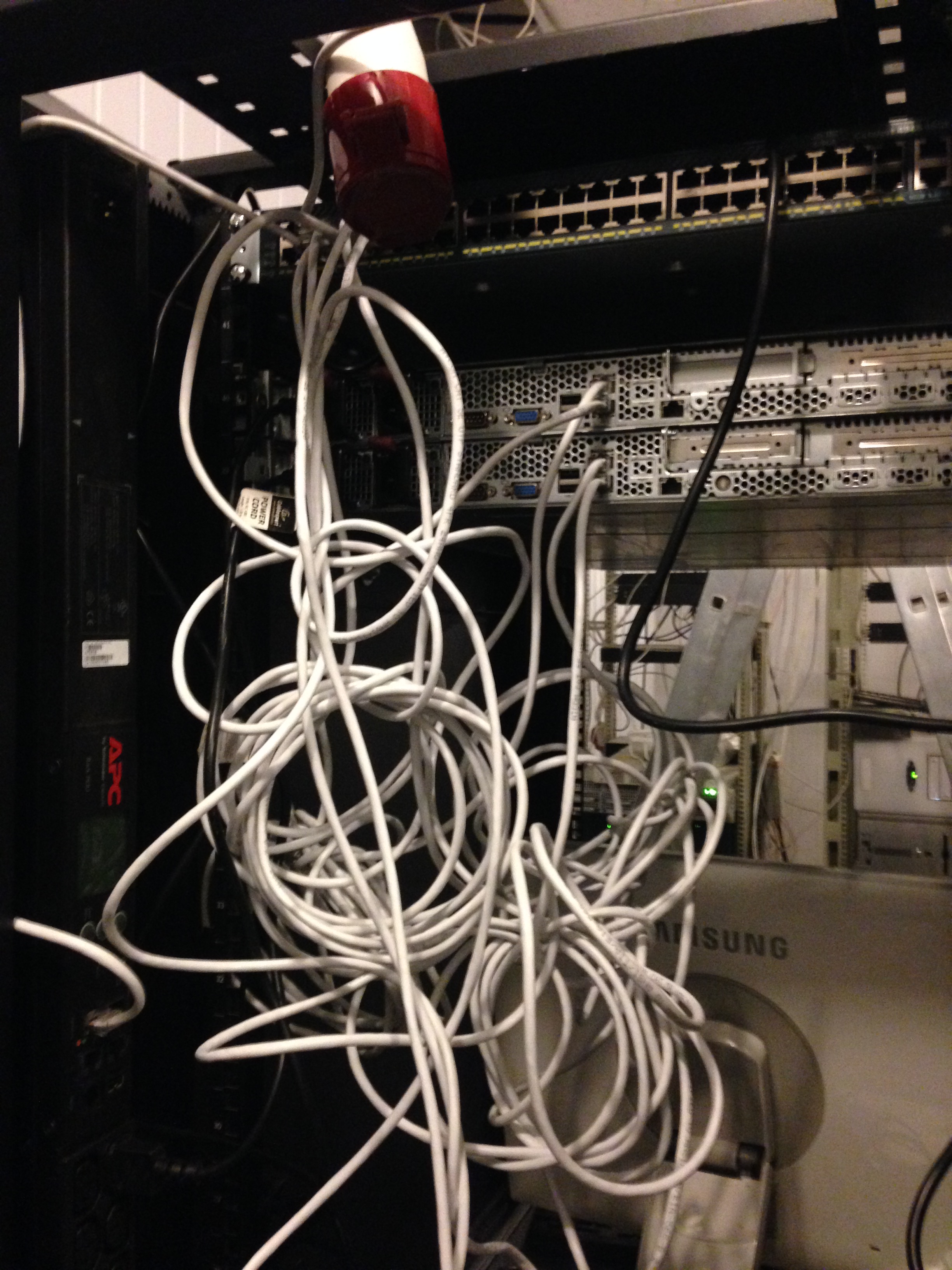

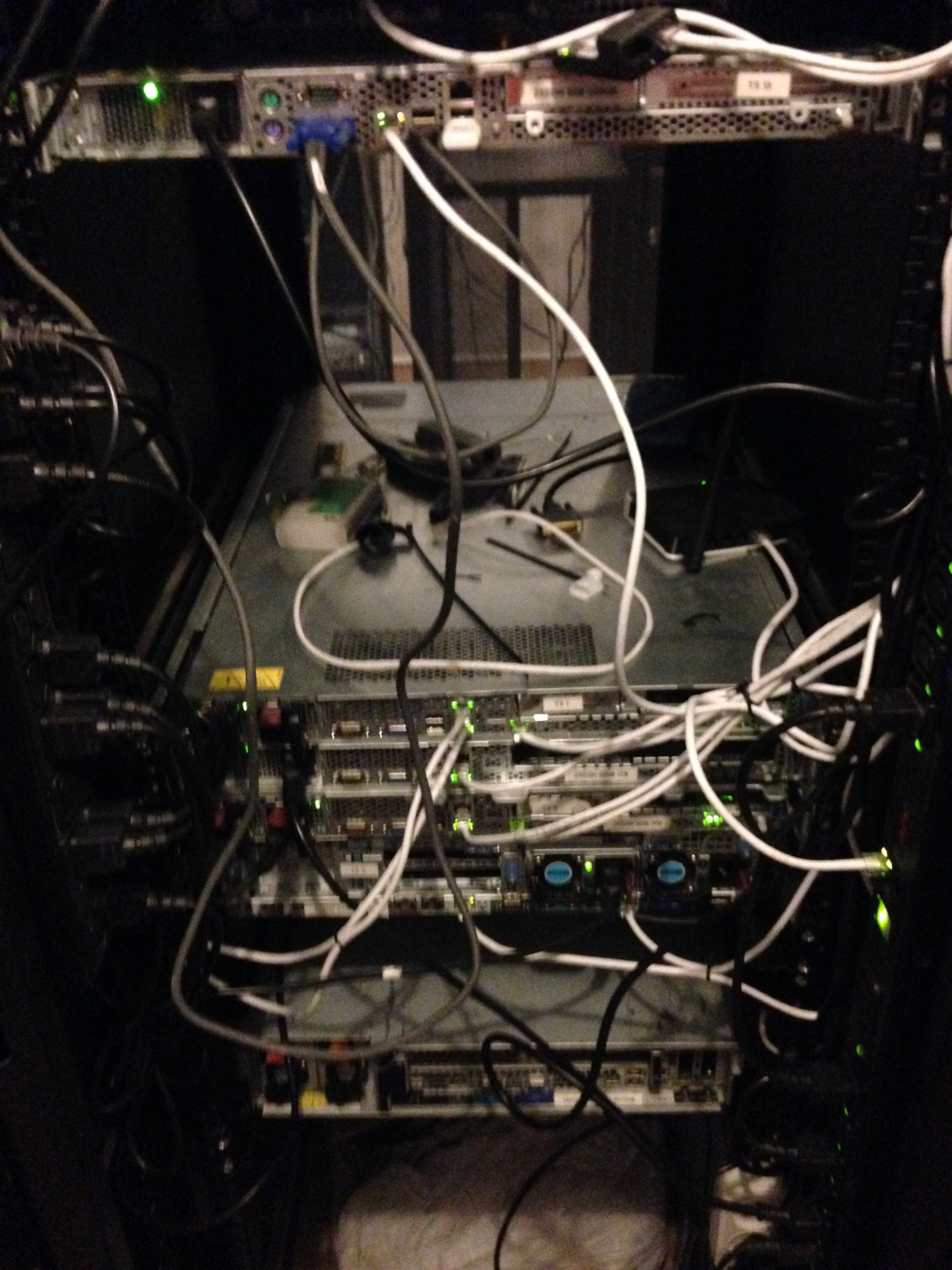

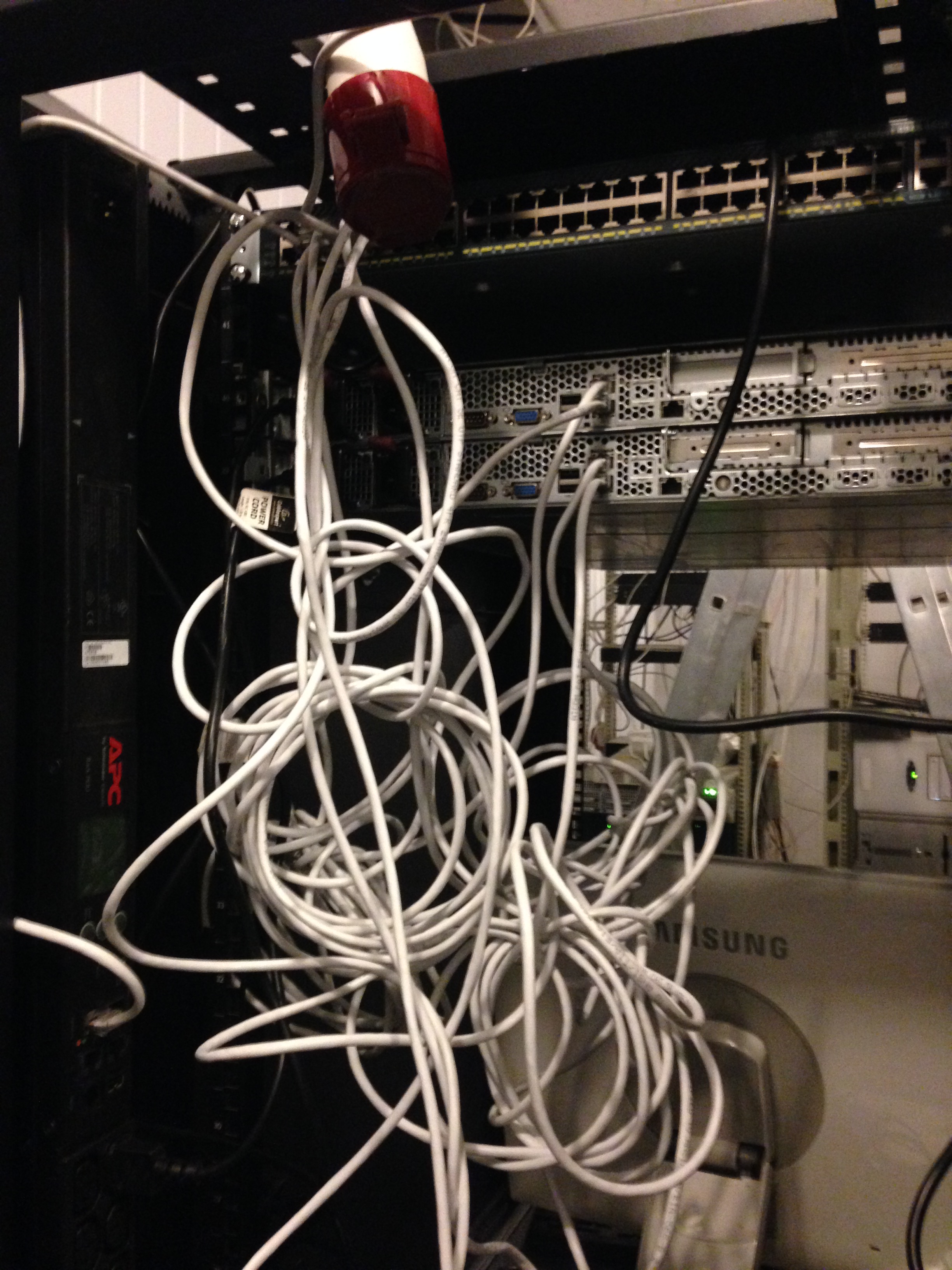

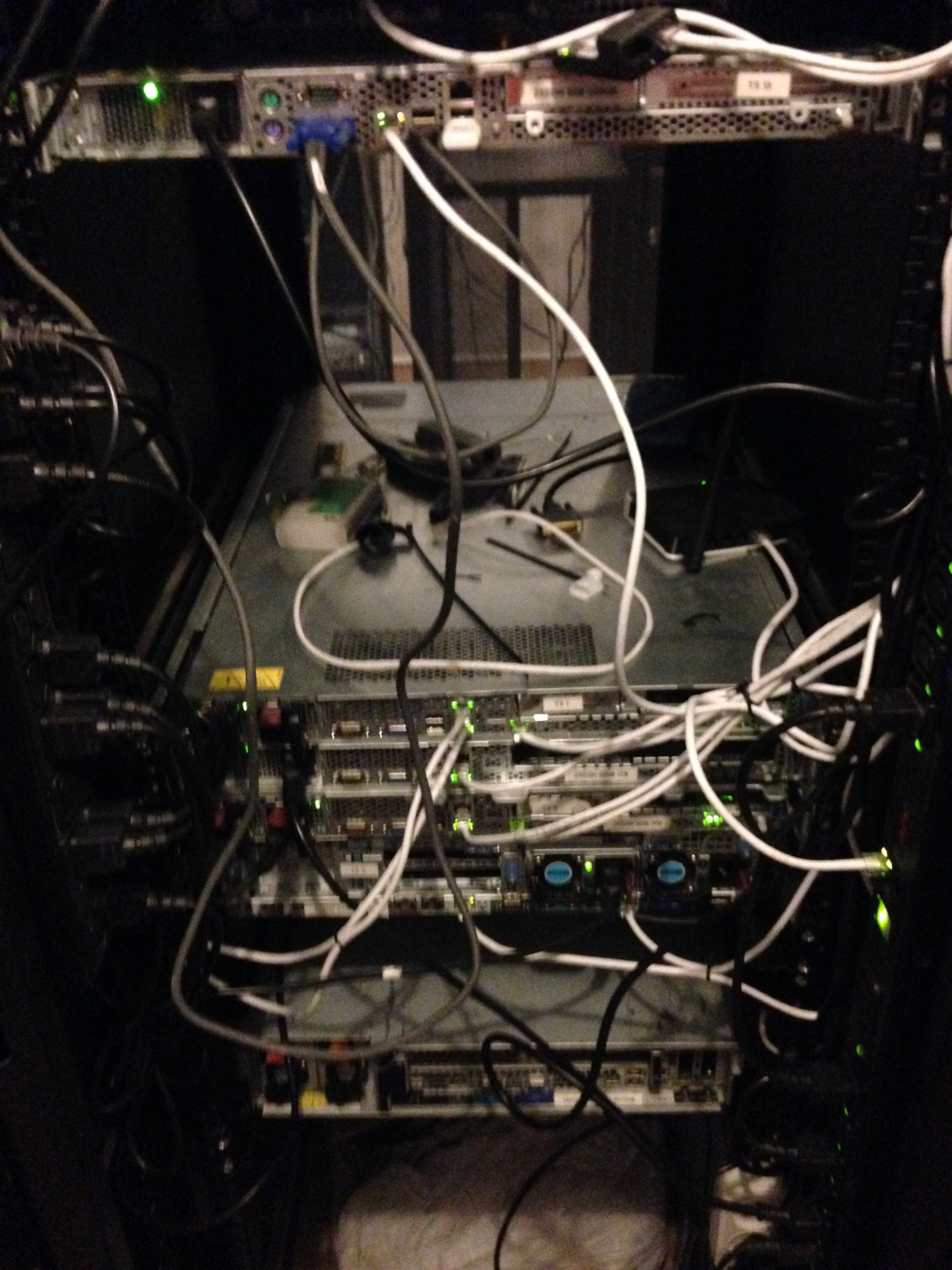

Since none of us had much experience installing structured cabling (except for the administrators who, as usual, are never where they are needed), we had to learn everything on the fly. This is what our wiring looked like at first (for about a day), when we installed the servers.

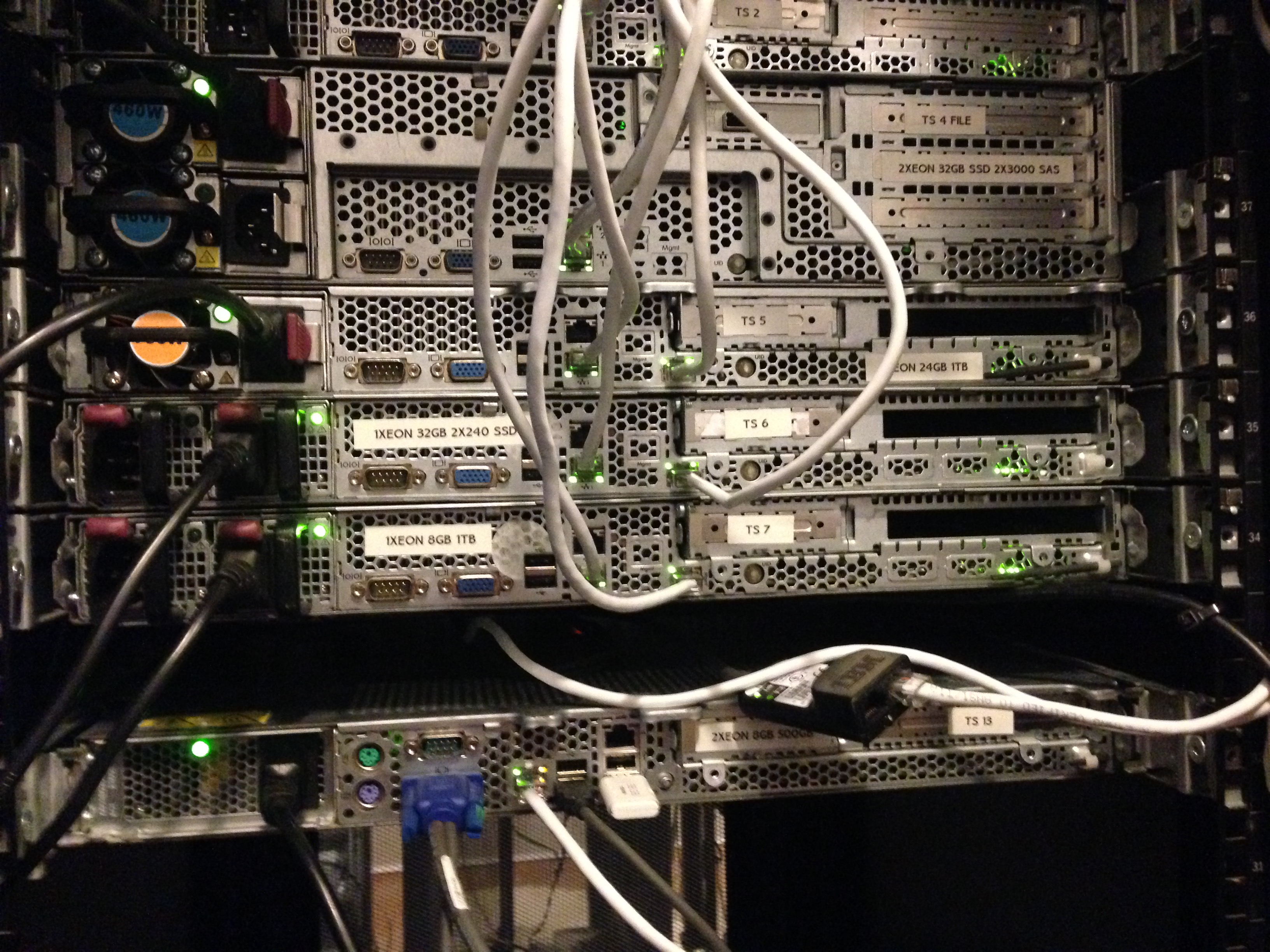

And this is roughly how it looked after all the delivered equipment had been installed and the cabling laid.

As the company grew, we ran into more and more difficulties. There were problems with the equipment (no SAS-SATA cables within quick reach), with connecting it, with various small details, and with DDoS attacks against us (some of which brought down the entire data center). But one of the main problems, in our opinion, was the instability of the data center where we rented our cabinet. We were not very stable ourselves, but when the data center you are hosted in goes down, that is really bad.

Since the data center was located in an old, semi-abandoned building of an industrial complex, it worked accordingly. Even though its owner made every effort to keep it running stably (and that is the honest truth: Alexander really did a great job and helped us a lot in the beginning), the power and the Internet kept getting cut off. That is probably what saved us. It was the reason that pushed us (without the money to do so) to start looking for premises for our own data center and to organize the move in under 14 days (including preparing the room, pulling fiber, and everything else). But more on that in the next part of our story: the hasty move, the search for premises, and the construction of the mini data center in which we now live.

How we built our mini data center. Part 2 - Hermozona

P.S. There are a lot of interesting things in the next part: difficulties with the move, renovation, pulling fiber through residential areas, installing automation, connecting the UPS and the diesel generator set (DGU), and so on.
