How to remove fake traffic from your site

A sudden jump in referral traffic is not always a cause for joy. On closer inspection, much of that traffic often turns out to have been sent by spammers. Referral spam has become a serious problem.

Referral spam occurs when your site receives fake referral traffic from spam bots. This fake traffic is recorded by Google Analytics. If you notice traffic from spam sources in Analytics, you need to take steps to remove that data from your statistics.

What is a bot?

A bot is a program whose job is to perform repetitive tasks as quickly and accurately as possible.

The traditional use of bots is web crawling: search engines regularly use them to index the content of websites. But bots can also be used for malicious purposes, for example:

- click fraud;

- harvesting email addresses;

- scraping website content;

- distributing malware;

- artificially inflating a site's traffic.

Depending on the tasks they are used for, bots can be divided into safe and dangerous.

Dangerous and Safe Bots

An example of a good bot is Googlebot, which Google uses to crawl and index web pages on the Internet.

Most bots (whether safe or dangerous) do not execute JavaScript, but some do.

Bots that execute JavaScript (such as the Google Analytics tracking code) show up in Google Analytics reports and distort traffic metrics (direct traffic, referral traffic) as well as session-based metrics (bounce rate, conversion rate, and so on).

Bots that do not execute JavaScript (e.g. Googlebot) do not distort this data. But their visits are still recorded in the server logs. They also consume server resources and bandwidth and can slow down the site.

Safe bots, unlike dangerous ones, obey the directives in robots.txt. Dangerous bots can create fake user accounts, send spam, harvest email addresses, and bypass CAPTCHAs.
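To illustrate what "obeying robots.txt" means in practice, here is a minimal Python sketch of the check a well-behaved bot might run before requesting a page. The robots.txt content, the `ExampleBot` name, and the `may_fetch` helper are assumptions made up for the example; a real crawler would download the file from the site it visits.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real bot would fetch it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def may_fetch(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if the given robots.txt allows this user agent to fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    print(may_fetch("ExampleBot", "https://example.com/index.html"))
    print(may_fetch("ExampleBot", "https://example.com/private/data.html"))
```

A dangerous bot simply skips this check, which is why robots.txt cannot be relied on as protection.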

Dangerous bots use various techniques that make them hard to detect. They can impersonate a web browser (for example, Chrome or Internet Explorer) or disguise their requests as traffic coming from a normal site.

It is impossible to say for sure which dangerous bots can distort Google Analytics data and which cannot. It is therefore worth treating every dangerous bot as a threat to data integrity.

Spam bots

As the name implies, the main task of these bots is spam. Every day they visit a huge number of websites, sending HTTP requests with fake referrer headers. This helps them avoid being detected as bots.

The forged referrer header contains the address of the website that the spammer wants to promote or build backlinks to.

When your site receives an HTTP request from a spam bot with a fake referrer header, the request is immediately recorded in the server log. If your server log is publicly accessible, it can be crawled and indexed by Google. Google then treats the referrer value in the log as a backlink, which ultimately affects the ranking of the website the spammer is promoting.
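To see which referrers actually reach your server log, a short script can count them. The sketch below is only an illustration: it assumes the common "combined" access log format used by Apache and Nginx, and the `referrer_hosts` helper is a hypothetical name. Adjust the pattern if your server logs in a different format.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Assumes the "combined" access log format; other formats need another pattern.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "[^"]*" \d{3} \S+ '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def referrer_hosts(lines):
    """Count requests per referrer host, skipping lines with no referrer."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # line is not in the expected format
        referrer = match.group("referrer")
        if referrer in ("", "-"):
            continue  # no referrer header was sent
        host = urlsplit(referrer).hostname
        if host:
            counts[host] += 1
    return counts
```

Calling `referrer_hosts(open("access.log"))` (the file name is a placeholder) and printing `most_common(20)` gives a quick list of referrers to compare with the Google Analytics report.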

Google's indexing algorithms have since been changed so that they do not take data from such logs into account. This defeats the efforts of the people who create these bots.

Spam bots that can execute JavaScript can get past the filtering used by Google Analytics. As a result, their traffic shows up in Google Analytics reports.

Botnet

When a spam bot uses a botnet (a network of infected computers located in one place or around the world), it can access your website from hundreds of different IP addresses. In this case, IP blacklists and rate limiting (capping the rate of traffic sent or received) become largely useless.

The ability of a spam bot to distort your site's traffic is directly proportional to the size of the botnet it uses.

With a large botnet and many different IP addresses, a spam bot can access your website without being blocked by a firewall or other traditional security mechanism.

Not all spam bots send referrer headers.

Traffic from such bots does not appear as referral traffic in Google Analytics reports. It looks like direct traffic, which makes it even harder to detect. In other words, whenever no referrer is sent, Google Analytics treats the traffic as direct.

A spam bot can create dozens of fake referrer headers.

If you block one referrer, the spam bot will send another fake one. Therefore, spam filters in Google Analytics or .htaccess do not guarantee that your site is completely protected from spam bots.

Now you know that not all spam bots are dangerous. But some of them are really dangerous.

Very dangerous spam bots

The goal of truly dangerous spam bots is not only to distort your site's traffic, scrape its content, or harvest email addresses. Their goal is to infect your computer with malware and make it part of a botnet.

As soon as your computer joins a botnet, it starts being used to send spam, viruses, and other malware to other computers on the Internet.

There are thousands of computers around the world that are used by real people while also being part of a botnet.

There is a high probability that your computer is part of a botnet, but you do not know about it.

If you decide to block the botnet, you are most likely blocking traffic coming from real users.

It is likely that as soon as you visit a suspicious site from your referral traffic report, your machine will become infected with malware.

Therefore, do not visit suspicious sites from your analytics reports without appropriate protection (anti-virus software installed on your computer). It is preferable to use a separate machine specifically for visiting such sites. Alternatively, you can ask your system administrator to deal with the problem.

Smart spam bots

Some spam bots (like darodar.com) can send fake traffic without ever visiting your site. They do this by reproducing the HTTP requests that the Google Analytics tracking code sends, using your web property ID. They can fake not only the traffic but also the referrer, for example bbc.co.uk. Since the BBC is a legitimate site, when you see this referrer in your report you would not suspect that traffic from such a reputable site could be fake. In fact, no one has come to your site from the BBC.

These smart and dangerous bots do not need to visit your website or execute JavaScript. Since they never actually visit your site, their visits are not recorded in the server log.

And since their visits are not recorded in the server log, you cannot block them by any server-side means (blocking by IP address, user agent, referrer, and so on).

Smart spam bots crawl your site looking for web property IDs. Sites that do not use Google Tag Manager have the Google Analytics tracking code embedded directly in their web pages.

The Google Analytics tracking code contains your web property ID. A smart spam bot steals the ID and can pass it on to other bots. There is no guarantee that the bot that stole your web property ID and the bot that sends you fake traffic are one and the same.

You can address this problem by using Google Tag Manager (GTM).

Use GTM to deploy Google Analytics tracking on your site. If your web property ID has already been stolen, it is most likely too late for this fix. All you can do then is switch to a different ID or wait for a solution from Google.

Not every site is attacked by spam bots.

The primary task of spam bots is to find and exploit the vulnerabilities of a website. They attack poorly protected sites. Accordingly, if your site is on "budget" hosting or runs on a custom CMS, it has a good chance of being attacked.

Sometimes a site that is frequently attacked by dangerous bots just needs to change its web hosting. This simple step can really help.

Follow the steps below to spot spam sources.

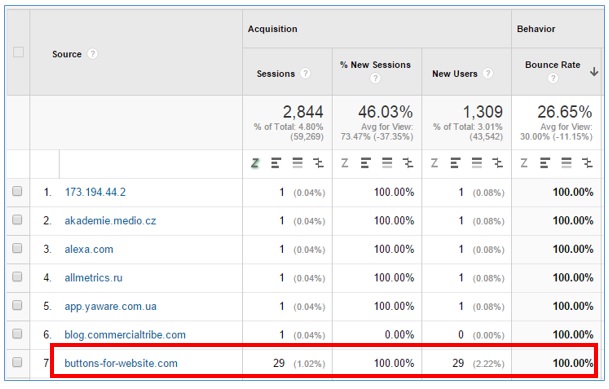

1) Go to the referral traffic report in your Google Analytics account and sort the report by bounce rate in descending order.

2) Look at referrers that have a bounce rate of 100% or 0% and 10 or more sessions. Most likely, these are spammers.

3) If one of your suspicious referrers is on the list of sites below, it is referral spam. You do not need to verify it yourself:

buttons-for-website.com

7makemoneyonline.com

ilovevitaly.ru

resellerclub.com

vodkoved.ru

cenokos.ru

76brighton.co.uk

sharebutton.net

simple-share-buttons.com

forum20.smailik.org

social-buttons.com

forum.topic39398713.darodar.com

An exhaustive list of spam sources can be downloaded here.

4) If you could not confirm whether a suspicious referrer is spam, you can take the risk and visit the dubious website. It may turn out to be a perfectly normal site. Make sure you have antivirus software installed before visiting such sites. They can infect your computer the moment you open their page.

5) After confirming the identity of the dangerous bots, the next step is to block them from visiting your site again.
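Steps 2 and 3 above can be sketched as a small Python check over rows exported from the referral report. This is a minimal illustration, not a definitive detector: the `ReferrerRow` structure and `is_suspicious` helper are hypothetical names, the thresholds follow the reading that both conditions in step 2 must hold, and the domain set is simply the list given in step 3.

```python
from dataclasses import dataclass

# Spam sources listed in step 3 of the article.
KNOWN_SPAM_DOMAINS = {
    "buttons-for-website.com", "7makemoneyonline.com", "ilovevitaly.ru",
    "resellerclub.com", "vodkoved.ru", "cenokos.ru", "76brighton.co.uk",
    "sharebutton.net", "simple-share-buttons.com", "forum20.smailik.org",
    "social-buttons.com", "forum.topic39398713.darodar.com",
}


@dataclass
class ReferrerRow:
    source: str         # referrer host as shown in the report
    sessions: int       # number of sessions from this referrer
    bounce_rate: float  # bounce rate in percent, 0..100


def is_suspicious(row: ReferrerRow, min_sessions: int = 10) -> bool:
    """Flag known spam domains, or a 0%/100% bounce rate with enough sessions."""
    source = row.source.lower()
    for domain in KNOWN_SPAM_DOMAINS:
        if source == domain or source.endswith("." + domain):
            return True
    return row.sessions >= min_sessions and row.bounce_rate in (0.0, 100.0)
```

Rows flagged only by the bounce-rate rule still need a manual look, since a legitimate referrer can also show an extreme bounce rate.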

How can you protect your site from spam bots?

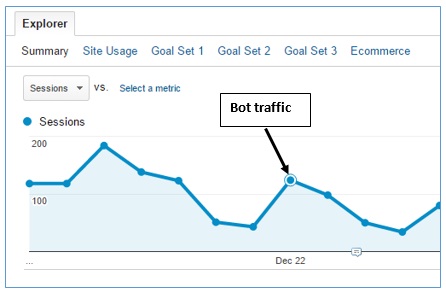

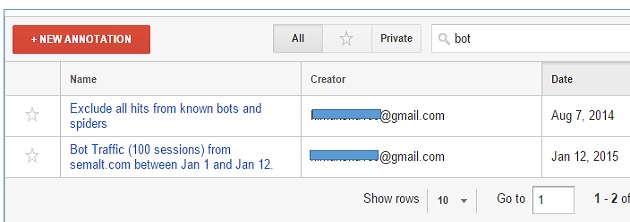

Create an annotation on your chart and write a note explaining what caused the unusual surge in traffic. You can then discount this traffic during analysis.

Block the referrer used by the spam bot. Add the following code to the .htaccess file (or add an equivalent rule to the web configuration if you use IIS):

RewriteEngine On

Options +FollowSymlinks

# [NC] makes the match case-insensitive; [F] answers with 403 Forbidden

RewriteCond %{HTTP_REFERER} ^https?://([^.]+\.)*buttons-for-website\.com [NC]

RewriteRule .* - [F]

This code blocks all HTTP and HTTPS referrals from buttons-for-website.com, including its subdomains.
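Before deploying a referrer rule, it can help to check the pattern against a few sample referrers. The Python sketch below reuses the regular expression from the rule for buttons-for-website.com. It assumes that Python's `re` module and Apache's PCRE behave the same for this simple pattern, and `is_blocked_referrer` is a hypothetical helper name.

```python
import re

# Same pattern as in the RewriteCond; re.IGNORECASE mirrors the [NC] flag.
SPAM_REFERRER = re.compile(
    r"^https?://([^.]+\.)*buttons-for-website\.com", re.IGNORECASE
)


def is_blocked_referrer(referrer: str) -> bool:
    """Return True if the referrer would match the blocking pattern."""
    return SPAM_REFERRER.search(referrer) is not None


if __name__ == "__main__":
    for sample in (
        "http://buttons-for-website.com/",
        "https://www.buttons-for-website.com/page",
        "https://example.com/",
    ):
        print(sample, is_blocked_referrer(sample))
```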

Block the IP address used by the spam bot. Add code like the following to the .htaccess file:

RewriteEngine On

Options +FollowSymlinks

# Apache 2.2 syntax (on Apache 2.4 it needs mod_access_compat)

Order Deny,Allow

Deny from 234.45.12.33

Note: Do not copy this code into your .htaccess as it is; it will not work for your site. It is only an example of what IP blocking looks like in a .htaccess file.

Spam bots can use different IP addresses. Regularly update your list of the spam bot IP addresses that hit your site.

Only block IP addresses that affect the site.

It makes no sense to try to block each of the known IP addresses. The .htaccess file will become very cumbersome. It will become difficult to manage, and the performance of the web server will decrease.

If you notice that your IP blacklist is growing rapidly, that is a clear sign of a security problem. Contact your web hosting provider or system administrator. Search Google for published IP blacklists. Automate this work with a script that finds and denies IP addresses that are unquestionably malicious.
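The automation mentioned above could start from something as small as the following Python sketch, which turns a list of candidate addresses into `Deny from` lines in the Apache 2.2 style used in this article. The `deny_rules` helper is a hypothetical name, and deciding which addresses are truly malicious is left to you; the script only validates, deduplicates, and formats them.

```python
import ipaddress


def deny_rules(candidate_ips):
    """Build sorted, unique 'Deny from' lines, skipping invalid addresses."""
    valid = set()
    for raw in candidate_ips:
        try:
            valid.add(ipaddress.ip_address(raw.strip()))
        except ValueError:
            continue  # not a valid IPv4/IPv6 address, ignore it
    # Sort by IP version first so IPv4 and IPv6 are never compared directly.
    ordered = sorted(valid, key=lambda ip: (ip.version, int(ip)))
    return [f"Deny from {ip}" for ip in ordered]


if __name__ == "__main__":
    for line in deny_rules(["234.45.12.33", "not-an-ip", "234.45.12.33"]):
        print(line)
```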

Block the ranges of IP addresses used by spam bots. When you are sure that a specific range of IP addresses is used by a spam bot, you can block the whole range in one go, as shown below:

RewriteEngine On

Options +FollowSymlinks

# With Order Allow,Deny a matching Deny overrides the Allow

Order Allow,Deny

Allow from all

Deny from 76.149.24.0/24

Here 76.149.24.0/24 is a CIDR range (CIDR is a notation for representing address ranges).

Blocking by CIDR range is more efficient than blocking individual IP addresses, since it takes up far fewer lines in the configuration file.

Note: You can convert a range of IP addresses to CIDR notation and back using this tool: www.ipaddressguide.com/cidr
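The same conversion can be done locally with Python's standard `ipaddress` module. The sketch below uses the range from the example above; the helper names `in_blocked_range` and `range_to_cidr` are made up for illustration.

```python
import ipaddress

# The CIDR range used in the example above.
BLOCKED = ipaddress.ip_network("76.149.24.0/24")


def in_blocked_range(ip: str) -> bool:
    """Return True if the address falls inside the blocked CIDR range."""
    return ipaddress.ip_address(ip) in BLOCKED


def range_to_cidr(first: str, last: str):
    """Convert a first-last address range into a list of CIDR blocks."""
    blocks = ipaddress.summarize_address_range(
        ipaddress.ip_address(first), ipaddress.ip_address(last)
    )
    return [str(block) for block in blocks]


if __name__ == "__main__":
    print(BLOCKED[0], BLOCKED[-1], BLOCKED.num_addresses)
    print(range_to_cidr("76.149.24.0", "76.149.24.255"))
```

A /24 block covers 256 addresses, so one `Deny from` line replaces 256 individual entries.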

Block the user agents used by spam bots. Analyze your server log files weekly to detect and block the malicious user agents that spam bots use. Once blocked, they will not be able to access your site. An example is shown below:

RewriteEngine On

Options +FollowSymlinks

# [F] answers with 403 Forbidden; [L] stops processing further rules

RewriteCond %{HTTP_USER_AGENT} Baiduspider [NC]

RewriteRule .* - [F,L]

A Google search will turn up an impressive number of resources that maintain lists of known bad user agents. Use that information to identify these user agents on your site.

The easiest way is to write a script that automates the entire process. Create a database of all known bad user agents. Use a script that automatically identifies and blocks them based on the data in the database. Update the database regularly, because new bad user agents keep appearing.

Only block user agents that actually affect your site. It makes no sense to try to block every known user agent: the .htaccess file will become too large and difficult to manage, and server performance will suffer.
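As a rough sketch of such a script, the Python function below turns a list of user agent names into mod_rewrite rules of the kind used in this article. The `user_agent_rules` helper and the sample names are hypothetical. As a simplifying assumption, names containing anything other than letters, digits, dots, underscores, or hyphens are skipped rather than escaped.

```python
import re

# Only simple tokens are accepted; anything else is skipped, not escaped.
SAFE_NAME = re.compile(r"[\w.-]+")


def user_agent_rules(blocked_agents):
    """Build .htaccess lines that answer 403 to the given user agents."""
    agents = sorted(
        {a.strip() for a in blocked_agents if SAFE_NAME.fullmatch(a.strip())}
    )
    if not agents:
        return []
    lines = ["RewriteEngine On"]
    for index, agent in enumerate(agents):
        # Every condition except the last is chained with OR.
        flags = "[NC]" if index == len(agents) - 1 else "[NC,OR]"
        lines.append(
            f"RewriteCond %{{HTTP_USER_AGENT}} {re.escape(agent)} {flags}"
        )
    lines.append("RewriteRule .* - [F,L]")
    return lines


if __name__ == "__main__":
    print("\n".join(user_agent_rules(["Baiduspider", "EvilBot"])))
```

In a fuller version the list would come from your database of bad user agents, and the output would be written into a marked section of the .htaccess file.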

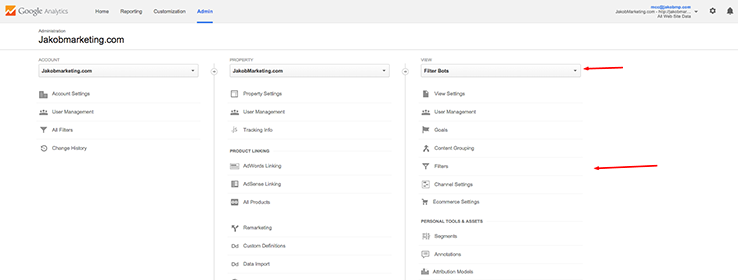

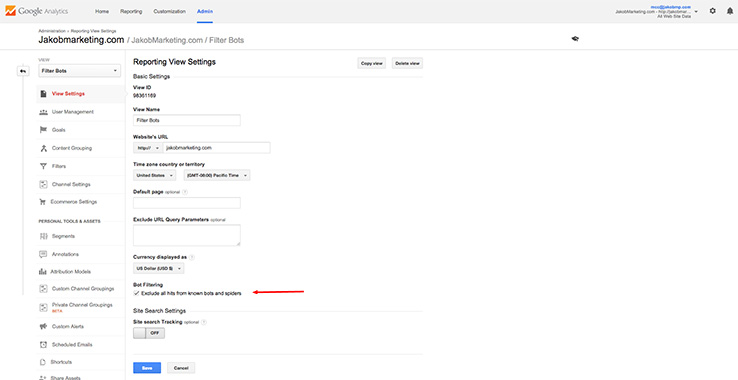

Use the “Bot Filtering” option available in Google Analytics: “Exclude hits from known bots and spiders.”

Monitor server logs at least weekly. The fight against dangerous bots should start at the server level. Until you have managed to stop spam bots from visiting your site, do not exclude them from your Google Analytics reports.

Use a firewall. A firewall acts as a reliable filter between your computer (or server) and the Internet. It can protect your site from dangerous bots.

Get expert help from your system administrator. Round-the-clock protection of client websites from malicious activity is their main job. The person responsible for network security has many more tools for repelling bot attacks than a site owner does. If you find a new bot that threatens your site, inform your system administrator immediately.

Use Google Chrome for web browsing. If you do not use a firewall, it is best to browse the Internet with Google Chrome.

Chrome is also capable of detecting malware. It opens web pages quickly while still scanning them for malware.

If you use Chrome, the risk of picking up malware on your computer is reduced, even when you visit a suspicious site from the Google Analytics referral traffic reports.

Use custom alerts to monitor unexpected traffic spikes. A custom alert in Google Analytics lets you quickly detect and neutralize harmful bot requests, minimizing their effect on your site.
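Custom alerts are configured in the Google Analytics interface, but the idea behind them can be shown with a few lines of Python run over exported daily session counts. This is a hedged sketch only: the `is_traffic_spike` helper, the threshold of three standard deviations, and the sample numbers are assumptions, not how Google Analytics evaluates its alerts.

```python
from statistics import mean, stdev


def is_traffic_spike(history, today, threshold=3.0):
    """Return True if today's sessions are far above the recent average.

    history   -- daily session counts for previous days
    today     -- session count for the day being checked
    threshold -- how many standard deviations above the mean count as a spike
    """
    if len(history) < 2:
        return False  # not enough data to estimate normal variation
    average = mean(history)
    spread = stdev(history)
    if spread == 0:
        return today > average
    return (today - average) / spread > threshold


if __name__ == "__main__":
    last_week = [100, 110, 95, 105, 90, 100, 100]  # hypothetical data
    print(is_traffic_spike(last_week, 400))
    print(is_traffic_spike(last_week, 105))
```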

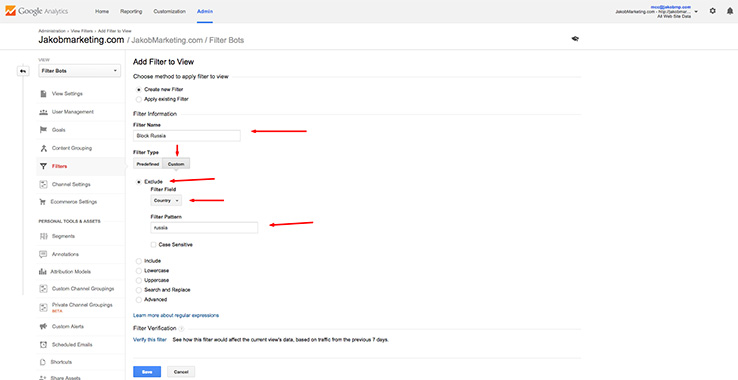

Use the filters available in Google Analytics. To do this, go to the "Admin" tab, select "Filters" in the "View" column, and create a new filter.

Setting up filters is pretty easy. The main thing is to know how to do it.

You can use the “Bot Filtering” checkbox located in the “View Settings” section of the “Admin” tab. It will not hurt.

Despite the ease of using filters in Google Analytics, we still do not recommend relying on them in practice.

There are three good reasons for this:

- There are thousands of bad bots, and a huge number of new ones appear every day. How many filters would you have to create and apply to your reports?

- The more filters you apply, the harder it becomes to analyze the reports you get from Google Analytics.

- Blocking spam traffic in Google Analytics hides the problem but does not solve it. You lose the ability to assess how badly spam bots are distorting your traffic.

Similarly, do not block referral traffic with the “Referral exclusion list”; this will not solve your problem. On the contrary, that traffic will subsequently be counted as direct, and you will lose the ability to track the impact of spam on your site's traffic.

Once a spam bot has made it into your Google Analytics statistics, the traffic data is distorted for good. You will not be able to correct it.

Conclusion

We hope that the above recommendations will help you get rid of all sources of spam on your site. You can do this in many different ways, but we described those that helped many resources protect their data in Google Analytics.