Learning from machine learning (Saturday, philosophical)

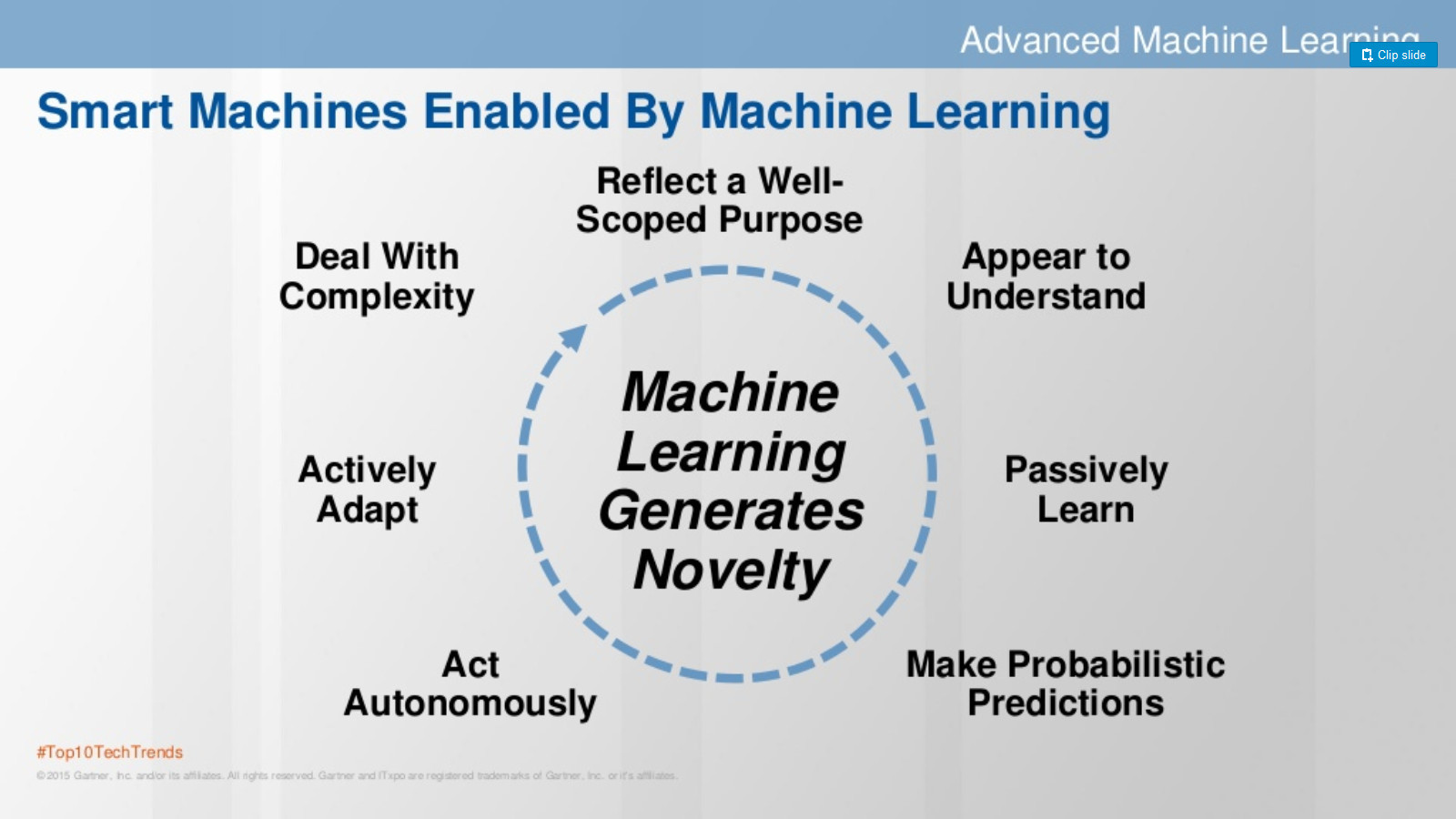

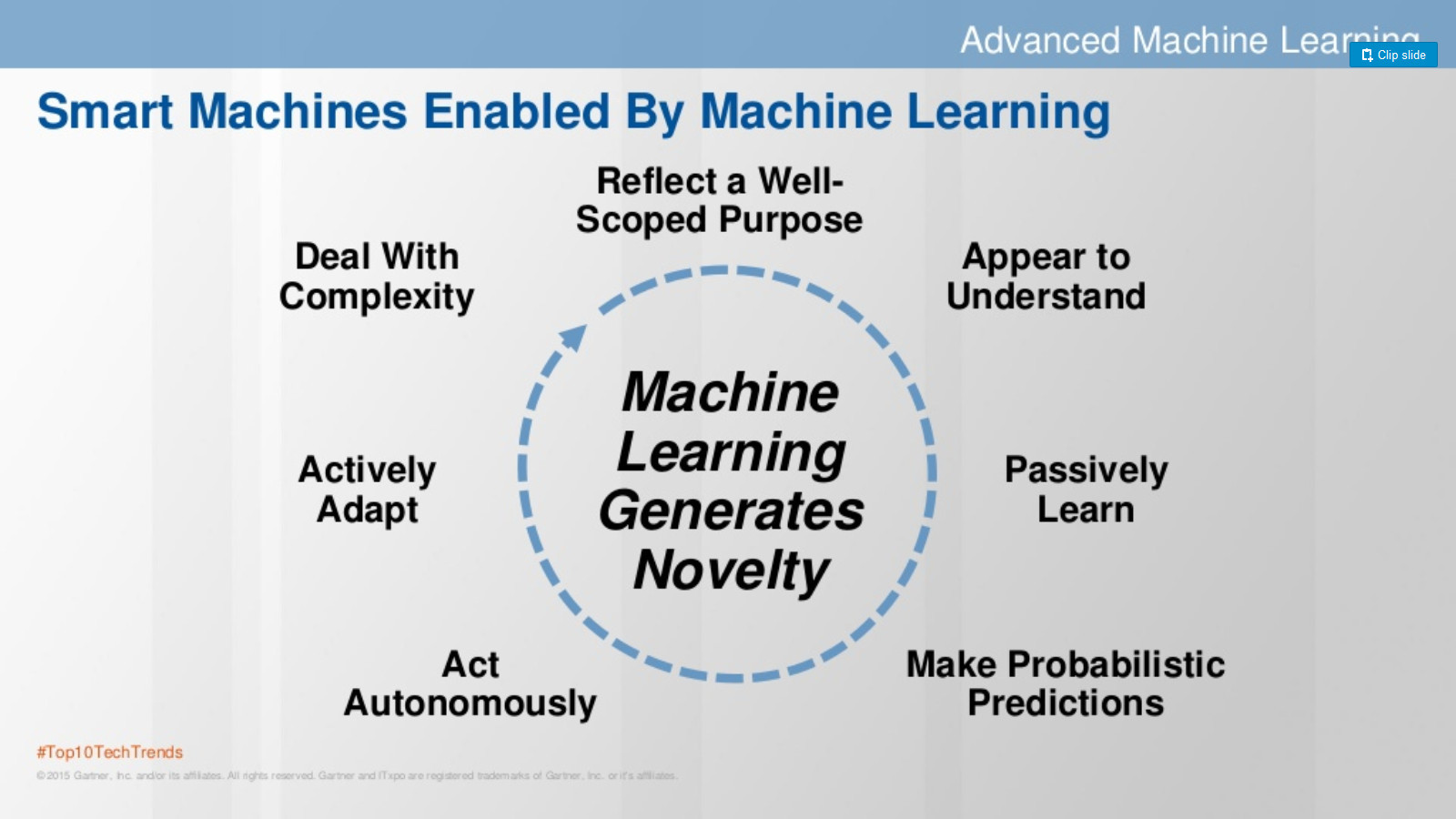

Machine learning is drawing more and more enthusiasts into its orbit. I became one of them a few years ago. I belong to one of the “adjacent” groups: an economist who works with data. Data has always been a problem in economics (and it still is), so it was easy to buy into the “big data” mantra. From big data it was an easy step, following Gartner in 2016, to machine learning.

The deeper you go into the subject, the more interesting it becomes, especially given the constant predictions about the coming era of robots, smart machines, and so on. It is hardly surprising that such machines will be built: evolution shows that humans learn to extend themselves, forming a human-machine symbiosis. Say you are walking along your fence and notice a nail sticking out. How hard it is to drive it in without a hammer, and with a hammer it takes a second. So it is no surprise that similar “helpers” are appearing for mental work.

As I studied the topic, I kept thinking that machine learning seems to explain how our mind works. Below are the lessons about people that I learned while studying machine learning. I do not claim to be right, and I apologize if all of this is obvious; I will be glad if the material is entertaining, or if someone offers counter-examples that let us go back (again) to believing in the “incomprehensible”. By the way, HSE has a course that uses machine learning to understand how the brain works.

1) An ensemble of very simple algorithms can make sense of an unimaginable heap of data with good accuracy. This reminds me of the recently deceased Marvin Minsky, who wrote a whole book, The Society of Mind, about the “mindless” agents that together make up our mind. This view lets us imagine that all the magic of our mind is nothing more than a collection of simple models trained by experience.
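A back-of-the-envelope way to see why many weak models can outperform one is the Condorcet jury theorem arithmetic, used here as an illustration rather than as a model of Minsky's agents. It assumes, hypothetically, that each of n simple classifiers is right with the same probability p > 0.5 and that their errors are independent; real ensembles such as random forests only approximate that independence. The function name and the 60% accuracy are invented for the example.

```python
from math import comb


def majority_vote_accuracy(n_models: int, p_correct: float) -> float:
    """Probability that a strict majority of n independent classifiers,
    each correct with probability p_correct, is correct (n must be odd)."""
    if n_models % 2 == 0:
        raise ValueError("use an odd number of models to avoid ties")
    needed = n_models // 2 + 1  # smallest strict majority
    return sum(
        comb(n_models, k) * p_correct**k * (1 - p_correct) ** (n_models - k)
        for k in range(needed, n_models + 1)
    )


# Hypothetical: each "mindless agent" is only 60% accurate.
for n in (1, 11, 101):
    print(n, round(majority_vote_accuracy(n, 0.6), 3))
```

With p below 0.5 the same formula works against you: adding more voters makes the majority worse, so each simple model must be better than chance.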

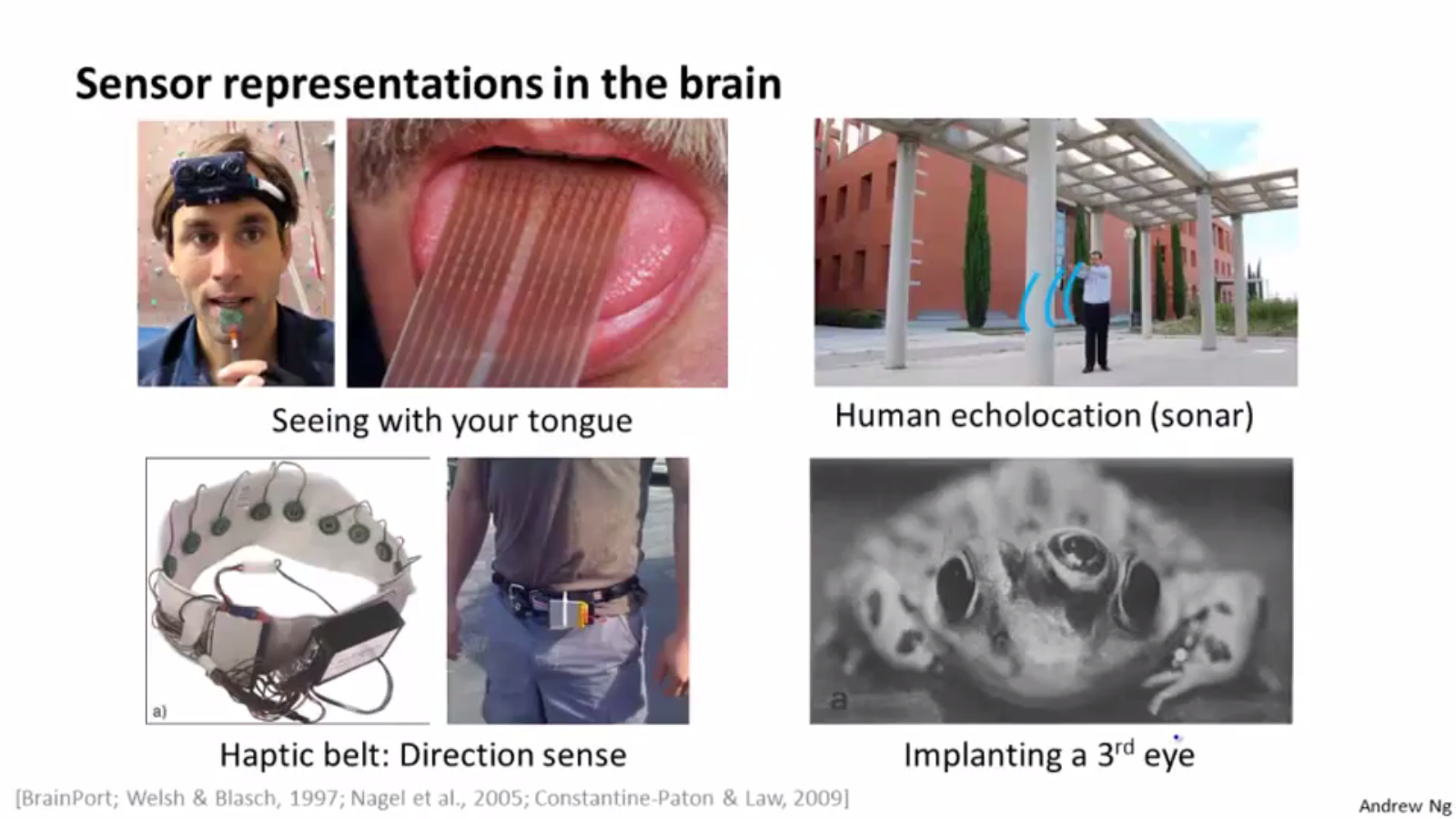

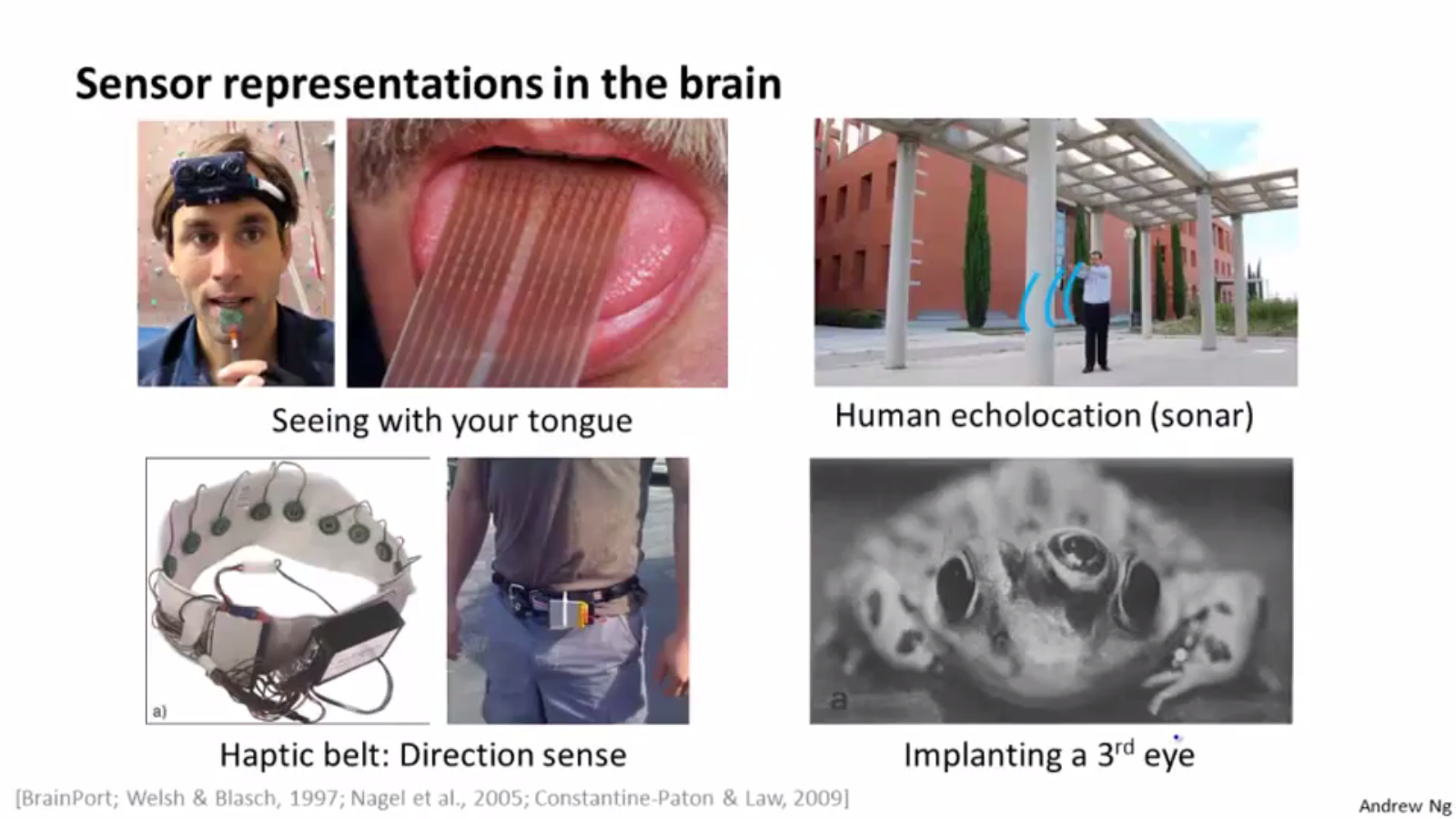

2) Speaking of learning from experience, I recall one of Andrew Ng's favorite slides:

On it, Andrew emphasizes that the brain can train another region (one that has no model yet) to take over functions that were lost, for example, to see with the tongue.

3) Brain researchers know that the foundations of the mind are laid in early childhood. Fantastic changes take place: out of raw sounds a child comes to understand speech and learns to tell parents from strangers. Children still beat computers at this, but that only suggests that our mind consists of very good models, trained on a gigantic amount of information (for example, 3 years of continuous images) by giant biological neural networks (86 billion neurons).

4) It seems that some models can be explained and changed: there is a kind of “sandbox” (“let's think about why this is so”, corrective methods such as psychoanalysis, etc.), while others go into unconscious “production” (habits and automatic actions: driving a car after many years of practice, breathing, movement, etc.). A classic illustration of how production works is the case of Clive Wearing, a man who completely lost the ability to remember. "Having watched a certain video recording multiple times on successive days, he never had any memory of ever seeing the video or knowing the contents, but he was able to anticipate certain parts of the content without remembering how he learned them." In other words, the model trained over the previous days stays in memory and makes predictions on the next viewing. The model remained in memory; the data about the past did not.
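The “model stays, data goes” idea can be illustrated with an online learner that updates summary statistics and never stores the raw observations. This is a hypothetical sketch: the scene names and the `SceneModel` class are invented, and a first-order transition count is only one of many ways to “anticipate what comes next”.

```python
from collections import Counter, defaultdict
from typing import Optional


class SceneModel:
    """Hypothetical model: counts which scene follows which.
    The viewed sequences themselves are never stored."""

    def __init__(self) -> None:
        self.transitions: defaultdict = defaultdict(Counter)

    def watch(self, scenes: list[str]) -> None:
        # Update the counts from one viewing; the list is not kept afterwards.
        for current, following in zip(scenes, scenes[1:]):
            self.transitions[current][following] += 1

    def anticipate(self, scene: str) -> Optional[str]:
        # Predict the most frequent successor seen so far, if any.
        followers = self.transitions.get(scene)
        if not followers:
            return None
        return followers.most_common(1)[0][0]


model = SceneModel()
for _ in range(3):  # the same hypothetical video, watched on successive days
    model.watch(["intro", "street", "dog barks", "ending"])

print(model.anticipate("street"))
```

The object holds only counts, not a record of any viewing, yet it can still “anticipate” the next scene.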

5) Probably, many people are too lazy to create or update their models and live on what is in “production”: ready-made models built on historical data that are no longer relevant. That is why it is so hard to change a person: of course, he will (unconsciously) trust a model trained on a gigantic amount of past information more than new, less reliable data.
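One way to formalize “trusting the old model over new data” is Bayesian updating with a Beta prior: a belief built on many past observations acts like a large number of pseudo-counts, so a handful of contradicting observations barely moves it. This framing and all the counts below are illustrative assumptions, not taken from the text.

```python
def posterior_mean(prior_successes: float, prior_failures: float,
                   new_successes: int, new_failures: int) -> float:
    """Mean of a Beta posterior after Bernoulli observations (Beta-binomial conjugacy)."""
    a = prior_successes + new_successes
    b = prior_failures + new_failures
    return a / (a + b)


# Hypothetical: both people believe "this works" 90% of the time,
# then both see 10 failures in a row.
veteran = posterior_mean(900, 100, 0, 10)   # belief backed by ~1000 past cases
newcomer = posterior_mean(9, 1, 0, 10)      # same belief backed by ~10 cases
print(f"veteran: {veteran:.3f}, newcomer: {newcomer:.3f}")
```

The veteran's estimate drops only from 0.90 to about 0.89, while the newcomer's falls to 0.45: the same new data, very different updates.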

6) Animals live only on models in “production”: they cannot analyze a model and change it without retraining (ceteris paribus). They will salivate at a light bulb, with no food in sight.
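Pavlov-style conditioning has a standard learning-rule description, the Rescorla-Wagner model (not mentioned in the original text, swapped in here as an illustration): the associative strength V of the light is nudged toward the outcome on every trial, V ← V + α(λ − V), with λ = 1 when food follows and λ = 0 when it does not. The single learning rate α and the trial counts are arbitrary choices for this sketch.

```python
def rescorla_wagner(v: float, outcomes: list[float], alpha: float = 0.3) -> list[float]:
    """Return the associative strength after each trial, starting from v."""
    history = []
    for reward in outcomes:        # reward plays the role of lambda: 1.0 if food follows the light
        v += alpha * (reward - v)  # prediction-error update
        history.append(v)
    return history


acquisition = rescorla_wagner(0.0, [1.0] * 20)             # light + food
extinction = rescorla_wagner(acquisition[-1], [0.0] * 20)  # light, no food
print(f"after training: {acquisition[-1]:.3f}, after extinction: {extinction[-1]:.3f}")
```

In this rule the response to the light changes only through new trials; there is no term for revising V by reasoning about it, which matches the “production-only” picture above.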

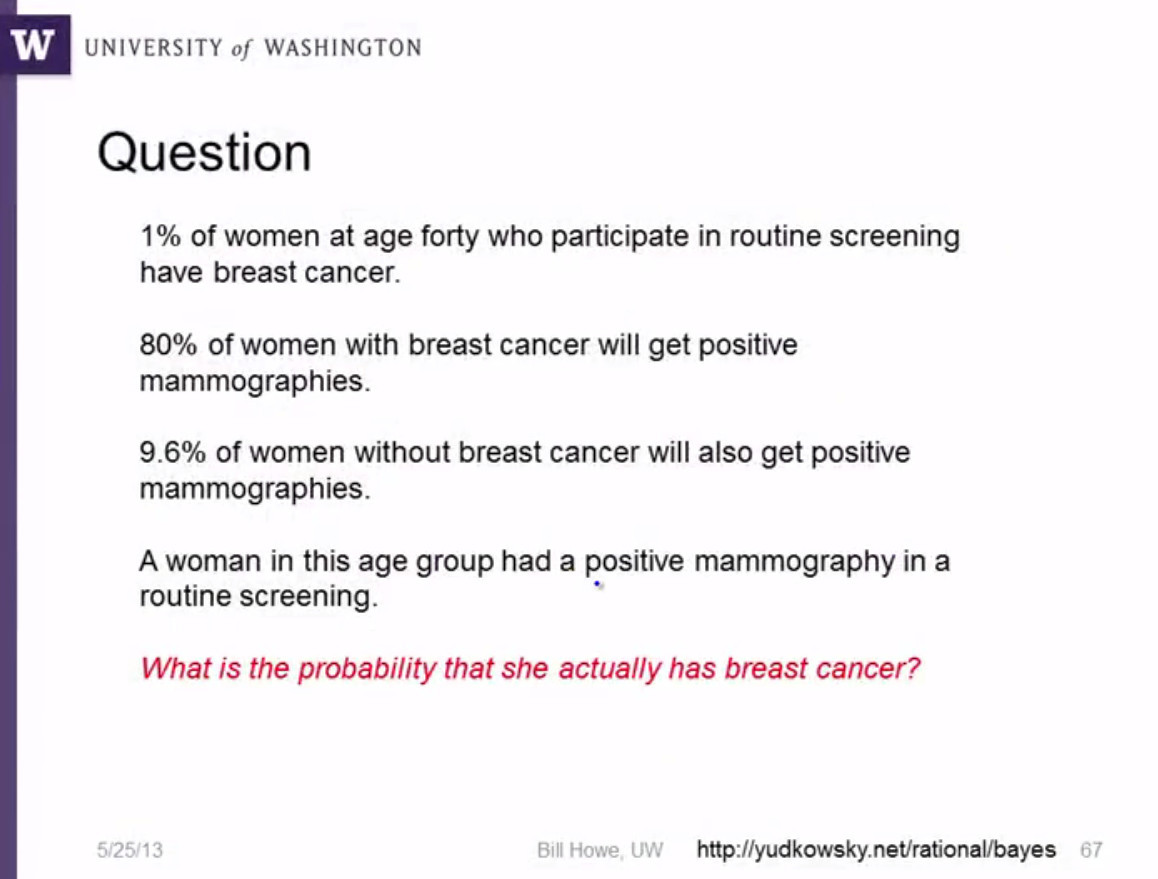

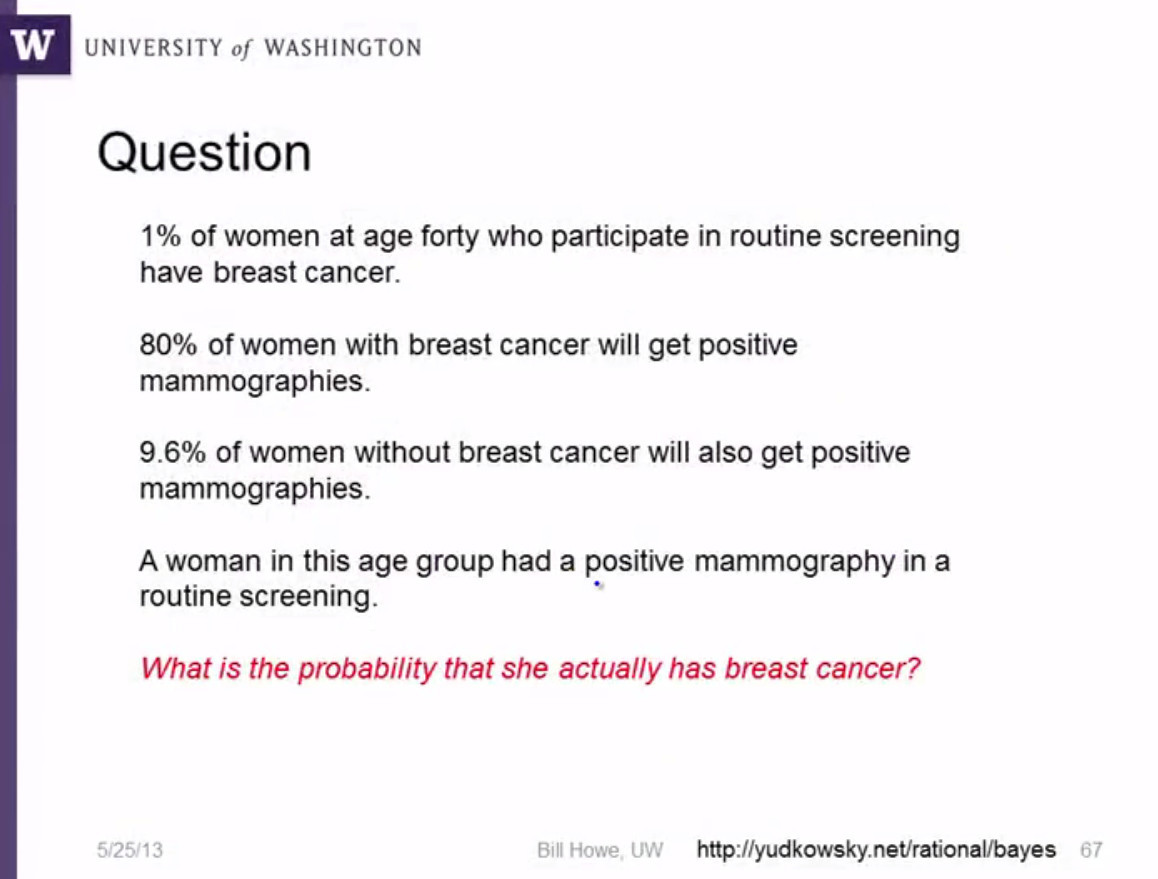

7) The brain is often inferior to the machine (analytics), especially if you do not think carefully. For example, here is a classic of the genre from Bill Howe's course Practical Predictive Analytics: Models and Methods:

Only 15% (!) of doctors answer correctly (the answer is 7.8%).
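The text of the question is not given here. Assuming it is the classic mammography problem (1% of screened women have breast cancer, a mammogram detects 80% of cancers, and 9.6% of healthy women also test positive), Bayes' theorem reproduces the quoted 7.8%:

```python
def posterior_positive(prior: float, sensitivity: float, false_positive_rate: float) -> float:
    """P(disease | positive test) via Bayes' theorem."""
    true_pos = prior * sensitivity                 # sick and test positive
    false_pos = (1 - prior) * false_positive_rate  # healthy but test positive
    return true_pos / (true_pos + false_pos)


# Assumed parameters of the classic mammography question.
p = posterior_positive(prior=0.01, sensitivity=0.80, false_positive_rate=0.096)
print(f"{p:.1%}")  # 7.8%
```

In natural frequencies: out of 1,000 women, 10 are sick and 8 of them test positive, while about 95 of the 990 healthy women also test positive, so only 8 of roughly 103 positives are real. The tempting answer of 80% confuses P(positive | cancer) with P(cancer | positive).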

8) Intuition is a complex model that cannot be explained, yet it is always based on data and models. The best example is from Malcolm Gladwell's “Blink”: “the tennis coach who knows when a player will double-fault before the racket even makes contact with the ball”. That is, his model, trained on years of observation, predicts very accurately whether the ball will land in the court, using only data from before the strike.

9) In principle, one can generalize: “A person is as smart in a given area as the algorithm he has trained in that area.” As a rule, this takes many observations. Gladwell also says that it takes about 10,000 hours of practice.

10) Why do some people fail to grow wiser even with a lot of experience? Note that a fool is often defined this way: “one who makes the same mistake in the same circumstances (with the same data).” Probably both the complexity of the models and the ability to train them differ from person to person.

11) Training a model requires both positive and negative examples. You cannot teach an algorithm or a person using examples of only one type. A person will not learn if you always scold him, or if you always say that everything is fine.
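A toy illustration, assuming a hypothetical one-dimensional “decision stump” that predicts positive when x ≥ t and picks the t with the fewest training errors (the learner, its candidate thresholds and the data are invented for this sketch). With both kinds of examples it finds a sensible boundary; with only positive examples the error-minimizing rule is simply “everything is positive”, because nothing ever contradicted it.

```python
import math


def fit_threshold(xs: list[float], ys: list[int]) -> float:
    """Hypothetical learner: threshold t minimizing training errors of the rule 'x >= t -> 1'."""
    points = sorted(set(xs))
    candidates = [-math.inf]
    candidates += [(a + b) / 2 for a, b in zip(points, points[1:])]  # midpoints
    candidates.append(math.inf)

    def errors(t: float) -> int:
        return sum((x >= t) != bool(y) for x, y in zip(xs, ys))

    return min(candidates, key=errors)  # the first candidate wins ties


def predict(t: float, x: float) -> int:
    return int(x >= t)


mixed = fit_threshold([1, 2, 3, 7, 8, 9], [0, 0, 0, 1, 1, 1])
only_praise = fit_threshold([7, 8, 9], [1, 1, 1])
print(mixed, predict(mixed, 2))              # 5.0 0: a boundary between the classes
print(only_praise, predict(only_praise, 2))  # -inf 1: "everything is OK"
```

Trained only on negative examples (“always scolding”), the same learner returns +inf and labels everything negative.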

12) Neither machine nor human learning predicts with 100% certainty. A 25% error rate means a miss in one case out of four. So there is no need to be surprised that even an ideal algorithm with such an error rate is often wrong (examples: forecasting the exchange rate, this year's GDP, Dota 2 matches, etc.), or that very smart people can be wrong quite often. By the way, averaging a large number of predictions helps: it is better to judge by the average than by a single observation.
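The arithmetic behind “one miss in four” and behind averaging can be checked directly. This sketch assumes independent, unbiased predictions with Gaussian noise, which real forecasts of exchange rates or matches rarely are; the function names, noise level and sample sizes are arbitrary choices for illustration.

```python
import random
import statistics


def chance_of_some_miss(accuracy: float, n_predictions: int) -> float:
    """Probability of at least one miss in n independent predictions."""
    return 1 - accuracy**n_predictions


def mean_abs_error(n_forecasts: int, trials: int = 2000, seed: int = 0) -> float:
    """Average |error| when judging by the mean of n noisy, unbiased forecasts (noise sd = 1)."""
    rng = random.Random(seed)
    errors = []
    for _ in range(trials):
        forecasts = [rng.gauss(0.0, 1.0) for _ in range(n_forecasts)]  # true value is 0
        errors.append(abs(statistics.fmean(forecasts)))
    return statistics.fmean(errors)


print(f"75% accurate, 10 calls, at least one miss: {chance_of_some_miss(0.75, 10):.0%}")
print(f"error of a single forecast: {mean_abs_error(1):.2f}")
print(f"error of the mean of 25:    {mean_abs_error(25):.2f}")
```

A predictor that is right 75% of the time still misses at least once in ten calls with probability of about 94%. Under these assumptions the error of the average shrinks roughly as 1/√n, so the mean of 25 forecasts lands about five times closer to the truth than a single one.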

13) Love, music, art, etc. are clearly connected with a trained model (please take this in the right sense) that converts the data coming from the object of admiration into a probability of “success”, the activation of the connections that we “like”, and so on.

14) Machine learning is built on minimizing prediction error, which matches the very definition of a smart person (a mind): one who gives the correct answer, that is, has minimal error.
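In its most basic form, “minimizing the prediction error” looks like this: fit a one-parameter model y ≈ w·x by gradient descent on the mean squared error. The data, learning rate and number of steps are made up for illustration; real models have many more parameters but follow the same idea.

```python
def fit_slope(xs: list[float], ys: list[float], lr: float = 0.01, steps: int = 200) -> float:
    """Gradient descent on MSE(w) = mean((w*x - y)^2)."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # dMSE/dw = 2 * mean(x * (w*x - y))
        grad = 2 * sum(x * (w * x - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad  # step against the gradient to reduce the error
    return w


def mse(w: float, xs: list[float], ys: list[float]) -> float:
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data generated by y = 2x.
xs, ys = [1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0]
w = fit_slope(xs, ys)
print(round(w, 4), mse(0.0, xs, ys), round(mse(w, xs, ys), 6))
```

With these numbers w converges to 2, the slope that generated the data, and the error falls from 30 to practically zero.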

15) The ability to explain why an algorithm works is secondary, for a person and for a machine alike. In this connection, I am reminded of the Buddha:

“One day Māluṅkyaputta got up from his afternoon meditation, went to the Buddha, saluted him, sat on one side and said:

'Sir, when I was all alone meditating, this thought occurred to me: There are these problems unexplained, put aside and rejected by the Blessed One. Namely, (1) is the universe eternal ... '

Excerpt from Buddha's answer:

'... Suppose, Māluṅkyaputta, a man is wounded by a poisoned arrow, and his friends and relatives bring him to a surgeon. Suppose the man should then say: “I will not let this arrow be taken out until I know who shot me; whether he is a Kṣatriya (of the warrior caste) or a Brāhmaṇa (of the priestly caste) or a Vaiśya (of the trading and agricultural caste) or a Śūdra (of the low caste); what his name and family may be; whether he is tall, short, or of medium stature; whether his complexion is black, brown, or golden: from which village, town or city he comes. I will not let this arrow be taken out until I know the kind of bow with which I was shot; the kind of bowstring used; the type of arrow; what sort of feather was used on the arrow and with what kind of material the point of the arrow was made.” Māluṅkyaputta,

... Therefore, Māluṅkyaputta, bear in mind what I have explained as explained and what I have not explained as unexplained. What are the things that I have not explained? Whether the universe is eternal or not, etc., I have not explained. Why, Māluṅkyaputta, have I not explained them? Because it is not useful, it is not fundamentally connected with the spiritual holy life, is not conducive to aversion, detachment, cessation, tranquility, deep penetration, full realization, Nirvāṇa. That is why I have not told you about them.”

Do you agree that machine learning is a good model of the brain?

- Yes: 65.3% (66 votes)
- No: 34.6% (35 votes)