Around the Beta in 260 Days: how we learned to listen to users

Everyone knows that dogfooding (literally "eating your own dog food", that is, developing a product you use yourself) is the right principle in every respect. When we at Just AI started working on the Aimylogic chatbot designer, we had a good idea of what it should be, but at first we couldn't really dogfood it: our NLU engineers usually write code directly. So we decided to follow the lean startup path: roll out a beta, collect early feedback from users, and build Aimylogic on live input. Here is how we went from beta to release together with our users.

Telling the story:

· Dima Chechetkin, co-founder and director of strategic projects, Just AI

· Gleb Oblomsky, product director, Just AI (Aimylogic)

· Andrey Chikishev, technical support engineer, Aimylogic

Part one. Try lean startup if you dare

Dima: “Of course, we could have skipped the public beta and done all the work ourselves. But, first, a team's resources are always limited, and second, it would be foolish to invent theoretical use cases for the intended audience, especially in a market as new as conversational AI. We write NLP scripts ourselves, but at a lower level, mostly in code. And yes, we knew which cases could be built with a visual editor. Still, we needed to check and find out what bots (not to mention voice skills) users would actually build.

Overall, it was important for us to see how the product was actually used. This development approach also challenged our developers: most of them had never built a mass-market public product (as opposed to, say, a closed enterprise platform). At first, the developers didn't even know that the product team talked to users! When they found out, they were surprised. Yes, it took them out of their comfort zone, but it quickly became clear that when a team builds a public product and gets feedback right away, it genuinely strives for the best result for users. Nobody is indifferent, and that shows in the product.”

There were also external reasons to release Aimylogic into the wild while it was still in beta. The launch of Yandex's Alice spurred us on and added excitement. In the US, the voice assistant market developed in parallel with its infrastructure: for example, Google launched the Assistant together with a designer for actions. Yandex launched Alice without one. We knew for sure that with the release of the first voice assistant in Russia, the market would need a clear and convenient tool for developing skills.

Gleb: “We had been nurturing the idea of a simple, accessible designer for bots that understand natural language for a long time. We knew it would be in demand, but we had doubts about the target audience: who exactly the product was for and what needs it would cover. Yandex's announcement of its Dialogs platform in March 2018 was the point after which our internal work began to take shape as Aimylogic. We built the first public MVP in 1.5 months, and by the end of May, Aimylogic was introduced to the world.”

Part two. Features as a premonition

We have been immersed in conversational AI for a long time and know what is happening in the global market, what our competitors are planning, and what their solutions lack. We came up with unique features for Aimylogic ourselves, such as visualizing the creation and editing of a script as a conversational flow tree. In general, we understood that we could build anything.

Just AI's NLU technologies gave Aimylogic its functional depth from day one, so we focused on implementing the most basic functionality and watched which "add-ons" users would ask for. It was users who helped us prioritize. For example, the first wave of feedback in Telegram led to features for renaming script blocks and zooming. Here it is:

Dima: “In general, many Aimylogic features could have appeared much later, but users pushed for them. We simply saw that they really help people work with the product. Dragging screens, for instance, is a feature that shot up from the backlog in priority. Conversely, webhooks started out as a purely technical topic. Another feature users clearly pushed for is zoom. Once users figured out how to use Aimylogic, they got hooked and started building large scenarios with branching logic, and working without zoom became inconvenient for them. This is how a truly professional tool for designing conversational interfaces emerged, one that provides the necessary level of decomposition.”

Drag and drop screens:

Gleb: “Or take built-in integrations with business systems. Honestly, we thought this feature would be needed almost immediately. But early users cared more about flexibility, scalability, and how convenient the designer is on large scenarios, so we focused our main efforts there during the beta. Now, judging by feedback, there is interest in such integrations, so we will pay more attention to them.

The idea of conditional execution for each block also seemed very necessary to us. You have a script made of blocks, and each block can be assigned conditions under which it runs. This seemed to give the tool flexibility. But Aimylogic turned out to be flexible enough without it, and we dropped this feature entirely.”
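To picture the per-block conditions Gleb describes, here is a minimal Python sketch. The `Block` class, its `condition` predicate over session variables, and `run_script` are hypothetical names chosen for illustration; this is not how Aimylogic works internally, and the feature itself was dropped.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# Hypothetical session state: variables collected during the dialogue.
Session = Dict[str, object]


@dataclass
class Block:
    """A hypothetical script block: a reply plus an optional guard condition."""
    name: str
    reply: str
    # If set, the block runs only when this predicate returns True for the session.
    condition: Optional[Callable[[Session], bool]] = None


def run_script(blocks: List[Block], session: Session) -> List[str]:
    """Walk the blocks in order and collect replies from blocks whose guard passes."""
    replies = []
    for block in blocks:
        if block.condition is None or block.condition(session):
            replies.append(block.reply)
    return replies


script = [
    Block("greeting", "Hello!"),
    Block("vip_offer", "Here is your VIP discount.",
          condition=lambda s: s.get("is_vip") is True),
    Block("goodbye", "See you!"),
]

print(run_script(script, {"is_vip": False}))  # ['Hello!', 'See you!']
print(run_script(script, {"is_vip": True}))   # ['Hello!', 'Here is your VIP discount.', 'See you!']
```

In a sketch like this, every block carries its own guard, which adds one more thing to configure on each node; as Gleb says, the team found the flow tree flexible enough without it.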

Users also influence the order in which we connect channels to Aimylogic, that is, where they would like to see their chatbots: Alice, Google Assistant, Telegram, VKontakte, website chat widgets, and even Alexa. Viber, for example, turned out to be in low demand and went to the backlog, while Instagram and WhatsApp top the list of user requests, and they will definitely appear in Aimylogic.

Part three. The magic of UX

To make a product more convenient, users should listen to their feelings, and we should listen to users. True, convenience is not always put into words: people complain about a button being "in the wrong place" less often than about a specific bug. The user thinks: what if it's just a matter of taste, what if it only seems that way to me? So we studied user behavior with UX tools and techniques and paid attention to cases of mass confusion.

Gleb: “For us, Aimylogic actually started with UX: we looked at other chatbot designers and realized there were practically no convenient tools for visualizing a dialogue combined with business logic. Either it is done as in Dialogflow, where you have to keep everything in your head and you see lists of the bot's responses, which is not visual at all. Or, at the other extreme, a chatbot editor has a visual part but is overloaded with NLU features: you add what seems to be a simple block and end up dealing with intents and a bunch of incomprehensible controls. In such tools you simply get lost in what you are doing.

Even before we came up with the name "Aimylogic", we went through many UX prototypes, testing various ideas. In the end, we found a balance between simple, understandable UX and sufficient flexibility and adaptability. Later on, we improved a lot in Aimylogic based on user experience.”

So we watched Aimylogic users closely, including through Webvisor session recordings. Sometimes we could see for ourselves that people really did make unnecessary or pointless movements that got in their way and made working in the product harder.

For example, in the first release, Aimylogic's help, a much-needed piece for a new product, was placed on the same canvas as the script editor. We noticed that users' scripts occupy 70 to 100 screens on average, so the help ended up hidden and users had to scroll to find it. So we moved it to the top bar. Perhaps the first thing you start analyzing and improving based on user experience is onboarding!

Help in the top bar:

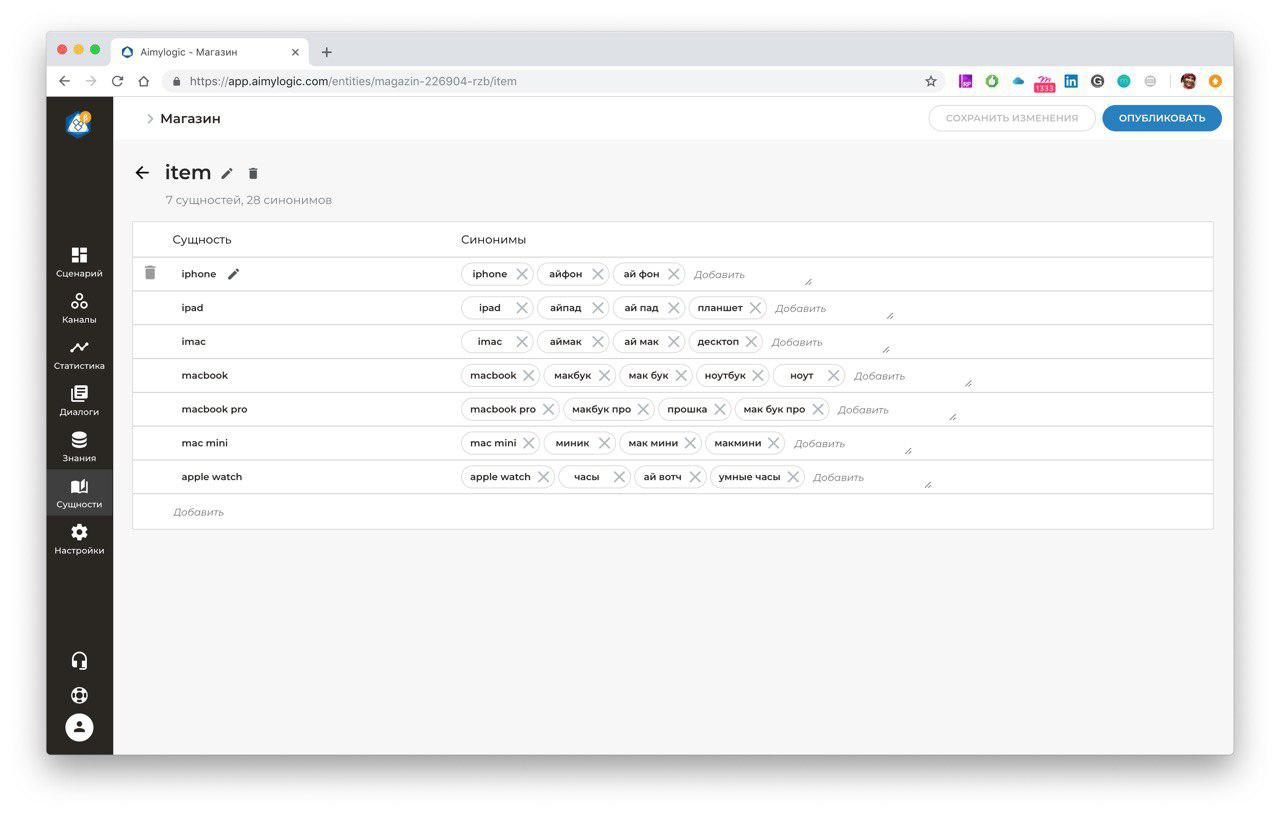

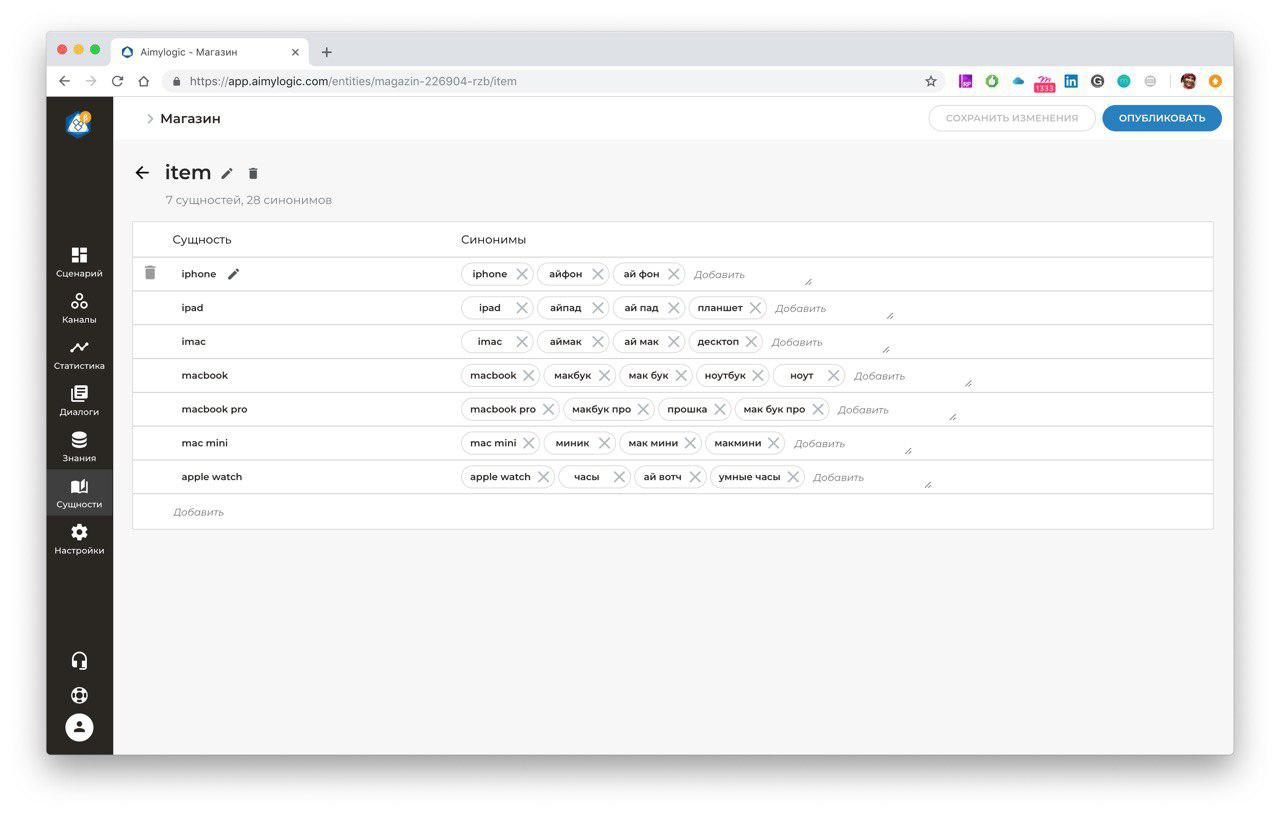

Dima: “When a creepy web of mouse movements shows up in Webvisor, something has gone wrong. We found one such case in switching from the bot design screen to the screen where you add content for the bot. It turned out that users added entities, saved them, and then went back to the editor to test everything in the widget. Then our lead UX designer Katya Yulina suggested showing the widget on all screens so that the user always has it at hand. Now you can add or delete an entity without any extra moves, save, and test it right away. Done, enjoy.”

How it was:

How it became:

How it became:

Part four. Users can surprise

Overall, we had a clear picture of how users would apply Aimylogic and what bots they would create: customer consulting, ordering and delivering goods, entertainment, and the like. But the specific ways people used the designer turned out to be far more interesting! There were plenty of surprises (and quite inspiring ones!).

Gleb: “There were a lot of insights, especially early on. One of the most recent that stuck with me: at one university, students build voice skills in Aimylogic as term papers!”

Dima: “One user literally bombarded us with bug reports, and from the wording it was clear that a pro was writing. I wondered what he was doing and what he was trying to build with Aimylogic. It turned out the guy teaches people to sell crypto. I opened his script (this was before convenient features like dragging blocks, not to mention the compact view) and saw... a script that didn't fit on a 4K monitor! So many screens you couldn't even count them; the computer's fans roared as it tried to render it. That's how we learned that, on the beta version of Aimylogic, this user had built an entire online course as a script that guides the client through every stage of training, shows videos, and asks for answers. It was a real (and pleasant) discovery for me that a person trusted a brand-new product and put so much time into working out the script without being sure it wouldn't all crash (it was still a beta). But he went ahead and did it. We later used this script as a testbed for Aimylogic's performance. The bot now runs successfully in Telegram.”

Andrey: “For me, it was a pleasant surprise that users without a technical background dove into the product. At first, people came to us saying, in effect, ‘we can't do anything ourselves, make us a bot.’ We suggested they try it themselves, for example using a template. And in the end everything worked out perfectly for them: having seen that the product isn't that complicated, they try things out, save money as a result, stop being afraid of learning technical things, and develop their skills.

I was also surprised by the variety of scenarios: our users think really creatively. Lots of interesting ideas have been implemented in Aimylogic! Once I came across a curious social business game: every day a person comes to the bot, completes motivating tasks, and earns points for them. Or, for example, there is a bot that helps you choose toothpaste, and it works in two languages. Another cool bot with an impressively large scenario lets you create an engaging story or fairy tale in 10 steps, each time with a different ending. Users have even asked how to create a dating bot, so perhaps such a scenario will appear soon.”

Other chatbots on Aimylogic include virtual assistants that sign up visitors for a barbershop or fitness center, consultant chatbots for a marketing agency and suburban real estate services, a sports betting bot, a bot that records blood pressure readings, HR assistants, and a voice skill for choosing shawarma fillings. And of course, text quests and narrative games for VKontakte, Telegram, and Alice.

Part five. How the team came to love dogfooding

Watching our users, we started getting creative too. This part is about how ideas for chatbots and skills are born.

Dima: “‘Yoga for the Eyes’, for example, is simply a stunning skill, something you're not ashamed to show. At a Google hackathon on the eve of the Russian-language Google Assistant launch, we had to come up with a scenario that genuinely belongs in a voice channel, one where it is clear why you can't look at the screen during the dialogue. I do eye exercises every day. And that's how ‘Yoga for the Eyes’ was born.”

Andrey: “My landlord asks for meter readings every month. I realized I needed a bot to calculate utilities, so I built such a script in Aimylogic. The bot calculates the payment according to the tariffs and sends the data straight to the apartment owner. I also created a skill for signing up for volleyball sessions, though the people who come to play aren't ready to use Alice yet.”
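The utility bot Andrey describes boils down to simple arithmetic: consumption (current reading minus previous reading) multiplied by the tariff, summed over all meters. The Python sketch below assumes hypothetical meter names and tariff values; it is not Andrey's actual Aimylogic script, and sending the result to the landlord is left out.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Dict

# Hypothetical tariffs: price per unit consumed (per kWh or per cubic meter).
TARIFFS: Dict[str, Decimal] = {
    "electricity": Decimal("5.47"),
    "cold_water": Decimal("42.30"),
    "hot_water": Decimal("205.15"),
}


def calculate_bill(previous: Dict[str, Decimal], current: Dict[str, Decimal],
                   tariffs: Dict[str, Decimal]) -> Dict[str, Decimal]:
    """Return the charge per meter plus a 'total' key, rounded to 2 decimal places."""
    charges: Dict[str, Decimal] = {}
    for meter, rate in tariffs.items():
        consumed = current[meter] - previous[meter]
        if consumed < 0:
            raise ValueError(f"{meter}: current reading is lower than the previous one")
        # Decimal avoids binary floating-point rounding errors in money amounts.
        charges[meter] = (consumed * rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    # The right-hand side is evaluated before the 'total' key is added.
    charges["total"] = sum(charges.values(), Decimal("0"))
    return charges


previous = {"electricity": Decimal("1200"), "cold_water": Decimal("300.5"),
            "hot_water": Decimal("150.2")}
current = {"electricity": Decimal("1350"), "cold_water": Decimal("305.5"),
           "hot_water": Decimal("152.7")}

bill = calculate_bill(previous, current, TARIFFS)
print(bill["total"])  # 1544.88
```

Using `Decimal` with explicit rounding keeps the per-meter amounts exact to the kopeck or cent, which matters when the numbers are sent to someone else for payment.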

Gleb: “We're still happy with our current feedback channels. But I can't shake the idea of making a bot that at least learns the idea from the user, clarifies the underlying need, and puts it all on our product ideas board! And what if we then teach it to evaluate complexity and product value? :)”

Dima: “What I really need is a bot that quickly finds the necessary information in legal documents. It turns out that defending your rights isn't hard: you don't need a legal education at all, but you have to dig endlessly through heaps of documentation, resolutions, and amendments to write a legally sound justification that points out the violations. I once spent the time and got a method of calculating some utility bills, invented by our management company, thrown out. But to fight regularly, you need to search, read, and spend a lot of time and effort. If someone made a bot where you could describe the problem situation and get back a selection of documents that could help resolve it, I would definitely use it.”

Andrey: “It would be cool if Alice or another virtual assistant could start a dialogue with you, motivate you to do something and, importantly, handle objections. For example, in the morning the assistant calls you to go for a run, you ignore it, but it persistently makes good arguments and reminds you of what you promised. But for now, unfortunately, Alice can't ‘wake up’ on her own, without a command.”

Part six. Hurray, release!

So, this week Aimylogic left beta and launched into open space. What does that mean? For the product: mature functionality and new adventures (for example, entering the international market). For users: cool new features like the ability to hand the dialogue over to a human operator right in the chat with the bot.

Like this:

And of course, it means a range of pricing plans for different ways of working in Aimylogic. Users can now decide for themselves which subscription is relevant and cost-effective for them: an extended plan for business or a special one for developers. On the developer plan, for example, absolutely all product features are available, but the bot's maximum audience is severely limited. You can create a bot, show it to the customer, test it together, and transfer it to the customer's account, where the limit is no longer 100 unique users but 50,000. You can also use Aimylogic completely free, with a limited number of connected channels and unique visitors.
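One way to picture an audience cap like the one on the developer plan is a set of unique user IDs per bot that stops admitting new users once it is full. The Python sketch below is an illustration only: the `AudienceLimit` class and its methods are hypothetical, only the 100 and 50,000 figures come from the text, and Aimylogic's real accounting may work differently.

```python
from typing import Set


class AudienceLimit:
    """Hypothetical per-plan cap on the number of unique users a bot may serve."""

    def __init__(self, max_unique_users: int) -> None:
        self.max_unique_users = max_unique_users
        self.seen: Set[str] = set()

    def admit(self, user_id: str) -> bool:
        """Return True if this user may talk to the bot under the current plan."""
        if user_id in self.seen:
            return True  # returning users are never counted twice
        if len(self.seen) >= self.max_unique_users:
            return False  # the plan's unique-user cap has been reached
        self.seen.add(user_id)
        return True

    def upgrade(self, new_limit: int) -> None:
        """Raise the cap, e.g. when the bot moves to an account with a larger plan."""
        self.max_unique_users = new_limit


dev_plan = AudienceLimit(max_unique_users=100)
for i in range(100):
    dev_plan.admit(f"user{i}")
print(dev_plan.admit("user100"))  # False: the 101st unique user is over the cap
dev_plan.upgrade(50_000)          # bot transferred to an account with a 50,000 cap
print(dev_plan.admit("user100"))  # True
```

Counting distinct IDs rather than messages means a small group of testers can talk to a bot on the developer plan as much as they like, which fits the build, demo, and hand-over workflow described above.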

Aimylogic in facts and figures

- The most popular scenarios created in Aimylogic are “Yoga for the Eyes”, with 80,600 unique users, and the game “Yes, my lord!”, which 51,500 people have played!

- As of early February, 266,000 people had used bots and skills created in Aimylogic.

- 2,800 bots and voice skills run on Aimylogic; 400 of them have steady traffic.

- Webhooks: the tool everyone loves, users and our technical support alike. In the Aimylogic user chat, the word “webhook” came up 150 times.

- We asked users how long, on average, it takes them to create a bot: answers ranged from 30 minutes to 14 days. But the best answer was this one: “Not counting the documentation, I made it in 5 minutes, and another 10 minutes went into tying the bot's events to events in the game engine. What's more, I managed to explain how your tool works to a 4-year-old child. And he practically built a simple bot himself.”

- ∞: the number of cups of coffee our developers drank while Aimylogic was in beta. And that's just coffee!