Entity Framework: improving performance when saving data to the database

When adding / changing a large number of records (10³ and above), the performance of the Entity Framework leaves much to be desired. The reason for this is both the architectural features of the framework itself and non-optimal generated SQL. Looking ahead - saving data bypassing the context reduces execution time by orders of magnitude.

Contents of the article:

1. Insert / Update using standard Entity Framework tools

2. Finding a solution to the problem

3. Integration of Entity Framework and SqlBulkCopy

4. Advanced insertion using MERGE

5. Performance comparison

6. Conclusions

Let's start with Insert. The standard way to add new records to the database is to add it to the context and save it:

Each call to the Add method results in an expensive execution call to the internal DetectChanges algorithm . This algorithm scans all entities in the context and compares the current value of each property with the original value stored in the context, updates the relationships between entities, etc. A known way to improve performance, relevant until the release of EF 6, is to disable DetectChanges while adding entities to the context:

It is also recommended not to keep tens of thousands of objects in the context and save data in blocks, while maintaining the context and creating a new every N objects, for example, like here . Finally, in EF 6, an optimized AddRange method has been introduced that raises performance to the Add + AutoDetectChangesEnabled bundle level :

Unfortunately, the above approaches do not solve the main problem, namely: when saving data to the database, a separate INSERT request is generated for each new record!

With Update, the situation is similar. The following code:

will lead to the execution of a separate SQL query for each changed object:

In the simplest cases, EntityFramework.Extended may help :

This code will bypass the context and generate 1 SQL query. For more information on EF rate, and work with this library in an article authored by TP7 . Obviously, the solution is not universal and is suitable only for writing to all target lines of the same value.

Feeling a persistent aversion to writing “bikes,” I first looked for best-practices for multiple insertion using EF. It would seem that a typical task - but a suitable solution "out of the box" could not be found. At the same time, SQL Server offers a number of techniques for quickly inserting data, such as the bcp utility and the SqlBulkCopy class . The latter will be discussed below.

System.Data.SqlClient.SqlBulkCopy is a class from ADO.NET that is designed to write a large amount of data to SQL Server tables. As a data source, it can take DataRow [] , DataTable , or an IDataReader implementation .

What can:

Minuses:

You can read more about the class in an article by JeanLouis , but here we will consider our urgent problem - namely, the lack of integration of SqlBulkCopy and EF. There is no established approach to solving this problem, but there are several projects of varying degrees of suitability, such as:

EntityFramework.BulkInsert

In fact, it turned out to be inoperative. While studying Issues, I came across a discussion with ... the notorious Julie Lerman, describing a problem similar to mine and which remained unanswered by the authors of the project.

EntityFramework.Utilities

Live project, active community. No Database First support, but promise to add.

Entity Framework Extensions

$ 300.

Let's try to do everything ourselves. In the simplest case, inserting data from a collection of objects using SqlBulkCopy looks like this:

The task in itself to implement IDataReader based on a collection of objects is trivial, so I will limit myself to a link and go on to describe ways to handle errors during insertion using SqlBulkCopy . By default, data is inserted in its own transaction. When an exception occurs, a SqlException is thrown and a rollback occurs, i.e. data in the database will not be written at all. And the “native” error messages of this class cannot be called uninformative. For example, what might contain SqlException.AdditionalInformation :

or:

Unfortunately, SqlBulkCopy often does not provide information to uniquely identify the row / entity that caused the error. Another unpleasant feature is that when you try to insert a duplicate record on the primary key, SqlBulkCopy will throw an exception and exit, not providing the opportunity to handle the situation and continue execution.

Mapping

In the case of correctly generated entities and databases, checks for conformity of types or the length of the field in the table become irrelevant, as here . It is more useful to deal with column mapping performed through the SqlBulkCopy.ColumnMappings property :

For EF In 99% of cases, you will need to set ColumnMappings explicitly (due to navigation properties and any additional properties). Navigation properties can be screened out with Reflection:

Such a code is suitable for a POCO class without additional properties, otherwise you will have to switch to "manual control". Obtaining a table schema is also quite simple:

This makes it possible to manually map between the source class and the target table.

Using the SqlBulkCopy.BatchSize property and the SqlBulkCopyOptions

SqlBulkCopy.BatchSize class :

SqlBulkCopyOptions - enumeration:

We can optionally enable verification of triggers and restrictions on the database side (disabled by default). When specifying BatchSize and UseInternalTransaction , the data will be sent to the server in blocks in separate transactions. Thus, all successful blocks until the first erroneous will be saved in the database.

SqlBulkCopy can only add records to the table, and does not provide any functionality for changing existing records. Nevertheless, we can speed up the execution of Update operations! How? We paste the data into a temporary empty table, and then synchronize the tables using the MERGE statement , which debuted in SQL Server 2008:

Using MERGE, it is easy and simple to implement various logic for processing duplicates: update data in the target table, or ignore or even delete matching records. Thus, we can save data from the collection of EF objects in the database using the following algorithm:

Steps 1 and 3 will be discussed in more detail.

Temporary table

It is necessary to create a table in the database that completely repeats the table layout for inserting data. Creating copies manually is the worst option possible, since all further work on comparing and synchronizing table schemas will also fall on your shoulders. It is safer to copy the circuit programmatically and immediately before insertion. For example, using SQL Server Management Objects (SMO) :

It is worth paying attention to the ScriptingOptions class , which contains several dozen parameters for fine-tuning the generated SQL. Expand the resulting StringCollection into a String . Unfortunately, I did not find a better solution than replacing the name of the source table with the name of the temporary a la String.Replace ("Order", "Order_TEMP") in the script . I would be grateful for a hint of a beautiful decision to create a copy of the table within the same database. Let's execute the finished script in any convenient way. Copy of table created!

Copying data from a temporary table to a target It

remains to execute the MERGE statement on the SQL Server side, which compares the contents of the temporary and target tables and performs an update or insert (if necessary). For example, for the [Order] table, the code may look like this:

This SQL query compares records from the temporary table [Order_TEMP] with records from the target table [Order], and performs Update if a record with the same value in the Id field is found, or Insert if no such record is found. Let's execute the code in any convenient way, and you're done! Do not forget to clean / delete the temporary table to taste.

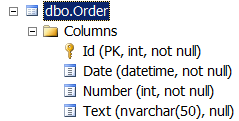

Runtime environment: Visual Studio 2013, Entity Framework 6.1.1 (Database First), SQL Server 2012. For testing, the [Order] table was used (the table layout is given above). Measurements were made of the runtime for the approaches to saving data in the database considered in the article, the results are presented in the following (time is indicated in seconds):

Wow! If you use the Add method to add to the context and SaveChanges to save, saving 100,000 records to the database will take almost 2 hours! While SqlBulkCopy spends less than a second on the same task!

Again SqlBulkCopy out of competition. The source code for the test application is available on GitHub .

In the case of working with a context containing a large number of objects (10³ and above), abandoning the Entity Framework infrastructure (adding to the context + saving the context) and switching to SqlBulkCopy for writing to the database can provide a performance increase of tens or even hundreds of times. However, in my opinion, using the EF + SqlBulkCopy bundle everywhere is a clear sign that something is wrong with your application architecture. The approach considered in the article should be considered as a simple means to accelerate performance in bottlenecks of an already written system, if it is difficult to change the architecture / technology for any reason. Any developer using the Entity Framework should know the strengths and weaknesses of this tool. Good luck!

Contents of the article:

1. Insert / Update using standard Entity Framework tools

2. Finding a solution to the problem

3. Integration of Entity Framework and SqlBulkCopy

4. Advanced insertion using MERGE

5. Performance comparison

6. Conclusions

1. Insert / Update with standard Entity Framework tools

Let's start with Insert. The standard way to add new records to the database is to add it to the context and save it:

context.Orders.Add(order);

context.SaveChanges();

Each call to the Add method results in an expensive execution call to the internal DetectChanges algorithm . This algorithm scans all entities in the context and compares the current value of each property with the original value stored in the context, updates the relationships between entities, etc. A known way to improve performance, relevant until the release of EF 6, is to disable DetectChanges while adding entities to the context:

context.Configuration.AutoDetectChangesEnabled = false;

orders.ForEach(order => context.Orders.Add(order));

context.Configuration.AutoDetectChangesEnabled = true;

context.SaveChanges();

It is also recommended not to keep tens of thousands of objects in the context and save data in blocks, while maintaining the context and creating a new every N objects, for example, like here . Finally, in EF 6, an optimized AddRange method has been introduced that raises performance to the Add + AutoDetectChangesEnabled bundle level :

context.Orders.AddRange(orders);

context.SaveChanges();

Unfortunately, the above approaches do not solve the main problem, namely: when saving data to the database, a separate INSERT request is generated for each new record!

SQL

INSERT [dbo].[Order]([Date], [Number], [Text])

VALUES (@0, @1, NULL)

With Update, the situation is similar. The following code:

var orders = context.Orders.ToList();

//.. записали новые данные

context.SaveChanges();

will lead to the execution of a separate SQL query for each changed object:

SQL

UPDATE [dbo].[Order]

SET [Text] = @0

WHERE ([Id] = @1)

In the simplest cases, EntityFramework.Extended may help :

//update all tasks with status of 1 to status of 2

context.Tasks.Update(

t => t.StatusId == 1,

t2 => new Task { StatusId = 2 });

This code will bypass the context and generate 1 SQL query. For more information on EF rate, and work with this library in an article authored by TP7 . Obviously, the solution is not universal and is suitable only for writing to all target lines of the same value.

2. Search for a solution to the problem

Feeling a persistent aversion to writing “bikes,” I first looked for best-practices for multiple insertion using EF. It would seem that a typical task - but a suitable solution "out of the box" could not be found. At the same time, SQL Server offers a number of techniques for quickly inserting data, such as the bcp utility and the SqlBulkCopy class . The latter will be discussed below.

System.Data.SqlClient.SqlBulkCopy is a class from ADO.NET that is designed to write a large amount of data to SQL Server tables. As a data source, it can take DataRow [] , DataTable , or an IDataReader implementation .

What can:

- Send data to the server block with transaction support;

- Perform mapping of columns from the DataTable to the database table;

- Ignore constraints, foreign keys on insertion (optional).

Minuses:

- Atomic insertion (optional);

- Inability to continue work after an exception has occurred;

- Weak error handling capabilities.

You can read more about the class in an article by JeanLouis , but here we will consider our urgent problem - namely, the lack of integration of SqlBulkCopy and EF. There is no established approach to solving this problem, but there are several projects of varying degrees of suitability, such as:

EntityFramework.BulkInsert

In fact, it turned out to be inoperative. While studying Issues, I came across a discussion with ... the notorious Julie Lerman, describing a problem similar to mine and which remained unanswered by the authors of the project.

EntityFramework.Utilities

Live project, active community. No Database First support, but promise to add.

Entity Framework Extensions

$ 300.

3. Integration of Entity Framework and SqlBulkCopy

Let's try to do everything ourselves. In the simplest case, inserting data from a collection of objects using SqlBulkCopy looks like this:

//entities - коллекция сущностей EntityFramework

using (IDataReader reader = entities.GetDataReader())

using (SqlConnection connection = new SqlConnection(connectionString))

using (SqlBulkCopy bcp = new SqlBulkCopy(connection))

{

connection.Open();

bcp.DestinationTableName = "[Order]";

bcp.ColumnMappings.Add("Date", "Date");

bcp.ColumnMappings.Add("Number", "Number");

bcp.ColumnMappings.Add("Text", "Text");

bcp.WriteToServer(reader);

}

The task in itself to implement IDataReader based on a collection of objects is trivial, so I will limit myself to a link and go on to describe ways to handle errors during insertion using SqlBulkCopy . By default, data is inserted in its own transaction. When an exception occurs, a SqlException is thrown and a rollback occurs, i.e. data in the database will not be written at all. And the “native” error messages of this class cannot be called uninformative. For example, what might contain SqlException.AdditionalInformation :

The given value of type String from the data source cannot be converted to type nvarchar of the specified target column.

or:

SqlDateTime overflow. Must be between 1/1/1753 12:00:00 AM and 12/31/9999 11:59:59 PM.

Unfortunately, SqlBulkCopy often does not provide information to uniquely identify the row / entity that caused the error. Another unpleasant feature is that when you try to insert a duplicate record on the primary key, SqlBulkCopy will throw an exception and exit, not providing the opportunity to handle the situation and continue execution.

Mapping

In the case of correctly generated entities and databases, checks for conformity of types or the length of the field in the table become irrelevant, as here . It is more useful to deal with column mapping performed through the SqlBulkCopy.ColumnMappings property :

If the data source and the destination table have the same number of columns and the starting position of each source column in the data source corresponds to the starting position of the corresponding destination column, the ColumnMappings collection is not required. However, if the number of columns or their order is different, you must use ColumnMappings to ensure that the data between the columns is correctly copied.

For EF In 99% of cases, you will need to set ColumnMappings explicitly (due to navigation properties and any additional properties). Navigation properties can be screened out with Reflection:

Get property names for mapping

var columns = typeof(Order).GetProperties()

.Where(property =>

property.PropertyType.IsValueType

|| property.PropertyType.Name.ToLower() == "string")

.Select(property => property.Name)

.ToList();

Such a code is suitable for a POCO class without additional properties, otherwise you will have to switch to "manual control". Obtaining a table schema is also quite simple:

We read the table schema

private static List GetColumns(SqlConnection connection)

{

string[] restrictions = { null, null, "", null };

var columns = connection.GetSchema("Columns", restrictions)

.AsEnumerable()

.Select(s => s.Field("Column_Name"))

.ToList();

return columns;

}

This makes it possible to manually map between the source class and the target table.

Using the SqlBulkCopy.BatchSize property and the SqlBulkCopyOptions

SqlBulkCopy.BatchSize class :

| Batchsize | The number of lines in each package. At the end of each packet, the number of lines contained in it is sent to the server. |

SqlBulkCopyOptions - enumeration:

| Member Name | Description |

|---|---|

| Checkconstraints | Check restrictions when inserting data. By default, restrictions are not checked. |

| Default | Use default values for all parameters. |

| Firetriggers | When this setting is specified, the server invokes insert triggers for rows inserted into the database. |

| KeepIdentity | Save source identification values. When this setting is not specified, identification values are assigned by the target table. |

| Keepnulls | Store NULL values in the target table regardless of the default value settings. When this setting is not specified, null values, where possible, are replaced with default values. |

| Tablelock | Get a bulk update lock for the duration of the bulk copy operation. When this setting is not specified, row locking is used. |

| UseInternalTransaction | When this setting is specified, each bulk copy operation is performed in a transaction. If you set this setting and provide the constructor with an SqlTransaction object, an ArgumentException will be thrown. |

We can optionally enable verification of triggers and restrictions on the database side (disabled by default). When specifying BatchSize and UseInternalTransaction , the data will be sent to the server in blocks in separate transactions. Thus, all successful blocks until the first erroneous will be saved in the database.

4. Advanced insert using MERGE

SqlBulkCopy can only add records to the table, and does not provide any functionality for changing existing records. Nevertheless, we can speed up the execution of Update operations! How? We paste the data into a temporary empty table, and then synchronize the tables using the MERGE statement , which debuted in SQL Server 2008:

MERGE (Transact-SQL)

Performs insert, update, or delete operations on the target table based on the results of the join to the source table. For example, you can synchronize two tables by inserting, updating, or deleting rows in one table based on differences found in another table.

Using MERGE, it is easy and simple to implement various logic for processing duplicates: update data in the target table, or ignore or even delete matching records. Thus, we can save data from the collection of EF objects in the database using the following algorithm:

- create / clear a temporary table that is completely identical to the target table;

- Insert data using SqlBulkCopy into a temporary table;

- using MERGE, add entries from the temporary table to the target.

Steps 1 and 3 will be discussed in more detail.

Temporary table

It is necessary to create a table in the database that completely repeats the table layout for inserting data. Creating copies manually is the worst option possible, since all further work on comparing and synchronizing table schemas will also fall on your shoulders. It is safer to copy the circuit programmatically and immediately before insertion. For example, using SQL Server Management Objects (SMO) :

Server server = new Server();

//SQL auth

server.ConnectionContext.LoginSecure = false;

server.ConnectionContext.Login = "login";

server.ConnectionContext.Password = "password";

server.ConnectionContext.ServerInstance = "server";

Database database = server.Databases["database name"];

Table table = database.Tables["Order"];

ScriptingOptions options = new ScriptingOptions();

options.Default = true;

options.DriAll = true;

StringCollection script = table.Script(options);

It is worth paying attention to the ScriptingOptions class , which contains several dozen parameters for fine-tuning the generated SQL. Expand the resulting StringCollection into a String . Unfortunately, I did not find a better solution than replacing the name of the source table with the name of the temporary a la String.Replace ("Order", "Order_TEMP") in the script . I would be grateful for a hint of a beautiful decision to create a copy of the table within the same database. Let's execute the finished script in any convenient way. Copy of table created!

Nuances of using SMO in .NET 4+

It should be noted that a call to Database.ExecuteNonQuery in .NET 4+ throws an exception of the form:

due to the fact that the wonderful SMO library is only for .NET 2 Runtime. Fortunately, there is a workaround :

Another option is to use Database.ExecuteWithResults .

Mixed mode assembly is built against version 'v2.0.50727' of the runtime and cannot be loaded in the 4.0 runtime without additional configuration information.

due to the fact that the wonderful SMO library is only for .NET 2 Runtime. Fortunately, there is a workaround :

...

Another option is to use Database.ExecuteWithResults .

Copying data from a temporary table to a target It

remains to execute the MERGE statement on the SQL Server side, which compares the contents of the temporary and target tables and performs an update or insert (if necessary). For example, for the [Order] table, the code may look like this:

MERGE INTO [Order] AS [Target]

USING [Order_TEMP] AS [Source]

ON Target.Id = Source.Id

WHEN MATCHED THEN

UPDATE SET

Target.Date = Source.Date,

Target.Number = Source.Number,

Target.Text = Source.Text

WHEN NOT MATCHED THEN

INSERT

(Date, Number, Text)

VALUES

(Source.Date, Source.Number, Source.Text);

This SQL query compares records from the temporary table [Order_TEMP] with records from the target table [Order], and performs Update if a record with the same value in the Id field is found, or Insert if no such record is found. Let's execute the code in any convenient way, and you're done! Do not forget to clean / delete the temporary table to taste.

5. Performance comparison

Runtime environment: Visual Studio 2013, Entity Framework 6.1.1 (Database First), SQL Server 2012. For testing, the [Order] table was used (the table layout is given above). Measurements were made of the runtime for the approaches to saving data in the database considered in the article, the results are presented in the following (time is indicated in seconds):

Insert

| The way to commit changes to the database | Number of records | ||

|---|---|---|---|

| 1000 | 10,000 | 100,000 | |

| Add + SaveChanges | 7.3 | 101 | 6344 |

| Add + (AutoDetectChangesEnabled = false) + SaveChanges | 6.5 | 64 | 801 |

| Add + separate context + SaveChanges | 8.4 | 77 | 953 |

| AddRange + SaveChanges | 7.2 | 64 | 711 |

| SqlBulkCopy | 0.01 | 0,07 | 0.42 |

Wow! If you use the Add method to add to the context and SaveChanges to save, saving 100,000 records to the database will take almost 2 hours! While SqlBulkCopy spends less than a second on the same task!

Update

| The way to commit changes to the database | Number of records | ||

|---|---|---|---|

| 1000 | 10,000 | 100,000 | |

| Savechanges | 6.2 | 60 | 590 |

| SqlBulkCopy + MERGE | 0.04 | 0.2 | 1,5 |

Again SqlBulkCopy out of competition. The source code for the test application is available on GitHub .

conclusions

In the case of working with a context containing a large number of objects (10³ and above), abandoning the Entity Framework infrastructure (adding to the context + saving the context) and switching to SqlBulkCopy for writing to the database can provide a performance increase of tens or even hundreds of times. However, in my opinion, using the EF + SqlBulkCopy bundle everywhere is a clear sign that something is wrong with your application architecture. The approach considered in the article should be considered as a simple means to accelerate performance in bottlenecks of an already written system, if it is difficult to change the architecture / technology for any reason. Any developer using the Entity Framework should know the strengths and weaknesses of this tool. Good luck!

Sitelinks

EntityFramework: Add, AddRange

Secrets of DetectChanges

Performance Considerations for Entity Framework 4, 5, and 6

Entity Framework Performance

Entity Framework and slow bulk INSERTs

Another look at Entity Framework: performance and pitfalls

SqlBulkCopy

SqlBulkCopy - crazy loading of big data or how to ride wild horse

Using SqlBulkCopy To Perform Efficient Bulk SQL Operations

SqlBulkCopy + data reader

Creating a Generic List DataReader for SqlBulkCopy

SqlBulkCopy for Generic List

SqlBulkCopy + MERGE

C # Bulk Upsert to SQL Server Tutorial

Secrets of DetectChanges

Performance Considerations for Entity Framework 4, 5, and 6

Entity Framework Performance

Entity Framework and slow bulk INSERTs

Another look at Entity Framework: performance and pitfalls

SqlBulkCopy

SqlBulkCopy - crazy loading of big data or how to ride wild horse

Using SqlBulkCopy To Perform Efficient Bulk SQL Operations

SqlBulkCopy + data reader

Creating a Generic List DataReader for SqlBulkCopy

SqlBulkCopy for Generic List

SqlBulkCopy + MERGE

C # Bulk Upsert to SQL Server Tutorial