How we built an Oculus Rift mod for World of Tanks

Background

About a year and a half ago, a DK1 fell into the hands of the developers at Wargaming's Minsk studio. A month later, once everyone had had their fill of Team Fortress and Quake in full 3D, the idea came up to build something with the Oculus for Tanks itself. Read on for the process, the results and the pitfalls of working with the Oculus.

World of Tanks supports a number of gaming peripherals that extend the player's experience (a vibrating seat pad, for example). With the arrival of the two-billion-dollar Oculus Rift, however, we decided it was time to please not only the players' seats but also give them some new eye candy.

Honestly, no one knew what it should look like or how it should help the player. The task of developing the mod was set, as they say, "just for lulz". Little by little, in the moments when our brains refused to think about our main tasks, we got started on the integration: we downloaded the Oculus SDK, installed it and began digging through the source code of the samples.

Work began on the first devkit, which was somewhat hazardous to our sanity: its screen resolution was very low. Fortunately, we got our hands on the HD version fairly quickly, and it is much more pleasant to work with.

SDK

We started development with SDK version 0.2.4 for the DK1. When we received the HD Prototype, a new SDK version was not required, so we spent 90% of the time on a rather old SDK that nonetheless covered our needs. Then, when work on the Oculus mod was almost complete, a DK2 arrived, and it turned out that the old SDK did not support it. Is that really a problem? We downloaded the new SDK, by then version 0.4.2, and suddenly discovered that it had been rewritten slightly more than completely. We had to change almost the entire wrapper around the device and make various other changes.

The most interesting part was rendering. Previously the pixel shader had been quite simple; in the new version it was changed and made more complex. The lenses are to blame. I don't know why this decision was made, but a side effect of the new lenses is terrible chromatic aberration, which decreases from the edge of the field of view toward the center. The pixel shader was rewritten to correct this defect. It is a strange solution, to say the least: degrading application performance to compensate for an odd engineering choice. But an inquiring mind and resourcefulness will save the galaxy: it turned out that the lenses from the DK1 and HD P fit the DK2 perfectly, and they have no such side effect. So there.

Initialization

Initializing the device and obtaining its context was copied straight from the SDK sample: Ctrl+C, Ctrl+V in action. There are no pitfalls here.

Rendering

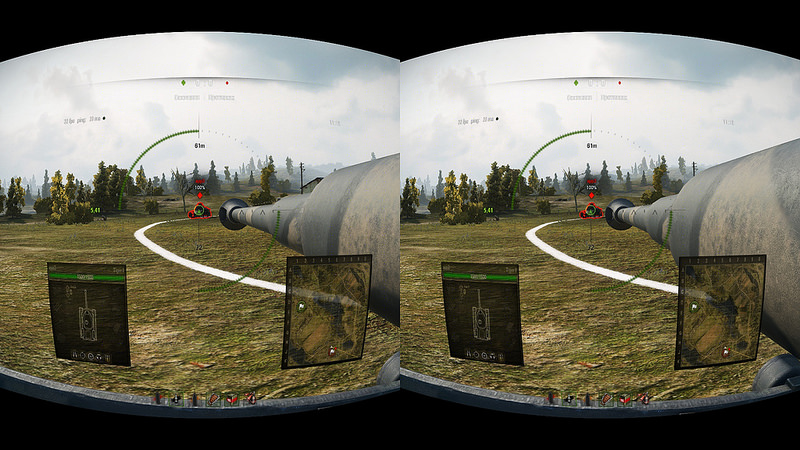

The stereoscopic image in the device is produced in the classical way: the scene is rendered from two slightly different viewpoints and each image is shown to the corresponding eye. You can read more about this here.

Accordingly, our initial algorithm for building the stereoscopic image was as follows:

1. Setting up the matrices

Since each eye has to be rendered with a slight offset, the original view matrix must be modified first. The required transformation matrix for each eye is provided by the Oculus Rift SDK. The modification goes as follows:

• Get the additional transformation matrix.

• Transpose it (Oculus Rift uses a different coordinate system).

• Multiply it on the left by the original view matrix.
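The three steps above can be sketched in a few lines. This is only an illustration, not the mod's actual code: it assumes the engine uses row vectors (so "multiplying on the left by the original" means `view * transpose(adjust)`), and `sdk_style_translation` and `HALF_IPD` are hypothetical stand-ins for the per-eye matrix that the SDK would supply.

```python
def transpose(m):
    """Return the transpose of a 4x4 matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Multiply two 4x4 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def eye_view_matrix(view, eye_adjust):
    """Build a per-eye view matrix from the engine's view matrix.

    Assumption: the engine multiplies row vectors (v * M) while the SDK
    matrix is laid out for column vectors, hence the transpose.
    """
    return matmul(view, transpose(eye_adjust))


def sdk_style_translation(dx):
    """Hypothetical stand-in for the SDK's per-eye adjustment matrix."""
    return [[1, 0, 0, dx],
            [0, 1, 0, 0],
            [0, 0, 1, 0],
            [0, 0, 0, 1]]


IDENTITY = [[1 if i == j else 0 for j in range(4)] for i in range(4)]
HALF_IPD = 0.032  # illustrative half eye separation, in meters

left_view = eye_view_matrix(IDENTITY, sdk_style_translation(+HALF_IPD))
right_view = eye_view_matrix(IDENTITY, sdk_style_translation(-HALF_IPD))
```

After the transpose, the horizontal offset sits in the last row of the result, which is where a row-vector engine expects translation to live.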

2. Rendering twice with a matrix change

After modifying the view matrix for the left eye and setting it in the render context, we render as usual. Then we modify the view matrix again for the right eye, set it in the render context, and draw once more.

3. Lens distortion post-effect

To fill more of the viewer's field of vision, the Oculus uses lenses, and as a side effect they distort the geometry of objects. To cancel this out, an additional post-effect is applied to the final render that distorts the image in the opposite direction.
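As a rough illustration of such a post-effect, here is a radial polynomial warp of the kind commonly used for lens correction. It is a sketch under assumptions: the coefficients in `K` are illustrative rather than taken from the mod, and coordinates are measured from the lens center in normalized units.

```python
def distortion_scale(r_squared, k):
    """Radial scale factor k0 + k1*r^2 + k2*r^4 + k3*r^6."""
    k0, k1, k2, k3 = k
    return k0 + r_squared * (k1 + r_squared * (k2 + r_squared * k3))


def warp(x, y, k):
    """Map an output pixel to the point of the rendered image to sample.

    (x, y) is the pixel position relative to the lens center. Sampling
    farther out as the radius grows squeezes the displayed picture toward
    the center, which is the opposite of what the lens does to it.
    """
    scale = distortion_scale(x * x + y * y, k)
    return (x * scale, y * scale)


K = (1.0, 0.22, 0.24, 0.0)  # illustrative coefficients (assumption)

center_sample = warp(0.0, 0.0, K)  # the lens center is left untouched
edge_sample = warp(1.0, 0.0, K)    # points near the edge are pushed outward
```

In a real renderer this function would run per pixel in the shader, once for each eye with that eye's lens center.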

4. Output the final image

Finally, the output image is assembled by combining the renders for both eyes, each drawn on its own half of the screen, and applying the distortion post-effect to them.
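Steps 2 and 4 amount to one loop over the eyes, each with its own view matrix and its own half of the screen. The sketch below is hypothetical: `draw_scene` stands in for the engine's normal scene render, and the view matrices are placeholder strings.

```python
def eye_viewports(width, height):
    """Split the screen into left and right halves as (x, y, w, h) tuples."""
    half = width // 2
    return {"left": (0, 0, half, height),
            "right": (half, 0, width - half, height)}


def render_stereo_frame(width, height, eye_views, draw_scene):
    """Render the scene once per eye into its half of the screen.

    draw_scene(view, viewport) is a hypothetical callback standing in for
    the engine's usual render. The distortion post-effect would run after
    this loop.
    """
    viewports = eye_viewports(width, height)
    order = []
    for eye in ("left", "right"):
        draw_scene(eye_views[eye], viewports[eye])
        order.append(eye)
    return order


calls = []
order = render_stereo_frame(
    1280, 800,
    {"left": "view_L", "right": "view_R"},  # placeholders for real matrices
    lambda view, viewport: calls.append((view, viewport)),
)
```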

Challenges

During development we quickly found that there are currently no clear, fully worked-out guidelines for using the Oculus Rift in games. If you run several different games with Oculus Rift support, each of them implements its interface and controls differently. As far as we know, the Oculus Rift developers deliberately avoid imposing strict requirements and rules: their idea is for game developers to experiment on their own. For this reason we had to redo our Oculus integration more than once. It would seem to be finished, but something would feel wrong or annoying in places, and half of the logic had to be thrown out.

A couple of our game's own peculiarities add to the fun. First, in World of Tanks the camera mostly hangs above the tank, and in this mode head turns in the Oculus inherently do not match your real-world experience. In real life you cannot hover above the vehicle you are driving (though sometimes it would be so useful...).

In addition, World of Tanks is a PvP game where in every battle you face

Common UI Problem

In our game, as in most others, almost the entire UI (both in the hangar and in battle) sits along the edges of the screen. If the UI is output without any modification, a problem arises: because of the way the Oculus is built, only the central part of the image falls within the field of view, and the UI ends up outside it, fully or partially.

Here are the approaches we tried.

1. Shrink the interface and move it to the center of the screen so that it falls within the field of view. To do this, it has to be rendered to a separate render target and then overlaid during postprocessing.

This method solves the visibility problem but catastrophically reduces how much information the UI conveys and how readable it is.

2. Use the Oculus orientation matrix to position the UI, that is, let the user look around not only the 3D scene but also the UI.

This method does not have the drawbacks of the first: informativeness and readability are limited only by the resolution of the device. But it has drawbacks of its own: routine actions (browsing tanks in the carousel, checking your silver balance and so on) take a lot of head movement, and as a consequence it is hard to synchronize the movement of the UI with the camera of the 3D scene.

3. Combine the previous two methods, that is, scale the interface and at the same time let the user look around it. The main difficulty is choosing a scale that keeps the text readable while sparing the user most of the head movements.
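The hybrid option can be pictured as "scale about the screen center, then shift against the head rotation". The sketch below is only an illustration of that idea: the linear angle-to-screen mapping, the sign conventions (+x right, +y up, yaw positive to the right, pitch positive up) and all numeric values are assumptions.

```python
def place_ui_point(x, y, scale, head_yaw, head_pitch, half_fov_h, half_fov_v):
    """Place a UI point given in normalized screen coordinates [-1, 1].

    The UI is first shrunk about the screen center, then shifted opposite
    to the head rotation so that turning toward an element brings it
    closer to the center of view. Angles are in radians.
    """
    return (x * scale - head_yaw / half_fov_h,
            y * scale - head_pitch / half_fov_v)


def in_view(point, visible_extent):
    """True if the point lies inside the centered square the lenses show."""
    return abs(point[0]) <= visible_extent and abs(point[1]) <= visible_extent


# A hypothetical minimap corner in the lower right of the original UI.
corner = (0.9, -0.9)
resting = place_ui_point(*corner, 0.6, 0.0, 0.0, 0.8, 0.8)
glancing = place_ui_point(*corner, 0.6, 0.1, -0.1, 0.8, 0.8)
```

With these numbers the corner is just outside a visible extent of 0.5 when the head is at rest and comes into view after a small glance down and to the right, which is the trade-off the scale parameter controls.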

At first we did not distinguish between the hangar interface and the battle interface. In fact, the idea of using the Oculus orientation matrix to look around the UI was inspired by the battle interface. Almost the entire visible area was taken up by the 3D scene, and that seemed right, but being unable to see your own tank's hit points or the positions of allies and enemies on the minimap did not help anyone enjoy a lively tank brawl in full 3D. That is when we came up with the "look-around" UI: to see the minimap, for example, you had to turn and tilt your head slightly, a perfectly natural movement for anyone who knows our game's interface. And then we ran into the problem described above: after five minutes of constantly turning our heads in search of the minimap, the tank damage indicator and the shell count, our necks began to hurt.

The hybrid variant described above was the next step: part of the interface was now visible from the start, and seeing all of it required only a slight adjustment of the head.

The problem seemed to be solved, but after a viewing, the guys from our publishing side rejected this option as well.

Despite the naturalness of the movements needed to get information, the constant need to turn your head and then quickly return it to its original position in order to act was judged uncomfortable and a hindrance to enjoying the game. Since nothing in the hangar demands quick action, we decided to keep the hybrid variant there: the HD Prototype, which arrived just in time, gave decent text quality at a small UI scale.

On the same HD Prototype, thanks to the higher resolution, more of the 3D scene fit into the visible area. We decided to stop experimenting with the combat UI and make it static but customizable, so that the position and size of its elements can be changed directly at runtime.

While solving these problems we had to think about one more thing: some UI elements must not be bundled with the rest (for example, direction indicators, vehicle markers and sights). We had to adjust the rendering order: some interface elements are now drawn together with the 3D scene.

Camera control

The Oculus is not only an image output device but also an input device. Its input is the orientation quaternion (a matrix in the DK1 and HD P) of the device in space. So we wanted to use this data not only for positioning the UI but also for camera control.

Hangar

In the hangar, camera control works as follows: the mouse controls the tilt angle and the length of the tank's "selfie stick". At the end of this stick sits the camera, whose direction can be controlled with the Oculus, so you can look around and examine not only the tank but also its surroundings.
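A minimal sketch of this "selfie stick" scheme, assuming a simple spherical orbit around the tank and yaw/pitch angles in radians. The function name, axis layout and the way head angles are added on top are illustrative choices, not the game's actual camera code.

```python
import math


def hangar_camera(target, stick_yaw, stick_pitch, stick_length,
                  head_yaw, head_pitch):
    """Return (position, (look_yaw, look_pitch)) for the hangar camera.

    The mouse drives stick_yaw, stick_pitch and stick_length, which place
    the camera on a sphere around the tank. With the head at rest the
    camera looks back along the stick at the tank; the head angles from
    the headset are added on top of that direction.
    """
    tx, ty, tz = target
    position = (
        tx + stick_length * math.cos(stick_pitch) * math.sin(stick_yaw),
        ty + stick_length * math.sin(stick_pitch),
        tz + stick_length * math.cos(stick_pitch) * math.cos(stick_yaw),
    )
    look_yaw = stick_yaw + math.pi + head_yaw
    look_pitch = -stick_pitch + head_pitch
    return position, (look_yaw, look_pitch)


# Stick level and 10 units long; the player glances 0.2 rad to the side.
position, look = hangar_camera((0.0, 0.0, 0.0), 0.0, 0.0, 10.0, 0.2, 0.0)
```

Keeping the stick parameters and the head angles as separate inputs means the mouse never fights the headset for control of the same value.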

Arcade Mode

In arcade mode, mouse movement rotates the coordinate system from which aiming is done, as before, and head turns in the Oculus take place within that coordinate system.

Sniper mode

Initially we explored aiming with the head. We quickly abandoned this approach because of poor shooting accuracy, strain on the neck, and the difficulty of synchronizing mouse and head movements as well as the rotation speeds of the head and the tank's turret.

Then we tried something like the Team Fortress aiming scheme: the camera is controlled by head movement, but there is a region in the center of the visible area within which the sight can only be moved with the mouse, for more precise shooting. The prototype was not bad, but it was still awkward to use.

So we settled on the simplest and most effective option: the camera is controlled by both the head and the mouse. That is, when the head moves, the aiming point does not change, only the direction of the camera. When the mouse moves, both the aiming point and the camera direction change.
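The final scheme boils down to keeping two separate pieces of state. The class below is a hypothetical sketch of that separation using plain yaw/pitch angles in radians; the real implementation works with the engine's camera classes and the headset's orientation data.

```python
class SniperCamera:
    """Aiming driven by the mouse, looking driven by mouse plus head."""

    def __init__(self):
        self.aim_yaw = 0.0
        self.aim_pitch = 0.0
        self.head_yaw = 0.0
        self.head_pitch = 0.0

    def on_mouse_move(self, d_yaw, d_pitch):
        """Mouse input moves the aiming point (and, through it, the camera)."""
        self.aim_yaw += d_yaw
        self.aim_pitch += d_pitch

    def on_head_pose(self, yaw, pitch):
        """Head orientation from the headset only offsets the camera."""
        self.head_yaw = yaw
        self.head_pitch = pitch

    def aim_direction(self):
        return (self.aim_yaw, self.aim_pitch)

    def camera_direction(self):
        return (self.aim_yaw + self.head_yaw,
                self.aim_pitch + self.head_pitch)


camera = SniperCamera()
camera.on_mouse_move(0.10, 0.00)  # the gun and the view both turn
camera.on_head_pose(0.30, -0.10)  # only the view turns further
```

After these two calls the aiming point has moved only by the mouse delta, while the camera direction is the sum of the mouse and head contributions.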

Strategic mode

We never planned to use the Oculus for playing artillery, so the strategic mode has no changes.

Gunner mode

At one point, having run out of ideas for using the Oculus as an input device in an interesting way, we discussed the pros and cons of the device and suggested that a player would find it easier to imagine themselves in a virtual 3D world as a virtual person than as a virtual tank. So we decided to pick a crew role that the player would enjoy trying on, and the gunner turned out to be that role. We already had a camera that can be mounted on a node of the tank, so all that remained was to adjust its position and limit the field of view so that the player could not look inside the tank or through the barrel. For testing we chose two tanks, the IS and the Tiger I, because of their popularity and because their HD models were available at the time. The implementation took only a few days, yet the mode turned out to be the most interesting and unusual in terms of gameplay.

The name of the mode has not settled yet: some call it the "gunner's camera", others the "camera from the commander's cupola". Its main feature is that the camera sits in the coordinate system of the tank's actual turret, while head turns via the Oculus Rift remain available. The player's tank is not hidden at all, so you can look over the whole bulk of your vehicle, and from this perspective the tank looks far more impressive than through a camera a couple of dozen meters away. This raised the question of where on the turret to mount the camera. For the IS and the Tiger I we hardcoded it: the camera is mounted next to the barrel. Generating mount points automatically for the remaining hundreds of our tanks is not easy, and doing it by hand would take too much time.
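Limiting the field of view can be as simple as clamping the head angles before they reach the camera. This is a hedged sketch: the limit values are invented for illustration and are not the ones used for the IS or the Tiger I.

```python
def clamp(value, low, high):
    """Restrict value to the closed range [low, high]."""
    return max(low, min(high, value))


def limit_gunner_view(head_yaw, head_pitch, yaw_limits, pitch_limits):
    """Clamp head angles (degrees) so the camera cannot look into the hull
    or down the barrel. The limits are per-vehicle tuning values."""
    return (clamp(head_yaw, *yaw_limits),
            clamp(head_pitch, *pitch_limits))


# Hypothetical limits for one vehicle: (min, max) in degrees.
YAW_LIMITS = (-90.0, 90.0)
PITCH_LIMITS = (-20.0, 45.0)

inside = limit_gunner_view(30.0, 10.0, YAW_LIMITS, PITCH_LIMITS)
clamped = limit_gunner_view(120.0, -50.0, YAW_LIMITS, PITCH_LIMITS)
```

A hard clamp is the simplest choice; easing toward the limit might feel less abrupt in a headset, but that is a tuning decision the source does not describe.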

Conclusions

Oculus Rift is a very interesting device that brings a lot of new sensations to games. However, as often happens, building a production-ready integration for such a device is by no means a matter of a couple of days. If you decide to add support for it to an existing game (one that was not originally made for VR), you will need:

1. A partial rework of the in-game menus. Even if you decide not to integrate them fully into virtual space (projecting elements onto game objects, positioning them in world coordinates and so on), the way the menus are displayed will need adjusting.

2. A heavy, possibly radical rework of the gameplay UI itself. The Oculus Rift does not have the highest resolution, and if you simply take the game's HUD and hang it in front of the player's eyes, the interface elements will take up too much space. On top of that, the feeling of having spots floating before your eyes never goes away. This means you will have to trim the UI, keeping some things in front of the eyes and moving secondary things out of sight, which in turn requires the UI to react to head rotation. A combat UI projected onto game objects (cockpit, helmet, etc.) may take up too few pixels on screen when rendered and end up conveying no information.

3. An extensible camera architecture. The Oculus is not only a device that outputs an image to its screens but also a head orientation input device, so this data has to be fed into the game cameras in a sane way. And if you want the player to be able to look around, the game's aiming system must be independent of camera orientation. In our case the gunner's camera, for example, is a composition of the sniper and arcade mode logic. Separating the look logic from the aiming logic also makes it easier to integrate the Oculus with existing control modes without having to override them.

4. Last but not least, the Oculus needs a control scheme of its own. An implementation in which moving the headset is equivalent to moving the mouse may not work (that option probably works right away only for flight simulators, where the pilot's head-turn logic is usually implemented anyway and the cockpit view is the main view mode).

In short, the advantages of Oculus include:

• sufficient openness for cooperation;

• active SDK development;

• PR;

• John Carmack (lol).

Cons:

• lack of general recommendations on gameplay integration;

• the odd handling of chromatic aberration in 0.4.2;

• difficulty of perception in third-person games (especially if the hero is a tank, not a humanoid);

• problems common to all headsets:

- focusing;

- screen resolution (even in the HD version!);

- discomfort with prolonged use (neck + headache).

The mod itself can be downloaded at this link.