The synapse ensemble is a structural unit of the neural network

Last May, researchers from Google's deep learning team and scientists from two American universities published a study called "Intriguing properties of neural networks". The study was loosely retold here on Habr, and it was also criticized by a specialist from ABBYY.

As a result of their research, the Google team became disillusioned with the ability of individual neurons to disentangle the features of the input data. They began to lean toward the idea that neural networks do not isolate semantically significant features in separate structural elements, but store them across the entire network, like a hologram. At the bottom of the illustration for this article, in black and white, I show the activation maps of the 29th, 31st and 33rd neurons of a network that I taught to draw a picture. The headless, wingless body of a bird drawn, for example, by the 29th neuron looks like a semantically significant feature to people; the Google researchers consider that to be merely the observer's error of interpretation.

In this article, I will try to show with a real example that disentangled features can be found in artificial neural networks too. I will try to explain why the Google researchers saw what they saw but could not see the disentangled features, and I will show where semantically significant features hide in the network. This article is a popular version of a talk given at the "Neuroinformatics-2015" conference in January of this year. The scientific version can be read in the conference proceedings.

The question is now being actively debated both by interested amateurs and by professionals. So far, neither side has produced evidence that withstands criticism.

This is despite the fact that experimenters using electrodes implanted in the human brain managed to detect the so-called "Jennifer Aniston neuron", a neuron that responds only to the image of the actress. A Luke Skywalker neuron and an Elizabeth Taylor neuron were caught as well. After the discovery of "coordinate neurons", "place neurons" and "border neurons" in rats and bats, many people finally got used to saying "a neuron of such and such a property". Yet the discoverers of the Jennifer Aniston neuron, interpreting their data responsibly, tend to say that they found part of a large ensemble of neurons rather than a neuron dedicated to a single feature. That neuron also reacted to concepts associated with the actress (for example, scenes from the series in which she is not in the frame), and the Luke Skywalker neuron found in humans was also triggered by Master Yoda. To confuse everyone completely, one study found neurons that selectively respond to snakes in monkeys that had never seen a snake in their lives.

In general, the topic of the "grandmother neuron", raised by neurophysiologists in 1967-69, still stirs up considerable excitement to this day.

Part One: Data

Most often, a neural network is used for classification. At the input it receives many noisy images, and at the output it must classify them correctly by a small number of features, for example, whether or not a cat is present in the picture. This typical task is inconvenient for searching inside the network for meaningful features of the input data, for a number of reasons. The first is that there is no way to observe rigorously the relationship between a semantic feature and the state of some internal element. The Google article and several other studies used a clever trick: first they computed the input data that excite the selected neuron as much as possible, and then the researcher examined these data carefully, trying to find meaning in them. But we know that the human brain is skilled at finding meaning even where there is none. The brain has evolved in this direction for a long time, and the fact that people see something other than an ink blot on paper in the Rorschach test should make us wary of any method that looks for meaning in a picture containing many elements. My own pictures included.

The method used in this and a number of other studies is also flawed because maximum excitation may not be the normal operating mode of a neuron at all. For example, the middle picture, which shows the activation map of the 31st neuron, makes it clear that on correct input data the neuron is never excited to its maximum value. Indeed, looking at the picture, it seems that the meaning is most likely carried by the areas where the neuron is not active. To see something real, it makes sense to study the behavior of the network in a small neighborhood of its normal operating mode.

In addition, the Google study investigated very large networks. The networks trained without a teacher (unsupervised), for example, contained up to a billion adjustable parameters. Even if such a network contained a neuron with its meaning spelled out in large black letters, stumbling upon it by accident would be very difficult.

Therefore, we will experiment with a completely different task. First, the experimenter chooses a picture. The network has only two inputs, which receive two real numbers: the X and Y coordinates. At the three outputs R, G and B we expect a prediction of the color that the point at these coordinates should have in the picture. All values are normalized to the range (-1; 1). The root-mean-square error over all points of the image serves as the criterion of how well the network has learned to draw (in some pictures it is written in the lower left corner as RMSE).
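To make the setup concrete, here is a minimal sketch of how such a training set and error measure could be built. The function names `make_dataset` and `rmse`, the use of NumPy, and the exact scaling (pixel value 0 maps to -1, 255 maps to +1) are my assumptions for illustration; the article does not specify them.

```python
import numpy as np

def make_dataset(image):
    """Turn an H x W x 3 uint8 image into (coordinates, colors), both scaled to [-1, 1]."""
    h, w, _ = image.shape
    ys, xs = np.meshgrid(np.linspace(-1.0, 1.0, h),
                         np.linspace(-1.0, 1.0, w), indexing="ij")
    coords = np.stack([xs.ravel(), ys.ravel()], axis=1)              # network inputs (X, Y)
    colors = image.reshape(-1, 3).astype(np.float64) / 127.5 - 1.0   # targets (R, G, B)
    return coords, colors

def rmse(predicted, target):
    """Root-mean-square error over all points and all three color channels."""
    return float(np.sqrt(np.mean((predicted - target) ** 2)))
```

Each row of `coords` is one training input and the matching row of `colors` is the answer the network should give for that point.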

The article uses a non-recurrent network of 37 neurons with the hyperbolic tangent as the sigmoid function and a somewhat non-standard arrangement of synapses. It was trained by backpropagation of error in its mini-batch variant, together with an additional algorithm that is a distant relative of energy-restriction algorithms. In general, nothing supernatural. There are more modern algorithms, but most of them cannot achieve more accurate results on networks of such a modest size.
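For readers who want to try something similar, below is a rough sketch of mini-batch backpropagation for a small tanh network. It is not the network from this article: it is an ordinary fully connected 2-8-3 network, it omits the additional algorithm mentioned above, and the toy color gradient, layer sizes, learning rate and all function names are assumptions chosen only to keep the example short.

```python
import numpy as np

def init_mlp(sizes, rng):
    """One (weights, biases) pair per layer; the weight entries play the role of synapses."""
    return [(rng.normal(0.0, 1.0 / np.sqrt(n_in), (n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def forward(params, x):
    """Return the activations of every layer, the input included."""
    acts = [x]
    for w, b in params:
        acts.append(np.tanh(acts[-1] @ w + b))
    return acts

def train_step(params, x, y, lr):
    """One mini-batch gradient step on the mean squared error."""
    acts = forward(params, x)
    delta = (acts[-1] - y) * (1.0 - acts[-1] ** 2)    # error signal at the output layer
    updated = list(params)
    for i in reversed(range(len(params))):
        w, b = params[i]
        grad_w = acts[i].T @ delta / len(x)
        grad_b = delta.mean(axis=0)
        if i > 0:                                     # propagate the error one layer back
            delta = (delta @ w.T) * (1.0 - acts[i] ** 2)
        updated[i] = (w - lr * grad_w, b - lr * grad_b)
    return updated

def train(params, coords, colors, rng, steps=2000, batch=32, lr=0.1):
    for _ in range(steps):
        idx = rng.integers(0, len(coords), size=batch)   # draw a random mini-batch
        params = train_step(params, coords[idx], colors[idx], lr)
    return params

def network_rmse(params, coords, colors):
    return float(np.sqrt(np.mean((forward(params, coords)[-1] - colors) ** 2)))

# Toy "picture": a smooth color gradient instead of the peacock.
rng = np.random.default_rng(0)
coords = rng.uniform(-1.0, 1.0, size=(400, 2))
colors = np.stack([0.8 * coords[:, 0], 0.8 * coords[:, 1], -0.8 * coords[:, 0]], axis=1)
params = init_mlp([2, 8, 3], rng)
rmse_before = network_rmse(params, coords, colors)
params = train(params, coords, colors, rng)
rmse_after = network_rmse(params, coords, colors)
```

Comparing `rmse_before` with `rmse_after` shows how much the network has learned; drawing the picture is then just a matter of calling `forward` on every pixel coordinate.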

Now let us fill the image with pixels colored as our network predicts. The result is a map of the neural network's idea of beauty, used at the beginning of the article as an eye-catching picture. On the left is the original picture of a flying peacock, and on the right is how the neural network imagined it.

The first important conclusion can be drawn at a glance at the picture. There is little point in hoping that the features of the image are described by neurons, if only because the picture simply contains more distinct elements than the network has neurons. In other words, the authors of most studies who look for features in neurons are looking in the wrong place.

Part Two: A bit of reverse engineering

Imagine that we face the task of constructing, without any training, a classifier out of neurons that determines whether an input point lies above or below a line passing through the point (0,0). One neuron is enough to solve this problem. The ratio of its two synaptic weights is set by the slope of the line, and (for a positive slope) the weights have opposite signs. The sign of the potential at the output gives us the answer.

Now let us complicate the puzzle significantly. We will compare two quantities in the same way, but now the input values arrive as binary numbers, for example 4-bit ones. By the way, not every learning algorithm can cope with such a task. However, the analytical answer is again obvious. We get by with the same single neuron. In the first four synapses, which receive the first value, the weights are in the ratio 8:4:2:1; in the second four the ratio is the same, but the weights are multiplied by -k. Spreading the solution across two separate neurons would only complicate it, unless the value decoded from binary is needed somewhere else in the network. And here is what we get: on the one hand there is a single neuron, but on the other hand it contains two completely separate ensembles of synapses that process different properties of the input data.

- Properties in a neural network can be represented not by neurons but by groups of synapses.

- A single neuron often combines several features.
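The binary comparison can be written out directly. In this sketch the helper names, the bit order (most significant bit first) and the use of NumPy are my own choices; the weights follow the 8:4:2:1 and -k rule described above, so the potential of the single neuron equals a - k*b.

```python
import numpy as np

def to_bits(value, width=4):
    """Most significant bit first, e.g. 5 -> [0, 1, 0, 1]."""
    return [(value >> i) & 1 for i in reversed(range(width))]

def comparator_weights(k, width=4):
    """Two groups of synapses: 8, 4, 2, 1 for the first number and the same times -k for the second."""
    place = np.array([2.0 ** i for i in reversed(range(width))])
    return np.concatenate([place, -k * place])

def neuron_potential(a, b, k=1.0, width=4):
    """Potential of the single neuron; its sign says on which side of the line a = k*b the point lies."""
    inputs = np.array(to_bits(a, width) + to_bits(b, width), dtype=float)
    return float(inputs @ comparator_weights(k, width))
```

The first four weights decode one number and the last four decode the other: two separate ensembles of synapses living on one neuron.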

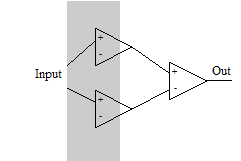

Let's do a third thought experiment. Suppose we have only one input and need a neuron to turn on only within a single range of values. This problem cannot be solved by a single neuron with a monotonic activation function. We need three neurons. The first is activated at the first boundary and excites the output neuron; the second is activated at a larger input value and inhibits the output neuron at the second boundary. This thought experiment gives us new important conclusions:

- An ensemble of synapses can span several neurons.

- If the system contains several synapses that each shift some boundary, with different strength and at different input values, an ensemble that includes them can be used to control a new property: the gap between the boundaries.
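The third thought experiment fits in a few lines. The boundaries (-0.2 and 0.5), the steepness and the bias of -1 are arbitrary values I picked for illustration; any similar choice would do.

```python
import numpy as np

def band_detector(x, low=-0.2, high=0.5, steepness=20.0):
    """Output is positive only when x lies between `low` and `high`."""
    rising = np.tanh(steepness * (x - low))     # first neuron: switches on at `low`
    falling = np.tanh(steepness * (x - high))   # second neuron: switches on at `high`
    # Output neuron: excited by the first neuron, inhibited by the second.
    return np.tanh(rising - falling - 1.0)
```

Moving `low` or `high` shifts one boundary at a time, while changing both together controls the width of the gap, which is the new property mentioned in the list above.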

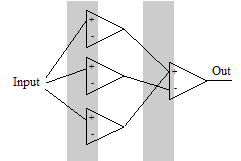

Finally, the fourth and last thought experiment. Suppose we need to turn on the output neuron in two independent, non-overlapping ranges. The problem can be solved with 4 neurons. The first neuron is activated and excites the output neuron. The second neuron is partially activated and inhibits the output neuron. Meanwhile the third neuron is activated and excites the output neuron again. Finally, the second neuron reaches its maximum activation and, thanks to the greater weight of its synapse, inhibits the output neuron once more. The first boundary then depends almost entirely on the first neuron, and the fourth on the last neuron in the first layer. The positions of the second and third boundaries can also be changed without affecting the other boundaries, but that requires changing the weights of several synapses in concert. This thought experiment teaches us a few more principles:

- Although every synapse in a network of this type affects the processing of the entire signal, only a few of them influence any particular property strongly enough to represent and change it. The influence of most synapses is much weaker. If you try to adjust a property through such "weak" synapses, the properties that they do control strongly will change unacceptably long before you notice any change in the chosen one.

- Some synapses form ensembles capable of changing individual properties. An ensemble works only within its surrounding environment of other neurons and synapses, yet its work is almost independent of small changes in that environment.
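Here is one possible set of weights for the fourth thought experiment. All the numbers are hand-picked assumptions, and the ordering of the neurons may differ from the one I had in mind in the text; the point is only that one slowly saturating neuron with a heavier synapse can inhibit the output twice.

```python
import numpy as np

def two_band_detector(x):
    """Output is positive in two separate ranges, roughly (-0.6, 0.12) and (0.3, 0.48)."""
    first = np.tanh(20.0 * (x + 0.6))   # sharp step at x = -0.6, excites the output
    slow = np.tanh(3.0 * (x - 0.3))     # gradual ramp centered on x = 0.3, inhibits the output
    third = np.tanh(20.0 * (x - 0.3))   # sharp step at x = 0.3, excites the output again
    # The slow neuron has the heavier synapse (weight 2), so it wins at both ends of its ramp.
    return np.tanh(first + third - 2.0 * slow - 1.0)
```

In this sketch the boundaries at -0.6 and 0.3 each belong to one sharp neuron, whereas the other two boundaries depend jointly on the slope of the slow neuron, the weight of its synapse and the bias, so moving one of them without disturbing the rest requires changing several weights together.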

It is ensembles of synapses with exactly these properties that we will look for in our network, to confirm on a real example that they can exist.

Part Three: Synaptic Ensembles

The small size of the network that we chose in the first part of the article lets us study directly how a change in the weight of each synapse affects the picture generated by the network. Let's start with the very first synapse, strictly speaking number 0. This synapse, in splendid isolation, is responsible for tilting the bird's body to the left, and for nothing else. No other synapse takes part in forming this reference line.

The picture shows a very large change in the weight of the synapse, from -0.8 to +0.3, chosen deliberately to make the resulting change more noticeable. With such a large change in the weight, other properties begin to change as well. In reality, the working range of weights for this synapse is much narrower.
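The idea behind such pictures can be sketched as follows: shift one synapse, redraw the image, and look at what changed. The toy 2-4-3 network with random weights, the flat weight vector and the names `render` and `change_map` are assumptions made for this example; they are not the trained network from the article.

```python
import numpy as np

def render(weights, size=32):
    """Draw a size x size RGB image from a toy 2-4-3 tanh network with flat weights."""
    w1 = weights[:8].reshape(2, 4)
    w2 = weights[8:20].reshape(4, 3)
    ys, xs = np.meshgrid(np.linspace(-1, 1, size), np.linspace(-1, 1, size), indexing="ij")
    coords = np.stack([xs.ravel(), ys.ravel()], axis=1)
    return np.tanh(np.tanh(coords @ w1) @ w2).reshape(size, size, 3)

def change_map(render_fn, weights, index, delta):
    """Per-pixel color change caused by shifting one synapse weight by `delta`."""
    shifted = weights.copy()
    shifted[index] += delta
    return np.abs(render_fn(shifted) - render_fn(weights)).sum(axis=-1)

rng = np.random.default_rng(0)
weights = rng.normal(0.0, 1.0, size=20)
effect_of_synapse_0 = change_map(render, weights, index=0, delta=0.3)
```

Bright areas of `effect_of_synapse_0` are the parts of the picture that this synapse controls; a small `delta` keeps the network close to its normal operating mode.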

Looking at the activation map of the neuron to which this synapse leads, you might think that the second synapse of this neuron also somehow controls the slope of the first reference line, around which the entire geometry of our bird is built. But no: changes in the weight of the second synapse change a different property, the width of the bird's body.

It should be noted here that the joint effect of closely located synapses can often be interpreted as a separate property. In the general case, however, this is not true. For example, look at the map of changes caused by the 418th synapse, which is part of the ensemble that controls the green balance of the picture.

If we try to move the network along the basis formed by the 0th and 418th synapses, we will see the bird leaning to the left and becoming less green, but this can hardly be considered a single property. In their article, the Google researchers claimed that the natural basis, which maximally excites a single structural element of the network, does not differ in apparent semantic significance from a basis built from a linear combination of such vectors for several elements. But we see that this holds only when the synapses along which the greatest change occurs belong to closely related synaptic ensembles. If the synapses regulate very different properties, mixing them does not merge those properties into one.

Now consider a more complex property that affects a small part of the picture, for example the size and shape of the areas under the wings, where our peacock has short tail feathers. Let us write out all the synapses that affect these areas directly (for example, the left one), that is, those responsible for changing individual parts of the bird rather than its general geometry. It turns out that the ensemble includes synapses 21, 22, 23, 24, 25, 26 and 27.

For example, the 23rd and 24th synapses both enlarge the chosen region while affecting the wing length in opposite ways. So, by changing them together, you can change the area under the wing with little effect on the length of the wing, just as in our fourth thought experiment.

All these synapses lead to the 6th neuron, and when you look at its activation map it is, to put it mildly, not obvious that it takes part in controlling this property. This speaks in favor of the visualization method proposed in this article.

If we also look at the synapses that affect the size and shape of this area together with other areas, a few dozen more are added: 37-46 (8th neuron), 70-83 (11th and 12th neurons), 90-94 (12th and 13th neurons), 129-138 (16th neuron) and several others, for example the 178th (19th neuron). In total, about 46 of the 460 synapses in the network are involved in the ensemble. The remaining synapses can bend almost the entire image while leaving the selected area almost untouched; the 28th is an example, even if you pull on it very hard.

So what do we see? An ensemble of synapses that controls our chosen property. If necessary, the network compensates for the ensemble's influence on other properties by a coordinated change of the synaptic weights within the ensemble or by engaging other ensembles. The ensemble uses at least 7 neurons, about one fifth of all the neurons in the network, and these neurons are also used by many other ensembles.
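A search for such an ensemble can be automated, at least roughly. The sketch below shifts every synapse in turn and measures how strongly the picture changes inside and outside a chosen region. The function names, the threshold and the tiny `toy_render` stand-in (which is not a neural network at all) are hypothetical; in the article I examined the change maps by eye.

```python
import numpy as np

def region_influence(render_fn, weights, mask, delta=0.05):
    """For each synapse, the mean picture change inside and outside a boolean H x W mask."""
    base = render_fn(weights)
    inside, outside = [], []
    for i in range(len(weights)):
        shifted = weights.copy()
        shifted[i] += delta
        change = np.abs(render_fn(shifted) - base).sum(axis=-1)
        inside.append(change[mask].mean())
        outside.append(change[~mask].mean())
    return np.array(inside), np.array(outside)

def candidate_ensemble(inside, threshold):
    """Indices of synapses whose effect on the region exceeds `threshold`."""
    return np.flatnonzero(inside > threshold)

def toy_render(weights):
    """Hypothetical stand-in for a network: weight 0 paints the left half, weight 1 the right."""
    image = np.zeros((4, 4, 3))
    image[:, :2] = weights[0]
    image[:, 2:] = weights[1]
    return image

left_half = np.zeros((4, 4), dtype=bool)
left_half[:, :2] = True
inside, outside = region_influence(toy_render, np.array([0.2, -0.4]), left_half)
ensemble = candidate_ensemble(inside, threshold=0.01)
```

Comparing `inside` with `outside` separates synapses that directly shape the region from those that bend the rest of the image and leave the region alone.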

In the same way, one can examine in detail the synapses that affect the color of areas, the shape of the feathers, and many other features present in the image.

Part Four: Analysis of the Results

Elements that encode semantically significant features can exist in a network, but they are not neurons; they are ensembles of synapses of various sizes.

Most neurons take part in several ensembles at once. Therefore, when we study the activation of an individual neuron, we see a combination of the influences of these ensembles. On the one hand, this makes it very difficult to determine the role of a neuron; on the other hand, the researcher will find most neurons, if not literally meaningful, then at least showing obvious signs of meaning.

The Google study mentioned at the very beginning did not, of course, state directly that the structural units of the network do not encode semantically significant features. Nonexistence is extremely difficult to prove. Instead, the authors compared data that activated one random neuron with data that activated a linear combination of several randomly selected neurons in the same layer. They showed that the apparent meaningfulness of such data does not differ, and interpreted this as an indication that individual features are not encoded separately.
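The comparison described above can be illustrated with a short sketch. Given a matrix of layer activations (one row per input), it picks the inputs that most strongly excite either a single neuron or a random linear combination of a few neurons. The function names and the way the random coefficients are drawn are my assumptions; this does not reproduce the exact procedure of the Google study.

```python
import numpy as np

def top_inputs(activations, direction, count):
    """Indices of the `count` inputs whose activation vectors project most strongly onto `direction`."""
    scores = activations @ direction
    return np.argsort(scores)[::-1][:count]

def unit_direction(n_units, unit):
    """Natural basis vector: only one neuron of the layer is selected."""
    e = np.zeros(n_units)
    e[unit] = 1.0
    return e

def random_combination(n_units, n_chosen, rng):
    """Random linear combination of `n_chosen` randomly selected neurons, scaled to unit length."""
    v = np.zeros(n_units)
    chosen = rng.choice(n_units, size=n_chosen, replace=False)
    v[chosen] = rng.normal(size=n_chosen)
    return v / np.linalg.norm(v)
```

The researcher then looks at both sets of selected inputs and judges whether one looks more meaningful than the other.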

However, looking at the material in this article, we now understand that a single neuron, like a linear combination of several nearby neurons, shows a response to the data of several ensembles of synapses, and indeed the two do not differ fundamentally in information content. That is, the observation made in the study is correct and could have been expected; only its interpretation, given in the most general terms, is in doubt.

It also becomes clear why different works on isolating semantically significant features in the structural elements of a network have produced such different results.

In conclusion, I want to return very briefly to where I started: the activation maps of the last neurons in the network, which look so eloquent. A neural network can approximate the function required of it as a sum of functions that each approximate an individual section well. But the more data a network successfully learns, the less it can afford to allocate its structural elements to encoding each individual feature, and the more it is forced to seek and apply high-quality generalizations. If you need to perform a simple classification with a billion adjustable parameters, each of them will most likely control a property in only one small area and only together with hundreds of others. However, if the network is forced to learn a picture with a hundred significant properties and has only four dozen neurons at its disposal, it will either fail or isolate semantically significant features into separate structural units, where they will be easy to detect. The output neurons of the network are connected to only a small number of neurons, and to form a picture with so many significant elements, the network had to gather the results of several ensembles of synapses on the penultimate neurons. Neurons 29, 31 and 33 were lucky: they collected geometric data that is easy to recognize, and we noticed them immediately. Neurons 27, 28 and 32 were less fortunate: they gathered information about the location of color spots in the picture, and only the neural network itself could make sense of that cacophony.