The simpler, the better, or when an ELB is not needed

Most likely, Amazon Web Services, the leading cloud provider, is primarily associated with EC2 (virtual instances) and ELB (balancer). A typical deployment scheme for a web service is EC2 instances behind the balancer (Elastic Load Balancer). There are a lot of advantages to this approach, in particular, we have “out of the box” checking the status of nodes, monitoring (the number of requests, logs), and easily configured auto scaling, etc. But far from always, ELB is the best choice for load balancing, and sometimes it’s not a suitable tool at all.

Most likely, Amazon Web Services, the leading cloud provider, is primarily associated with EC2 (virtual instances) and ELB (balancer). A typical deployment scheme for a web service is EC2 instances behind the balancer (Elastic Load Balancer). There are a lot of advantages to this approach, in particular, we have “out of the box” checking the status of nodes, monitoring (the number of requests, logs), and easily configured auto scaling, etc. But far from always, ELB is the best choice for load balancing, and sometimes it’s not a suitable tool at all. Under the cut, I’ll show two examples of using Route 53 instead of the Elastic Load Balancer: the first is from Loggly’s experience, and the second is from my personal one.

Loggly

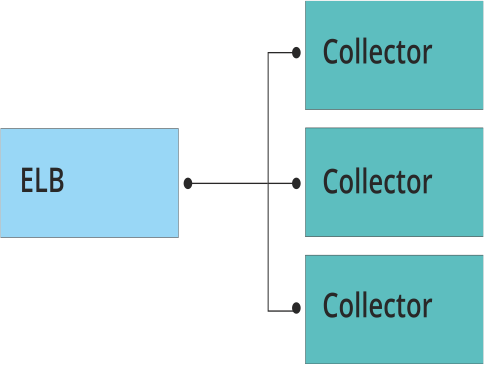

Loggly - a service for centralized collection and analysis of logs. Infrastructure deployed in the AWS cloud. The main work on collecting logs is performed by the so-called collectors - applications written in C ++ that receive information from clients via TCP, UDP, HTTP, HTTPS. The requirements for collectors are very serious: work as quickly as possible and not lose a single package! In other words, the application must collect all the logs, despite the intensity of the incoming traffic. Naturally, the collectors should be horizontally scaled, and the traffic between them should be distributed evenly.

The first pancake is lumpy

The guys from Loggly first decided to use ELB for balancing.

The first problem they encountered was performance. With tens of thousands of events per second, the delay on the balancer began to increase, which was not comparable with the purpose of the collectors. Further problems rained down like ripe apples: it is impossible to forward traffic to port 514, UDP is not supported, well, the well-known problem of the “cold balancer” Pre-Warming the Load Balancer manifests itself with a sharp increase in load.

Replacement on Route53

Then they began to look for a replacement ELB. It turned out that a simple DNS round robin is completely satisfied, and Route53 solves the problem of traffic distribution, eliminating problems with the ELB. Without an intermediate link in the form of a balancer, the delay decreased as traffic began to go directly from clients to instances with collectors. No additional “warm-up” is required with sharp increases in message volumes. Route53 also checks the "health" of the collectors and increases the availability of the system as a whole, information loss is reduced to zero.

Conclusion

For highly loaded services with sharp fluctuations in the number of requests using different protocols and ports, it is better not to try to use ELB: sooner or later you will run into limitations and problems.

Percona cluster

In our infrastructure, the main data warehouse is Percona Cluster. Many applications use it. The main requirements were fault tolerance, performance and minimal effort to support it. I wanted to do it once and forget it.

On the application side, we decided to use a constant DNS name for each environment (dev, test, live) to communicate with the cluster. This made life easier for developers and themselves in the configuration and assembly of applications.

ELB did not fit

The balancer did not suit us for approximately the same reasons as in the case of Loggly. We immediately thought about HA Proxy as a load balancer, especially since Percona is advised to use just such a solution. But I did not want to receive one more point of failure in the form of HA Proxy server. In addition, no one needs the additional costs of maintenance and administration.

Route53 + Percona

When we paid attention to this service as a load balancer and checking the status of cluster servers, it seemed that in a few clicks we would get the desired result. But, after a detailed study of the documentation, they found a fundamental limitation that cut down the entire architecture of the environment and the cluster in it. The fact is that Percona Cluster, like most other servers, is located in private subnets, and Route 53 can only check public addresses!

The disappointment did not last long - a new idea came up: to do the state check ourselves and use the Route 53 API to update the DNS record.

Final decision

On the project, Monit is used everywhere to monitor system services. It was configured for the following automatic actions:

- MySQL port check

- Change DNS records if no response

- Notification sending

- Attempt to restart service

We get this behavior when one of the cluster nodes crashes: the support service receives a notification, the DNS record changes so that the affected node does not receive requests, monitd tries to restart the service, if it fails, the notification again. The application continues to work as if nothing had happened, without even knowing about the problems.

Conclusion

Two cases described in the article show that Route 53 is sometimes better suited for load balancing and fault tolerance than ELB. At the same time, the cloud providers API allows you to bypass many of the limitations of their services.