HDR vs LDR, implementation of HDR Rendering


As promised, I am publishing the second article on some aspects of 3D game development. Today I'll talk about a technique used by almost every AAA-class project: HDR rendering. If you are interested, read on.

But first, a few words. Judging by the previous article, the Habrahabr audience has buried Microsoft XNA: supposedly, making anything with it is like writing games for the ZX Spectrum. I was given examples: "After all, there are SharpDX, SlimDX, OpenTK!", and even Unity was mentioned. But let's dwell on the first three: they are all thin .NET wrappers over the native graphics APIs (DirectX or, in OpenTK's case, OpenGL), and Unity is a sandbox engine altogether. And what about DirectX 10+? It is not in XNA and never will be. Still, the overwhelming majority of effects, features, and techniques are implemented on top of DirectX 9c, and DirectX 10+ only adds extra functionality (SM 4.0, SM 5.0).

Take Crysis 2, for example:

Neither of these two screenshots uses DirectX 10 or DirectX 11. So why do people think that working with XNA is necrophilia? Yes, Microsoft has stopped supporting XNA, but what it offers will be enough for at least another three years. Moreover, there is now MonoGame: it is open source, cross-platform (Windows, Unix, Mac, Android, iOS, etc.) and keeps the same XNA architecture. By the way, FEZ from the previous article was written with MonoGame. And finally, these articles are about 3D computer graphics in general (everything said here holds for both OpenGL and DirectX), not about XNA, as you might think. XNA is just a tool in our case.

Okay, let's go

Games usually use LDR (Low Dynamic Range) rendering. This means that the color in the back buffer is limited to the range 0...1, with 8 bits allocated to each channel, which gives 256 gradations. For example, 255, 255, 255 is white: all three channels (RGB) are at the maximum gradation. LDR is a poor fit for realistic rendering, because in the real world brightness is not confined to the range between zero and one. This is where HDRR comes to our aid.

For starters, what is HDR? High Dynamic Range Rendering, sometimes just "High Dynamic Range", is a graphics technique used in computer games to render the image more expressively when the scene has contrasting lighting. The essence of the approach is that we draw our geometry (and lighting) without being limited to the range from zero to one: one light source can give a pixel a brightness of 0.5 units, and another 100 units. But the screen can still only display the LDR format. If we simply divide every color value in the back buffer by the maximum brightness in the scene, we get LDR again, and the 0.5-unit light source becomes almost invisible next to the second one. A special method called tone mapping was invented for exactly this case. Its essence is that we bring the dynamic range down to LDR depending on the average brightness of the scene.

To understand what I mean, consider a scene with two rooms, one indoor and one outdoor. The first room has an artificial light source; the second is lit by the sun. The brightness of the sun is an order of magnitude higher than that of the artificial light source. In the real world, when we are in the first room we adapt to its lighting, and when we enter the other room we adapt to a different level of lighting. When we look from the first room into the second, it seems excessively bright to us, and when we look from the second into the first, it seems black.
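The problem with dividing by the maximum brightness can be checked with a few lines of arithmetic. The sketch below is plain Python rather than shader code, so it can be run anywhere; the helper names `to_8bit` and `normalize_by_max` are made up for this illustration, and the two brightness values are the ones from the example in the text.

```python
def to_8bit(value):
    """Clamp a value to [0, 1] and quantize it to one of 256 gradations."""
    clamped = min(max(value, 0.0), 1.0)
    return round(clamped * 255)


def normalize_by_max(values):
    """Naive HDR -> LDR conversion: divide everything by the scene maximum."""
    peak = max(values)
    return [v / peak for v in values]


# The two light sources from the text: 0.5 units and 100 units.
lamp, sun = 0.5, 100.0
ldr = [to_8bit(v) for v in normalize_by_max([lamp, sun])]
print(ldr)  # 8-bit levels of the lamp and the sun
```

With these inputs the lamp ends up at level 1 out of 255 while the sun takes level 255, which is why a plain division is not enough and the mapping has to depend on what the viewer is adapted to.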

Another example: a single outdoor room. It contains the sun itself and the diffused light from the sun. The brightness of the sun is an order of magnitude higher than that of its diffused light. In LDR, both brightness values would end up equal. With HDR, therefore, you can get realistic glare from various surfaces. This is very noticeable on water:

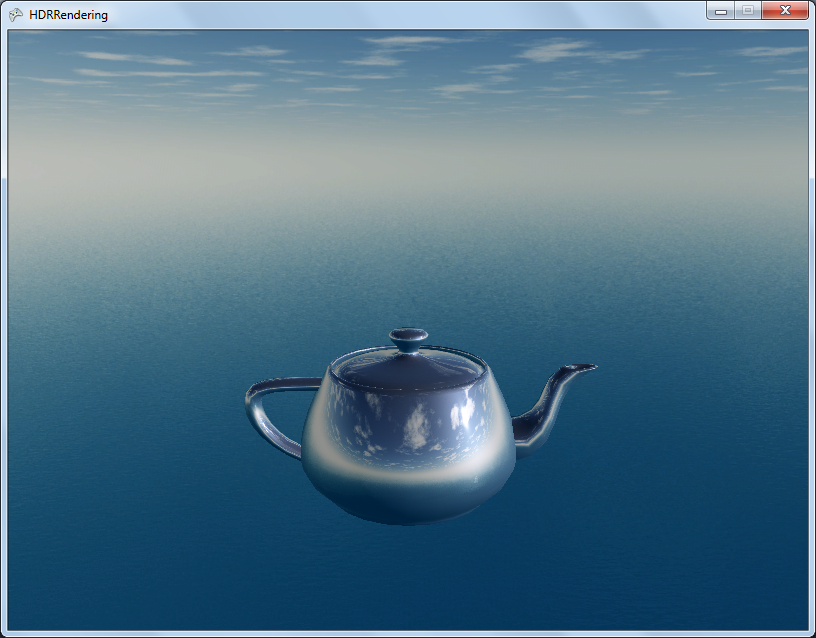

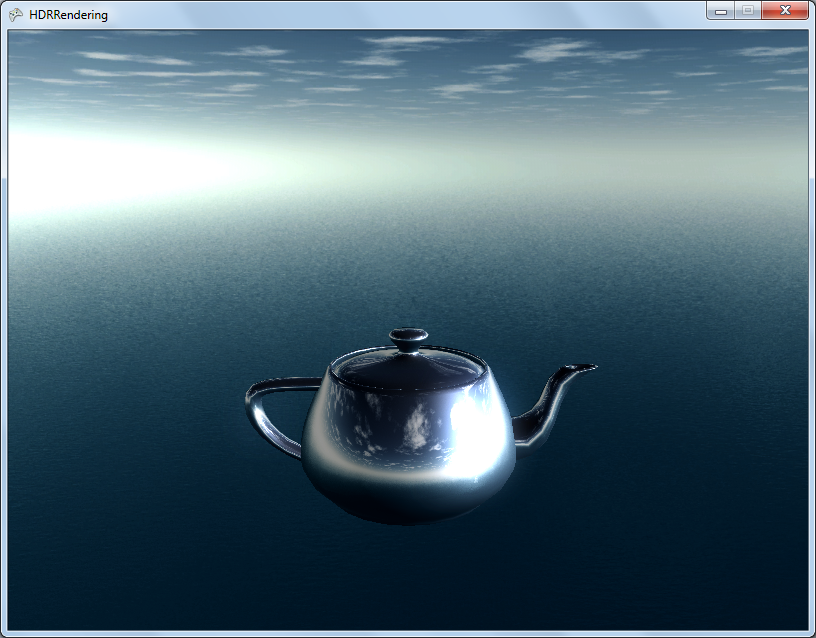

Or in highlights on a surface:

And in the contrast of the scene as a whole (HDR on the left, LDR on the right):

HDR is usually accompanied by bloom, where bright areas are blurred and superimposed on the main image:

This makes lighting even softer.
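As a rough illustration of "blur the bright areas and add them back", here is a minimal sketch in plain Python. It works on a single row of brightness values instead of a full image, and the function names, the threshold, and the box blur are all assumptions chosen for brevity; a real bloom pass would run in a shader and would normally use a wider, smoother blur.

```python
def bright_pass(row, threshold):
    """Keep only the part of each value that exceeds the threshold."""
    return [max(v - threshold, 0.0) for v in row]


def box_blur(row, radius):
    """Average each value with its neighbours (window shrinks at the edges)."""
    out = []
    for i in range(len(row)):
        lo = max(i - radius, 0)
        hi = min(i + radius, len(row) - 1)
        window = row[lo:hi + 1]
        out.append(sum(window) / len(window))
    return out


def bloom(row, threshold=1.0, radius=1, intensity=1.0):
    """Add the blurred bright areas on top of the original values."""
    glow = box_blur(bright_pass(row, threshold), radius)
    return [v + intensity * g for v, g in zip(row, glow)]


# One very bright HDR pixel in a dim row.
row = [0.2, 0.2, 4.0, 0.2, 0.2]
result = bloom(row)
print(result)
```

The bright pixel leaks some of its energy into its neighbours, which is the soft halo seen around light sources. Note that the bright pass only makes sense because the HDR buffer can hold values above 1.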

Also, as a bonus, I will talk about color grading. This trick is commonly used in AAA-class games.

Color grading

Very often a scene in a game must have its own color tone. This tone can be shared by the whole game or apply only to individual parts of a scene. To avoid keeping a hundred post-processing shaders for every case, the color grading approach is used. What is the essence of this approach?

The familiar letters RGB denote a three-dimensional color space in which each channel is a coordinate. In the R8G8B8 format there are 256 gradations per channel. So what happens if we apply ordinary image-processing operations (for example, curves or contrast) to this space? The space changes, and afterwards we can map any pixel to a pixel from this modified space.

Let's create a simple RGB space (note that we take only every 8th value, because with all 256 gradations the texture would be very large):

This is a three-dimensional texture, where each axis has its own channel.

Then take a scene that needs to be modified (and add our space to the image):

We carry out the transformations we need (by eye):

And we extract our modified space:

Now, with the help of this space, we can apply all of these color modifications to any image, simply by matching each original color against the modified color space.
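The workflow above can be sketched on the CPU. The Python below builds the 32x32x32 "reference" space, runs a color transform over every entry (standing in for the edits made by eye in an image editor), and then looks colors up in the result. All names here (`make_identity_lut`, `grade_lut`, `lookup`, `warm_tint`) are hypothetical, and the lookup uses the nearest entry only, without interpolation.

```python
SIZE = 32  # 256 gradations sampled at every 8th value


def make_identity_lut(size=SIZE):
    """lut[r][g][b] holds the color that the input (r, g, b) maps to."""
    step = 1.0 / (size - 1)
    return [[[(r * step, g * step, b * step) for b in range(size)]
             for g in range(size)]
            for r in range(size)]


def grade_lut(lut, transform):
    """Apply a color transform to every entry of the space."""
    return [[[transform(c) for c in row] for row in plane] for plane in lut]


def lookup(lut, color):
    """Nearest-entry lookup for a color with channels in [0, 1]."""
    size = len(lut)
    r, g, b = (min(max(int(round(ch * (size - 1))), 0), size - 1)
               for ch in color)
    return lut[r][g][b]


def warm_tint(color):
    """Example edit: slightly more red, slightly less blue."""
    r, g, b = color
    return (min(r * 1.1, 1.0), g, b * 0.9)


identity = make_identity_lut()
graded = grade_lut(identity, warm_tint)
print(lookup(graded, (1.0, 1.0, 1.0)))
```

The point of the design is that `warm_tint` could be replaced by any chain of edits, however expensive, and the per-pixel cost at run time stays a single lookup.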

Implementation

Now, briefly, on implementing HDR in XNA. In XNA the back buffer format is (mostly) R8G8B8A8, so rendering directly to the screen cannot support HDR by definition. To work around this, we need to create a new RenderTarget (I previously described how they work here) with a special format: HalfVector4*. This format supports floating-point values for a RenderTarget.

* XNA also has the HdrBlendable format. It is the same as HalfVector4, but the RT itself takes up less space (because we don't need floating point in the alpha channel).

Let's create the necessary RenderTarget:

    private void _makeRenderTarget()
    {
        // Use regular fp16
        _sceneTarget = new RenderTarget2D(GraphicsDevice,
            GraphicsDevice.PresentationParameters.BackBufferWidth,
            GraphicsDevice.PresentationParameters.BackBufferHeight,
            false,
            SurfaceFormat.HdrBlendable, DepthFormat.Depth24Stencil8,
            0,
            RenderTargetUsage.DiscardContents);
    }

We create a new RT the size of the back buffer (the screen resolution), with mipmaps disabled (since this texture will be drawn on a screen quad), with the HdrBlendable (or HalfVector4) surface format and a depth/stencil buffer with 24 bits of depth and 8 bits of stencil. Multisampling is turned off as well.

For this RenderTarget it is important to enable the depth buffer (unlike a usual post-process RT), since we will draw our geometry into it.

After that, everything is just like in LDR: we draw the scene, only now we don't have to limit brightness to [0...1].

Let's add a skybox with its nominal brightness multiplied by three and the classic Utah teapot with DirectionalLight lighting and a reflective surface.

The scene is ready, and now we need to somehow bring the HDR format down to LDR. Let's take the simplest tone mapping: divide all values by some chosen max value.

    float3 _toneSimple(float3 vColor, float max)
    {
        return vColor / max;
    }

If we rotate the camera, we see that the picture is still static, and that a similar image could easily be obtained by simply applying contrast to it.

In real life our eye adapts to the lighting: in a poorly lit room we can still see, but only as long as there is no bright light source in front of our eyes. This is called light adaptation. And the best part is that HDR and light adaptation blend perfectly with each other.

Now we need to calculate the average color value on the screen. This is rather problematic, because floating-point formats do not support filtering. We proceed as follows: create N render targets, each one smaller than the previous:

    int cycles = DOWNSAMPLER_ADAPATION_CYCLES;
    float delmiter = 1f / ((float)cycles + 1);

    _downscaleAverageColor = new RenderTarget2D[cycles];

    for (int i = 0; i < cycles; i++)
    {
        _downscaleAverageColor[(cycles - 1) - i] = new RenderTarget2D(_graphics,
            (int)((float)width * delmiter * (i + 1)),
            (int)((float)height * delmiter * (i + 1)),
            false, SurfaceFormat.HdrBlendable, DepthFormat.None);
    }

Then we draw each RT into the next, smaller one with some blur. After these passes we end up with a 1x1 texture which, in effect, contains the average color.
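The idea of shrinking the image step by step until one value is left can be tried out on the CPU. The following Python sketch is an illustration under simplifying assumptions, not the code from this article: it works on a square, power-of-two grid of luminance values and halves it with a 2x2 box filter on every pass, whereas the render targets above shrink in linear steps and use a blur. The function names are hypothetical.

```python
def downsample_2x(image):
    """Halve width and height by averaging each 2x2 block."""
    h, w = len(image), len(image[0])
    return [[(image[y][x] + image[y][x + 1] +
              image[y + 1][x] + image[y + 1][x + 1]) / 4.0
             for x in range(0, w, 2)]
            for y in range(0, h, 2)]


def average_by_downsampling(image):
    """Repeat the reduction until a single 1x1 value remains.

    Assumes a square image whose side is a power of two.
    """
    while len(image) > 1:
        image = downsample_2x(image)
    return image[0][0]


# A dim 4x4 scene with one very bright pixel.
scene = [[1.0, 1.0, 1.0, 1.0],
         [1.0, 1.0, 1.0, 1.0],
         [1.0, 1.0, 1.0, 1.0],
         [1.0, 1.0, 1.0, 97.0]]
average = average_by_downsampling(scene)
print(average)
```

For this input the two passes give exactly the arithmetic mean of the 16 values. On the GPU the same reduction is spread over several small draw calls, which is much cheaper than reading the whole frame back to the CPU.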

If you run all of this now, adaptation will indeed work, but it will be instantaneous, which is not how it happens in reality. When we look from a very dark area into a very bright one, we should be blinded at first (in the form of increased brightness), and only then should everything return to normal. To do this, it is enough to create one more 1x1 RT that holds the current adaptation value, and each frame move the current adaptation toward the color calculated for that frame. The size of this step should be tied to gameTime.ElapsedGameTime, so that the FPS does not affect the rate of adaptation.
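One common way to make such a step independent of the frame rate is an exponential blend whose factor depends only on the elapsed time. The Python sketch below shows that variant; it is an assumption about how the step could be computed, not the formula used in this article's source, and the `speed` parameter is a made-up tuning constant. In XNA, `dt` would come from gameTime.ElapsedGameTime.TotalSeconds.

```python
import math


def adapt(current, target, dt, speed=1.5):
    """Move the adapted luminance toward the measured one.

    The blend factor depends only on elapsed time, so many short
    frames and a few long frames cover the same distance.
    """
    blend = 1.0 - math.exp(-dt * speed)
    return current + (target - current) * blend


def simulate(fps, seconds, start, target):
    """Run the adaptation at a fixed frame rate for a given duration."""
    value = start
    dt = 1.0 / fps
    for _ in range(int(round(fps * seconds))):
        value = adapt(value, target, dt)
    return value


# Stepping out of a dark room (0.1) into sunlight (5.0) for two seconds.
at_30_fps = simulate(30, 2.0, start=0.1, target=5.0)
at_120_fps = simulate(120, 2.0, start=0.1, target=5.0)
print(at_30_fps, at_120_fps)
```

Both runs arrive at the same adaptation level after two seconds, up to floating-point rounding. A plain `current += (target - current) * k` with a constant `k` per frame would adapt four times faster at 120 FPS than at 30 FPS.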

Now we can pass our average color as the max parameter of _toneSimple.
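To see how the average drives the result, it may help to evaluate a tone curve by hand. The Python sketch below uses the luminance curve that the Reinhard-style shader functions in this article apply, L * (1 + L / white^2) / (1 + L), with L being the pixel luminance scaled by exposure / average. The function name and the numeric values are assumptions picked for illustration.

```python
def reinhard_luminance(lum, average, exposure, white_point):
    """Compress an HDR luminance into the displayable range.

    The pixel luminance is first scaled by exposure / average, then
    passed through L * (1 + L / white^2) / (1 + L).
    """
    scaled = lum * exposure / average
    return scaled * (1.0 + scaled / (white_point * white_point)) / (1.0 + scaled)


# The same pixel seen with two different adaptation states.
pixel = 8.0
dark_adapted = reinhard_luminance(pixel, average=1.0, exposure=0.5, white_point=4.0)
bright_adapted = reinhard_luminance(pixel, average=16.0, exposure=0.5, white_point=4.0)
print(dark_adapted, bright_adapted)
```

When the viewer is adapted to a dark scene, the pixel reaches the white point and maps to full brightness; when the average is high, the same pixel lands in the lower part of the range. This is the "blinded when looking from dark to bright" behaviour described above.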

There are tons of tone mapping formulas; here are some of them:

Reinhard

    float3 _toneReinhard(float3 vColor, float average, float exposure, float whitePoint)
    {
        // RGB -> XYZ conversion
        const float3x3 RGB2XYZ = { 0.5141364, 0.3238786,  0.16036376,
                                   0.265068,  0.67023428, 0.06409157,
                                   0.0241188, 0.1228178,  0.84442666 };
        float3 XYZ = mul(RGB2XYZ, vColor.rgb);

        // XYZ -> Yxy conversion
        float3 Yxy;
        Yxy.r = XYZ.g;                             // copy luminance Y
        Yxy.g = XYZ.r / (XYZ.r + XYZ.g + XYZ.b);   // x = X / (X + Y + Z)
        Yxy.b = XYZ.g / (XYZ.r + XYZ.g + XYZ.b);   // y = Y / (X + Y + Z)

        // (Lp) Map average luminance to the middlegrey zone by scaling pixel luminance
        float Lp = Yxy.r * exposure / average;

        // (Ld) Scale all luminance within a displayable range of 0 to 1
        Yxy.r = (Lp * (1.0f + Lp / (whitePoint * whitePoint))) / (1.0f + Lp);

        // Yxy -> XYZ conversion
        XYZ.r = Yxy.r * Yxy.g / Yxy.b;                 // X = Y * x / y
        XYZ.g = Yxy.r;                                 // copy luminance Y
        XYZ.b = Yxy.r * (1 - Yxy.g - Yxy.b) / Yxy.b;   // Z = Y * (1-x-y) / y

        // XYZ -> RGB conversion
        const float3x3 XYZ2RGB = {  2.5651, -1.1665, -0.3986,
                                   -1.0217,  1.9777,  0.0439,
                                    0.0753, -0.2543,  1.1892 };
        return mul(XYZ2RGB, XYZ);
    }

Exposure

    float3 _toneExposure(float3 vColor, float average)
    {
        float T = pow(average, -1);

        float3 result = float3(0, 0, 0);
        result.r = 1 - exp(-T * vColor.r);
        result.g = 1 - exp(-T * vColor.g);
        result.b = 1 - exp(-T * vColor.b);

        return result;
    }

I use my own formula:

Exposure2

    float3 _toneDefault(float3 vColor, float average)
    {
        float fLumAvg = exp(average);

        // Calculate the luminance of the current pixel
        float fLumPixel = dot(vColor, LUM_CONVERT);

        // Apply the modified operator (Eq. 4)
        float fLumScaled = (fLumPixel * g_fMiddleGrey) / fLumAvg;
        float fLumCompressed = (fLumScaled * (1 + (fLumScaled / (g_fMaxLuminance * g_fMaxLuminance)))) / (1 + fLumScaled);

        return fLumCompressed * vColor;
    }

The next step is bloom (I partially described it here) and color grading:

Using color grading:

After tone mapping, every pixel's color value (RGB) lies in the range from 0 to 1. Our color grading space also nominally lies in the range from 0 to 1. Therefore, we can replace the current pixel color with the color stored at that position in the color grading space. Sampler filtering will linearly interpolate between the 32 values of the color grading map. In other words, we are effectively replacing the reference color space with our modified one.

For color grading, add the following function:

    float3 gradColor(float3 color)
    {
        return tex3D(ColorGradingSampler, float3(color.r, color.b, color.g)).rgb;
    }

where ColorGradingSampler is a three-dimensional sampler.
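What the filtered three-dimensional sampler does for us can be written out by hand. The Python sketch below performs trilinear interpolation between the eight nearest entries of a small lookup table; it is a CPU-side approximation under stated assumptions, not a description of the hardware. In particular, it indexes the table as `lut[r][g][b]`, uses a tiny 4x4x4 identity table to stay readable, and ignores texel-center offsets that a real sampler has to deal with. All function names are hypothetical.

```python
def make_identity_lut(size):
    """lut[r][g][b] maps every color to itself."""
    step = 1.0 / (size - 1)
    return [[[(r * step, g * step, b * step) for b in range(size)]
             for g in range(size)]
            for r in range(size)]


def lerp(a, b, t):
    """Linear interpolation between two colors."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def sample_trilinear(lut, color):
    """Blend the 8 entries surrounding the color (table size must be >= 2)."""
    size = len(lut)
    pos = [min(max(ch, 0.0), 1.0) * (size - 1) for ch in color]
    lo = [min(int(p), size - 2) for p in pos]
    t = [p - l for p, l in zip(pos, lo)]

    def at(dr, dg, db):
        return lut[lo[0] + dr][lo[1] + dg][lo[2] + db]

    # Interpolate along red, then green, then blue.
    c00 = lerp(at(0, 0, 0), at(1, 0, 0), t[0])
    c10 = lerp(at(0, 1, 0), at(1, 1, 0), t[0])
    c01 = lerp(at(0, 0, 1), at(1, 0, 1), t[0])
    c11 = lerp(at(0, 1, 1), at(1, 1, 1), t[0])
    c0 = lerp(c00, c10, t[1])
    c1 = lerp(c01, c11, t[1])
    return lerp(c0, c1, t[2])


lut = make_identity_lut(4)
sampled = sample_trilinear(lut, (0.3, 0.55, 0.9))
print(sampled)
```

With an identity table the sampled color matches the input up to rounding, which is a handy sanity check: a color grading table that has not been edited should leave the image unchanged.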

And finally, an LDR/HDR comparison:

LDR:

HDR:

Conclusion

This simple approach is one of the signature features of 3D AAA games. And as you can see, it can be implemented on good old DirectX 9c, and the implementation on DirectX 10+ is not fundamentally different. You will find more information in the source.

It is also worth distinguishing between HDRI (used in photography) and HDRR (used in rendering).

Conclusion 2

Unfortunately, when I wrote articles on game development in 2012, there was much more feedback and there were many more ratings; this time my expectations were not quite met. I am not chasing the article's rating, and I do not want it to be artificially high or low. I want it to be assessed, not necessarily with "Good article!", but also with "In my opinion the article is incomplete; the situation with %item% remains unclear." I am glad even of a negative but constructive assessment. As it is, I publish an article and it collects only a couple of comments and ratings. And given that Habrahabr is a self-regulating community, the conclusion suggests itself: the article is not interesting, so there is no point in publishing such things.

P.S. We are all human and make mistakes, so if you find a mistake in the text, send me a private message instead of rushing to write an angry comment!