Coachman, do not drive horses or why is a quick backup not always good?

Recently, Veeam announced a new feature in the upcoming update of its main product, the whole point of which is to prevent the application from working too quickly. It sounds at least strange, so I suggest trying to figure out why it is necessary to artificially reduce the speed of backup creation when the voice of the mind actively insists on the contrary.

And, since “they start to dance from the stove”, we will look at the situation from the very beginning - with the principles underlying virtual backups, dig a little inward and reveal one obvious problem that about 90% of virtual environment administrators do not take into account. I also note that to simplify the narrative, descriptions of all the functions will be given on the basis of the VMware hypervisor functionality, as the most transparent and documented.

The main idea is to reduce the load on the storage where the production system is located, since uncontrolled activity often leads to too much delay in I / O operations, and this causes unpleasant consequences:

• Application performance inside the guest OS drops to critically low levels and even below

• Collapse of cluster structures such as DAG, SQL always on, etc.

• The guest OS may simply lose the system drive

AND many other, at first glance, incredible things related to degradation of performance at the storage level.

It's no secret that backups are based on snapshots. This alpha and omega allows you to achieve two extremely important goals: the consistency of the file structure on the disks of the virtual machine and the protection of the files of the disks themselves for the backup period.

After successfully stopping disk operations and creating a snapshot, you can proceed directly to saving the VM disks. You can do this in different ways:

• You can pick up a file by accessing the host directly through the LAN stack. This method always works, but it is always very slow (unless the entire backup infrastructure is built on 10G links).

• A more profitable option is the ability to temporarily attach the disk to another machine and download it from there. In this case, the data will be obtained directly from the storage, through the I / O stack. Copy speed in this case, all other things being equal, will be better by at least an order of magnitude.

• And the fastest, but also the most expensive option is to pick up the file directly from the SAN, having received all the necessary metadata from the hypervisor. In this case, the copy speed can be higher and two orders of magnitude, and three. It all depends on the connection between the SAN and the backup storage location.

But no matter what option you use, after the copying of the disks is completed, it is time to reverse the operation - all changes that have occurred with the virtual machine during backup and accumulated in the snapshot should be consolidated with the original disk, after which the snapshot can be safely deleted, and VM will continue to work as if nothing had happened.

And here two very important nuances come up, which few pay attention to until they encounter their manifestations.

Snapshot consolidation is a very difficult procedure from the point of view of the load on the host storage system and directly depends on the storage speed (performance). This time. And two - this operation has the highest priority at the hypervisor level, because there is nothing more important than the integrity of information, and it’s hard to argue with that.

Adding the first to the second, we can easily conclude that if a heartbeat request arrives at the cluster VM during the snapshot consolidation, the hypervisor can easily delay its transmission (by placing it in a temporary buffer) if it considers that the response to this request will take an unacceptable amount of time and may result in a mismatch in the consolidation operation.

At this point, we will have three opinions:

• From the point of view of the guest OS, everything is fine with her. It works, but the fact that the hypervisor freezes it a little is not always noticeable at its level. But it is worth noting that there are exceptions, and, as described at the beginning of the article, the OS may lose touch with its disks. Including system with all the consequences.

• From the point of view of the hypervisor, everything is just fine. He carefully removes the snapshot, and the requests entering the VM, at his discretion, put in his buffer or in the so-called Helper snapshot, so that later he can send this data to the VM. Data integrity is ensured, everyone is dancing.

• But on the cluster at this moment, panic. A cluster member (or even several participants) has already missed several polls, and it’s time to start recounting the quorum, or even to report that the cluster is more likely dead than alive. In any case, the situation is very unpleasant.

If someone wants to test their cluster, I want to warn you that you should not create a snapshot, count to ten and immediately delete it. Snapshot must live, buildmuscle... grow in volume and only then it needs to be consolidated. Otherwise, the test does not make sense. You can estimate the time required for a correct experiment in a simple way: download vmdk VM files via Datastore Browser. The time required for such an operation will be 99% correct indicator.

Some clients after their DAG again collapsed after a three-day backup of the mailbox server located on the storage system of the late Paleolithic times, love to blame us for all mortal sins, referring to the obvious connection - the cluster fell apart during backup, which means that backup software is to blame. But, unfortunately, all the facts described in this article are confirmed by VMware itself. In the articleplaced in their KB, it is written in black and white - during the consolidation of the snapshot, the loaded VM may stop responding from outside. Typical consequences of this are a BSOD reporting a lost system drive; if the VM was part of a cluster, then it is excluded from it, the cluster breaks up, or in the best case you just see a very strong degradation in performance (although this can be relatively successfully addressed by increasing RAM). And in best practices it is not recommended to store a snapshot for more than two days.

For our part, we also collected a selection of information on this topic in our HF article .

Obviously, the bottleneck in this process is the internal speed of storage. In the morning it seemed that we had just a great drive, and by evening we had to turn off the production to reduce the load on the store, in the hope that this time it would be possible to remove the forgotten snapshot that managed to grow to hundreds of gigabytes a month ago (and VM performance dropped to a critical point).

In the beginning, the frontal attack option was tested - a parameter was introduced that directly limits the number of operations with storage systems. But this method had too many drawbacks inherent in static systems. And sometimes it came to a shot in the leg - young pioneers who like to store backups on the same storage system where the VMs are located can identify all possible ways to make backup even slower, and bring the host to panic.

Therefore, in the new version, it was decided to completely abandon such a model and switch to the dynamic processing of current I / O latency indicators. At the global application settings level, it looks like this:

Moreover, it should be noted that in addition to global settings, you can set your own parameters for each specific data storage or volume, in case of working with Hyper-V. But this is a matter of licensing.

So consider the same proposed bunch of parameters.

“ Stop assigning new tasks to datastore at:“If during backup we see that the latency (IOPS latency) has exceeded the acceptable threshold, the VBR server will not create new file transfer streams until the storage performance returns to the acceptable level. Or simply put, we will not try to squeeze out all the juices from the storage if it is heavily loaded.

“ Throttle I / O of existing tasks at:”- is applied if the backup task is already running, and there will be a delay in accessing the disk caused by external load. For example, if during backup, an SQL server is launched on one of the machines, creating an additional load on the same storage system, then the VBR server will artificially reduce its own disk access rate so as not to interfere with the production system. When the performance returns to its previous level, the VBR server will also automatically increase the data transfer rate. This paragraph supplements the previous one. If, as a first measure, we simply stop creating an additional load while working at a certain steady level, then the second measure is to reduce the intensity of ongoing operations to please production systems.

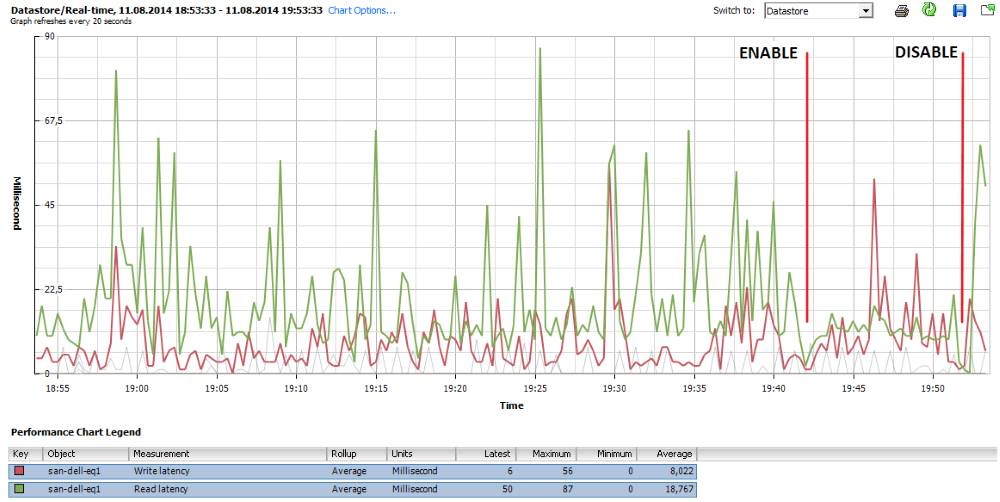

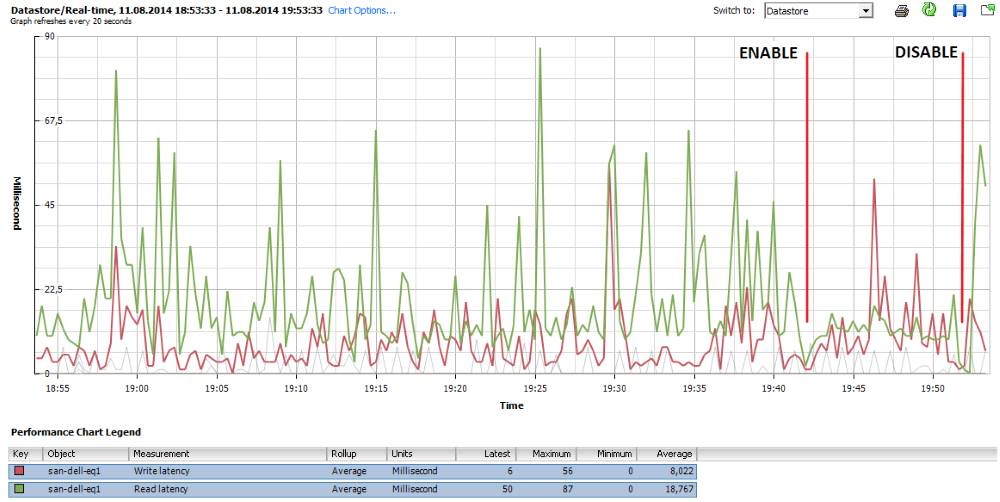

An example of how these parameters work out is clearly visible in this screenshot:

At the very beginning of the graph, the storage is in a “rest” state, i.e. normal working procedures occur. At 18:55, a backup task was included, which caused the expected increase in delays in read / write operations with pronounced peaks reaching 80 ms. Around 19:42, the control mechanism was activated (Enable mark), and, as we can see, the average value of the delays in read operations (green graph) is kept at 20 ms and grows only when the control mechanism is turned off (Disable mark).

I note that the default thresholds of 20 and 30 ms were not taken from the ceiling, but as a result of the evaluation of monitoring data from many of our customers, who kindly agreed to provide them. Thus, these values are perfect for most virtual environments.

Perhaps this is where I will finish the description of this function. I tried to make it as accessible as possible, but if there are still questions, I will be glad to continue in the comments or through the PM.

Feel free to ask questions about our other products or their individual functions. I will try to answer everyone, and if it does not work out briefly, I will make a separate article.

And, since “they start to dance from the stove”, we will look at the situation from the very beginning - with the principles underlying virtual backups, dig a little inward and reveal one obvious problem that about 90% of virtual environment administrators do not take into account. I also note that to simplify the narrative, descriptions of all the functions will be given on the basis of the VMware hypervisor functionality, as the most transparent and documented.

The main idea is to reduce the load on the storage where the production system is located, since uncontrolled activity often leads to too much delay in I / O operations, and this causes unpleasant consequences:

• Application performance inside the guest OS drops to critically low levels and even below

• Collapse of cluster structures such as DAG, SQL always on, etc.

• The guest OS may simply lose the system drive

AND many other, at first glance, incredible things related to degradation of performance at the storage level.

How did we get to this

It's no secret that backups are based on snapshots. This alpha and omega allows you to achieve two extremely important goals: the consistency of the file structure on the disks of the virtual machine and the protection of the files of the disks themselves for the backup period.

After successfully stopping disk operations and creating a snapshot, you can proceed directly to saving the VM disks. You can do this in different ways:

• You can pick up a file by accessing the host directly through the LAN stack. This method always works, but it is always very slow (unless the entire backup infrastructure is built on 10G links).

• A more profitable option is the ability to temporarily attach the disk to another machine and download it from there. In this case, the data will be obtained directly from the storage, through the I / O stack. Copy speed in this case, all other things being equal, will be better by at least an order of magnitude.

• And the fastest, but also the most expensive option is to pick up the file directly from the SAN, having received all the necessary metadata from the hypervisor. In this case, the copy speed can be higher and two orders of magnitude, and three. It all depends on the connection between the SAN and the backup storage location.

But no matter what option you use, after the copying of the disks is completed, it is time to reverse the operation - all changes that have occurred with the virtual machine during backup and accumulated in the snapshot should be consolidated with the original disk, after which the snapshot can be safely deleted, and VM will continue to work as if nothing had happened.

And here two very important nuances come up, which few pay attention to until they encounter their manifestations.

Snapshot consolidation is a very difficult procedure from the point of view of the load on the host storage system and directly depends on the storage speed (performance). This time. And two - this operation has the highest priority at the hypervisor level, because there is nothing more important than the integrity of information, and it’s hard to argue with that.

Adding the first to the second, we can easily conclude that if a heartbeat request arrives at the cluster VM during the snapshot consolidation, the hypervisor can easily delay its transmission (by placing it in a temporary buffer) if it considers that the response to this request will take an unacceptable amount of time and may result in a mismatch in the consolidation operation.

At this point, we will have three opinions:

• From the point of view of the guest OS, everything is fine with her. It works, but the fact that the hypervisor freezes it a little is not always noticeable at its level. But it is worth noting that there are exceptions, and, as described at the beginning of the article, the OS may lose touch with its disks. Including system with all the consequences.

• From the point of view of the hypervisor, everything is just fine. He carefully removes the snapshot, and the requests entering the VM, at his discretion, put in his buffer or in the so-called Helper snapshot, so that later he can send this data to the VM. Data integrity is ensured, everyone is dancing.

• But on the cluster at this moment, panic. A cluster member (or even several participants) has already missed several polls, and it’s time to start recounting the quorum, or even to report that the cluster is more likely dead than alive. In any case, the situation is very unpleasant.

If someone wants to test their cluster, I want to warn you that you should not create a snapshot, count to ten and immediately delete it. Snapshot must live, build

Mom, it's not my fault

Some clients after their DAG again collapsed after a three-day backup of the mailbox server located on the storage system of the late Paleolithic times, love to blame us for all mortal sins, referring to the obvious connection - the cluster fell apart during backup, which means that backup software is to blame. But, unfortunately, all the facts described in this article are confirmed by VMware itself. In the articleplaced in their KB, it is written in black and white - during the consolidation of the snapshot, the loaded VM may stop responding from outside. Typical consequences of this are a BSOD reporting a lost system drive; if the VM was part of a cluster, then it is excluded from it, the cluster breaks up, or in the best case you just see a very strong degradation in performance (although this can be relatively successfully addressed by increasing RAM). And in best practices it is not recommended to store a snapshot for more than two days.

For our part, we also collected a selection of information on this topic in our HF article .

How we decided to fight this

Obviously, the bottleneck in this process is the internal speed of storage. In the morning it seemed that we had just a great drive, and by evening we had to turn off the production to reduce the load on the store, in the hope that this time it would be possible to remove the forgotten snapshot that managed to grow to hundreds of gigabytes a month ago (and VM performance dropped to a critical point).

In the beginning, the frontal attack option was tested - a parameter was introduced that directly limits the number of operations with storage systems. But this method had too many drawbacks inherent in static systems. And sometimes it came to a shot in the leg - young pioneers who like to store backups on the same storage system where the VMs are located can identify all possible ways to make backup even slower, and bring the host to panic.

Therefore, in the new version, it was decided to completely abandon such a model and switch to the dynamic processing of current I / O latency indicators. At the global application settings level, it looks like this:

Moreover, it should be noted that in addition to global settings, you can set your own parameters for each specific data storage or volume, in case of working with Hyper-V. But this is a matter of licensing.

So consider the same proposed bunch of parameters.

“ Stop assigning new tasks to datastore at:“If during backup we see that the latency (IOPS latency) has exceeded the acceptable threshold, the VBR server will not create new file transfer streams until the storage performance returns to the acceptable level. Or simply put, we will not try to squeeze out all the juices from the storage if it is heavily loaded.

“ Throttle I / O of existing tasks at:”- is applied if the backup task is already running, and there will be a delay in accessing the disk caused by external load. For example, if during backup, an SQL server is launched on one of the machines, creating an additional load on the same storage system, then the VBR server will artificially reduce its own disk access rate so as not to interfere with the production system. When the performance returns to its previous level, the VBR server will also automatically increase the data transfer rate. This paragraph supplements the previous one. If, as a first measure, we simply stop creating an additional load while working at a certain steady level, then the second measure is to reduce the intensity of ongoing operations to please production systems.

An example of how these parameters work out is clearly visible in this screenshot:

At the very beginning of the graph, the storage is in a “rest” state, i.e. normal working procedures occur. At 18:55, a backup task was included, which caused the expected increase in delays in read / write operations with pronounced peaks reaching 80 ms. Around 19:42, the control mechanism was activated (Enable mark), and, as we can see, the average value of the delays in read operations (green graph) is kept at 20 ms and grows only when the control mechanism is turned off (Disable mark).

I note that the default thresholds of 20 and 30 ms were not taken from the ceiling, but as a result of the evaluation of monitoring data from many of our customers, who kindly agreed to provide them. Thus, these values are perfect for most virtual environments.

Conclusion

Perhaps this is where I will finish the description of this function. I tried to make it as accessible as possible, but if there are still questions, I will be glad to continue in the comments or through the PM.

Feel free to ask questions about our other products or their individual functions. I will try to answer everyone, and if it does not work out briefly, I will make a separate article.