Is it possible to steal money from mobile banking? Part 2

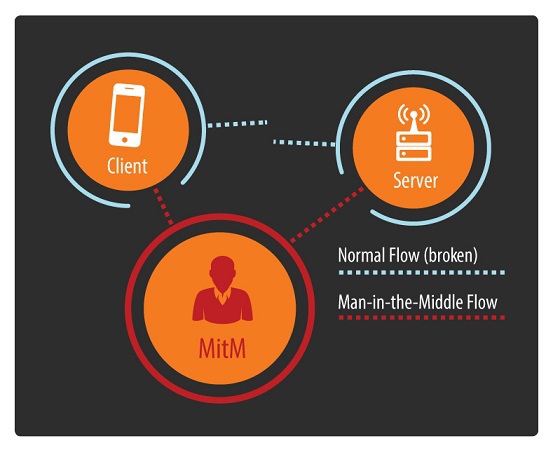

We continue the theme of mobile banking security that we started in the first part. As many have probably guessed, this article focuses on the “man in the middle” (MitM) attack. This attack was not chosen by chance: if the data channel between the mobile banking application and the server is controlled by an attacker, the attacker can steal money from the client’s account, that is, cause direct financial damage. But first things first.

Two reasons motivated us to conduct this study. First, from project to project we see the same sad picture of recurring vulnerabilities, and each audit ends with yet another short video for the customer showing their mobile banking (MB) application being hacked. Second, two very interesting publications came out: “The Most Dangerous Code in the World: Validating SSL Certificates in Non-Browser Software” and “Rethinking SSL Development in an Appified World”. We recommend reading both.

Man-in-the-middle

The main scenarios for carrying out a MitM attack:

- Connecting a user to a fake Wi-Fi access point. This is the most common and realistic MitM scenario. It can easily be reproduced in a cafe, a shopping mall or a business center, and the software for the attack is freely available.

- Connecting a user to a fake operator base station. This scheme is becoming more accessible thanks to the wide selection of cheap hardware and software. The situation needs to be brought under control as quickly as possible.

- Using infected network equipment. By infection we mean not only the execution of malicious code on the equipment, but also its targeted malicious reconfiguration, for example through a vulnerability. Many examples of such attacks are already known.

These are just a few of the many possible scenarios. The main thing the attacker needs is for network traffic from the victim to the server to pass through a host under the attacker's control.

Malicious SOHO routers

A cool find and piece of research from the guys at Team Cymru: they found malware that got into the internal network and changed the routers' DNS settings to its own. What does this mean? Not only the internal machines, but also all mobile devices connected to these routers via Wi-Fi were redirected to sites controlled by the attacker. And there we have a rather intricate MitM.

The specifics of the operation of mobile devices with Wi-Fi networks:

- Automatic connection to known Wi-Fi networks (based on PNL, Preferred Network List)

o On many devices this cannot be disabled or configured in any simple way

- Network identification is based on the SSID (network name) and security settings

- If several known networks are available, each OS has its own rules for choosing which one to connect to

An attacker can deploy their own Wi-Fi network that looks identical to a network the mobile device already knows, for example with the KARMA program. As a result, the device will automatically connect to that access point and work through it. This behavior, together with the lack of simple management of trusted networks, makes MitM easier for an attacker.

Channel protection

Before breaking anything, let’s examine what might prevent us from doing so.

By a secure channel we mean a channel that uses encryption and integrity control to protect the data in transit. But do not forget that not all cryptographic algorithms remain strong over time, and cryptography is not always used correctly.

Channels can be divided into 3 main groups:

- Open

While in the same network as the victim, the attacker sees all client-server interaction in open form.

- Protected by non-standard methods

As our practice shows, this is not the best solution: it produces a number of errors that result in the transmitted data being compromised.

- Protected by standard methods

The most common option is SSL/TLS.

Certificate verification process.

For this scheme to work, the device holds many root certificates (CAs) in a special store of trusted root certificates, and the device trusts everything that is signed by them.

Certificates are divided into:

• system - preinstalled in the system

• user - installed by the user

Certificate verification is carried out along the chain: from the certificate sent to the device up to a root (CA) that the device trusts. Then come the checks of the host name, revocation and so on. These further checks vary depending on the library implementation, the OS and other factors, and an attacker can exploit these differences when attacking an application.
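To make the chain and host name checks concrete, here is a minimal sketch in Python. It is not the Android or iOS implementation, only an illustration of a client that relies on the platform trust store and performs both checks. The function names `make_verifying_context` and `fetch_peer_subject` are ours and purely illustrative.

```python
import socket
import ssl


def make_verifying_context() -> ssl.SSLContext:
    """Build a client context that verifies the chain and the host name.

    create_default_context() loads the system trust store and enables
    both CERT_REQUIRED and check_hostname.
    """
    return ssl.create_default_context()


def fetch_peer_subject(host: str, port: int = 443, timeout: float = 10.0):
    """Connect to host and return the subject of the verified certificate.

    The handshake raises ssl.SSLCertVerificationError if the chain does
    not lead to a trusted root or the host name does not match.
    """
    ctx = make_verifying_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["subject"]
```

With such a context, a self-signed certificate or a certificate issued for another host name should cause the handshake to fail before any application data is sent.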

SSL and Android

In Android OS up to version 4.0, all certificates were stored in a single file, a Bouncy Castle Keystore file.

File: /system/etc/security/cacerts.bks

It was impossible to change it without root privileges, and the OS did not provide any way to modify it. Any change to the certificates (addition, revocation) required updating the OS.

Starting with Android 4.0, the approach to working with certificates has changed. Now all certificates are stored in separate files, and if necessary, you can delete them from trusted ones.

System stored in: /system/etc/security/cacerts

User stored in: /data/misc/keychain/cacerts-added

To view the certificates in Android OS, go to Settings -> Security -> Trusted credentials.

The number of system certificates in various versions of Android:

- Android 4.0.3: 134

- Android 4.2.2: 140

- Android 4.4.2: 150

The number of certificates can also vary from manufacturer to manufacturer and between device models.

To install a user certificate in Android OS, copy the root certificate to the SD card and go to Settings -> Security -> Install from SD card. Starting with Android 4.4, a certificate can also be installed via MDM (DevicePolicyManager). Forcing a user to install a certificate through social engineering is possible, but not very simple.

SSL and iOS

In iOS, you cannot view the built-in certificates on the device; information about them is available only on the Apple website. To view user certificates, go to Settings -> General -> Profile(s).

System stored in: /System/Library/Frameworks/Security.framework/certsTable.data

User stored in: /private/var/Keychains/TrustStore.sqlite3

Number of system certificates in different versions of iOS (Number of certificates can be updated):

- iOS 5: 183

- iOS 6: 183

- iOS 7: 211

In iOS, there are several ways to install user certificates:

- Via the Safari browser: open a link that points to a certificate with the .pem extension or a configuration profile with the .profile extension

- By attaching the certificate to an e-mail

- Through the MDM API

It can be seen that installing a user certificate through social engineering on iOS is much easier than on Android.

Possible vectors for installing certificates through social engineering:

1) The user does everything himself out of ignorance. For example, he is promised free Internet access at a specific access point after installing a certain certificate ;)

2) A used phone is purchased with a malicious certificate already installed

3) A certificate can be installed on an iOS phone in a few seconds if the phone ends up in the hands of an attacker (for example, he asked to borrow it to make a call)

4) Network equipment with a "good" certificate: think of the NSA and the like. While looking through the system certificates, we noticed certificates from Japan and America. Their owners can conduct MitM by re-signing the server certificate with their own, and the device will not even notice the attack (no backdoor in the cryptography is needed). Ours are not there =(

Vulns, bugs, errors, ...

In this section, we will consider what problems exist during the interaction between the client application (in our case, the application for mobile banking) and the server.

All the examples given here are real: we obtained this information during security audits of mobile banking applications.

- Lack of HTTPS (SSL)

No matter how surprising it may sound, a year ago we came across mobile banking applications in which all communication, including financial transactions, took place using the HTTP protocol: authentication, data on transfers, everything was in the clear. This means that in order to obtain financial gain, an attacker simply had to be on the same network with the victim and have the minimum qualifications. Then, for a successful attack, it was enough to correct the destination account numbers and, if desired, the amount.

As for the interaction of applications with third-party services, the situation has improved a little compared to last year, but most applications still fetch additional information over an open channel, which an attacker can easily tamper with. As a rule, this information is related to:

• bank news

• location of ATMs

• exchange rates

• social networks

• usage statistics of the program for developers

• advertising

In the best case, an attacker can simply misinform the victim; in the worst case, he can inject his own code that runs on the victim’s device and later helps to steal authentication data or money. The reason is that Android OS allows an application to download code from the Internet and then execute it, and under certain circumstances an attacker can inject such code into an open channel. Another situation is when the developer sends program crash dumps over an open channel, and those dumps contain the MB username and password and other interesting information.
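One simple way to catch such open channels before release is to list every endpoint the application talks to and flag those that are not HTTPS. The sketch below assumes the endpoints are available as a list of URL strings; the function name and the example hosts are hypothetical.

```python
from urllib.parse import urlsplit


def find_cleartext_endpoints(urls):
    """Return the URLs whose scheme is anything other than https."""
    return [u for u in urls if urlsplit(u).scheme.lower() != "https"]


# Hypothetical endpoints of an MB application and its third-party services.
endpoints = [
    "https://api.bank.example/login",
    "http://news.bank.example/feed",
    "http://stats.vendor.example/collect",
]
cleartext = find_cleartext_endpoints(endpoints)
```

Here `cleartext` contains the two `http://` entries. Any endpoint on that list can be read and modified by whoever controls the network path.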

- Custom encryption

We have also met this option both in practice and during this research. We do not fully understand why it is used, but we assume it may be tied to the established internal processes of the bank. In these cases the developer does not additionally wrap the traffic in SSL.

As our practice shows, using your own encryption is a bad idea. Sometimes it is not even encryption, but simply a custom binary protocol that looks incomprehensible and therefore encrypted at first glance. After a little manual analysis, MitM turns out to be possible here too.

- Incorrect use of SSL

The most common class of errors is the incorrect use of SSL. Most often it is associated with the following reasons:

• Lack of test infrastructure at the customer

Sometimes the customer cannot provide a good test infrastructure for one reason or another. As a result, developers have to resort to a number of tricks to verify that the application works correctly.

• Inattention of the developer

This item is partially related to the previous one: various debugging code is used during development to speed up testing, and before the release of the program the developers forget to remove it.

• Use of vulnerable frameworks

Developers often use various frameworks to simplify their work. In other words, they use someone else's code, the low-level part of which is often hidden and inaccessible. This code also contains vulnerabilities that the developers often do not even suspect. This is especially relevant given the active cross-platform development for mobile devices. Examples are the vulnerability in Appcelerator Titanium and Heartbleed (CVE-2014-0160) in OpenSSL (an exploit can also be used to attack a client).

• Developer errors

A developer can use various libraries for working with SSL. Each has its own specifics that must be taken into account. For example, when moving from one library to another, the developer may use the wrong constant during initialization or override a function with a flawed implementation of their own.

The main errors when working with SSL:

- Disabled checks (debugging API)

- Standard handlers incorrectly overridden with custom ones

- Incorrect configuration of API calls

- Weak encryption settings

- Use of a vulnerable version of the library

- Incorrect processing of call results

- No validation of the host name or use of incorrect regex for validation

An undisputed classic: disabling certificate validation!

An undisputed classic: disabling hostname verification in a certificate!
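The two classics above are easy to show in any TLS library. The following Python sketch is only an illustration of the anti-pattern, not code taken from the audited applications; `make_insecure_context` and `audit_context` are hypothetical names.

```python
import ssl


def make_insecure_context() -> ssl.SSLContext:
    """Anti-pattern: a 'debugging' context that accepts any certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    # Hostname verification must be switched off first, otherwise
    # setting CERT_NONE raises ValueError.
    ctx.check_hostname = False
    ctx.verify_mode = ssl.CERT_NONE  # chain validation switched off
    return ctx


def audit_context(ctx: ssl.SSLContext) -> list:
    """Return a list of the checks that have been disabled on ctx."""
    problems = []
    if ctx.verify_mode != ssl.CERT_REQUIRED:
        problems.append("certificate validation disabled")
    if not ctx.check_hostname:
        problems.append("hostname verification disabled")
    return problems
```

A check like `audit_context` could run in a release build test, so that a debugging context forgotten in the code is caught before the application ships.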

Root certificate compromise

When using SSL, there is a dependency on root certificates. The possibility of their compromise cannot be ruled out: recall, for example, the recent incidents with Bit9, DigiNotar and Comodo. Also keep in mind the root certificates belonging to other countries and companies: for their owners, the traffic is effectively open.

As already shown, devices hold a large number of CA certificates, and when any one of them is compromised, almost all SSL traffic of the device is compromised.

If the CA certificate is compromised:

1) The user can remove the certificate from the trusted store

a. In Android OS, the user can do this with both built-in and user certificates

b. In iOS, a user can only remove user certificates

2) An OS developer can release an update

3) A certificate publisher can revoke their certificate. The certificate validation engine can check this dynamically

a. Android does not support either CRL or OCSP

b. iOS uses OCSP

It is hard to expect users to manage root certificates themselves, and OCSP is not implemented everywhere, so the only realistic option is to wait for an OS update. In the interval between the compromise and the update, the user is vulnerable.

CA certificates come in different types, or more precisely, they can serve different purposes (mail encryption, code signing, etc.), but they are usually kept in one protected store and can all be used to establish trust in an HTTPS connection. Unfortunately, the certificate purpose is not always verified correctly. Thus, an attacker can obtain a legitimate certificate from the issuing authority for one purpose and use it to establish an HTTPS connection during a MitM attack.

In addition, when a user works in a browser, he may notice actions with a suspicious certificate by the red lock icon in the address bar. In the same situation, when working through the application, the user will not be informed in any way, unless the developer has foreseen this in advance, which makes the attack hidden.

SSL pinning

You can use the SSL Pinning approach to protect against compromised system root certificates and deliberately installed user certificates.

Pinning is the process of associating a host with its expected X509 certificate or public key. The approach is to embed the certificate or public key that we trust for the server directly in the application and to stop relying on the built-in certificate store. As a result, when working with our server, the application validates the certificate only against the cryptographic material embedded in it.

MB applications are great for using SSL Pinning, as developers know exactly which servers the application will connect to, and the list of such servers is small.

SSL Pinning can be of two main types:

- Certificate Pinning:

o Ease of implementation

o Low flexibility of approach

- Public Key Pinning:

o Problems with implementation on some platforms

o Good flexibility of approach

Each approach has its pros and cons.

An additional advantage of pinning is the ability to use:

1) Self-Signed certificates

2) Private CA-Issued certificates

To implement SSL Pinning, you need to override some standard functions and write your own handlers. It is worth noting that SSL Pinning will not work if you use WebView in Android or UIWebView in iOS, due to their specifics.
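The idea of certificate pinning can be sketched in a few lines of Python. This is an illustration under our own assumptions, not the Android or iOS mechanism: the pin is the SHA-256 fingerprint of the server certificate in DER form, and, unlike the approach described above, the sketch keeps the normal chain check and adds the pin on top of it rather than replacing the system store. The names `fingerprint`, `is_pinned` and `connect_pinned` are hypothetical.

```python
import hashlib
import hmac
import socket
import ssl
from typing import Iterable


def fingerprint(der_cert: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate, as hex."""
    return hashlib.sha256(der_cert).hexdigest()


def is_pinned(der_cert: bytes, pinned: Iterable[str]) -> bool:
    """True if the certificate matches one of the embedded fingerprints."""
    actual = fingerprint(der_cert)
    return any(hmac.compare_digest(actual, pin.lower()) for pin in pinned)


def connect_pinned(host: str, port: int, pinned: Iterable[str],
                   timeout: float = 10.0) -> ssl.SSLSocket:
    """Open a TLS connection and refuse it unless the certificate is pinned."""
    ctx = ssl.create_default_context()
    sock = socket.create_connection((host, port), timeout=timeout)
    try:
        tls = ctx.wrap_socket(sock, server_hostname=host)
    except Exception:
        sock.close()
        raise
    der = tls.getpeercert(binary_form=True)
    if der is None or not is_pinned(der, pinned):
        tls.close()
        raise ssl.SSLError("certificate does not match any pinned fingerprint")
    return tls
```

Pinning the whole certificate is the simple but inflexible variant from the list above: the pin has to be updated in the application every time the server certificate is replaced.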

SSL Pinning Engine

App1 will use only the embedded certificate or public key to check the validity of the server certificate.

App2 will go to the system certificate store to check the validity of the certificate and will go through all the certificates there one by one.

The use of SSL Pinning these days

The technology first became widespread in Chrome 13, for Google services. Then came Twitter, Card.io and others.

Now all the mobile app stores (Google Play, App Store, Windows Phone Marketplace) use this approach when working with their devices.

Code for implementing SSL Pinning can now be found on the OWASP website for Android, iOS and .NET. Starting with Android 4.2, SSL Pinning is supported at the system level.

Bypass SSL Pinning

SSL Pinning can be bypassed or disabled if the mobile device is jailbroken or rooted. As a rule, only researchers need this, in order to analyze network traffic. To disable it on Android there is the Android SSL Bypass program; for iOS there are the iOS SSL Kill Switch and TrustMe programs.

In theory, malware can use these same approaches.

Like any code, SSL Pinning checks may not be implemented correctly, and you should pay attention to this.

Analysis

Enough theory, let's move on to practice and results!

For this study, we used two devices: an iPhone with iOS 7 and an Android device with version 4.0.3.

The tools we used were Burp, sslsplit, iptables and openssl, quite a simple set. As you might guess, the analysis was dynamic: we simply attempted to authenticate with the bank through the application. Amusingly, all the applications are available for free in the stores (Google Play and App Store), and for most banks (with a few exceptions) we did not even need a registered account. So this experiment can be carried out by anyone with a certain level of knowledge and skilled hands.

We checked two aspects:

1) How correctly the SSL certificate is validated on the client side:

- Used a self-signed certificate

- Used a certificate issued by a trusted CA to a different host name

2) Whether SSL Pinning is enabled:

- Used a certificate for this host signed by a CA trusted by the device

We conducted an active MitM attack. To do this, we forced the victim to access the Internet through a gateway under our control, on which we manipulated the certificates.

To test the work with self-signed certificates, we generated our own.

To verify the presence of SSL Pinning and the correctness of the host name verification, we generated our own root certificate and installed it on a mobile device.

So the results are:

6% of iOS applications and 11% of Android applications used their own protocol, and assessing the security of this interaction requires deep manual analysis. Visual analysis of the traffic shows that it contains both clear, readable data and compressed or encrypted data. Our practice and world experience suggest that these applications are most likely vulnerable to the MitM attack.

14% of iOS apps and 15% of Android apps are vulnerable to self-signed certificates. Stealing funds for these applications is just a matter of time.

14% of iOS applications and 23% of Android applications accept a CA-signed certificate issued for a different host name. Note also that host name checks can contain many kinds of validation errors. Since we performed this check with only one name, these results should be treated as a lower bound on the number of vulnerable applications.
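A typical host name validation error is a hand-written regex. The sketch below contrasts a flawed check with a stricter label-by-label comparison. Both functions are illustrative and hypothetical, and the strict one is a simplification that only allows a wildcard as the entire leftmost label; it is not a full implementation of the matching rules used by real TLS libraries.

```python
import re


def flawed_host_check(cert_name: str, host: str) -> bool:
    """BUG: dots are left unescaped and the pattern is not anchored at the end."""
    pattern = cert_name.replace("*", ".*")
    return re.match(pattern, host) is not None


def strict_host_check(cert_name: str, host: str) -> bool:
    """Compare label by label; '*' may replace only the whole leftmost label."""
    cert_labels = cert_name.lower().split(".")
    host_labels = host.lower().split(".")
    if len(cert_labels) != len(host_labels) or not host_labels[0]:
        return False
    if cert_labels[1:] != host_labels[1:]:
        return False
    return cert_labels[0] == "*" or cert_labels[0] == host_labels[0]


# A certificate for *.bank.example presented by an attacker-controlled host.
attacker_host = "evil.bank.example.attacker.test"
accepted_by_flawed = flawed_host_check("*.bank.example", attacker_host)
accepted_by_strict = strict_host_check("*.bank.example", attacker_host)
```

The flawed check accepts the attacker's host because the regex matches any string that merely starts with something resembling the bank's name, while the strict check rejects it. This is why a test with a single wrong name gives only a lower bound.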

It should be said that only one bank has a vulnerable application for iOS and Android at the same time.

SSL pinning is very rare: it was found in 1% of applications for iOS and in 8% of applications for Android. It is worth noting that this check may have failed for other reasons unrelated to SSL pinning, in which case the percentage of applications using this mechanism is even lower. Also, we did not try to circumvent this protective mechanism and did not analyze the correctness of its implementation.

As our experience in analyzing the security of mobile applications shows, there is almost always some code responsible for disabling certificate validation. The functions are usually named in the spirit of Fake*, NonValidating*, TrustAll*, etc. Developers use this code for testing, and through carelessness it may end up in the final release of the program. Thus, a certificate validation vulnerability can appear in one version and disappear in another, which makes it “float” from version to version. As a result, the security of this code depends on how well the developer's processes are organized.
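Because these names are so characteristic, a release check can simply search the source code for them. The following sketch assumes the source is available as text; the regex covers only the three prefixes mentioned above, and the class names in the sample are invented for illustration.

```python
import re

# Identifiers starting with the prefixes typical of test-only trust code.
SUSPICIOUS_NAME = re.compile(r"\b(?:Fake|NonValidating|TrustAll)\w*")


def find_suspicious_names(source: str) -> list:
    """Return the sorted, de-duplicated suspicious identifiers in source."""
    return sorted(set(SUSPICIOUS_NAME.findall(source)))


# Hypothetical fragment of application code.
sample = """
class TrustAllManager implements X509TrustManager { }
conn.setHostnameVerifier(new FakeHostnameVerifier());
String trusted = "ok";
"""
hits = find_suspicious_names(sample)
```

Here `hits` contains `FakeHostnameVerifier` and `TrustAllManager`. A match is not proof of a vulnerability, only a reason to check whether the code is reachable in the release build.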

CONCLUSION

Incorrect work with SSL is just one of the vulnerabilities that can lead to the theft of money from the accounts of bank customers. Other vulnerabilities (sometimes less critical) and chains of them can also lead to financial losses. On the server side, too, things are sometimes not good (the automated banking system, ABS, is sometimes a tempting target), but that is a completely different story.

PS It is worth noting that similar vulnerabilities were found by the author in the process of participating in various BugBounty programs;)

PPS Thanks to Yegor Karbutov, Ivan Chalykin and Nikita Kelesis for their help in analyzing such a huge number of programs!