GPU SSSP Implementation

Abstract

In this article I describe how to efficiently parallelize SSSP, the problem of finding shortest paths in a graph, on graphics accelerators. The GPU considered here is the GTX Titan, based on the Kepler architecture.

Introduction

Graphics accelerators (GPUs) have recently been playing an increasingly important role in non-graphical computing, thanks to their high performance and relatively low cost. GPUs are well known to handle problems on structured grids well, where parallelism is easy to expose in one way or another. However, some tasks demand significant computing power and work with unstructured grids. One example is the single-source shortest path problem (SSSP): finding the shortest paths from a given vertex to all other vertices in a weighted graph. On the CPU, there are at least two well-known algorithms for this problem: Dijkstra's algorithm and the Ford-Bellman algorithm. There are also parallel GPU implementations of both algorithms. The main papers describing such solutions are:

- Accelerating Large Graph Algorithms on the GPU Using CUDA, Pawan Harish and PJ Narayanan

- A New GPU-based Approach to the Shortest Path Problem, Hector Ortega-Arranz, Yuri Torres, Diego R. Llanos, and Arturo Gonzalez-Escribano

There are other English-language papers as well, but all of them follow the same approach, based on the idea of Dijkstra's algorithm. I will describe how the idea of the Ford-Bellman algorithm, together with the advantages of the Kepler architecture, can be used to solve the problem. Much has already been written about GPU architecture and the algorithms mentioned, so I will not repeat it here. I also assume the reader is familiar with warps, CUDA blocks, SMX units, and other CUDA basics.

Data structure description

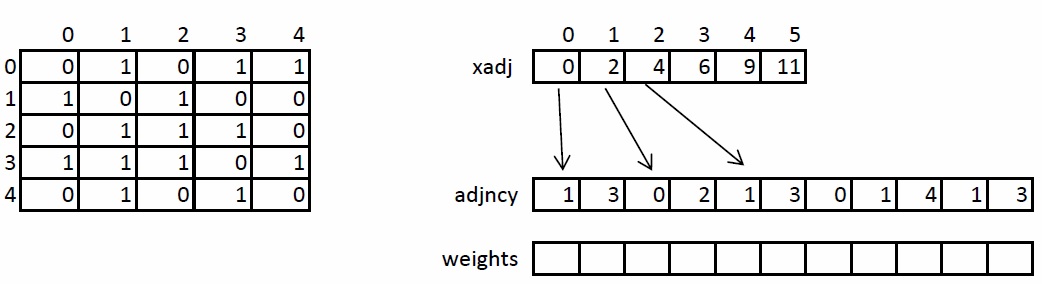

Let us briefly look at how an undirected weighted graph is stored, since this structure is referred to and transformed later. The graph is stored in the compressed sparse row (CSR) format, which is widely used for sparse matrices and graphs. For a graph with N vertices and M edges, three arrays are needed: xadj, adjncy, and weights. The xadj array has N + 1 elements and the other two have 2 * M elements each, because in an undirected graph every edge must be stored twice, as a forward arc and a reverse arc.

The neighbors of vertex I are stored in the adjncy array from index xadj[I] up to, but not including, xadj[I + 1]. The weights array holds, at the same indices, the weight of each edge leaving vertex I. As an illustration, the figure shows a graph with 5 vertices written as an adjacency matrix on the left and in CSR format on the right.
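As a minimal host-side sketch of this layout (the `Edge` type and the `build_csr` function are hypothetical helpers, not the author's code), the following C++ builds the three CSR arrays from an undirected edge list, storing every edge as two arcs:

```cpp
#include <cassert>
#include <vector>

// Undirected weighted graph in CSR form; array names follow the text.
struct CsrGraph {
    std::vector<unsigned> xadj;     // N + 1 offsets into adjncy/weights
    std::vector<unsigned> adjncy;   // 2 * M neighbor ids (each edge stored twice)
    std::vector<double>   weights;  // 2 * M weights, parallel to adjncy
};

// Hypothetical input type: one undirected edge {u, v} with weight w.
struct Edge { unsigned u, v; double w; };

// Hypothetical helper: builds CSR from an undirected edge list.
CsrGraph build_csr(unsigned n, const std::vector<Edge>& edges)
{
    CsrGraph g;
    g.xadj.assign(n + 1, 0);
    for (const Edge& e : edges) {            // count the degree of every vertex
        assert(e.u < n && e.v < n);
        ++g.xadj[e.u + 1];
        ++g.xadj[e.v + 1];
    }
    for (unsigned i = 0; i < n; ++i)         // prefix sum turns degrees into offsets
        g.xadj[i + 1] += g.xadj[i];

    g.adjncy.resize(2 * edges.size());
    g.weights.resize(2 * edges.size());
    std::vector<unsigned> next(g.xadj.begin(), g.xadj.end() - 1);  // next free slot per vertex
    for (const Edge& e : edges) {
        unsigned a = next[e.u]++, b = next[e.v]++;
        g.adjncy[a] = e.v; g.weights[a] = e.w;   // forward arc u -> v
        g.adjncy[b] = e.u; g.weights[b] = e.w;   // reverse arc v -> u
    }
    return g;
}
```

The neighbors of vertex `i` are then `g.adjncy[j]` for `j` in `[g.xadj[i], g.xadj[i + 1])`, with weights `g.weights[j]`.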

GPU implementation of the algorithm

Input preparation

To increase the computational load on each streaming multiprocessor (SMX), the input data has to be transformed. The transformation consists of two stages:

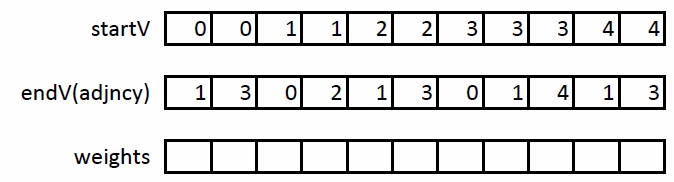

- Extending CSR format to coordinate format (COO)

- Sort COO format

At the first stage, it is necessary to expand the CSR format as follows: we introduce another startV array in which we will store the beginning of the arcs. Then in the adjncy array their ends will be stored. Thus, instead of storing neighbors, we will store arcs. An example of this transformation on the graph described above:
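A host-side C++ sketch of this first stage, assuming the CSR arrays described earlier (`CooArcs` and `csr_to_coo` are hypothetical names, not the author's code): adjncy and weights are copied unchanged, and startV is filled with the row index of each arc.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// COO arc list: arc k goes from startV[k] to endV[k] with weight weights[k].
struct CooArcs {
    std::vector<unsigned> startV;
    std::vector<unsigned> endV;
    std::vector<double>   weights;
};

// Hypothetical helper: expands CSR into COO. adjncy becomes endV and weights are
// copied unchanged; the only new array is startV.
CooArcs csr_to_coo(const std::vector<unsigned>& xadj,
                   const std::vector<unsigned>& adjncy,
                   const std::vector<double>& weights)
{
    assert(!xadj.empty() && xadj.back() == adjncy.size() && adjncy.size() == weights.size());
    CooArcs a;
    a.endV = adjncy;
    a.weights = weights;
    a.startV.resize(adjncy.size());
    for (std::size_t i = 0; i + 1 < xadj.size(); ++i)
        for (unsigned j = xadj[i]; j < xadj[i + 1]; ++j)
            a.startV[j] = static_cast<unsigned>(i);   // every arc in row i starts at vertex i
    return a;
}
```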

In the second stage, the arcs are sorted so that each pair (U, V) occurs exactly once, that is, only one of the arcs (U, V) and (V, U) is kept. Then, when edge (U, V) is processed, the reverse edge (V, U) can be processed at the same time without re-reading its data from GPU global memory.
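One possible way to perform this stage, sketched on the host under the assumption that the kept copy of each pair is the arc with the smaller start vertex (the text does not say which copy is kept; `keep_one_arc_per_edge` is a hypothetical helper):

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct CooArcs {
    std::vector<unsigned> startV;
    std::vector<unsigned> endV;
    std::vector<double>   weights;
};

// Hypothetical helper: keeps a single arc per undirected edge (the copy with
// startV < endV, which also drops self-loops) and sorts the result by (startV, endV).
CooArcs keep_one_arc_per_edge(const CooArcs& in)
{
    assert(in.startV.size() == in.endV.size() && in.endV.size() == in.weights.size());
    std::vector<std::size_t> idx;
    for (std::size_t k = 0; k < in.startV.size(); ++k)
        if (in.startV[k] < in.endV[k])
            idx.push_back(k);
    std::sort(idx.begin(), idx.end(), [&in](std::size_t a, std::size_t b) {
        if (in.startV[a] != in.startV[b]) return in.startV[a] < in.startV[b];
        return in.endV[a] < in.endV[b];
    });
    CooArcs out;
    for (std::size_t k : idx) {
        out.startV.push_back(in.startV[k]);
        out.endV.push_back(in.endV[k]);
        out.weights.push_back(in.weights[k]);
    }
    return out;
}
```

For a 4-vertex example with 8 arcs, the result has 4 stored edges, one per undirected edge, which is what lets a single thread relax both directions.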

Main computational kernel

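The GPU implementation is based on the Ford-Bellman algorithm. This algorithm suits the GPU well because edges can be processed independently of each other, and the edge data and weights are stored contiguously, which makes good use of GPU memory bandwidth:

```cuda
// The original shows only the kernel body; this signature and its parameter types are assumed.
__global__ void relax_ker(const double *weights, const unsigned *startV, const unsigned *endV,
                          double *dist, int *modif, int maxV, int iter)
{
    int k = blockIdx.x * blockDim.x + threadIdx.x;   // one thread per stored edge

    if (k < maxV)
    {
        double w = weights[k];
        unsigned en = endV[k];
        unsigned st = startV[k];

        if (dist[st] > dist[en] + w)          // (*)  relax along en -> st
        {
            dist[st] = dist[en] + w;
            modif[0] = iter;                  // mark that this iteration changed dist
        }
        else if (dist[en] > dist[st] + w)     // (**) relax along st -> en
        {
            dist[en] = dist[st] + w;
            modif[0] = iter;
        }
    }
}
```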

In this kernel, each thread handles one stored edge in both directions (forward and reverse) and tries to improve the distance along one of them. Both conditions of the if block cannot hold at the same time: adding (*) and (**) would give 0 > 2w, which is impossible for non-negative weights. Unlike the sequential Ford-Bellman algorithm, where edges are scanned one after another, the GPU version is subject to races between threads: two or more threads may try to update the same cell dist[I]. We show that the algorithm remains correct in this case.

Suppose two threads K1 and K2 both want to update the cell dist[I], meaning that condition (*) or (**) holds for each of them. Two cases are possible. In the first, the value that survives in dist[I] is the smaller of the two; then at the next iteration the condition is false for both threads, and dist[I] already holds the minimum. In the second, the surviving value is not the minimum; then at the next iteration the condition is true for one of the threads and false for the other, so the smaller value gets written. The result is therefore the same in both cases, only reached after a different number of iterations.

As in the optimized Ford-Bellman algorithm, if an iteration makes no changes to the dist array, further iterations are pointless. For this purpose, a modif variable is kept on the GPU, and every thread that updates dist writes the current iteration number into it.

Each iteration is one kernel launch. In the basic version, the CPU launches the kernel in a loop and reads modif after each launch; if it has not changed since the previous iteration, the dist array holds the answer: the shortest distances from the given vertex to all the others.
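To make the control flow concrete, here is a sequential C++ emulation of the scheme above, assuming non-negative weights. `relax_pass` and `sssp` are hypothetical helpers: `relax_pass` runs the kernel body once per stored edge in a plain loop (so, unlike the GPU version, it has no races), and `sssp` is the host loop that stops once an iteration leaves `modif` unchanged.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical sequential stand-in for one launch of the relaxation kernel:
// the loop body matches the kernel, the loop replaces the parallel threads.
void relax_pass(const std::vector<unsigned>& startV, const std::vector<unsigned>& endV,
                const std::vector<double>& weights, std::vector<double>& dist,
                int& modif, int iter)
{
    for (std::size_t k = 0; k < weights.size(); ++k) {
        double w = weights[k];
        unsigned en = endV[k];
        unsigned st = startV[k];
        if (dist[st] > dist[en] + w) {          // (*)
            dist[st] = dist[en] + w;
            modif = iter;
        } else if (dist[en] > dist[st] + w) {   // (**)
            dist[en] = dist[st] + w;
            modif = iter;
        }
    }
}

// Hypothetical host loop: one pass per iteration, stop when an iteration
// leaves modif untouched. Unreached vertices keep distance +infinity.
std::vector<double> sssp(unsigned n, unsigned source,
                         const std::vector<unsigned>& startV,
                         const std::vector<unsigned>& endV,
                         const std::vector<double>& weights)
{
    assert(source < n && startV.size() == endV.size() && endV.size() == weights.size());
    std::vector<double> dist(n, std::numeric_limits<double>::infinity());
    dist[source] = 0.0;
    int modif = -1;
    for (int iter = 0;; ++iter) {
        relax_pass(startV, endV, weights, dist, modif, iter);
        if (modif != iter)   // no dist cell changed in this iteration
            break;
    }
    return dist;
}
```

For edges 0-1 (4), 0-2 (1), 1-2 (2), 1-3 (5), the distances from vertex 0 come out as 0, 3, 1, 8 after two passes, the second of which changes nothing.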

Optimization of the implemented algorithm

Next, we consider several optimizations that can significantly improve the performance of the algorithm. Knowledge of the target architecture helps in choosing them.

Modern CPUs have a three-level cache. Each core has its own 64 KB first-level cache. The second-level cache ranges from 1 to 2 MB. The third-level cache is shared by the entire CPU and is on the order of 12-15 MB.

Modern GPUs have a two-level cache. Each compute unit (SMX) has 64 KB of first-level memory, which is shared between shared memory and the L1 cache (used, among other things, for register spills); no more than 48 KB of it can be used as shared memory. The second-level cache is at most 1.5 MB, is shared by the entire GPU, and caches data loaded from GPU global memory. The top-end GK110 chip has 15 compute units, so roughly 48 KB of L1 cache and about 102 KB of L2 cache fall on each unit. This is very little compared to a CPU, so reads from GPU global memory are relatively more expensive than reads from main memory on the CPU. In addition, the Kepler architecture can read data directly through the read-only texture cache.
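The per-SMX L2 figure is simply the total L2 size divided evenly among the 15 SMX units of a full GK110 (a simplification, since the L2 is shared by all units rather than partitioned):

$$
\frac{1.5\ \text{MB}}{15} = \frac{1536\ \text{KB}}{15} \approx 102\ \text{KB per SMX}
$$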

Using the texture cache

In this problem, the dist array is read and updated constantly. It takes relatively little space in GPU global memory compared to the arc and weight data: for a graph with 2^20 (about 1 million) vertices, dist occupies 8 MB. However, dist is accessed at random positions, which is bad for the GPU, because each warp then issues additional loads from global memory. To minimize the number of loads per warp, data that has been read but not yet used by other warps should be kept in the L2 cache. To read dist through the texture cache, which is read-only, I had to introduce two different pointers to the same dist array. The relevant code:

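```cuda
// dist: read-only view of the distances (eligible for the read-only/texture cache);
// dist1: writable pointer to the same array
__global__ void relax_ker(const double * __restrict dist, double *dist1, … …)
{
    int k = blockIdx.x * blockDim.x + threadIdx.x + minV;

    if (k < maxV)
    {
        double w = weights[k];
        unsigned en = endV[k];
        unsigned st = startV[k];

        if (dist[st] > dist[en] + w)        // read through dist ...
        {
            dist1[st] = dist[en] + w;       // ... write through dist1
            modif[0] = iter;
        }
        else if (dist[en] > dist[st] + w)
        {
            dist1[en] = dist[st] + w;
            modif[0] = iter;
        }
    }
}
```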

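As a result, all reads inside the kernel go through one pointer (dist) and all writes through another (dist1), yet both point to the same location in GPU memory. Strictly speaking, this aliasing breaks the promise that __restrict gives the compiler, so reads through dist may return stale values within a kernel launch; as with the thread races discussed earlier, this can only change the number of iterations, not the final result.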

Data localization for better cache utilization

For the optimization described above to work best, loaded data should stay in the L2 cache as long as possible. The dist array is accessed through predefined indices stored in the endV and startV arrays. To localize these accesses, we divide the dist array into segments of some length, say P elements. Since the graph has N vertices, this gives (N / P + 1) segments. We then sort the edges into groups as follows. The first group receives the edges whose end vertices fall into segment 0, ordered by the segment of their start vertex: first those starting in segment 0, then in segment 1, and so on. The second group receives the edges whose end vertices fall into segment 1, ordered by start segment in the same way, and so on. After this permutation, the dist values needed by, for example, the first group stay in the cache while the threads of that group request segment 0 for end vertices and segments 0, 1, and so on for start vertices. Moreover, the edges are arranged so that the threads request data from no more than three different segments at a time.
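A minimal host-side sketch of this permutation, assuming edges in the COO layout above and a segment length `P` chosen by the caller (`order_by_segments` is a hypothetical helper; the text does not say how P is chosen):

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct CooArcs {
    std::vector<unsigned> startV;
    std::vector<unsigned> endV;
    std::vector<double>   weights;
};

// Hypothetical helper: permutes edges so that edges whose end vertex lies in
// segment 0 of dist come first, then segment 1, and so on; inside each group,
// edges are ordered by the segment of their start vertex. P = segment length.
CooArcs order_by_segments(const CooArcs& in, unsigned P)
{
    assert(P > 0 && in.startV.size() == in.endV.size() && in.endV.size() == in.weights.size());
    std::vector<std::size_t> idx(in.startV.size());
    for (std::size_t k = 0; k < idx.size(); ++k)
        idx[k] = k;
    std::stable_sort(idx.begin(), idx.end(), [&in, P](std::size_t a, std::size_t b) {
        unsigned ga = in.endV[a] / P, gb = in.endV[b] / P;   // group: end-vertex segment
        if (ga != gb) return ga < gb;
        return in.startV[a] / P < in.startV[b] / P;          // within group: start-vertex segment
    });
    CooArcs out;
    for (std::size_t k : idx) {
        out.startV.push_back(in.startV[k]);
        out.endV.push_back(in.endV[k]);
        out.weights.push_back(in.weights[k]);
    }
    return out;
}
```

Using `std::stable_sort` preserves the existing (start, end) order among edges that share the same pair of segments.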

Algorithm Test Results

For testing, synthetic undirected RMAT graphs were used; they model real graphs from social networks and the Internet well. The graphs have an average degree of 32, and the number of vertices is a power of two. The table below lists the tested graphs.

| n (2^n vertices) | Number of vertices | Number of arcs | Size of dist, MB | Size of edge and weight arrays, MB |
|---|---|---|---|---|
| 14 | 16,384 | 524,288 | 0.125 | 4 |
| 15 | 32,768 | 1,048,576 | 0.25 | 8 |
| 16 | 65,536 | 2,097,152 | 0.5 | 16 |
| 17 | 131,072 | 4,194,304 | 1 | 32 |
| 18 | 262,144 | 8,388,608 | 2 | 64 |
| 19 | 524,288 | 16,777,216 | 4 | 128 |
| 20 | 1,048,576 | 33,554,432 | 8 | 256 |
| 21 | 2,097,152 | 67,108,864 | 16 | 512 |
| 22 | 4,194,304 | 134,217,728 | 32 | 1024 |
| 23 | 8,388,608 | 268,435,456 | 64 | 2048 |
| 24 | 16,777,216 | 536,870,912 | 128 | 4096 |
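The two size columns can be reproduced with the arithmetic below. The storage layout is an assumption that matches every row of the table rather than something the text states: dist holds one 8-byte `double` per vertex, and each undirected edge (half the number of arcs) is stored once with a 4-byte start index, a 4-byte end index, and an 8-byte weight. MB here means 2^20 bytes; the function names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

constexpr double kMiB = 1024.0 * 1024.0;

// Size of dist in MB: one 8-byte double per vertex.
double dist_size_mb(std::uint64_t vertices)
{
    return static_cast<double>(vertices * sizeof(double)) / kMiB;
}

// Size of the edge and weight arrays in MB, assuming each undirected edge
// (two arcs) is stored once: 4-byte start index + 4-byte end index + 8-byte weight.
double edge_arrays_size_mb(std::uint64_t arcs)
{
    assert(arcs % 2 == 0);
    std::uint64_t edges = arcs / 2;
    std::uint64_t bytes_per_edge = 2 * sizeof(std::uint32_t) + sizeof(double);
    return static_cast<double>(edges * bytes_per_edge) / kMiB;
}
```

With the 1.5 MB L2 of GK110, `dist_size_mb` crosses the cache size between 2^17 (1 MB) and 2^18 (2 MB) vertices.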

The table shows that for graphs with 2^18 or more vertices, the dist array no longer fits entirely in the GPU's L2 cache. Testing was performed on an NVIDIA GTX Titan GPU, which has 14 SMX units with 192 CUDA cores each (2688 in total), and on a third-generation Intel Core i7 processor running at 3.4 GHz with 8 MB of cache. For the CPU comparison, an optimized version of Dijkstra's algorithm was used; no data permutations were applied before running it on the CPU. Instead of time, performance is measured as the number of arcs processed per second, that is, the number of arcs in the graph divided by the measured time. The reported result is the average over 32 measurements; the maximum and minimum values were also recorded.
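A small sketch of the metric and its aggregation (the function names are hypothetical): throughput is the arc count divided by the measured time, and repeated measurements are reduced to their average, minimum, and maximum.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <numeric>
#include <vector>

// Throughput as defined in the text: arcs in the graph divided by the run time.
double arcs_per_second(std::uint64_t arcs, double seconds)
{
    assert(seconds > 0.0);
    return static_cast<double>(arcs) / seconds;
}

struct Summary { double mean, min, max; };

// Reduces repeated measurements (32 in the text) to average, minimum and maximum.
Summary summarize(const std::vector<double>& rates)
{
    assert(!rates.empty());
    double sum = std::accumulate(rates.begin(), rates.end(), 0.0);
    auto mm = std::minmax_element(rates.begin(), rates.end());
    return {sum / static_cast<double>(rates.size()), *mm.first, *mm.second};
}
```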

The code was compiled with the Intel compiler version 13 and NVCC from CUDA 5.5 with the flags -O3 -arch=sm_35.

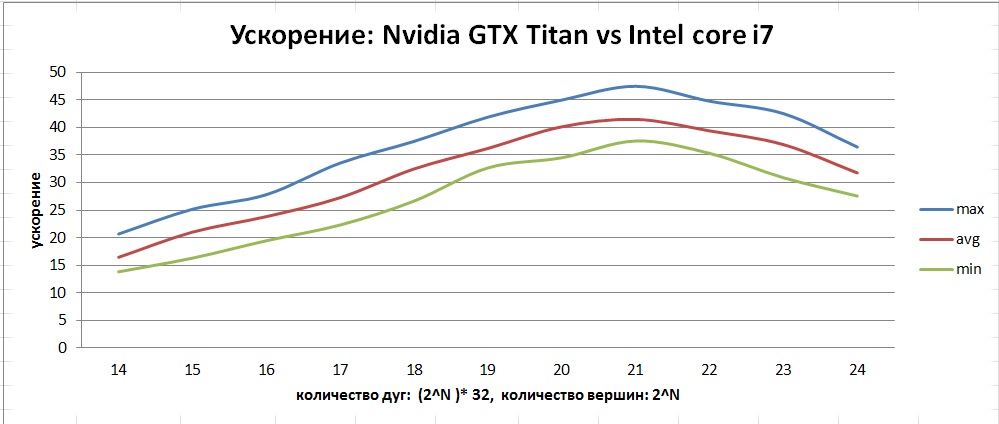

As a result of the work done, consider the following graph:

This graph shows the average performance curves for the following algorithms:

- cache && restrict on - GPU algorithm with all optimizations

- cache off - GPU algorithm without the edge permutations that improve caching

- restrict off - GPU algorithm without the texture cache optimization

- cache && restrict off - basic GPU algorithm without optimizations

- CPU - basic algorithm on the CPU

Both optimizations clearly have a large effect on performance. Note, however, that misusing the const __restrict optimization can degrade performance. The resulting speedup is shown in this chart:

The chart above shows that, unlike on the CPU, GPU results deviate more widely from the average. This stems from the race between threads in the implementation: the number of iterations can differ from run to run.

Conclusion

As a result of this work, an SSSP algorithm (the search for shortest paths from a given vertex to all other vertices of a graph) was developed, implemented, and optimized for the GPU. Among all graphs that fit in GTX Titan memory, the highest performance, about 1,100 million edges per second, was reached on graphs with up to 2^21 vertices. The maximum average speedup achieved was about 40 times, on graphs with 1 to 4 million vertices.