The logic of thinking. Part 1. Neuron

About a year and a half ago I posted on Habr a series of video lectures presenting my view of how the brain works and of the possible ways to create artificial intelligence. Significant progress has been made since then. Some things are now understood more deeply, and some have been simulated on a computer. Happily, like-minded people have appeared who are actively involved in the project.

In this series of articles I plan to describe the concept of intelligence that we are currently working on and to demonstrate some solutions that are fundamentally new in the field of brain modeling. To keep the story understandable and consistent, it will contain not only a description of new ideas but also an account of how the brain functions in general. Some things, especially at the beginning, may seem simple and well known, but I would advise you not to skip them, since the persuasiveness of the overall argument largely depends on them.

Brain Overview

Nerve cells, also called neurons, together with the fibers that carry their signals, form the nervous system. In vertebrates, most neurons are concentrated in the cranial cavity and the spinal canal. This is called the central nervous system. Accordingly, the brain and the spinal cord are distinguished as its components.

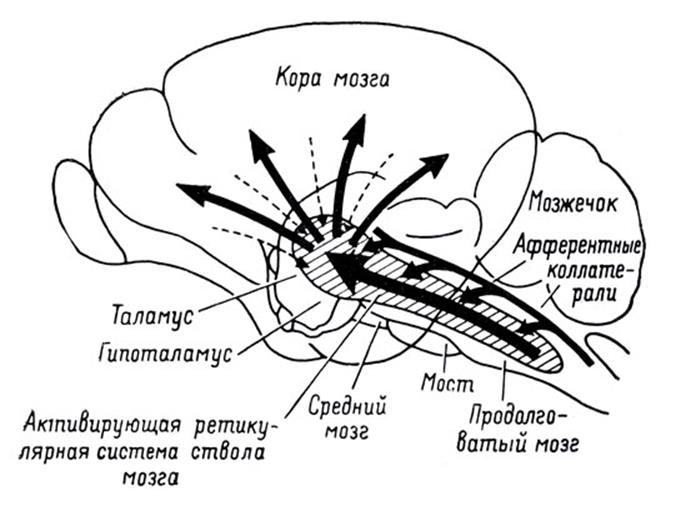

The spinal cord collects signals from most of the receptors in the body and transmits them to the brain. Through the structures of the thalamus, they are distributed and projected onto the cortex of the cerebral hemispheres.

The projection of information on the cortex

In addition to the cerebral hemispheres, the cerebellum, which is in effect a small independent brain, is also involved in processing information. The cerebellum provides fine motor control and the coordination of all movements.

Vision, hearing and smell provide the brain with a stream of information about the outside world. Each component of this stream travels along its own pathway and is likewise projected onto the cortex. The cortex is a layer of gray matter 1.3 to 4.5 mm thick that forms the outer surface of the brain. Thanks to the convolutions formed by its folds, the cortex is packed so that it occupies one third of the area it would have if flattened. The total cortical area of one hemisphere is approximately 7000 sq. cm.

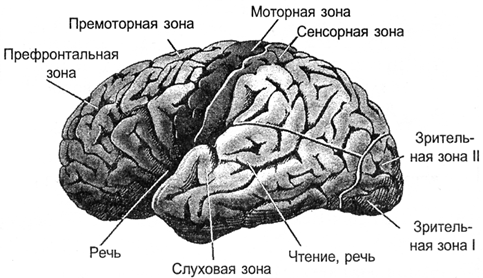

As a result, all signals are projected onto the cortex. The projection is carried out by bundles of nerve fibers, each of which is distributed over a limited area of the cortex. An area onto which either external information or information from other parts of the brain is projected forms a zone of the cortex. Each zone has its own specialization, depending on the signals it receives. There are the motor zone, the sensory zone, Broca's and Wernicke's areas, the visual zones, the occipital lobe and others, about a hundred different zones in all.

Zones of the cortex

In the vertical direction, the cortex is usually divided into six layers. These layers do not have clear boundaries and are identified by the predominance of one or another cell type. In different zones of the cortex these layers can be more or less pronounced. In general, however, the cortex is quite uniform, and it can be assumed that the functioning of its different zones obeys the same principles.

Layers of the cortex

Signals enter the cortex through afferent fibers. They arrive at layers III and IV of the cortex, where they are distributed among the neurons located near the point where the afferent fiber terminates. Most neurons have axonal connections only within their own zone of the cortex, but some neurons have axons that extend beyond it. Through these efferent fibers, signals either leave the brain, for example toward the executive organs, or are projected onto other parts of the cortex of the same or the opposite hemisphere. Depending on the direction of signal transmission, efferent fibers are usually divided into:

- association fibers that connect separate sections of the cortex of one hemisphere;

- commissural fibers that connect the cortex of two hemispheres;

- projection fibers that connect the cortex to the nuclei of the lower parts of the central nervous system.

Neurons that lie along a direction perpendicular to the surface of the cortex are observed to respond to similar stimuli. Such vertically arranged groups of neurons are commonly called cortical columns.

You can imagine the cerebral cortex as a large canvas cut into separate zones. The pattern of neuron activity in each zone encodes certain information. Bundles of nerve fibers, formed by axons that extend beyond their own cortical zone, form a system of projection connections. Certain information is projected onto each zone. Moreover, several information streams, coming from zones of both the same and the opposite hemisphere, can arrive at one zone simultaneously. Each stream of information is like a picture drawn by the activity of the axons of the nerve bundle. The functioning of a single zone of the cortex consists of receiving many projections, storing information, processing it, forming its own pattern of activity and projecting the resulting information onward.

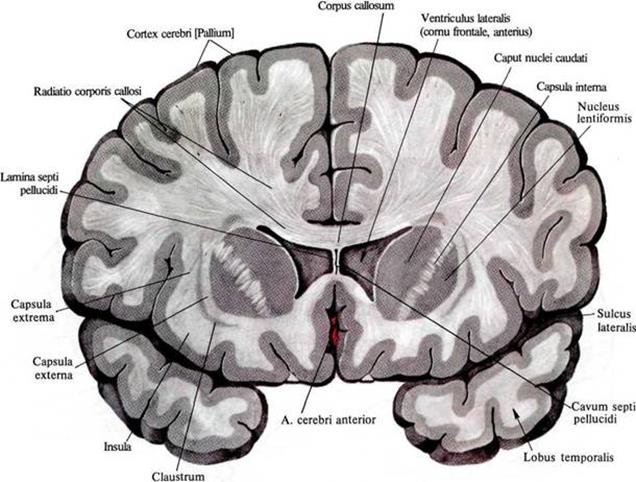

A significant volume of the brain is white matter. It is formed by axons of neurons that create those same projection paths. In the figure below, white matter can be seen as a light filling between the cortex and the internal structures of the brain.

Distribution of white matter in the frontal section of the brain

Using diffusion spectrum MRI, it was possible to track the direction of individual fibers and build a three-dimensional model of the connectivity of the cortical zones (the Connectome project).

The pictures below give a good idea of the structure of these connections (Van J. Wedeen, Douglas L. Rosene, Ruopeng Wang, Guangping Dai, Farzad Mortazavi, Patric Hagmann, Jon H. Kaas, Wen-Yih I. Tseng, 2012).

Left side view, rear view, right view

By the way, the asymmetry of the projection paths of the left and right hemispheres is clearly visible in the rear view. This asymmetry largely determines the differences in the functions that the hemispheres acquire as they learn.

Neuron

The neuron is the basic building block of the brain. Naturally, modeling the brain with neural networks begins with the question of how a neuron operates.

The operation of a real neuron is based on chemical processes. At rest there is a potential difference between the inside and the outside of the neuron: a membrane potential of about 75 millivolts. It is maintained by special protein molecules that act as sodium-potassium pumps. Using the energy of ATP, these pumps drive potassium ions into the cell and sodium ions out of it. Since the protein acts here as an ATPase, that is, an enzyme that hydrolyzes ATP, it is called "sodium-potassium ATPase". As a result, the neuron becomes a charged capacitor with a negative charge inside and a positive charge outside.

Scheme of the neuron (Mariana Ruiz Villarreal)

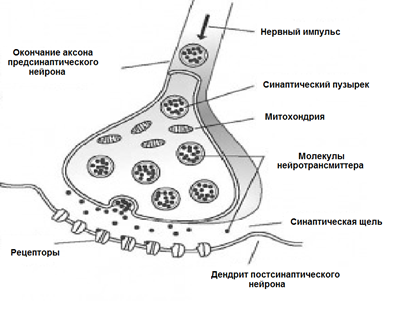

The surface of the neuron is covered with branching processes - dendrites. The dendrites are adjacent to the axonal ends of other neurons. The places of their connections are called synapses. Through synaptic interaction, a neuron is able to respond to incoming signals and, under certain circumstances, generate its own impulse, called a spike.

Signal transmission at synapses relies on substances called neurotransmitters. When a nerve impulse traveling along the axon reaches the synapse, it triggers the release from special vesicles of the neurotransmitter molecules characteristic of that synapse. The membrane of the neuron receiving the signal carries protein molecules called receptors, which interact with the neurotransmitters.

Chemical synapse

Receptors located in the synaptic cleft are ionotropic. The name reflects the fact that they are also ion channels capable of transporting ions. Neurotransmitters act on the receptors so that their ion channels open. The membrane is then either depolarized or hyperpolarized, depending on which channels are affected and therefore on the type of synapse. In excitatory synapses, channels open that let cations into the cell, and the membrane is depolarized. In inhibitory synapses, channels that conduct anions open, which leads to hyperpolarization of the membrane.

Under certain circumstances, synapses can change their sensitivity, which is called synaptic plasticity. As a result, the synapses of a single neuron come to differ in their susceptibility to external signals.

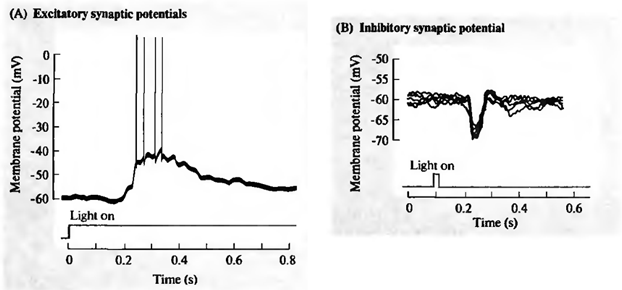

Many signals arrive at the synapses of a neuron at the same time. Inhibitory synapses pull the membrane potential toward the accumulation of charge inside the cell. Excitatory synapses, on the contrary, try to discharge the neuron (figure below).

Excitation (A) and inhibition (B) of the retinal ganglion cell (Nicholls J., Martin R., Wallace B., Fuchs P., 2003)

When the total activity exceeds the initiation threshold, a discharge occurs, called an action potential or spike. A spike is a sharp depolarization of the neuron membrane that generates an electrical impulse. The entire process of generating the impulse lasts about 1 millisecond. Neither the duration nor the amplitude of the impulse depends on how strong the causes that triggered it were (figure below).

Recording of the action potential of the ganglion cell (Nicholls J., Martin R., Wallace B., Fuchs P., 2003)

After a spike, ion pumps ensure the reuptake of the neurotransmitter and the clearing of the synaptic cleft. During the refractory period that follows the spike, the neuron is unable to generate new impulses. The duration of this period determines the maximum firing frequency the neuron is capable of.
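The threshold, the all-or-nothing spike and the refractory period can be illustrated with a leaky integrate-and-fire neuron. This is a standard textbook simplification swapped in here for illustration, not the model developed in this series. The function name `simulate_lif`, the parameter names and all numeric values are assumptions chosen only to make the sketch runnable.

```python
def simulate_lif(input_drive_mv, duration_ms=1000.0, dt_ms=0.1,
                 tau_ms=10.0, v_rest_mv=-75.0, v_threshold_mv=-55.0,
                 refractory_ms=2.0):
    """Return spike times (ms) of a leaky integrate-and-fire neuron.

    input_drive_mv is a constant excitatory drive, expressed as the
    depolarization (mV) it would produce at steady state (an assumed,
    purely illustrative unit).
    """
    steps = int(round(duration_ms / dt_ms))
    refractory_steps = int(round(refractory_ms / dt_ms))
    v = v_rest_mv
    wait = 0                      # remaining refractory steps
    spike_times_ms = []
    for step in range(steps):
        if wait > 0:              # refractory: no integration, no spikes
            wait -= 1
            continue
        # The leak pulls v back to rest, the drive depolarizes the membrane.
        v += (dt_ms / tau_ms) * (v_rest_mv - v + input_drive_mv)
        if v >= v_threshold_mv:   # threshold crossed: emit a spike
            spike_times_ms.append(step * dt_ms)
            v = v_rest_mv         # reset; the spike itself is all-or-nothing
            wait = refractory_steps
    return spike_times_ms


if __name__ == "__main__":
    for drive in (10.0, 30.0, 60.0, 1e6):
        spikes = simulate_lif(drive)
        print(f"drive {drive:>9} mV -> {len(spikes)} spikes per second")
```

In this sketch a drive too weak to reach the threshold produces no spikes, a stronger drive produces a higher firing rate, and no drive can push the rate above one spike per refractory period.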

Spikes that arise as a result of activity at the synapses are called evoked. The repetition rate of evoked spikes encodes how well the incoming signal matches the sensitivity settings of the neuron's synapses. When the incoming signals fall exactly on the sensitive synapses that activate the neuron, and signals arriving at the inhibitory synapses do not interfere, the response of the neuron is maximal. The image described by such signals is called the characteristic stimulus of the neuron.

Of course, the picture of how neurons work should not be oversimplified. Information between some neurons can be transmitted not only by spikes but also through channels that connect their intracellular contents and pass the electric potential directly. Such propagation is called graded, and the connection itself is called an electrical synapse. Depending on their distance from the body of the neuron, dendrites are divided into proximal (close) and distal (distant). Distal dendrites can form sections that operate as semi-autonomous elements. In addition to synaptic pathways of excitation, there are extrasynaptic mechanisms that cause metabotropic spikes. In addition to evoked activity, there is also spontaneous activity. Finally, the neurons of the brain are surrounded by glial cells, which also have a significant effect on the ongoing processes.

The long evolutionary path has created many mechanisms that the brain uses in its work. Some of them can be understood on their own, the meaning of others becomes clear only when considering sufficiently complex interactions. Therefore, do not take the above description of the neuron as exhaustive. To move on to deeper models, we first need to deal with the “basic” properties of neurons.

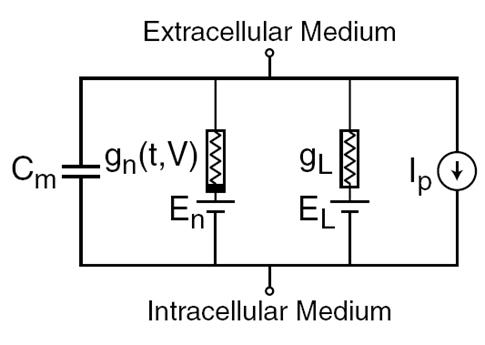

In 1952, Alan Lloyd Hodgkin and Andrew Huxley described the electrical mechanisms that govern the generation and transmission of a nerve signal in the squid giant axon (Hodgkin, 1952), work for which they received the Nobel Prize in Physiology or Medicine in 1963. The Hodgkin-Huxley model describes the behavior of a neuron by a system of ordinary differential equations. These equations correspond to an autowave process in an active medium. They take into account many components, each of which has its own biophysical counterpart in a real cell (figure below). Ion pumps correspond to the current source $I_p$. The inner lipid layer of the cell membrane forms a capacitor with capacitance $C_m$. The ion channels of synaptic receptors provide the electrical conductance $g_n$, which depends on the input signals, varying with time $t$, and on the overall membrane potential $V$. The leak current through membrane pores is represented by the conductance $g_L$. Ions move through the ion channels under the influence of electrochemical gradients, which correspond to voltage sources with electromotive forces $E_n$ and $E_L$.

The main components of the Hodgkin-Huxley model

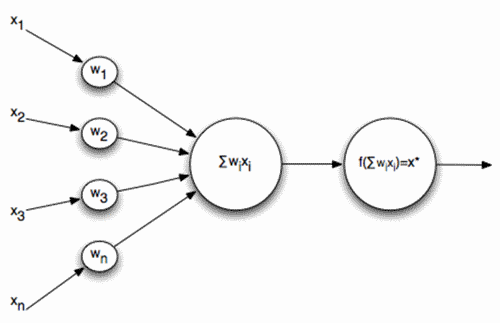

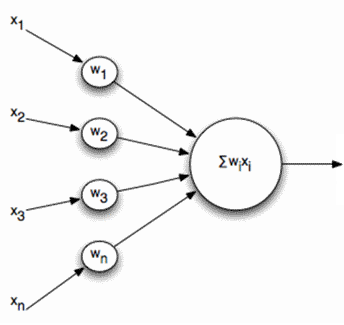
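The equivalent circuit described above can be summarized as a single current-balance equation for the membrane potential. This is only a sketch written in the symbols of the text; the sign convention (pump current counted as positive when it depolarizes the membrane) and the use of $n$ as an index over channel types are assumptions made here.

$$C_m \frac{dV}{dt} = I_p - \sum_n g_n(t, V)\,(V - E_n) - g_L\,(V - E_L)$$

Each conductance term pulls $V$ toward its own electromotive force $E_n$ or $E_L$. The full Hodgkin-Huxley model adds further differential equations for the gating variables that determine how the channel conductances change.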

Naturally, when building neural networks there is a desire to simplify the neuron model, keeping only its most essential properties. The best known and most popular simplified model is the McCulloch-Pitts artificial neuron, developed in the early 1940s (McCulloch J., Pitts W., 1956).

McCulloch-Pitts formal neuron

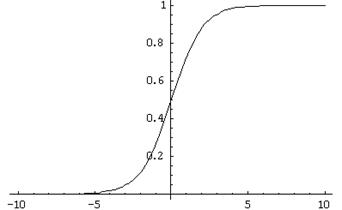

Signals are fed to the inputs of such a neuron, and their weighted sum is computed. A nonlinear activation function, for example a sigmoid, is then applied to this linear combination. The logistic function is often used as the sigmoid:

Logistic function

$$\sigma(x) = \frac{1}{1 + e^{-x}}$$

In this case, the activity of a formal neuron with inputs $x_i$ and weights $w_i$ is written as

$$y = \sigma\left(\sum_i w_i x_i\right)$$

As a result, such a neuron becomes a threshold adder. With a sufficiently steep threshold function, the output signal of the neuron is either 0 or 1. The weighted sum of the input signal and the neuron weights is a convolution of two images: the image of the input signal and the image described by the weights of the neuron. The closer the match between these images, the higher the result of the convolution. In other words, the neuron in effect determines how closely the supplied signal resembles the image recorded on its synapses. When the value of the convolution exceeds a certain level and the threshold function switches to one, this can be interpreted as a definite statement by the neuron that it has recognized the presented image.
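The threshold adder described above can be sketched in a few lines. The names `logistic` and `formal_neuron`, the explicit `threshold` and `steepness` parameters and the example weights are assumptions introduced for illustration; they are not taken from the original formulas.

```python
import math


def logistic(x, steepness=1.0):
    """Logistic sigmoid; a large steepness approaches a hard 0/1 threshold."""
    z = steepness * x
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)               # numerically safe branch for negative z
    return e / (1.0 + e)


def formal_neuron(inputs, weights, threshold=0.0, steepness=1.0):
    """Weighted sum of the inputs followed by a logistic activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return logistic(weighted_sum - threshold, steepness)


if __name__ == "__main__":
    weights = [1.0, 1.0, 0.0, 0.0]          # the "image" stored on the synapses
    for pattern in ([1, 1, 0, 0], [1, 0, 0, 0], [0, 0, 1, 1]):
        out = formal_neuron(pattern, weights, threshold=1.5, steepness=20.0)
        print(pattern, round(out, 4))
```

With a steep activation the output is close to 1 only for the input that matches the stored weights, which is the sense in which the neuron "recognizes" its image.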

Real neurons do resemble McCulloch-Pitts neurons in some ways. The amplitude of their spikes does not depend on which signals at the synapses caused them. A spike is either there or it is not. But real neurons respond to a stimulus not with a single impulse but with a sequence of impulses, and the more accurately the image characteristic of the neuron is recognized, the higher the impulse frequency. This means that if we build a neural network from such threshold adders, then with a static input signal it will give some kind of output, but this output will be far from reproducing how real neurons work. To bring the neural network closer to its biological prototype, we need to simulate its operation in dynamics, taking the timing parameters into account and reproducing the frequency properties of the signals.

But you can go the other way. For example, we can single out a generalized characteristic of neuron activity that corresponds to the frequency of its impulses, that is, the number of spikes in a given period of time. If we adopt this description, we can think of a neuron as a simple linear adder.

Linear adder

The output signals and, accordingly, the input signals of such neurons are no longer binary (0 or 1) but are expressed by a scalar value. With inputs $x_i$ and weights $w_i$, the activation function is then written as

$$y = \sum_i w_i x_i$$

A linear adder should not be seen as something fundamentally different from a spiking neuron. It simply allows us to move to longer time intervals when modeling or describing activity. Although the spiking description is more accurate, the transition to a linear adder is in many cases justified by the considerable simplification of the model. Moreover, some important properties that are difficult to see in a spiking neuron are quite obvious for a linear adder.
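The move from individual spikes to a rate description can be sketched as follows. The helper names `firing_rate` and `linear_adder`, the window length and the use of negative weights for inhibitory inputs are assumptions made for this illustration.

```python
def firing_rate(spike_times_ms, window_ms):
    """Average firing rate in Hz: number of spikes per second of the window."""
    if window_ms <= 0:
        raise ValueError("window_ms must be positive")
    count = sum(1 for t in spike_times_ms if 0 <= t < window_ms)
    return count * 1000.0 / window_ms


def linear_adder(input_rates, weights):
    """Output activity as a plain weighted sum of the input activities."""
    if len(input_rates) != len(weights):
        raise ValueError("input_rates and weights must have the same length")
    return sum(w * r for w, r in zip(weights, input_rates))


if __name__ == "__main__":
    # Two hypothetical input neurons observed over a 100 ms window.
    rate_a = firing_rate([5, 15, 25, 35], window_ms=100.0)
    rate_b = firing_rate([50], window_ms=100.0)
    # A negative weight stands in for an inhibitory input (an assumption).
    print(linear_adder([rate_a, rate_b], weights=[0.5, -1.0]))
```

Here each input is a single scalar, the spike count per unit time, so the neuron no longer needs to be simulated impulse by impulse.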

Aleksey Redozubov (2014)