A recommender system on .NET, or first steps with MyMediaLite

I once took a Big Data course on the recommendation of friends and was lucky enough to take part in a competition. I won't talk about the course itself; instead, I'll talk about the MyMediaLite library for .NET and how I used it.

Prelude

The final lab assignment was just around the corner. Throughout the course I had not been especially competitive in the labs, but toward the end life forced me to fight: to get the certificate I had to earn points. The last lecture was not very informative, more of a review, so I decided not to waste time and to do the last lab during it. Unfortunately, at that moment I did not have my own cluster with Apache Spark installed. On the training cluster, where everyone had rushed to do the lab, resources were scarce and so were the chances of success. My choice fell on MyMediaLite, in C# on .NET. Fortunately, I had a working server that was lightly loaded and set aside for experiments, a pretty good one with two CPUs and 16 GB of RAM.

Problem statement

We were provided with the following data:

- train.csv, a table of movie ratings (fields userId, movieId, rating, timestamp). A good half of the sample is given for training (randomly sorted by movieId and userId); the other half stays with the course supervisor to assess the quality of the recommender system

- tags.csv, a table of movie tags (fields userId, movieId, tag, timestamp)

- movies.csv, a table with each movie's title and genres (fields movieId, title, genres)

- links.csv, a table mapping each movie identifier to the IMDb and TheMovieDB databases (fields movieId, imdbId, tmdbId); additional film characteristics can be found there

- test.csv (fields userId, movieId and rating), which is in fact the second half of the sample, but without ratings.

The task is to predict the ratings for the films in test.csv, produce a result file in the format userId, movieId, rating, and upload it to the checker. The quality of the recommendations is evaluated by RMSE, which must be no worse (that is, no higher) than 0.9 to get credit. After that comes the fight for the best result.
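To make the metric concrete, here is a minimal sketch of how RMSE can be computed in plain Python. The function name `rmse` and the sample ratings are made up for illustration; this is not the checker's actual code.

```python
import math

def rmse(actual, predicted):
    """Root mean squared error between two equal-length rating sequences."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical ratings, for illustration only
true_ratings = [4.0, 3.0, 5.0, 2.0]
predicted_ratings = [3.5, 3.0, 4.0, 3.0]
score = rmse(true_ratings, predicted_ratings)
print(score)  # 0.75, which would be under the 0.9 threshold
```

Because the errors are squared before averaging, a few large misses hurt the score more than many small ones.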

All data files are available here https://goo.gl/iVEbfA

Excellent article on how to read RMSE

My solution

The latest version of the code is available on GitHub.

Well, who goes into battle without reconnaissance? The "intelligence" was gathered during lecture breaks, and it turned out that we had been handed :) the well-known MovieLens 1M, with some other data set mixed in. Those who had already finished the lab praised SVD++.

As a rule, any machine learning consists of three parts:

- Representation

- Evaluation

- Optimization

I went the same way and split the sample into two parts, 70% and 30%. The second part is needed to verify the accuracy of the model. I wrote the very first version of the code, and its results were enough to pass the lab: 0.880360573502 with the BiasedMatrixFactorization model. I discarded all the tinsel with tags and links right away; they could have been used as additional features to get a better result, but I didn't spend time on that, and IMHO it was the right decision. Users who were absent from the training set were also boldly ignored, and their ratings were left as whatever values the BiasedMatrixFactorization class returned for unknown users. This was a serious mistake that cost me first place. With the SVD++ model the result was 0.872325203952. The checker showed first place, and with a calm soul, rehearsing my winner's speech, I went to bed. But as they say, don't count your chickens before they hatch.
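The 70/30 split described above can be sketched in a few lines of Python. This is only an illustration: my actual code was C# with MyMediaLite, and the `holdout_split` helper, the seed, and the toy rows are assumptions made up for this example.

```python
import random

def holdout_split(rows, train_fraction=0.7, seed=42):
    """Shuffle rows and split them into (train, validation) lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    cut = int(round(len(shuffled) * train_fraction))
    return shuffled[:cut], shuffled[cut:]

# Hypothetical (userId, movieId, rating) rows, for illustration only
rows = [(u, m, float((u + m) % 5 + 1)) for u in range(10) for m in range(10)]
train, validation = holdout_split(rows)
print(len(train), len(validation))
```

The model is fitted on `train` only, and RMSE is measured on `validation`, so the score reflects ratings the model has not seen.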

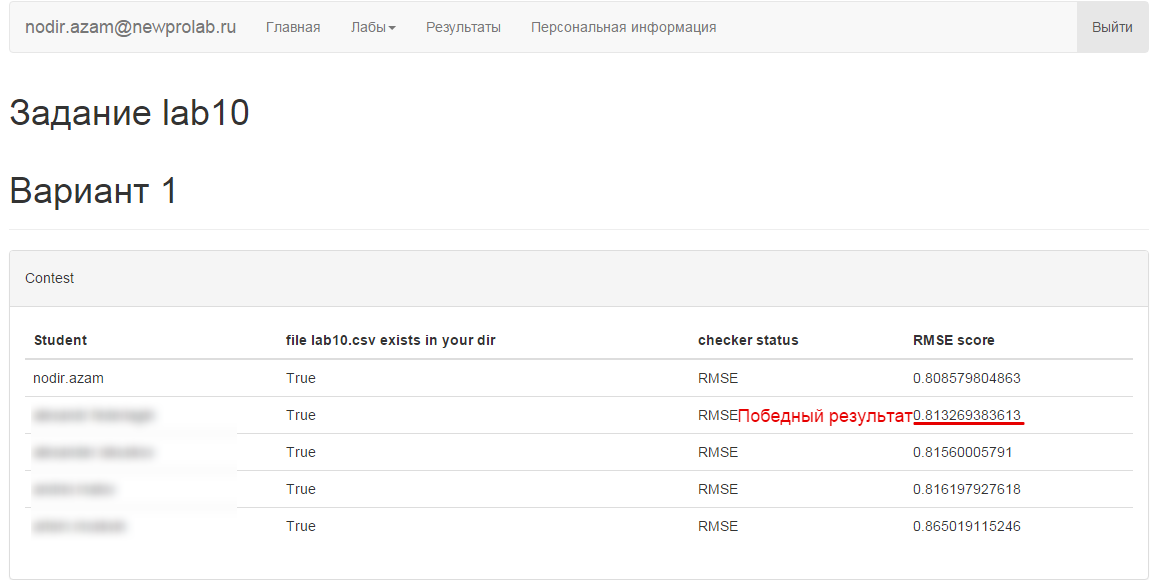

Competition Results

I'll be brief: first place changed hands several times. In the end, at the deadline, my friend took first place and I took second. We programmers are a stubborn people, and I still managed to squeeze out the best result with BiasedMatrixFactorization. Alas, after the deadline.

Alternative solution

My friend wenk, who won first place, kindly agreed to share his code. His solution ran on a cluster with Apache Spark and used ALS from Spark MLlib (pyspark.mllib.recommendation).

# coding: utf-8

# In[1]:
import os
import sys

os.environ["PYSPARK_SUBMIT_ARGS"] = ' --driver-memory 5g --packages com.databricks:spark-csv_2.10:1.1.0 pyspark-shell'
sys.path.insert(0, os.environ.get('SPARK_HOME', None) + "/python")

import py4j
from pyspark import SparkContext, SparkConf, SQLContext

conf = (SparkConf().setMaster("spark://bd-m:7077")
        .setAppName("lab09")
        .set("spark.executor.memory", "50g")
        .set("spark.driver.maxResultSize", "5g")
        .set("spark.driver.memory", "2g")
        .set("spark.cores.max", "26"))
sc = SparkContext(conf=conf)
sqlCtx = SQLContext(sc)

# In[2]:
# Load the training ratings as (userId, movieId, rating), skipping the header row
ratings_src = sc.textFile('/lab10/train.csv', 26)
ratings = ratings_src.map(lambda r: r.split(",")).filter(lambda x: x[0] != 'userId').map(lambda x: (int(x[0]), int(x[1]), float(x[2])))
ratings.take(5)

# In[3]:
# Load the test pairs as (userId, movieId), skipping the header row
test_src = sc.textFile('/lab10/test.csv', 26)
test = test_src.map(lambda r: r.split(",")).filter(lambda x: x[0] != 'userId').map(lambda x: (int(x[0]), int(x[1])))
test.take(5)

# In[4]:
from pyspark.mllib.recommendation import ALS, MatrixFactorizationModel
from pyspark.mllib.recommendation import Rating

rat = ratings.map(lambda r: Rating(int(r[0]), int(r[1]), float(r[2])))
rat.cache()
rat.first()

# In[14]:
training, validation, testing = rat.randomSplit([0.6, 0.2, 0.2])

# In[15]:
print(training.count())
print(validation.count())
print(testing.count())

# In[16]:
training.cache()
validation.cache()

# In[17]:
import math

def evaluate_model(model, dataset):
    # Predict a rating for every (user, movie) pair in the dataset
    testdata = dataset.map(lambda x: (x[0], x[1]))
    predictions = model.predictAll(testdata).map(lambda r: ((r[0], r[1]), r[2]))
    # Join the true ratings with the predictions on the (user, movie) key
    ratesAndPreds = dataset.map(lambda r: ((r[0], r[1]), r[2])).join(predictions)
    MSE = ratesAndPreds.map(lambda r: (r[1][0] - r[1][1])**2).reduce(lambda x, y: x + y) / ratesAndPreds.count()
    RMSE = math.sqrt(MSE)
    return {'MSE': MSE, 'RMSE': RMSE}

# In[12]:
rank = 20
numIterations = 30

# In[28]:
model = ALS.train(training, rank, numIterations)

# In[ ]:
# Sweep over the rank with a fixed lambda
numIterations = 30
lambda_ = 0.085
ps = []
for rank in range(25, 500, 25):
    model = ALS.train(training, rank, numIterations, lambda_)
    metrics = evaluate_model(model, validation)
    print("Rank = " + str(rank) + " MSE = " + str(metrics['MSE']) + " RMSE = " + str(metrics['RMSE']))
    ps.append((rank, metrics['RMSE']))

# In[10]:
# Sweep over lambda with a fixed rank
ls = []
rank = 2
numIterations = 30
for lambda_ in [0.0001, 0.001, 0.01, 0.1, 1.0, 10.0, 100.0, 1000.0]:
    model = ALS.train(training, rank, numIterations, lambda_)
    metrics = evaluate_model(model, validation)
    print("Lambda = " + str(lambda_) + " MSE = " + str(metrics['MSE']) + " RMSE = " + str(metrics['RMSE']))
    ls.append((lambda_, metrics['RMSE']))

# In[23]:
ls = []
rank = 250
numIterations = 30
for lambda_ in [0.085]:
    model = ALS.train(training, rank, numIterations, lambda_)
    metrics = evaluate_model(model, validation)
    print("Lambda = " + str(lambda_) + " MSE = " + str(metrics['MSE']) + " RMSE = " + str(metrics['RMSE']))
    ls.append((lambda_, metrics['RMSE']))

#Lambda = 0.1 MSE = 0.751080178965 RMSE = 0.866648821014
#Lambda = 0.075 MSE = 0.750219897276 RMSE = 0.866152352232
#Lambda = 0.07 MSE = 0.750033337876 RMSE = 0.866044651202
#Lambda = 0.08 MSE = 0.749335888762 RMSE = 0.865641894066
#Lambda = 0.09 MSE = 0.749929174577 RMSE = 0.865984511742
#rank 200 Lambda = 0.085 MSE = 0.709501168484 RMSE = 0.842318923261

# Final model, trained on all ratings (this cell was run under %%time in the notebook)
rank = 400
numIterations = 30
lambda_ = 0.085
model = ALS.train(rat, rank, numIterations, lambda_)
predictions = model.predictAll(test).map(lambda r: (r[0], r[1], r[2]))

# In[7]:
te = test.collect()
base = sorted(te, key=lambda x: x[0]*1000000 + x[1])

# In[8]:
pred = predictions.collect()

# In[9]:
# Group the predictions into {userId: {movieId: rating}}
t_ = predictions.map(lambda x: (x[0], {x[1]: x[2]})).reduceByKey(lambda a, b: dict(list(a.items()) + list(b.items()))).collect()
t = {}
for i in t_:
    t[i[0]] = i[1]

# Pairs without a prediction get a constant rating (presumably the mean training rating)
s = "userId,movieId,rating\r\n"
for i in base:
    if i[0] in t:
        u = t[i[0]]
        if i[1] in u:
            s += str(i[0]) + "," + str(i[1]) + "," + str(u[i[1]]) + "\r\n"
        else:
            s += str(i[0]) + "," + str(i[1]) + ",3.67671059005\r\n"
    else:
        s += str(i[0]) + "," + str(i[1]) + ",3.67671059005\r\n"

# In[12]:
text_file = open("lab10.csv", "w")
text_file.write(s)
text_file.close()

My experience

For myself, I noted a few things:

- Always study the data carefully: don't ignore gaps, but try to fill them with plausible values. For example, using the sample's average rating in place of empty values significantly improved the result

- Rounding the predicted rating reduced accuracy compared with keeping the long fractional tail

- The best result was obtained by training on the entire sample, without a hold-out validation set. The DoCrossValidation method was used

- Ideally, I should have plotted RMSE against the parameters (number of iterations, etc.), so as to move toward victory with eyes open rather than blindly

- Apache Spark gives a gain in computation time because it runs on several machines. If time is critical, use Spark

- MyMediaLite is quite a decent library for small, time-critical tasks. It can pay off when it is not worth standing up a Spark cluster
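The first point, filling gaps with the average rating, can be sketched roughly as follows. This is a Python illustration rather than my C# code; the `fill_missing_predictions` helper and all the data in it are hypothetical.

```python
def fill_missing_predictions(test_pairs, predictions, default_rating):
    """Return a (userId, movieId, rating) triple for every pair in test_pairs.

    predictions maps (userId, movieId) to a predicted rating; pairs the model
    could not score (e.g. users absent from training) get default_rating.
    """
    return [(u, m, predictions.get((u, m), default_rating)) for u, m in test_pairs]

# Hypothetical data, for illustration only
train_ratings = [4.0, 3.0, 5.0, 4.0]
global_mean = sum(train_ratings) / len(train_ratings)
test_pairs = [(1, 10), (2, 20), (99, 10)]  # user 99 is unknown to the model
predictions = {(1, 10): 4.2, (2, 20): 3.1}
filled = fill_missing_predictions(test_pairs, predictions, global_mean)
print(filled)
```

Here the unknown user 99 receives the training mean instead of an arbitrary value, which is usually a safer guess under RMSE than whatever placeholder a model returns.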

Ah, if only I had known all this earlier, I would have been the winner... I value your opinions and advice, friends, so please don't judge too harshly.