How Groupon Migrated From a Monolithic Rails Application to the New Node.js Infrastructure

I-Tier: Dismantling the Monolith

We recently completed a year-long project to migrate Groupon's US web traffic from a monolithic Ruby on Rails application to a new Node.js stack, with significant results.

From the beginning, the entire US web frontend was a single Ruby codebase. The frontend code grew rapidly, which made it hard to maintain and complicated the process of adding new features. To solve the problem of this gigantic monolith, we decided to restructure the frontend by dividing it into smaller, independent, easier-to-manage parts. The core of the project was splitting the monolithic website into several independent Node.js applications. We also rebuilt the infrastructure so that all of the applications work together. The result is the Interaction Tier (I-Tier).

Here are some of the highlights of this architectural migration:

• Pages on the site load much faster.

• Our development teams can build and ship new features faster and with less dependence on other teams.

• We can avoid re-implementing the same features in each country where Groupon is available.

This post is the first in a series about how we restructured the site and the substantial advantages we expect it to bring as Groupon moves forward.

A short history

Groupon was originally a one-page site that displayed daily deals for Chicago residents. A typical deal might be a discount at a local restaurant or a ticket to a local concert.

Each deal had a “tipping point”: the minimum number of people who had to buy the deal for it to become valid. If enough people bought the deal to reach the tipping point, everyone received the discount. Otherwise, no one did.

The site was originally built as a Ruby on Rails application. Rails was a good choice to start with, since it was one of the easiest ways for our development team to get a site up and running quickly. It was also fairly easy to implement new features in Rails, which was a huge plus for us at the time because the feature set was constantly growing.

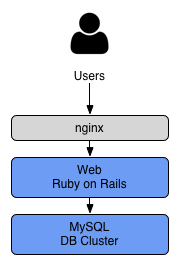

The original Rails architecture was simple enough:

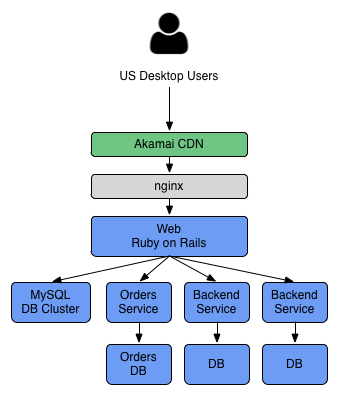

However, we quickly outgrew the ability to serve all of our traffic with a single Rails application pointed at a single database cluster. We added more frontend servers and database replicas and put everything behind a CDN, but that only worked until database writes became the bottleneck. Order processing generated a large number of database writes, so we decided to move that code out of our Rails application into a new service with its own database cluster.

We continued to follow a similar pattern, breaking existing backend functionality out into new services, but everything else on the website (views, controllers, assets, etc.) remained part of the original Rails application.

This architectural change bought us time, but we knew it would not last. The codebase was still manageable for the small development team we had at the time, and the change kept the site from falling over during peak traffic.

Globalization

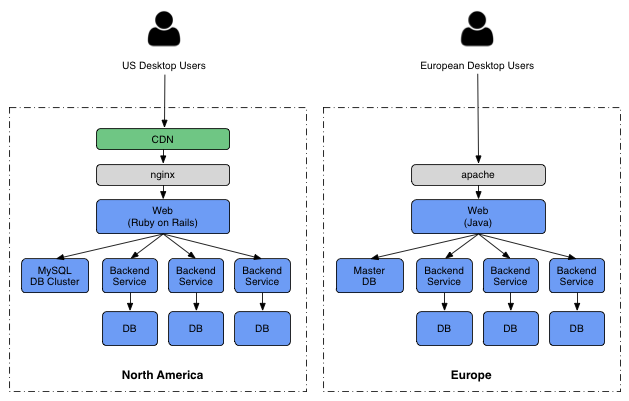

Around this time, Groupon began to expand globally. In a short period, we went from operating only in the United States to operating in 48 countries. During this time, we also acquired several international companies, such as CityDeal. Each acquisition came with its own software stack.

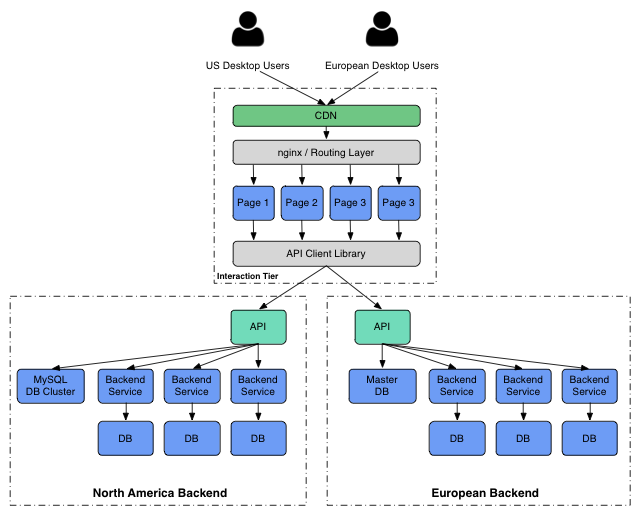

The CityDeal architecture was similar to Groupon's, but it was a completely separate implementation built by a different team. As a result, it differed in design and technology: Java instead of Ruby, Apache instead of nginx, PostgreSQL instead of MySQL.

As is common for fast-growing companies, we had to choose between slowing down to integrate the different stacks and keeping both systems running, knowing that we would be taking on technical debt that would have to be repaid later. At first, we made a deliberate decision to keep the US and European implementations separate in exchange for faster growth. And the more acquisitions followed, the more complexity was added to the architecture.

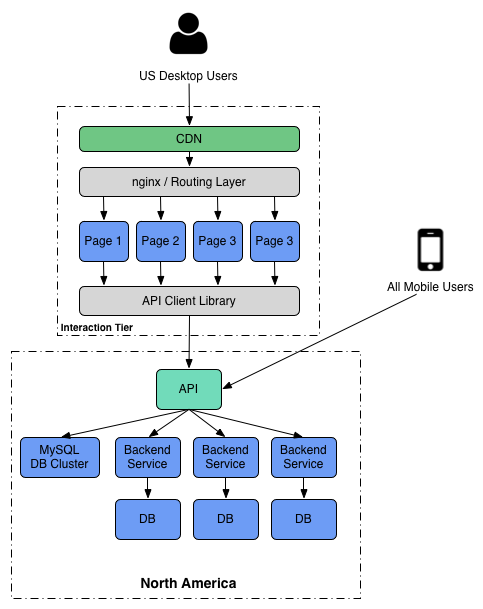

Mobile applications

We also built mobile clients for iPhone, iPad, Android and Windows Mobile, and we definitely did not want to build a separate mobile application for each country in which Groupon was available. Instead, we decided to build an API layer on top of each of our backend software platforms; our mobile clients called whichever API endpoint corresponded to the user's country.

This worked well for our mobile team. They could build a mobile application that worked in all of our countries.
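The per-country endpoint lookup described above could be sketched roughly as follows. This is only an illustration: the platform names, hostnames, and country assignments are hypothetical placeholders, not Groupon's real configuration.

```typescript
// Hypothetical backend platforms; the article only says that each
// platform exposed its own API layer.
type Platform = "us" | "europe";

// Placeholder hostnames, assumed for illustration only.
const API_BASE_URLS: Record<Platform, string> = {
  us: "https://api.us.example.com",
  europe: "https://api.eu.example.com",
};

// Which platform serves which country (illustrative subset).
const COUNTRY_PLATFORM: { [countryCode: string]: Platform | undefined } = {
  US: "us",
  DE: "europe",
  FR: "europe",
};

// Returns the API base URL a client should use for the given country code.
function apiBaseUrlFor(countryCode: string): string {
  const platform = COUNTRY_PLATFORM[countryCode.toUpperCase()];
  if (platform === undefined) {
    throw new Error("No API platform configured for country " + countryCode);
  }
  return API_BASE_URLS[platform];
}
```

With a table like this, a single client build can serve every country: only the lookup result changes, not the application code.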

But there was still a snag. Whenever we created a new product or feature, we built it for the web first and only then created an API so that the feature could be used on mobile devices.

Now that about half of our US business is mobile, we need to think mobile-first. Accordingly, we wanted an architecture in which a single backend could serve both mobile and desktop clients with minimal development effort.

Multiple monoliths

As Groupon continued to grow and launch new products, the Ruby frontend codebase kept getting bigger. Too many developers were working in the same code. It got to the point where developers had trouble running the application locally. Test suites slowed down, and unreliable tests became a problem. And since it was a single codebase, the whole application had to be deployed at once. When a production error required a rollback, every team's changes were rolled back, not just the one broken feature. In short, we had all the usual problems of a monolithic codebase.

And we had these problems several times over. We not only had to deal with the US codebase; we had many of the same problems with the European one. We needed to completely restructure the frontend.

The big rewrite

Restructuring an entire frontend is risky. It takes a lot of time and involves many different people, and there is a real chance that the end result will be no better than the old system. Or, worse, it takes so long that you give up halfway, having spent a great deal of effort with nothing to show for it.

But we had had great success in the past when restructuring smaller parts of our infrastructure. For example, both our mobile website and our client portal had been rebuilt with excellent results. That experience gave us a good starting point, and from it we set the goals for this project.

Goal 1: Unify our frontends

With numerous software stacks that implement the same features in different countries, we were not able to move as fast as we wanted. We needed to eliminate redundancy in our stack.

Goal 2: Put mobile apps on par with the web

Since about half of our US business had become mobile, we could not afford to build separate desktop and mobile versions. We needed an architecture in which the web was just another client of the same API our mobile applications used.

Goal 3: Make the site faster

Our site was slower than we wanted. While we were scrambling to keep up with the site's growth, the US frontend had accumulated technical debt that made it hard to optimize. We wanted a solution that did not require so much code to serve a request. We wanted something simple.

Goal 4: Allow teams to progress independently

When Groupon first launched, the site really was simple. Since then, we have added many new products, with development teams around the world supporting them. We wanted each team to be able to build and deploy its features quickly and independently of other teams. We needed to eliminate the interdependence between teams that came from having everything in one codebase.

The approach

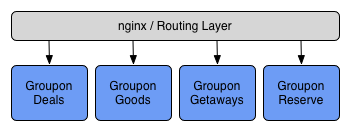

First, we decided to break each major feature of the site out into a separate web application.

We built a framework on top of Node.js that includes the common functionality every application needs, so that our teams can easily create these individual applications.

Note: why Node.js?

Before building our new frontend layer, we evaluated several different software stacks to figure out which would suit us best.

We were looking for a solution to a specific problem: efficiently handle many incoming HTTP requests, make parallel API requests to backend services for each of those HTTP requests, and render the results into HTML. We also wanted something we could confidently monitor, deploy and support.

We wrote prototypes using several software stacks and tested them. We will post more detailed information with specifics later, but in general we found that Node.js was well suited to this particular problem.
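To make that workload concrete, here is a minimal sketch of a page handler that fans out to two backend calls in parallel and renders the combined result as HTML. The `fetchUser` and `fetchDeals` functions and the data shapes are hypothetical stand-ins for real API clients; this is not Groupon's framework code.

```typescript
// Hypothetical response shapes, assumed for illustration.
interface User { name: string }
interface Deal { title: string; price: number }

// Stand-ins for real HTTP calls to backend API services.
function fetchUser(userId: string): Promise<User> {
  return Promise.resolve({ name: "user-" + userId });
}

function fetchDeals(city: string): Promise<Deal[]> {
  return Promise.resolve([{ title: "Dinner for two in " + city, price: 25 }]);
}

// Escape text before interpolating it into HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

async function renderDealsPage(userId: string, city: string): Promise<string> {
  // Both requests are started before either is awaited, so they run
  // concurrently; Promise.all resolves once both have completed.
  const [user, deals] = await Promise.all([fetchUser(userId), fetchDeals(city)]);
  const items = deals
    .map((deal) => `<li>${escapeHtml(deal.title)}: $${deal.price}</li>`)
    .join("");
  return `<h1>Deals for ${escapeHtml(user.name)}</h1><ul>${items}</ul>`;
}
```

Because the calls overlap, the time spent waiting on the backends is roughly that of the slowest call rather than the sum of all calls, which is the property that matters most for this kind of aggregation layer.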

The approach, continued

Next, we added a routing layer on top that directs users to the appropriate application based on the page they are visiting.

We built Groupon's routing service (which we call Grout) as an nginx module. This allows us to do many interesting things, such as running A/B tests between different implementations of the same application on different servers.

To make all of these independent web applications work together, we built separate services for sharing layouts and CSS styles, maintaining shared configuration, and managing A/B test treatments. We will post more detailed information about these services later.

All of this sits in front of our API, and nothing in the frontend layer is allowed to talk to a database or backend service directly. This lets us build a single unified API layer that serves both our desktop and mobile applications.

We are working on unifying our backend systems, but in the short term we still need to support both our US and European platforms. So, for the time being, we designed our frontends to work with both backends.
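The two ideas in this paragraph, routing by page and splitting traffic between implementations, can be illustrated with a small sketch. Grout itself is an nginx module and its internals are not described here, so the route table, application names, upstream names, and hashing scheme below are all assumptions made for illustration.

```typescript
// Hypothetical route table mapping URL prefixes to applications.
interface Route { prefix: string; app: string }

const ROUTES: Route[] = [
  { prefix: "/deals", app: "deal-page" },
  { prefix: "/checkout", app: "checkout" },
  { prefix: "/", app: "homepage" },
];

// A prefix matches whole path segments only, so "/deals" does not match "/dealsxyz".
function matches(prefix: string, path: string): boolean {
  if (prefix === "/") return path.indexOf("/") === 0;
  return path === prefix || path.indexOf(prefix + "/") === 0;
}

// Pick the application with the longest matching prefix.
function resolveApp(path: string): string {
  let best: Route | undefined;
  for (const route of ROUTES) {
    if (matches(route.prefix, path) && (best === undefined || route.prefix.length > best.prefix.length)) {
      best = route;
    }
  }
  if (best === undefined) throw new Error("No route for path " + path);
  return best.app;
}

// Deterministic, non-cryptographic hash mapped to [0, 1), so the same
// visitor always lands in the same bucket.
function hashToUnitInterval(key: string): number {
  let hash = 0;
  for (let i = 0; i < key.length; i++) {
    hash = (hash * 31 + key.charCodeAt(i)) >>> 0; // keep as unsigned 32-bit
  }
  return hash / 0x100000000;
}

// Hypothetical experiment: two implementations of one application.
interface Experiment {
  app: string;
  controlUpstream: string;
  variantUpstream: string;
  variantShare: number; // fraction of visitors sent to the variant, 0..1
}

function chooseUpstream(experiment: Experiment, visitorId: string): string {
  const bucket = hashToUnitInterval(experiment.app + ":" + visitorId);
  return bucket < experiment.variantShare
    ? experiment.variantUpstream
    : experiment.controlUpstream;
}
```

Hashing the visitor ID instead of picking randomly per request keeps each visitor on one implementation for the duration of the test, which is what makes the two groups comparable.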
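One common way to let a single frontend talk to two different backends is to hide each one behind a shared interface. The sketch below assumes that approach; the payload shapes, field names, and factory functions are hypothetical, since the real backend APIs are not described in this post.

```typescript
// The normalized shape the frontend works with (assumed for illustration).
interface Deal { id: string; title: string; priceCents: number }

// Common interface that both backend adapters implement.
interface DealBackend { getDeal(id: string): Deal }

// Hypothetical raw payloads returned by each platform.
interface UsDealPayload { id: string; title: string; price_cents: number }
interface EuDealPayload { dealId: string; name: string; price: number } // price in major units

// Adapter for the US platform: field names differ, units already match.
function usBackend(load: (id: string) => UsDealPayload): DealBackend {
  return {
    getDeal(id: string): Deal {
      const raw = load(id);
      return { id: raw.id, title: raw.title, priceCents: raw.price_cents };
    },
  };
}

// Adapter for the European platform: converts the price to minor units.
function euBackend(load: (id: string) => EuDealPayload): DealBackend {
  return {
    getDeal(id: string): Deal {
      const raw = load(id);
      return { id: raw.dealId, title: raw.name, priceCents: Math.round(raw.price * 100) };
    },
  };
}

// Page code depends only on DealBackend, never on a specific platform.
function dealHeading(backend: DealBackend, id: string): string {
  const deal = backend.getDeal(id);
  return deal.title + " (" + (deal.priceCents / 100).toFixed(2) + ")";
}
```

With this kind of seam in place, unifying the backends later means replacing the adapters rather than rewriting every page.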

Results

We have just completed the transition of our US frontend from Ruby to the new Node.js infrastructure. The old monolithic frontend has been split into approximately 20 separate web applications, each of which was completely rewritten. We currently serve an average of 50,000 requests per minute (roughly 830 per second), and we expect this traffic to increase during the holiday season. The number will grow significantly as we move traffic over from our other 48 countries.

These are the benefits we have seen so far:

- Pages load faster, typically by around 50%. This is partly due to the change in technology and partly because we had the chance to rewrite all of our web pages with speed in mind. We still expect significant gains here as we make further changes.

- We serve the same amount of traffic with less hardware than the old stack required.

- Teams are able to deploy changes to their applications independently.

- We can make changes to the code of an individual feature much faster than we could do with our old architecture.

Overall, this migration has enabled our development teams to ship pages faster and with fewer interdependencies, and it has removed some of the performance limitations of our old platform. We plan to make many more improvements, and we will post details soon.

[original article on engineering.groupon.com]