Additional security software for NAS

The series of articles is titled "Building a Secure NAS", so this article is about raising the level of security. I will also describe tools that I did not use myself but that can be applied.

Who and how?

Who is going to attack the system and how will it do it?

This is usually the first question to be answered before talking about security.

In the case of a NAS, there is already an implicit answer. But to answer this question fully, you need to build a threat model and an attacker model.

Companies include the threat modeling phase in their development cycles.

Microsoft has the SDL for this; others have other models.

They involve specific techniques, such as STRIDE or DREAD (STRIDE is more general and better supported by tools).

In STRIDE, for example, the model is built from data flow diagrams and is usually large, heavy, and hard to understand. However, the tool produces a list of potential threats, which makes them easier to work through.

The threat model is confidential information, because it makes it easier for an attacker to analyze the system and look for weak points. If he obtains the model, he does not have to build it himself: the analysts have already done all the work.

That was a short commercial break.

This is how serious companies build threat models. If there is interest, I can describe the process in a separate article.

Here I will describe what is called "hardening" and deal with the security flaws introduced while building the system.

At this level, security is maintained mainly by closing known vulnerabilities, monitoring the system, and checking it periodically.

Related Literature

What to read:

- It is worth starting with the Hardened Linux project, where those interested can find a lot of useful information.

- The harden-doc package contains the Securing Debian Manual from 2012; it is dated, but that does not prevent it from explaining the subject.

- Then read the Debian 8 Hardening Quick Start Guide.

- And finally, a short tutorial on hardening deb packages and C/C++ code, which you probably won't need.

Snapshots and Docker

Earlier, I installed zfs-auto-snapshot. It has helped me many times, because I could restore corrupted configurations (for Nextcloud, for example) from snapshots.

However, over time the system began to slow down, and several thousand snapshots accumulated.

It turned out that when creating the parent file system for containers, I had forgotten to set the property com.sun:auto-snapshot=false.

The original article has already been corrected; here I will show how to get rid of the unnecessary snapshots.

First, disable zfs-auto-snapshot on Docker's parent file system:

zfs set com.sun:auto-snapshot=false tank0/docker/lib

Now remove unused containers and images:

docker container prune

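docker image prune

Delete the snapshots. The list is sorted newest first, so tail -n +1500 skips the 1499 most recent ones and only older snapshots are destroyed: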

zfs list -t snapshot -o name -S creation | grep -e ".*docker/lib.*@zfs-auto-snap" | tail -n +1500 | xargs -n 1 zfs destroy -vr

Then disable snapshots on all the image file systems:

zfs list -t filesystem -o name -S creation | grep -e "tank0/docker/lib" | xargs -n 1 zfs set com.sun:auto-snapshot=false

You can read more here.
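The snapshot-deletion pipeline above is easy to get wrong, so it can help to preview its selection before anything is destroyed. The following is a minimal Python sketch, not part of zfs-auto-snapshot, that reproduces the `-S creation | grep | tail -n +1500` logic on a list of (name, creation time) pairs. The function name and the sample data are hypothetical, and obtaining real input (for example from `zfs list -H -p -t snapshot -o name,creation`) is left as an assumption.

```python
import re
from typing import Iterable, List, Tuple


def select_for_destroy(snapshots: Iterable[Tuple[str, int]],
                       pattern: str = r".*docker/lib.*@zfs-auto-snap",
                       keep: int = 1499) -> List[str]:
    """Return the snapshot names the pipeline above would pass to `zfs destroy`.

    `snapshots` holds (name, creation_timestamp) pairs (hypothetical input format).
    """
    regex = re.compile(pattern)
    # `-S creation`: sort by creation time, newest first.
    newest_first = sorted(snapshots, key=lambda s: s[1], reverse=True)
    # `grep -e PATTERN`: keep names that match anywhere in the line.
    matching = [name for name, _ in newest_first if regex.search(name)]
    # `tail -n +1500` prints from line 1500 on, i.e. it skips the 1499 newest.
    return matching[keep:]


# Hypothetical sample data; keep=1 makes the effect visible on a tiny list.
snaps = [
    ("tank0/docker/lib/abc@zfs-auto-snap_hourly-1", 100),
    ("tank0/docker/lib/abc@zfs-auto-snap_hourly-2", 200),
    ("tank0/docker/lib/abc@zfs-auto-snap_hourly-3", 300),
    ("tank0/nextcloud@zfs-auto-snap_hourly-1", 150),
]
for name in select_for_destroy(snaps, keep=1):
    print(name)
```

Here the newest docker snapshot survives, the Nextcloud snapshot is never touched because it does not match the pattern, and the two older docker snapshots are selected.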

LDAP

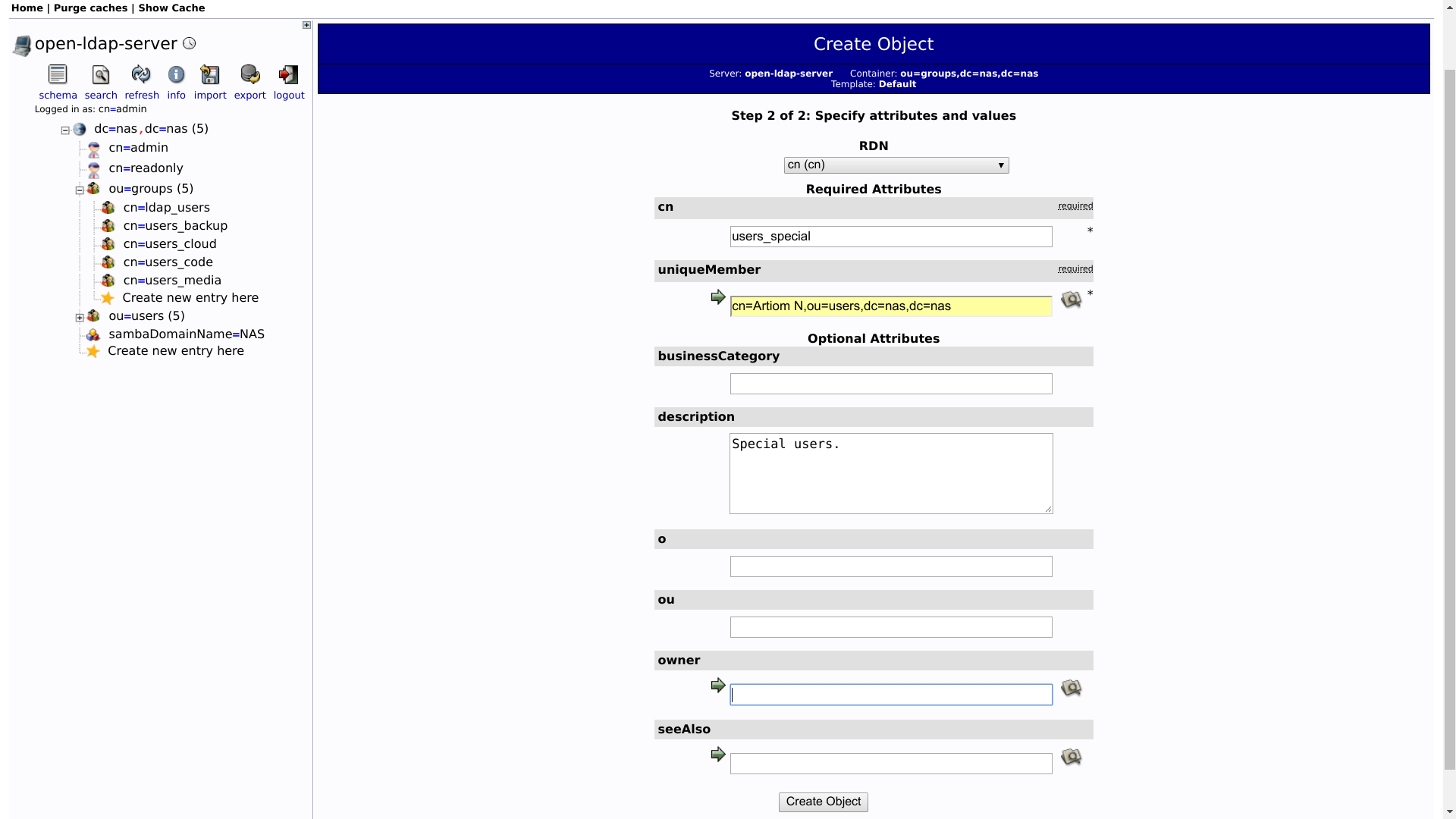

Last time, only one LDAP user was created, with the administrative role.

But most services do not need to change anything in the user database, so it would be good to add a read-only user. To avoid creating roles manually, you can use the container's initialization script.

First, add the settings that enable the read-only user to docker-compose.yml:

- "LDAP_READONLY_USER=true"

- "LDAP_READONLY_USER_USERNAME=readonly"

- "LDAP_READONLY_USER_PASSWORD=READONLY_PASSWORD"

The full file:

version: "2"
networks:
  ldap:
  docker0:
    external:
      name: docker0
services:
  open-ldap:
    image: "osixia/openldap"
    hostname: "open-ldap"
    restart: always
    environment:
      - "LDAP_ORGANISATION=NAS"
      - "LDAP_DOMAIN=nas.nas"
      - "LDAP_ADMIN_PASSWORD=ADMIN_PASSWORD"
      - "LDAP_CONFIG_PASSWORD=CONFIG_PASSWORD"
      - "LDAP_READONLY_USER=true"
      - "LDAP_READONLY_USER_USERNAME=readonly"
      - "LDAP_READONLY_USER_PASSWORD=READONLY_PASSWORD"
      - "LDAP_TLS=true"
      - "LDAP_TLS_ENFORCE=false"
      - "LDAP_TLS_CRT_FILENAME=ldap_server.crt"
      - "LDAP_TLS_KEY_FILENAME=ldap_server.key"
      - "LDAP_TLS_CA_CRT_FILENAME=ldap_server.crt"
    volumes:
      - ./certs:/container/service/slapd/assets/certs
      - ./ldap_data/var/lib:/var/lib/ldap
      - ./ldap_data/etc/ldap/slapd.d:/etc/ldap/slapd.d
    networks:
      - ldap
    ports:
      - 172.21.0.1:389:389
      - 172.21.0.1:636:636
  phpldapadmin:
    image: "osixia/phpldapadmin:0.7.1"
    hostname: "nas.nas"
    restart: always
    networks:
      - ldap
      - docker0
    expose:
      - 443
    links:
      - open-ldap:open-ldap-server
    volumes:
      - ./certs:/container/service/phpldapadmin/assets/apache2/certs
    environment:
      - VIRTUAL_HOST=ldap.*
      - VIRTUAL_PORT=443
      - VIRTUAL_PROTO=https
      - CERT_NAME=NAS.cloudns.cc
      - "PHPLDAPADMIN_LDAP_HOSTS=open-ldap-server"
      #- "PHPLDAPADMIN_HTTPS=false"
      - "PHPLDAPADMIN_HTTPS_CRT_FILENAME=certs/ldap_server.crt"
      - "PHPLDAPADMIN_HTTPS_KEY_FILENAME=private/ldap_server.key"
      - "PHPLDAPADMIN_HTTPS_CA_CRT_FILENAME=certs/ldap_server.crt"
      - "PHPLDAPADMIN_LDAP_CLIENT_TLS_REQCERT=allow"
  ldap-ssp:
    image: openfrontier/ldap-ssp:https
    volumes:
      - /etc/ssl/certs/ssl-cert-snakeoil.pem:/etc/ssl/certs/ssl-cert-snakeoil.pem
      - /etc/ssl/private/ssl-cert-snakeoil.key:/etc/ssl/private/ssl-cert-snakeoil.key
    restart: always
    networks:
      - ldap
      - docker0
    expose:
      - 80
    links:
      - open-ldap:open-ldap-server
    environment:
      - VIRTUAL_HOST=ssp.*
      - VIRTUAL_PORT=80
      - VIRTUAL_PROTO=http
      - CERT_NAME=NAS.cloudns.cc
      - "LDAP_URL=ldap://open-ldap-server:389"
      - "LDAP_BINDDN=cn=admin,dc=nas,dc=nas"
      - "LDAP_BINDPW=ADMIN_PASSWORD"
      - "LDAP_BASE=ou=users,dc=nas,dc=nas"
      - "MAIL_FROM=admin@nas.nas"
      - "PWD_MIN_LENGTH=8"
      - "PWD_MIN_LOWER=3"
      - "PWD_MIN_DIGIT=2"
      - "SMTP_HOST="
      - "SMTP_USER="
      - "SMTP_PASS="

Next, back up and dump the data, then delete it so that the container initializes the database from scratch:

$ cd /tank0/docker/services/ldap

$ tar czf ~/ldap_backup.tgz .

$ ldapsearch -Wx -D "cn=admin,dc=nas,dc=nas" -b "dc=nas,dc=nas" -H ldap://172.21.0.1 -LLL > ldap_dump.ldif

$ docker-compose down

$ rm -rf ldap_data

$ docker-compose up -d

So that the server does not complain about duplicate entries when restoring from the dump, delete the following entries from the file:

dn: dc=nas,dc=nas
objectClass: top
objectClass: dcObject
objectClass: organization
o: NAS
dc: nas

dn: cn=admin,dc=nas,dc=nas
objectClass: simpleSecurityObject
objectClass: organizationalRole
cn: admin
description: LDAP administrator
userPassword:: PASSWORD_BASE64
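Instead of deleting these two entries by hand, the dump could be filtered with a short script. This is a minimal sketch, assuming a plain LDIF dump as produced by `ldapsearch -LLL`, where entries are separated by empty lines and each entry starts with a `dn:` line. The helper names are hypothetical, and base64-encoded (`dn::`) DNs are not handled.

```python
from typing import Iterable, List

# DNs that the freshly initialized container creates on its own.
SKIP_DNS = {"dc=nas,dc=nas", "cn=admin,dc=nas,dc=nas"}


def split_entries(ldif: str) -> List[str]:
    """Split LDIF text into entries; entries are separated by empty lines."""
    entries, current = [], []
    for line in ldif.splitlines():
        if line == "":
            if current:
                entries.append("\n".join(current))
                current = []
        else:
            current.append(line)
    if current:
        entries.append("\n".join(current))
    return entries


def entry_dn(entry: str) -> str:
    # The first line is "dn: ..."; base64-encoded "dn:: ..." values are not decoded.
    return entry.splitlines()[0].split(":", 1)[1].strip()


def drop_entries(ldif: str, skip: Iterable[str] = SKIP_DNS) -> str:
    """Remove entries whose DN is in `skip` (compared case-insensitively)."""
    skip_lower = {dn.lower() for dn in skip}
    kept = [e for e in split_entries(ldif) if entry_dn(e).lower() not in skip_lower]
    return "\n\n".join(kept) + "\n"
```

To use it, read ldap_dump.ldif, pass its contents to drop_entries, and write the result back before running ldapadd.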

And restore users and groups:

$ ldapadd -Wx -D "cn=admin,dc=nas,dc=nas" -H ldap://172.21.0.1 -f ldap_dump.ldif

The database will then contain an entry like this:

dn: cn=readonly,dc=nas,dc=nas
cn: readonly
objectClass: simpleSecurityObject
objectClass: organizationalRole
userPassword:: PASSWORD_BASE64
description: LDAP read only user

The container will create the roles for it in the LDAP server configuration.

After restoring, check that everything works and delete the backup:

$ rm ~/ldap_backup.tgz

Adding groups to LDAP

It is convenient to divide LDAP users into groups, similar to POSIX groups in Linux.

For example, you can create groups whose members have access to repositories, to the cloud, or to the library.

Groups are easy to add in phpLDAPadmin, so I will not dwell on this.

I will note only the following:

- The group is created from the "Default" template. This is not a POSIX group but a group of unique names.

- Accordingly, the group's objectClass attribute includes the value groupOfUniqueNames.

Docker

In Docker, almost everything is done for you.

By default, Docker restricts system calls with seccomp, which is enabled in the OMV kernel:

# grep SECCOMP /boot/config-4.16.0-0.bpo.2-amd64

CONFIG_HAVE_ARCH_SECCOMP_FILTER=y

CONFIG_SECCOMP_FILTER=y

CONFIG_SECCOMP=y

Here you can read a little more about basic Docker security rules.

Also, if AppArmor is enabled, Docker can integrate with it and apply its profiles to containers.

Network

Preventing OS detection

The NAS sits behind a router, but as a curious exercise you can change some network stack parameters so that the OS cannot be identified from its responses.

There is little real benefit in this, because an attacker will study the service banners and figure out which OS you are running anyway.

# nmap -O localhost

Starting Nmap 7.40 ( https://nmap.org ) at 2018-08-26 14:39 MSK

Nmap scan report for localhost (127.0.0.1)

Host is up (0.000015s latency).

Other addresses for localhost (not scanned): ::1

Not shown: 992 closed ports

PORT STATE SERVICE

53/tcp open domain

80/tcp open http

443/tcp open https

5432/tcp open postgresql

Device type: general purpose

Running: Linux 3.X|4.X

OS CPE: cpe:/o:linux:linux_kernel:3 cpe:/o:linux:linux_kernel:4

OS details: Linux 3.8 - 4.6

Network Distance: 0 hops

OS detection performed. Please report any incorrect results at https://nmap.org/submit/ .

Nmap done: 1 IP address (1 host up) scanned in 4.07 seconds

Load the settings from sysctl.conf:

# sysctl -p /etc/sysctl.conf

net.ipv4.conf.all.accept_redirects = 0

net.ipv6.conf.all.accept_redirects = 0

net.ipv4.conf.all.send_redirects = 0

net.ipv4.conf.all.accept_source_route = 0

net.ipv6.conf.all.accept_source_route = 0

net.ipv4.tcp_rfc1337 = 1

net.ipv4.ip_default_ttl = 128

net.ipv4.icmp_ratelimit = 900

net.ipv4.tcp_synack_retries = 7

net.ipv4.tcp_syn_retries = 7

net.ipv4.tcp_window_scaling = 1

net.ipv4.tcp_timestamps = 1

And now:

# nmap -O localhost

Starting Nmap 7.40 ( https://nmap.org ) at 2018-08-26 14:40 MSK

Nmap scan report for localhost (127.0.0.1)

Host is up (0.000026s latency).

Other addresses for localhost (not scanned): ::1

Not shown: 992 closed ports

PORT STATE SERVICE

53/tcp open domain

80/tcp open http

443/tcp open https

5432/tcp open postgresql

No exact OS matches for host (If you know what OS is running on it, see https://nmap.org/submit/ ).

TCP/IP fingerprint:

OS:SCAN(V=7.40%E=4%D=8/26%OT=53%CT=1%CU=43022%PV=N%DS=0%DC=L%G=Y%TM=5B8291C

OS:3%P=x86_64-pc-linux-gnu)SEQ(SP=FA%GCD=1%ISR=105%TI=Z%CI=I%TS=8)OPS(O1=MF

OS:FD7ST11NW7%O2=MFFD7ST11NW7%O3=MFFD7NNT11NW7%O4=MFFD7ST11NW7%O5=MFFD7ST11

OS:NW7%O6=MFFD7ST11)WIN(W1=AAAA%W2=AAAA%W3=AAAA%W4=AAAA%W5=AAAA%W6=AAAA)ECN

OS:(R=Y%DF=Y%T=80%W=AAAA%O=MFFD7NNSNW7%CC=Y%Q=)T1(R=Y%DF=Y%T=80%S=O%A=S+%F=

OS:AS%RD=0%Q=)T2(R=N)T3(R=N)T4(R=Y%DF=Y%T=80%W=0%S=A%A=Z%F=R%O=%RD=0%Q=)T5(

OS:R=Y%DF=Y%T=80%W=0%S=Z%A=S+%F=AR%O=%RD=0%Q=)T6(R=Y%DF=Y%T=80%W=0%S=A%A=Z%

OS:F=R%O=%RD=0%Q=)T7(R=Y%DF=Y%T=80%W=0%S=Z%A=S+%F=AR%O=%RD=0%Q=)U1(R=Y%DF=N

OS:%T=80%IPL=164%UN=0%RIPL=G%RID=G%RIPCK=G%RUCK=G%RUD=G)IE(R=Y%DFI=N%T=80%C

OS:D=S)

Network Distance: 0 hops

OS detection performed. Please report any incorrect results at https://nmap.org/submit/ .

Nmap done: 1 IP address (1 host up) scanned in 19.52 seconds

These settings need to be added to /etc/sysctl.conf so that they are read automatically at every boot:

###################################################################

# Additional settings - these settings can improve the network

# security of the host and prevent against some network attacks

# including spoofing attacks and man in the middle attacks through

# redirection. Some network environments, however, require that these

# settings are disabled so review and enable them as needed.

#

# Do not accept ICMP redirects (prevent MITM attacks)

net.ipv4.conf.default.accept_redirects = 0

net.ipv6.conf.default.accept_redirects = 0

net.ipv4.conf.all.accept_redirects = 0

net.ipv6.conf.all.accept_redirects = 0

# _or_

# Accept ICMP redirects only for gateways listed in our default

# gateway list (enabled by default)

# net.ipv4.conf.all.secure_redirects = 1

#

# Do not send ICMP redirects (we are not a router)

net.ipv4.conf.all.send_redirects = 0

#

# Do not accept IP source route packets (we are not a router)

net.ipv4.conf.all.accept_source_route = 0

net.ipv6.conf.all.accept_source_route = 0

## protect against tcp time-wait assassination hazards

## drop RST packets for sockets in the time-wait state

## (not widely supported outside of linux, but conforms to RFC)

net.ipv4.tcp_rfc1337 = 1

#

# Log Martian Packets

#net.ipv4.conf.all.log_martians = 1

#

###################################################################

# Magic system request Key

# 0=disable, 1=enable all

# Debian kernels have this set to 0 (disable the key)

# See https://www.kernel.org/doc/Documentation/sysrq.txt

# for what other values do

#kernel.sysrq=1

###################################################################

# Protected links

#

# Protects against creating or following links under certain conditions

# Debian kernels have both set to 1 (restricted)

# See https://www.kernel.org/doc/Documentation/sysctl/fs.txt

#fs.protected_hardlinks=0

#fs.protected_symlinks=0

vm.overcommit_memory = 1

vm.swappiness = 10

###################################################################

# Anti-fingerprinting.

#

# Def: 64.

net.ipv4.ip_default_ttl = 128

# ICMP packet rate limit (default 1000)

net.ipv4.icmp_ratelimit = 900

# Number of retransmissions for packets that received no response.

# Def: 5.

net.ipv4.tcp_synack_retries = 7

# Def: 5.

net.ipv4.tcp_syn_retries = 7

# Set TCP window scaling and timestamps according to RFC 1323.

net.ipv4.tcp_window_scaling = 1

net.ipv4.tcp_timestamps = 1

# Redis requirement.

net.core.somaxconn = 511

Protection against service version detection, which an attacker can also perform with Nmap, is more useful:

# nmap -sV -sR --allports --version-trace 127.0.0.1

Not shown: 991 closed ports

PORT STATE SERVICE VERSION

22/tcp open ssh OpenSSH 7.4p1 Debian 10+deb9u3 (protocol 2.0)

25/tcp open smtp Postfix smtpd

80/tcp open http nginx 1.13.12

111/tcp open rpcbind 2-4 (RPC #100000)

139/tcp open netbios-ssn Samba smbd 3.X - 4.X (workgroup: WORKGROUP)

443/tcp open ssl/http nginx 1.13.12

445/tcp open netbios-ssn Samba smbd 3.X - 4.X (workgroup: WORKGROUP)

3493/tcp open nut Network UPS Tools upsd

8000/tcp open http Icecast streaming media server 2.4.2

Service Info: Hosts: nas.localdomain, NAS; OS: Linux; CPE: cpe:/o:linux:linux_kernel

Final times for host: srtt: 22 rttvar: 1 to: 100000

But disguising services is not entirely straightforward:

- Each service needs an individual approach.

- It is not always possible to remove the version.

For example, for SSH you can add the option DebianBanner no to /etc/ssh/sshd_config.

As a result:

22/tcp open ssh OpenSSH 7.4p1 (protocol 2.0)

Unfortunately, you cannot do better: SSH uses the version to determine which features are supported, and it can only be changed by patching the server.
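To see what Nmap is working with here: an SSH server sends an identification string before key exchange, of the form SSH-protoversion-softwareversion followed by optional comments (RFC 4253, section 4.2). The sketch below splits such a string. The example strings are assumed to correspond to the Debian build shown above, where `DebianBanner no` removes the comments part but leaves the software version. `grab_banner` is a hypothetical helper that connects to a host; it is not called here.

```python
import socket
from typing import NamedTuple, Optional


class SshIdent(NamedTuple):
    proto: str
    software: str
    comments: Optional[str]


def parse_ident(line: str) -> SshIdent:
    """Split an RFC 4253 identification string: SSH-proto-software[ SP comments]."""
    line = line.rstrip("\r\n")
    if not line.startswith("SSH-"):
        raise ValueError("not an SSH identification string")
    head, _, comments = line.partition(" ")
    _, proto, software = head.split("-", 2)
    return SshIdent(proto, software, comments or None)


def grab_banner(host: str, port: int = 22, timeout: float = 5.0) -> str:
    """Read the identification line the server sends before key exchange."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        data = sock.recv(256)
    # RFC 4253 allows other lines before the SSH- line, so search for it.
    for line in data.decode("ascii", errors="replace").splitlines():
        if line.startswith("SSH-"):
            return line
    raise ValueError("no SSH identification string received")


print(parse_ident("SSH-2.0-OpenSSH_7.4p1 Debian-10+deb9u3"))
print(parse_ident("SSH-2.0-OpenSSH_7.4p1"))
```

The software field ("OpenSSH_7.4p1") survives in both cases, which is exactly what Nmap reports after the change.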

Port-knocking

A lesser-known protection technique that allows a remote user who knows a secret to connect to a closed port.

It works like a combination lock: everyone knows that service daemons are running on the server, but they are "not there" until the code is entered.

For example, to connect to the SSH server, a user has to knock on UDP port 7000, TCP port 7007, and UDP port 7777.

After that, the firewall allows connections from his IP to the closed TCP port 22.
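The knock logic described above can be sketched as a small per-IP state machine. This is an illustrative Python sketch of the idea only, assuming the example sequence and a hypothetical 10-second timeout. A real daemon (for example knockd) watches incoming packets and runs firewall commands, which are omitted here.

```python
import time
from typing import Dict, List, Optional, Tuple

Knock = Tuple[str, int]  # (protocol, port)

# The secret from the example above: UDP 7000, TCP 7007, UDP 7777.
SEQUENCE: List[Knock] = [("udp", 7000), ("tcp", 7007), ("udp", 7777)]


class KnockTracker:
    """Per-IP progress through a knock sequence (illustrative logic only)."""

    def __init__(self, sequence: List[Knock], timeout: float = 10.0):
        self.sequence = sequence
        self.timeout = timeout
        # ip -> (index of the next expected knock, time of the first knock)
        self.progress: Dict[str, Tuple[int, float]] = {}

    def knock(self, ip: str, proto: str, port: int, now: Optional[float] = None) -> bool:
        """Register one packet; return True once `ip` completes the sequence."""
        now = time.monotonic() if now is None else now
        step, started = self.progress.get(ip, (0, now))
        if now - started > self.timeout:
            step, started = 0, now              # too slow: start over
        if (proto, port) == self.sequence[step]:
            step += 1
        elif (proto, port) == self.sequence[0]:
            step, started = 1, now              # wrong knock that restarts the sequence
        else:
            self.progress.pop(ip, None)         # wrong knock: reset silently
            return False
        if step == len(self.sequence):
            self.progress.pop(ip, None)
            return True                         # a daemon would now open TCP 22 for ip
        self.progress[ip] = (step, started)
        return False


tracker = KnockTracker(SEQUENCE)
ip = "203.0.113.5"
print(tracker.knock(ip, "udp", 7000, now=0.0))  # False
print(tracker.knock(ip, "tcp", 7007, now=1.0))  # False
print(tracker.knock(ip, "udp", 7777, now=2.0))  # True: sequence complete
```

Any wrong packet resets the progress, so scanning the ports in order or at random does not reveal the sequence.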

You can read more about how this works here and in the Debian manual.

I do not recommend it, because fail2ban is usually sufficient.

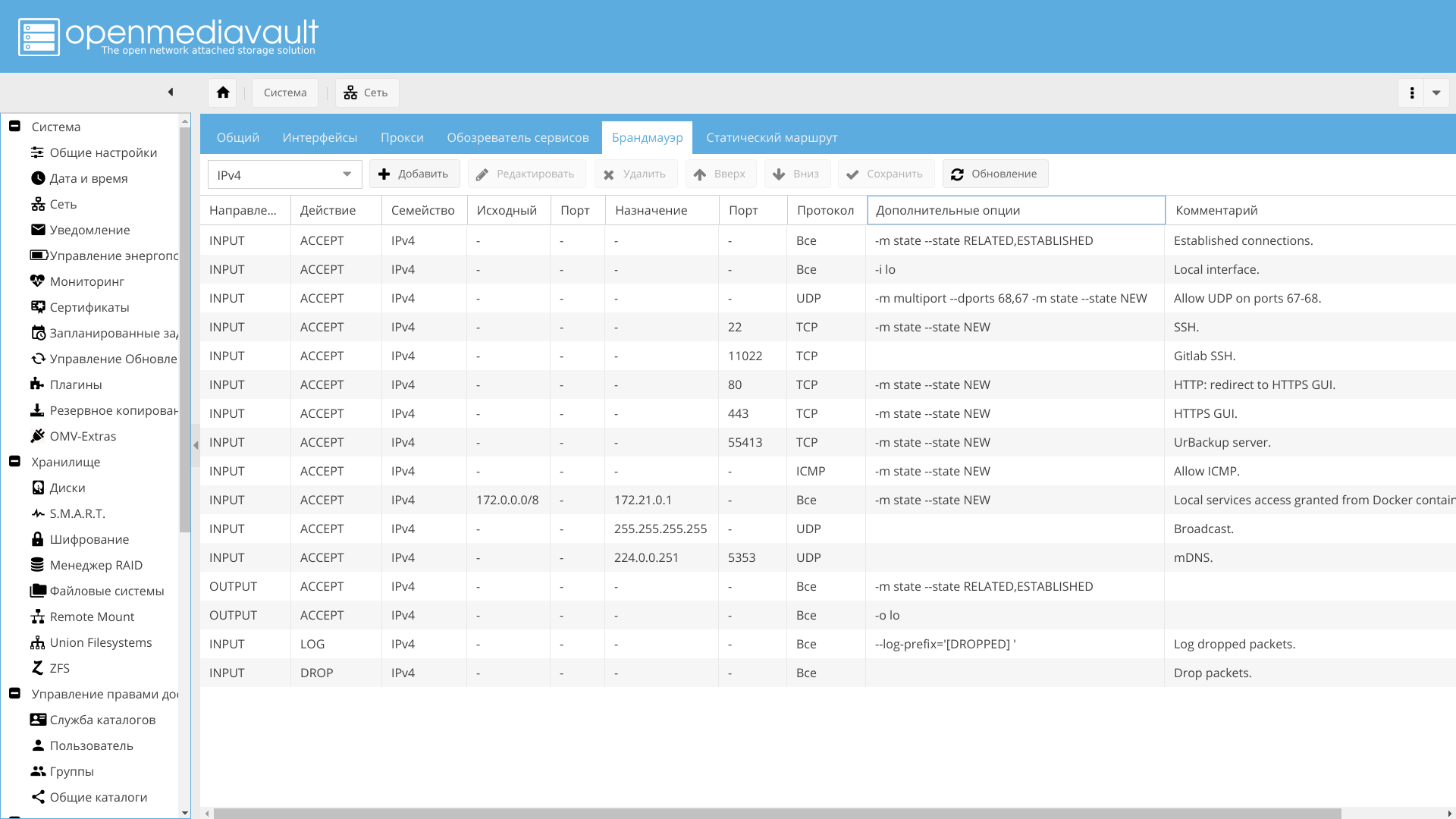

Firewall

I configure the firewall through the OpenMediaVault web GUI, and I recommend doing the same.

Open the necessary ports, such as 443 and 22, and the rest to taste. It is also advisable to enable logging of dropped packets.

SSH

# grep "invalid user" /var/log/auth.log|head

Aug 26 00:07:57 nas sshd[29786]: input_userauth_request: invalid user test [preauth]

Aug 26 00:07:59 nas sshd[29786]: Failed password for invalid user test from 185.143.160.137 port 51268 ssh2

Aug 26 00:11:01 nas sshd[5641]: input_userauth_request: invalid user 0 [preauth]

Aug 26 00:11:01 nas sshd[5641]: Failed none for invalid user 0 from 5.188.10.180 port 49025 ssh2

Aug 26 00:11:04 nas sshd[5644]: input_userauth_request: invalid user 0101 [preauth]

Aug 26 00:11:06 nas sshd[5644]: Failed password for invalid user 0101 from 5.188.10.180 port 59867 ssh2

Aug 26 00:32:55 nas sshd[20367]: input_userauth_request: invalid user ftp [preauth]

Aug 26 00:32:56 nas sshd[20367]: Failed password for invalid user ftp from 5.188.10.144 port 47981 ssh2

Aug 26 00:32:57 nas sshd[20495]: input_userauth_request: invalid user guest [preauth]

Aug 26 00:32:59 nas sshd[20495]: Failed password for invalid user guest from 5.188.10.144 port 34202 ssh2

At the risk of sounding trite, I will remind you what is required:

- Strictly prohibit logging in as root.

- Restrict access to specified users only.

- Change the port to a non-standard one.

- It is advisable to disable password authentication, leaving only key-based authentication.

You can read more, for example, here.

All this is easily done from the OpenMediaVault interface via the menu "Services -> SSH".

The only exception is that I did not change the port: I left 22 on the local network and simply mapped a different external port on the NAT router.
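The SSH recommendations listed above can be checked against the configuration text with a small script. This is a minimal sketch, assuming a simple sshd_config without Include directives or quoted arguments; the helper names are hypothetical. With the setup just described (port 22 on the LAN, remapped on the router), the port check reports a problem that is acceptable in that case.

```python
from typing import Dict, List


def parse_sshd_config(text: str) -> Dict[str, str]:
    """Collect global keyword -> value pairs from sshd_config text.

    Keywords are case-insensitive and, as in sshd, the first value wins.
    Parsing stops at the first Match block, whose settings apply only
    conditionally. Only the whitespace-separated "Keyword value" form is handled.
    """
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        key = parts[0].lower()
        if key == "match":
            break
        settings.setdefault(key, parts[1].strip() if len(parts) > 1 else "")
    return settings


def audit(text: str) -> List[str]:
    """Check the four recommendations from the list above."""
    s = parse_sshd_config(text)
    problems = []
    if s.get("permitrootlogin", "").lower() != "no":
        problems.append("PermitRootLogin is not 'no'")
    if "allowusers" not in s and "allowgroups" not in s:
        problems.append("no AllowUsers/AllowGroups restriction")
    # OpenSSH enables password authentication unless told otherwise.
    if s.get("passwordauthentication", "yes").lower() != "no":
        problems.append("PasswordAuthentication is not 'no'")
    if s.get("port", "22") == "22":
        problems.append("Port is the default 22")
    return problems
```

For example, audit(open("/etc/ssh/sshd_config").read()) returns an empty list when all four recommendations are in place.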

# grep "invalid user" /var/log/auth.log|sed 's/.*invalid user \([^ ]*\) .*/\1/'|sort|uniq

0

0101

1234

22

admin

ADMIN

administrateur

administrator

admins

alfred

amanda

amber

Anonymous

apache

avahi

backup@network

bcnas

benjamin

bin

cacti

callcenter

camera

cang

castis

charlotte

clamav

client

cristina

cron

CSG

cvsuser

cyrus

david

db2inst1

debian

debug

default

denis

elvira

erik

fabio

fax

ftp

ftpuser

gary

gast

GEN2

guest

I2b2workdata2

incoming

jboss

john

juan

matilda

max

mia

miner

muhammad

mysql

nagios

nginx

noc

office

oliver

operator

oracle

osmc

pavel

pi

pmd

postgres

PROCAL

prueba

RSCS

sales

sales1

scaner

selena

student07

sunos

support

sybase

sysadmin

teamspeak

telecomadmin

test

test1

test2

test3

test7

tirocu

token

tomcat

tplink

ubnt

ubuntu

user1

vagrant

victor

volition

www-data

xghwzp

xxx

zabbix

zimbra

Once this is done, unauthorized login attempts become much less frequent.

To improve the situation further, you can block IPs after several failed login attempts.

Tools that can be used for this:

- Fail2ban. A popular utility that works not only for SSH but also for many other applications.

- DenyHosts. Similar to fail2ban.

- SSHGuard. You can try it if you wish, but I have not looked into it in detail.

I use fail2ban. It monitors the logs for unwanted actions from particular IPs and bans them when the number of matches exceeds a threshold:

2018-08-29 21:17:25,351 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.144

2018-08-29 21:17:25,473 fail2ban.actions [8650]: NOTICE [sshd] Ban 5.188.10.144

2018-08-29 21:17:27,359 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.144

2018-08-29 21:28:13,128 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.176

2018-08-29 21:28:13,132 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.176

2018-08-29 21:28:15,137 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.176

2018-08-29 21:28:20,145 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.176

2018-08-29 21:28:25,153 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.176

2018-08-29 21:28:25,421 fail2ban.actions [8650]: NOTICE [sshd] Ban 5.188.10.176

2018-08-29 21:30:05,272 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.180

2018-08-29 21:30:05,274 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.180

2018-08-29 21:30:13,285 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.180

2018-08-29 21:30:13,286 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.180

2018-08-29 21:30:15,289 fail2ban.filter [8650]: INFO [sshd] Found 5.188.10.180

2018-08-29 21:30:15,803 fail2ban.actions [8650]: NOTICE [sshd] Ban 5.188.10.180

The ban is implemented by adding a firewall rule. After a specified time, the rule is removed, and the user can try to log in again.
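The ban behaviour described above (count matches per IP, ban when a threshold is reached, lift the ban after a while) can be sketched in Python. The parameter names follow fail2ban's maxretry, findtime and bantime, but the defaults used here (5 attempts, 600 seconds) and the class itself are assumptions for illustration, not fail2ban's code.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class BanTracker:
    """Sliding-window banning in the spirit of fail2ban's maxretry/findtime/bantime."""

    def __init__(self, maxretry: int = 5, findtime: float = 600, bantime: float = 600):
        self.maxretry = maxretry
        self.findtime = findtime
        self.bantime = bantime
        self.failures: Dict[str, Deque[float]] = defaultdict(deque)
        self.banned_until: Dict[str, float] = {}

    def is_banned(self, ip: str, now: float) -> bool:
        until = self.banned_until.get(ip)
        if until is None:
            return False
        if now >= until:
            del self.banned_until[ip]   # ban expired: the firewall rule is removed
            return False
        return True

    def failure(self, ip: str, now: float) -> bool:
        """Record one failed attempt ("Found"); return True if it triggers a "Ban"."""
        if self.is_banned(ip, now):
            return False
        window = self.failures[ip]
        window.append(now)
        while now - window[0] > self.findtime:
            window.popleft()            # forget failures older than findtime
        if len(window) >= self.maxretry:
            window.clear()
            self.banned_until[ip] = now + self.bantime   # here: add a firewall rule
            return True
        return False
```

Five failures from one address within a few seconds, as for 5.188.10.176 in the log above, trigger a ban on the fifth; failures spread further apart than findtime never accumulate.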

Initially, only SSH is enabled, but you can also enable monitoring of the logs of the web server and other services, including OMV itself.

You can also export logs from containers and point fail2ban at them too.

I recommend adding services to taste.

You can read more about the configuration, for example, here or on the project's own wiki.

Logs

Lwatch

A small utility worth installing for convenience. It colorizes logs and displays them in a readable form.

Any similar utility will do, as long as it highlights errors and problem areas in the logs to make visual analysis easier.

Logcheck

It is worth installing and configuring logcheck so that configuration problems reported in the logs show up in your mail right away.

It helps you see what is going wrong, although it requires tuning.

Installation:

# apt-get install logcheck

Immediately after installation, it will start sending reports.

System Events

=-=-=-=-=-=-=

Oct 2 02:02:15 nas kernel: [793847.981226] [DROPPED] IN=br-ce OUT= PHYSIN=veth6c2a68e MAC=ff:ff:ff:ff:ff:ff: SRC=172.22.0.11 DST=255.255.255.255 LEN=29 TOS=0x00 PREC=0x00 TTL=64 ID=40170 DF PROTO=UDP SPT=35623 DPT=35622 LEN=9

Oct 2 02:02:20 nas hddtemp[13791]: /dev/sdh: Micron_1100 N #020Ђ: 32 C

Oct 2 02:02:37 nas kernel: [793869.247128] [DROPPED] IN=br-7ba OUT= MAC= SRC=172.31.0.1 DST=172.31.255.255 LEN=239 TOS=0x00 PREC=0x00 TTL=128 ID=23017 DF PROTO=UDP SPT=138 DPT=138 LEN=219

Oct 2 02:02:37 nas kernel: [793869.247174] [DROPPED] IN=br-7ba OUT= MAC= SRC=172.31.0.1 DST=172.31.255.255 LEN=232 TOS=0x00 PREC=0x00 TTL=128 ID=23018 DF PROTO=UDP SPT=138 DPT=138 LEN=212

Oct 2 02:02:37 nas kernel: [793869.247195] [DROPPED] IN=br-673 OUT= MAC= SRC=192.168.224.1 DST=192.168.239.255 LEN=239 TOS=0x00 PREC=0x00 TTL=128 ID=8959 DF PROTO=UDP SPT=138 DPT=138 LEN=219

Oct 2 02:02:37 nas kernel: [793869.247203] [DROPPED] IN=br-673 OUT= MAC= SRC=192.168.224.1 DST=192.168.239.255 LEN=232 TOS=0x00 PREC=0x00 TTL=128 ID=8960 DF PROTO=UDP SPT=138 DPT=138 LEN=212

Oct 2 02:02:50 nas hddtemp[13791]: /dev/sdh: Micron_1100 N #020Ђ: 32 C

Clearly there is a lot of noise, and further configuration comes down to filtering it out.

First, silence hddtemp, which does not work correctly because of non-ASCII characters in the SSD name.

After correcting the hddtemp file, the messages stopped arriving:

^\w{3} [ :0-9]{11} [._[:alnum:]-]+ hddtemp\[[0-9]+\]: /dev/([hs]d[a-z]|sg[0-9]):.*[0-9]+.*[CF]

^\w{3} [ :0-9]{11} [._[:alnum:]-]+ hddtemp\[[0-9]+\]: /dev/([hs]d[a-z]|sg[0-9]):.*drive is sleeping

Next, you can see that logcheck reports broadcast traffic blocked by the firewall:

[793869.247128] [DROPPED] IN=br-7ba OUT= MAC= SRC=172.31.0.1 DST=172.31.255.255 LEN=239 TOS=0x00 PREC=0x00 TTL=128 ID=23017 DF PROTO=UDP SPT=138 DPT=138 LEN=219

Therefore, broadcast traffic from the router and containers needs to be allowed:

- Port 35622 from the UrBackup container.

- Port 5678 from the router: RouterOS neighbor discovery. It can be disabled on the router.

- Port 5353 to address 224.0.0.251: mDNS.

Testing logcheck:

sudo -u logcheck logcheck -t -d

Finally, the real problems are visible:

Oct 21 21:58:18 nas systemd[1]: Removed slice User Slice of user.

Oct 21 21:58:31 nas systemd[1]: smbd.service: Unit cannot be reloaded because it is inactive.

Oct 21 21:58:31 nas root: /etc/dhcp/dhclient-enter-hooks.d/samba returned non-zero exit status 1

It turns out that Samba does not start. Indeed, analysis showed that I had masked it with systemctl, while OMV kept trying to start it.

Logcheck will still send various noisy messages.

For example, a zfs-auto-snapshot run:

Oct 21 22:00:57 nas zfs-auto-snap: @zfs-auto-snap_frequent-2018-10-21-1900, 16 created, 16 destroyed, 0 warnings.

To ignore it:

^\w{3} [ :0-9]{11} [._[:alnum:]-]+ zfs-auto-snap: \@zfs-auto-snap_[[:alnum:]-]+, [0-9]+ created, [0-9]+ destroyed, 0 warnings.$

rrdcached messages are ignored as well:

^\w{3} [ :[:digit:]]{11} [._[:alnum:]-]+ rrdcached\[[0-9]+\]: flushing old values$

^\w{3} [ :[:digit:]]{11} [._[:alnum:]-]+ rrdcached\[[0-9]+\]: rotating journals$

^\w{3} [ :[:digit:]]{11} [._[:alnum:]-]+ rrdcached\[[0-9]+\]: started new journal [./[:alnum:]]+$

^\w{3} [ :[:digit:]]{11} [._[:alnum:]-]+ rrdcached\[[0-9]+\]: removing old journal [./[:alnum:]]+$

It is also advisable to filter out zed messages, if you have not done so yet:

^\w{3} [ :0-9]{11} [._[:alnum:]-]+ (/usr/bin/)?zed: .*$

^\w{3} [ :0-9]{11} [._[:alnum:]-]+ (/usr/bin/)?zed\[[0-9]+\]: .*$

And so on. Many people consider logcheck a rather useless utility.

That is true if you treat it as something to set up and forget.

However, once you understand that logcheck is simply a customizable log filter, with no heuristics, magic, or adaptive algorithms, the question of its usefulness disappears. By iteratively examining what it sends and either adding it to the ignore list or fixing the underlying problem, you can gradually get informative reports.

Using an automated tool is much better than running logs through the same regular expressions by hand, and often better than deploying a full data analysis system such as Splunk.
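When iterating on ignore rules, it helps to test a candidate regex against real log lines before adding it. This is a minimal sketch, assuming logcheck applies its rules as POSIX extended regexes (egrep style). Python's re module does not support POSIX bracket classes such as [:alnum:], so the hypothetical helper below translates the few used in the rules above before matching.

```python
import re
from typing import Iterable

# Python's re has no POSIX bracket classes; translate the ones used in the rules.
POSIX_CLASSES = {"[:alnum:]": "a-zA-Z0-9", "[:digit:]": "0-9", "[:alpha:]": "a-zA-Z"}


def to_python_regex(rule: str) -> str:
    for posix, py in POSIX_CLASSES.items():
        rule = rule.replace(posix, py)
    return rule


def is_ignored(line: str, rules: Iterable[str]) -> bool:
    """True if any ignore rule matches the line, as an egrep search would."""
    return any(re.search(to_python_regex(rule), line) for rule in rules)


# Two of the ignore rules from above, copied verbatim.
RULES = [
    r"^\w{3} [ :0-9]{11} [._[:alnum:]-]+ zfs-auto-snap: \@zfs-auto-snap_[[:alnum:]-]+, [0-9]+ created, [0-9]+ destroyed, 0 warnings.$",
    r"^\w{3} [ :[:digit:]]{11} [._[:alnum:]-]+ rrdcached\[[0-9]+\]: flushing old values$",
]

samples = [
    "Oct 21 22:00:57 nas zfs-auto-snap: @zfs-auto-snap_frequent-2018-10-21-1900, 16 created, 16 destroyed, 0 warnings.",
    "Oct 21 21:58:31 nas systemd[1]: smbd.service: Unit cannot be reloaded because it is inactive.",
]
for s in samples:
    print("ignored" if is_ignored(s, RULES) else "REPORT ", s)
```

The zfs-auto-snap line is filtered out while the Samba problem is still reported, which is the behaviour the iterative tuning aims for.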

You can read about logcheck and its configuration on the Gentoo Wiki and here.

Node-level IDS

Here I refer to my own article “A Brief Analysis of Solutions in the Field of IDS and Development of a Neural Network Anomaly Detector in Data Networks”, which contains several examples.

You can read a more complete review and comparison of similar IDSs on the Wiki.

STIG-4

A large and complex script from Red Hat for static analysis of known security gaps.

There is a port for Debian, which I recommend downloading and running at least once.

# cd

root@nas:~# git clone https://github.com/hardenedlinux/STIG-4-Debian

Cloning into 'STIG-4-Debian'...

remote: Enumerating objects: 572, done.

remote: Total 572 (delta 0), reused 0 (delta 0), pack-reused 572

Receiving objects: 100% (572/572), 634.37 KiB | 0 bytes/s, done.

Resolving deltas: 100% (316/316), done.

root@nas:~# cd STIG-4-Debian/

root@nas:~/STIG-4-Debian# bash stig-4-debian.sh -H

Script Run: Mon Nov 12 23:58:34 MSK 2018

Start checking process...

[ FAIL ] The cryptographic hash of system files and commands must match vendor values.

...

Pass Count: 54

Failed Count: 137

Working with this script goes roughly like this:

- Run the script.

- Search the web for each line marked [ FAIL ]. As a rule, you will find a link to the STIG online database.

- Fix the issue following the instructions in the database, making allowances for the fact that this is Debian.

- Run the script again.

Rkhunter

Plenty has been written about RkHunter.

It has been in wide use for a long time and is still being developed. It is available in the Debian repository.

Tiger

A modular shell script that performs system auditing and intrusion detection.

In some ways similar to STIG-4.

It can use third-party utilities to analyze logs and to detect checksum mismatches.

It consists of a large number of modules.

For example, there is a module that detects services using deleted files. This happens when a system update replaced the libraries a service uses, but the service was not restarted for some reason.

There are also modules that look for service accounts that are no longer used, check for missing security patches, check umask settings, and so on.

Read more in the man page.

It has not been developed for 10 years (yes, I am not the only one who abandons software).
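The idea behind Tiger's deleted-files module described above can be illustrated on Linux: when a mapped file such as a shared library is replaced or deleted, its path appears in /proc/PID/maps with the suffix " (deleted)". This Python sketch shows that check; it is an assumption about the technique, not Tiger's actual implementation, and scanning every process requires root.

```python
import os
from typing import Dict, Set

SUFFIX = " (deleted)"


def deleted_mappings(maps_text: str) -> Set[str]:
    """Return paths of mapped files that have been deleted or replaced."""
    paths = set()
    for line in maps_text.splitlines():
        # Fields: address perms offset dev inode [pathname]; the pathname may contain spaces.
        fields = line.split(None, 5)
        if len(fields) == 6 and fields[5].endswith(SUFFIX):
            paths.add(fields[5][: -len(SUFFIX)])
    return paths


def scan_processes(proc: str = "/proc") -> Dict[int, Set[str]]:
    """Map PID -> deleted files it still maps (run as root to see every process)."""
    result: Dict[int, Set[str]] = {}
    for entry in os.listdir(proc):
        if not entry.isdigit():
            continue
        try:
            with open(os.path.join(proc, entry, "maps")) as f:
                deleted = deleted_mappings(f.read())
        except OSError:
            continue                    # process exited or access denied
        if deleted:
            result[int(entry)] = deleted
    return result


sample = (
    "7f00a000-7f00b000 r-xp 00000000 08:01 131090    /usr/lib/libssl.so.1.1 (deleted)\n"
    "7f00c000-7f00d000 r--p 00000000 08:01 131091    /usr/lib/libc.so.6\n"
)
print(deleted_mappings(sample))  # {'/usr/lib/libssl.so.1.1'}
```

A PID reported by scan_processes() after an update is a candidate for a restart. Anonymous shared memory (for example memfd mappings) can also show up as deleted, so results need a human look.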

Samhain

A typical HIDS that can:

- Check the integrity of the entire system using cryptographic hashes.

- Find executables with the SUID bit set where it should not be.

- Detect hidden processes.

- Sign logs and databases.

It also offers centralized monitoring with a web interface and centralized logging to a server.

Usually, it is used to check whether the system binary files have changed between updates.

When updating the operating system, the signature database is rebuilt.

Ideally, of course, the database should be stored on another machine, with offline checks performed periodically. The database is signed, but if an attacker takes control of the running machine, nothing prevents him from tampering with the output of such systems.
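The core of Samhain- and Tripwire-style integrity checking is a baseline of cryptographic hashes that is later compared with the current state. Below is a minimal Python sketch built on that assumption. It omits signing, metadata checks, and off-host storage, and, as noted above, off-host storage is where the baseline should really live. The function names are hypothetical.

```python
import hashlib
import os
from typing import Dict, Iterable, List


def sha256_file(path: str) -> str:
    """Hash a file in 64 KiB chunks so large binaries do not fill memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def build_baseline(roots: Iterable[str]) -> Dict[str, str]:
    """Map every regular file under `roots` to its SHA-256 (symlinks skipped)."""
    baseline: Dict[str, str] = {}
    for root in roots:
        for dirpath, _, filenames in os.walk(root):
            for name in filenames:
                path = os.path.join(dirpath, name)
                if os.path.islink(path) or not os.path.isfile(path):
                    continue
                try:
                    baseline[path] = sha256_file(path)
                except OSError:
                    continue            # unreadable file: skipped in this sketch
    return baseline


def compare(old: Dict[str, str], new: Dict[str, str]) -> Dict[str, List[str]]:
    """Report files added, removed or modified since the baseline was taken."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "modified": sorted(p for p in old.keys() & new.keys() if old[p] != new[p]),
    }
```

For example, build_baseline(["/bin", "/sbin", "/usr/bin"]) could be serialized with json, copied to another machine, and compared after the next update. Legitimate package upgrades will also show up as "modified", which is why these systems rebuild the database after updates.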

I used this HIDS once: it is not very convenient and requires effort.

The problem is not Samhain itself but the whole class of such systems.

Tripwire

Almost the same as Samhain, but Tripwire is serious business.

It is backed by a fairly large company and has an enterprise version.

Lynis

A utility from the author of RkHunter.

Partially proprietary, but has a free version.

The home page has a short comparison of it with several other systems.

It is similar to Tiger and does roughly the same job, but unlike Tiger, it is maintained by its author.

Whether to use it is up to you. One of the tutorials referenced at the beginning of the article uses Lynis together with Tripwire.

Chkrootkit

The name speaks for itself: a utility for finding rootkits.

In short:

- It searches for system binaries modified by rootkits.

- Checks whether any network interfaces are in promiscuous mode.

- Checks whether records were deleted from lastlog, wtmp and utmp.

- Searches for signatures of kernel-level trojans, both in memory and in the file system.

The list of rootkits it detects is rather impressive.
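The promiscuous-mode check from the list above can be approximated on Linux by reading interface flags from sysfs and testing the IFF_PROMISC bit (0x100 in <linux/if.h>). This sketch assumes the /sys/class/net/<iface>/flags layout and is not how chkrootkit itself implements the check. On a host with bridges, such as Docker's, some interfaces may be promiscuous for legitimate reasons.

```python
import os
from typing import List

IFF_PROMISC = 0x100  # from <linux/if.h>


def is_promiscuous(flags_text: str) -> bool:
    """`flags_text` is the content of /sys/class/net/<iface>/flags, e.g. '0x1003'."""
    return bool(int(flags_text.strip(), 16) & IFF_PROMISC)


def promiscuous_interfaces(sysfs: str = "/sys/class/net") -> List[str]:
    """List interfaces whose flags have IFF_PROMISC set."""
    found = []
    for iface in sorted(os.listdir(sysfs)):
        try:
            with open(os.path.join(sysfs, iface, "flags")) as f:
                if is_promiscuous(f.read()):
                    found.append(iface)
        except OSError:
            continue                    # interface vanished or is unreadable
    return found


print(is_promiscuous("0x1103"))  # True
print(is_promiscuous("0x1003"))  # False
```

On the NAS itself, promiscuous_interfaces() returns the names to investigate.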

Ninja

A system for detecting process privilege escalation on Linux.

Do not confuse with the ninja-build build system.

It runs as a daemon and monitors processes. If a process starts with UID/GID 0, Ninja logs information about it, and if the process was launched by an unauthorized user, Ninja can kill it (you will have to deal with that user afterwards).

Some executables can be whitelisted (for example, su).

Authorized users are members of a specified group.

There are Ubuntu repositories that can easily be added on Debian.

Security scanners

I will not dwell on this topic in detail; it is too broad. Scanners are used to check the security of a system with the same tools an attacker would use.

For basic checks of web services, Nmap and W3af are enough.

Systems with alternative security models

Linux uses a discretionary (selective) access control model.

But about ten access models are known. Besides discretionary, role-based and mandatory models are also common.

The systems below mostly implement the latter, partially, sometimes with elements of role-based control.

You can read more about all three in IBM's Secure Linux article series.

I have not used any of them on the NAS yet, but I plan to enable AppArmor.

These systems do not greatly increase security, but they do reduce the attack surface.

Of course, they will not save you from a browser sandbox escape via a system call bug that allows writing to kernel address space, but they can help against malicious actions in user space.

Access Control Lists

The first variant of mandatory access control, which depends on the capabilities of the file system.

Of course, ZFS supports full access control lists.

- Permission to add a new file to a directory.

- Permission to create a subdirectory in a directory.

- Permission to delete a file.

- Permission to delete a file or directory within a directory.

- Permission to execute a file or search the contents of a directory.

- Permission to list the contents of a directory.

- Permission to read the ACL (the ls command).

- Permission to read the basic attributes of a file (other than ACLs; the attributes at the level of the "stat" command).

- Permission to read the contents of a file.

- Permission to read the extended attributes of a file or to perform a lookup in the file's extended attributes directory.

- Permission to create extended attributes or to write to the extended attributes directory.

- Permission to modify or replace the contents of a file.

- Permission to change the times associated with a file or directory to an arbitrary value.

- Permission to create or modify ACLs with the chmod command.

- Permission to change the owner or group owner of a file, or the ability to run chown or chgrp on the file.

- Permission to take ownership of a file or to change the file's group owner to a group the user is a member of. Changing the owner or group owner to an arbitrary user or group requires the PRIV_FILE_CHOWN privilege.

- Inheritance of ACLs from the parent directory by the directory's files only.

- Inheritance of ACLs from the parent directory by subdirectories only.

- Inheritance of ACLs from the parent directory, applied only to new files or subdirectories, not to the directory itself. To specify what is inherited, the file_inherit flag, the dir_inherit flag, or both must be set.

- Inheritance of ACLs from the parent directory by the first-level contents of the directory only, not by the second or deeper levels.

Configuring ACLs is quite a chore, and they are used more for fine-grained access control than for protection. As a mechanism, however, they are complete.

Unlike ext file systems, where ACLs are managed with the separate setfacl/getfacl utilities, ZFS manages them with the standard chmod and ls commands.
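Entry order is what makes these ACLs tricky. The sketch below shows how an ordered allow/deny list is evaluated, following the NFSv4 ACL rules as I understand them from RFC 7530.

- Entries are checked in order, and only those that apply to the caller count.
- Once a permission has been allowed, later entries no longer affect it.
- A deny entry that covers a still-unallowed requested permission refuses the request.
- Anything never allowed is denied.

The principal strings and permission names are illustrative, and real ZFS adds owner@/group@ handling and the inheritance flags listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ace:
    who: str          # principal this entry applies to, e.g. "user:alice", "everyone@"
    perms: frozenset  # permission names covered by this entry
    allow: bool       # True for an allow entry, False for a deny entry


def acl_permits(acl, principals, wanted):
    """Evaluate an ordered ACL for a caller identified by the set `principals`."""
    undecided = set(wanted)
    if not undecided:
        return True
    for ace in acl:
        if ace.who not in principals:
            continue  # entry does not apply to this caller
        hit = undecided & ace.perms
        if not hit:
            continue  # entry only mentions permissions already allowed or not requested
        if not ace.allow:
            return False  # a deny covering a still-unallowed permission is final
        undecided -= hit
        if not undecided:
            return True
    return False  # anything never explicitly allowed is denied
```

Because order matters, putting a deny entry for one user before an allow entry for that user's group is the usual way to carve an exception out of a group grant.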

AppArmor

A path-based mandatory access control system.

Processes are identified by the path of the executable passed to the exec system call. The system controls them when they make system calls such as open, exec, and the like.

These calls receive the paths of the files the process wants to work with.

AppArmor checks whether the process is allowed to access these paths.

If it is not, the system call returns an error.

AppArmor can also control which capabilities a process may use.

Processes are described by text profile files, which together form a database. A learning mode helps create a profile quickly: the process is observed, and every path it uses is recorded in the profile.

```
#include <tunables/global>

profile ping /{usr/,}bin/ping flags=(complain) {
  #include <abstractions/base>
  #include <abstractions/consoles>
  #include <abstractions/nameservice>

  capability net_raw,
  capability setuid,
  network inet raw,
  network inet6 raw,

  /{,usr/}bin/ping mixr,
  /etc/modules.conf r,

  # Site-specific additions and overrides. See local/README for details.
  #include <local/bin.ping>
}
```

As you can see, a profile does not have to describe everything, because it can include abstractions. Note also that the user can easily extend the profile in a separate file (local/bin.ping) without touching the settings shipped with the package.
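The path-based decision can be sketched in a few lines using file rules from the ping profile above. This is a toy model and not how AppArmor works internally; the real parser compiles profiles into a state machine and supports many more rule types.

- {a,b} alternation is expanded into separate globs.
- * matches within one path component, and ** matches across components.
- Access is allowed only if some rule covers both the path and the requested mode letter, so everything else is denied by default.

The class and function names are hypothetical.

```python
import re


def expand_braces(pattern):
    """Expand AppArmor-style {a,b} alternations into plain globs."""
    m = re.search(r"\{([^{}]*)\}", pattern)
    if m is None:
        return [pattern]
    head, tail = pattern[:m.start()], pattern[m.end():]
    result = []
    for alt in m.group(1).split(","):
        result.extend(expand_braces(head + alt + tail))
    return result


def glob_to_regex(glob):
    """'**' matches across '/', '*' matches within one path component."""
    out, i = [], 0
    while i < len(glob):
        if glob.startswith("**", i):
            out.append(".*")
            i += 2
        elif glob[i] == "*":
            out.append("[^/]*")
            i += 1
        else:
            out.append(re.escape(glob[i]))
            i += 1
    return re.compile("".join(out))


class Profile:
    def __init__(self, rules):
        # rules: (path glob, permission letters), e.g. ("/etc/modules.conf", "r")
        self._rules = [
            (glob_to_regex(expanded), set(mode))
            for glob, mode in rules
            for expanded in expand_braces(glob)
        ]

    def allows(self, path, perm):
        # Default deny: some rule must cover both the path and the permission.
        return any(rx.fullmatch(path) is not None and perm in mode for rx, mode in self._rules)
```

With Profile([("/{,usr/}bin/ping", "mixr"), ("/etc/modules.conf", "r")]), reading /etc/modules.conf is allowed. Writing it, or reading /etc/shadow, is refused.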

AppArmor is the default option on many deb-based systems.

It also integrates with firejail, which provides additional isolation.

I use it on my workstations. Occasionally I extend the profile base, fix rules, and send patches upstream.

There is a manual for using it on Debian.

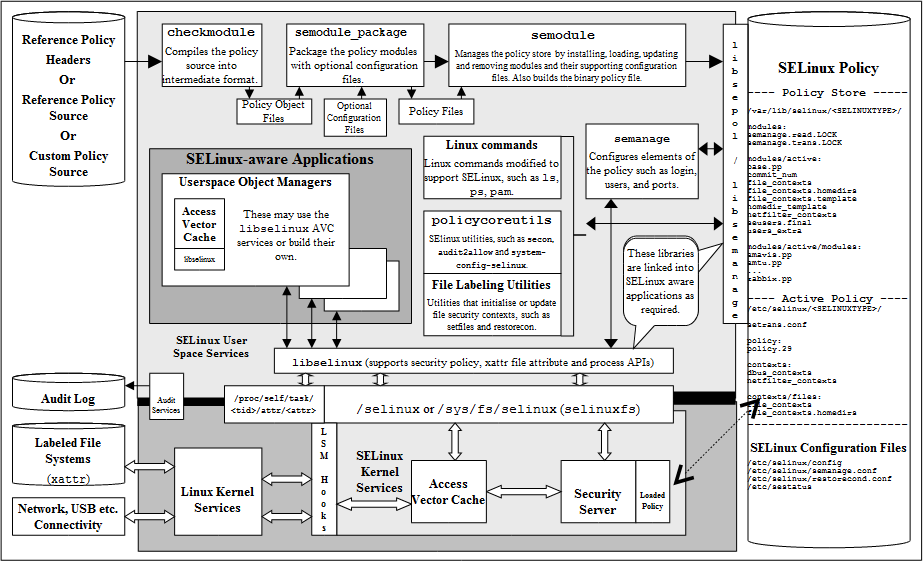

SELinux

A mandatory access control system.

A gift from the NSA. Working with it on Debian is described in the official manual.

The central concept of SELinux is type enforcement.

All users, files, processes, network resources, and so on carry SELinux labels. One of the components of a label is its type.

For example, a browser process may run with the type firefox_t.

SELinux policy simply maps application labels to the resource labels they are allowed to access.

Example:

```
allow firefox_t user_home_t : file { read write };
```

This allows a browser running as firefox_t to read and write files in your home directory that are labeled user_home_t.

Another example:

```
allow user_t user_home_t:file { create read write unlink };
```

This rule allows the user_t type to create, read, write, and delete files of type user_home_t. The user_t type is most likely assigned to a regular user.

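The SELinux user, role, and type (plus an optional MLS range) together make up the security context, which is the label itself.

An important difference from path-based sandboxing systems such as AppArmor is that the security context is stored with the file itself (in extended attributes) rather than derived from its path. It is therefore preserved when the file is moved, including between file systems that support extended attributes.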

- A user in SELinux is not the same as a Linux user. When you switch users with su or sudo, the SELinux user does not change. Usually several Linux users map to a single SELinux user, but a 1:1 mapping is also possible, as is done for root.

- Users can have one or more roles, for example "unprivileged user", "web administrator", or "database administrator". Objects can also have a role, usually object_r. What exactly a role restricts and permits is defined by the policy.

- A type, or domain, is the main means of defining access. A type is a way to classify an application or a resource.

- Every process in the system has a context, or label. It is the attribute used to decide whether the process may access an object. For example, a process may have the context user_u:user_r:user_t, while a file in the user's home directory has the context user_u:object_r:user_home_t. A context consists of four fields, user:role:type:range. The first field is the SELinux user, the second and third are the role and the type, and the fourth is the optional MLS range. For example, files in your home directory are probably labeled user_home_t. This is a type, and the policy treats all files with this type as belonging to your home directory.

- Object classes (object categories), such as dir for directories or file for files, are used within the policy to specify more precisely which kinds of access are allowed. Each object class has its own set of permissions describing the possible kinds of access to an object. For example, file has the permissions create, read, write, and unlink (delete), while the unix_stream_socket class (a UNIX socket) has create, connect, and sendto (send data).
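Type enforcement can be sketched as a lookup over parsed allow rules. Only the type field of each context takes part in the decision, and anything not explicitly allowed is denied.

This is a toy model, not how the kernel evaluates policy, since real policy is compiled into a binary form first. The regular expression covers only the simple allow rule syntax used in the examples in this section. The helper names are hypothetical.

One real detail is worth noting: the MLS range may itself contain colons (for example s0-s0:c0.c1023), so a context string is split at most three times.

```python
import re
from typing import NamedTuple, Optional


class Context(NamedTuple):
    user: str
    role: str
    type: str
    range: Optional[str]


def parse_context(label):
    # The MLS range may contain ':' itself, so split at most 3 times.
    parts = label.split(":", 3)
    if len(parts) < 3:
        raise ValueError(f"not a security context: {label!r}")
    return Context(parts[0], parts[1], parts[2], parts[3] if len(parts) == 4 else None)


# Matches only the simple form: allow SOURCE TARGET : CLASS { PERMS };
RULE = re.compile(r"allow\s+(\w+)\s+(\w+)\s*:\s*(\w+)\s*\{([^}]*)\}\s*;")


def parse_rules(text):
    """Flatten allow rules into (source type, target type, class, permission) tuples."""
    rules = set()
    for src, tgt, cls, perms in RULE.findall(text):
        for perm in perms.split():
            rules.add((src, tgt, cls, perm))
    return rules


def allowed(rules, subject, obj, cls, perm):
    # Only the type fields are compared; anything not explicitly allowed is denied.
    return (parse_context(subject).type, parse_context(obj).type, cls, perm) in rules
```

With the two example rules loaded, a firefox_t process may read a user_home_t file but not unlink it, while user_t may do both.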

As you can see, configuring such a system is tedious and requires meticulousness.

However, there is an extensive policy base, so most typical tasks take a few simple commands. For example, allowing Samba to share home directories comes down to setsebool -P samba_enable_home_dirs on.

As in AppArmor, there is a permissive mode in which applications are allowed to access resources, but every access that would have been denied is logged.

These logs can then be used to build a policy for the application.

The difference is that this mode is usually switched on for the entire system, whereas AppArmor lets you switch it for individual applications. SELinux can also make individual domains permissive with semanage permissive -a.

There is a short introduction to SELinux on Habr.

And a somewhat more detailed one.

For a deeper understanding, read IBM's course.

Grsecurity

Complex, and it never really caught on in Debian. The last time I tried it, it broke the system.

In general, this system (which has long been more than just a kernel patch) is mostly part of the Gentoo legacy. There everything is simple: hardening takes a few commands.

Much like installing the OS itself.

There is a guide on using grsecurity together with PaX on Debian.

Tomoyo

Using Tomoyo on Debian is covered in a short tutorial.

A mandatory access control system based on file paths. It resembles AppArmor but is less widespread. It has been developed since 2003.

The principle is the same: it also has a learning mode and similar tools.

Its configuration files are less structured than AppArmor's, and its base of profiles is smaller.

Little things

Out of old habit, people used to trim /etc/securetty so that root could log in only from certain (usually local) terminals, but nowadays there is little point in this.

Today similar mechanisms are implemented through PAM, and their configuration lives in /etc/security.
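One such PAM mechanism is pam_access, configured in /etc/security/access.conf with lines of the form "permission : users : origins". The sketch below models its matching as I understand it from access.conf(5).

- The first matching line decides, with + granting and - refusing.
- ALL matches anything.
- LOCAL matches any origin without a "." (such as a tty name).
- "A EXCEPT B" matches A but not B.
- If no line matches, access is granted.

It is a simplified, hypothetical model: groups, netgroups and address masks are not handled, and the module must also be enabled in /etc/pam.d.

```python
def _is_local(origin):
    # LOCAL matches any origin string that does not contain a '.'
    return "." not in origin


def _match_list(tokens, item, is_origin):
    """'A B EXCEPT C' matches when item matches A or B but not C."""
    if "EXCEPT" in tokens:
        i = tokens.index("EXCEPT")
        return (_match_list(tokens[:i], item, is_origin)
                and not _match_list(tokens[i + 1:], item, is_origin))
    for tok in tokens:
        if tok == "ALL" or tok == item:
            return True
        if is_origin and tok == "LOCAL" and _is_local(item):
            return True
    return False


def login_permitted(config, user, origin):
    """First matching 'perm : users : origins' line wins; no match means access is granted."""
    for raw in config.splitlines():
        line = raw.split("#", 1)[0].strip()
        fields = line.split(":", 2)
        if len(fields) != 3:
            continue  # blank, comment-only or malformed line
        perm, users, origins = (f.strip() for f in fields)
        if _match_list(users.split(), user, False) and _match_list(origins.split(), origin, True):
            return perm == "+"
    return True
```

The classic securetty replacement is the single line "- : root : ALL EXCEPT LOCAL". Root may then log in from a local terminal but not from a remote host.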

Instead of complex packages such as Samhain and Tripwire, the small debsums utility, which checks the checksums of installed package files, is often enough.
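The core of what debsums does can be sketched as verifying a package's MD5 list. dpkg keeps one per package, typically under /var/lib/dpkg/info/ with a .md5sums suffix. Each line holds a hex digest followed by a path relative to the root directory. The function names and return shape are assumptions, and the real tool also handles configuration files, missing lists and more.

```python
import hashlib
import os


def file_md5(path, chunk=1 << 16):
    h = hashlib.md5()
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(chunk), b""):
            h.update(block)
    return h.hexdigest()


def verify_md5sums(md5sums_text, root="/"):
    """Return (changed, missing): listed paths whose MD5 differs, and paths that no longer exist."""
    changed, missing = [], []
    for line in md5sums_text.splitlines():
        if not line.strip():
            continue
        digest, rel = line.split(None, 1)  # "<md5>  usr/bin/foo"
        path = os.path.join(root, rel)
        if not os.path.exists(path):
            missing.append(rel)
        elif file_md5(path) != digest:
            changed.append(rel)
    return changed, missing
```

The reference sums live on the same disk as the binaries. This kind of check therefore catches accidental or careless modification rather than an attacker with root access, who can rewrite the list as well.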

Conclusion

The article turned out quite long. Although much remains untold, I no longer want to delay its publication.

As always, the configuration files and other materials are available in my GitHub repository, which is gradually being updated.