Behind the Scenes of Kubernetes Networking

- Translation

Translator's note: The author of the original article, Nicolas Leiva, is a Cisco solutions architect who decided to share with his fellow network engineers how Kubernetes networking works under the hood. To do so, he examines its simplest configuration in a cluster, making active use of common sense, his networking knowledge, and standard Linux/Kubernetes utilities. The result is long, but very clear.

Besides the fact that Kelsey Hightower's Kubernetes The Hard Way guide just works (even on AWS!), I liked that the networking was kept clean and simple, which makes it a great opportunity to understand the role of, for example, the Container Network Interface (CNI). That said, Kubernetes networking is not very intuitive, especially for beginners... and do not forget that “there is simply no such thing as container networking”.

Although there are already good materials on this topic (see the links here), I could not find an example that combines everything needed with the output of the commands that network engineers love and hate, showing what actually happens behind the scenes. So I decided to gather information from many sources. I hope this helps you better understand how everything fits together. This knowledge matters not only for testing yourself, but also for making troubleshooting easier. You can follow the example in your own cluster from Kubernetes The Hard Way: all IP addresses and settings are taken from there (as of the commits of May 2018, before Nabla containers were introduced).

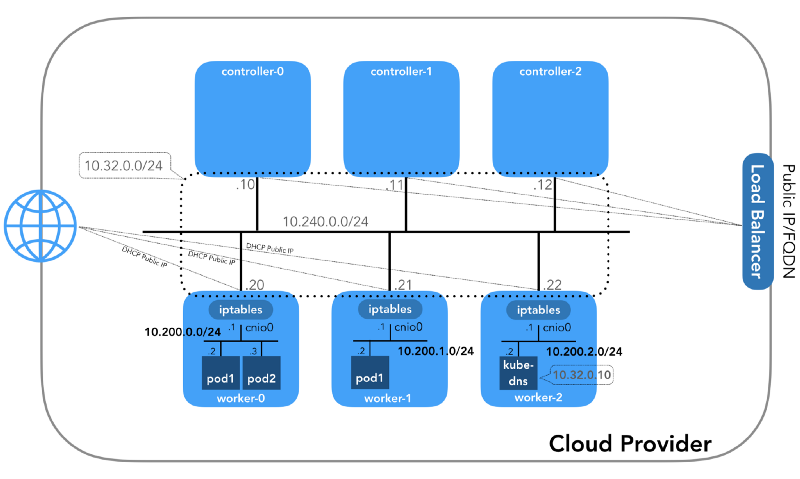

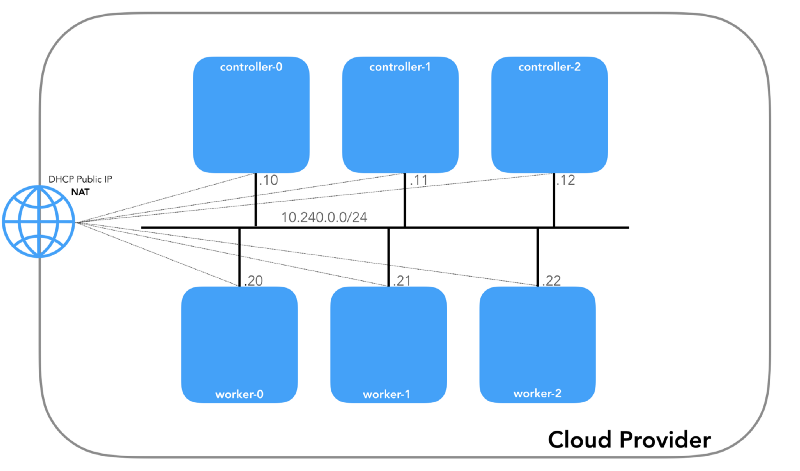

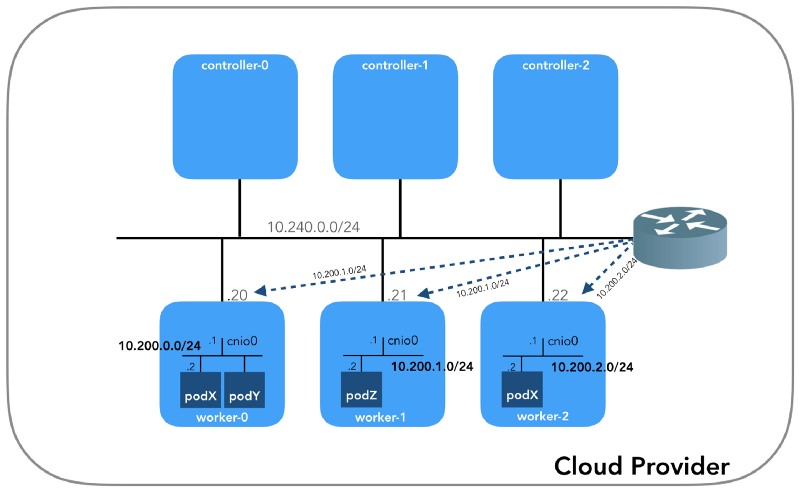

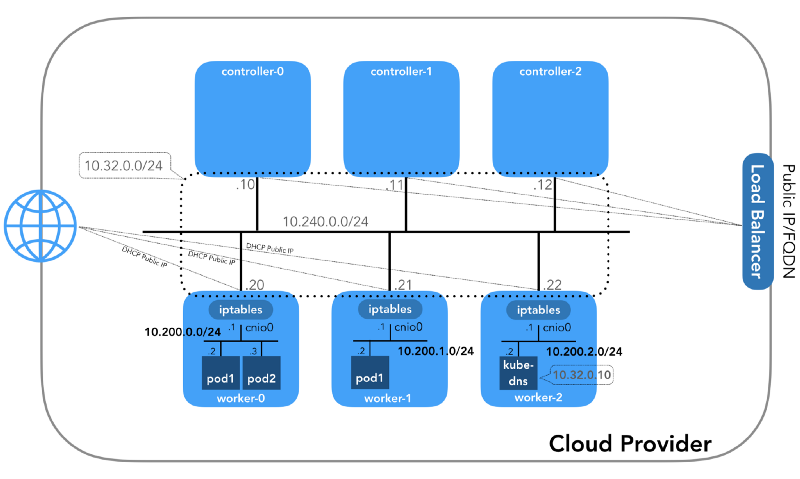

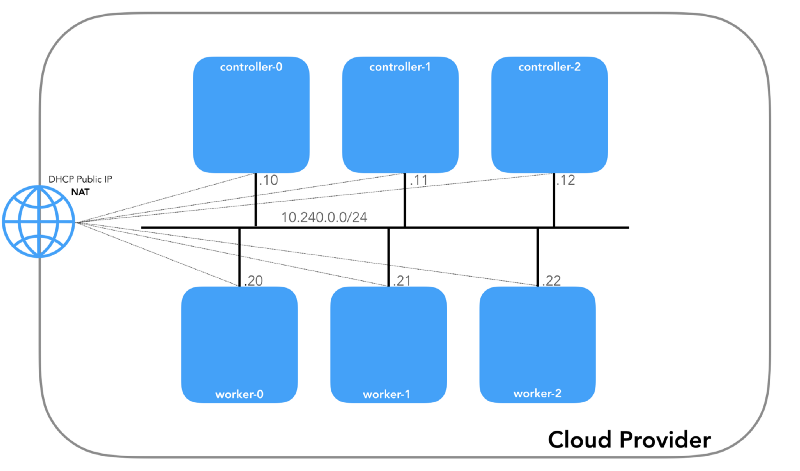

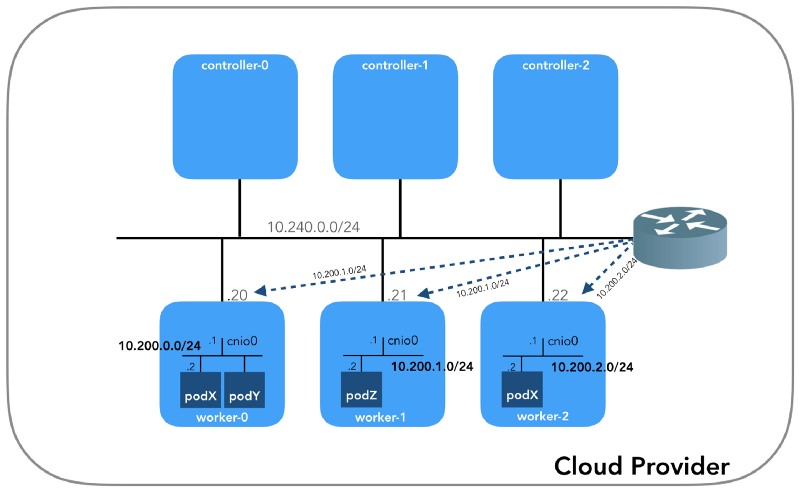

We will start from the end state, with three controllers and three worker nodes:

You may notice that there are at least three private subnets here! A little patience, and we will go through all of them. Keep in mind that although we refer to very specific IP prefixes, they are simply taken from Kubernetes The Hard Way, so they are only locally significant, and you are free to choose any other RFC 1918 address block for your environment. IPv6 will be covered in a separate blog post.


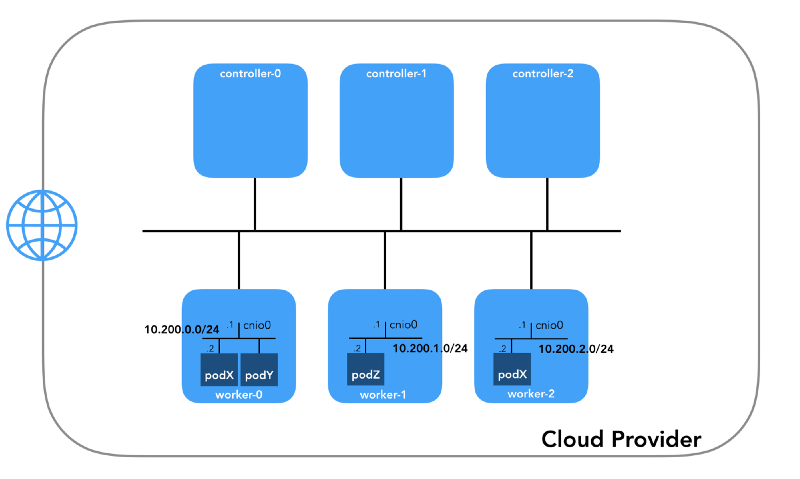

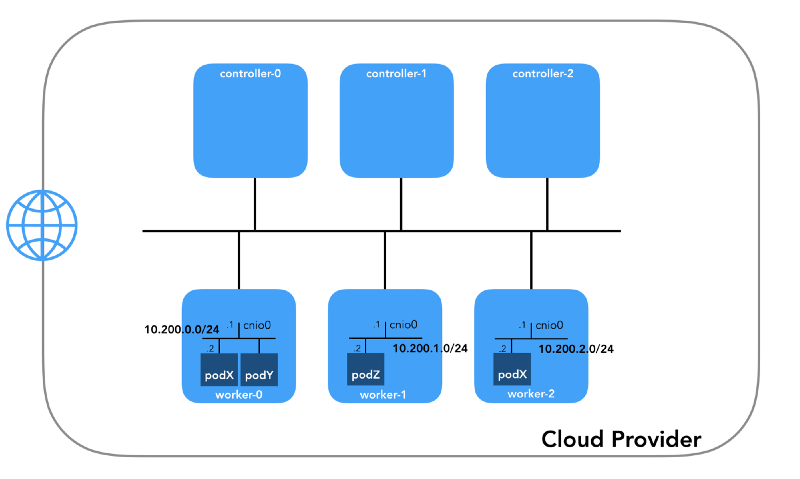


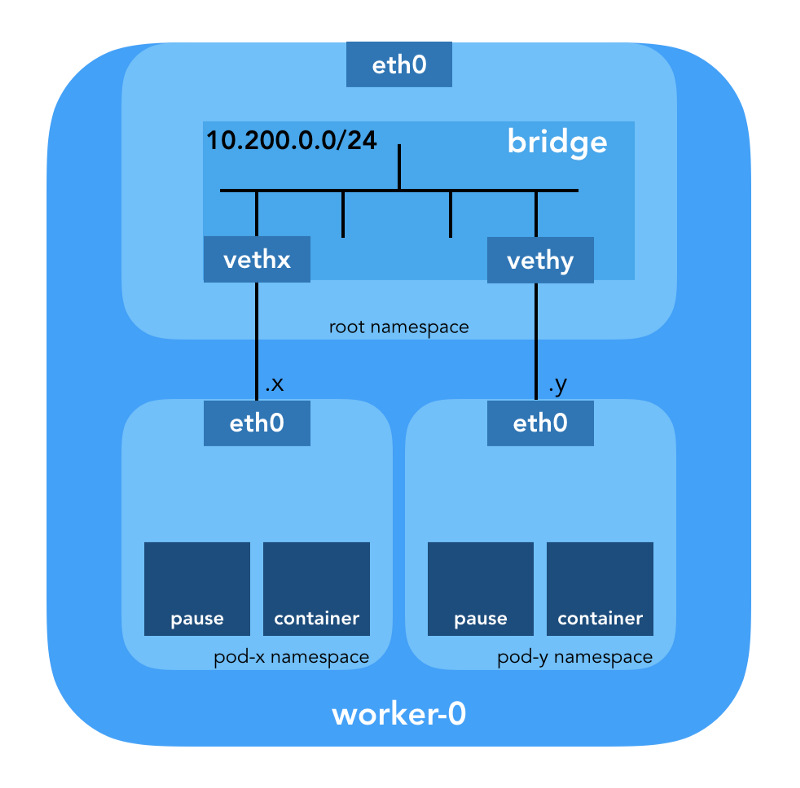

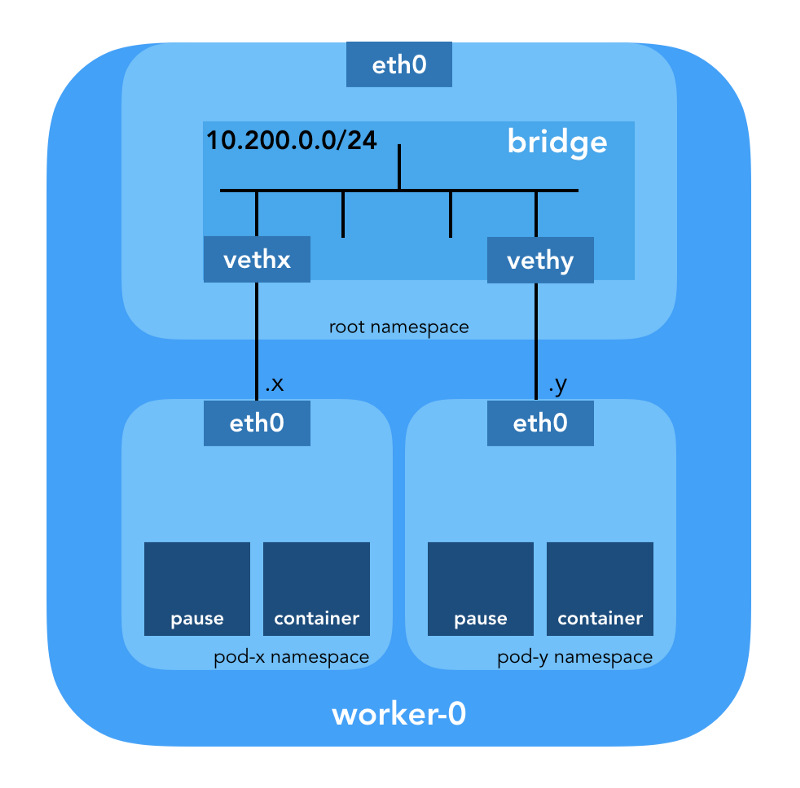


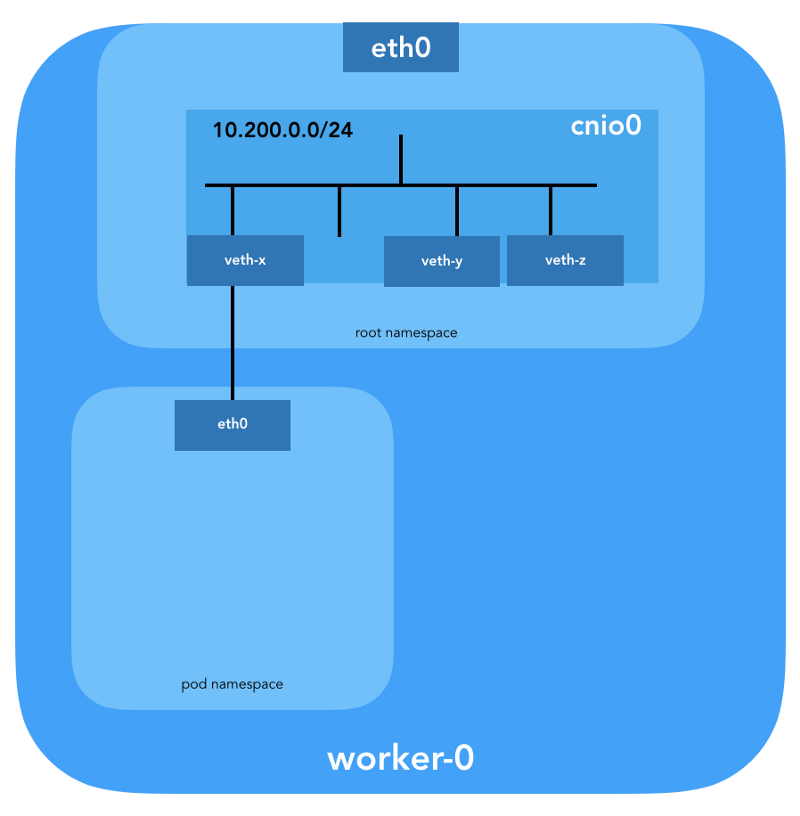

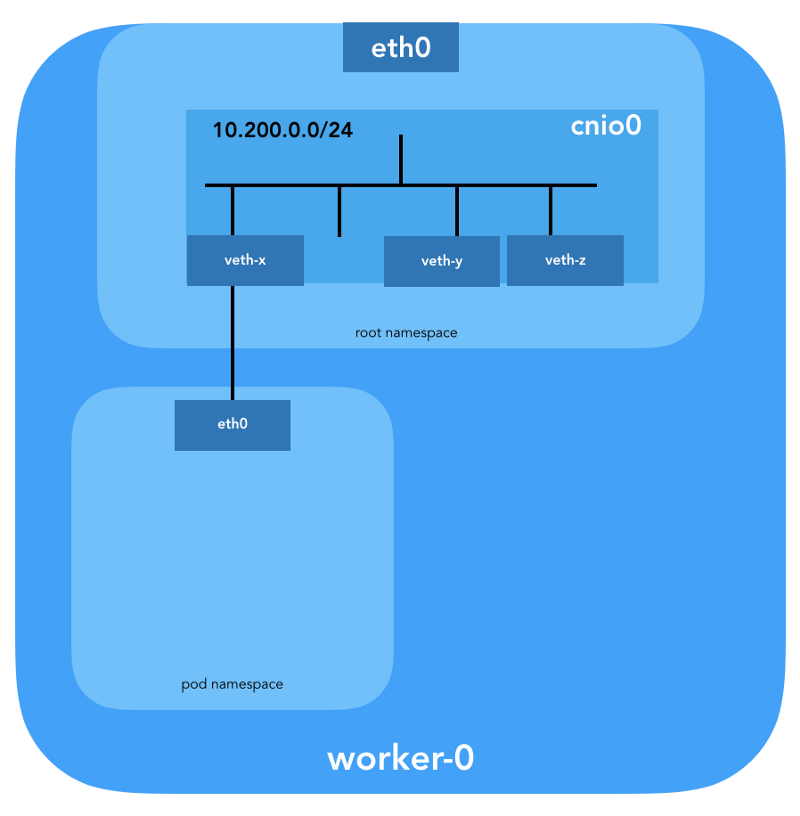


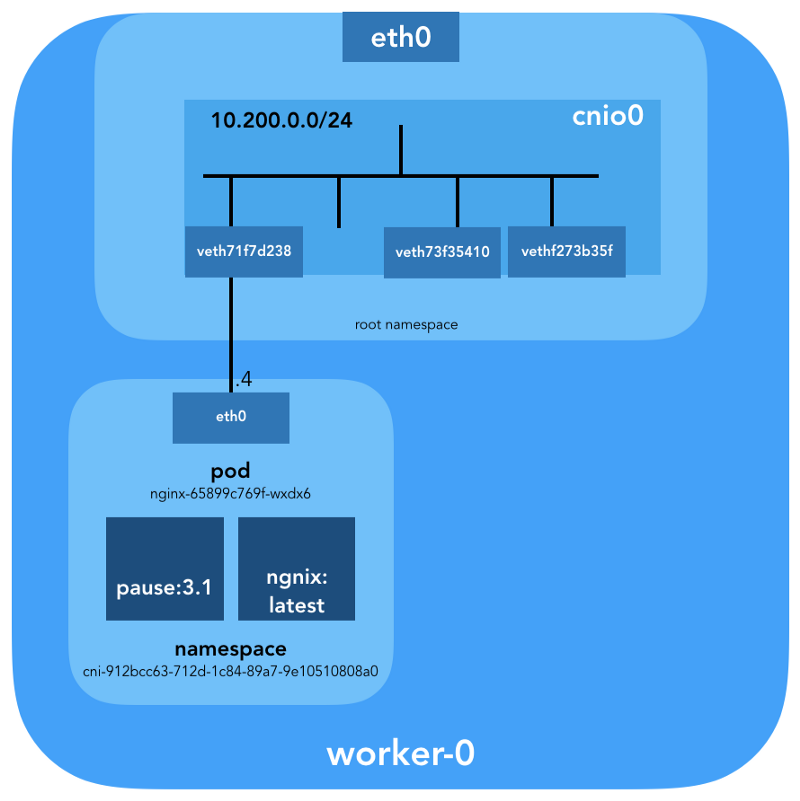


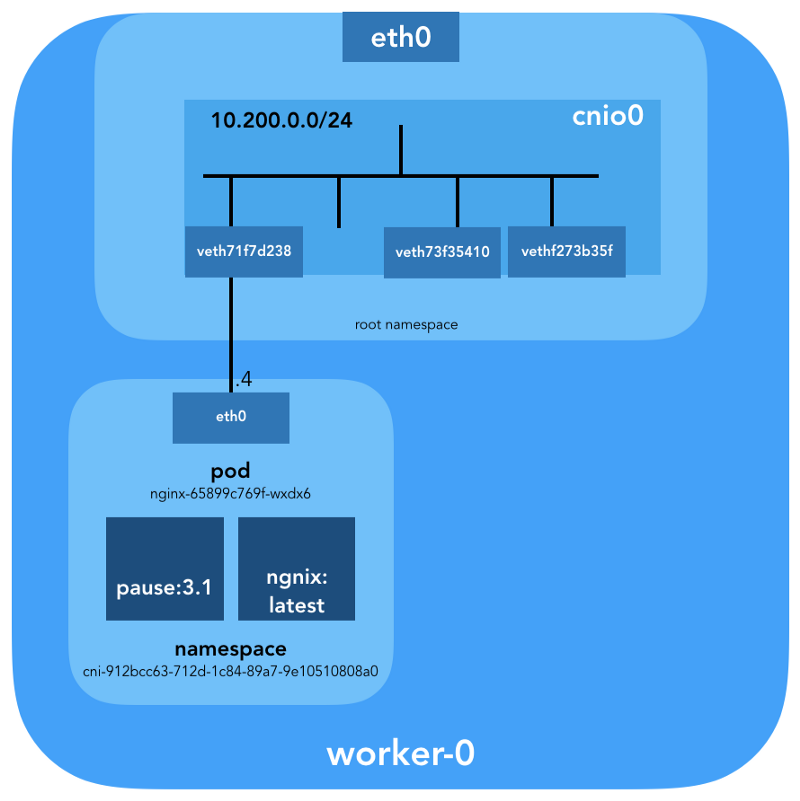



Host Network (10.240.0.0/24)

This is the internal network that all nodes belong to. It is defined by the --private-network-ip flag in GCP or the --private-ip-address option in AWS when allocating compute resources.

Initializing controller nodes in GCP:

for i in 0 1 2; do

gcloud compute instances create controller-${i} \

# ...

--private-network-ip 10.240.0.1${i} \

# ...

done

(controllers_gcp.sh)

Initializing controller nodes in AWS:

for i in 0 1 2; do

declare controller_id${i}=`aws ec2 run-instances \

# ...

--private-ip-address 10.240.0.1${i} \

# ...

done

(controllers_aws.sh)

Each instance will have two IP addresses: a private one from the host network (controllers: 10.240.0.1${i}/24, workers: 10.240.0.2${i}/24) and a public one assigned by the cloud provider, which we will discuss later when we get to NodePorts.

GCP:

$ gcloud compute instances list

NAME ZONE MACHINE_TYPE PREEMPTIBLE INTERNAL_IP EXTERNAL_IP STATUS

controller-0 us-west1-c n1-standard-1 10.240.0.10 35.231.XXX.XXX RUNNING

worker-1 us-west1-c n1-standard-1 10.240.0.21 35.231.XX.XXX RUNNING

...

AWS:

$ aws ec2 describe-instances --query 'Reservations[].Instances[].[Tags[?Key==`Name`].Value[],PrivateIpAddress,PublicIpAddress]' --output text | sed '$!N;s/\n/ /'

10.240.0.10 34.228.XX.XXX controller-0

10.240.0.21 34.173.XXX.XX worker-1

...

All nodes should be able to ping each other if the security policies are correct (and if ping is installed on the host).

Pod network (10.200.0.0/16)

This is the network in which the pods live. Each worker node uses a subnet of this network. In our case, POD_CIDR=10.200.${i}.0/24 for worker-${i}.
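The addressing plan described so far can be summarized in a few lines of shell. This is only a sketch: host_ip and pod_cidr are hypothetical helper names introduced here for illustration, and the prefixes are the ones used in this walkthrough.

```shell
#!/usr/bin/env bash
# Sketch of the addressing plan used in this walkthrough.
# host_ip and pod_cidr are hypothetical helpers, not part of any tool.

# Private host address: controllers get 10.240.0.1<i>, workers 10.240.0.2<i>.
host_ip() {
  local role="$1" i="$2"
  case "$role" in
    controller) echo "10.240.0.1${i}" ;;
    worker)     echo "10.240.0.2${i}" ;;
    *)          echo "unknown role: $role" >&2; return 1 ;;
  esac
}

# Pod subnet carved out of 10.200.0.0/16 for worker-<i>.
pod_cidr() {
  echo "10.200.${1}.0/24"
}

for i in 0 1 2; do
  echo "worker-${i}: host $(host_ip worker "$i"), pods $(pod_cidr "$i")"
done
```

Each worker therefore owns one /24 out of the 10.200.0.0/16 pod network, and its host address identifies which /24 that is.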

To understand how everything is configured, take a step back and look at the Kubernetes network model, which requires the following:

- All containers can communicate with any other containers without using NAT.

- All nodes can communicate with all containers (and vice versa) without using NAT.

- The IP address that a container sees itself as is the same IP address that others see it as.

All of this can be implemented in many ways, and Kubernetes delegates the network setup to a CNI plugin.

“The CNI plugin is responsible for adding a network interface to the container's network namespace (for example, one end of a veth pair) and making the necessary changes on the host (for example, attaching the other end of the veth to a bridge). It should then assign an IP address to the interface and set up the routes consistent with the IP Address Management section by invoking the appropriate IPAM plugin.” (from the Container Network Interface Specification)
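To make the quoted contract more concrete: per the CNI specification, a runtime passes parameters to the plugin in CNI_* environment variables and feeds the network config on stdin. The sketch below only prints such an invocation rather than running it. The container ID, netns path and config file name are illustrative assumptions, and cni_invocation is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: the shape of a CNI plugin call according to the CNI spec.
# Parameters travel in CNI_* environment variables; the JSON network
# config is read from stdin. Nothing is executed here, the command line
# is only printed. IDs and paths are illustrative assumptions.

cni_invocation() {
  local command="$1" container_id="$2" netns_path="$3" plugin="$4" conf="$5"
  printf 'CNI_COMMAND=%s CNI_CONTAINERID=%s CNI_NETNS=%s CNI_IFNAME=eth0 CNI_PATH=/opt/cni/bin /opt/cni/bin/%s < %s\n' \
    "$command" "$container_id" "$netns_path" "$plugin" "$conf"
}

# Print the ADD call for a hypothetical container and namespace.
cni_invocation ADD example-id /var/run/netns/example bridge /etc/cni/net.d/10-bridge.conf
```

The plugin answers on stdout with a JSON result describing the interfaces, addresses and routes it configured.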

Network namespace

“A namespace wraps a global system resource in an abstraction that makes it appear to the processes within the namespace that they have their own isolated instance of the global resource. Changes to the global resource are visible to other processes that are members of the namespace, but are invisible to other processes.” (from the namespaces man page)

Linux provides seven different namespaces (Cgroup, IPC, Network, Mount, PID, User, UTS). Network namespaces (CLONE_NEWNET) define the network resources available to a process: “Each network namespace has its own network devices, IP addresses, IP routing tables, /proc/net directory, port numbers, and so on” (from the article “Namespaces in operation”).

Virtual Ethernet Devices (Veth)

“A virtual network (veth) device pair provides a pipe-like abstraction that can be used to create tunnels between network namespaces, and can be used to create a bridge to a physical network device in another namespace. When a namespace is freed, the veth devices that it contains are destroyed.” (from the network namespaces man page)
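As a rough illustration of the two concepts above, the following sketch wires a fresh network namespace to the host with a veth pair using iproute2. All names (demo-ns, veth-host, veth-pod) and the address are made up for this example. The commands are only printed unless APPLY=1 is set, because actually running them requires root.

```shell
#!/usr/bin/env bash
# Sketch: connecting a network namespace to the host with a veth pair.
# Names and the address are made up for illustration. Commands are only
# printed unless APPLY=1 is set (which needs root privileges).

run() {
  if [ "${APPLY:-0}" = "1" ]; then
    "$@"
  else
    echo "+ $*"
  fi
}

veth_demo() {
  local ns="demo-ns"
  run ip netns add "$ns"                                   # new, empty netns
  run ip link add veth-host type veth peer name veth-pod   # create the pair
  run ip link set veth-pod netns "$ns"                     # move one end inside
  run ip netns exec "$ns" ip addr add 10.200.0.99/24 dev veth-pod
  run ip netns exec "$ns" ip link set veth-pod up
  run ip link set veth-host up                             # host end stays in the root netns
}

veth_demo
```

This is roughly the kind of plumbing a CNI plugin automates for every pod, with the host end additionally attached to a bridge.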

Let's come back down to earth and see how all of this relates to the cluster. First, Kubernetes has several kinds of network plugins, and CNI plugins are one of them (why not CNM?). The kubelet on each node tells the container runtime which network plugin to use. The Container Network Interface (CNI) sits between the container runtime and the network implementation, and only the CNI plugin actually configures the network.

“The CNI plugin is selected by passing the --network-plugin=cni command-line option to the kubelet. The kubelet reads a file from --cni-conf-dir (/etc/cni/net.d by default) and uses the CNI configuration from that file to set up each pod's network.” (from Network Plugin Requirements)
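When the config directory holds more than one file, the kubelet is documented to use the one that comes first in lexicographic order. A minimal sketch of that selection follows. It assumes the usual .conf, .conflist and .json extensions, and first_cni_conf is a hypothetical helper rather than a real kubelet or CNI command.

```shell
#!/usr/bin/env bash
# Sketch: pick the CNI config file that sorts first by name in the conf dir.
# first_cni_conf is a hypothetical helper; the extension list is an assumption.

first_cni_conf() {
  local dir="${1:-/etc/cni/net.d}"
  ls -1 "$dir" 2>/dev/null \
    | grep -E '\.(conf|conflist|json)$' \
    | LC_ALL=C sort \
    | sed -n 1p
}

# Demonstration in a throwaway directory.
demo_dir="$(mktemp -d)"
touch "$demo_dir/99-loopback.conf" "$demo_dir/10-bridge.conf" "$demo_dir/README"
first_cni_conf "$demo_dir"   # prints 10-bridge.conf
rm -rf "$demo_dir"
```

This is why config files conventionally carry a numeric prefix: the prefix decides which network a pod is attached to.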

The actual CNI plugin binaries are located in --cni-bin-dir (/opt/cni/bin by default). Note that the kubelet.service invocation parameters include --network-plugin=cni:

[Service]

ExecStart=/usr/local/bin/kubelet \\

--config=/var/lib/kubelet/kubelet-config.yaml \\

--network-plugin=cni \\

...

First of all, Kubernetes creates a network namespace for the pod, even before invoking any plugins. This is done with a special pause container that “serves as the ‘parent container’ for all the containers of the pod” (from the article “The Almighty Pause Container”). Kubernetes then runs the CNI plugin to attach the pause container to the network. All containers of the pod use the network namespace (netns) of this pause container.

{

"cniVersion": "0.3.1",

"name": "bridge",

"type": "bridge",

"bridge": "cnio0",

"isGateway": true,

"ipMasq": true,

"ipam": {

"type": "host-local",

"ranges": [

[{"subnet": "${POD_CIDR}"}]

],

"routes": [{"dst": "0.0.0.0/0"}]

}

}

The CNI config specifies the bridge plugin, which sets up a Linux software bridge (L2) in the root namespace named cnio0 (the default name is cni0) that acts as a gateway ("isGateway": true).

A veth pair will also be set up to connect the pod to the newly created bridge:

To assign L3 information such as IP addresses, an IPAM plugin (ipam) is called. In this case the host-local type is used, “which stores the state locally on the host filesystem, therefore ensuring uniqueness of IP addresses on a single host” (from the host-local description). The IPAM plugin returns this information to the calling plugin (bridge), so that all routes specified in the config can be set up ("routes": [{"dst": "0.0.0.0/0"}]). If gw is not specified, it is derived from the subnet. The default route is also configured in the pod's network namespace, pointing to the bridge (which is configured with the first IP address of the pod subnet).

And the last important detail: we requested masquerading ("ipMasq": true) of traffic originating from the pod network. We do not really need NAT here, but this is the config in Kubernetes The Hard Way. Therefore, for completeness, I should mention that the iptables entries of the bridge plugin are configured for this particular example. All packets from the pod whose destination is not in the 224.0.0.0/4 range will be NATed, which does not quite meet the requirement that "all containers can communicate with any other containers without using NAT". Well, we will prove why NAT is not needed...
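The statement that the gateway defaults to the first IP address of the pod subnet can be checked with a bit of integer arithmetic. The sketch below is an illustration only: first_ip is a hypothetical helper and handles IPv4 CIDRs in dotted notation.

```shell
#!/usr/bin/env bash
# Sketch: compute the first address of an IPv4 subnet, which is what the
# bridge ends up using as the pod gateway in this setup.
# first_ip is a hypothetical helper.

first_ip() {
  local cidr="$1" addr prefix a b c d ip mask net
  addr="${cidr%/*}"
  prefix="${cidr#*/}"
  IFS=. read -r a b c d <<< "$addr"
  ip=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  net=$(( (ip & mask) + 1 ))   # network address plus one
  echo "$(( (net >> 24) & 255 )).$(( (net >> 16) & 255 )).$(( (net >> 8) & 255 )).$(( net & 255 ))"
}

first_ip "10.200.0.0/24"   # prints 10.200.0.1
```

For worker-1, whose POD_CIDR is 10.200.1.0/24, the same calculation gives 10.200.1.1.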

Pod routing

Now we are ready to set up pods. Let's look at all the network namespaces on one of the worker nodes and analyze one of them after creating the nginx deployment from here. We will use lsns with the -t option to select the desired namespace type (i.e. net):

ubuntu@worker-0:~$ sudo lsns -t net

NS TYPE NPROCS PID USER COMMAND

4026532089 net 113 1 root /sbin/init

4026532280 net 2 8046 root /pause

4026532352 net 4 16455 root /pause

4026532426 net 3 27255 root /pause

Using ls with the -i option, we can find their inode numbers:

ubuntu@worker-0:~$ ls -1i /var/run/netns

4026532352 cni-1d85bb0c-7c61-fd9f-2adc-f6e98f7a58af

4026532280 cni-7cec0838-f50c-416a-3b45-628a4237c55c

4026532426 cni-912bcc63-712d-1c84-89a7-9e10510808a0

You can also list all network namespaces using ip netns:

ubuntu@worker-0:~$ ip netns

cni-912bcc63-712d-1c84-89a7-9e10510808a0 (id: 2)

cni-1d85bb0c-7c61-fd9f-2adc-f6e98f7a58af (id: 1)

cni-7cec0838-f50c-416a-3b45-628a4237c55c (id: 0)

To see all the processes running in the network namespace cni-912bcc63-712d-1c84-89a7-9e10510808a0 (4026532426), you can run, for example, the following command:

ubuntu@worker-0:~$ sudo ls -l /proc/[1-9]*/ns/net | grep 4026532426 | cut -f3 -d"/" | xargs ps -p

PID TTY STAT TIME COMMAND

27255 ? Ss 0:00 /pause

27331 ? Ss 0:00 nginx: master process nginx -g daemon off;

27355 ? S 0:00 nginx: worker process

We can see that, in addition to pause, nginx was started in this pod. The pause container shares the net and ipc namespaces with all the other containers of the pod. Remember the PID of pause, 27255; we will come back to it.

Now let's see what kubectl says about this pod:

$ kubectl get pods -o wide | grep nginx

nginx-65899c769f-wxdx6 1/1 Running 0 5d 10.200.0.4 worker-0

More details:

$ kubectl describe pods nginx-65899c769f-wxdx6

Name: nginx-65899c769f-wxdx6

Namespace: default

Node: worker-0/10.240.0.20

Start Time: Thu, 05 Jul 2018 14:20:06 -0400

Labels: pod-template-hash=2145573259

run=nginx

Annotations:

Status: Running

IP: 10.200.0.4

Controlled By: ReplicaSet/nginx-65899c769f

Containers:

nginx:

Container ID: containerd://4c0bd2e2e5c0b17c637af83376879c38f2fb11852921b12413c54ba49d6983c7

Image: nginx

...

We can see the pod name, nginx-65899c769f-wxdx6, and the ID of one of its containers (nginx), but nothing about pause so far. Let's dig deeper into the worker node to correlate all the data. Remember that Kubernetes The Hard Way does not use Docker, so to get details about a container we turn to ctr, the containerd command-line utility (see also the article “Kubernetes containerd integration, replacing Docker, is ready for production”; translator's note):

ubuntu@worker-0:~$ sudo ctr namespaces ls

NAME LABELS

k8s.io

Knowing the containerd namespace (k8s.io), we can get the ID of the nginx container:

ubuntu@worker-0:~$ sudo ctr -n k8s.io containers ls | grep nginx

4c0bd2e2e5c0b17c637af83376879c38f2fb11852921b12413c54ba49d6983c7 docker.io/library/nginx:latest io.containerd.runtime.v1.linux

... and of pause as well:

ubuntu@worker-0:~$ sudo ctr -n k8s.io containers ls | grep pause

0866803b612f2f55e7b6b83836bde09bd6530246239b7bde1e49c04c7038e43a k8s.gcr.io/pause:3.1 io.containerd.runtime.v1.linux

21640aea0210b320fd637c22ff93b7e21473178de0073b05de83f3b116fc8834 k8s.gcr.io/pause:3.1 io.containerd.runtime.v1.linux

d19b1b1c92f7cc90764d4f385e8935d121bca66ba8982bae65baff1bc2841da6 k8s.gcr.io/pause:3.1 io.containerd.runtime.v1.linux

The ID of the nginx container, ending in …983c7, matches what we got from kubectl. Let's see if we can figure out which pause container belongs to the nginx pod:

ubuntu@worker-0:~$ sudo ctr -n k8s.io task ls

TASK PID STATUS

...

d19b1b1c92f7cc90764d4f385e8935d121bca66ba8982bae65baff1bc2841da6 27255 RUNNING

4c0bd2e2e5c0b17c637af83376879c38f2fb11852921b12413c54ba49d6983c7 27331 RUNNING

Remember that the processes with PIDs 27331 and 27355 run in the network namespace cni-912bcc63-712d-1c84-89a7-9e10510808a0?

ubuntu@worker-0:~$ sudo ctr -n k8s.io containers info d19b1b1c92f7cc90764d4f385e8935d121bca66ba8982bae65baff1bc2841da6

{

"ID": "d19b1b1c92f7cc90764d4f385e8935d121bca66ba8982bae65baff1bc2841da6",

"Labels": {

"io.cri-containerd.kind": "sandbox",

"io.kubernetes.pod.name": "nginx-65899c769f-wxdx6",

"io.kubernetes.pod.namespace": "default",

"io.kubernetes.pod.uid": "0b35e956-8080-11e8-8aa9-0a12b8818382",

"pod-template-hash": "2145573259",

"run": "nginx"

},

"Image": "k8s.gcr.io/pause:3.1",

...

... and:

ubuntu@worker-0:~$ sudo ctr -n k8s.io containers info 4c0bd2e2e5c0b17c637af83376879c38f2fb11852921b12413c54ba49d6983c7

{

"ID": "4c0bd2e2e5c0b17c637af83376879c38f2fb11852921b12413c54ba49d6983c7",

"Labels": {

"io.cri-containerd.kind": "container",

"io.kubernetes.container.name": "nginx",

"io.kubernetes.pod.name": "nginx-65899c769f-wxdx6",

"io.kubernetes.pod.namespace": "default",

"io.kubernetes.pod.uid": "0b35e956-8080-11e8-8aa9-0a12b8818382"

},

"Image": "docker.io/library/nginx:latest",

...

Now we know exactly which containers are running in this pod (nginx-65899c769f-wxdx6) and network namespace (cni-912bcc63-712d-1c84-89a7-9e10510808a0):

- nginx (ID: 4c0bd2e2e5c0b17c637af83376879c38f2fb11852921b12413c54ba49d6983c7);

- pause (ID: d19b1b1c92f7cc90764d4f385e8935d121bca66ba8982bae65baff1bc2841da6).
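The namespace-to-process mapping that was done earlier with ls, grep and cut can also be sketched as a small function that compares the /proc/PID/ns/net symlinks directly. pids_in_netns is a hypothetical helper name. Seeing processes of other users generally requires root.

```shell
#!/usr/bin/env bash
# Sketch: list the PIDs whose network namespace has a given inode number,
# by reading the /proc/<pid>/ns/net symlinks. Linux only.
# pids_in_netns is a hypothetical helper.

pids_in_netns() {
  local inode="$1" link pid
  for link in /proc/[0-9]*/ns/net; do
    # The symlink target looks like "net:[4026532426]".
    if [ "$(readlink "$link" 2>/dev/null)" = "net:[${inode}]" ]; then
      pid="${link#/proc/}"
      echo "${pid%%/*}"
    fi
  done
}

# Example: list the processes that share the current shell's namespace.
if [ -e "/proc/$$/ns/net" ]; then
  self_inode="$(readlink "/proc/$$/ns/net" | tr -dc '0-9')"
  pids_in_netns "$self_inode"
fi
```

Run as root on worker-0, calling it with 4026532426 would be expected to list the pause and nginx PIDs found above, as long as that pod still exists.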

How is this pod (nginx-65899c769f-wxdx6) connected to the network? We will use the PID of pause obtained earlier, 27255, to run commands in its network namespace (cni-912bcc63-712d-1c84-89a7-9e10510808a0):

ubuntu@worker-0:~$ sudo ip netns identify 27255

cni-912bcc63-712d-1c84-89a7-9e10510808a0

For this we will use nsenter with the -t option, which specifies the target PID, and -n without a file argument, to enter the network namespace of the target process (27255). Here is what ip link show reports:

ubuntu@worker-0:~$ sudo nsenter -t 27255 -n ip link show

1: lo: mtu 65536 qdisc noqueue state UNKNOWN mode DEFAULT group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

3: eth0@if7: mtu 1500 qdisc noqueue state UP mode DEFAULT group default

link/ether 0a:58:0a:c8:00:04 brd ff:ff:ff:ff:ff:ff link-netnsid 0

... and ifconfig eth0:

ubuntu@worker-0:~$ sudo nsenter -t 27255 -n ifconfig eth0

eth0: flags=4163 mtu 1500

inet 10.200.0.4 netmask 255.255.255.0 broadcast 0.0.0.0

inet6 fe80::2097:51ff:fe39:ec21 prefixlen 64 scopeid 0x20

ether 0a:58:0a:c8:00:04 txqueuelen 0 (Ethernet)

RX packets 540 bytes 42247 (42.2 KB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 177 bytes 16530 (16.5 KB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

This confirms that the IP address obtained earlier through kubectl get pod is configured on the pod's eth0 interface. This interface is part of a veth pair, one end of which is in the pod and the other in the root namespace. To find the interface at the other end, we will use ethtool:

ubuntu@worker-0:~$ sudo ip netns exec cni-912bcc63-712d-1c84-89a7-9e10510808a0 ethtool -S eth0

NIC statistics:

peer_ifindex: 7

We see that the ifindex of the peer is 7. Let's verify that it is in the root namespace. This can be done with ip link:

ubuntu@worker-0:~$ ip link | grep '^7:'

7: veth71f7d238@if3: mtu 1500 qdisc noqueue master cnio0 state UP mode DEFAULT group default

To be completely sure, let's check:

ubuntu@worker-0:~$ sudo cat /sys/class/net/veth71f7d238/ifindex

7

Great, now everything about the virtual link is clear. Using brctl, let's see what else is connected to the Linux bridge:

ubuntu@worker-0:~$ brctl show cnio0

bridge name bridge id STP enabled interfaces

cnio0 8000.0a580ac80001 no veth71f7d238

veth73f35410

vethf273b35f

So, the picture is as follows:

Routing check

How do we actually forward traffic? Let's look at the routing table in the pod's network namespace:

ubuntu@worker-0:~$ sudo ip netns exec cni-912bcc63-712d-1c84-89a7-9e10510808a0 ip route show

default via 10.200.0.1 dev eth0

10.200.0.0/24 dev eth0 proto kernel scope link src 10.200.0.4

At least we know how to reach the root namespace (default via 10.200.0.1). Now let's look at the host routing table:

ubuntu@worker-0:~$ ip route list

default via 10.240.0.1 dev eth0 proto dhcp src 10.240.0.20 metric 100

10.200.0.0/24 dev cnio0 proto kernel scope link src 10.200.0.1

10.240.0.0/24 dev eth0 proto kernel scope link src 10.240.0.20

10.240.0.1 dev eth0 proto dhcp scope link src 10.240.0.20 metric 100

We know how to send packets to the VPC router (a VPC has an implicit router, which usually has the second address of the subnet's primary IP range). Now, does the VPC router know how to reach each pod network? No, it does not, so the routes are expected to be configured either by the CNI plugin or manually (as in the guide). Apparently, the AWS CNI plugin does exactly that for us on AWS. Remember that there are many CNI plugins, and we are looking at an example of the simplest network configuration.
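The routing decision the VPC router has to make in this setup is simple enough to sketch: each pod subnet 10.200.i.0/24 is reached through worker-i at 10.240.0.2i. The function below is an illustration under that assumption, with three workers as in this walkthrough. next_hop_for_pod is a hypothetical name and does not correspond to any cloud CLI.

```shell
#!/usr/bin/env bash
# Sketch: which worker should receive traffic for a given pod IP, assuming
# pod subnet 10.200.<i>.0/24 lives on worker-<i> (10.240.0.2<i>), i in 0..2.
# next_hop_for_pod is a hypothetical helper.

next_hop_for_pod() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  if [ "$a" = "10" ] && [ "$b" = "200" ] && [ "$c" -ge 0 ] && [ "$c" -le 2 ]; then
    echo "10.240.0.2${c}"
  else
    echo "no route for $1" >&2
    return 1
  fi
}

next_hop_for_pod 10.200.1.15   # prints 10.240.0.21
```

Whether these routes are created by hand or by a CNI plugin, the resulting table has one entry per worker.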

Deep dive into NAT

Using the kubectl create -f busybox.yaml command, create two identical busybox containers with a Replication Controller:

apiVersion: v1

kind: ReplicationController

metadata:

  name: busybox0

  labels:

    app: busybox0

spec:

  replicas: 2

  selector:

    app: busybox0

  template:

    metadata:

      name: busybox0

      labels:

        app: busybox0

    spec:

      containers:

      - image: busybox

        command:

          - sleep

          - "3600"

        imagePullPolicy: IfNotPresent

        name: busybox

      restartPolicy: Always

(busybox.yaml)

We get:

$ kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE

busybox0-g6pww 1/1 Running 0 4s 10.200.1.15 worker-1

busybox0-rw89s 1/1 Running 0 4s 10.200.0.21 worker-0

...

Pings from one container to the other should succeed:

$ kubectl exec -it busybox0-rw89s -- ping -c 2 10.200.1.15

PING 10.200.1.15 (10.200.1.15): 56 data bytes

64 bytes from 10.200.1.15: seq=0 ttl=62 time=0.528 ms

64 bytes from 10.200.1.15: seq=1 ttl=62 time=0.440 ms

--- 10.200.1.15 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.440/0.484/0.528 ms

To follow the traffic, you can look at the packets with tcpdump or conntrack:

ubuntu@worker-0:~$ sudo conntrack -L | grep 10.200.1.15

icmp 1 29 src=10.200.0.21 dst=10.200.1.15 type=8 code=0 id=1280 src=10.200.1.15 dst=10.240.0.20 type=0 code=0 id=1280 mark=0 use=1

The pod's source IP, 10.200.0.21, is translated to the host's IP address, 10.240.0.20.
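A conntrack entry lists the original tuple first and the expected reply tuple second, so NAT shows up as a mismatch between the two. The sketch below classifies a single line of conntrack -L output passed in as text. nat_kind is a hypothetical helper, and it assumes that src= precedes dst= within each tuple, as in the output above.

```shell
#!/usr/bin/env bash
# Sketch: classify one conntrack entry by comparing the original tuple
# (first src=/dst= pair) with the reply tuple (second pair).
# nat_kind is a hypothetical helper working on plain text.

nat_kind() {
  awk '{
    n = 0
    for (i = 1; i <= NF; i++) {
      if ($i ~ /^src=/) { src[++n] = substr($i, 5) }
      if ($i ~ /^dst=/) { dst[n] = substr($i, 5) }
    }
    snat = (src[1] != dst[2])   # the reply is addressed to someone other than the sender
    dnat = (dst[1] != src[2])   # the reply comes from someone other than the original target
    if (snat && dnat) print "SNAT+DNAT"
    else if (snat)    print "SNAT"
    else if (dnat)    print "DNAT"
    else              print "none"
  }' <<< "$1"
}

# The entry seen on worker-0 above: the source was rewritten to 10.240.0.20.
nat_kind 'icmp 1 29 src=10.200.0.21 dst=10.200.1.15 type=8 code=0 id=1280 src=10.200.1.15 dst=10.240.0.20 type=0 code=0 id=1280 mark=0 use=1'
```

For the entry above this prints SNAT, which matches the masquerading requested with "ipMasq": true.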

ubuntu@worker-1:~$ sudo conntrack -L | grep 10.200.1.15

icmp 1 28 src=10.240.0.20 dst=10.200.1.15 type=8 code=0 id=1280 src=10.200.1.15 dst=10.240.0.20 type=0 code=0 id=1280 mark=0 use=1

In iptables, you can see that the counters are increasing:

ubuntu@worker-0:~$ sudo iptables -t nat -Z POSTROUTING -L -v

Chain POSTROUTING (policy ACCEPT 0 packets, 0 bytes)

pkts bytes target prot opt in out source destination

...

5 324 CNI-be726a77f15ea47ff32947a3 all -- any any 10.200.0.0/24 anywhere /* name: "bridge" id: "631cab5de5565cc432a3beca0e2aece0cef9285482b11f3eb0b46c134e457854" */

Zeroing chain `POSTROUTING'

On the other hand, if you remove "ipMasq": true from the CNI plugin configuration, you will see the following (this is done purely for educational purposes; we do not recommend changing the config on a running cluster!):

$ kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE

busybox0-2btxn 1/1 Running 0 16s 10.200.0.15 worker-0

busybox0-dhpx8 1/1 Running 0 16s 10.200.1.13 worker-1

...

Ping should still work:

$ kubectl exec -it busybox0-2btxn -- ping -c 2 10.200.1.13

PING 10.200.1.6 (10.200.1.6): 56 data bytes

64 bytes from 10.200.1.6: seq=0 ttl=62 time=0.515 ms

64 bytes from 10.200.1.6: seq=1 ttl=62 time=0.427 ms

--- 10.200.1.6 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.427/0.471/0.515 ms

And in this case, without NAT:

ubuntu@worker-0:~$ sudo conntrack -L | grep 10.200.1.13

icmp 1 29 src=10.200.0.15 dst=10.200.1.13 type=8 code=0 id=1792 src=10.200.1.13 dst=10.200.0.15 type=0 code=0 id=1792 mark=0 use=1

So, we have verified that "all containers can communicate with any other containers without using NAT".

ubuntu@worker-1:~$ sudo conntrack -L | grep 10.200.1.13

icmp 1 27 src=10.200.0.15 dst=10.200.1.13 type=8 code=0 id=1792 src=10.200.1.13 dst=10.200.0.15 type=0 code=0 id=1792 mark=0 use=1

Cluster Network (10.32.0.0/24)

You may have noticed in the busybox example that the IP addresses allocated to the busybox pods were different each time. What if we wanted to make these containers reachable from other pods? We could use the pods' current IP addresses, but they will change. For this reason, you need to configure a Service resource, which proxies requests to a set of short-lived pods.

“A Service in Kubernetes is an abstraction which defines a logical set of Pods and a policy by which to access them.” (from the Kubernetes Services documentation)

There are various ways to expose a service; the default type is ClusterIP, which assigns an IP address from the cluster CIDR block (i.e. reachable only from within the cluster). One such example is the DNS Cluster Add-on configured in Kubernetes The Hard Way.

# ...

apiVersion: v1

kind: Service

metadata:

  name: kube-dns

  namespace: kube-system

  labels:

    k8s-app: kube-dns

    kubernetes.io/cluster-service: "true"

    addonmanager.kubernetes.io/mode: Reconcile

    kubernetes.io/name: "KubeDNS"

spec:

  selector:

    k8s-app: kube-dns

  clusterIP: 10.32.0.10

  ports:

  - name: dns

    port: 53

    protocol: UDP

  - name: dns-tcp

    port: 53

    protocol: TCP

# ...

(kube-dns.yaml)

kubectl shows that the Service keeps track of the endpoints and performs the translation to them:

$ kubectl -n kube-system describe services

...

Selector: k8s-app=kube-dns

Type: ClusterIP

IP: 10.32.0.10

Port: dns 53/UDP

TargetPort: 53/UDP

Endpoints: 10.200.0.27:53

Port: dns-tcp 53/TCP

TargetPort: 53/TCP

Endpoints: 10.200.0.27:53

...

How exactly? Again, iptables. Let's go through the rules created for this example. The complete list can be seen with the iptables-save command.

As soon as packets are generated by a process (OUTPUT) or arrive on a network interface (PREROUTING), they pass through the following iptables chains:

-A PREROUTING -m comment --comment "kubernetes service portals" -j KUBE-SERVICES

-A OUTPUT -m comment --comment "kubernetes service portals" -j KUBE-SERVICES

The following targets match TCP packets sent to port 53 at 10.32.0.10 and translate them to the destination 10.200.0.27, port 53:

-A KUBE-SERVICES -d 10.32.0.10/32 -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp cluster IP" -m tcp --dport 53 -j KUBE-SVC-ERIFXISQEP7F7OF4

-A KUBE-SVC-ERIFXISQEP7F7OF4 -m comment --comment "kube-system/kube-dns:dns-tcp" -j KUBE-SEP-32LPCMGYG6ODGN3H

-A KUBE-SEP-32LPCMGYG6ODGN3H -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp" -m tcp -j DNAT --to-destination 10.200.0.27:53

The same for UDP packets (destination 10.32.0.10:53 → 10.200.0.27:53):

-A KUBE-SERVICES -d 10.32.0.10/32 -p udp -m comment --comment "kube-system/kube-dns:dns cluster IP" -m udp --dport 53 -j KUBE-SVC-TCOU7JCQXEZGVUNU

-A KUBE-SVC-TCOU7JCQXEZGVUNU -m comment --comment "kube-system/kube-dns:dns" -j KUBE-SEP-LRUTK6XRXU43VLIG

-A KUBE-SEP-LRUTK6XRXU43VLIG -p udp -m comment --comment "kube-system/kube-dns:dns" -m udp -j DNAT --to-destination 10.200.0.27:53

There are other types of Services in Kubernetes. In particular, Kubernetes The Hard Way covers NodePort; see Smoke Test: Services.

kubectl expose deployment nginx --port 80 --type NodePort

NodePort exposes the service on each node's IP address at a static port (called the NodePort). A NodePort service can also be reached from outside the cluster. You can check the allocated port (31088 in this case) with kubectl:

$ kubectl describe services nginx

...

Type: NodePort

IP: 10.32.0.53

Port: 80/TCP

TargetPort: 80/TCP

NodePort: 31088/TCP

Endpoints: 10.200.1.18:80

...

The pod is now reachable from the Internet at http://${EXTERNAL_IP}:31088/. Here EXTERNAL_IP is the public IP address of any worker instance. In this example I used the public IP address of worker-0. The request is received by the host with internal IP address 10.240.0.20 (the cloud provider takes care of the public NAT), but the service actually runs on another host (worker-1, as can be seen from the endpoint IP address, 10.200.1.18):

ubuntu@worker-0:~$ sudo conntrack -L | grep 31088

tcp 6 86397 ESTABLISHED src=173.38.XXX.XXX dst=10.240.0.20 sport=30303 dport=31088 src=10.200.1.18 dst=10.240.0.20 sport=80 dport=30303 [ASSURED] mark=0 use=1

The packet is forwarded from worker-0 to worker-1, where it finds its destination:

ubuntu@worker-1:~$ sudo conntrack -L | grep 80

tcp 6 86392 ESTABLISHED src=10.240.0.20 dst=10.200.1.18 sport=14802 dport=80 src=10.200.1.18 dst=10.240.0.20 sport=80 dport=14802 [ASSURED] mark=0 use=1

Is this setup ideal? Perhaps not, but it works. In this case, the programmed iptables rules are as follows:

-A KUBE-NODEPORTS -p tcp -m comment --comment "default/nginx:" -m tcp --dport 31088 -j KUBE-SVC-4N57TFCL4MD7ZTDA

-A KUBE-SVC-4N57TFCL4MD7ZTDA -m comment --comment "default/nginx:" -j KUBE-SEP-UGTFMET44DQG7H7H

-A KUBE-SEP-UGTFMET44DQG7H7H -p tcp -m comment --comment "default/nginx:" -m tcp -j DNAT --to-destination 10.200.1.18:80

In other words, the destination address of packets sent to port 31088 is translated to 10.200.1.18. The port is also translated, from 31088 to 80.
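The chain of jumps above (KUBE-NODEPORTS, then KUBE-SVC-..., then KUBE-SEP-..., then DNAT) can be followed mechanically in iptables-save style text. The following is a simplified sketch. resolve_dnat is a hypothetical helper, it takes the first jump in every chain, so it ignores the probabilistic load balancing across several endpoints, and it matches the entry rule by destination port only.

```shell
#!/usr/bin/env bash
# Sketch: follow -j jumps from an entry chain until a DNAT rule is found
# and print its --to-destination. Reads iptables-save style rules on stdin.
# resolve_dnat is a hypothetical helper; only the first jump per chain is taken.

resolve_dnat() {
  local chain="$1" dport="$2"
  awk -v chain="$chain" -v dport="$dport" '
    # Group every appended rule under its chain name.
    $1 == "-A" { rules[$2] = rules[$2] $0 "\n" }
    END {
      hops = 0
      while (hops++ < 10) {
        n = split(rules[chain], lines, "\n")
        next_chain = ""
        for (i = 1; i <= n; i++) {
          line = lines[i]
          if (line == "") continue
          # In the entry chain, only consider the rule for the requested port.
          if (hops == 1 && line !~ ("--dport " dport " ")) continue
          if (match(line, /--to-destination [^ ]+/)) {
            print substr(line, RSTART + 17, RLENGTH - 17)
            exit
          }
          if (match(line, /-j [^ ]+/)) {
            next_chain = substr(line, RSTART + 3, RLENGTH - 3)
            break
          }
        }
        if (next_chain == "") exit 1
        chain = next_chain
      }
      exit 1
    }'
}

# The NodePort rules shown above, resolved for port 31088.
resolve_dnat KUBE-NODEPORTS 31088 <<'EOF'
-A KUBE-NODEPORTS -p tcp -m comment --comment "default/nginx:" -m tcp --dport 31088 -j KUBE-SVC-4N57TFCL4MD7ZTDA
-A KUBE-SVC-4N57TFCL4MD7ZTDA -m comment --comment "default/nginx:" -j KUBE-SEP-UGTFMET44DQG7H7H
-A KUBE-SEP-UGTFMET44DQG7H7H -p tcp -m comment --comment "default/nginx:" -m tcp -j DNAT --to-destination 10.200.1.18:80
EOF
```

For these rules the sketch prints 10.200.1.18:80, the endpoint reported by kubectl describe services nginx.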

We have not covered another service type, LoadBalancer, which makes the service publicly available through the cloud provider's load balancer, but the article is already long enough.

Conclusion

It might seem that there is a lot of information, but we only touched the tip of the iceberg. In the future I’m going to talk about IPv6, IPVS, eBPF and a couple of interesting current CNI plugins.

PS from the translator

Read also in our blog:

- “Illustrated Guide to Networking in Kubernetes”;

- “Comparison of network performance for Kubernetes”;

- “Experiments with kube-proxy and host inaccessibility in Kubernetes”;

- “Improving the reliability of Kubernetes: how to quickly notice that a node has gone down”;

- “Play with Kubernetes, a service for hands-on acquaintance with K8s”;

- “Our experience with Kubernetes in small projects” (video talk that includes an introduction to the technical internals of Kubernetes);

- “Container Networking Interface (CNI), the network interface and standard for Linux containers”.