The past performance gains: the end of the frequency race, multi-core and why progress is stuck in one place

KDPV: one of Intel’s attempts to create a demotivator :)

Almost 10 years ago, Intel announced the closure of Tejas and Jayhawk projects - the successors of the NetBurst (Pentium 4) architecture in the direction of increasing the clock frequency. This event actually marked the transition to the era of multi-core processors. Let's try to figure out what caused this and what brought the results.

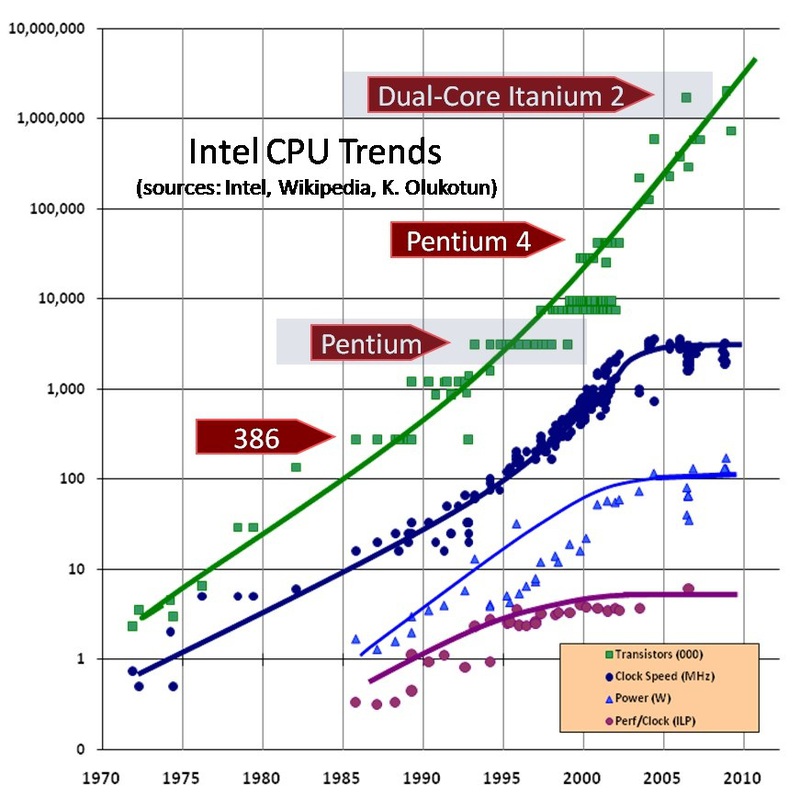

In order to understand the causes and extent of what happened with this transition, I suggest taking a look at the following graph. Shows the number of transistors, clock speed, power consumption, and instruction level parallelism ( ILP ).

Doubling the number of transistors every few years, known as Moore’s law, is not the only pattern. It can be noted that until the year 2000, the clock frequency and power consumption grew according to similar laws. For decades, Moore’s law has been implemented because transistors have been decreasing and decreasing in size, following another law known as Dennard’s scaling . According to this law, under ideal conditions, such a decrease in transistors with a constant processor area did not require an increase in power consumption.

As a result, if the first 8086 processor at a frequency of 8MHz consumed less than 2W, then the Pentium 3 operating at a frequency of 1GHz consumed 33W already. That is, power consumption increased by 17 times, and the clock frequency during the same time increased by 125 times. Note that productivity during this time has grown much stronger, because frequency comparison does not take into account such things as the appearance of L1 / L2 cache and out-of-order execution, as well as the development of superscalar architecture and pipelining. This time can rightfully be called the golden age: scaling the process technology (reducing the size of transistors) turned out to be an idea that ensured a steady increase in productivity for several decades.

The combination of technological and architectural achievements led to the fact that Moore's law was executed until the mid-2000s, where the turning point came. At 90nm, the transistor's gate becomes too thin to prevent leakage current, and power consumption has already reached all conceivable limits. Power consumption up to 100W and a cooling system weighing up to half a kilogram are more likely to be associated with a welding machine, but with anything, but not with a complex computing device.

Intel and other companies have made some headway in terms of increasing productivity and reducing energy consumption thanks to innovative solutions such as the use of hafnium oxide, switching to Tri-Gate transistors, etc. But each such improvement was only one-time, and could not even be compared closely with what could be achieved simply by reducing the transistors. If from 2007 to 2011 the processor clock speed increased by 33%, then from 1994 to 1998 this figure was 300%.

Turn towards Multicore

Over the past 8 years, Intel and AMD have focused their efforts on multi-core processors as a solution for increasing productivity. But there are a number of reasons to believe that this direction has practically exhausted itself. First of all, an increase in the number of cores never gives an ideal increase in performance. The performance of any parallel program is limited by a part of the code that cannot be parallelized. This limitation is known as Amdahl’s law and is illustrated in the following graph.

Also, one should not forget about such reasons as, for example, the difficulty of efficiently loading a large number of cores, which also worsen the picture.

AMD Bulldozer could be a good example of how using more cores leads to lower performance. This microprocessor was designed with the expectation that a shared cache and logic would save a chip area and eventually accommodate more cores. But in the end it turned out that when using all the cores, the power consumption of the chip forces a significant decrease in the clock frequency, and the slow shared cache reduces performance even more. Despite the fact that in general it was a good processor, an increase in the number of cores did not even close to the expected performance. And AMD is not the only ones who have encountered this problem.

Another reason why adding new kernels doesn't really help solve the problem is application optimization. There are not many tasks that, such as processing bank transactions, can be easily parallelized to almost any number of cores.

Some scientists (with arguments of varying degrees of persuasiveness) believe that the tasks of the real world, like iron, have natural parallelism, and it remains only to create a parallel model of computing and architecture. But most of the well-known algorithms used for practical tasks are consistent in nature. Their parallelization is not always possible, costly and does not give the desired high results. This is especially noticeable when you look at computer games. Game developers, while making progress in the direction of loading the work of multi-core processors, but they are moving in this direction very slowly. There are not many games that, like the last parts of Battlefield, can load all the cores with work. And, as a rule, such games were created from the very beginning with the possibility of using multi-core as the main goal.

(I admit, I can’t check the information about Battlefield. I have neither the game itself, nor the computer on which to play it. :))

We can say that now for Intel or AMD adding new cores is an easier task, rather than using them for software developers .

The advent and limitations of Manycore

The end of the era of scaling of the technological process led to the fact that a large number of companies were engaged in the development of specialized processor cores. If earlier, in an era of rapid growth in productivity, general-purpose processor architectures practically replaced specialized coprocessors and expansion cards from the market, then with a slowdown in productivity growth, specialized solutions gradually began to regain their positions.

Despite the statements of a number of companies, the specialized manycore chips in no way violate Moore's law and are no exception to the realities of the semiconductor industry. The limitations of power consumption and concurrency are as relevant to them as they are to any other processor. What they offer is a choice in favor of a less universal, more specialized architecture that can show better performance on a narrow range of tasks. Also, such solutions are less burdened by energy restrictions - the limit for Intel CPUs is 140W, and older models of video cards from Nvidia are in the region of 250W.

Intel's MIC (Many Integrated Cores) architecture is partly an attempt to take advantage of a separate memory interface and create a giant ultra-parallel number crusher. AMD, meanwhile, is directing its efforts to tasks less demanding on performance, developing the architecture of GCN (Graphics Core Next). Despite the market segment of such solutions, they essentially offer specialized coprocessors for a number of tasks, one of which is graphics.

Unfortunately, this approach will not solve the problem. The integration of specialized units in the processor chip or their placement on expansion cards allows you to increase productivity per watt of power consumption. But the fact that the size of the transistors decreased and decreased, while their power consumption and clock frequency - no, led to the emergence of a new concept - dark silicon (dark silicon). This term is used to refer to a large part of the microprocessor that cannot be used, while remaining within the acceptable power consumption.

Research into the future of multi-core devices shows that no matter how the microprocessor is arranged and what its topology is, the increase in the number of cores is severely limited by power consumption. Given the low productivity gain, the addition of new cores does not provide sufficient advantages to justify the need and to pay for further improvement of the process. And if you look at the magnitude of the problem and how long it needs to be resolved, it becomes clear that no radical or even incremental solution to this problem can be expected from typical academic or industrial studies.

It is necessary to find a new idea how to use the huge number of transistors that Moore's law provides. Otherwise, the economic component of the development of a new technological process will collapse, and Moore’s law will cease to be fulfilled even before it reaches its technological limit.

Over the next few years, we are likely to see 14nm and 10nm chips. Most likely, 6-8 cores will become commonplace for any computer user, quad-core processors will penetrate almost everywhere, and we will see even closer integration of the CPU and GPU.

But it is not clear what will happen next. Each subsequent performance improvement appears to be extremely small compared to the growth that has occurred in past decades. Due to the increase in leakage currents, the Dennard law has ceased to be implemented, and new technology capable of providing an equally steady increase in productivity is not observed.

In the following parts, I will talk about how developers are trying to solve this problem, about their short-term and more distant plans.