WebGL landscape rendering

Part One - Introduction

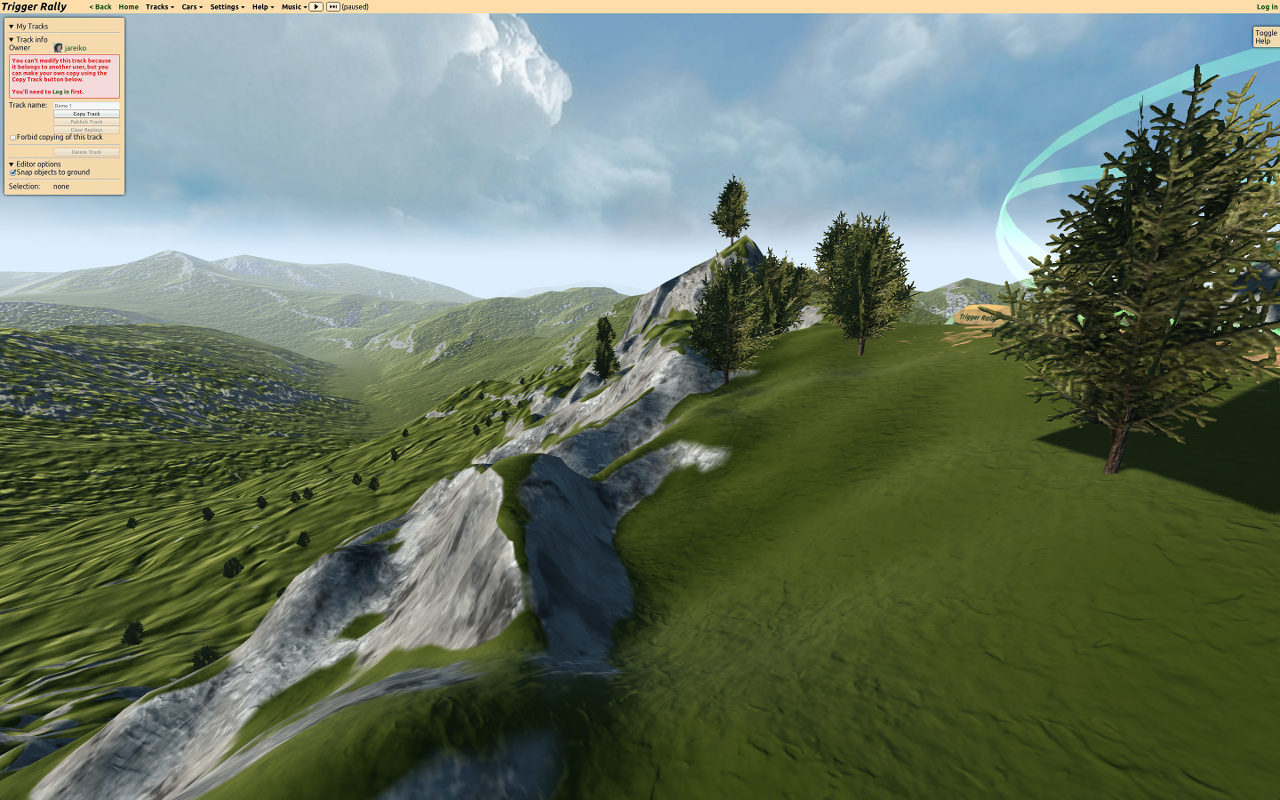

In this series of posts, I will describe the WebGL-based landscape rendering technique used in the game Trigger Rally.

Translator's note: I stumbled upon this series of articles, which is still being written. I must say the articles are excellent, and everything is explained thoroughly!

Interesting problem

Note: JavaScript performance is the number one topic for HTML5 game developers. In this article we review recent developments in the world of asm.js.

WebGL makes programming even more fun than it already is. WebGL is essentially OpenGL for the browser: it provides access to the GPU, but with some limitations. It is important to note that the CPU side, driven by JavaScript, processes data much more slowly than a native OpenGL application would. This is because every transfer of data from the CPU to the GPU goes through many checks intended to keep web applications safe. Once the data is on the video card, however, rendering is fast.

A good way to take load off the CPU (and shift it to the video card) is to cache static data (vertices and textures) on the GPU at startup and to make as few draw calls as possible.
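As a minimal sketch of this caching idea, the helper below uploads vertex data to the GPU once, using the `STATIC_DRAW` usage hint, so that later frames only need to bind the buffer and draw. The function name `createStaticVertexBuffer` and its parameters are illustrative assumptions, not code from the game.

```javascript
// Upload vertex data to the GPU once, at startup.
// `gl` is assumed to be a WebGLRenderingContext,
// `vertices` a Float32Array of vertex attributes.
function createStaticVertexBuffer(gl, vertices) {
  const buffer = gl.createBuffer();
  gl.bindBuffer(gl.ARRAY_BUFFER, buffer);
  // STATIC_DRAW hints that the data is written once and drawn many times.
  gl.bufferData(gl.ARRAY_BUFFER, vertices, gl.STATIC_DRAW);
  return buffer;
}
```

Each frame then reuses the returned buffer instead of sending the vertices through JavaScript again.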

But it is extremely difficult to make a static landscape look good. Because the camera will usually be very close to the ground, the near and far parts of the surface should not differ noticeably in apparent resolution.

In addition, there is a limit to the number of triangles that the GPU can render in real time. The triangle budget for landscape rendering (translator's note: "budget" is the author's word, and a good one) will be extremely limited, so we cannot afford a high level of detail across the entire surface.

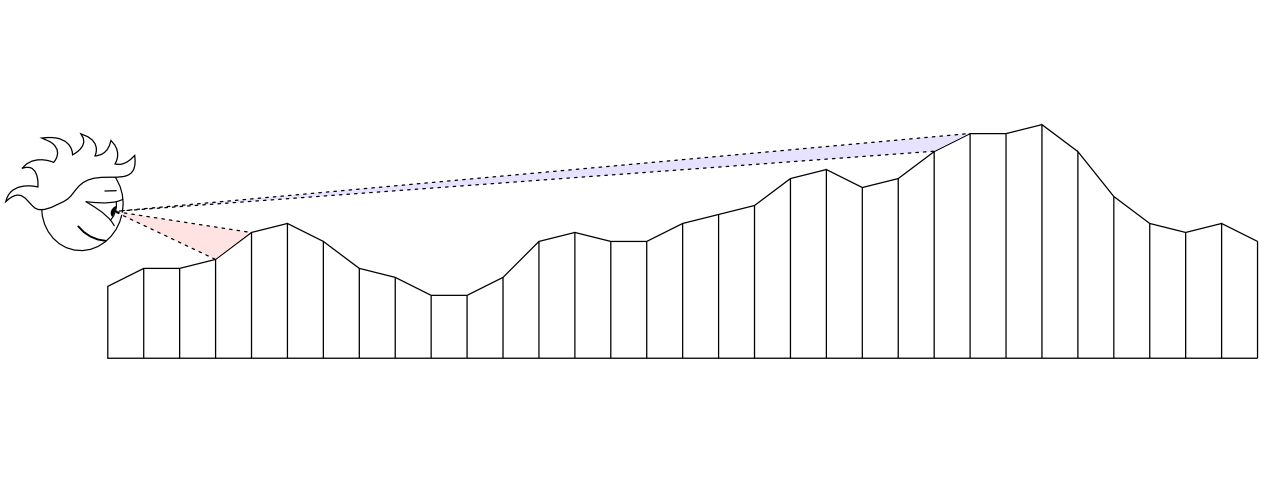

Since the triangle budget is limited, we must decide how to distribute the triangles for the best effect. Distributing them evenly gives too little detail near the camera and too much in the distance, where the player cannot appreciate it:

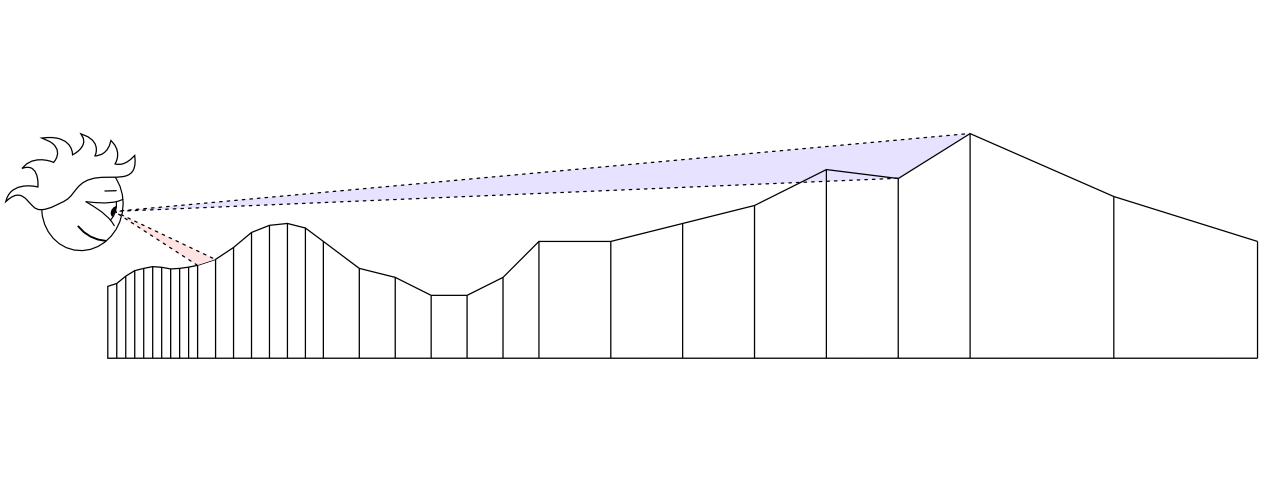

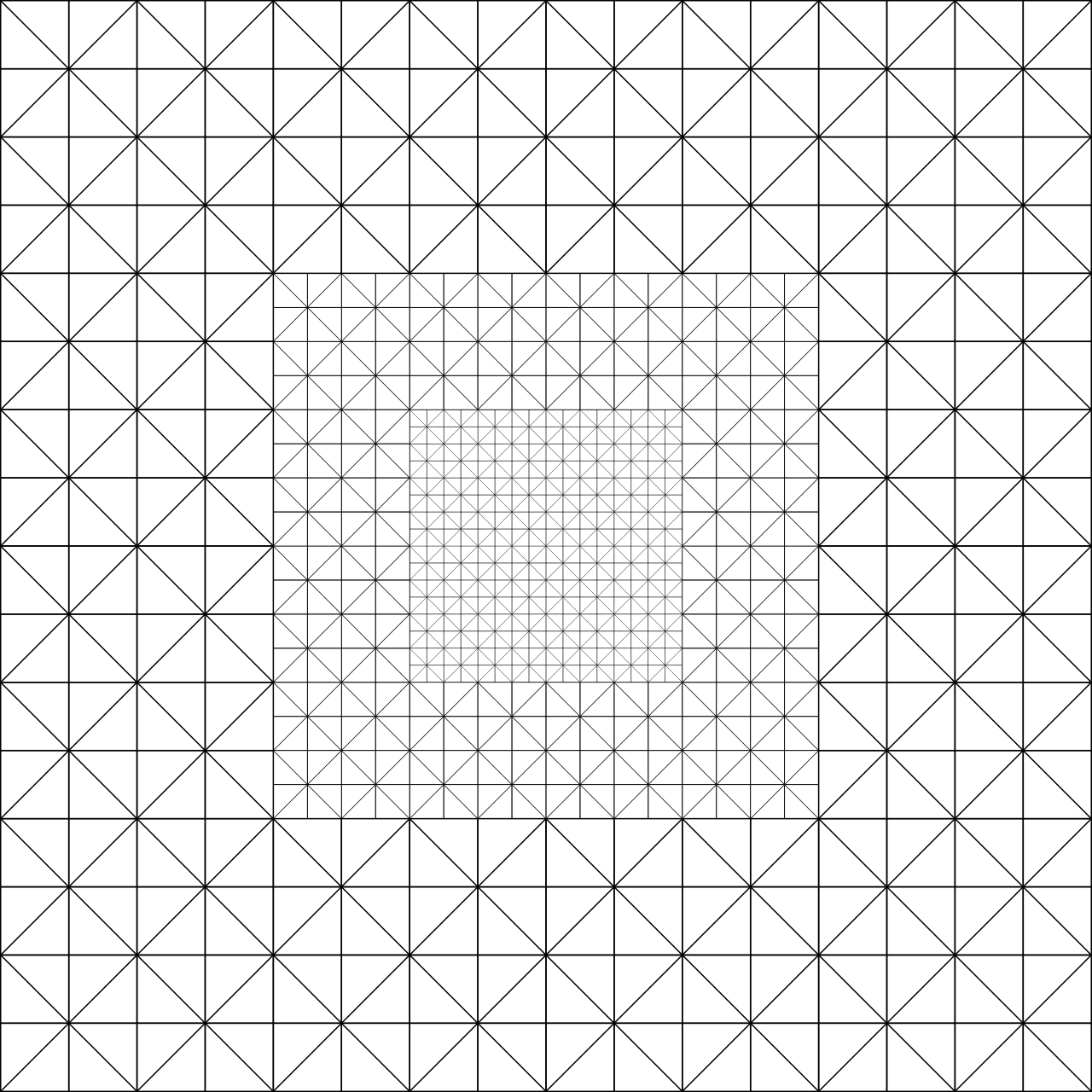
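To put rough numbers on the even distribution shown above, the sketch below computes the cell size of a uniform grid that fits a given triangle budget. The function name, the terrain size, and the budget are made-up values for illustration only, and the calculation assumes a square terrain split into square cells of two triangles each.

```javascript
// Cell size of a uniform grid that stays within a triangle budget.
// terrainSize:    side length of the square terrain, in world units
// triangleBudget: maximum number of triangles we allow ourselves
function uniformGridSpacing(terrainSize, triangleBudget) {
  // n x n cells, 2 triangles per cell: 2 * n * n <= budget
  const cellsPerSide = Math.floor(Math.sqrt(triangleBudget / 2));
  return {
    cellsPerSide,
    spacing: terrainSize / cellsPerSide,
  };
}

// Hypothetical example: a 4096-unit terrain and a 100,000-triangle budget.
const uniform = uniformGridSpacing(4096, 100000);
console.log(uniform.cellsPerSide, uniform.spacing.toFixed(1));
```

With these example numbers every cell is roughly 18 units wide, whether it lies right under the camera or on the horizon.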

Ideally, we would use more triangles near the camera and fewer in the distance:

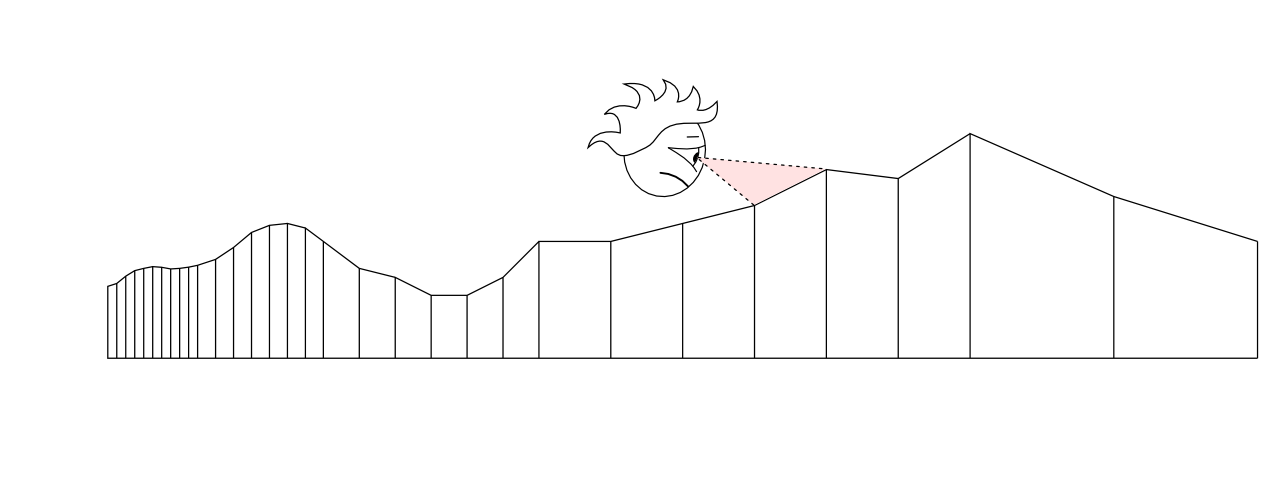

But a fixed mesh like this will look bad as soon as the camera moves.

So, if you are willing to load the CPU a little, you can use one of many algorithms that adapt the level of detail (LOD) to the current camera position. Such an algorithm, in effect, balances the work between the CPU and the video card.

Solution: Geoclipmapping

(Translator's note: I can't even imagine how to translate this term correctly.)

How can we achieve adaptive landscape detail using only static vertex data? Geoclipmapping and vertex texturing come to the rescue!

Instead of storing heights in the vertex array, we will store the height of each point of the landscape in a texture. This lets us prepare, once, a polygonal mesh that has more triangles close to the camera and fewer, larger triangles farther away.
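One common way to lay out such a mesh is a stack of nested square grids, where each level has the same number of cells as the previous one but twice the cell size. The sketch below only describes such a stack (cell size, extent, and triangle count per level); it assumes this general geoclipmap layout and is not necessarily the exact mesh the game uses. The function name and the example numbers are hypothetical.

```javascript
// Describe a stack of nested square grids centred on the camera.
// cellsPerSide: cells along one edge of every level (assumed even)
// levelCount:   number of nested levels
// baseSpacing:  cell size of the finest level, in world units
function describeClipmapLevels(cellsPerSide, levelCount, baseSpacing) {
  const levels = [];
  for (let level = 0; level < levelCount; level++) {
    const spacing = baseSpacing * Math.pow(2, level);
    const fullCells = cellsPerSide * cellsPerSide;
    // Every level except the finest is a ring: its central quarter
    // is already covered by the finer level nested inside it.
    const holeCells = level === 0 ? 0 : (cellsPerSide / 2) * (cellsPerSide / 2);
    levels.push({
      level,
      spacing,
      extent: spacing * cellsPerSide,
      triangles: 2 * (fullCells - holeCells),
    });
  }
  return levels;
}

// Hypothetical example: 5 levels of 64 x 64 cells, finest cell size 1.
const levels = describeClipmapLevels(64, 5, 1);
const totalTriangles = levels.reduce((sum, l) => sum + l.triangles, 0);
console.log(totalTriangles, levels[levels.length - 1].extent);
```

In this example the stack covers a 1024-unit square with 32,768 triangles, while a uniform grid with the same finest cell size over the same area would need 2,097,152.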

During rendering, we shift this mesh to the current camera position and sample the corresponding part of the height map texture in the vertex shader.
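The two steps just described might look roughly like the sketch below: a helper that snaps the mesh origin to the grid so vertices always land on the same height samples, and a WebGL 1 vertex shader that reads the height from a texture. All names (`snapToGrid`, `uOffset`, `uHeightMap`, and so on) are assumptions made for illustration. Note also that vertex texture fetch is optional in WebGL 1, so a real application should check that `MAX_VERTEX_TEXTURE_IMAGE_UNITS` is greater than zero.

```javascript
// Snap the camera's ground position to a multiple of the cell size,
// so the mesh moves in whole-cell steps.
function snapToGrid(cameraX, cameraZ, spacing) {
  return [
    Math.floor(cameraX / spacing) * spacing,
    Math.floor(cameraZ / spacing) * spacing,
  ];
}

// Vertex shader (GLSL ES 1.00) that displaces a flat grid by the height map.
const terrainVertexShader = `
attribute vec2 aPosition;      // grid vertex, in cells, relative to mesh origin
uniform vec2 uOffset;          // snapped camera position (world XZ)
uniform float uSpacing;        // cell size of this mesh, in world units
uniform float uTerrainSize;    // world width covered by the height map
uniform float uHeightScale;    // world height of a texel value of 1.0
uniform sampler2D uHeightMap;  // height stored in the red channel
uniform mat4 uViewProjection;

void main() {
  vec2 worldXZ = aPosition * uSpacing + uOffset;
  vec2 uv = worldXZ / uTerrainSize;
  float height = texture2D(uHeightMap, uv).r * uHeightScale;
  gl_Position = uViewProjection * vec4(worldXZ.x, height, worldXZ.y, 1.0);
}
`;
```

Per frame, the result of `snapToGrid` would be passed to the `uOffset` uniform before drawing, while the grid vertices themselves stay cached on the GPU.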

It sounds simple, but it is a little more complicated than that, so in the next article I'll explain in more detail how it works and how to implement efficient geoclipmapping with morphing in WebGL. After that, I'll discuss height maps with different resolutions and surface shading.

Part two